Platform Lock-In Is Coming for Your AI Stack

March 30, 2026

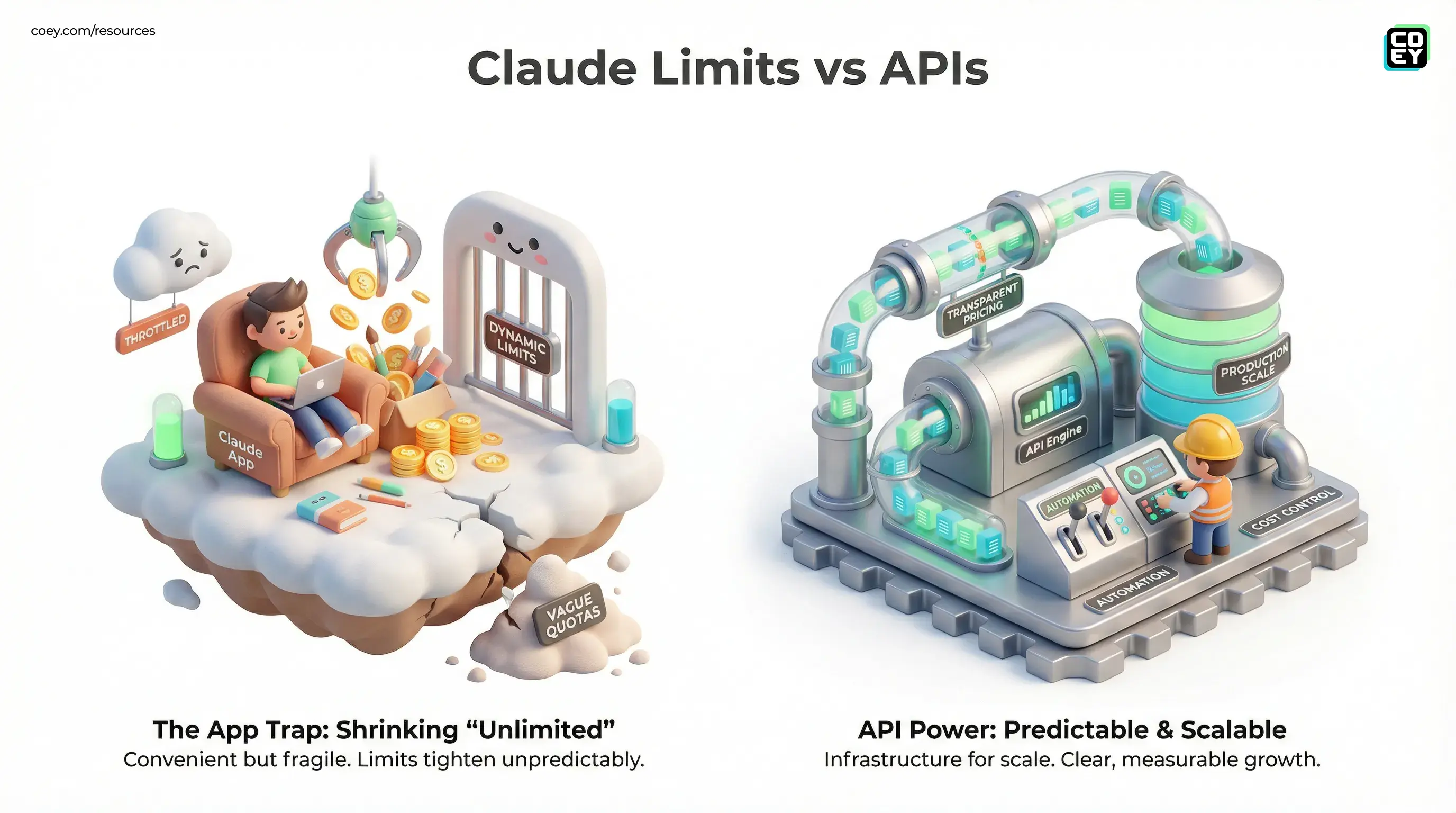

If your team’s creative engine lives inside one AI app, you are not buying convenience so much as renting stability until the math breaks. Recent shifts in consumer AI limits, including Anthropic’s evolving Claude usage policies and more aggressive peak-hour management, are a reminder that “more usage” is not the same thing as predictable capacity. The fine print always wins eventually, and in AI, that fine print tends to move. Anthropic’s own pricing page still frames plans in relative terms across its Pro, Max 5x, and Max 20x tiers, while its support docs and API docs make clear that limits can vary by demand, workload, and rate class.

That does not make any one company uniquely bad. It makes the category economically honest for about five seconds. AI apps have spent the last stretch in growth mode: generous subscriptions, fuzzy ceilings, and vibes-heavy promises that let creators, marketers, and operators build habits fast. Now the bill is showing up in the least surprising way possible: tighter limits, more dynamic throttling, and an increasing gap between what the product marketing implies and what heavy production workflows actually need.

Translation: if your workflow depends on a single vendor’s generosity, your workflow does not really belong to you.

Why “unlimited” keeps shrinking

The core issue is simple. Consumer AI subscriptions are usually sold around convenience, not transparent unit economics. Anthropic’s Claude plans, for example, position Pro as roughly 5x the free tier, with Max 5x and Max 20x tiers offering substantially more usage than Pro, but those are still relative buckets rather than hard production guarantees. Claude API pricing, by contrast, is explicit: token costs, long-context pricing, and batch discounts are published in plain numbers.

That difference matters more than it sounds. Relative usage claims are great for onboarding. They are much less great when you are trying to run content ops, campaign drafting, or review workflows at scale. If the baseline is undefined, the provider can change the effective ceiling without changing the headline plan name. Very demure. Very enterprise-unfriendly.

Reports around Claude’s peak-hour changes fit that pattern. Public reporting and recent user posts noted more aggressive usage management during busy periods after a temporary off-peak promotion that expanded access and ended on March 27. That is not a scandal. It is what happens when a fast-growing AI product collides with GPU costs, queue management, and power users who treat “chat” like an all-you-can-eat inference buffet.

The app economy was always subsidized

Most major AI apps have followed the same playbook:

- Start generous so people move real work into the product.

- Keep limits vague so the service can flex as demand spikes.

- Build dependence through convenience, history, projects, and custom workflows.

- Tighten later when infrastructure costs and investor expectations show up with receipts.

If that sounds familiar, it should. Rideshare did it. Streaming did it. Cloud software did it. AI is doing it with extra GPU drama and a shinier landing page.

The practical issue is not that subsidies existed. Early subsidies helped teams experiment with human-plus-machine workflows cheaply, which was useful. The issue is treating those subsidies like permanent infrastructure. They are not. They are customer acquisition wearing a productivity costume.

Where the economics get real fast

| Layer | What looks good | What bites later |
|---|---|---|
| Consumer app plan | Easy seats, simple pricing, fast adoption | Dynamic caps, vague quotas, workflow stalls |
| API access | Transparent pricing, automation-ready, measurable | Needs orchestration and cost controls |
| Portable workflow layer | Swap providers, route tasks, reduce lock-in | More setup upfront, but less chaos later |

Why this matters for marketers

For non-technical teams, the temptation is obvious: just use the app everyone likes. It writes fast, remembers context, and feels easier than wiring APIs, prompts, routers, or approval flows. But app-first convenience becomes a liability the moment your work becomes repeatable enough to automate.

If your content calendar, campaign QA, landing page rewrites, or reporting summaries depend on one chat product’s UI, you are exposed to three problems:

- Capacity risk: usage ceilings can change right when your team is busiest.

- Budget ambiguity: you cannot accurately forecast output if the real quota is hidden.

- Workflow fragility: a throttled app is not a dependable production layer.

This is where the gap between “helpful AI app” and “automation-ready system” becomes painfully clear. A chat app is a product. An API is infrastructure. The first is great for experiments and one-off work. The second is what you use when the workflow has to run on Tuesday at 9:07 a.m. without vibes.

API access is the real dividing line

Anthropic’s API docs spell out pricing and rate limits in a way consumer plans do not. As of late March 2026, Claude API pricing is published per million tokens, with examples including Sonnet 4.5 at $3 input and $15 output per million tokens, Opus 4.1 at $15 input and $75 output, and Haiku 4.5 at $1 input and $5 output, plus a 50 percent Batch API discount. That is why APIs matter: not because your CMO wants to read token tables for fun, but because transparent metering is what makes forecasting, routing, and automation possible.
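To make the forecasting point concrete, here is a minimal Python sketch that turns the per-million-token prices quoted above into a monthly estimate. The prices come from this post; the model labels, the `estimate_cost` helper, and the workload figures are illustrative assumptions, not anything from Anthropic's SDK. Check current pricing before budgeting against it.

```python
# Prices in USD per million tokens, copied from the figures quoted above
# (late March 2026). The dictionary keys are illustrative labels, not
# official API model identifiers.
PRICES_PER_MILLION = {
    "sonnet-4.5": {"input": 3.00, "output": 15.00},
    "opus-4.1": {"input": 15.00, "output": 75.00},
    "haiku-4.5": {"input": 1.00, "output": 5.00},
}
BATCH_DISCOUNT = 0.50  # 50 percent Batch API discount


def estimate_cost(model, input_tokens, output_tokens, batch=False):
    """Return the estimated USD cost for a given token volume."""
    price = PRICES_PER_MILLION[model]
    cost = (input_tokens / 1_000_000) * price["input"]
    cost += (output_tokens / 1_000_000) * price["output"]
    if batch:
        cost *= 1 - BATCH_DISCOUNT
    return cost


if __name__ == "__main__":
    # Hypothetical workload: 2,000 drafts a month, each using about
    # 1,500 input tokens and 800 output tokens.
    drafts = 2_000
    for model in PRICES_PER_MILLION:
        monthly = estimate_cost(model, drafts * 1_500, drafts * 800)
        print(f"{model}: ${monthly:.2f} per month")
```

Under those assumed volumes, the same workload comes to about $11 a month on Haiku 4.5, $33 on Sonnet 4.5, and $165 on Opus 4.1, before any batch discount. A relative "5x" or "20x" plan label cannot give you that kind of number.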

OpenAI shows the same split. Its API rate-limit documentation defines usage in operational terms, while ChatGPT plans remain more product-shaped and subject to plan-level controls rather than fixed public throughput guarantees. That is the broader market signal: if you need predictable automation, you move toward APIs, orchestration layers, and provider choice. If you stay entirely inside the app, you are accepting policy drift as part of the deal.

Non-technical version: if a tool exposes a stable API, you can plug it into your stack, monitor it, budget it, and replace it if needed. If it does not, you are mostly borrowing someone else’s interface.

What is actually ready now

This is not a call to abandon AI apps altogether. They are still useful. For brainstorming, drafting, concepting, and quick-turn collaboration, they remain fantastic. The smarter move is to separate creative interaction from production dependency.

Good uses for app-first AI

- early ideation and rough drafting

- exploratory research

- one-off analysis and creative jams

- personal productivity where occasional limits are tolerable

Better uses for API-first AI

- scheduled content generation

- structured output into CMS, CRM, or DAM systems

- multi-step review and approval flows

- fallback routing across providers when one service degrades

| Workflow type | Best fit | Reality check |
|---|---|---|
| Solo drafting | Consumer AI app | Fast and fine until limits hit |
| Team production ops | API plus orchestration | More setup, far better control |
| High-volume automation | Portable multi-provider stack | Best defense against lock-in and outages |

How smart teams avoid getting trapped

The winning move is not loyalty. It is portability.

That means writing prompts and workflows that are not married to one interface, exporting important knowledge out of proprietary chat histories, and building a routing layer where alternate models can step in when pricing or limits change. If your team is already thinking about governance and interoperability, our earlier post on NIST’s AI Agent Standards push is useful context for where enterprise expectations are heading. And if you are further along in designing provider flexibility, Model Routing: Creative Ops for Smart Automation maps out the operational side of making that portability real.
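For a sense of what a routing layer can look like at its simplest, here is a hedged Python sketch of priority-ordered fallback. Everything in it is hypothetical: `route`, `ProviderUnavailable`, and the two stand-in provider functions are invented for illustration, and a real setup would wrap each vendor's actual SDK and error types behind the same kind of interface.

```python
from typing import Callable, Sequence, Tuple


class ProviderUnavailable(Exception):
    """Raised by a provider wrapper when it is throttled or down."""


# A provider is a (name, callable) pair. Each callable takes a prompt and
# returns text. In a real system the callable would wrap a vendor SDK;
# here they are stand-ins so the routing logic can be read on its own.
Provider = Tuple[str, Callable[[str], str]]


def route(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in priority order and return (provider_name, output)."""
    failures = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderUnavailable as exc:
            failures.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(failures))


def throttled_primary(prompt: str) -> str:
    # Simulates a provider that has hit its usage ceiling.
    raise ProviderUnavailable("rate limit reached")


def healthy_backup(prompt: str) -> str:
    # Simulates a second provider that is still accepting work.
    return f"[backup draft] {prompt}"


if __name__ == "__main__":
    used, text = route(
        "Summarize this week's campaign results.",
        [("primary", throttled_primary), ("backup", healthy_backup)],
    )
    print(used, "->", text)
```

The design choice that matters is that the prompt is plain text and each provider hides behind the same call signature. Swapping vendors then means editing the provider list, not rebuilding the workflow.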

Because this is the bigger story: AI platforms are maturing from novelty apps into infrastructure vendors. Infrastructure vendors optimize for margin, reliability, and control. You should too.

The bottom line

Rate hikes, usage caps, and quiet throttling are not temporary glitches in the AI app market. They are the market growing up. As more teams move real creative and marketing work into AI tools, the old subsidy-heavy model becomes harder to sustain. Providers will keep adjusting. Some will do it clearly, others with enough ambiguity to make your ops lead develop a new eye twitch.

The fix is not panic. It is architecture. Keep the spark human, let machines do the grind, but build your system so no single app gets to hold your throughput hostage. Use chat apps for speed. Use APIs for scale. Use portability for sanity.

Because human plus machine is the future. Human plus one vendor’s moving goalposts? That is just a future invoice.