Creatives That Learn From Outcomes

October 22, 2025

Your ad creative wants a memory and a paycheck

Marketing teams still run their channels like a bake sale: toss out A/B tests, crown a winner, and promise to “get creative” next time. But today, you have a gold mine of live performance signals that could be teaching your ad and content machine to improve itself every single hour. Welcome to performance-trained marketing: reinforcement learning and modern bandit algorithms custom-built for big budgets, brand rules, and real-world chaos. Not academic cosplay. This is a practical feedback loop that lets creative ideas evolve based on real outcomes and finally earn their keep. For a taste of the bleeding edge, check out Meta’s recent work on AI-driven creative tools for advertisers.

Why yesterday’s A/B tests are not cutting it

Classic split testing is slow, expensive, and blind to cost. That is fine if your speed goal is glacial and your favorite metric is “vibes.” But campaigns now run across dynamic multi-channel environments where audiences shift hourly, creative fatigue sets in before your sample size is ready, and offers expire while you wait for results. In that world, a fixed-split A/B test cannot keep up on its own.

| Approach | Speed to learn | Cost awareness | Risk control | Best use |
|---|---|---|---|---|
| Classic A/B | Slow | Low | High, primitive guardrails | Stable traffic, binary changes |
| Multi-armed bandits | Fast, continuous | Medium | Medium, responsive limits | Ongoing creative rotation |
| Reinforcement learning w/ guardrails | Fast (post bootstrap) | High by design | High, with constraints | Full-funnel optimization |

Punchline: A/B is not dead, but it should not be your only trick. When your ads, emails, and videos actively learn from outcomes in near real time, you compound performance lift without compounding headcount. For process-minded marketers, this is automation you can quantify.
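To make "learning from outcomes in near real time" concrete, here is a minimal sketch of one common bandit method, Beta-Bernoulli Thompson sampling, rotating creative variants. It is an illustration under stated assumptions, not the method of any particular ad platform: the class name `BetaBandit`, the `simulate` helper, and the conversion rates are all hypothetical, and a conversion is treated as a simple yes/no per impression.

```python
import random


class BetaBandit:
    """Beta-Bernoulli Thompson sampling over a fixed set of creative variants."""

    def __init__(self, variants, seed=None):
        self.rng = random.Random(seed)
        # One Beta(1, 1) prior per variant, stored as [alpha, beta].
        self.params = {v: [1.0, 1.0] for v in variants}

    def choose(self):
        # Draw a plausible conversion rate for each variant, serve the best draw.
        draws = {v: self.rng.betavariate(a, b) for v, (a, b) in self.params.items()}
        return max(draws, key=draws.get)

    def update(self, variant, converted):
        # A conversion bumps alpha, a non-conversion bumps beta.
        if converted:
            self.params[variant][0] += 1
        else:
            self.params[variant][1] += 1


def simulate(true_rates, rounds=5000, seed=0):
    """Serve `rounds` impressions against made-up true conversion rates."""
    bandit = BetaBandit(list(true_rates), seed=seed)
    world = random.Random(seed + 1)  # separate RNG for the simulated audience
    served = {v: 0 for v in true_rates}
    for _ in range(rounds):
        variant = bandit.choose()
        served[variant] += 1
        bandit.update(variant, world.random() < true_rates[variant])
    return served


if __name__ == "__main__":
    # Hypothetical rates: the bandit should shift most traffic to "c" over time.
    print(simulate({"a": 0.02, "b": 0.05, "c": 0.20}))
```

Unlike a fixed 50/50 split, traffic moves toward the stronger variant while the test is still running, and weaker variants keep getting the occasional impression in case the early data misled you.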

The performance-trained stack in one picture

[Signals]

clicks, add_to_cart, signups, LTV, refunds, costs

|

[Reward Builder]

sculpts ROI + quality + safety into a single score

|

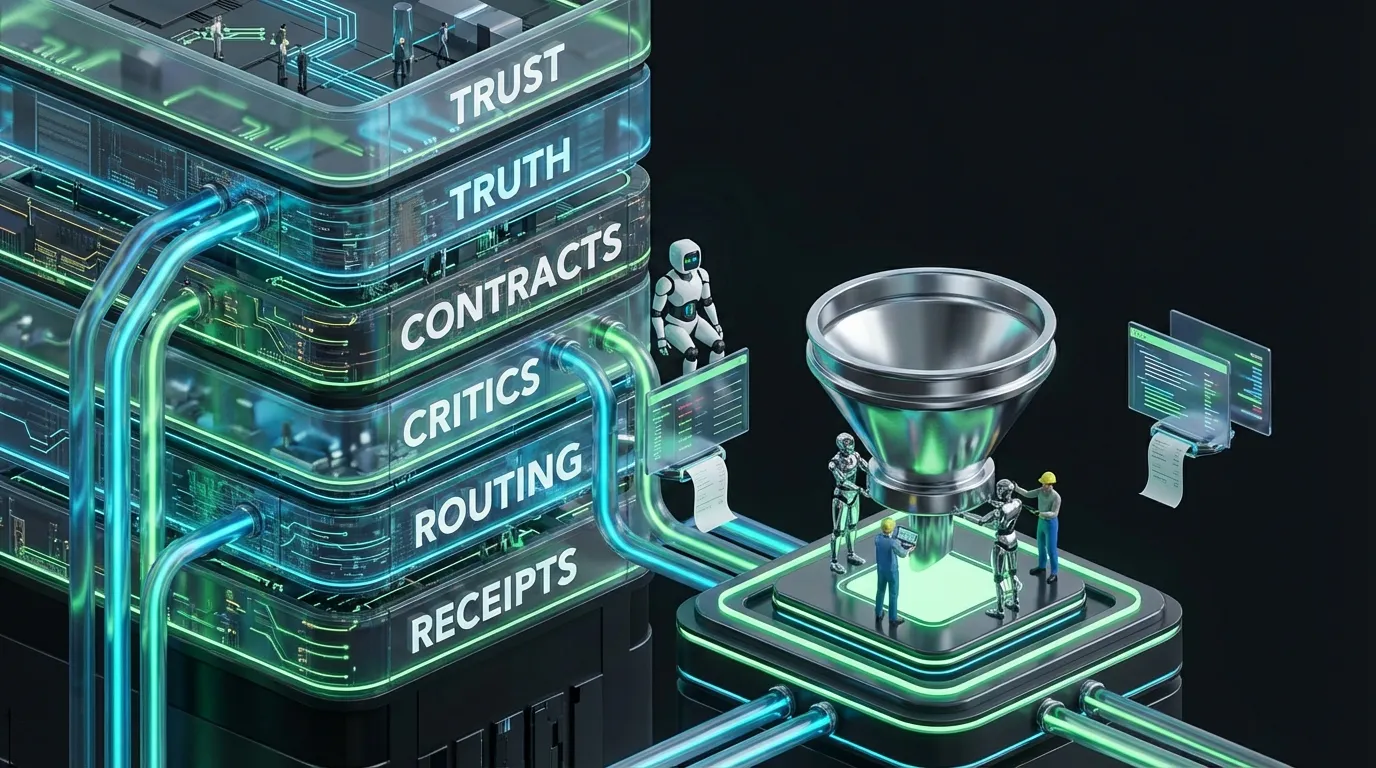

[Policy]

- Router: which audience, channel, time

- Generator: creative variant by schema

- Critic: brand, legal, style, compliance checks

|

[Bandit / RL Layer]

pick variants, explore a bit, exploit a lot

|

[Budget + Guardrails]

limits, spend caps, safety

|

[Channels]

ads, email, web, short video

|

[Attribution + Evaluator]

holdouts, IPS/DR estimators, error bars

|

[Memory]

update priors and creative motifs

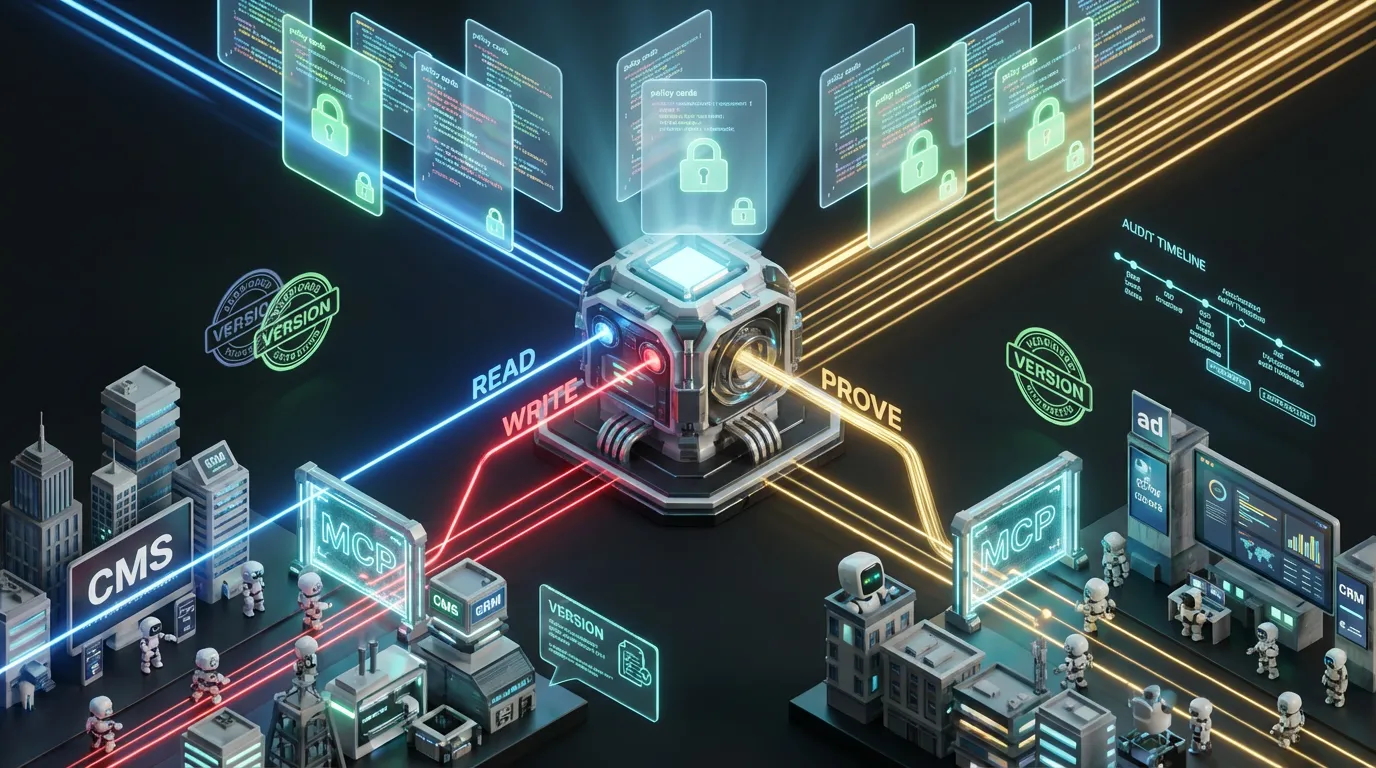

All the right buzzwords in the right slots: retrieval-augmented generation (RAG) for facts, lightweight models for speed and “no,” massive models for gnarly creative synthesis, and a policy engine that vetoes bribes from rogue robots.
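The Attribution + Evaluator box mentions IPS estimators and error bars. As a rough sketch of what that means: inverse propensity scoring (IPS) reweights logged rewards by how much more (or less) likely a candidate policy would have been to show the same creative. The log field names (`context`, `action`, `reward`, `logging_prob`) and the `target_prob` callback are assumptions made for this example, not a standard schema.

```python
import math


def ips_estimate(logs, target_prob):
    """Estimate a candidate policy's average reward from logged traffic.

    logs: list of dicts with keys 'context', 'action', 'reward', 'logging_prob'
          (logging_prob = probability the live policy had of showing that action).
    target_prob: function (context, action) -> probability under the candidate.
    Returns (estimate, standard_error).
    """
    if not logs:
        raise ValueError("need at least one logged impression")
    weighted = []
    for row in logs:
        if row["logging_prob"] <= 0:
            raise ValueError("logging_prob must be positive")
        # Importance weight: candidate probability over logging probability.
        weight = target_prob(row["context"], row["action"]) / row["logging_prob"]
        weighted.append(weight * row["reward"])
    n = len(weighted)
    mean = sum(weighted) / n
    if n < 2:
        return mean, float("nan")
    variance = sum((x - mean) ** 2 for x in weighted) / (n - 1)
    return mean, math.sqrt(variance / n)


if __name__ == "__main__":
    # Toy logs: the live policy showed "a" or "b" with probability 0.5 each.
    logs = [
        {"context": None, "action": "a", "reward": 1.0, "logging_prob": 0.5},
        {"context": None, "action": "b", "reward": 0.0, "logging_prob": 0.5},
        {"context": None, "action": "a", "reward": 1.0, "logging_prob": 0.5},
        {"context": None, "action": "b", "reward": 0.0, "logging_prob": 0.5},
    ]
    always_a = lambda context, action: 1.0 if action == "a" else 0.0
    print(ips_estimate(logs, always_a))
```

The standard error is the "error bars" part: small logging probabilities produce large weights and wide intervals, which is one reason doubly robust (DR) estimators and holdouts sit next to IPS in the diagram.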

Reward shaping marketers can live with

Reinforcement learning requires a reward function. In marketing English: you need a scoreboard. The goal is to match business reality (think margin, lift, and compliance) without creating perverse incentives.

    # Pseudocode reward for an impression
    reward = 0

    # Positive revenue actions
    reward += w_conv * is_conversion * margin_dollars
    reward += w_ltv * modeled_ltv_90d

    # Subtract costs and refund risk
    reward -= w_spend * spend_dollars
    reward -= w_refund * refund_dollars

    # Penalize bad creative and legal risk
    reward -= w_claim_risk * claim_violation
    reward -= w_fatigue * creative_fatigue_score

    # Encourage fair exploration
    reward += w_explore * underexposed_audience

    # Normalize and clip for stability
    reward = clip(normalize(reward), -r_max, r_max)
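For readers who want to run it, the pseudocode above can be turned into a small Python function. Treat this as a sketch under assumptions: the default weights are placeholders, `normalize` is interpreted as dividing by a fixed dollar `scale`, and `creative_fatigue_score` is assumed to lie between 0 and 1. None of those choices come from the original; tune them to your own business.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Weights:
    # Placeholder values, meant to be tuned rather than trusted.
    conv: float = 1.0
    ltv: float = 0.2
    spend: float = 1.0
    refund: float = 1.0
    claim_risk: float = 50.0
    fatigue: float = 5.0
    explore: float = 1.0


@dataclass(frozen=True)
class Impression:
    is_conversion: bool
    margin_dollars: float
    modeled_ltv_90d: float
    spend_dollars: float
    refund_dollars: float
    claim_violation: bool
    creative_fatigue_score: float  # assumed to be in [0, 1]
    underexposed_audience: bool


def impression_reward(x, w=Weights(), scale=100.0, r_max=1.0):
    """Score one impression, then normalize by `scale` and clip to [-r_max, r_max]."""
    raw = 0.0
    # Positive revenue actions
    raw += w.conv * x.is_conversion * x.margin_dollars
    raw += w.ltv * x.modeled_ltv_90d
    # Costs and refund risk
    raw -= w.spend * x.spend_dollars
    raw -= w.refund * x.refund_dollars
    # Bad creative and legal risk
    raw -= w.claim_risk * x.claim_violation
    raw -= w.fatigue * x.creative_fatigue_score
    # Fair exploration bonus
    raw += w.explore * x.underexposed_audience
    # Normalize (assumed: fixed dollar scale) and clip for stability
    return max(-r_max, min(r_max, raw / scale))


if __name__ == "__main__":
    sale = Impression(True, 40.0, 50.0, 2.0, 0.0, False, 0.1, False)
    print(impression_reward(sale))
```

Keeping the weights in one frozen dataclass makes them easy to version and swap, which matters more than getting the first set of numbers right.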

Tip: Don’t waste two quarters debating “the perfect weights.” Make them tunable. Start with clear incentives: margin and payback. Add penalties for policy violations, unsubstantiated claims, or spammy hooks. Clip rewards from day zero so no single outlier can dominate learning. Clipping will not repeal Goodhart’s Law, but it limits how far the system can run with a gamed metric.

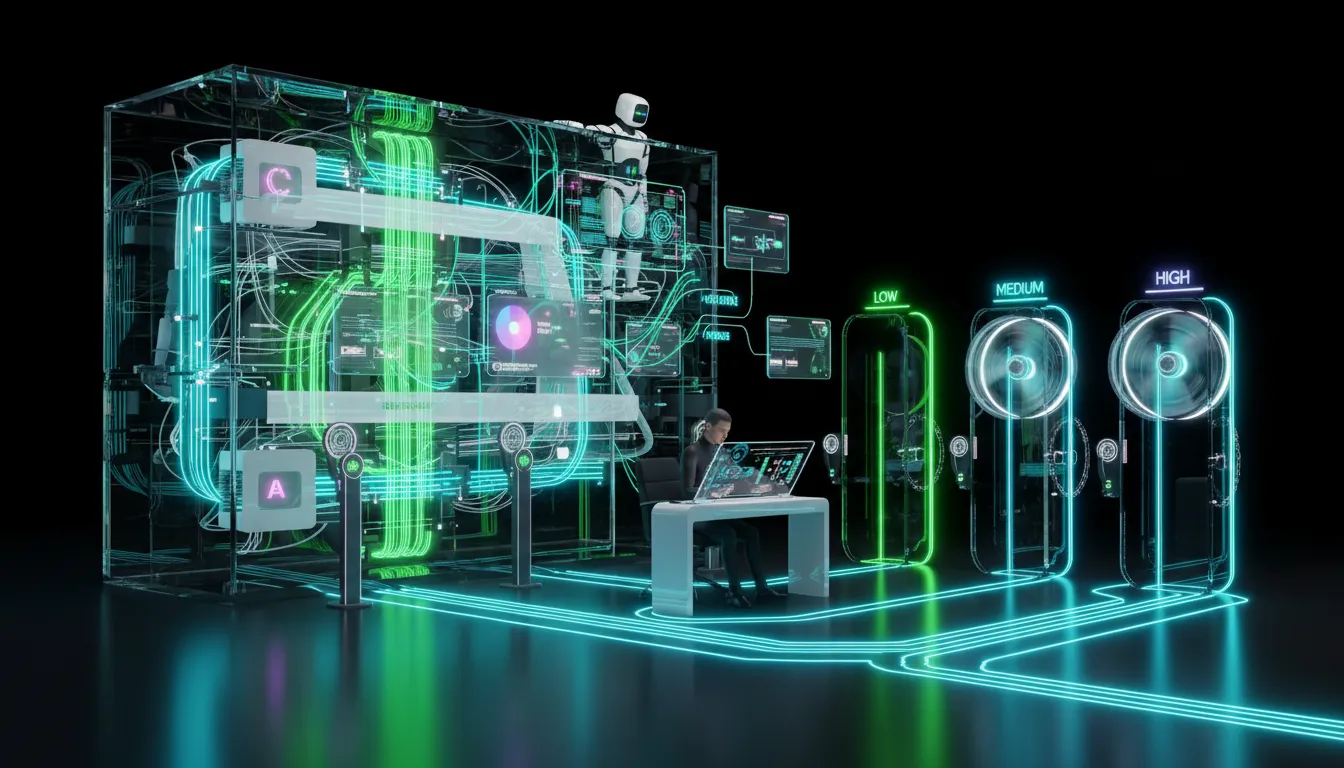

Constraints: keeping RL out of the doghouse

- Safety first: Block outputs that trip brand or legal wires. Make these hard stops, not gentle warnings.

- Budget caps: Never let any single creative run wild with your credit card. Cap spend per audience and per variant.

- Pacing: Rotate and enforce impression limits per user. Prevent fatigue in the name of “personalization.”

- Schema locks: Structured outputs for ad copy, subject lines, and video. No off-roading into “creative soup.”
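The four constraints above can be sketched as a single pre-send gate. This is a minimal illustration, not a production design: the `Guardrails` class, its method names, the reason strings, and the three required creative fields are all hypothetical, and the list of violations is assumed to come from an upstream critic step.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Guardrails:
    variant_cap: float             # max spend per creative variant
    audience_cap: float            # max spend per audience
    max_impressions_per_user: int  # frequency cap used for pacing
    required_fields: tuple = ("headline", "body", "cta")  # hypothetical schema
    spend_by_variant: dict = field(default_factory=lambda: defaultdict(float))
    spend_by_audience: dict = field(default_factory=lambda: defaultdict(float))
    impressions_by_user: dict = field(default_factory=lambda: defaultdict(int))

    def check(self, creative, variant, audience, user, cost, violations=()):
        """Return (allowed, reason). Every failure is a hard stop."""
        if violations:  # safety first: brand or legal flags block outright
            return False, "policy_violation"
        if any(not creative.get(name) for name in self.required_fields):
            return False, "schema"  # schema lock: no free-form output
        if self.spend_by_variant[variant] + cost > self.variant_cap:
            return False, "variant_cap"
        if self.spend_by_audience[audience] + cost > self.audience_cap:
            return False, "audience_cap"
        if self.impressions_by_user[user] >= self.max_impressions_per_user:
            return False, "frequency_cap"
        return True, "ok"

    def record(self, variant, audience, user, cost):
        """Call after an impression is actually served."""
        self.spend_by_variant[variant] += cost
        self.spend_by_audience[audience] += cost
        self.impressions_by_user[user] += 1


if __name__ == "__main__":
    gate = Guardrails(variant_cap=10.0, audience_cap=100.0, max_impressions_per_user=2)
    ad = {"headline": "Hi", "body": "Text", "cta": "Buy"}
    print(gate.check(ad, "v1", "aud", "u1", 4.0))
```

The gate runs before the bandit's pick is served, so the learner can only choose among options that are already safe and affordable.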