Kling O1 Unifies AI Video Generation and Editing

January 5, 2026

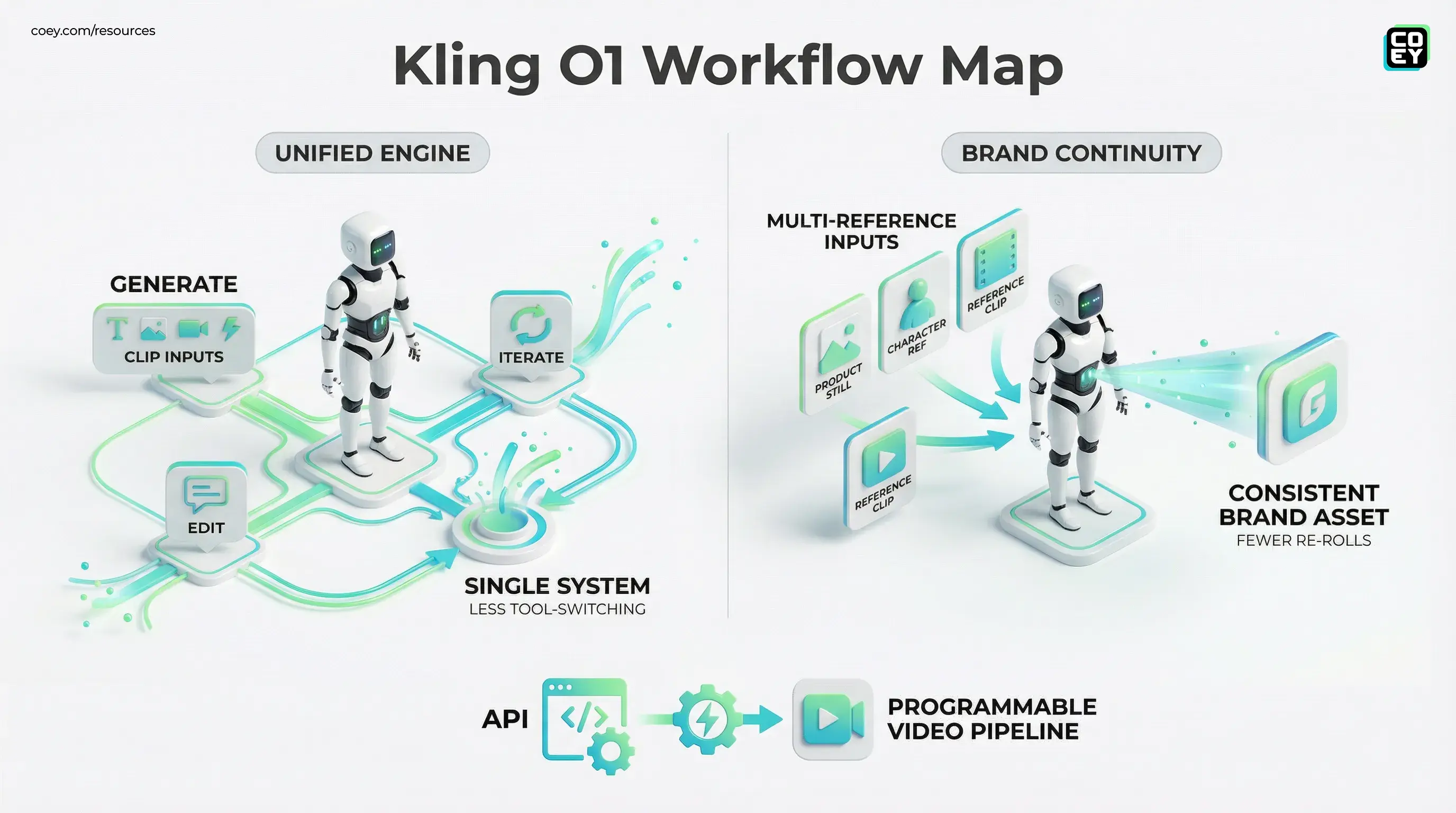

Kling AI just introduced Kling O1, pitching it as a unified multimodal video system: generate the clip, then keep iterating with natural-language edits, without hopping between a text-to-video tool, an editor, and a patchwork of post-production fixes. For marketing and creative teams, that’s the real promise: less tool-switching, fewer broken handoffs, and a shorter path from concept to usable asset.

This isn’t the “AI made a video” era anymore. The bar is whether the model can survive real feedback loops: change the setting, swap a prop, tighten the look, keep the character consistent, and avoid brand drift. Kling O1 is explicitly targeting that loop.

What Kling O1 actually shipped

At a high level, Kling O1 combines generation and editing into one system rather than forcing creators into the typical generate, export, fix, regenerate spiral. Kling is positioning it as a single engine that can:

- Generate video from text, images, and reference clips

- Accept multiple reference images to anchor continuity

- Perform prompt-based edits via plain-English instructions

- Maintain style coherence across scenes and revisions

The most practical detail: O1 supports multi-reference inputs. Kling’s own O1 materials describe multi-reference processing as supporting up to 10 reference images. In hands-on creator usage, people often report lower practical counts depending on the UI path, but the strategic point holds: multi-reference control is the difference between a cool clip and a repeatable brand format.

Why unified matters for marketing ops

In creative operations, the hidden cost isn’t generating the first draft. It’s the tenth revision.

Most teams don’t fail at AI video because the model is bad. They fail because the workflow is brittle:

- The character drifts between scenes

- The product morphs, or invents extra parts

- Every revision means re-exporting, reuploading, re-editing

- Iteration time stacks up until the team quietly goes back to stock footage

Kling O1’s unified approach is meant to keep the work inside one system: you generate, then issue edit instructions like “change the background to a crowded city,” “remove the coffee cup,” or “make the lighting warmer.” If it works as advertised, it reduces one of the biggest taxes in creative production: context loss between tools.

The operational win isn’t that the model is smarter. It’s that the workflow stops bleeding time every time someone says “small tweak.”
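
One way to keep that loop auditable is to log every edit instruction against the version it was applied to. The following is a minimal sketch, not anything Kling provides: `Revision` and `RevisionLog` are hypothetical names, and the log only tracks instructions, it does not call any video model.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Revision:
    revision_id: int
    instruction: str                 # the generation prompt or plain-English edit
    parent_id: Optional[int] = None  # None marks the original generation


@dataclass
class RevisionLog:
    """Hypothetical in-memory log of generate/edit steps (illustrative only)."""
    revisions: List[Revision] = field(default_factory=list)

    def add(self, instruction: str, parent_id: Optional[int] = None) -> Revision:
        known_ids = {r.revision_id for r in self.revisions}
        if parent_id is not None and parent_id not in known_ids:
            raise ValueError(f"unknown parent revision: {parent_id}")
        revision = Revision(len(self.revisions) + 1, instruction, parent_id)
        self.revisions.append(revision)
        return revision

    def lineage(self, revision_id: int) -> List[str]:
        """Return every instruction from the first generation up to this revision."""
        by_id = {r.revision_id: r for r in self.revisions}
        chain = []
        current = by_id.get(revision_id)
        while current is not None:
            chain.append(current.instruction)
            current = by_id.get(current.parent_id)
        return list(reversed(chain))


log = RevisionLog()
draft = log.add("Generate: barista hands a latte to a customer")
city = log.add("change the background to a crowded city", parent_id=draft.revision_id)
warm = log.add("make the lighting warmer", parent_id=city.revision_id)
no_cup = log.add("remove the coffee cup", parent_id=draft.revision_id)
```

Because each revision points at its parent, a reviewer can branch two “small tweaks” off the same draft and still reconstruct exactly which instructions produced a given output.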

Continuity: the make-or-break feature

Kling O1 is leaning hard into continuity across shots and edits, which is exactly what marketers and agencies need for campaign systems, not one-off visuals.

Continuity is where a lot of gen-video tools still fall apart. You can get a beautiful 5 to 10 second clip, but the second you try to turn it into a sequence, the character’s face changes, the wardrobe drifts, and the same product becomes the product’s uncanny cousin.

O1’s multi-reference workflow suggests a clearer path:

- Use brand and product stills as anchors

- Use character references to reduce identity drift

- Use reference clips to guide motion and style direction

That means performance teams can chase what they actually want: high-volume iteration without sacrificing recognizable brand elements.
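
To make the anchoring idea concrete, here is a small sketch of how a team might assemble a reference set before submitting a job. It assumes the 10-image ceiling from Kling’s O1 materials; the role labels, the `Reference` type, and `build_reference_set` are hypothetical helpers, not part of any Kling or fal.ai SDK.

```python
from dataclasses import dataclass
from typing import Dict, List

# Upper bound cited in Kling's O1 materials; practical limits may be lower.
MAX_REFERENCES = 10


@dataclass(frozen=True)
class Reference:
    path: str
    role: str  # e.g. "product" or "character" (labels are illustrative)


def build_reference_set(groups: Dict[str, List[str]],
                        limit: int = MAX_REFERENCES) -> List[Reference]:
    """Take references round-robin across roles, then stop at `limit`.

    Round-robin selection keeps one role (say, product stills) from
    crowding out the others when the total exceeds the cap.
    """
    pools = [[Reference(path, role) for path in paths]
             for role, paths in groups.items()]
    selected: List[Reference] = []
    depth = 0
    while len(selected) < limit and any(depth < len(pool) for pool in pools):
        for pool in pools:
            if depth < len(pool) and len(selected) < limit:
                selected.append(pool[depth])
        depth += 1
    return selected


refs = build_reference_set({
    "product": [f"product_{i}.png" for i in range(6)],
    "character": [f"character_{i}.png" for i in range(6)],
    "style": ["style_0.png", "style_1.png"],
})
```

With 14 candidate images, this keeps four product stills, four character references, and both style frames, so every anchor type is represented inside the cap.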

For more on building repeatable workflows with Kling, see “Kling AI Now Enables Repeatable Video Automation.”

Natural-language editing: less NLE, more creative control

Text-based editing isn’t new as a concept, but it’s notable to see it positioned as a primary workflow inside a video generation system.

For non-technical teams, this matters because it changes who can iterate:

- You don’t need an editor for every minor revision

- You don’t need to open a timeline for every quick change

- You can respond to feedback faster, especially in paid social cycles

This does not replace professional editing for complex work. Timing, pacing, narrative rhythm, sound design, and compliance still need human judgment. But for common marketing edits, natural language can be a real throughput unlock.

API availability: can this plug into workflows?

This is where things get interesting for teams trying to scale output with systems, not just humans.

Kling O1 isn’t only a web tool story. It is already being distributed through API-first platforms, most notably fal.ai, which announced in its Kling O1 release that O1 is available exclusively as an API on fal.ai.

That single detail changes the category from “cool creator product” to “programmable video capability.” If you can call it via API, you can wire it into real flows:

- Generate drafts automatically from a content brief form

- Batch produce variants for A/B testing

- Trigger edits from review feedback (“version B but with warmer lighting”)

- Route outputs into your DAM, Slack approval, or project management pipeline
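
As a rough illustration of the batch-variant flow, the sketch below expands one content brief into a payload per combination of test variables. The payload field names (`variant_id`, `prompt`, `reference_images`, `choices`) are assumptions made for this example; the actual request schema depends on the API provider and is not documented here.

```python
from itertools import product
from typing import Dict, List


def build_variant_payloads(brief: Dict, axes: Dict[str, List[str]]) -> List[Dict]:
    """Expand a brief into one hypothetical payload per combination of axes."""
    names = list(axes)
    payloads = []
    combos = product(*(axes[name] for name in names))
    for number, combo in enumerate(combos, start=1):
        choices = dict(zip(names, combo))
        details = ", ".join(f"{name}: {value}" for name, value in choices.items())
        payloads.append({
            "variant_id": f"{brief['campaign']}-v{number:02d}",
            "prompt": f"{brief['base_prompt']} {details}",
            # Copy the list so later edits to one payload do not leak into others.
            "reference_images": list(brief.get("reference_images", [])),
            "choices": choices,  # kept for A/B reporting
        })
    return payloads


brief = {
    "campaign": "spring",
    "base_prompt": "A runner ties her shoes.",
    "reference_images": ["shoe_front.png", "shoe_side.png"],
}
payloads = build_variant_payloads(
    brief,
    {"setting": ["city", "trail"], "lighting": ["warm", "cool"]},
)
```

Two settings and two lighting options yield four payloads, each carrying the same reference images, which is the part that keeps the product recognizable across variants.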

Automation readiness snapshot

| What teams need | What O1 enables | Reality check |
|---|---|---|
| Batch variant generation | API-driven submissions for many runs | You still need QA gates and retry logic |
| Brand consistency | Multi-reference inputs to anchor identity | Expect drift in edge cases; keep human review |
| Fast revisions | Prompt-based edits on existing outputs | Not every edit will be perfect; watch for unintended changes |
| End-to-end automation | Composable via API plus workflow tools | The system is still on you: approvals, governance, logging |
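
The “QA gates and retry logic” in the first row could look something like the sketch below. It is deliberately provider-agnostic: `submit` stands in for whatever client call your platform exposes, and `passes_qa` for whatever automated check you trust. Both are hypothetical callables supplied by the caller, and the exponential backoff schedule is an assumption, not a documented requirement.

```python
import time
from typing import Any, Callable, Dict, Optional


def generate_with_qa(submit: Callable[[Dict], Any],
                     passes_qa: Callable[[Any], bool],
                     payload: Dict,
                     max_attempts: int = 3,
                     base_delay: float = 1.0,
                     sleep: Callable[[float], None] = time.sleep) -> Optional[Any]:
    """Retry submit(payload) until a result passes QA or attempts run out.

    Returns the first passing result, or None so the caller can route
    the job to human review instead of publishing a bad clip.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            result = submit(payload)
        except Exception:
            # Broad on purpose for the sketch: any failure counts as a failed attempt.
            result = None
        if result is not None and passes_qa(result):
            return result
        if attempt < max_attempts:
            # Exponential backoff: base_delay, 2x, 4x, ...
            sleep(base_delay * 2 ** (attempt - 1))
    return None
```

Injecting `sleep` keeps the function testable without real waiting, and returning `None` rather than raising makes the human-review fallback an explicit branch in the calling workflow.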

If your team lives in tools like Make or n8n, API access is what turns O1 into a production component rather than a “someone go generate videos” task.

Real-world readiness: what’s useful vs what’s shiny

Kling O1 is clearly aimed at real production constraints, but it still needs a grown-up read.

What looks genuinely production-friendly

- Unified generate plus edit loop reduces toolchain friction

- Reference-based control aligns with how brands actually work (assets, guidelines, repeatability)

- API availability via partners suggests it can be operationalized, not just demoed

What will still require humans

- Brand governance: claims, tone, visual appropriateness, rights and consent

- Quality control: continuity breaks will still happen in non-trivial scenes

- Final editorial judgment: pacing, story beats, platform-specific formatting

In other words: O1 can remove grind, not responsibility. That’s the collaboration model that scales: humans provide intent and taste, machines provide speed and iteration.

The bigger implication: video is becoming programmable

Kling O1 is another signal that generative video is evolving from tool into infrastructure. Once you can generate and revise in a single system, and call it via API, you can start treating video like modern content operations treat everything else:

- templated

- versioned

- automated

- measured

- iterated

That’s how creativity scales without burning out teams. Not by chasing magical one-click campaigns, but by building reliable human plus machine loops where the machine does the repetition and the humans keep the work intelligent.

If Kling O1’s unified approach holds up under real campaign pressure, it’s less “new model drop” and more “your video pipeline just got a backend.”