AMD Hummingbird XT and XTX Make Open Video Faster

AMD Hummingbird XT and XTX Make Open Video Faster

January 10, 2026

AMD’s AI team has released Hummingbird-XT as an open text-to-video model and alongside it, a long-video focused sibling, Hummingbird-XTX positioning the pair as a speed-and-practicality play in the open video ecosystem. This isn’t “AI video is cool” news. It’s “AI video might finally behave like a pipeline component” news.

For creators, performance marketers, and creative ops teams, the headline isn’t just that AMD shipped models. It’s that they’re explicitly chasing the constraints that kill video automation in the real world: slow sampling loops, inconsistent continuity, and the brutal compute bill that turns “let’s generate variants” into “never mind.”

Open video models don’t win on vibes. They win when they can generate fast enough, repeatably enough, that you can treat video like programmable inventory not a one-off experiment.

What AMD actually shipped

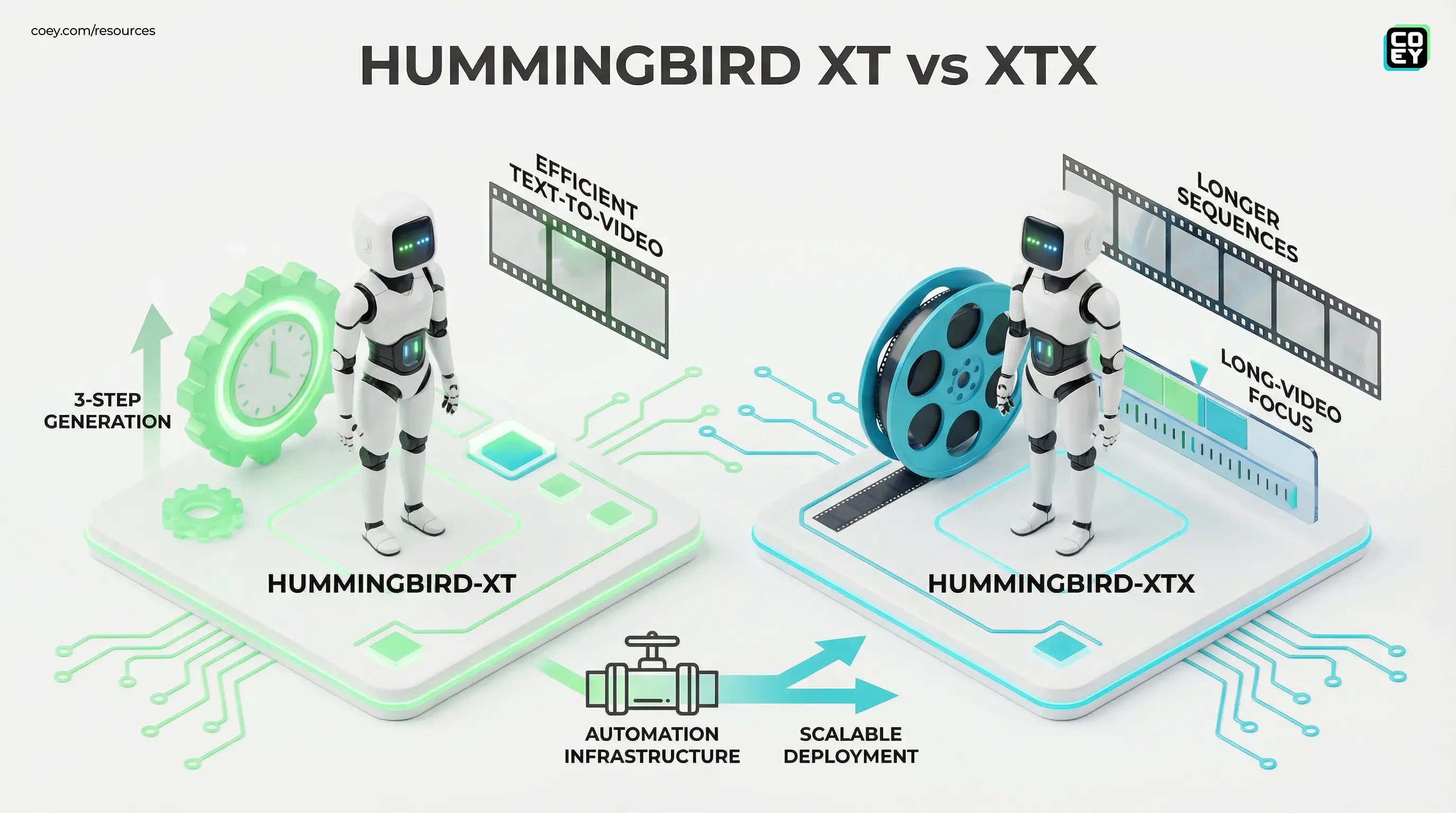

AMD’s Hummingbird release is best understood as a two-model strategy:

- Hummingbird-XT: an efficiency-optimized text-to-video model that AMD is highlighting for very low-step inference. AMD leadership has publicly described XT as supporting 3-step generation (positioned as a core efficiency feature) rather than this being purely community marketing.

- Hummingbird-XTX: a long-video focused variant. AMD describes XTX as DiT-based and oriented around generating longer sequences more efficiently.

AMD has also published an implementation-oriented ROCm blog that frames the broader Hummingbird-XT effort around efficient generation and deployment on consumer-grade AMD platforms, emphasizing practical inference strategies for longer outputs and smoother deployment realities. (If you’re an ops-minded team, that’s the tell: they’re thinking about serving, not just showing.)

AMD ROCm blog: Hummingbird-XT deployment notes

Why “3 steps” is the real headline

In open video, speed is not a nice-to-have. It’s the difference between a model you can integrate and a model that lives forever in a Discord “look what I made” thread.

Most diffusion-based video pipelines still suffer from a familiar trio of pain:

- Render latency: too many sampling steps to iterate like a normal creative team.

- Continuity drift: backgrounds morph, objects mutate, motion jitters great for surreal art, terrible for brand work.

- Variant throughput: generating 50 ad hooks is only useful if it finishes before the campaign window closes.

Hummingbird-XT’s “few step” posture is basically a bet that video generation needs to feel closer to image generation’s iteration loop fast enough to support selection, review, and re-run cycles without burning a day per concept.

Speed is not about impatience. Speed is about keeping humans in the loop without humans losing the will to live.

Automation potential: can it plug into workflows?

Here’s the pragmatic read: AMD shipped open artifacts (code plus weights), not a polished hosted product. That means you don’t get a friendly “paste prompt, get MP4” enterprise API out of the box but you do get the thing ops teams actually want long term: control.

Because the model is open, teams can productionize it in a few common patterns:

- Internal inference service: wrap generation in a small service your stack can call (HTTP endpoint), then route outputs to storage plus review.

- Queue-based batch rendering: treat generation like a render farm job submit prompts, run workers, collect outputs, retry failures.

- Hybrid creative pipelines: generate “first draft” clips locally or on-prem, then finish in traditional tools (captions, pacing, compliance).

Translation for non-technical leaders: this can be automated, but it’s “bring your own plumbing.” If your org already runs internal services (even lightweight ones), Hummingbird can become a callable component. If you don’t, it’s still usable but it will behave more like a power-user tool than a plug-and-play product.

API availability (what’s real vs. implied)

Official public hosted API: not the core story right now. AMD’s release is oriented around open distribution and deployment guidance rather than “here’s your production endpoint.”

Practical API reality: open models are inherently API-capable because you can stand up your own endpoint. The difference is effort and reliability engineering (monitoring, retries, cost controls, governance).

| Question | Answer | Ops implication |

|---|---|---|

| Is it callable? | Yes (self-host) | Wrap inference as a service or worker |

| Is it turnkey? | No (not a SaaS endpoint) | Plan for MLOps-style deployment effort |

| Is it automation-friendly? | Yes (batch plus queues) | Best ROI in variant generation plus selection flows |

Where these models matter most for marketers

Let’s keep it creator-to-creator: most marketing teams don’t need “cinema.” They need volume plus iteration plus on-brand repeatability. That’s why this release is interesting AMD is targeting the exact axis where performance marketers live.

High-leverage workflow fits

- Paid social variant factories: generate multiple hooks, scenes, and visual metaphors quickly, then let humans pick winners.

- Campaign localization: produce region-specific backgrounds or on-screen text treatments without restarting from scratch.

- Concept exploration: use gen-video as a pre-production ideation engine before committing to shoots or full motion design.

And yes, AMD’s hardware positioning matters. If you’re already invested in AMD GPUs, the “optimized for AMD platforms” story can reduce friction. If you’re not, you should still care because competitive pressure on open video efficiency benefits everyone (including your cloud bill).

If you want a good comparison point on “open model intended for throughput and workflows,” see our recent coverage of local-first open video: HunyuanVideo 1.5 Makes Local AI Video Practical.

Real-world readiness: what’s usable vs. what’s shiny

Open video models tend to over-promise in the abstract and under-deliver in production. So here’s the balanced take.

What looks legitimately practical

- Efficiency-first posture: AMD is explicitly pushing deployment and throughput, not just demo aesthetics.

- Open availability: AMD has stated the models are publicly released with downloadable artifacts (code and weights).

- Long-video intent (XTX): longer generation isn’t just a flex; it’s what makes sequences and explainers less painful.

What still needs proof in the trenches

- Consistency under brand constraints: “better continuity” is not the same as “your product never melts.”

- Workflow stability: batch rendering needs predictable failure modes, retries, and version pinning.

- Governance: you still need QA gates especially if outputs go anywhere near paid spend or public claims.

Open models are not set it and forget it. They’re “you own the capability,” which is better if you also own the process.

What to watch next

The adoption curve for open video models is usually defined by the ecosystem that forms around them: community inference servers, optimized kernels, wrappers, and UI front-ends that make them less “research repo” and more “production component.”

AMD has made the release discoverable via its official links and documentation, which accelerates that ecosystem effect. If you want to track the core release surface directly, start here: AMD Hummingbird T2V repo. For the image-to-video side of the broader Hummingbird line, AMD also hosts model artifacts on Hugging Face: AMD Hummingbird I2V.

Bottom line

Hummingbird-XT and Hummingbird-XTX are AMD making a clear statement: open video generation needs to be fast, scalable, and deployable not just impressive in a single cherry-picked clip. For marketing and creative ops teams, the upside is real: shorter iteration cycles, more variant throughput, and a path to treating video generation like automation infrastructure.

Just don’t confuse “open weights” with “ready-to-run factory.” The models are ingredients. The compounding advantage comes from building the human plus machine loop around them: structured briefs in, batch renders out, human selection and finishing, and governance that keeps your brand safe while your output goes brrrr.