Veo 3.1 Finally Ships True Vertical AI Video

January 14, 2026

Google DeepMind just pushed an update to Veo 3.1 that finally aligns generative video with how the internet actually ships content: native vertical (9:16) video generation. And yes, that’s a bigger deal than it sounds, because “just crop it” is how campaigns quietly die in post.

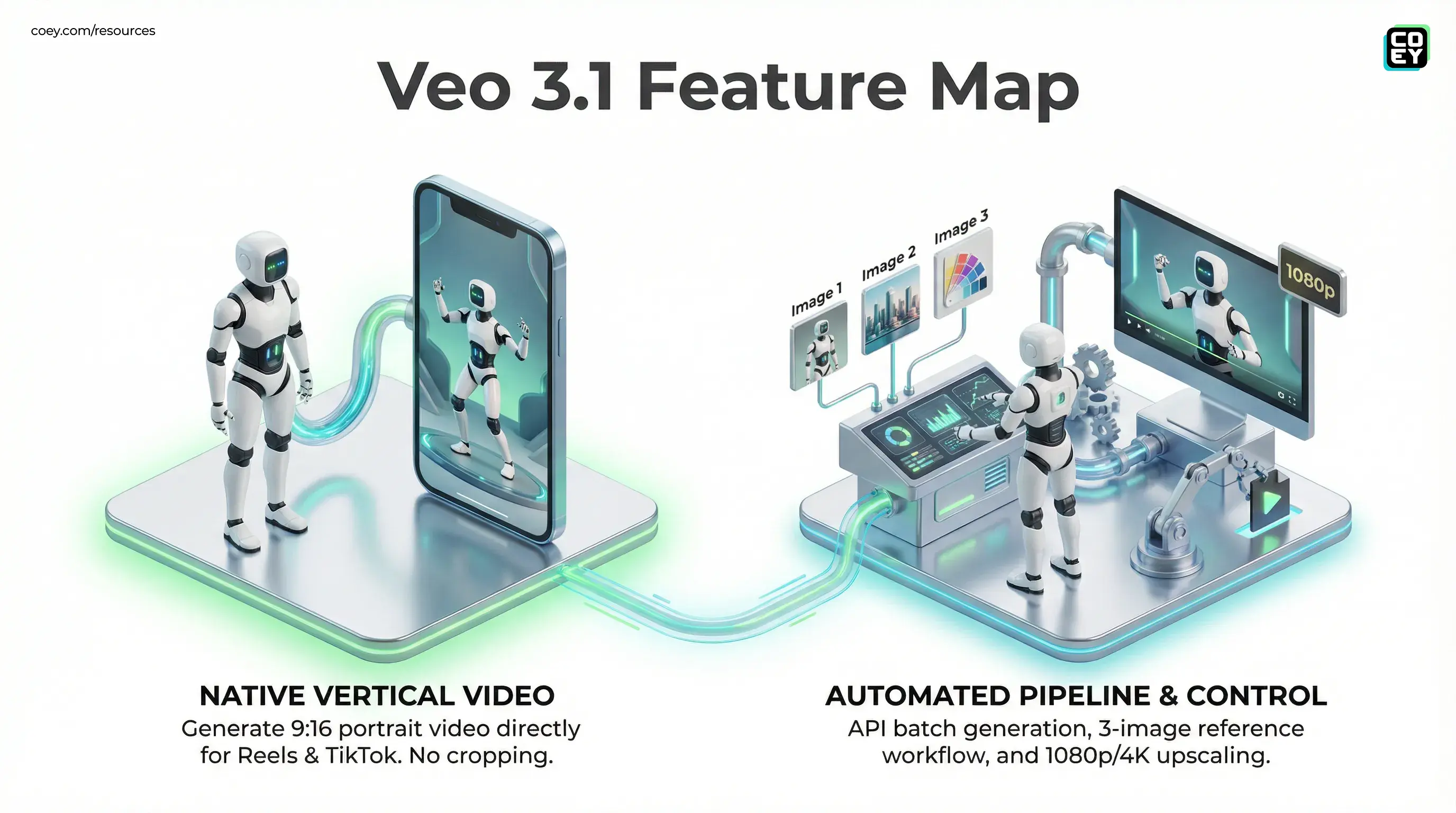

Alongside vertical output, Veo 3.1 adds stronger scene consistency, expands its “Ingredients to Video” reference workflow to up to three images, and offers integrated upscaling to 1080p (with 4K available in certain pro and enterprise surfaces). The practical signal here isn’t “look what AI can do.” It’s that Google is turning video generation into an automation primitive: something you can trigger, batch, and route through real pipelines, not just toy around with in a UI.

Vertical video is now native

Veo 3.1’s headline upgrade is simple: you can generate portrait video directly in 9:16 without doing the “edit suite gymnastics” routine. That matters because vertical is no longer just a format; it’s the default behavior of attention.

Previously, even high-quality AI video teams would generate in landscape and then:

- crop and reframe by hand (time sink),

- use auto-reframing tools (often janky), or

- regenerate the entire clip hoping the subject stays in frame (expensive prompt roulette).

Translation: native 9:16 output removes a whole class of “death by a thousand micro-edits” that kills speed in short-form production.
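To put a number on the cropping cost, here is a minimal sketch of the arithmetic behind a centered 9:16 crop of a landscape frame. The function name and the 1920x1080 example are illustrative assumptions, not part of any Google tool.

```python
def vertical_crop(width: int, height: int, target_w: int = 9, target_h: int = 16):
    """Return (crop_w, crop_h, kept_fraction) for the largest centered
    target_w:target_h crop that fits inside a width x height frame."""
    if width * target_h >= height * target_w:
        # Source is at least as wide as the target ratio:
        # keep the full height and trim the sides.
        crop_h = height
        crop_w = height * target_w // target_h
    else:
        # Source is narrower than the target ratio:
        # keep the full width and trim top and bottom.
        crop_w = width
        crop_h = width * target_h // target_w
    kept = (crop_w * crop_h) / (width * height)
    return crop_w, crop_h, kept


crop_w, crop_h, kept = vertical_crop(1920, 1080)
print(f"{crop_w}x{crop_h} crop keeps {kept:.1%} of the landscape frame")
```

For a 1920x1080 source, the crop keeps less than a third of the original pixels, which is why a subject framed for landscape so often ends up cut off or soft after reframing.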

Google’s positioning is also telling: this isn’t framed as a creator “nice-to-have.” It’s framed as a workflow capability, especially through “Ingredients to Video,” where portrait reference images can guide composition from the start.

Consistency upgrades: less drift, fewer reshoots

Generative video has always had a reliability problem: characters morph, objects teleport, backgrounds wobble like they’re remembering themselves mid-shot. Veo 3.1’s update focuses on tightening that up, with improved character, object, and background consistency across scenes.

For marketers and creative leads, this is the difference between:

- “We got one good clip.” (cool demo, unusable campaign)

- “We can get five usable variants.” (repeatable pipeline)

Consistency is what makes automation worth it. If outputs don’t stay stable, you can’t safely batch-generate, A/B test, or build templated campaign systems without humans cleaning up every result.

Ingredients to Video gets more controllable

The “Ingredients to Video” workflow is Veo’s growing wedge into real production: you feed reference images and prompts, and it generates video that’s supposed to respect those inputs instead of freelancing.

With Veo 3.1, Google expands this to up to three reference images. That sounds minor, but in practice it’s a control unlock:

- Image 1: product shot or subject anchor

- Image 2: background or setting reference

- Image 3: style, lighting, or wardrobe cue

That’s how brands actually work. Creative direction isn’t a single JPEG; it’s multiple constraints at once. Three inputs don’t solve brand consistency overnight, but they raise the ceiling on “usable without repainting it in post.”
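One way a team might encode the three-image pattern is as a small, validated request object that gets built before anything is sent to a model. This is a sketch under stated assumptions: `ReferenceImage`, `IngredientsRequest`, and the role labels are hypothetical names for internal tooling, not the Gemini API or Vertex AI schema. Only the three-image limit comes from the article.

```python
from dataclasses import dataclass, field

MAX_REFERENCE_IMAGES = 3  # limit described for Veo 3.1's Ingredients to Video


@dataclass
class ReferenceImage:
    path: str
    role: str  # hypothetical label, e.g. "subject", "setting", "style"


@dataclass
class IngredientsRequest:
    prompt: str
    aspect_ratio: str = "9:16"
    references: list[ReferenceImage] = field(default_factory=list)

    def __post_init__(self):
        # Fail early, before any paid generation call is made.
        if not self.prompt.strip():
            raise ValueError("prompt must not be empty")
        if len(self.references) > MAX_REFERENCE_IMAGES:
            raise ValueError(
                f"at most {MAX_REFERENCE_IMAGES} reference images are allowed, "
                f"got {len(self.references)}"
            )


request = IngredientsRequest(
    prompt="Slow push-in on the product, golden hour light",
    references=[
        ReferenceImage("product.png", "subject"),
        ReferenceImage("rooftop.png", "setting"),
        ReferenceImage("film_look.png", "style"),
    ],
)
print(len(request.references), "references,", request.aspect_ratio)
```

Validating at construction time keeps malformed briefs out of the queue, so the limit is enforced in one place rather than in every script that builds a request.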

Resolution and upscaling: where “demo” becomes “deliverable”

Veo 3.1 also adds integrated upscaling: 1080p is supported, and 4K is available on some higher-tier and enterprise surfaces.

This matters less for the “AI looks cool” crowd and more for teams shipping paid placements and polished creative. You can’t run a serious campaign on mushy, artifact-heavy outputs without your brand looking like it’s sponsored by 2012 compression.

| Output option | What it’s good for | Workflow reality |
|---|---|---|
| Native vertical 9:16 | Shorts, Reels, TikTok | Less reframing and edit debt; faster publish |
| 1080p upscale | Paid social, brand channels | More shippable without external upscalers |
| 4K upscale (select higher tiers) | Premium placements, high-end edits | Best if you’re already in Google’s pro or enterprise stack |

Where Veo 3.1 actually lives

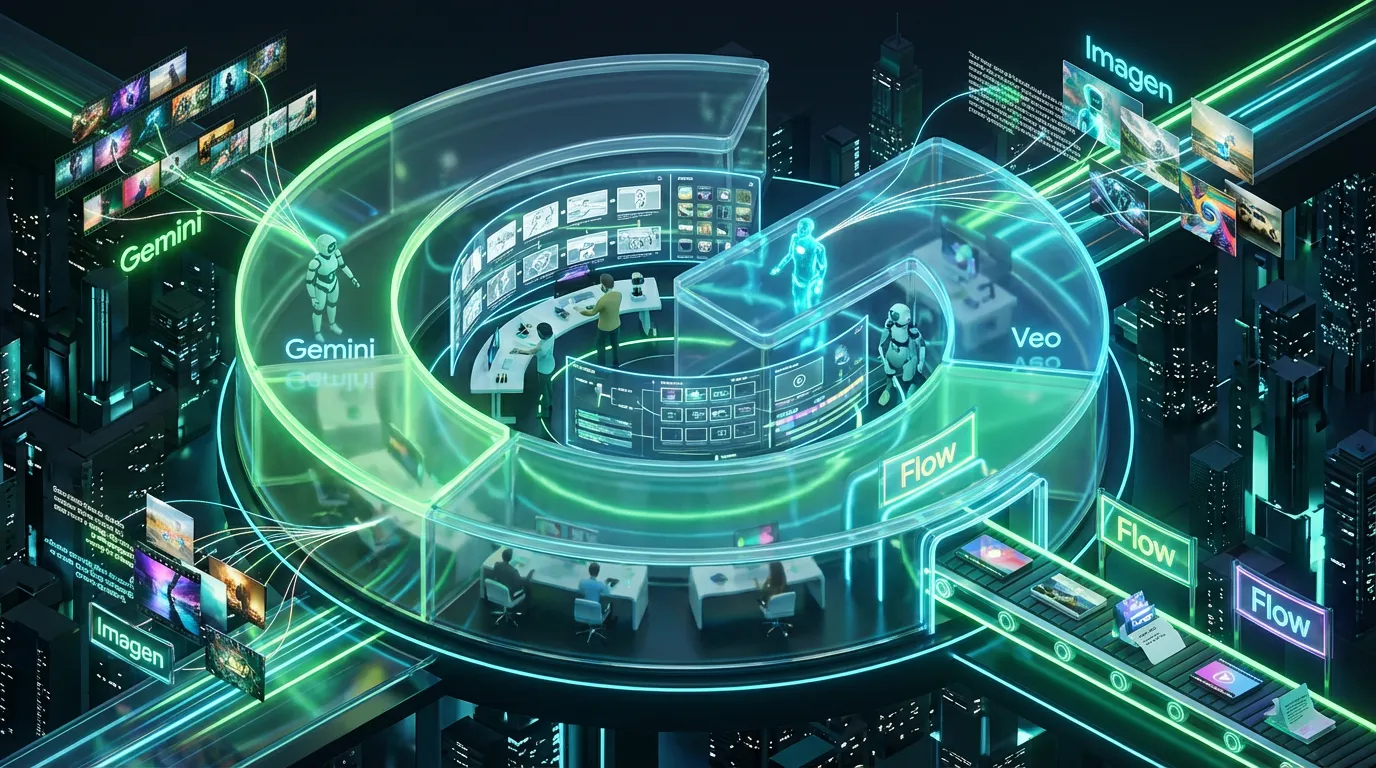

Google is distributing Veo 3.1 across consumer and professional surfaces, which is the other big signal: they want this model to be both a creator tool and a programmable capability.

- Gemini app (consumer creation plus experimentation)

- YouTube surfaces, including Shorts-adjacent creation (availability can vary by product and rollout)

- Flow (Google’s AI filmmaking tool)

- Gemini API (developer access and automation potential; Veo 3.1 is offered in paid preview in the API)

- Vertex AI (enterprise-grade deployment plus scaling)

If you want the COEY breakdown of what Veo 3.1 changes for vertical and workflow control, see: Veo 3.1 Lets You Control Vertical Video and End Frames.

Automation lens: is it pipeline-ready?

Here’s the grown-up question: can Veo 3.1 plug into workflows without humans babysitting it?

Mostly yes, if you’re using the API surfaces. Google’s own developer framing positions Veo 3.1 as accessible via the Gemini API (and via Vertex AI for enterprise), which is the difference between “cool feature” and “automation building block.” For details, see Google’s developer announcement, “Veo 3.1 in the Gemini API.”

For non-technical teams, API access means:

- Batch generation (100 variations overnight instead of 3 clips manually)

- Trigger-based content (generate new creative when a product launches, a price changes, or a campaign needs refresh)

- System integration (send outputs into storage, review, and scheduling tools automatically)
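The batch idea above can be sketched as a small planner that expands prompts and reference sets into job specs, then hands each one to a submit function. Everything here is an assumption for illustration: `plan_batch`, `run_batch`, the job dictionary fields, and `fake_submit` are hypothetical, and `submit` stands in for whatever wrapper a team writes around the real Gemini API or Vertex AI call.

```python
from itertools import product


def plan_batch(prompts, reference_sets, aspect_ratio="9:16"):
    """Expand every prompt x reference-set pair into one job spec (a plain dict)."""
    jobs = []
    for index, (prompt, refs) in enumerate(product(prompts, reference_sets)):
        jobs.append(
            {
                "job_id": f"variant-{index:03d}",
                "prompt": prompt,
                "references": list(refs),
                "aspect_ratio": aspect_ratio,
            }
        )
    return jobs


def run_batch(jobs, submit):
    """Send each job to `submit`, a caller-supplied function wrapping the real API."""
    return {job["job_id"]: submit(job) for job in jobs}


def fake_submit(job):
    # Stand-in for a real generation call; returns a placeholder status string.
    return f"queued:{job['job_id']}"


jobs = plan_batch(
    prompts=["Sneaker on a rooftop at dusk", "Sneaker splashing through a puddle"],
    reference_sets=[["product.png"], ["product.png", "street.png", "neon_style.png"]],
)
results = run_batch(jobs, fake_submit)
print(len(jobs), "jobs planned")
```

Keeping the plan as plain data means the same job list can be triggered by a product launch, a price change, or a scheduler, and the results can be routed to storage or review tools without touching the planning code.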

| Workflow move | API advantage | What’s still human |
|---|---|---|
| Generate vertical variants | Programmatic 9:16 output at scale | Selecting the best takes |
| Brand steering via references | Up to 3-image conditioning reduces drift | Providing good ingredients and constraints |
| High-res deliverables | 1080p or 4K upscaling built-in (where enabled) | Final edit polish and compliance review |

Reality check: “API available” doesn’t mean “hands-free.” It means you can build a conveyor belt, but you still need QA gates, budget caps, and brand checks unless you enjoy explaining weird fingers to your CMO.
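A conveyor belt with guardrails might look something like the sketch below: a loop that stops spending once a budget cap is hit and sorts each result through a QA check. The function names, the per-clip cost, and the duration-based QA rule are all placeholder assumptions, not Google pricing or a real quality metric.

```python
def run_with_guardrails(jobs, generate, qa_check, cost_cents_per_clip, budget_cents):
    """Generate clips until the budget cap is reached, then sort results by QA outcome."""
    spent = 0
    approved, rejected, skipped = [], [], []
    for job in jobs:
        if spent + cost_cents_per_clip > budget_cents:
            # Budget cap: do not call the generator at all for this job.
            skipped.append(job)
            continue
        clip = generate(job)
        spent += cost_cents_per_clip
        # QA gate: only clips that pass go forward to human review.
        if qa_check(clip):
            approved.append(clip)
        else:
            rejected.append(clip)
    return {
        "approved": approved,
        "rejected": rejected,
        "skipped": skipped,
        "spent_cents": spent,
    }


def fake_generate(job):
    # Stand-in generator: pretends "job-2" came back as an empty clip.
    return {"job": job, "duration_s": 0 if job == "job-2" else 8}


def fake_qa(clip):
    # Placeholder check; a real gate might test framing, artifacts, or brand rules.
    return clip["duration_s"] > 0


report = run_with_guardrails(
    ["job-1", "job-2", "job-3", "job-4", "job-5"],
    fake_generate,
    fake_qa,
    cost_cents_per_clip=100,
    budget_cents=300,
)
print(len(report["approved"]), "approved,", len(report["skipped"]), "skipped")
```

Costs are tracked in integer cents to avoid floating-point drift, and skipped jobs are returned rather than dropped so they can be retried when the budget resets.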

Provenance and trust: SynthID is in the mix

Google also continues to attach provenance infrastructure to outputs: videos generated using Google’s tools include an imperceptible SynthID watermark. Google has also rolled out Gemini app capabilities that can help users check for SynthID in videos generated or edited with Google AI tools.

For brands, this is less about virtue signaling and more about risk management. The more AI video gets used in public-facing campaigns, the more you’ll want:

- internal tracking (what model generated this?)

- audit trails (who approved it?)

- channel policy alignment (what disclosures are required?)
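The three tracking needs above can be captured in a simple internal record written at approval time. This is a hedged sketch of bookkeeping only: it does not read or verify SynthID, and `ProvenanceRecord`, its fields, and the example values are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class ProvenanceRecord:
    asset_sha256: str  # fingerprint of the exact file that was approved
    model: str         # what model generated this?
    approved_by: str   # who approved it?
    disclosure: str    # what disclosure does the channel require?
    recorded_at: str   # UTC timestamp in ISO 8601 format


def make_record(video_bytes: bytes, model: str, approved_by: str, disclosure: str):
    return ProvenanceRecord(
        asset_sha256=hashlib.sha256(video_bytes).hexdigest(),
        model=model,
        approved_by=approved_by,
        disclosure=disclosure,
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(record: ProvenanceRecord) -> str:
    # One JSON object per line makes the audit log easy to append to and search.
    return json.dumps(asdict(record), sort_keys=True)


record = make_record(
    b"fake video bytes",
    model="veo-3.1",
    approved_by="creative.lead@example.com",
    disclosure="AI-generated label required",
)
print(to_log_line(record))
```

Hashing the file ties the approval to one specific render, so a later re-export or edit shows up as a different asset in the log.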

What this means for real teams

Veo 3.1’s update is not a “new era of cinema” moment. It’s something better: an ops upgrade that removes friction exactly where modern content teams bleed time, namely short-form formatting, consistency, and rerender chaos.

For executives: this is a sign that AI video is moving from experimental to operational, especially as Google keeps routing the capability through Vertex AI and the Gemini API.

For marketing and creative ops: the win is fewer handoffs, fewer edits, and more throughput. Vertical-first generation plus better reference control is exactly the kind of reliability that lets human creativity scale.

And for everyone tempted to overhype it: you still need a system. Without QA gates and structured inputs, you’ll just generate faster chaos. With them, Veo 3.1 starts to look like a legitimate collaborator in a production pipeline, one that finally understands the internet is vertical.