Luma Ray3 doubles down on “reasoning” AI video, now with native HDR for real production

September 21, 2025

Reasoning meets color science: Luma’s Ray3 aims past the demo reel

Luma has launched Ray3, a new-generation video model that leans into “reasoning” (context-aware planning across shots) while outputting native High Dynamic Range. If you care about sequence continuity, brand fidelity, and post-friendly files, this matters. The company’s overview and user guide outline a leap from single-shot novelty to sequence-level intent, plus studio-grade HDR formats for finishing workflows (Luma Ray3 user guide).

COEY take: Ray3 isn’t just “prettier video.” It is a shot at automating the unglamorous glue in production: continuity, consistency, and color, so humans can spend more time directing and less time fixing.

What “reasoning” means for AI video

Reasoning, in practice, means Ray3 can plan across a sequence rather than treating every clip as an isolated island. That shows up as steadier camera language, more coherent motion, and fewer jarring jumps when you string clips together. For marketers, that’s the difference between “good enough for social” and “credible client preview.” For creators, it’s the path from mood boards to scenes with intent.

- Better temporal coherence: characters, motion, and scene logic carry between shots instead of resetting every time.

- Draft mode for speed: an exploration tier that generates concept passes significantly faster before you commit to high-detail renders.

- Input flexibility: text-to-video and image-to-video pipelines help you lock tone and composition quickly before refining.

Native HDR: production-grade from the jump

Ray3’s other headline is native HDR. Instead of faking dynamic range in post, the model can produce high bit-depth outputs suitable for professional grading pipelines (ACES/EXR is explicitly supported). That matters for brand color, compositing, and blending AI shots with live action without the “why does this look crushed?” problem.

- High bit-depth outputs: Ray3 supports 10-, 12-, and 16‑bit HDR, including ACES2065‑1 EXR sequences, which speaks to real finishing needs (Post Magazine).

- Cleaner handoff to editorial: grading latitude and highlight detail survive the journey into Premiere, Resolve, or Nuke.

- Brand safety: saturated product colors and skin tones hold up across channels, minimizing painful “doesn’t match the hero” feedback loops.
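To put the bit-depth figures in perspective, here is a small sketch of how many distinct values per channel each depth allows. It assumes plain integer encoding, which is a simplification: EXR files typically store 16-bit half-float samples, so the 16-bit figure counts bit patterns rather than evenly spaced steps. The function names are illustrative and are not part of any Luma or Adobe tooling.

```python
def code_values(bits: int) -> int:
    """Number of distinct integer code values per channel at a given bit depth."""
    return 2 ** bits


def levels_table(depths=(8, 10, 12, 16)):
    """Map each bit depth to (code values, multiple of the 8-bit count)."""
    base = code_values(8)
    return {bits: (code_values(bits), code_values(bits) // base) for bits in depths}


# Print a quick comparison against 8-bit SDR as the baseline.
for bits, (levels, ratio) in levels_table().items():
    print(f"{bits:>2}-bit: {levels:>6} values per channel ({ratio}x 8-bit)")
```

More values per channel means finer gradations, which is why higher bit depths are less prone to visible banding when a grade stretches shadows or highlights.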

Access: Dream Machine and a new Adobe lane

Ray3 runs in Luma’s own Dream Machine service and (headline alert) inside Adobe Firefly. Adobe’s announcement frames Ray3 as an early-access model embedded in Firefly’s Text to Video feature for short cinematic clips (up to roughly 10 seconds), with content credentials and Creative Cloud sync baked in (Adobe announcement).

- Firefly integration: generate clips, then hand off to Premiere Pro or After Effects using your normal CC asset pipeline.

- Promotional window: Adobe is offering a limited-time period with generous usage for Firefly and Creative Cloud Pro subscribers, encouraging real workflow tests.

- Pricing and tiers: on Luma’s side, Ray3 availability maps to subscription levels, with draft vs. high-res/HDR output access varying by plan (Luma pricing).

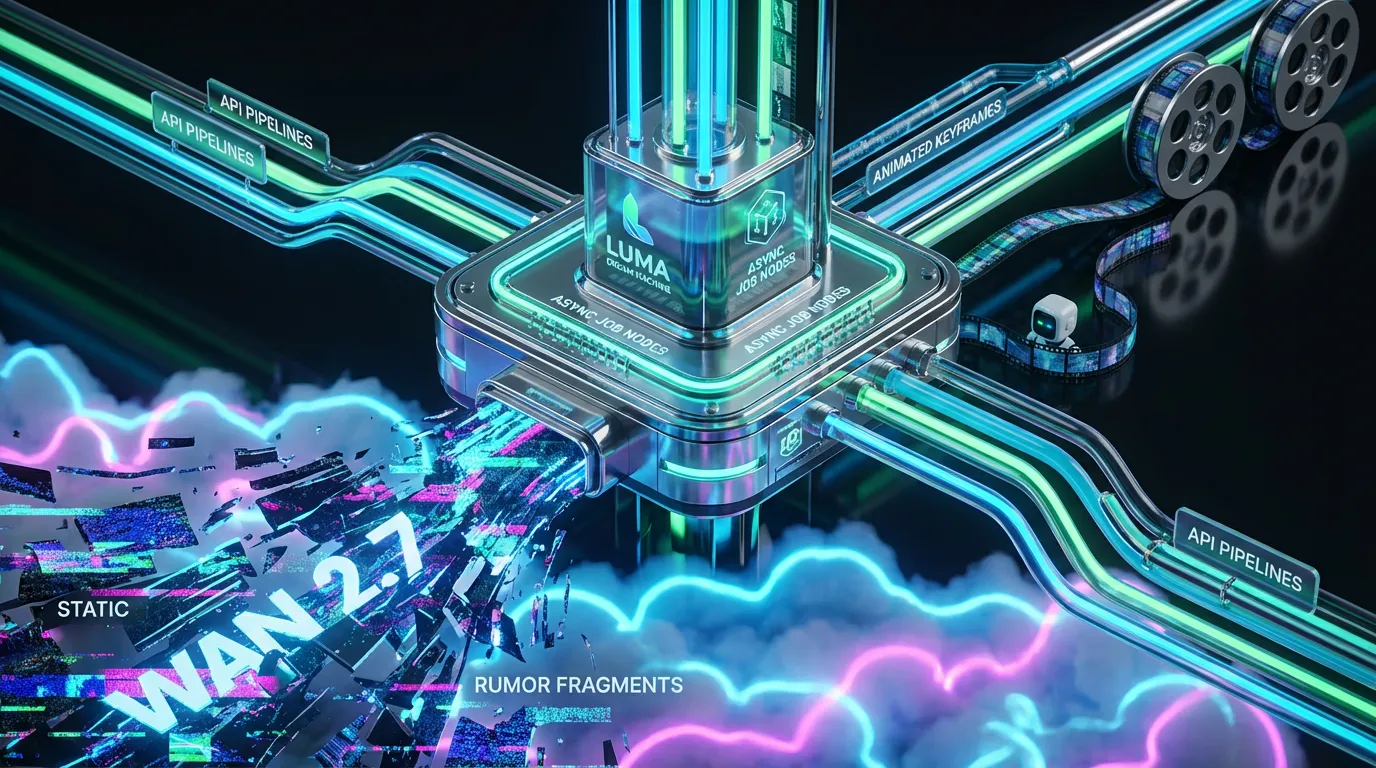

Automation lens: can you plug Ray3 into your stack?

Short answer

Partially, today. If your stack lives in the Adobe ecosystem, Firefly + Creative Cloud gives you a controllable path for ingest, review, and edit. Luma’s Dream Machine offers web-based runs and account tiers for throughput. For fully headless automation (programmatic generation via API, batch jobs, and webhooks), the situation is evolving.

What’s ready now

- Sequence-aware generation that reduces manual shot stitching and re-rendering.

- Native HDR outputs (including ACES EXR) that slide into grading and VFX without conversion drama.

- Adobe Firefly integration for streamlined creative iteration inside CC libraries.

What’s not (yet) plug-and-play

- Public, documented API for Ray3: Ray3’s direct API endpoints are not broadly published at time of writing. If you’re imagining n8n/Make/Zapier on day one, temper expectations.

- Programmatic shot lists: Reasoning is promising, but specifying complex multi-shot storyboards with precise timing and continuity via structured inputs is still emerging.

- Audio-aware generation: Ray3 focuses on picture; automated sound design, VO alignment, and music timing still require pipeline glue.

| Automation area | Doable today | What’s missing for full automation |
|---|---|---|
| Bulk generation | Queue drafts, iterate, upscale to HDR in-app | Headless API with job batching, rate controls, and webhooks |
| Brand consistency | Prompt/style references; image-to-video for look lock | Formal style tokens, reference libraries, version pinning |
| Post handoff | ACES EXR/HDR export; CC sync via Firefly | Direct EDL/XML timeline export, LUT roundtrips, color pipeline presets |
| Compliance & provenance | Content Credentials via Adobe Firefly | End-to-end provenance across non-Adobe hops and archives |

Current vs. future: what creators and marketers can do now

Do now

- Pitch at speed: Use Draft mode to spin 10–20 concept clips, pick winners, and only upscale what sells the idea.

- Keep color intact: Start HDR-native to avoid banding and crushed highlights when grade time hits.

- Storyboard smarter: Use sequence-aware prompts to line up A/B/C variants of the same mini-narrative for stakeholder review.

- Blend with live action: Composite EXR sequences against plates with far less cleanup than LDR web exports.
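The draft-then-upscale habit can be tracked with very little tooling. The sketch below is a hypothetical triage helper, not a Luma or Adobe API: the `Draft` fields, the 0 to 10 review scale, and the thresholds are all assumptions for illustration. It simply shortlists the highest-rated drafts so only those go on to a high-detail HDR render.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    clip_id: str   # hypothetical identifier you assign to each draft clip
    prompt: str    # the prompt used for the draft
    score: float   # reviewer rating on an assumed 0-10 scale


def pick_winners(drafts, keep=3, min_score=7.0):
    """Return up to `keep` drafts scoring at least `min_score`, best first."""
    shortlisted = [d for d in drafts if d.score >= min_score]
    shortlisted.sort(key=lambda d: d.score, reverse=True)
    return shortlisted[:keep]


# Example review round with made-up clips and scores.
drafts = [
    Draft("a1", "sunrise product reveal", 8.5),
    Draft("a2", "sunrise product reveal, handheld", 6.0),
    Draft("b1", "studio macro, slow push-in", 9.1),
    Draft("b2", "studio macro, orbit", 7.2),
    Draft("c1", "street scene, night", 7.9),
]

for d in pick_winners(drafts):
    print(f"upscale {d.clip_id}: {d.prompt} (score {d.score})")
```

Keeping the shortlist explicit also leaves a record of which concepts were rejected, which helps when stakeholders ask to revisit an earlier direction.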

Wait for it

- Headless orchestration: Until Ray3 exposes stable endpoints, large-scale nightly runs and auto-curation remain semi-manual.

- Shot-accurate control: Expect iterative improvement in frame-accurate beats, character continuity across many shots, and precise camera choreography via structured inputs.

- Audio-aware sequences: Picture is ahead of sound; you’ll still rely on DAW timelines or AI audio tools to sync VO and music.

Multi-format relevance: how Ray3 ripples across text, photo, video, audio

- Text → Video: Natural-language briefs become draft sequences you can quickly re-prompt or branch.

- Image → Video: Upload look references or product shots to anchor brand palette and lighting.

- Video → Video: Use generated EXRs as inserts, transitions, or VFX elements in live-action edits.

- Audio tie-in: Pair Ray3 clips with VO/music in your NLE; once APIs land, the automation opportunity is sequencing visuals to beat maps.

Who should care, and why

- Brand and performance marketers: Faster A/B creative at consistent quality. HDR keeps product colors true across placements.

- Agencies and post houses: Previz and pitch reels that do not collapse under grading. Less time fixing, more time directing.

- Indie filmmakers and content studios: Sequence-level control on a budget; better integration with professional finishing tools.

- Platform builders and automation teams: Watch the API space; the moment endpoints open, there is real upside in batch generation, automated creative QA, and asset routing.

The fine print: availability and pricing

Ray3 is live within Luma’s Dream Machine with plan-based access to draft and high-res/HDR outputs. If your team wants to road-test inside Adobe’s ecosystem, Firefly provides a clear on-ramp with Creative Cloud handoff and provenance tags (Luma pricing; Adobe announcement).

Bottom line: real-world readiness

Ray3 pushes AI video out of the novelty zone and into credible production territory. The headline is not just “looks better”; it automates more of what used to be manual: sequence logic, color headroom, and handoff to finishing. If you’re a creator or marketer scaling content, the playbook looks like this: brainstorm with Drafts, lock tone with image references, graduate to HDR finals, and finish inside your NLE. For full-stack automation (endpoints, batch controls, webhooks), keep your eyes on the roadmap; the first teams to wire Ray3 into creative ops will set the new speed limit.

Until then, the combination of reasoning + native HDR is a very real upgrade for anyone shipping polished video at pace. That’s not hype; that’s the workflow getting lighter where it counts.