Your Content Strategy for the Answer Engine Era

October 8, 2025

Stop refreshing your SEO leaderboard. The age of the blue link is over. Today, search is conversational, synthetic, and powered by AI answer engines that riff, compress, and sometimes improvise truths about your brand. If you are still tuning sites for a static Google SERP or losing sleep over backlink pyramids, you are prepping for yesterday’s game. It is time for a reality check: optimizers, meet the generative answer engine. Your content factory cannot win this new world with last decade’s pipes.

The Zero-Click Era Is Here. Resistance Is Futile.

GenAI search panels, Google AI Overviews (formerly SGE), and Meta AI live answers mean users get what they need right on the results page or inside a chat window without visiting your site. This does not kill content strategy. It shifts the battleground upstream. Whoever feeds the answer engine clear, recent, and credibly structured material wins mindshare, brand mentions, and top-funnel presence. Generic “Best X in 2025” listicles are flatlining. Authoritative, well-attributed, and uniquely voiced content still earns citations in AI summaries. Agile teams win more than they lose.

- AI-driven answers shrink total clicks for many queries.

- Brands with structured, machine-friendly, and trusted material are surfaced, cited, and even paraphrased across conversational interfaces.

The playbook is not “please click my link.” The playbook is “make the engine cite me and get my explanations into the final answer.”

The Modern Triangle: SEO, AEO, GEO

| Discipline | Primary Goal | What You Optimize | Signature Tactics | Key Risks | North Star Metric |
|---|---|---|---|---|---|
| SEO | Rankings and Clicks | Page Structure, Site Architecture | Technical SEO, Linkbuilding, Intent Match | Commodity Content, Slow Feedback | Organic Sessions, CTR |
| AEO | Win Featured/Direct Answers | Snippets, Entities | FAQ/HowTo Markup, Concise Answers | Format Overfitting, Shallow Answers | Snippet Inclusion Rate |
| GEO | Be paraphrased and cited correctly | Knowledge Objects, Provenance Trails | Entity Graphs, Source Markup, Model-Friendly Context | Hallucinations, Brand Voice Drift | Answer Share, Citation Quality |

The stack has not been erased, just layered. SEO earns the crawl. AEO gets you into answer boxes. GEO (Generative Engine Optimization) helps you win in AI-driven result feeds and conversational agents by packaging content for models and provenance, not just human readers.

How to Reboot Your Content Ops for Answer Engines

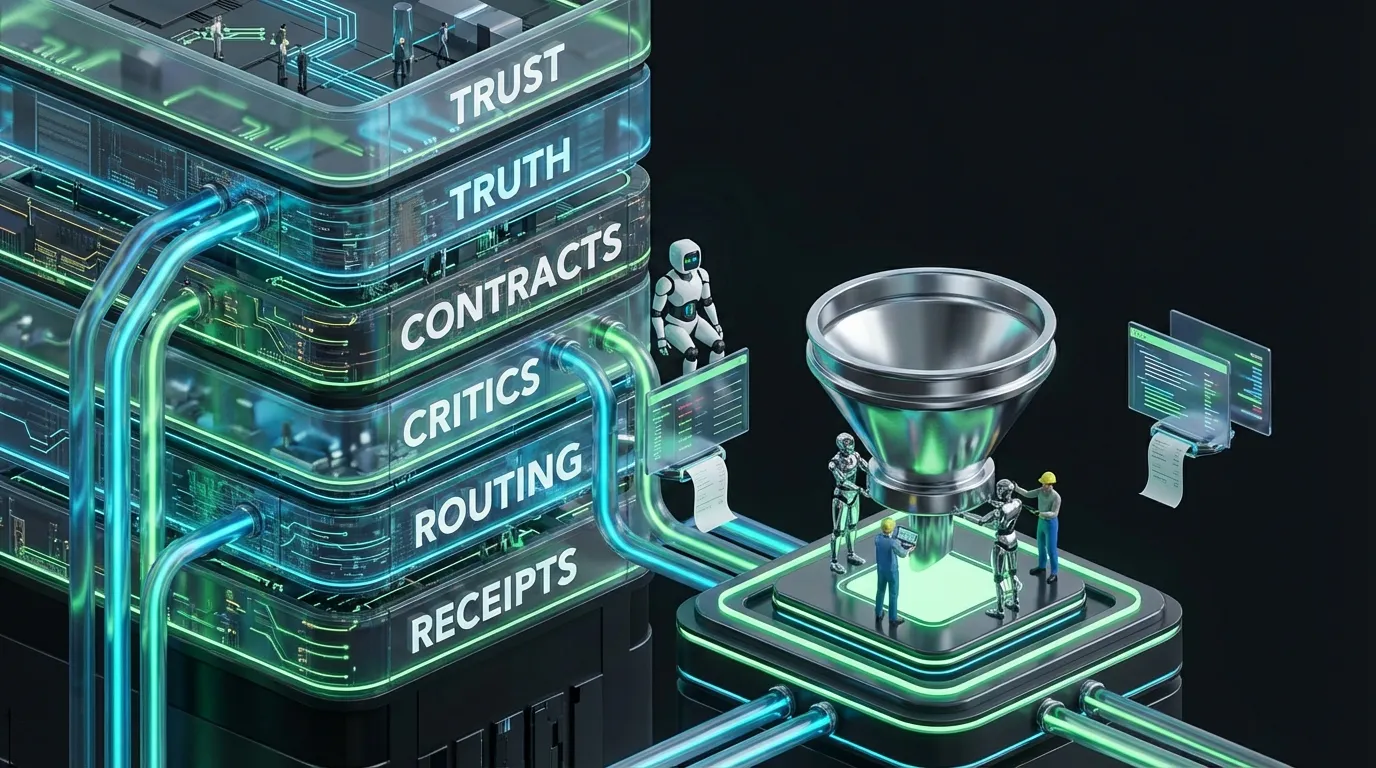

1. Build a Real Source of Truth

- Develop an entity graph for your business: products, features, claims, pricing, compliance, FAQs, studies.

- Give each object a unique, addressable ID and a version. Think modular, reusable “knowledge atoms.”

- Write canonical definitions that are terse, unambiguous, and maintained. LLMs reward crispness and clarity.
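
Here is one way the “knowledge atom” idea could look in code. This is a minimal sketch, not a prescribed schema: the `KnowledgeAtom` and `EntityGraph` names, the field layout, and the rule that versions must strictly increase are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KnowledgeAtom:
    """One addressable, versioned unit of canonical truth (hypothetical shape)."""
    atom_id: str         # unique, addressable ID, e.g. "prod_x"
    kind: str            # "product", "claim", "faq", ...
    version: int         # bumped on every approved change
    definition: str      # terse canonical wording
    related: tuple = ()  # IDs of other atoms this one points to


class EntityGraph:
    """Minimal in-memory registry keyed by atom ID."""

    def __init__(self):
        self._atoms = {}

    def upsert(self, atom):
        # Assumed rule: an update must carry a higher version than the stored one.
        current = self._atoms.get(atom.atom_id)
        if current is not None and atom.version <= current.version:
            raise ValueError(f"{atom.atom_id}: version must increase")
        self._atoms[atom.atom_id] = atom

    def get(self, atom_id):
        return self._atoms[atom_id]

    def dangling_links(self):
        """Return (atom_id, missing_id) pairs whose target is not registered."""
        return [
            (atom.atom_id, ref)
            for atom in self._atoms.values()
            for ref in atom.related
            if ref not in self._atoms
        ]


graph = EntityGraph()
graph.upsert(
    KnowledgeAtom("prod_x", "product", 1, "<canonical definition>",
                  related=("ref_study_210",))
)
print(graph.dangling_links())  # -> [('prod_x', 'ref_study_210')]
```

The dangling-link check is the useful part: it catches a claim that points at a study nobody has registered yet, before a model ever sees it.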

2. Prewrite Structured Answers, Not Just Posts

- Start with the exact question a real user would ask. Answer in 2 to 4 sentences, citing sources and claims.

- Add a short “next question” context. You are assembling context for summarizers.

- Link every claim to its keystone source inside your system, not buried in PDFs.

3. Publish for Humans and for Machines

- Maintain human-readable pages and layer in modern schema markup.

- Release machine-friendly companion files: compressed objects with dates and provenance baked in.

- Track versioning in companions so updates propagate without manual rewrite.

4. Treat Every Media Type as a Data Point

- Ensure alt text matches your canonical definitions, not just generic descriptions.

- Create time-coded transcripts from key podcasts or videos. Answer engines crawl these cues.

- Standardize all product and variant names across thumbnails, captions, and slide decks. Consistency fuels models.

Steal These Answer Engine Templates

Canonical Answer in JSON-LD

    {
      "@context": "https://schema.org",
      "@type": "FAQPage",
      "mainEntity": [{
        "@type": "Question",
        "name": "What is <Product X>?",
        "acceptedAnswer": {
          "@type": "Answer",
          "text": "<Canonical answer, who benefits, and why it stands out>",
          "identifier": "answer_product_x_2",
          "dateModified": "2025-09-30",
          "about": [{"@type":"Thing","name":"Product X","identifier":"prod_x"}],
          "isBasedOn": [{"@type":"CreativeWork","identifier":"ref_study_210"}]
        }
      }]
    }
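
If canonicals live in a store rather than in hand-edited pages, the template above can be generated instead of typed. The following is a rough sketch under that assumption; the `faq_jsonld` function and its parameter names are hypothetical, and only the schema.org property names come from the template itself.

```python
import json


def faq_jsonld(question, answer_text, answer_id, modified,
               about_id, about_name, source_ids):
    """Build a one-question FAQPage dict that mirrors the template above."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [{
            "@type": "Question",
            "name": question,
            "acceptedAnswer": {
                "@type": "Answer",
                "text": answer_text,
                "identifier": answer_id,
                "dateModified": modified,
                "about": [{"@type": "Thing", "name": about_name,
                           "identifier": about_id}],
                # One CreativeWork reference per supporting source ID.
                "isBasedOn": [{"@type": "CreativeWork", "identifier": s}
                              for s in source_ids],
            },
        }],
    }


doc = faq_jsonld(
    question="What is <Product X>?",
    answer_text="<Canonical answer, who benefits, and why it stands out>",
    answer_id="answer_product_x_2",
    modified="2025-09-30",
    about_id="prod_x",
    about_name="Product X",
    source_ids=["ref_study_210"],
)
print(json.dumps(doc, indent=2))
```

Generating the markup from the same record that feeds the page keeps IDs and dates from drifting apart between the human and machine versions.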

Machine Companion Document Example

    # entity: prod_x
    version: 2.0
    canonical: Product X is the leading <category> that helps <audience> achieve <outcome> via <method>.
    benefits:
      - <measurable benefit 1>
      - <benefit 2 (includes guardrails)>
    constraints:
      - Not suitable for <audience>
      - Needs <prerequisite>
    claims:
      - id: ref_study_210
        summary: Independent analysis saw <result> in <context>.
        confidence: high
    faq:
      - q: How does pricing work?
        a: We offer <model> with <tiers>; discounts for <criteria>.

These companions sit alongside your mainstream CMS output. One update and every surface, human and machine, inherits it instantly.

Measurement That Actually Matters for AI Reality

Old-school GA and GSC dashboards are not enough. You need metrics that track machine utilization and drift.

- Answer share: The percent of priority queries where your brand, products, or definitions are surfaced by answer engines.

- Citation quality: Are you cited as a source or paraphrased without links or credit?

- Snippet adoption: How often do your structured answers appear in official snippets and AI-generated panel answers?

- Time to inclusion: How fast does a new canonical answer propagate to answer engines?

- Drift score: How far do public models stray from your defined truth? Lower is better. Audit this regularly.

- Compute burn per approved asset: If you are leveraging automation, measure total cost for final content shipped, not just words produced.
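
Several of these metrics reduce to simple arithmetic over archived engine answers. Below is a minimal sketch, assuming each snapshot is a dict with an `answer` string and a `cited` flag that someone (or some script) has already filled in. The function names, the snapshot shape, and the sample data are all made up for illustration, and substring matching is a crude stand-in for real entity detection.

```python
from datetime import date


def _mentions(answer, brand_terms):
    """True if any brand term appears in the answer (case-insensitive)."""
    text = answer.lower()
    return any(term.lower() in text for term in brand_terms)


def answer_share(snapshots, brand_terms):
    """Fraction of priority queries whose answer mentions the brand."""
    if not snapshots:
        return 0.0
    hits = sum(1 for s in snapshots if _mentions(s["answer"], brand_terms))
    return hits / len(snapshots)


def citation_rate(snapshots, brand_terms):
    """Of the answers that mention the brand, the fraction that also cite it."""
    mentioned = [s for s in snapshots if _mentions(s["answer"], brand_terms)]
    if not mentioned:
        return 0.0
    return sum(1 for s in mentioned if s["cited"]) / len(mentioned)


def time_to_inclusion(published, first_seen):
    """Days between publishing a canonical and first seeing it in an answer."""
    return (first_seen - published).days


# Made-up sample data: four priority queries, two mention the brand, one cites it.
snapshots = [
    {"query": "what is product x", "answer": "Product X is a <category> tool.", "cited": True},
    {"query": "best <category> tools", "answer": "Options include Tool A and Tool B.", "cited": False},
    {"query": "how to <task>", "answer": "Many teams use Product X for this.", "cited": False},
    {"query": "<category> pricing", "answer": "Tool A is the usual pick.", "cited": False},
]

print(answer_share(snapshots, ["Product X"]))                   # -> 0.5
print(citation_rate(snapshots, ["Product X"]))                  # -> 0.5
print(time_to_inclusion(date(2025, 9, 30), date(2025, 10, 8)))  # -> 8
```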

Integrate Feedback Loops Across the Stack

Monitor What the Machines Say About You

- Automate regular queries to leading answer engines like Google AI Overviews, Meta AI, and Perplexity for your must-win questions.

- Archive and snapshot responses. Review for deviations from your canonicals and flag risky drift or hallucinated claims.

- Limit content agent recursion. If risk crosses a threshold, route to humans for arbitration.
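
The archive-and-flag step might look something like the sketch below. It deliberately leaves out how answers are fetched, since that depends on each engine's terms and tooling. The `review_snapshot` and `append_to_archive` names, the record fields, and the 0.6 threshold are assumptions, and character-level similarity from the standard library's `difflib` is only a rough proxy for drift; a semantic comparison would likely serve better in practice.

```python
import json
from datetime import datetime, timezone
from difflib import SequenceMatcher

DRIFT_THRESHOLD = 0.6  # assumed cut-off; tune it against answers you have reviewed


def review_snapshot(query, canonical, engine_answer, engine):
    """Build an archive record and decide whether a human needs to look at it."""
    similarity = SequenceMatcher(
        None, canonical.lower(), engine_answer.lower()
    ).ratio()
    drift = round(1.0 - similarity, 3)  # 0.0 = same wording, 1.0 = nothing shared
    return {
        "captured_at": datetime.now(timezone.utc).isoformat(),
        "engine": engine,
        "query": query,
        "answer": engine_answer,
        "drift": drift,
        "route": "human_review" if drift >= DRIFT_THRESHOLD else "archive_only",
    }


def append_to_archive(path, record):
    """Append one record per line (JSON Lines) so snapshots stay easy to diff."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")


record = review_snapshot(
    query="How does pricing work?",
    canonical="We offer <model> with <tiers>; discounts for <criteria>.",
    engine_answer="Product X is free forever for all teams.",
    engine="example-engine",  # hypothetical engine label
)
print(record["route"], record["drift"])
```

Anything routed to `human_review` goes to a person; the script only decides who looks, not what the truth is.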

Respond Rapidly to Model Drift

- On drift detection, update canonicals and machine companions first. Then push to human pages and notify stakeholders.

- Integrate change alerts into your CRM and analytics dashboards. Sales and support should align to the current truth.

- Route all changes involving legal, regulated, or high-trust claims through human signoff.

The Answer Economy: APIs Over Outlets

Leading engines are signing deals for direct content feeds and programmatic syndication. Some, like Perplexity, license and surface verified sources through publisher programs. Your new distribution partners might be APIs and data brokers instead of editors. Ensure your best answers are easy to license, cite, and verify at scale.

- Structure explicit rights, provenance, and machine-readable licenses for all canonicals you want syndicated.

- Publish machine-friendly attributions so it is clear how you want to be cited.

- Enforce consistent entity and product names across your own stack and public channels to avoid fragmentation and confusion.

Automation That Scales Without Torching Your Budget

Running everything on GenAI autopilot sounds slick until you see the bill or a rogue model turns a disclaimer into a sales promise. Smart teams build hybrid workflows with humans keeping their foot on the brake for sensitive functions.

Reference Pipeline for Human-in-the-Loop Automation

- Intent router: Auto-classify queries as definition, comparison, how-to, pricing, or support.

- Retriever: Pull up-to-date canonicals, claims, and references from your entity graph or store.

- Dual-draft: Generate both human-readable and machine-readable versions.

- Policy layer: Run automated tone, compliance, and claim checks. Flag anything above medium risk for human review.

- Editorial cut: Editors monitor sensitive, branded, or high-impact content; random spot checks elsewhere.

- Publish/index: Push to the site, structured feeds, and API endpoints as needed.

- Observe/adapt: Log engine answers and citations. If the drift score creeps up, revise canonicals.
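
To make the first and fourth stages concrete, here is a toy version of the intent router and the policy layer. It is a sketch only: the keyword patterns, the risky-phrase list, and the function names are invented for illustration, and a production router would more likely use a small classifier model than regular expressions.

```python
import re

# First matching rule wins; patterns are illustrative, not exhaustive.
INTENT_RULES = [
    ("pricing", r"\b(price|pricing|cost|discount)\b"),
    ("comparison", r"\b(vs|versus|compare|alternative)\b"),
    ("how-to", r"\bhow (do|to|can)\b"),
    ("support", r"\b(error|broken|not working|issue)\b"),
]

RISKY_INTENTS = {"pricing"}                       # assumed: always human-reviewed
RISKY_PHRASES = ("guarantee", "cure", "risk-free")  # assumed claim-check list


def route_intent(query):
    """Classify a query; anything unmatched is treated as a definition request."""
    text = query.lower()
    for intent, pattern in INTENT_RULES:
        if re.search(pattern, text):
            return intent
    return "definition"


def policy_check(intent, draft):
    """Send sensitive intents or risky wording to a human, publish the rest."""
    text = draft.lower()
    if intent in RISKY_INTENTS or any(p in text for p in RISKY_PHRASES):
        return "human_review"
    return "auto_publish"


for query in ["What is Product X?", "Product X vs Product Y",
              "How do I export data?", "How does pricing work?"]:
    print(query, "->", route_intent(query))
```

Note the rule order: "How does pricing work?" lands in `pricing`, not `how-to`, because the sensitive category is checked first.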

Rethink Roles: Automation Plus Human Oversight

| Role | Owns | Bot/Human Split |
|---|---|---|
| Content Lead | Canonicals, Brand Voice, Approval Gates | Humans approve and write high-value work; bots draft and update atomic units |
| SEO Lead | Technical/Schema Integrity | Bots monitor; humans audit exceptions |
| Automation Engineer | Pipeline Design, Guardrails | Bots execute; humans resolve escalations |
| Compliance | Regulated Claims, Public Policy | Machines pre-check low- to medium-risk claims; humans make all final decisions |

Testing: The Lightweight Companion File Move

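Some teams experiment with site-level llms.txt files as model hints for AI crawlers. No engine is obliged to honor them, and the directives in the sample below are illustrative rather than part of any recognized standard (the llms.txt proposal itself describes a Markdown file of curated links). Still, it is a signaling experiment worth trying.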

    # llms.txt
    # Guide LLMs to canonicals and claims. (Experimental only.)
    Allow: /knowledge/canonicals/
    Allow: /knowledge/claims/
    Disallow: /drafts/
    Disallow: /internal/
    Sitemap: https://example.com/sitemap.xml
    Answers-Feed: https://example.com/feeds/canonicals.json
    Attribution-Policy: cite-original

File or not, real leverage comes from high-quality, well-structured, and consistently maintained canonicals.

Brand and Budget Guardrails: Keep It Lean and Clean

- Do not automate sensitive fields: Pricing, legal, health, and regulated claims need a human barrier.

- Limit agent recursion: Hard caps on retry and test cycles. Avoid infinite “improve the answer” loops.

- Audit everything: Version prompts, policies, and outputs. Rollbacks must be one click, not a nightmare.

- Use model tiers: Cheap models for classification, heavyweights for final generative bursts.

- Cache invariants: Freeze brand voice schematics and tone elements to control costs and avoid drift.
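
Two of these guardrails, the recursion cap and model tiering, are easy to express in code. The sketch below assumes the "improve" and "good enough" steps are plain callables you supply; the names, the cap of three, and the tier labels are placeholders, not recommendations from any vendor.

```python
MAX_REVISIONS = 3  # hard cap, assumed value


def refine_with_cap(draft, improve, is_good_enough, max_revisions=MAX_REVISIONS):
    """Apply `improve` until the check passes or the cap is hit.

    Returns (final_draft, revisions_used, escalate_to_human).
    """
    revisions = 0
    while not is_good_enough(draft) and revisions < max_revisions:
        draft = improve(draft)
        revisions += 1
    # If the draft still fails after the cap, a human takes over.
    return draft, revisions, not is_good_enough(draft)


def pick_model_tier(task):
    """Cheap tier for classification-style work, heavy tier for final drafts."""
    return "small" if task in {"classify", "route", "tag"} else "large"


# Stand-in callables: "improving" appends a character, "good" means long enough.
result = refine_with_cap("short", lambda d: d + "!", lambda d: len(d) >= 10)
print(result)                          # -> ('short!!!', 3, True)
print(pick_model_tier("classify"))     # -> small
print(pick_model_tier("final_draft"))  # -> large
```

The third element of the result is the point: when the cap runs out, the loop stops burning compute and hands the draft to a person.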

How Sales and Support Should Use Canonicals

Your answer engine wins mean nothing if your CRM is stuck in last year’s truth. Sync canonical answers with your sales stack. Arm support with “issue canonicals” and stepwise solutions published as structured content. The bots will echo them and your agents will thank you.

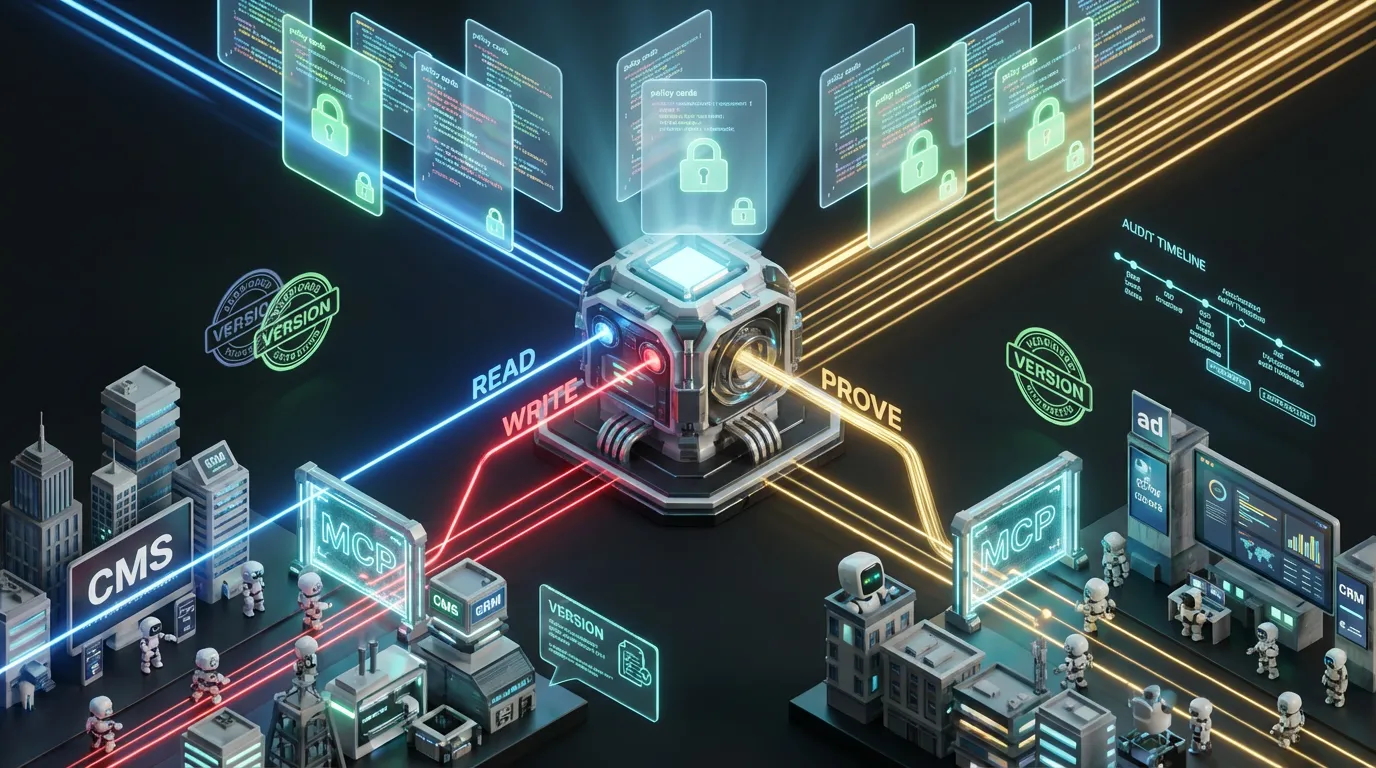

How This Plays with Your Tech Stack (No Replatform Needed)

- CMS: Add fields for canonicals, entity IDs, claim IDs, and machine companions.

- Automation platform: Map retrieval, drafting, compliance checks, publication, and feedback in one view.

- Data layer: Store canonicals and claims as versioned JSON, easy to pull, diff, or revert.

- Integrations: Expose canonicals through documented, authenticated APIs (for example, endpoints described with OpenAPI) so assistants can securely fetch them.
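
For the data layer, "easy to pull, diff, or revert" can be as simple as an append-only history per entity. The class below is a minimal in-memory sketch of that idea; `VersionedStore` and its methods are hypothetical, and a real deployment would presumably sit on a database or a git-backed JSON directory instead.

```python
import copy


class VersionedStore:
    """Keeps every version of each canonical so changes can be diffed or reverted."""

    def __init__(self):
        self._history = {}  # entity_id -> list of dict snapshots, oldest first

    def save(self, entity_id, record):
        """Store a copy of the record and return its 1-based version number."""
        self._history.setdefault(entity_id, []).append(copy.deepcopy(record))
        return len(self._history[entity_id])

    def latest(self, entity_id):
        return copy.deepcopy(self._history[entity_id][-1])

    def diff(self, entity_id, old_version, new_version):
        """Return {field: (old, new)} for fields that differ between two versions."""
        old = self._history[entity_id][old_version - 1]
        new = self._history[entity_id][new_version - 1]
        keys = sorted(set(old) | set(new))
        return {k: (old.get(k), new.get(k)) for k in keys if old.get(k) != new.get(k)}

    def revert(self, entity_id, version):
        """Re-save an earlier version as the newest one; history is never rewritten."""
        return self.save(entity_id, self._history[entity_id][version - 1])


store = VersionedStore()
store.save("prod_x", {"canonical": "<definition v1>", "pricing": "<model>"})
store.save("prod_x", {"canonical": "<definition v2>", "pricing": "<model>"})
print(store.diff("prod_x", 1, 2))
# -> {'canonical': ('<definition v1>', '<definition v2>')}
print(store.revert("prod_x", 1))  # -> 3
```

Reverting by appending, rather than deleting, keeps the audit trail intact, which matters when someone asks what the public claim was on a given date.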

Executive Checklist for the Post-SEO Content Game

- Do we have canonical, structured, versioned answers for our top 50 strategic queries?

- Are these discoverable and routinely audited for drift and consistency?

- Does our KPI dashboard track answer share, citation quality, and time to inclusion?

- Is there a clear human signoff line? Who owns it?

- Do we know compute and content burn rates? Are we reusing and caching effectively?

- Can every public claim be audited instantly?

The game is not tricking new models into spamming your URL. The game is making your canonicals the most reliable and frictionless truths models can grab, cite, and remix, always with receipts.

Put It All Together or Get Outplayed

Generative search and answer engines did not kill good content. They killed slow, unstructured, and fungible content. Structure, provenance, and disciplined feedback loops now rule. Treat your factory like a product: canonical sources, controlled releases, telemetry, and tight automation. Let bots handle grunt work and enforce rulebooks. Keep humans accountable for the things that matter. That is the edge: showing up where the game is played, spending less time untangling AI drift, and more time shipping. That is not luck or vibes. That is automation first at industrial scale.