Simulate Your Market With Synthetic Audiences

October 23, 2025

Your next “focus group” doesn’t RSVP, need branded tote bags, or even technically exist. Synthetic audiences let you pressure-test messaging, pricing, creative, and flows using AI-powered stand-ins: simulated customers loaded with real data and market constraints. Modern platforms and open LLMs make this approach not just possible but practical. Synthetic audiences don’t replace human research. They turn discovery into an automation problem, delivering a first draft of real-world answers in hours instead of burning weeks and budgets. Save human research for deep validation, not slow guesswork. For a practical primer on how to weave this into marketing automation, see “Meet Your Synthetic Customers.”

What Synthetic Audiences Actually Are

At its core, a synthetic audience is a virtual population of AI agents, each emulating a segment of your market. Every agent carries specific traits, constraints, goals, channels, and even behavioral quirks. You drop them into real contexts (landing pages, pricing tables, offers, onboarding flows) and see what sticks, breaks, or triggers WTF reactions. Scale to thousands of simulations, run wild with multivariate testing, and, with the right guardrails, slice results by campaign with audit-friendly receipts.

This isn’t magic. This is not Statistical Jesus descending in JSON form. Synthetic audiences are a diagnostic accelerant, screening out duds and surfacing promising variants long before real customers ever touch the glass. Use them for ideation, not for gospel truth.

Why Synthetic Audiences Are Exploding Now

- Data gravity shifted. Most established companies now sit on first-party data lakes and dynamic product catalogs, and vector search gives models retrieval-based “memory” and relevance on tap.

- Model maturity. Contemporary LLMs (hello, Llama 4) support roleplay, tool calls, multi-step reasoning, and structured outputs, making simulated behaviors not just plausible but auditable and easier to reproduce.

- Unit cost collapse. Running thousands of hypothesis tests can now cost less than the kickoff lunch for a focus group. Cache the invariants and route models smartly, and you stack the deck for speed and thrift.

What Synthetic Audiences Are Good For

- Message Screening: Test 20 headline variants across eight personas and multiple contexts. Graduate only top performers to real-world validation.

- Pricing Sensitivity: Simulate how different market segments react to various pricing ladders, bundles, and discounts, all while honoring persona-specific constraints (budget, ROI).

- Creative Preselection: Compare hooks, visuals, and copy formats without burning ad dollars on wild guesses.

- Onsite Flow Diagnostics: Deploy agents to onboarding journeys or checkouts. See precisely where motivation craters or friction stings before buying any real traffic.

- Sales Enablement: Roleplay common objections and tough prospects. Turn winning language into reusable snippets for the field.

This Is Not…

- Statistical Forecasting: Simulations pretest and diagnose; they are not substitutes for weighted, population-level studies.

- A Trust Fall: You must calibrate with real people. Human holdouts remain non-negotiable.

- Automated Empathy: Agents follow constraints and logic. You design their limits. Empathy? That’s still you.

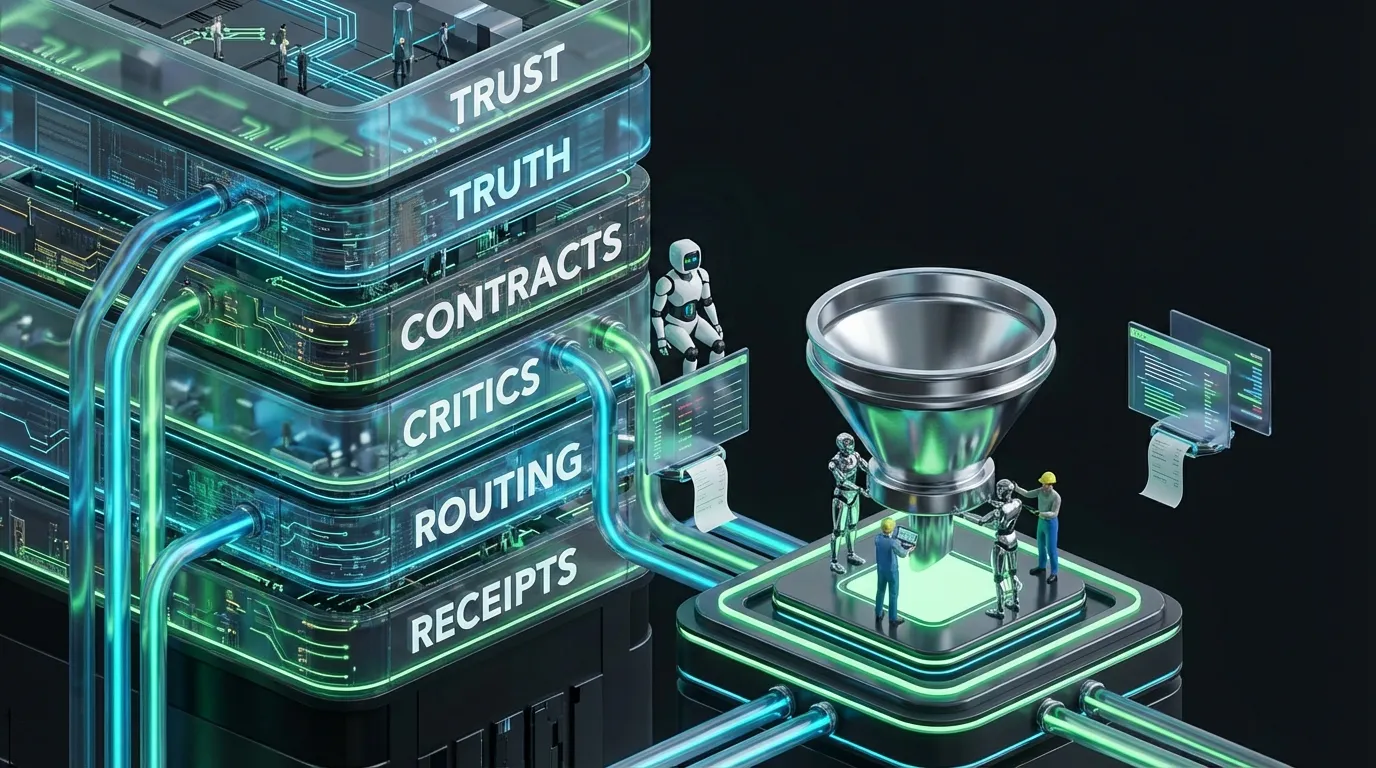

Anatomy of a Modern Simulation Stack

- Persona Graph: Market segments described by traits, goals, constraints, and decision-making heuristics.

- Truth Packs: Product facts, claims, pricing details, proof points, structured as machine-readable knowledge.

- Environment: Target surfaces such as emails, landing pages, and ads, expressed as rich prompts or API-callable evaluators.

- Agent Brains: LLMs with role-based conditioning and tool hooks for retrieval and reasoning.

- Evaluator Layer: Distinct models score for clarity, trust, actionability. Generators never grade themselves.

- Guardrails: Enforce policies, tone, claims, fairness, and introduce manual approvals where high risk looms.

- FinOps Controls: Model tiering, recursion bounds, and aggressive caching to keep experiments from setting finance on fire.

Persona Schema Example

```json
{
  "id": "retail_buyer_consumer_us_genz",
  "segment": "B2C",
  "demographic": "Gen Z",
  "interests": ["ethics", "trendy tech"],
  "budget_limits": 150,
  "decision_heuristics": [
    "relies on peer reviews",
    "quick to abandon slow sites",
    "favors brands with purpose"
  ],
  "channels": ["TikTok", "Instagram", "SMS"],
  "success_metric": "impulse_purchase_conversion"
}
```

Sample Environment Prompt

```json
{
  "surface": "checkout_flow",
  "offer": "premium_bundle",
  "message": {
    "headline": "Unlock next-level style",
    "subhead": "Bundle savings, zero hassle.",
    "cta": "Shop now"
  },
  "assets": {
    "proof": ["influencer_video", "five_star_reviews"],
    "feature_list": ["free_shipping", "easy_returns"]
  }
}
```

Under the Hood: How a Simulation Run Works

- Load persona, truth packs, and environment. Cache constants like brand tone and compliance policy.

- Agent “experiences” the creative or flow, retrieves facts if needed, and delivers a structured reaction (comprehension, trust, friction, propensity to act).

- Evaluator models judge those reactions. Outliers or low-confidence results? Flag for human review or escalate to a smarter model.

- Aggregate across all personas and contexts: promote top variants, retire duds, and, crucially, record every rationale.
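The run loop above can be sketched in a few lines of Python. This is a minimal offline sketch, not a production implementation: `agent_react` and `evaluate` are hypothetical stand-ins for the persona-conditioned agent and a separate evaluator model, the variant fields (`headline`, `price`, `proof`) and the toy scoring rules are assumptions, and only `id` and `budget_limits` come from the persona schema example.

```python
from __future__ import annotations

from dataclasses import asdict, dataclass
from statistics import mean


@dataclass
class Reaction:
    """Structured reaction for one persona/variant pair, each signal on a 0..1 scale."""
    comprehension: float
    trust: float
    friction: float
    propensity: float


def agent_react(persona: dict, variant: dict) -> Reaction:
    # Hypothetical stand-in for the persona-conditioned LLM agent; toy rules keep it offline.
    within_budget = variant["price"] <= persona["budget_limits"]
    has_proof = bool(variant.get("proof"))
    return Reaction(
        comprehension=0.8 if len(variant["headline"].split()) <= 6 else 0.5,
        trust=0.8 if has_proof else 0.4,
        friction=0.2 if within_budget else 0.7,
        propensity=0.7 if within_budget and has_proof else 0.3,
    )


def evaluate(reaction: Reaction) -> tuple[float, float]:
    # Hypothetical evaluator (a different model from the agent in a real stack).
    # Score is the mean signal; confidence drops when the signals disagree.
    signals = [reaction.comprehension, reaction.trust,
               1 - reaction.friction, reaction.propensity]
    return mean(signals), 1 - (max(signals) - min(signals))


def run_simulation(personas, variants, review_threshold=0.6):
    scores = {v["id"]: [] for v in variants}
    flagged, rationale_log = [], []
    for persona in personas:
        for variant in variants:
            reaction = agent_react(persona, variant)
            score, confidence = evaluate(reaction)
            scores[variant["id"]].append(score)
            # Record every rationale so a winner can be explained and replayed later.
            rationale_log.append({"persona": persona["id"], "variant": variant["id"],
                                  "reaction": asdict(reaction), "score": score,
                                  "confidence": confidence})
            if confidence < review_threshold:
                # Low confidence: queue for human review or a stronger model.
                flagged.append((persona["id"], variant["id"]))
    # Aggregate across personas: highest mean score first.
    ranking = sorted(scores, key=lambda vid: mean(scores[vid]), reverse=True)
    return ranking, flagged, rationale_log


personas = [{"id": "retail_buyer_consumer_us_genz", "budget_limits": 150},
            {"id": "budget_shopper", "budget_limits": 80}]
variants = [
    {"id": "v1", "headline": "Unlock next-level style", "price": 120,
     "proof": ["five_star_reviews"]},
    {"id": "v2", "headline": "The most complete premium bundle you will ever need to buy",
     "price": 200, "proof": []},
]
ranking, flagged, log = run_simulation(personas, variants)
print(ranking, flagged)  # ['v1', 'v2'] [('budget_shopper', 'v1')]
```

Swapping the toy rules for real model calls should keep the same shape: one structured reaction per persona and variant, a separate scoring pass, a review queue for low-confidence results, and a log of every rationale.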

Comparing Methods… Quickly

| Method | Speed | Cost | Main Bias Sources | Best Use |
|---|---|---|---|---|
| Synthetic audience | Hours | Low to moderate | Model, prompt | Rapid screening, diagnostics, shortlist narrowing |
| Panels or Surveys | Days to weeks | Moderate to high | Sampling, nonresponse | Population validation, inferential stats |
| Field Experiments | Weeks to months | High | Operational, ecological | Real-world lift, deployment readiness |

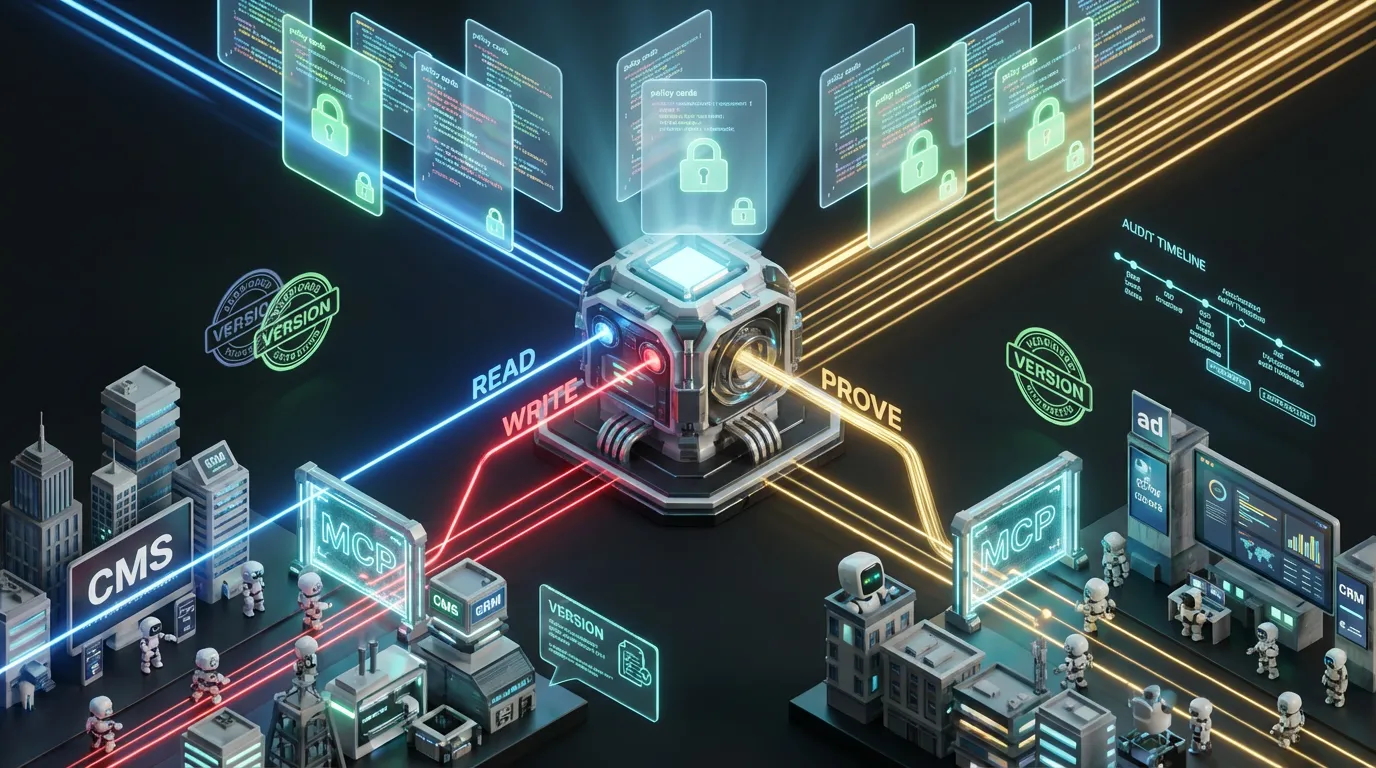

Quality and Governance: Who Keeps This Honest

- Calibrate: Routinely check synthetic “winners” against small, fresh human panels. Adjust persona rules and evaluator weights if results drift.

- Holdouts: Maintain a quarantined set of assets never used in simulation. Use them for unbiased checks against overfitting.

- Bias & Fairness Audits: Ensure persona templates don’t encode old-school stereotypes. Log each experiment and run fairness checks by segment.

- Full Traceability: Every run must record persona IDs, model versions, prompts, retrieved facts, scores, and costs. If you can’t replay it, you can’t trust it.
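A run log that satisfies the traceability rule might look like the sketch below. The `RunRecord` fields, the `replay_key` helper, and the model version string are hypothetical. The idea is to hash only the inputs that determine a run (persona, model version, prompt, retrieved facts), so two runs sharing a key should be directly comparable and any drift can be traced to a changed input.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass
class RunRecord:
    """One simulation run, with what is needed to audit and replay it (hypothetical schema)."""
    persona_id: str
    model_version: str
    prompt: str
    retrieved_fact_ids: list[str]
    scores: dict[str, float]
    cost_usd: float

    def replay_key(self) -> str:
        # Hash only the inputs; outputs (scores, cost) may differ between reruns.
        inputs = {"persona_id": self.persona_id, "model_version": self.model_version,
                  "prompt": self.prompt, "retrieved_fact_ids": self.retrieved_fact_ids}
        blob = json.dumps(inputs, sort_keys=True).encode("utf-8")
        return hashlib.sha256(blob).hexdigest()

    def to_log_line(self) -> str:
        # One JSON object per line keeps the log append-only and easy to grep.
        return json.dumps({**asdict(self), "replay_key": self.replay_key()}, sort_keys=True)


record = RunRecord(
    persona_id="retail_buyer_consumer_us_genz",
    model_version="agent-model-2025-10",  # hypothetical version label
    prompt="Headline: Unlock next-level style",
    retrieved_fact_ids=["free_shipping", "easy_returns"],
    scores={"clarity": 0.8, "trust": 0.7},
    cost_usd=0.004,
)
print(record.to_log_line())
```

Appending one line per run to a file or log store is usually enough for a pilot; replaying a run means re-sending the logged inputs and comparing the new scores against the old ones.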

Metrics That Actually Matter

- Screening Precision: Share of synthetic shortlist picks that validate in real user tests.

- Time to Decision: Median hours from problem to prioritized shortlist, receipts included.

- Cost per Shortlisted Variant: All-in experimentation plus ops, divided by variants sent to human review.

- Outcome Drift: Gap between synthetic and human results over time. Track the delta and drive it down.

- Coverage: % of real market segments actually modeled in your simulation layer.
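Once runs and human validations are logged, these metrics reduce to simple arithmetic. A minimal sketch with hypothetical function names, assuming synthetic and human scores share a 0 to 1 scale and measuring outcome drift as the mean absolute gap on variants both methods scored (one reasonable definition among several):

```python
from __future__ import annotations

from statistics import median


def screening_precision(shortlist: list[str], validated: list[str]) -> float:
    """Share of synthetic shortlist picks that also validated in real user tests."""
    picks = set(shortlist)
    return len(picks & set(validated)) / len(picks) if picks else 0.0


def time_to_decision(hours_per_decision: list[float]) -> float:
    """Median hours from problem statement to prioritized shortlist."""
    return median(hours_per_decision)


def cost_per_shortlisted_variant(total_cost: float, sent_to_review: int) -> float:
    """All-in experimentation plus ops cost, divided by variants sent to human review."""
    if sent_to_review <= 0:
        raise ValueError("no variants were sent to human review")
    return total_cost / sent_to_review


def outcome_drift(synthetic: dict[str, float], human: dict[str, float]) -> float:
    """Mean absolute gap between synthetic and human scores on variants both scored.
    Compute it per period (e.g. per month) to see whether the gap is shrinking."""
    shared = synthetic.keys() & human.keys()
    if not shared:
        raise ValueError("no variant was scored by both synthetic and human tests")
    return sum(abs(synthetic[v] - human[v]) for v in shared) / len(shared)


def coverage(modeled_segments: list[str], market_segments: list[str]) -> float:
    """Fraction of real market segments represented by at least one persona."""
    market = set(market_segments)
    return len(set(modeled_segments) & market) / len(market) if market else 0.0
```

For example, a three-variant shortlist where two picks survive human testing gives a screening precision of about 0.67.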

Blueprint: Your First Pilot in Ten Simple Steps

- Pick a clear decision point, such as choosing ad headlines for Gen Z shoppers on TikTok.

- Define 4 to 6 personas that fit this scenario. Start simple, refine later.

- Assemble truth packs (product facts, UVPs, proof points), all tagged and versioned.

- Draft 10 to 20 variants, keeping tone and length policy explicit.

- Build environment stubs: a basic JSON definition for each experiment.

- Deploy agent evaluations with context retrieval and persona roleplay.

- Have evaluator models score clarity, trust, and intent to act. Require direct citations for claims.

- Promote top 3 variants. Test quickly with a small, real human panel.

- Compare synthetic and human performance. Tweak persona rules and evaluator weights where they diverge.

- Document speed, cost, and screening precision. Scale if metrics check out.
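The citation requirement and the promote-top-3 step can be combined into one gate: a variant is promoted only if every claim cites an entry in the truth pack, and the survivors are ranked by evaluator score. A minimal sketch; `Claim`, `unsupported_claims`, `promote`, and the truth-pack text are hypothetical, with fact IDs borrowed from the environment example’s `feature_list` and `proof` fields.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    text: str
    cited_fact_id: Optional[str]  # truth-pack entry backing this claim, if any


def unsupported_claims(claims: list[Claim], truth_pack: dict[str, str]) -> list[str]:
    """Claims with no citation, or citing a fact ID the truth pack does not contain."""
    return [c.text for c in claims if c.cited_fact_id not in truth_pack]


def promote(variants: list[dict], truth_pack: dict[str, str], k: int = 3) -> list[str]:
    """Drop variants with any unsupported claim, then keep the k best by evaluator score."""
    eligible = [v for v in variants if not unsupported_claims(v["claims"], truth_pack)]
    eligible.sort(key=lambda v: v["score"], reverse=True)
    return [v["id"] for v in eligible[:k]]


# Hypothetical truth pack keyed by fact ID.
TRUTH_PACK = {
    "free_shipping": "Premium bundle ships free",
    "easy_returns": "Premium bundle has easy returns",
    "five_star_reviews": "Bundle carries five-star customer reviews",
}
variants = [
    {"id": "A", "score": 0.90, "claims": [Claim("Ships free", "free_shipping")]},
    {"id": "B", "score": 0.95, "claims": [Claim("Best price anywhere", None)]},
    {"id": "C", "score": 0.70, "claims": [Claim("Hassle-free returns", "easy_returns")]},
    {"id": "D", "score": 0.60, "claims": [Claim("Loved by reviewers", "five_star_reviews")]},
    {"id": "E", "score": 0.80, "claims": [Claim("Lifetime warranty", "warranty")]},
]
print(promote(variants, TRUTH_PACK))  # ['A', 'C', 'D']
```

Note that the highest-scoring variant, B, never reaches the human panel: an uncited claim disqualifies it regardless of score.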

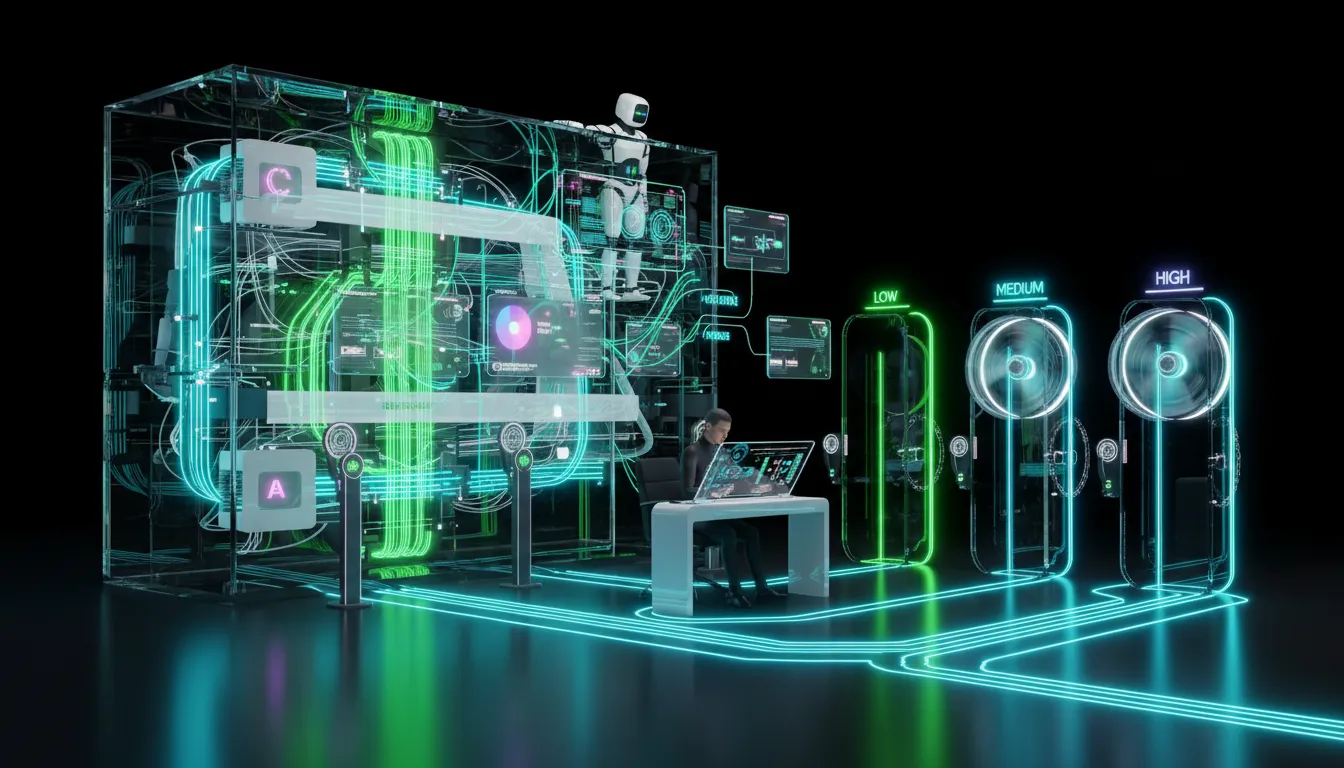

FinOps Routing: How to Keep Your CFO Smiling

- Cache Constants: Brand voice, persona boilerplate, and compliance policy rarely change, so cache them instead of regenerating them every run.

- Model Tiering: Cheap models for brute-force screening, premium ones only for tie-breaks and high-stakes reviews.

- Early Stops: “Two strikes and out” for variants failing with initial personas. Do not run doomed concepts to the finish.

- Budget Ceilings: Set strict run and daily experiment limits. Track cost per decision with receipt-level granularity.
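The four routing rules can be sketched together. Assumptions, all hypothetical: two model tiers with made-up per-call prices, a tie margin of 0.05 for escalating to the premium tier, and a `score_fn` callback standing in for one cheap-tier evaluation of one persona. `functools.lru_cache` handles constant caching; the rest is plain Python.

```python
from __future__ import annotations

from functools import lru_cache
from typing import Callable, Iterable

CHEAP, PREMIUM = "cheap-tier", "premium-tier"   # hypothetical tier names
PRICE_PER_CALL = {CHEAP: 0.001, PREMIUM: 0.02}  # hypothetical USD prices


@lru_cache(maxsize=None)
def cached_constant(name: str) -> str:
    # Stand-in for assembling brand voice, persona boilerplate, or compliance policy;
    # lru_cache means each constant is built once per process, not once per run.
    return f"[{name}]"


def pick_model(score_gap: float, high_stakes: bool, tie_margin: float = 0.05) -> str:
    # Premium tier only for tie-breaks (near-equal scores) and high-stakes reviews.
    return PREMIUM if high_stakes or score_gap < tie_margin else CHEAP


class BudgetExceeded(RuntimeError):
    pass


class Budget:
    """Hard spending ceiling with receipt-level logging."""

    def __init__(self, ceiling_usd: float):
        self.ceiling_usd = ceiling_usd
        self.spent_usd = 0.0
        self.receipts: list[tuple[str, str, float]] = []

    def charge(self, variant_id: str, model: str) -> None:
        cost = PRICE_PER_CALL[model]
        if self.spent_usd + cost > self.ceiling_usd:
            raise BudgetExceeded(f"{variant_id}: ceiling of ${self.ceiling_usd} reached")
        self.spent_usd += cost
        self.receipts.append((variant_id, model, cost))


def screen(variant_id: str, personas: Iterable, score_fn: Callable[[object], float],
           budget: Budget, floor: float = 0.4, max_strikes: int = 2) -> tuple[bool, int]:
    """Two strikes and out: stop once max_strikes personas score the variant below floor.
    Returns (survived, number of paid persona evaluations)."""
    strikes = calls = 0
    for persona in personas:
        budget.charge(variant_id, CHEAP)  # brute-force screening runs on the cheap tier
        calls += 1
        if score_fn(persona) < floor:
            strikes += 1
            if strikes >= max_strikes:
                return False, calls
    return True, calls


# Here each "persona" is just a precomputed score, so score_fn is the identity.
budget = Budget(ceiling_usd=5.0)
print(screen("v1", [0.3, 0.2, 0.9], lambda score: score, budget))  # (False, 2)
```

Raising an exception at the ceiling, rather than logging a warning, is a deliberate choice in this sketch: an overspend stops the run instead of silently continuing.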

Build or Buy: How to Actually Get Started

| Path | Best For | Tradeoffs |
|---|---|---|
| Ready-made platforms | Teams without ML staff; rapid pilots | Opaque persona logic, less custom evaluator control |
| Custom orchestration | Strict compliance, niche domains, deep integrations | Slower start, more dev and upkeep work |
| Hybrid | Most mid-market orgs | Two toolchains, but more control and agility |

Common Failure Modes (And the Fastest Fixes)

| Failure | Symptom | Fast Fix |
|---|---|---|
| Self-grading agents | Curiously consistent wins for select tones | Split generator and evaluator roles; randomize scoring order |
| Prompt overfitting | Sky-high synthetic scores, flop in real tests | Introduce blinding, rotate models, run periodic holdout validation |
| Persona bias | Unrealistic or stereotyped objections | Audit, adjust heuristics, run fairness analytics per segment |
| Cost spikes | Bill shock as variant count scales | Cache, early stops, prioritize by confidence |
| Poor traceability | Cannot explain why a winner won | Log everything: persona, prompt, model version, scores |
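For the first two fixes (splitting generator and evaluator roles, blinding, and rotating models), here is a sketch of how a scoring batch might be prepared. Names are hypothetical: each variant is assumed to carry the `generator_model` that wrote it, and the evaluator pool is a plain list of model identifiers.

```python
import random


def blind_batch(variants: list, evaluator_pool: list, seed: int):
    """Prepare variants so no evaluator grades its own output or sees who wrote what.
    Returns the blinded items and a private key mapping blind IDs back to variant IDs."""
    rng = random.Random(seed)  # seeded, so the order and assignments can be replayed
    shuffled = list(variants)
    rng.shuffle(shuffled)      # randomize scoring order
    blinded, key = [], {}
    for i, variant in enumerate(shuffled):
        # Generator/evaluator firewall: never route a variant to the model that wrote it.
        eligible = [m for m in evaluator_pool if m != variant["generator_model"]]
        if not eligible:
            raise ValueError(f"no evaluator distinct from the generator of {variant['id']}")
        blind_id = f"item-{i}"
        key[blind_id] = variant["id"]
        # Only the copy travels; generator name, tone label, and original ID stay behind.
        blinded.append({"blind_id": blind_id, "text": variant["text"],
                        "evaluator": rng.choice(eligible)})  # rotate evaluators across items
    return blinded, key


variants = [
    {"id": "v1", "text": "Unlock next-level style", "generator_model": "model-a", "tone": "bold"},
    {"id": "v2", "text": "Bundle savings, zero hassle.", "generator_model": "model-b", "tone": "calm"},
    {"id": "v3", "text": "Shop now", "generator_model": "model-a", "tone": "urgent"},
]
blinded, key = blind_batch(variants, ["model-a", "model-b", "model-c"], seed=7)
```

The `key` stays with the orchestrator and is used only after scoring, to map results back to variants; logging the seed keeps the shuffle replayable.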

Ethics and Disclosure

- Be Transparent: Say your tests are synthetic. Passing bots off as humans is a compliance nightmare waiting to happen.

- Respect Privacy: Use only aggregate data and compliant sources; never infer individual traits where privacy matters.

- Design for Inclusion: Simulate all major segments, not just easy ones. Tech should model your real buyers, not erase them.

How This Changes Your Automation Playbook

Synthetic audiences are not a separate island. Plug your truth packs and persona graphs into your sales and marketing automation. Use evaluator scores to promote creative, update your CMS, de-risk product launches, and keep messaging aligned across every touchpoint (bot or human). The result? A conveyor belt that cranks out high-grade variants and kills expensive guesswork faster than you can say “approval workflow.”

Playbooks, by Team Size

Solo Creators & Micro Teams

- One spreadsheet holds both personas and truth packs

- Run weekly screenings for your main channels

- Track cost per shortlisted variant and how many picks survive human validation

Mid-Market Marketing Teams

- Structured entity graph for products, claims, use cases

- Automated simulation with every campaign or creative brief

- Approvals and evaluator feedback piped to your CMS and ad tools

Enterprise

- Policy-as-code for claims, tone, fairness and compliance

- Regionalized personas, truth packs, and evaluators

- Quarterly calibration: human panels, field experiments, synthetic audit trails

The Take

Synthetic audiences let you pressure-test ideas, cull the weak, and reach human validation with a higher-grade shortlist fast. They are not a panacea, just a force multiplier for automation-first workflows that respect speed, rigor, and budget. Build constraints, keep receipts, enforce the generator and evaluator firewall, and calibrate ruthlessly. Do this, and you will spend less time sweating guesses and more time shipping work that actually survives the wild.