Build Your AI Marketing Control Plane

October 25, 2025

If you are still investing in point tools, you are missing the layer that matters

Marketing automation has outgrown the app buffet era. Enterprise offerings like OpenAI’s GPT‑5, Adobe’s Content Credentials for brand and provenance controls, and HubSpot’s AI agents and orchestration no longer just “plug AI in” to last year’s process. Agencies now productize their entire AI stacks so brands can self-serve automation. Content management systems ship with built-in style guides, audience intelligence, and real-time analytics. Startups sell self-rewriting websites that respond to campaign triggers faster than your social team ever could. And operations leaders are proving that industrializing content pipelines cuts more than fluff: it is a shot at real, bankable cost control.

This is not another “top 10 marketing tools” roundup. We are dissecting the new operating model beneath the AI marketing headlines. What is an AI marketing control plane? Why does your GTM even need one? Where does it deliver ROI, and where does it face-plant? Most importantly, how do today’s automation-first CMOs build a control plane without setting money, sanity, or their brand on fire? For a deeper framing of this pattern, see our perspective in The Control Layer for AI Marketers.

What changed: Platforms grew up and brands got bolder

- Agency stacks as products. Plan, create, approve, and launch inside one branded AI layer, no retainer required.

- Enterprise suites bundle in real governance. Brand voice, legal checks, A/B at scale, publish in less time than your legal review email chain used to take.

- Autonomous web builders test new ground. Landing pages, ad variants, and FAQs auto-draft. With the right guardrails, magic. Without them, public relations disaster.

- Marketing execs are not just curious; they are ready. Budgets grow even as anxiety over AI security, hallucinations, and compliance rises. The message is simple: production time is now. Control, not more features, is what is missing.

All roads lead to orchestration. If your stack just pipes prompts to models with no policy, routing, or publishing guardrails, you are not scaling marketing, you are running AI demo theater.

Meet the control plane: The layer that makes AI accountable

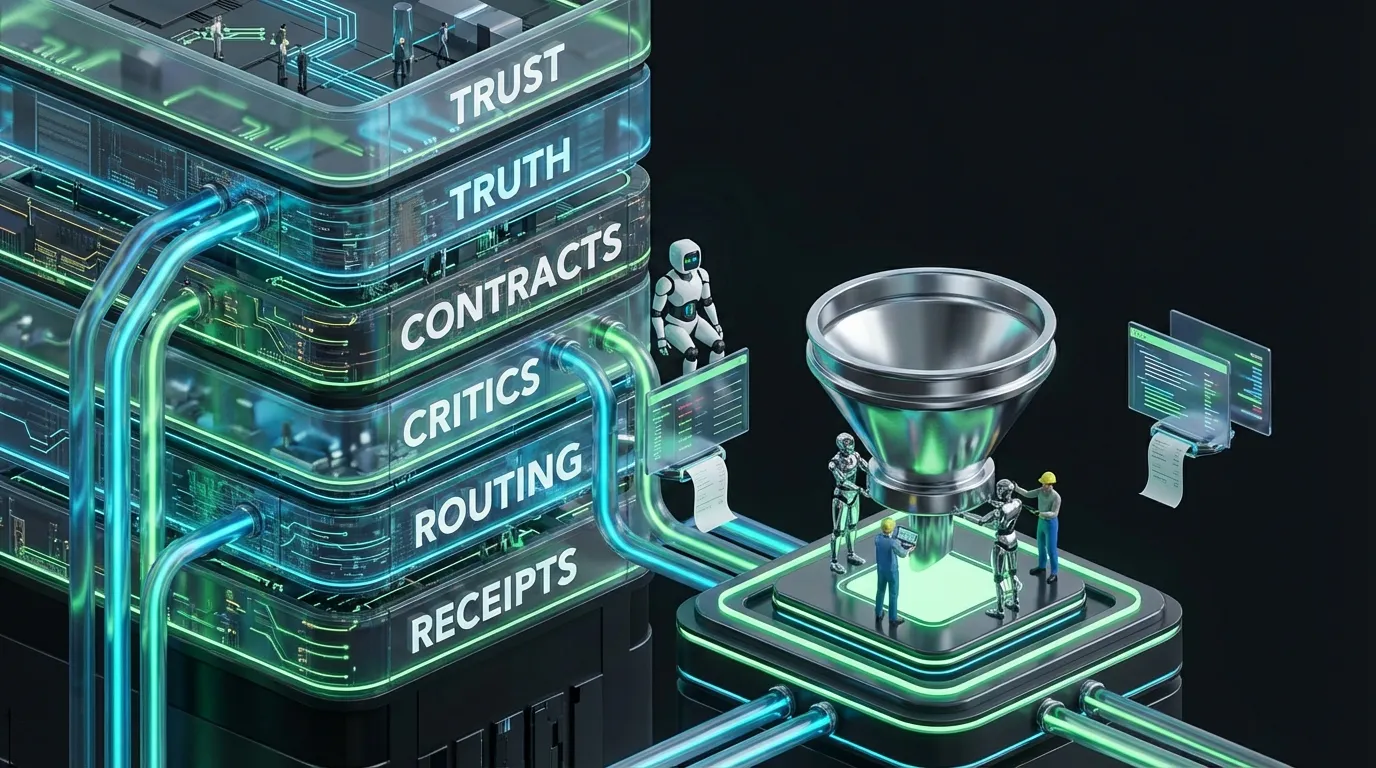

Think of the control plane like a conductor. It does not write music or play instruments; it sets the tempo, cues in the big brass, and stops the strings from being trampled. In concrete terms, this is the software and automation layer that sits above your tools and models, enforcing identity, policy, routing, observability, and handoffs.

| Layer | What it owns | Why it matters |
|---|---|---|
| Data layer | Offers, facts, brand assets, metrics, embeddings | Keeps generation grounded, your pricing page does not invent itself |
| Orchestration | Routing, queues, agent workspaces, HITL checkpoints | Turns fuzzy prompts into robust workflows with proof and repair |
| Policy | Voice, legal, budget, risk, compliance rules | Makes good taste and safety executable, not vibes |
| Observability | Cost per asset, validity score, cycle latency, logs | Scales trust and transparency, no black box budgets |
| Connectors | CRM, CMS, ad system, analytics, DAM, email | Closes the feedback loop between idea and live asset |

The control plane is the difference between shipping 10,000 on-brand assets and accidentally teaching the world your pricing secrets.

The anatomy of a control plane: Your reference blueprint

{

"identity": {

"roles": ["editor", "legal", "ops", "growth"],

"scopes": ["read_source", "publish", "approve", "budget_admin"]

},

"data": {

"sources": ["products", "claims", "offers", "proof"],

"vector": {"index": "knowledge", "freshness_days": 7},

"enrichments": ["segment", "intent", "seasonality"]

},

"policies": {

"brand": {"voice": "conversational, specific", "limit": {"headline": 70}},

"claims": {"require_source": true, "block": ["guaranteed", "best-in-class"]},

"budget": {"per_asset_usd": 0.40, "max_premium_invocations": 2},

"risk": {"low": "auto", "medium": "editor", "high": ["legal", "brand"]}

},

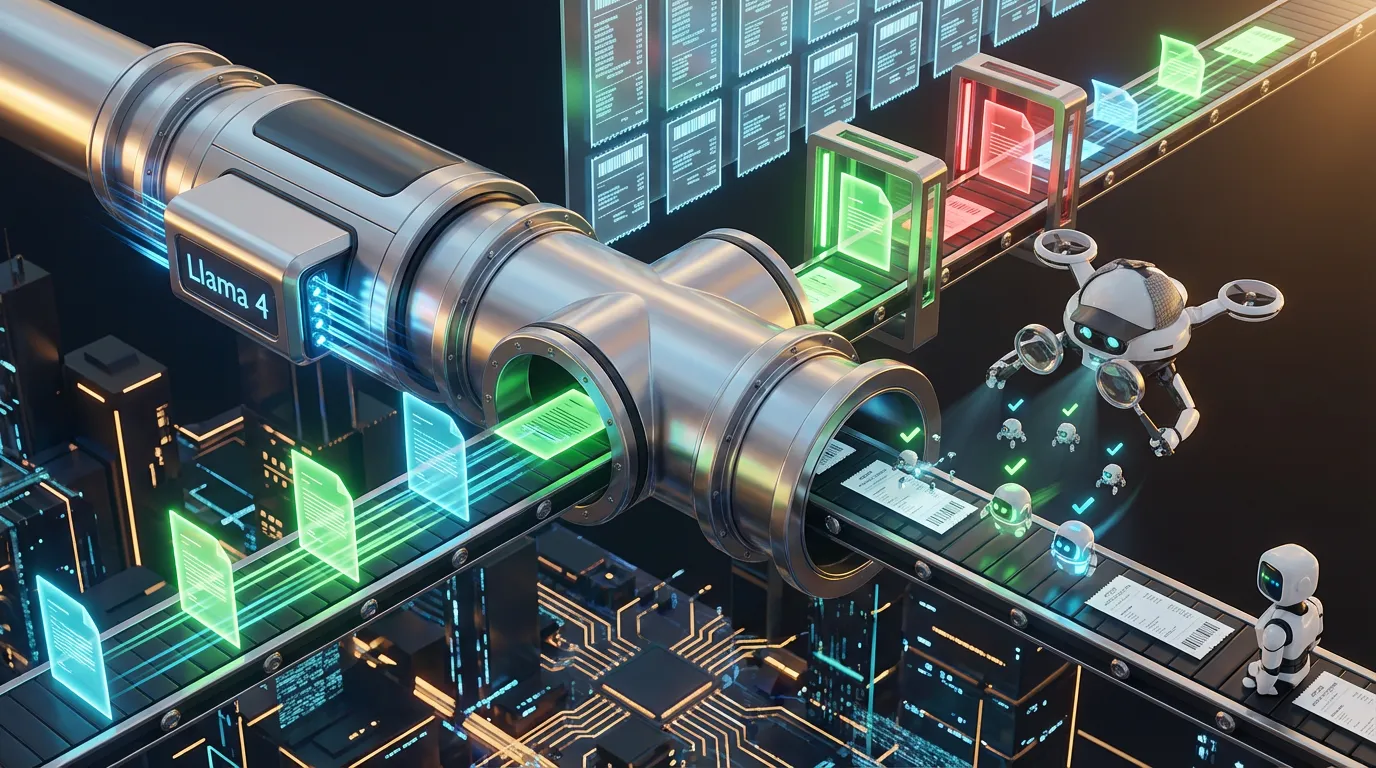

"orchestration": {

"router": "small_model_first",

"steps": [

{"name": "classify", "model": "small_llm", "max_ms": 60},

{"name": "fetch_facts", "k": 5},

{"name": "draft", "model": "small_llm", "schema": "AdSchemaV4"},

{"name": "critic", "model": "llm_critic", "checks": ["schema", "source", "tone"]},

{"name": "escalate", "if": "confidence < 0.85 or missing_source", "model": "premium_llm"},

{"name": "approve", "role": "editor", "if": "risk == medium"},

{"name": "publish", "destination": ["ads", "cms"], "webhook": "analytics_ingest"}

]

},

"telemetry": ["cost_per_asset", "first_pass_valid", "coverage", "time_to_live"]

}Ignore model names. The magic is the control plane’s agnosticism, plug in new models or critics, change routing rules, adapt policies, without retraining humans every quarter.
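To make the `small_model_first` router and the `escalate` step concrete, here is a minimal Python sketch. It assumes a "model" is just a callable that returns a draft with a critic confidence score; the `Draft` shape, the `route` function, and both stub models are hypothetical illustrations, not part of any vendor SDK. The 0.85 threshold and the cap of two premium calls are taken from the blueprint.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Draft:
    text: str
    confidence: float   # critic's score in [0, 1] (assumed scale)
    has_source: bool    # True when every claim cites a retrieved fact
    model: str

# A "model" here is any callable that takes a brief and returns a Draft.
Model = Callable[[str], Draft]

def route(brief: str, small: Model, premium: Model,
          min_confidence: float = 0.85, max_premium_calls: int = 2) -> Draft:
    """Draft with the small model first; escalate only while the draft is weak."""
    draft = small(brief)
    premium_calls = 0
    while ((draft.confidence < min_confidence or not draft.has_source)
           and premium_calls < max_premium_calls):
        draft = premium(brief)
        premium_calls += 1
    return draft

# Stub models so the sketch runs without any provider SDK.
def small_stub(brief: str) -> Draft:
    return Draft(f"[small] {brief}", confidence=0.70, has_source=True, model="small_llm")

def premium_stub(brief: str) -> Draft:
    return Draft(f"[premium] {brief}", confidence=0.93, has_source=True, model="premium_llm")

result = route("Spring offer headline", small_stub, premium_stub)
print(result.model)  # premium_llm
```

Because the router only sees callables, swapping a model means passing a different function. That is the agnosticism the blueprint is after.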

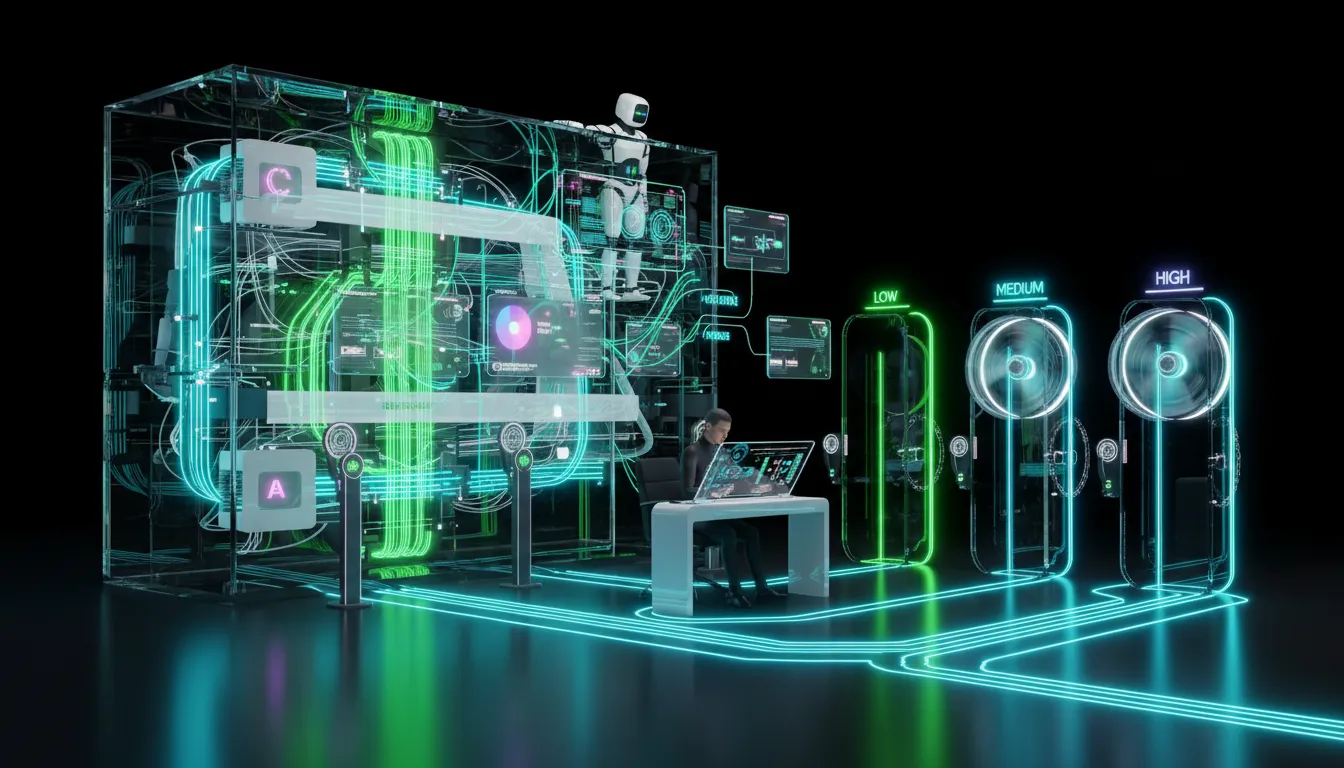

The maturity ladder: Crawl, walk, run, do not faceplant

| Stage | Looks like this | Prioritize | Common trap |
|---|---|---|---|
| 0: Assistive | Copying prompts; pasting output; chaos by clipboard | Standardize output and schema | No consistency, nothing automates downstream |
| 1: Centralized prompts | Shared prompts, sources pasted by hand | Cache basics, create reusable templates | Token waste, questionable sourcing |
| 2: Retrieval-first | Facts come from versioned truth stores; critic layer enforces | Audit logging, critic-passing content | Skipping provenance just to move faster |
| 3: Hybrid agents | Model router; humans gate high or medium risk | Budgets, stopping rules, review cycles | Agent loops become invisible, expensive time bombs |
| 4: Closed loop | Performance feedback reroutes variant creation and spend | Holdouts, incremental improvement tracking | Optimizing for unaligned or vanity metrics |

Build versus buy: The only wrong option is all-in on one bet

| Path | Good for | Best strength | Tradeoff |
|---|---|---|---|
| Agency stack | Teams wanting speed and results | Hands-off velocity, end-to-end launch | Control gaps, data residency blind spots |
| AI CMS suite | Personalization and on-brand assets | Governance close to publish point | Siloed channels, little orchestration |
| No-code orchestrator | Rapid iterators, scale-up mid-market | Rich connectors, speed to ship | Encoding policy is painful, limits on deep governance |
| Custom control plane | Big, complex orgs; multi-region, compliance | Full routing, policy-as-code, accountable logs | High engineering lift, long-term upkeep |

The only healthy answer is hybrid by default. Start with a proven stack, extend with no-code where it makes you faster, and bridge policy or data gaps with custom glue. Victory is not elegance, it is audit-ready outputs you can afford to scale.

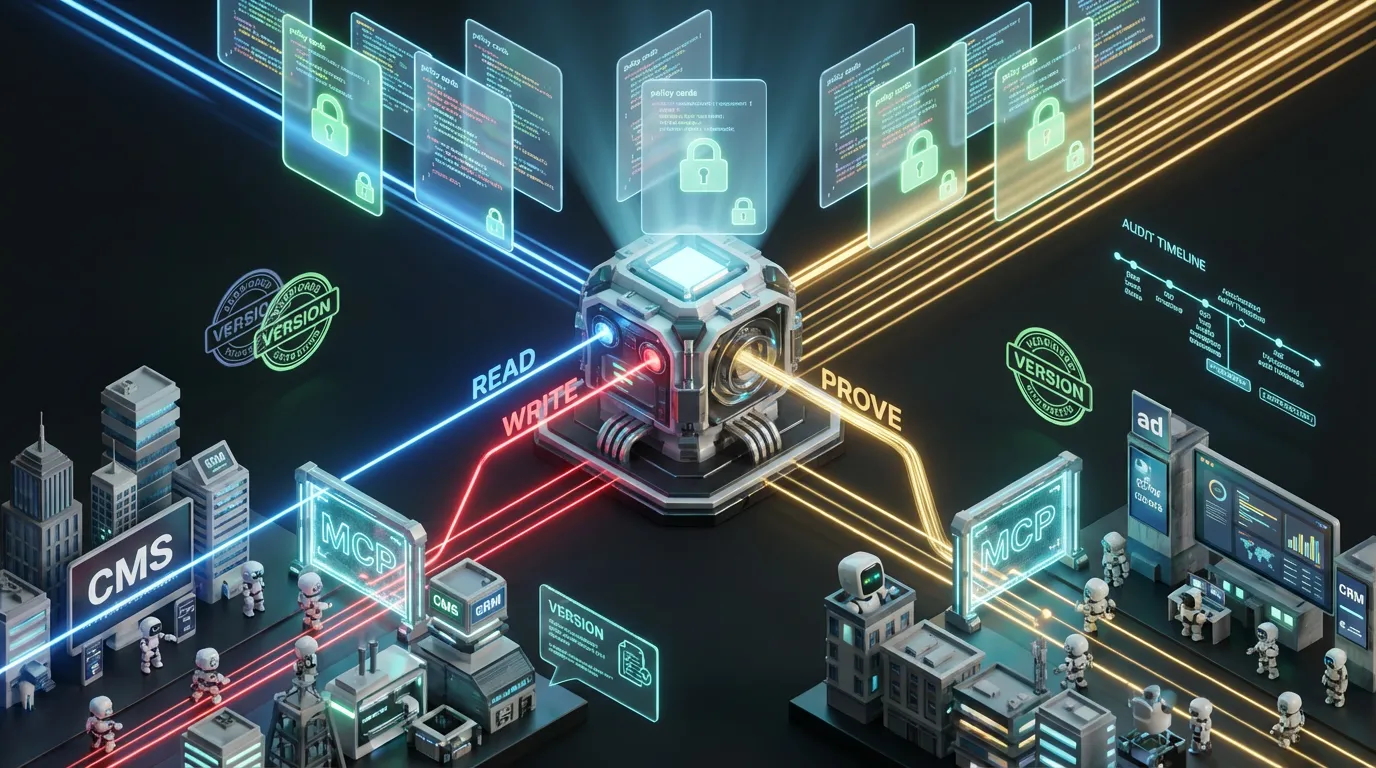

Governance before scale or your controls will become your next incident

- Risk tiers. Low risk gets autopilot, medium needs editor review, high risk requires legal or brand signoff plus diff and citations.

- Edit budgets. Cap how many template tweaks can go live per day, no more infinite “just one more” variant loops.

- Agent sandboxes. Agents are allowed only pre-registered tools or domains, not the whole internet. Every action gets logged and can be replayed.

- Kill switches. A literal button pauses flows, reverts bad launches, or reroutes bot traffic instantly, no Slack war rooms required.

- Receipts everywhere. Every output stamps model version, prompt ID, sources, reviewer, because audits are inevitable.
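The risk tiers and receipts above can be expressed in a few lines of code. The sketch below is one possible shape, not a prescribed implementation: `RISK_ROUTES` mirrors the `risk` policy in the blueprint, while `required_reviewers`, `make_receipt`, and the receipt field names are hypothetical. Treating an unknown tier as high risk is an assumption (fail closed), and the high tier is read as "roles eligible to sign off".

```python
import hashlib
from datetime import datetime, timezone
from typing import Optional

# Reviewer roles per risk tier, mirroring the "risk" policy in the blueprint.
RISK_ROUTES = {"low": [], "medium": ["editor"], "high": ["legal", "brand"]}

def required_reviewers(risk: str) -> list:
    """Return roles eligible to sign off; an empty list means auto-publish."""
    if risk not in RISK_ROUTES:
        # Assumption: unknown tiers fail closed to the strictest route.
        return RISK_ROUTES["high"]
    return RISK_ROUTES[risk]

def make_receipt(text: str, model_version: str, prompt_id: str,
                 sources: list, reviewer: Optional[str]) -> dict:
    """Build an audit record for one output (field names are illustrative)."""
    return {
        "content_sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        "model_version": model_version,
        "prompt_id": prompt_id,
        "sources": sorted(sources),
        "reviewer": reviewer,  # None for auto-published low-risk assets
        "stamped_at": datetime.now(timezone.utc).isoformat(),
    }

receipt = make_receipt("Cut reporting time in half.", "small_llm@2025-10",
                       "prompt-017", ["claims/reporting"], reviewer=None)
print(required_reviewers("medium"), receipt["content_sha256"][:8])
```

Hashing the content rather than storing it keeps the receipt small and still lets an auditor confirm that a live asset matches what was approved.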

FinOps for agent workflows: The cost controls CMOs covet

Agentic automation fails quietly on cost, not just botched tone. Win with structure, from caching to routing and validation.

- Invariants = cache first. Brand prompt, legalese, and boilerplate should never rack up token bills on repeat.

- Route by workload. Small models handle retrieval, classification, and critique, only escalate to premium when the job justifies it.

- Schema rules first. Enforce strict validation; if output breaks schema, fix with a smaller model instead of dumping context to something pricey.

- Budget-aware orchestration. Set per-asset and per-campaign ceilings. Trigger early stops when spend rises faster than quality.
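As an illustration of budget-aware orchestration, the following sketch enforces a per-asset ceiling and a cap on premium calls. The default values come from the blueprint's `budget` policy; the `BudgetGuard` class and its method names are hypothetical. It covers hard ceilings only and does not attempt the "spend rises faster than quality" early stop, which would need a quality signal.

```python
from dataclasses import dataclass

@dataclass
class BudgetGuard:
    per_asset_usd: float = 0.40        # ceiling from the blueprint's budget policy
    max_premium_invocations: int = 2   # cap from the same policy
    spent_usd: float = 0.0
    premium_calls: int = 0

    def can_afford(self, cost_usd: float, premium: bool = False) -> bool:
        """True if the call fits under both the spend ceiling and the premium cap."""
        if premium and self.premium_calls >= self.max_premium_invocations:
            return False
        return self.spent_usd + cost_usd <= self.per_asset_usd

    def charge(self, cost_usd: float, premium: bool = False) -> None:
        """Record a call, or raise so the orchestrator can stop early."""
        if not self.can_afford(cost_usd, premium):
            raise RuntimeError("budget exceeded: stop and route to a human")
        self.spent_usd += cost_usd
        if premium:
            self.premium_calls += 1

guard = BudgetGuard()
guard.charge(0.02)                 # small-model draft (illustrative cost)
guard.charge(0.15, premium=True)   # first escalation
guard.charge(0.15, premium=True)   # second escalation
print(guard.can_afford(0.01, premium=True))  # False: premium cap reached
```

Checking `can_afford` before each step lets the orchestrator skip an escalation gracefully instead of discovering the overrun on the invoice.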

| Metric | Why it matters | Healthy signal |
|---|---|---|
| Cost per asset | What the business pays, no more, no less | Flat or declining as output increases |
| First-pass validity | Does the machine hit approval in one? | 80 percent or better trending up |
| Source coverage | Approved claims actually cited | Regulated, 95 percent or above |
| Time to live | Lag from data change to asset in market | Minutes, not days |
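The three ratio metrics in the table can be computed from per-asset logs. This is a sketch under assumptions: the `AssetLog` fields and the `summarize` function are hypothetical, and the sample numbers are made up for illustration. Time to live is omitted because it needs timestamps from your data and publishing systems.

```python
from dataclasses import dataclass

@dataclass
class AssetLog:
    cost_usd: float
    passed_first_review: bool
    claims_total: int
    claims_cited: int

def summarize(logs: list) -> dict:
    """Aggregate per-asset logs into cost, validity, and coverage metrics."""
    if not logs:
        raise ValueError("no assets logged")
    total_claims = sum(log.claims_total for log in logs)
    cited_claims = sum(log.claims_cited for log in logs)
    return {
        "cost_per_asset": sum(log.cost_usd for log in logs) / len(logs),
        "first_pass_validity": sum(log.passed_first_review for log in logs) / len(logs),
        # Assumption: with no claims at all, coverage counts as complete.
        "source_coverage": cited_claims / total_claims if total_claims else 1.0,
    }

# Illustrative data only.
logs = [
    AssetLog(cost_usd=0.10, passed_first_review=True, claims_total=2, claims_cited=2),
    AssetLog(cost_usd=0.30, passed_first_review=False, claims_total=2, claims_cited=1),
]
metrics = summarize(logs)
print(metrics)
```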

Security without drama: Even the best agent makes dumb mistakes

- Scoped identity. Every flow tied to a user or agent with minimum needed privileges; no more super-admin “test” accounts.

- Data partitioning. Segment product and customer data, keep PII and confidential info away from prompts by default.

- Tool allowlists. Agents can call only integrations you have approved, with enforced rate and output limits.

- Receipts and logs. Every interaction, call, and asset is logged. A missing audit trail is a no-go for scale.

- Mandatory staging. Drafts land in a sandbox unless risk is low and policy passes non-negotiable checks.
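A tool allowlist with rate limits and logging might look like the sketch below. `ToolGate`, its methods, and the `crm_lookup` tool are hypothetical names for illustration. The rate limit here is counted per tool across all agents, which is a simplifying assumption; a per-agent limit would key the call history on the agent ID as well.

```python
import time
from typing import Any, Callable

class ToolGate:
    """Lets agents call only registered tools, within a per-minute rate limit."""

    def __init__(self, max_calls_per_minute: int = 30):
        self._tools = {}   # tool name -> callable
        self._calls = {}   # tool name -> timestamps of recent calls
        self.max_calls_per_minute = max_calls_per_minute
        self.audit_log = []

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn
        self._calls[name] = []

    def _log(self, agent_id: str, name: str, allowed: bool) -> None:
        self.audit_log.append({"agent": agent_id, "tool": name, "allowed": allowed})

    def call(self, agent_id: str, name: str, **kwargs: Any) -> Any:
        if name not in self._tools:
            self._log(agent_id, name, False)
            raise PermissionError(f"tool not on allowlist: {name}")
        now = time.monotonic()
        recent = [t for t in self._calls[name] if now - t < 60]
        if len(recent) >= self.max_calls_per_minute:
            self._log(agent_id, name, False)
            raise RuntimeError(f"rate limit reached for {name}")
        recent.append(now)
        self._calls[name] = recent
        self._log(agent_id, name, True)
        return self._tools[name](**kwargs)

gate = ToolGate(max_calls_per_minute=2)
gate.register("crm_lookup", lambda account_id: {"id": account_id})
print(gate.call("agent-1", "crm_lookup", account_id="A1"))
```

Denied calls are logged as well as allowed ones, so the audit trail shows what an agent tried to do, not only what it did.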

Where the control plane compounds: Four workflows that pay off

1. Performance creative with variant factories

- Every night, new offers and claims are refreshed from product databases.

- LLMs draft variant copy for target audiences, small models first, escalating only on risk or quality gaps.

- Critic model enforces structure and evidence. Only the best go to premium polish or human gatekeeping.

- Deployed winners track real uplift with holdout groups.

Result: More variant assets, less manual grunt work, smarter spend allocation.
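Tracking uplift against a holdout comes down to comparing conversion rates between the group that saw the new variants and the group that did not. A minimal sketch follows; the function name and the sample numbers are hypothetical, and it reports relative lift only, with no significance test.

```python
def relative_lift(treated_conversions: int, treated_size: int,
                  holdout_conversions: int, holdout_size: int) -> float:
    """Relative lift of the treated group's conversion rate over the holdout's."""
    if treated_size <= 0 or holdout_size <= 0:
        raise ValueError("both groups need at least one member")
    treated_rate = treated_conversions / treated_size
    holdout_rate = holdout_conversions / holdout_size
    if holdout_rate == 0:
        raise ValueError("holdout had no conversions; lift is undefined")
    return (treated_rate - holdout_rate) / holdout_rate

# Illustrative numbers: 2.6% conversion with variants vs 2.0% in the holdout.
lift = relative_lift(treated_conversions=260, treated_size=10_000,
                     holdout_conversions=200, holdout_size=10_000)
print(f"{lift:.0%}")  # 30%
```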

2. Lifecycle email and in-app nudges

- AI router detects user intent and context in milliseconds.

- Retrieve three facts and a compliant call to action from truth stores.

- Draft, validate, and send, all in schema-constrained, brand-safe output.

- Publish to ESP or app, logging provenance to each profile.

Result: Hyper-personalization at scale without scary cost curves.

3. Automated sales enablement

- Call summaries turned into actionable bullets with follow-up triggers.

- Pipeline and account data matched to approved proof points by region, segment, industry.

- Draft and escalate only for deals above policy risk cutoffs.

- All assets, sources, and approvals logged to CRM.

Result: Sales reps do actual selling instead of living in CRM limbo.

4. Support macros and knowledge surfacing

- Auto-mine resolved tickets for new macro candidates.

- Draft support docs with inline citations and screenshots.

- Run the critic, then sandbox-test for real outcomes before publishing widely.

- Auto-expire outdated content per freshness policy.

Result: Faster first responses, fewer escalations, and continuously aligned support.
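Auto-expiry under a freshness policy can be as simple as comparing each document's last verification date to a cutoff. In this sketch the `expired` function, the document IDs, and the dates are hypothetical. The 7-day default borrows the `freshness_days` value the blueprint sets for its vector index; a support knowledge base may well want a different window.

```python
from datetime import date, timedelta

def expired(docs: dict, today: date, freshness_days: int = 7) -> list:
    """Return IDs of documents last verified more than `freshness_days` ago."""
    cutoff = today - timedelta(days=freshness_days)
    return sorted(doc_id for doc_id, verified in docs.items() if verified < cutoff)

# Illustrative data: document ID -> date the content was last verified.
docs = {
    "macro-refund": date(2025, 10, 1),
    "macro-login": date(2025, 10, 24),
}
stale = expired(docs, today=date(2025, 10, 25))
print(stale)  # ['macro-refund']
```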

What you must centralize vs distribute

| Centralize | Distribute |
|---|---|
| Policies, schemas, risk tiers, truth stores, routing rules, governance | Prompt flavor, channel-specific tactics, local context and experimentation |
| Identities, approvals, observability or telemetry | Creative ideation, micro-targeted tests, asset tweaks for edge cases |

TL;DR: Centralize “how not to get fired.” Distribute “how to win in your market.” That is your recipe for creative speed with real accountability.

Your first 30 days: Ship momentum, skip the waterfall

- Pick one workflow (email nudge, ad variant, or support macro). Write an unbreakable schema.

- Collect reliable sources: offers, proof, and the latest claims. Version and date-stamp everything.

- Spin up a router. Use a fast, cheap model for routing and retrieval. Draft with an LLM at the right price point.

- Add critics that enforce schema, source proof, and brand tone. Nothing ships without receipts.

- Set explicit spend limits: a per-asset ceiling and a premium LLM cap. Stop early when limits are breached.

- Gate by risk. Auto-publish only low risk. Medium goes to an editor, high to legal or brand council.

- Instrument every phase. Measure cost, validity, ship speed, and real-world performance.

- Run a live test cell. Hold out a manual group as baseline. Publish clear savings and outcome results.
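For the schema and critic steps in this checklist, a first version needs nothing beyond the standard library. The sketch below is an assumption-heavy illustration: `AD_SCHEMA` is a hypothetical schema invented for this example (it is not the `AdSchemaV4` named in the blueprint, whose fields are not specified), and the blocked phrases are taken from the blueprint's `claims` policy. Tone checks are left out because they typically need a model.

```python
# Hypothetical schema: field name -> (expected type, max length).
AD_SCHEMA = {
    "headline": (str, 70),
    "body": (str, 280),
    "cta": (str, 30),
    "source_ids": (list, 10),
}
BLOCKED_PHRASES = ["guaranteed", "best-in-class"]  # from the claims policy

def critique(draft: dict) -> list:
    """Return every schema or policy problem found; an empty list means pass."""
    problems = []
    for name, (kind, max_len) in AD_SCHEMA.items():
        if name not in draft:
            problems.append(f"missing field: {name}")
        elif not isinstance(draft[name], kind):
            problems.append(f"wrong type for {name}")
        elif len(draft[name]) > max_len:
            problems.append(f"{name} too long")
    extra = set(draft) - set(AD_SCHEMA)
    problems += [f"unexpected field: {name}" for name in sorted(extra)]
    text = " ".join(str(draft.get(k, "")) for k in ("headline", "body", "cta")).lower()
    problems += [f"blocked phrase: {p}" for p in BLOCKED_PHRASES if p in text]
    if not draft.get("source_ids"):
        problems.append("no sources cited")
    return problems

good = {"headline": "Cut reporting time", "body": "Weekly reports in minutes.",
        "cta": "Start a trial", "source_ids": ["claim-12"]}
bad = {"headline": "Guaranteed growth", "body": 5, "source_ids": []}
print(critique(good))  # []
print(critique(bad))
```

Returning every problem at once, rather than failing on the first, gives a cheap repair model or a human editor the full list to fix in one pass.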

What not to do in 2025

- Do not launch fully autonomous publishing without lock-tight policies, critics, and rollbacks. The only thing easier than shipping badly is getting blamed for it.

- Do not chase leaderboard drama. Evaluate every model by edit time saved, acceptance rate, and true business cost, not leaderboard FOMO.

- Do not assume agentic equals cheap. Letting autonomous loops run wild is how budgets vaporize.

- Do not ignore parsing. If your content is not schema-first, neither your agent nor your auditors can parse it reliably.

Leadership FAQ

- Will this replace my team? No. AI automation eats copy-paste work. Your team sets taste, policy, and direction.

- Can we go “set and forget”? Only if you love brand risk. Smart control planes always keep humans in the high-leverage loop.

- How do I prove ROI? Monitor cost per asset, first-pass validity, and asset lift against control groups.

- Is this secure? Treat agents as interns: limited scope, every move audited, no wild west integrations.

The real take

The brands that win in AI-first marketing are not buying features; they are owning the orchestration layer. Platforms and vendors will keep shipping shiny new toys. The durable edge belongs to those who build a hybrid, model-agnostic control plane: small and fast models for routing and critique, premium only when proven, and everything stamped with proof. Make policy executable and receipts mandatory. Trust is about traceability and cost control, not just prompts. That is how you scale creative work, keep compliance happy, and turn automation from novelty into the marketing engine room.