Proof-First Automation: Modern Retrieval Stack

November 12, 2025

RAG Grows Up: Why Your Automation Stack Must Too

Two years ago, every dev under the sun cobbled together a Retrieval-Augmented Generation (RAG) system: slam some docs in a vector store, yank the top 3 chunks, and hope the large language model (LLM) says something useful. It worked for toy demos and low-stakes FAQs. It collapsed the moment you needed citations, tough analysis, or regulatory receipts. In 2025, the bar is higher: retrieval chains are now evidence engines. With GPT-5 and Google’s Gemini 2.0 models reshaping the landscape, the old chunk-and-hope pattern is a fast path to obsolescence. If your content ops, marketing automation, or sales workflows still bolt a dusty vector DB onto a chatbot, you are falling short on trust, performance, and budget.

This deep dive is your map to the new retrieval stack built for scale and scrutiny. Think knowledge graphs for structure, RDF-style triplets for atomicity, hybrid sparse plus dense search for full recall, and intent-aware rerankers for precision. Add policy-as-code and spend controls to get automation you can ship, measure, and defend, including to legal.

Beyond Chunk Stuffing: How Retrieval Became an Evidence Engine

Old RAG systems mashed up paragraphs and let the LLM play lawyer, judge, and improv comic. New-stack retrieval behaves like a meticulous analyst: it fetches multi-hop evidence, cross-checks claims, and leaves a verifiable paper trail that machines and humans can audit. This unlocks automation you can trust for pricing pages, product comparisons, regulated content, and the new wave of assistant-native UX.

What Changed Under the Hood

- Graph-structured retrieval: Do not just store paragraphs. Build knowledge graphs that map entities and relations, such as "Product X includes Feature Y, available only in Region Z." The model follows edges, not wishful thinking.

- Triplets as fact fuel: Break knowledge into subject-predicate-object triplets. Retrieval assembles answers from atomic, composable claims instead of fuzzy paragraphs.

- Hybrid search with dynamic balance: Dense search nails semantic queries. Sparse search pins down names, numbers, SKUs, and acronyms. Tune the mix per query for best-in-class recall.

- Instruction-following rerankers: New rerank models take plain-English intent like "prioritize recent case studies, skip forums." Relevance jumps and brittle rules fade out.

- Incremental indexing and provenance: Modern pipelines update indices in near real time and every retrieved fact carries source IDs. Your stack stays fresh and defensible.
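
To make the graph and triplet bullets concrete, here is a minimal in-memory sketch of a subject-predicate-object store with a bounded multi-hop walk. The `TripletStore` class, the predicate names, and the source IDs (`spec_sheet_01`, `regional_policy_07`) are hypothetical; a production stack would more likely sit on a graph database or an RDF store.

```python
from collections import defaultdict


class TripletStore:
    """Tiny subject-predicate-object store; every fact keeps its source ID."""

    def __init__(self):
        self._by_subject = defaultdict(list)

    def add(self, subject, predicate, obj, source_id):
        self._by_subject[subject].append((predicate, obj, source_id))

    def walk(self, start, max_hops=2):
        """Breadth-first walk returning every fact within max_hops of start."""
        seen, frontier, found = {start}, [start], []
        for _ in range(max_hops):
            next_frontier = []
            for node in frontier:
                for predicate, obj, source_id in self._by_subject.get(node, []):
                    found.append((node, predicate, obj, source_id))
                    if obj not in seen:
                        seen.add(obj)
                        next_frontier.append(obj)
            frontier = next_frontier
        return found


store = TripletStore()
# Hypothetical facts and source IDs, mirroring the Product X example above.
store.add("Product X", "includes", "Feature Y", "spec_sheet_01")
store.add("Feature Y", "available_in", "Region Z", "regional_policy_07")

for fact in store.walk("Product X", max_hops=2):
    print(fact)
```

With two hops the walk returns both facts, so an answer about Product X can carry the regional restriction along with the source ID that backs it. With `max_hops=1` only the first fact comes back.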

The Evidence Patterns That Work IRL

| Pattern | Method | Strengths | Weaknesses | Use Case |
|---|---|---|---|---|
| Vector-only RAG (Old School) | Embed text, cosine search top-k, stuff in prompt | Simple, quick to stand up | Misses specifics; brittle with numbers and SKUs | Walkthroughs, internal notes, low-risk docs |
| Graph RAG | Retrieve via entity and relation graph, multi-hop follow | Context-rich answers, handles policy and logic | Heavier setup; needs clean entity definition | Comparisons, audits, regulated surfaces |
| Triplet RAG | Store and combine subject-predicate-object triplets | Precise, cheap, modular retrieval | Triplet extraction and dedupe required | Specs, claims, price matrices, feature grids |
| Hybrid + Rerank | BM25 plus dense vector plus intent-aware rerank | High recall, excellent control | Complexity and tuning required | Docs with acronyms, jargon, IDs |
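
One way to picture the Hybrid + Rerank row is a weighted blend of normalized sparse and dense scores, with the weight chosen per query. The sketch below assumes you already have raw BM25 and vector scores per document. The 0.7/0.3 weights, the regex heuristic, and the document IDs are illustrative assumptions, not tuned values, and the rerank stage is left out.

```python
import re


def min_max(scores):
    """Scale a {doc_id: score} map to [0, 1]; a constant map becomes all 1.0."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc: 1.0 for doc in scores}
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}


def sparse_weight(query):
    """Hypothetical heuristic: favor sparse search for digits and acronyms."""
    has_exact_token = re.search(r"\d|\b[A-Z]{2,}\b", query) is not None
    return 0.7 if has_exact_token else 0.3


def hybrid_rank(query, sparse_scores, dense_scores, top_k=3):
    """Blend normalized scores and return the top_k document IDs."""
    w = sparse_weight(query)
    sparse_n, dense_n = min_max(sparse_scores), min_max(dense_scores)
    docs = set(sparse_n) | set(dense_n)
    blended = {
        d: w * sparse_n.get(d, 0.0) + (1 - w) * dense_n.get(d, 0.0) for d in docs
    }
    # Sort by score descending, then by ID so ties are deterministic.
    return sorted(blended, key=lambda d: (-blended[d], d))[:top_k]


# Hypothetical raw scores from a BM25 index and a vector index.
sparse = {"sku_page": 12.0, "blog": 2.0}
dense = {"blog": 0.9, "sku_page": 0.5, "faq": 0.7}

print(hybrid_rank("SKU 4417 warranty", sparse, dense))
print(hybrid_rank("how do refunds work", sparse, dense))
```

The SKU query puts `sku_page` first because the exact-match signal dominates, while the conversational query puts `blog` first because the semantic signal does.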

No More Vibes Only: Design Proof-First Outputs

Forget hand-wavy assertions. You need answers and proofs machines can check and audit. That means structured outputs with citations, provenance, and constraints.

    {
      "answer": {
        "text": "Gemini 2.0 Flash supports a 1,000,000 token context window.",
        "citations": [
          {
            "source_id": "gemini_2_flash_ga",
            "type": "official_blog",
            "verbatim": "Gemini 2.0 Flash... features a 1 million-token context window",
            "url": "https://blog.google/technology/google-deepmind/gemini-model-updates-february-2025/"
          }
        ],
        "constraints": {"model": ["Gemini 2.0 Flash"], "release": ["2025"]},
        "risk": "low",
        "provenance": {
          "retrieval": {
            "strategy": "hybrid",
            "dense_model": "Gemini 2.0 Flash",
            "sparse": "bm25_v3",
            "reranker": "intention_v2"
          },
          "graph_hops": 1,
          "triplets_used": ["Gemini 2.0 Flash → supports → 1,000,000 token context window"]
        }
      }
    }

If someone asks, “Why did your copilot say that?” you can produce receipts with no guesswork and no post hoc hunting.
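
A machine-checkable record is only useful if something actually checks it. As a rough sketch, the function below rejects records where a numeric claim has no citation, a citation is missing a field, or provenance is absent. The `check_answer` name and the problem codes are hypothetical, and the field list simply mirrors the example record above.

```python
import re

REQUIRED_CITATION_FIELDS = ("source_id", "type", "verbatim", "url")


def check_answer(record):
    """Return a list of problem codes; an empty list means the record passes."""
    answer = record.get("answer", {})
    problems = []
    citations = answer.get("citations", [])
    has_number = re.search(r"\d", answer.get("text", "")) is not None
    if has_number and not citations:
        problems.append("numeric_claim_without_source")
    for i, citation in enumerate(citations):
        for field in REQUIRED_CITATION_FIELDS:
            if not citation.get(field):
                problems.append(f"citation_{i}_missing_{field}")
    if "provenance" not in answer:
        problems.append("missing_provenance")
    return problems


record = {
    "answer": {
        "text": "Gemini 2.0 Flash supports a 1,000,000 token context window.",
        "citations": [{
            "source_id": "gemini_2_flash_ga",
            "type": "official_blog",
            "verbatim": "Gemini 2.0 Flash... features a 1 million-token context window",
            "url": "https://blog.google/technology/google-deepmind/gemini-model-updates-february-2025/",
        }],
        "provenance": {"graph_hops": 1},
    }
}
print(check_answer(record))  # prints []
```

This check only confirms that the receipts are attached. Confirming that the quoted passage really supports the claim is a separate, harder critic.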

Blueprint: Modern Retrieval Stack Architecture

    [Ingest]
      → Parse PDFs, HTML, Excel, CMS
      → Extract entities, relations, generate triplets, build graph
      → Chunk for embedding, build sparse indices
    [Retrieve]
      → Query hybrid (BM25 + vector)
      → Intent-based rerank
      → Optional graph walk, triplet reassembly
    [Assemble]
      → Select valid facts, attach sources, resolve conflicts
      → Generate to compliance schema
    [Critic]
      → Validate schema → claims → locale → cost → risk
      | pass → Publish/Handoff
      | fail → Auto-fix/Escalate
    [Monitor]
      → Log validity, latency, cost per asset

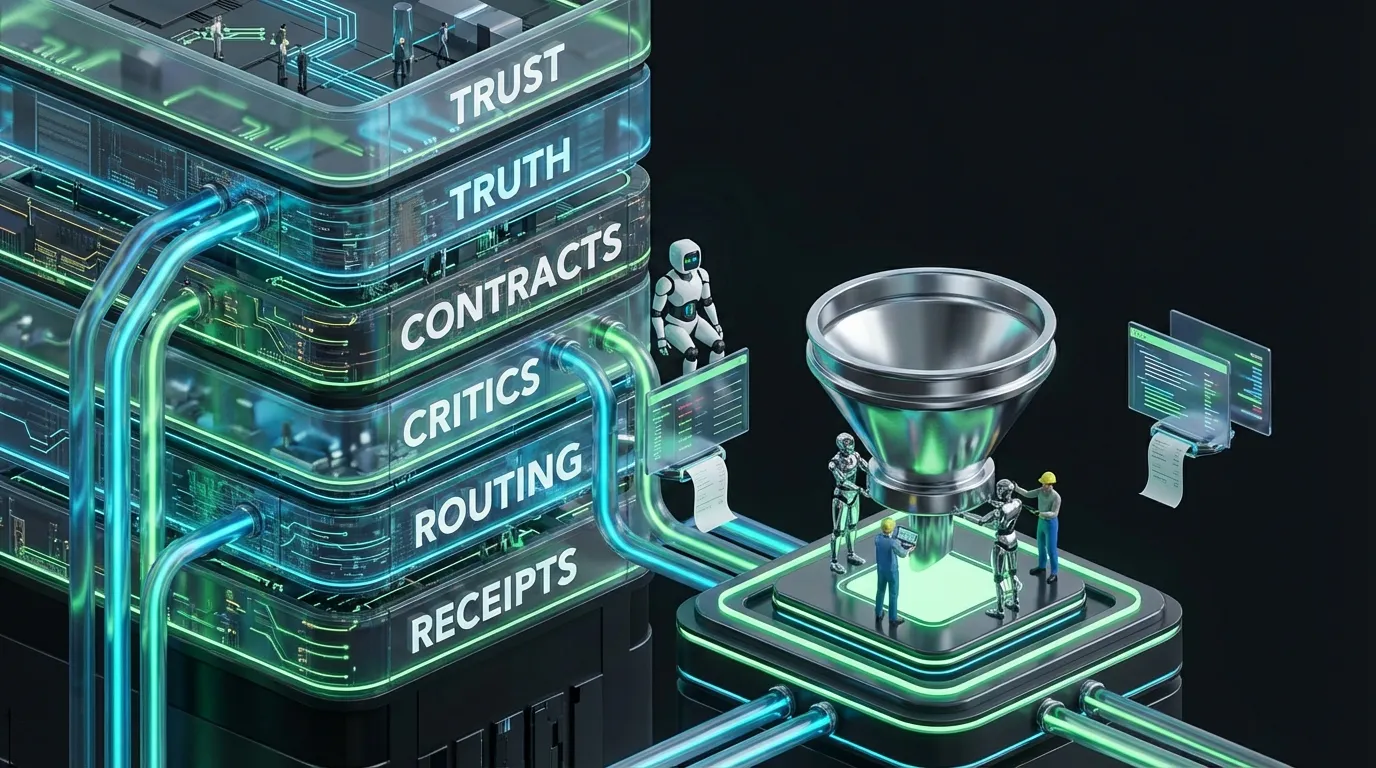
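
The Critic stage in the blueprint can be sketched as an ordered chain that stops at the first failure, so later critics can assume earlier ones passed. Everything here is illustrative: the asset fields, the individual checks, and the 1.20 USD cap are assumptions, and the sketch folds the auto-fix and escalate branches into one outcome for brevity.

```python
MAX_ASSET_USD = 1.20  # assumed hard cap per asset
REQUIRED_KEYS = ("text", "citations", "region", "allowed_regions", "cost_usd", "risk")


def schema_critic(asset):
    return all(key in asset for key in REQUIRED_KEYS)


def claims_critic(asset):
    return len(asset["citations"]) > 0


def locale_critic(asset):
    return asset["region"] in asset["allowed_regions"]


def cost_critic(asset):
    return asset["cost_usd"] <= MAX_ASSET_USD


def risk_critic(asset):
    # Assumption: only low-risk assets may ship without a human gate.
    return asset["risk"] == "low"


# Order follows the blueprint: schema, claims, locale, cost, risk.
CRITICS = [
    ("schema", schema_critic),
    ("claims", claims_critic),
    ("locale", locale_critic),
    ("cost", cost_critic),
    ("risk", risk_critic),
]


def run_critics(asset):
    """Return ("publish", None) or ("fix_or_escalate", name_of_failed_critic)."""
    for name, critic in CRITICS:
        if not critic(asset):
            return ("fix_or_escalate", name)
    return ("publish", None)


asset = {
    "text": "47% faster invoice processing",
    "citations": ["case_study_2381"],
    "region": "US",
    "allowed_regions": ["US"],
    "cost_usd": 0.40,
    "risk": "low",
}
print(run_critics(asset))
print(run_critics({**asset, "citations": []}))
```

Running schema first is deliberate: the remaining critics index into the asset directly and would raise on a missing key.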

Policy as Code or Policy as Doorstop

Rules in PDFs are how your agents ignore you. Encode policy so your automations enforce rules proactively, every time, everywhere.

    {
      "policy_pack": {
        "claims": {
          "numeric_require_source": true,
          "allowed_sources": ["official_docs", "pricing", "case_study"],
          "blocked_phrases": ["guaranteed", "number one", "best in class"]
        },
        "routing": {
          "prefer_small_models": true,
          "escalate_on": ["missing_source", "novel_comparison", "security"]
        },
        "review": {
          "auto_publish": ["typo_fix", "alt_text", "format_tweak"],
          "editor": ["comparisons", "new products"],
          "legal": ["pricing", "privacy", "medical"]
        }
      }
    }
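
A policy pack only enforces anything once code reads it. The sketch below shows one possible enforcement layer for the `claims` and `review` sections above. The function names, the violation codes, and the choice to send unknown content types to an editor are assumptions for illustration.

```python
import re

# Subset of the policy pack above, loaded as a plain dict.
POLICY = {
    "claims": {
        "numeric_require_source": True,
        "allowed_sources": ["official_docs", "pricing", "case_study"],
        "blocked_phrases": ["guaranteed", "number one", "best in class"],
    },
    "review": {
        "auto_publish": ["typo_fix", "alt_text", "format_tweak"],
        "editor": ["comparisons", "new products"],
        "legal": ["pricing", "privacy", "medical"],
    },
}


def check_claims(text, source_type, policy=POLICY):
    """Return violation codes for one claim; source_type is None if uncited."""
    rules = policy["claims"]
    violations = []
    lowered = text.lower()
    for phrase in rules["blocked_phrases"]:
        if phrase in lowered:
            violations.append(f"blocked_phrase:{phrase}")
    has_number = re.search(r"\d", text) is not None
    if rules["numeric_require_source"] and has_number and source_type is None:
        violations.append("missing_source")
    if source_type is not None and source_type not in rules["allowed_sources"]:
        violations.append(f"disallowed_source:{source_type}")
    return violations


def reviewer_for(content_type, policy=POLICY):
    """Pick the strictest matching review lane."""
    for lane in ("legal", "editor", "auto_publish"):
        if content_type in policy["review"][lane]:
            return lane
    return "editor"  # assumption: unknown content types get a human editor


print(check_claims("Guaranteed 47% faster", None))
print(check_claims("47% faster invoice processing", "case_study"))
print(reviewer_for("pricing"), reviewer_for("alt_text"))
```

Simple substring matching on blocked phrases will miss paraphrases, so treat it as a floor rather than a complete claims filter.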

Multimodal Automation: How Retrieval Powers Every Surface

- Text automation: Answers cite their proof and link to source. Good for assistants, RFPs, and white-glove sales.

- Images: Retrieval provides exact colors, specs, and compliance overlays. OCR backs up asset checks.

- Video: Script builders and on-screen callouts draw from grounded claims. Visual critics catch issues before you publish.

- Audio: Claims and disclaimers are evidence-backed. Pacing critics keep voiceovers from sounding robotic.

How to Not Blow Your Budget: Cost Controls for Retrieval and Agents

Modern stacks love to burn tokens and call frontier models. Enforce thrift at every layer, or finance will throttle your API keys.

| Cost Leak | Symptom | Rapid Fix |
|---|---|---|
| Overused big models | Everything hits GPT-5 or top-tier models by default | Small-first routing; escalate only on miss or conflict |
| Planning loops without end | Agents keep refining and nothing ships | Confidence-based early stopping; cap deltas |
| Output variant sprawl | Hundreds of near-duplicates per prompt | Hard output caps and automatic deduplication |
| Over-retrieval | Top-k set to 20 just in case | Adaptive k and reranker score ceilings |

    {
      "budget_control": {
        "max_asset_usd": 1.20,
        "retry_limit": 1,
        "max_frontier_calls": 1,
        "retrieval_limits": {"topk_max": 8, "graph_hops_max": 2},
        "router": {"prefer_small": true, "escalate_on": ["missing_source", "low_confidence"]}
      }
    }
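
The caps above can be enforced with a small per-asset guard that refuses a call before the money is spent. This is a sketch under assumptions: `BudgetGuard`, `pick_model`, and `clamp_k` are hypothetical names, the model tiers are just the strings "small" and "frontier", and the per-call costs would come from your own metering.

```python
class BudgetExceeded(Exception):
    """Raised when a call would break a hard cap."""


class BudgetGuard:
    def __init__(self, max_asset_usd=1.20, max_frontier_calls=1):
        self.max_asset_usd = max_asset_usd
        self.max_frontier_calls = max_frontier_calls
        self.spent_usd = 0.0
        self.frontier_calls = 0

    def charge(self, usd, frontier=False):
        """Record a call's cost, or raise before the spend happens."""
        if frontier and self.frontier_calls >= self.max_frontier_calls:
            raise BudgetExceeded("frontier call cap reached")
        if self.spent_usd + usd > self.max_asset_usd:
            raise BudgetExceeded("asset budget cap reached")
        self.spent_usd += usd
        if frontier:
            self.frontier_calls += 1


ESCALATE_ON = ("missing_source", "low_confidence")


def pick_model(guard, signals):
    """Small-first routing: escalate only on a listed signal with budget left."""
    wants_frontier = any(signal in ESCALATE_ON for signal in signals)
    if wants_frontier and guard.frontier_calls < guard.max_frontier_calls:
        return "frontier"
    return "small"


def clamp_k(requested_k, topk_max=8):
    """Keep top-k within the retrieval limit, never below 1."""
    return max(1, min(requested_k, topk_max))


guard = BudgetGuard()
print(pick_model(guard, ["low_confidence"]))  # frontier call still available
guard.charge(0.90, frontier=True)
print(pick_model(guard, ["low_confidence"]))  # cap reached, falls back to small
print(clamp_k(20))
```

Checking the cap before incrementing the spend means a rejected call leaves the running total untouched, which keeps cost logs honest.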

Prove It Actually Works: Metrics Over Vibes

- First-pass validity: Percentage of answers passing all critics on first try

- Source coverage: Percentage of numeric or comparative claims with valid sources attached

- Eligibility: Share of answers approved for live use

- Time to publish: Minutes from brief to go live

- Provable asset cost: Total compute and human effort per evidence-attached asset
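
Two of these metrics reduce to simple ratios over run logs. The sketch below assumes a hypothetical log shape: each run records how many attempts it took and whether it passed, and each claim records its kind and an optional `source_id`.

```python
def first_pass_validity(runs):
    """Share of assets that passed every critic on the first attempt."""
    if not runs:
        return 0.0
    first_pass = sum(1 for run in runs if run["passed"] and run["attempts"] == 1)
    return first_pass / len(runs)


def source_coverage(claims):
    """Share of numeric or comparative claims that carry a source ID."""
    relevant = [c for c in claims if c["kind"] in ("numeric", "comparative")]
    if not relevant:
        return 1.0  # nothing to cover
    sourced = sum(1 for c in relevant if c.get("source_id"))
    return sourced / len(relevant)


# Hypothetical log records.
runs = [
    {"passed": True, "attempts": 1},
    {"passed": True, "attempts": 1},
    {"passed": True, "attempts": 2},
    {"passed": True, "attempts": 1},
]
claims = [
    {"kind": "numeric", "source_id": "case_study_2381"},
    {"kind": "comparative", "source_id": None},
    {"kind": "numeric", "source_id": "pricing_page"},
    {"kind": "descriptive"},
]

print(first_pass_validity(runs))  # 0.75
print(source_coverage(claims))
```

An asset that passes only after a retry still counts against first-pass validity, which is the point: the metric measures how often the pipeline gets it right without rework.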

Reference Schema Your Stack Should Ship

    {
      "audience": {
        "name": "midmarket_usa_ops",
        "goals": ["cut manual workload", "meet SLAs"],
        "proof_weight": 0.8,
        "price_sensitive": 0.6,
        "region": ["US"]
      },
      "answer_card": {
        "headline": "Automate Approvals with Audit Trails",
        "proof": {"text": "47% faster invoice processing", "source_id": "case_study_2381"},
        "cta": {"label": "See demo", "url": "/demo"},
        "compliance": {"region_lock": ["US"], "legal_tag": "finance_q4"}
      }
    }

Playbooks: Right-size Your Stack by Team Size

Solo Creators and Micro-Teams

- Start with a triplet store for product facts and core claims.

- Hybrid search plus light rerank with tight k (≤3).

- Auto-publish only low-risk outputs such as alt text and routine updates.

Mid-Market Orgs

- Map entity relationships: products, regions, pricing, features.

- Adopt instruction-following rerankers for all search.

- Add risk flagging and provenance manifests per content type.

Enterprise

- Regionalize policy packs and enforce claims and privacy with code checks.

- Isolate third-party data using clean rooms and usage logs.

- Benchmark retrieval with in-house evaluation suites.

The 30-Day Go-Live Plan for Modern Retrieval

Week 1: Inventory and Truth Extraction

- Pull pricing, specs, and validated stats into a structured truth pack.

- Generate entity lists and initial triplets, then resolve ambiguity and ID mismatches.

- Define schemas for FAQs, answer cards, and comparisons.

Week 2: Plug in Hybrid Retrieval and Reranking

- Deploy sparse plus dense search with intent-driven rerankers.

- Use adaptive k, log retrieval confidence, and enforce citation on every answer.

- No source equals no ship.

Week 3: Critics and Budget Controls

- Add critics for schema, claim proof, locale, risk tier, and formatting.

- Set hard caps on cost per asset and define escalate_on rules.

- Autofix small fails such as style and tone with safe default behaviors.

Week 4: Metrics and Iteration, Then Expand

- Track pass rates, source coverage, and lead time to live.

- Cull low-value critics and turn up signal thresholds.

- Add new content types only after baseline metrics hold for a full week.

If It Breaks: Fast Fixes for Classic Failure Modes

| Failure | Root Cause | Immediate Fix |
|---|---|---|
| Unsupported numeric claims | Model guesses numbers when no source is attached | Block uncited numbers at critic stage with no exceptions |
| Hallucinated feature parity | Retrieval blurs lines between SKUs | Apply SKU filters in retrieval and graph-derived constraints |
| Stale pricing | Indexes lag and surface old data | Use incremental ingestion and freshness checks for fields |
| Acronyms lost | Dense-only search skips jargon and IDs | Blend sparse retrieval and expand acronyms in preprocessing |
| Runaway costs | Uncapped planning with unmetered calls | Hard budget and call caps with early bailout to small models |
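
For the stale pricing row, a per-field freshness check can be as small as comparing the index timestamp against an age limit. The limits below (one day for pricing, thirty for specs) are placeholder assumptions, as is the rule that fields without a limit never go stale.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical per-field age limits; tune these to how often each field changes.
MAX_AGE = {
    "pricing": timedelta(days=1),
    "specs": timedelta(days=30),
}


def is_fresh(field, indexed_at, now=None):
    """True if the field was indexed recently enough to be retrieved."""
    now = now or datetime.now(timezone.utc)
    limit = MAX_AGE.get(field)
    if limit is None:
        return True  # assumption: fields without a limit are never stale
    return now - indexed_at <= limit


now = datetime(2025, 11, 12, tzinfo=timezone.utc)
print(is_fresh("pricing", now - timedelta(hours=6), now))
print(is_fresh("pricing", now - timedelta(days=3), now))
```

Facts that fail the check can be dropped from retrieval or sent back through ingestion before anything is generated from them.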

Benchmark What Matters, Not Just Leaderboards

Open benchmarks are fun, but shipping is not a contest. Build your own eval harness tuned to your risk tolerance, audience, and workflows.

    {
      "tasks": [
        {"name": "faq_update", "risk": "low"},
        {"name": "answer_card", "risk": "medium"},
        {"name": "product_comparison", "risk": "high"}
      ],
      "evals": {
        "validity": ["schema", "tone", "length"],
        "grounding": ["has_citation", "allowed_source"],
        "performance": ["conversion_rate_proxy", "readability"],
        "cost": ["retrieval_latency_ms", "tokens_used", "usd_cost"]
      },
      "thresholds": {
        "low": {"validity": 0.95, "grounding": 0.90},
        "medium": {"validity": 0.92, "grounding": 0.95},
        "high": {"validity": 0.98, "grounding": 0.98}
      }
    }

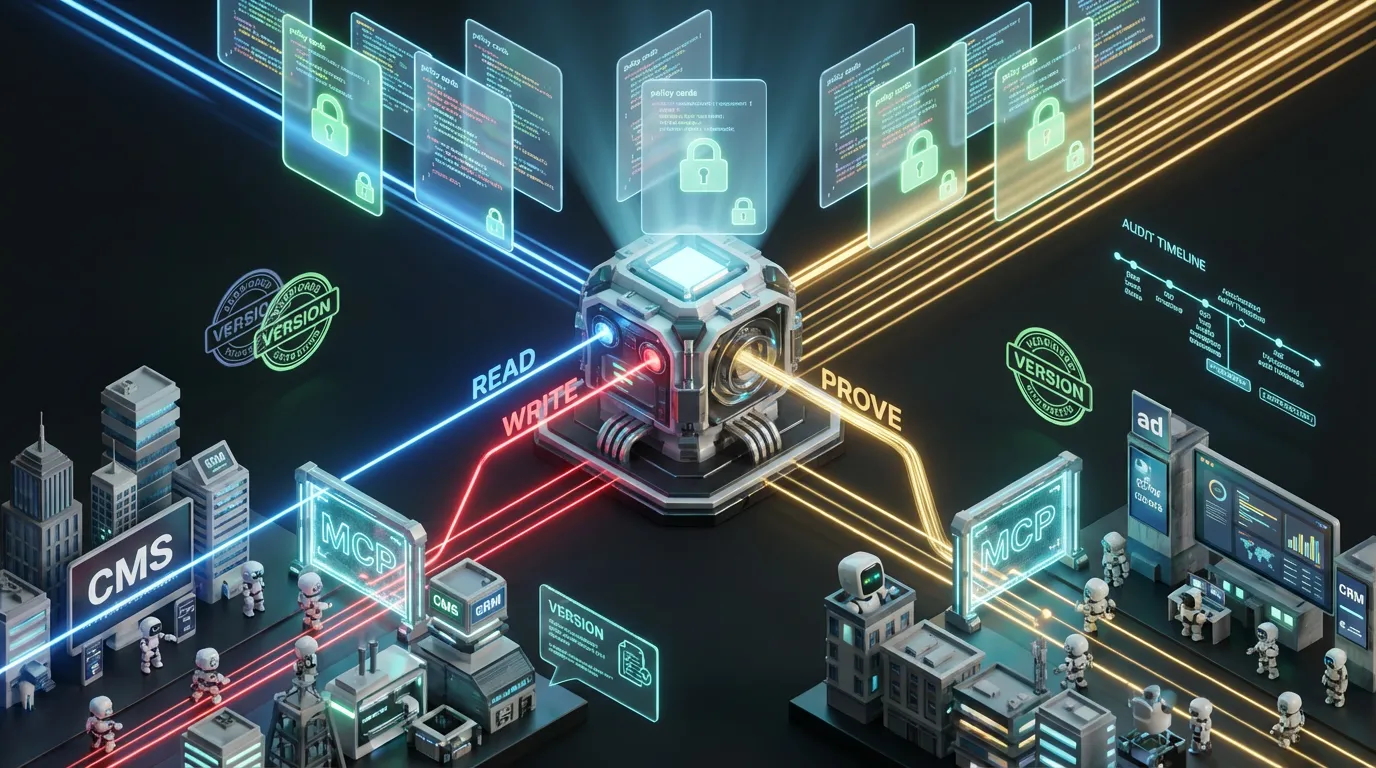
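
The per-risk thresholds in the harness config become useful once they gate a release. A minimal sketch, assuming aggregate scores per task have already been computed elsewhere; the `gate` function and its return shape are hypothetical.

```python
# Thresholds copied from the eval harness config.
THRESHOLDS = {
    "low": {"validity": 0.95, "grounding": 0.90},
    "medium": {"validity": 0.92, "grounding": 0.95},
    "high": {"validity": 0.98, "grounding": 0.98},
}


def gate(task_risk, scores, thresholds=THRESHOLDS):
    """Compare scores to the risk tier's minimums; a missing score counts as 0."""
    required = thresholds[task_risk]
    shortfalls = {
        metric: (scores.get(metric, 0.0), minimum)
        for metric, minimum in required.items()
        if scores.get(metric, 0.0) < minimum
    }
    return {"ship": not shortfalls, "shortfalls": shortfalls}


print(gate("low", {"validity": 0.96, "grounding": 0.91}))
print(gate("high", {"validity": 0.99, "grounding": 0.97}))
```

Returning the shortfalls alongside the verdict tells the team which metric blocked a high-risk task, not only that it was blocked.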

Governance That Actually Governs

- Risk tiers: Automatically ship low-risk assets and escalate anything novel, sensitive, or regulated.

- Provenance manifests: Every asset carries its receipts, including sources, retrieval stack, and constraints.

- Kill switches: One flag pauses or unpublishes risky content immediately.

No Hype Allowed: Reality Checks

- Graphs do not write for you: They enforce truth, not taste. You still need voice and intent.

- Agent flows need change management: Fully autonomous stacks drift over time. Human gates pay for themselves quickly compared with cleaning up later.

- Rerankers are not a trash filter: Bad data in still yields bad answers. Clean ingest and canonical truth trump clever ranking.

The Take: Build an Evidence Spine or Get Left Behind

Proof-first automation is table stakes. Assistant-native and programmatic surfaces reward verifiable answers and punish unsubstantiated fluff. Finance will notice runaway agents before you do. The stack that wins in 2025 treats retrieval as an evidence engine: graphs and triplets to encode your truth, hybrid search to fetch with recall, intent-driven rerankers for precision, and critics to lock in policy and cost. Build it and every workflow gets smarter. Your brand becomes proof-native. Your agents stop freelancing. Your team ships faster with receipts and zero regrets. If you want to see how this plays out in production systems, read our playbook on Agentic CMS as a modern operating layer.