Video Automation Goes Audio Native

December 3, 2025

Welcome to the internet, where feeds pulse with so much video you would need three hands to keep up, even on mute. This year, though, a real leap is hiding in the background noise: video automation is finally going audio native. Forget duct-taping subtitles onto yet another slideshow set to the same royalty-free track; your next automated stack can generate script, voice, music, SFX, dubs, and visuals as a single, coherent, governed entity. Here is why the audio-first approach, enabled by tools like ElevenLabs and OpenAI’s gpt-4o-mini-tts, is not just a flex but the new baseline for global reach, speed, and compliance.

Audio-Native Destroys Caption-First: Why It Matters Now

Let’s stop pretending text overlays are enough. When feeds on TikTok, YouTube, and Instagram were treated as mute by default, captions did most of the heavy lifting. Today, audio is a first-class citizen. Spoken sentiment, rhythm, and authenticity are not only what your audience expects; they are also signals that AI agents, translation tools, and even recommendation systems can analyze.

- Voice is your brand. Trust, tone, and rhythm cannot survive transcription alone.

- Multilingual actually means audio. Captions might pass for compliance, but they do not deliver an immersive, sticky global user experience. Quality dubs convert; bland text gets lost.

- Structured audio is automation’s best friend. If your pipelines treat voice tracks, dubs, and compliance statements as structured JSON objects rather than afterthoughts, you are set up for scale and cost control.
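
To make the structured-audio idea concrete, here is a minimal Python sketch of an audio asset manifest, assuming a home-grown schema. `Rights`, `AudioAsset`, `validate`, `to_manifest`, and every field name are hypothetical, not a standard or any vendor's format. Each voice track, dub, or disclosure becomes one validated record that serializes to JSON.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional

# Hypothetical schema: names are illustrative, not an industry standard.
@dataclass
class Rights:
    license_id: str                 # internal license reference
    voice_consent: bool             # True if the voice owner opted in
    expires: Optional[str] = None   # ISO 8601 date, or None if perpetual

@dataclass
class AudioAsset:
    asset_id: str
    kind: str           # "voice", "dub", "music", "sfx", or "disclosure"
    locale: str         # BCP 47 tag, e.g. "en-US" or "es-MX"
    uri: str
    disclosure: str     # AI-disclosure wording required for this locale
    rights: Rights
    tags: List[str] = field(default_factory=list)

ALLOWED_KINDS = {"voice", "dub", "music", "sfx", "disclosure"}
SPOKEN_KINDS = {"voice", "dub"}

def validate(asset: AudioAsset) -> List[str]:
    """Return a list of problems; an empty list means the asset passes."""
    problems = []
    if asset.kind not in ALLOWED_KINDS:
        problems.append(f"unknown kind: {asset.kind}")
    if not asset.locale:
        problems.append("missing locale")
    if asset.kind in SPOKEN_KINDS and not asset.disclosure.strip():
        problems.append("missing disclosure text")
    if asset.kind in SPOKEN_KINDS and not asset.rights.voice_consent:
        problems.append("voice asset without recorded consent")
    return problems

def to_manifest(assets: List[AudioAsset]) -> str:
    """Serialize assets into a JSON manifest, refusing invalid entries."""
    for a in assets:
        issues = validate(a)
        if issues:
            raise ValueError(f"{a.asset_id}: {', '.join(issues)}")
    # asdict() recurses into the nested Rights dataclass.
    return json.dumps({"assets": [asdict(a) for a in assets]}, indent=2)

# Example: one Spanish dub with its disclosure and consent recorded.
dub = AudioAsset(
    asset_id="promo-01-es",
    kind="dub",
    locale="es-MX",
    uri="s3://example-bucket/promo-01/es-MX/voice.wav",
    disclosure="Voz generada con IA.",
    rights=Rights(license_id="LIC-123", voice_consent=True),
)
print(to_manifest([dub]))
```

Refusing to serialize an invalid asset is a deliberate choice in this sketch: a missing disclosure or consent flag fails at build time instead of surfacing after a campaign goes live.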

If you are still layering voice over silent visuals and calling it “personalized video,” you are leaving international markets and brand integrity on the table.

The 2026 Audio-First Automation Stack

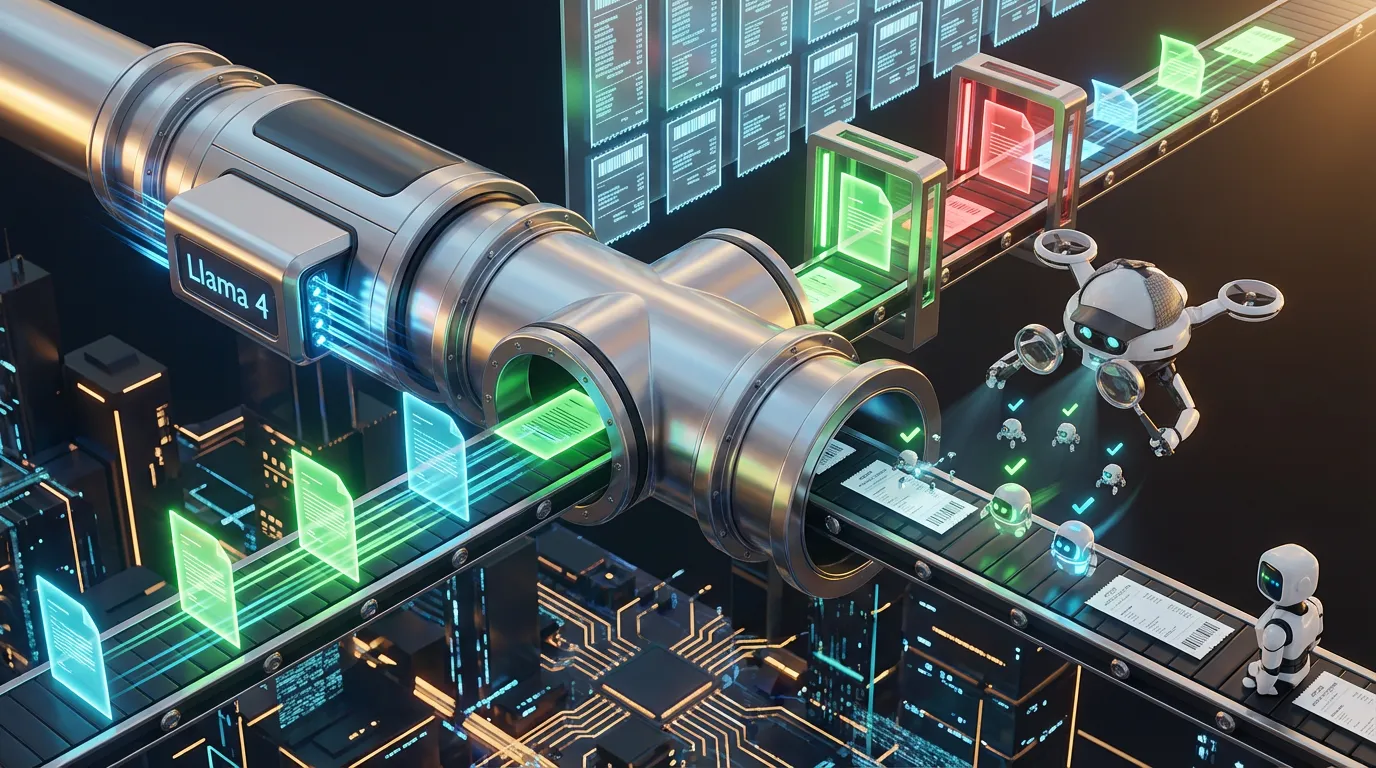

So, what is actually under the hood in a modern audio-driven pipeline? Here is the kit, built for speed and compliance rather than random sparkle.

- Advanced Speech Synthesis + Cloning: High-fidelity TTS and dubbing (for example, OpenAI gpt-4o-mini-tts and ElevenLabs Dubbing) that match brand style, plus opt-in voice cloning for continuity.

- Lip Sync, Meet GenAI: Phoneme-to-viseme (mouth shape) matching for realistic edits and dubs, with far less uncanny valley.

- Prosody Control: Dial up energy, tune pace, or lean into a formal register, auto-tagged and repeatable.

- Composer-Quality Music + SFX Gen: Tracks and effects generated with platform-ready loudness, mood, and licensing encoded up front.

- Noise + Room Tone: Layered-in realism for creator content; sterile AI voices get skipped.

- Dubbing, Multi-track, Locale-Ready: Export every audio asset as a structured object with locale, disclosure, and rights tagging built in. No more scrambling when your ad campaign pivots at 11 p.m.
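
Prosody presets are what make delivery "auto-tagged and repeatable." The following is a minimal Python sketch, under stated assumptions, that turns a hypothetical `ProsodyPreset` into request arguments for OpenAI's text-to-speech endpoint, which accepts a natural-language `instructions` field for gpt-4o-mini-tts. The preset type, the `speech_request` helper, the tag names, and the choice of the `coral` voice are illustrative; confirm parameter names against the current API reference before relying on them. No network call is made here.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

# Hypothetical preset type; field names and allowed values are illustrative.
@dataclass(frozen=True)
class ProsodyPreset:
    name: str
    energy: str    # e.g. "low", "medium", "high"
    pace: str      # e.g. "slow", "steady", "brisk"
    register: str  # e.g. "casual", "neutral", "formal"

    def instructions(self) -> str:
        """Render the preset as natural-language delivery instructions."""
        return (f"Speak with {self.energy} energy at a {self.pace} pace, "
                f"in a {self.register} register.")

def speech_request(text: str, preset: ProsodyPreset,
                   voice: str = "coral") -> Tuple[Dict[str, str], Dict[str, str]]:
    """Build TTS request arguments plus tags recording how the take was made.

    The request keys mirror the OpenAI speech parameters (model, voice,
    input, instructions); the tag names are hypothetical.
    """
    request = {
        "model": "gpt-4o-mini-tts",
        "voice": voice,
        "input": text,
        "instructions": preset.instructions(),
    }
    tags = {"prosody_preset": preset.name, "tts_model": request["model"], "voice": voice}
    return request, tags

LAUNCH_FORMAL = ProsodyPreset("launch-formal", energy="medium", pace="steady", register="formal")
request, tags = speech_request("Meet the new lineup.", LAUNCH_FORMAL)
print(request["instructions"])
# With the official `openai` Python SDK, the request could then be sent as:
#   client.audio.speech.create(**request)
```

Because the preset is frozen and the instructions string is derived from it, the same preset name always yields the same delivery prompt, and the returned tags can travel with the audio file into the manifest.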

The New Audio-Centric Pipeline Blueprint

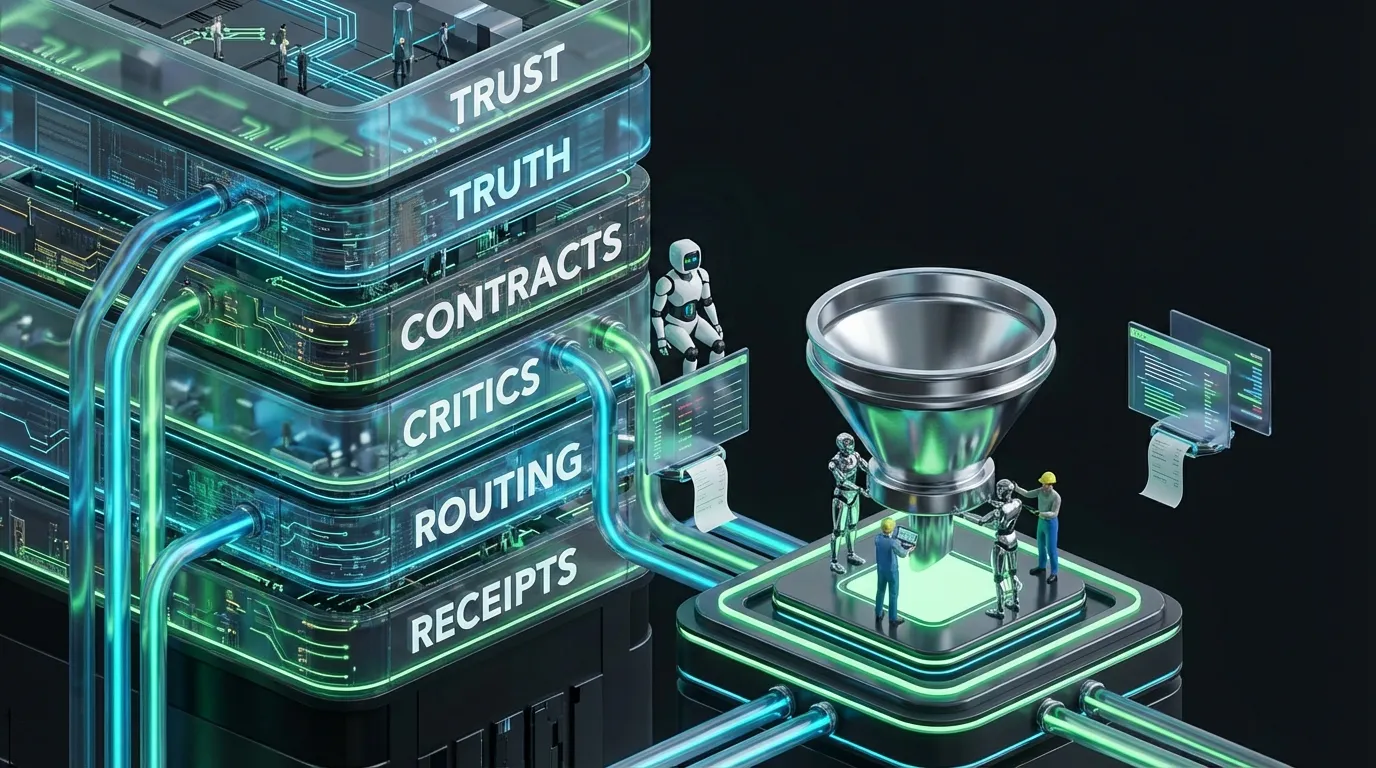

[Truth]

→ product specs • claims and sources • eligibility • design tokens • disclosure rules

[Plan]

→ script beats • shot list • audio intent (voice, mood, SFX) • locales

[Generate]

→ draft voice track • music stem • SFX • visual edits

[Align]

→ lip sync • beat-matching • lower thirds tied to audio moments

[Dub]

→ multi-language voices • captions • on-screen text reflow

[Critic]

→ claims • locale • disclosure • accessibility • audio loudness • cost

[Publish]

→ platform-ready package with stems and manifests

[Observe]

→ inclusion • watch-through • per-second defect escapes • unit cost
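
The [Critic] stage is the easiest part of this blueprint to sketch in code. The minimal Python example below assumes loudness and true-peak values were already measured by an external meter, and it checks only a subset of the critic list (loudness, disclosure, accessibility via captions, and cost); claims checking is left out. `RenderReport`, `critic`, the -14 LUFS target, the ±1 LU tolerance, and the -1 dBTP ceiling are illustrative assumptions, not platform specs. The loudness check relies on one simple fact: a gain of g dB shifts both integrated loudness and peak level by g dB, and corresponds to an amplitude multiplier of 10^(g/20).

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical summary handed to the critic by earlier stages.
# Loudness figures are assumed to come from an external meter
# (e.g. an ITU-R BS.1770-style measurement); nothing is measured here.
@dataclass
class RenderReport:
    locale: str
    integrated_lufs: float   # measured integrated loudness (LUFS)
    true_peak_dbtp: float    # measured true peak (dBTP)
    has_captions: bool
    disclosure_text: str
    cost_usd: float

# Illustrative thresholds only; check each platform's current delivery spec.
TARGET_LUFS = -14.0
LUFS_TOLERANCE = 1.0
MAX_TRUE_PEAK_DBTP = -1.0

def normalization_gain_db(measured_lufs: float, target_lufs: float = TARGET_LUFS) -> float:
    """Gain in dB that would move the measured loudness onto the target."""
    return target_lufs - measured_lufs

def db_to_amplitude(gain_db: float) -> float:
    """Convert a gain in dB to a linear amplitude multiplier."""
    return 10 ** (gain_db / 20)

def critic(report: RenderReport, required_disclosure: Dict[str, str],
           budget_usd: float) -> List[str]:
    """Return human-readable findings; an empty list means the render passes."""
    findings: List[str] = []

    gain = normalization_gain_db(report.integrated_lufs)
    if abs(gain) > LUFS_TOLERANCE:
        findings.append(f"loudness needs {gain:+.1f} dB gain to hit target")
    # A gain of g dB moves the peak by the same g dB.
    projected_peak = report.true_peak_dbtp + gain
    if projected_peak > MAX_TRUE_PEAK_DBTP:
        findings.append(f"true peak would reach {projected_peak:+.1f} dBTP after normalization")

    expected = required_disclosure.get(report.locale)
    if expected is None:
        findings.append(f"no disclosure rule for locale {report.locale}")
    elif expected not in report.disclosure_text:
        findings.append("required disclosure missing")

    if not report.has_captions:
        findings.append("captions missing")
    if report.cost_usd > budget_usd:
        findings.append(f"cost {report.cost_usd:.2f} USD exceeds budget {budget_usd:.2f} USD")
    return findings

# Example: a German dub that came out too quiet.
report = RenderReport(locale="de-DE", integrated_lufs=-17.5, true_peak_dbtp=-3.0,
                      has_captions=True, disclosure_text="KI-generierte Stimme.", cost_usd=0.42)
rules = {"de-DE": "KI-generierte Stimme"}
print(critic(report, rules, budget_usd=1.00))
```

For the sample report, the critic asks for +3.5 dB of gain and notes that applying it would push the true peak to +0.5 dBTP, so in this sketch the render would need a limiter pass before it reaches [Publish].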