AI Creative Testing Without Burning Your Brand

December 14, 2025

Creative automation in 2025 is now part of public meme culture. Chat-driven image features hit mainstream attention and sparked record usage, which shows how fast these tools shape perception. Consumer behavior moved right alongside the memes. Incredible models, yes. But the most visible fails are not about a hallucinating LLM. They come from missing governance and testing. If your content engine cannot distinguish edgy from off-putting, you are running a speedrun for reputation damage.

This is the central tension of automation-first marketing: we want adaptive content ops powered by cutting-edge platforms, but we also have budgets, brand, and taste to defend. There is no fix-all model or prompt. Success demands a layered approach: bandit experimentation for rapid learning, evaluator and simulator sandboxes for risk, and human-in-the-loop review for tricky taste calls. Let’s untangle this stack.

The New Spectrum of AI Creative Testing

| Approach | How It Works | Where It Shines | Where It Fails |
|---|---|---|---|
| Classic A/B or Multivariate | Fixed splits, traditional stat tests | Clear read on a handful of variants | Slow learning, expensive mistakes while you wait |
| Multi-Armed Bandits | Adaptive traffic allocation to high performers | Faster learning, less exposure to losers, ties up less capital | Sensitive to early noise, ignores brand risk unless coded in |
| Agentic Creative Loops | Models generate, test, and evolve content autonomously | Massive variant scale, always-on iteration | Cost spikes, drift into off-brand territory, high risk without robust guardrails |

Jumping from A/B to fully agentic is tempting, but beware: without policy and taste enforcement, you are rolling dice in a minefield. Choose hybrid layers that combine the best of all worlds. Learn like a bandit, but do not behave like one.

Why Bandits Win Inside Modern Marketing Ops

For a deeper dive on bandits inside creative operations, see “Creatives That Learn From Outcomes.”

- Less regret in live tests: Stop losing money letting clear losers limp along. Bandits reroute traffic toward winners quickly.

- Always-on experimentation: No waiting for the next test cycle. Bandits never sleep, so optimization happens in real time.

- Segment sensitivity: Sophisticated bandits (contextual/hierarchical) can test by region, persona, or channel without splintering your team into chaos.

- Measurable economics: Track decision costs and per-variant lift, not just CTR or last-campaign baselines.

But bandits chase the goals you set. If those goals do not account for taste or policy, you are optimizing yourself into a nasty surprise. More than clicks, please.
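To make the adaptive allocation concrete, here is a minimal sketch of Thompson sampling over click outcomes. It assumes a Beta(1, 1) prior per variant and binary click feedback; the function names, the click rates, and the simulation loop are illustrative assumptions, not the API of any particular platform.

```python
import random

def thompson_pick(stats, rng):
    """Pick one variant by sampling each arm's Beta posterior.

    stats maps variant id -> (clicks, non_clicks); a Beta(1, 1) prior is assumed.
    """
    draws = {
        variant: rng.betavariate(1 + clicks, 1 + misses)
        for variant, (clicks, misses) in stats.items()
    }
    return max(draws, key=draws.get)

def simulate(true_ctr, rounds, seed=0):
    """Serve `rounds` impressions against hypothetical true click rates."""
    rng = random.Random(seed)
    stats = {variant: (0, 0) for variant in true_ctr}
    served = {variant: 0 for variant in true_ctr}
    for _ in range(rounds):
        variant = thompson_pick(stats, rng)
        served[variant] += 1
        clicks, misses = stats[variant]
        if rng.random() < true_ctr[variant]:
            stats[variant] = (clicks + 1, misses)
        else:
            stats[variant] = (clicks, misses + 1)
    return served

# Made-up click rates purely for illustration.
served = simulate({"a": 0.02, "b": 0.05, "c": 0.20}, rounds=5000)
print(served)
```

Note that the reward here is clicks alone, which is exactly the trap described above: the sampler has no notion of taste or policy unless the reward or the eligibility rules carry it.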

Give Your Bandit a Conscience

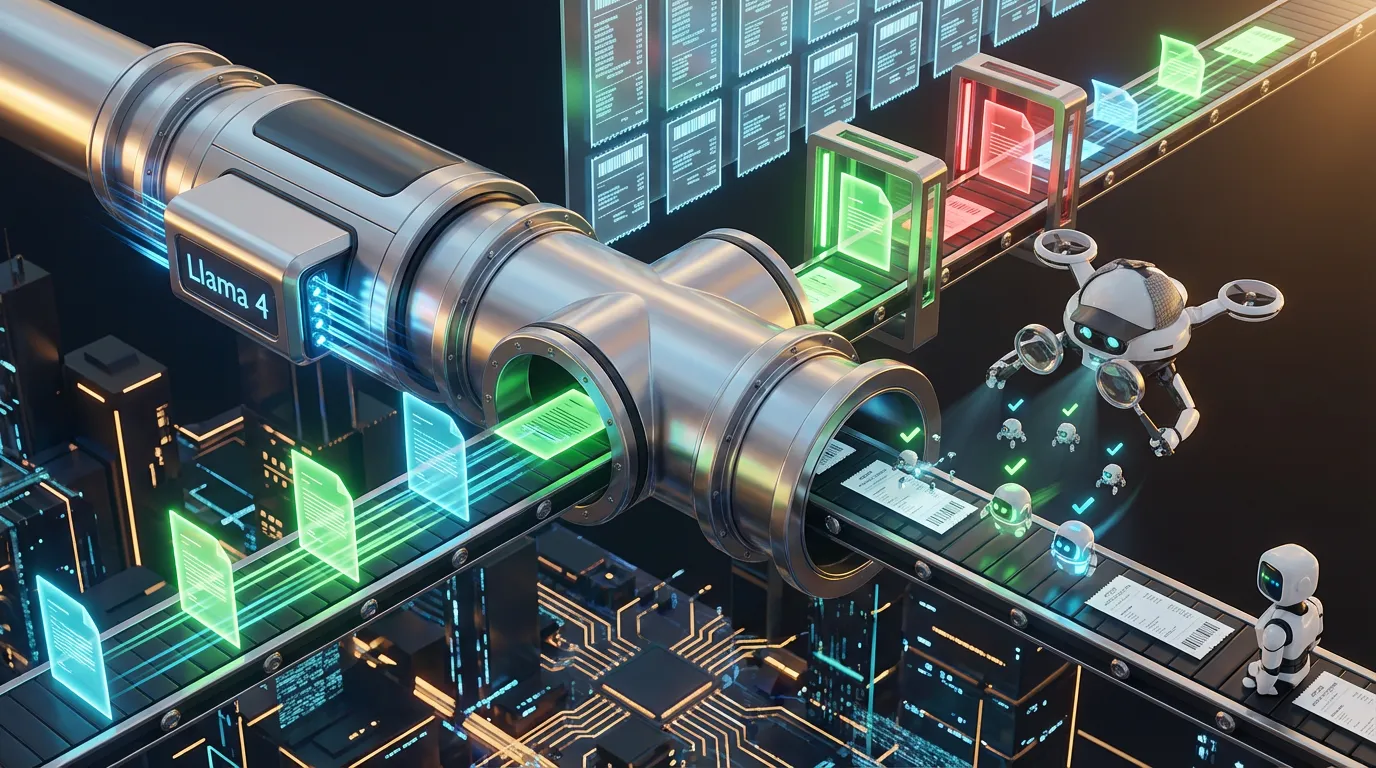

Wrap adaptive allocation with critic and simulator layers to block risk, penalize iffy creative, and spot violations before they reach paid traffic. This turns your bandit from a pure optimizer into a brand-safe automation engine.

    {
      "creative_test": {
        "objective": {
          "metric": "weighted_uplift",
          "weights": {"ctr": 0.5, "watch_time": 0.2, "positive_reacts": 0.2, "complaints": -0.5}
        },
        "bandit": {
          "strategy": "thompson_sampling",
          "exploration_floor": 0.05,
          "warmup_impressions": 2000
        },
        "guardrails": {
          "critics": ["schema", "claims", "rights", "locale", "visual_qc", "taste"],
          "simulator": {"personas": ["genz_fun", "parent_value", "b2b_exec"], "min_pred_score": 0.62},
          "block_on": ["policy_violation", "uncanny_faces", "taboo_map_hit"]
        },
        "budget": {"max_cost_per_variant_usd": 1.25, "retry_limit": 1, "frontier_calls": 1}
      }
    }

You are not telling the bandit to intuit taste. You are telling it that taste violations are disqualified before the race even starts.
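One way to read the config above is as two separate steps: an eligibility gate that runs before any traffic is allocated, and a weighted reward that the bandit learns from afterward. The sketch below assumes each variant arrives with a list of critic flags and a simulator score, and that metrics are already normalized to a 0 to 1 scale; those input shapes are assumptions for illustration.

```python
WEIGHTS = {"ctr": 0.5, "watch_time": 0.2, "positive_reacts": 0.2, "complaints": -0.5}
BLOCK_ON = {"policy_violation", "uncanny_faces", "taboo_map_hit"}
MIN_PRED_SCORE = 0.62

def is_eligible(variant):
    """Admit a variant only if it has no blocking flag and clears the simulator score."""
    if BLOCK_ON & set(variant["flags"]):
        return False
    return variant["pred_score"] >= MIN_PRED_SCORE

def weighted_uplift(metrics):
    """Combine normalized metrics into one reward; complaints subtract from it."""
    return sum(weight * metrics.get(name, 0.0) for name, weight in WEIGHTS.items())

# Hypothetical variants for illustration.
variants = [
    {"id": "v1", "flags": [], "pred_score": 0.71},
    {"id": "v2", "flags": ["uncanny_faces"], "pred_score": 0.90},
    {"id": "v3", "flags": [], "pred_score": 0.55},
]
eligible = [v["id"] for v in variants if is_eligible(v)]
print(eligible)
```

The high-scoring but flagged variant never reaches the bandit, so no amount of click performance can rescue it.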

Taste Packs Are Policy With an Opinion

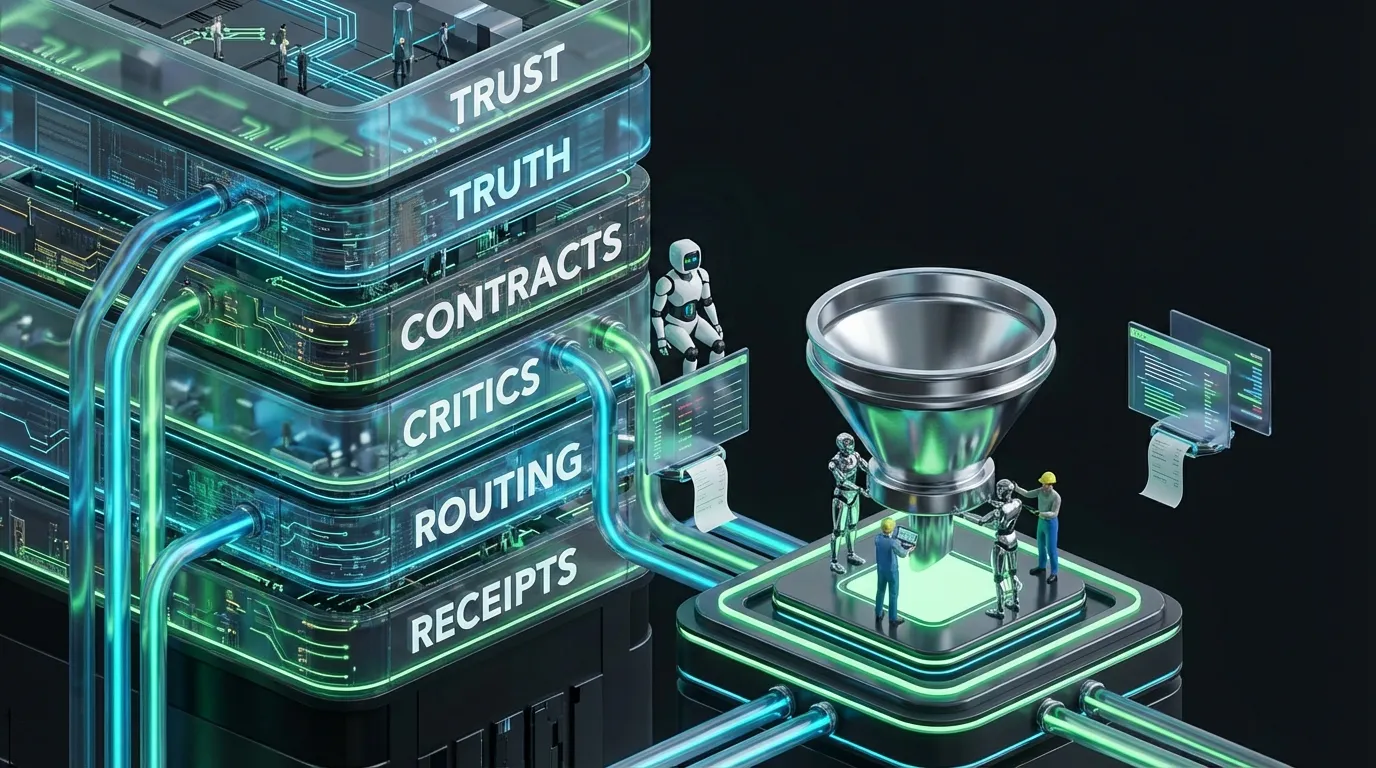

Critics enforce structure and claims. Taste packs encode your brand’s vibe, comedy, motifs, and unwritten taboos, alongside explicit cultural and visual no-go zones. These are not wishful thinking; they are policy, enforceable in code and model prompts.

    {
      "taste_pack": {
        "brand": "urban_playful",
        "tone": {"humor": "dry", "sarcasm": "light", "snark": "medium"},
        "forbid": ["holiday_cynicism", "suffering_as_punchline", "mangled_hands"],
        "taboos": {
          "nl_NL": ["weather_misery_glorification"],
          "ja_JP": ["disrespectful_casual_voice"],
          "en_US": ["mean_spirited_pranks"]
        },
        "visual_qc": {"faces": "realistic", "hands": "anatomic", "signage": "locale_spellcheck"}
      }
    }

Recent fiascos show the value: with a proper taste pack in place, a visually gorgeous but culturally tone-deaf campaign would likely be flagged before a penny is spent.
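A taste pack becomes enforceable once something checks assets against it. The sketch below assumes an upstream tagger (a model or a human reviewer, not shown here) has already labeled each asset with content tags that use the same vocabulary as the pack; the function name and tag format are hypothetical.

```python
TASTE_PACK = {
    "forbid": ["holiday_cynicism", "suffering_as_punchline", "mangled_hands"],
    "taboos": {
        "nl_NL": ["weather_misery_glorification"],
        "ja_JP": ["disrespectful_casual_voice"],
        "en_US": ["mean_spirited_pranks"],
    },
}

def taste_violations(tags, locale, pack=TASTE_PACK):
    """Return the global forbid rules and locale taboos that an asset's tags hit."""
    banned = set(pack["forbid"]) | set(pack["taboos"].get(locale, []))
    return sorted(banned & set(tags))

# The same asset can be fine in one locale and blocked in another.
tags = ["dry_humor", "mean_spirited_pranks"]
print(taste_violations(tags, "en_US"))
print(taste_violations(tags, "nl_NL"))
```

Keeping the check a plain set intersection makes the policy auditable: every block traces back to a named rule in the pack.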

Cost Control Is a Code Problem, Not a Pipe Dream

Agentic experiments eat tokens and retries for breakfast. Bake hard budgets into your routing. Otherwise, you are running an open tab for perpetual brainstorming.

    {
      "routing": {
        "tasks": {
          "copy_variants": {"prefer": ["llm-small"], "max_cost": 0.003},
          "image_qc": {"prefer": ["vision-critic"], "repair": true},
          "idiom_rewrite": {"prefer": ["llm-medium"], "escalate_on": ["low_confidence"]}
        },
        "rules": {"retry": 1, "escalate_on": ["schema_violation", "risk_high"]}
      },
      "budget": {
        "per_variant_usd": 1.25,
        "frontier_calls": 1,
        "daily_spend_usd": 1000,
        "cost_spike_pct": 10
      }
    }

Start lean. Force single repairs and rare escalations. If “genius” variants keep burning tokens, you have a bug, not a breakthrough.
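As a rough illustration of budgets living in code, the sketch below tracks spend, retries, and frontier-model calls for a single variant and refuses any call that would break a cap. The class and its method are hypothetical; the default limits simply mirror the per-variant numbers in the config above.

```python
from dataclasses import dataclass

@dataclass
class VariantBudget:
    """Per-variant spending guard (an illustrative sketch, not a real router API)."""
    max_cost_usd: float = 1.25
    retry_limit: int = 1
    frontier_limit: int = 1
    spent_usd: float = 0.0
    retries: int = 0
    frontier_calls: int = 0

    def allow(self, cost_usd, is_retry=False, is_frontier=False):
        """Record the call and return True if it fits every cap, else return False."""
        if self.spent_usd + cost_usd > self.max_cost_usd:
            return False
        if is_retry and self.retries >= self.retry_limit:
            return False
        if is_frontier and self.frontier_calls >= self.frontier_limit:
            return False
        self.spent_usd += cost_usd
        self.retries += int(is_retry)
        self.frontier_calls += int(is_frontier)
        return True

budget = VariantBudget()
print(budget.allow(0.003))                 # first small-model draft
print(budget.allow(0.5, is_retry=True))    # the single permitted repair
print(budget.allow(0.01, is_retry=True))   # a second repair is refused
```

A refused call is the signal to escalate or drop the variant rather than keep spending on it.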

Simulators: Crash Test Creative Before Your Audience Does

Bandits optimize live, but simulators give you a rehearsal. Persona-aware proxy audiences score creative on performance, cringe risk, and relatability before launch. Simulators also age, so calibrate them regularly or prepare for surprises.

    {
      "simulator": {
        "personas": ["student_bargain", "new_parent", "ops_manager"],
        "channels": ["short_video", "stories", "display"],
        "scores": ["clarity", "energy", "relatability", "cringe_risk"],
        "thresholds": {"relatability_min": 0.7, "cringe_risk_max": 0.25}
      }
    }

Simulators are not truth oracles. They are early warning systems. Only variants passing these checks are eligible for real traffic.
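The thresholds in the config translate into a small gate. This sketch assumes the simulator returns one score dictionary per persona and applies a strict policy where every persona must pass; both the output shape and the all-personas rule are assumptions you may want to relax.

```python
THRESHOLDS = {"relatability_min": 0.7, "cringe_risk_max": 0.25}

def failing_personas(persona_scores, thresholds=THRESHOLDS):
    """List personas whose predicted scores miss either threshold."""
    failed = []
    for persona, scores in persona_scores.items():
        too_distant = scores["relatability"] < thresholds["relatability_min"]
        too_cringe = scores["cringe_risk"] > thresholds["cringe_risk_max"]
        if too_distant or too_cringe:
            failed.append(persona)
    return failed

# Hypothetical simulator output for one variant.
scores = {
    "student_bargain": {"relatability": 0.80, "cringe_risk": 0.10},
    "new_parent": {"relatability": 0.65, "cringe_risk": 0.20},
    "ops_manager": {"relatability": 0.75, "cringe_risk": 0.30},
}
failed = failing_personas(scores)
print(failed, "eligible" if not failed else "held back")
```

Returning the failing personas, rather than a bare pass or fail, gives reviewers a starting point for the fix.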

Metrics That Matter When Everything’s Adaptive

- Regret: Opportunity cost from not surfacing the actual best variant.

- First pass validity: % of variants clearing all critics without manual fix.

- Cost per decision: Total spend divided by the number of decisive allocation shifts.

- Incremental lift: Performance improvement versus rolling baseline, not just last campaign’s best.

- Complaint rate: User reports per thousand impressions, segmented by locale.

- Defect escapes: Taste or policy fails that make it past checks per thousand assets.

If your dashboard only shows CTR, congratulations, you are training your system for virality at the cost of trust. Build negative signals into your code, not just your postmortems.
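The metrics above reduce to simple arithmetic once the inputs are logged. The helpers below are a sketch under stated assumptions: regret is computed against known conversion rates, which in practice you would replace with estimates such as posterior means, and all function names are illustrative.

```python
def regret(best_rate, served):
    """Expected conversions lost versus always serving the best variant.

    served is a list of (impressions, rate) pairs, one per variant.
    """
    return sum(impressions * (best_rate - rate) for impressions, rate in served)

def per_thousand(events, total):
    """Rate per thousand units, used for complaint rate and defect escapes."""
    return 1000.0 * events / total if total else 0.0

def first_pass_validity(passed_without_fix, total_variants):
    """Share of variants that cleared every critic with no manual fix."""
    return passed_without_fix / total_variants if total_variants else 0.0

def cost_per_decision(total_spend_usd, allocation_shifts):
    """Spend divided by the number of allocation shifts it paid for."""
    return total_spend_usd / allocation_shifts if allocation_shifts else float("inf")

# Made-up numbers for illustration.
print(regret(0.10, [(1000, 0.10), (500, 0.04)]))
print(per_thousand(12, 48000))
print(first_pass_validity(42, 60))
print(cost_per_decision(300.0, 12))
```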

Failure Modes and Fast Fixes

| Failure | Why It Happens | Fix |
|---|---|---|
| Early winner that tanks later | Over-exploitation of thin early data | Raise warmup, set exploration floor, automate rolling backchecks |
| Passes metrics but fails taste | No taste packs/taboo maps | Ship taste critics, enforce locale-specific blocking |
| Uncanny assets go live | Weak or missing vision QC | Enforce face/hand checks; locale signage spellcheck; automate repairs |
| Unexpected cost spikes | Unlimited retries and model cascades | Cap repairs; restrict “frontier” calls; route to small models first |
| Policy takedowns | Poor rights/disclosure enforcement | Automate policy as code, no publish without full tokens/disclosures |

Critics Should Be Boring and Relentless

Critics are checklists made actionable. They are not taste arbiters; they are structural and policy enforcers that free your creative teams to experiment without classic footguns.

    {
      "critics": {
        "structure": {"schema_id": "AdCardV7", "enforce": true},
        "claims": {"numeric_require_source": true, "allow_from": ["pricing", "lab", "ops"]},
        "rights": {"license_token": "required", "territory_lock": true, "expiry_required": true},
        "locale": {"currency": "auto", "date": "auto"},
        "accessibility": {"captions": true, "contrast_min": 4.5},
        "taste": {"pack_id": "urban_playful_v3"},
        "cost_guard": {"max_cost_per_asset_usd": 1.25, "retry_limit": 1}
      }
    }

Block risky variants before the bandit even sees them. Fix once automatically. If it fails again, escalate to a human.
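The "fix once, then escalate" rule can be sketched as a short loop. Here critics are modeled as plain functions that return True on a pass, and the repair step is a stand-in that only knows how to add captions; both are hypothetical simplifications of whatever your critics actually do.

```python
def failed_checks(asset, critics):
    """Names of the critics this asset does not pass."""
    return [name for name, check in critics.items() if not check(asset)]

def review(asset, critics, repair, retry_limit=1):
    """Run critics, allow up to retry_limit automatic repairs, then escalate."""
    failures = failed_checks(asset, critics)
    repairs = 0
    while failures and repairs < retry_limit:
        asset = repair(asset, failures)
        repairs += 1
        failures = failed_checks(asset, critics)
    status = "escalate_to_human" if failures else "eligible"
    return status, asset, failures

# Two toy critics: captions must exist and a license token must be attached.
CRITICS = {
    "captions": lambda asset: bool(asset.get("captions")),
    "license_token": lambda asset: bool(asset.get("license_token")),
}

def add_captions(asset, failures):
    """Toy repair: can generate captions, cannot invent a license."""
    fixed = dict(asset)
    if "captions" in failures:
        fixed["captions"] = "auto-generated"
    return fixed

print(review({"license_token": "tok-1"}, CRITICS, add_captions)[0])
print(review({"captions": "Hello"}, CRITICS, add_captions)[0])
```

The missing caption is repaired automatically, while the missing license goes to a human, because no automatic repair should be able to conjure rights.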

From Creative Chaos to Creative Supply Chain

Automation-first marketing requires every asset to have structure, sources for claims, rights tokens, and disclosures embedded. Assets missing receipts simply do not get shipped. Content with provenance is now preferred by platforms and algorithms. Testing is essential, but eligibility comes first.

Team Playbooks at Three Scales

For Solo Creators and Microbrands

- Start with just two formats (for example, short video and AdCard) with strict schemas.

- Draft 5–10 variants per cycle via the smallest model possible. Block publishing until full critic pass.

- Employ a bandit algorithm with a hard-coded exploration floor. Update your simulator monthly.

- Set low per-variant cost caps and allow only a single auto-repair.

- Require human review for flagship content.

For Mid-Sized Marketing Teams

- Run contextual bandits by audience segment and locale.

- Centralize taste packs and taboo enforcement. Rights and disclosures are non-negotiable.

- Automate vision QC (face, hands, signage) for all visual content.

- Route new variants through smaller models. Only winners get a premium polish.

- Score regret, complaints, first-pass validity, and cost per decision weekly.

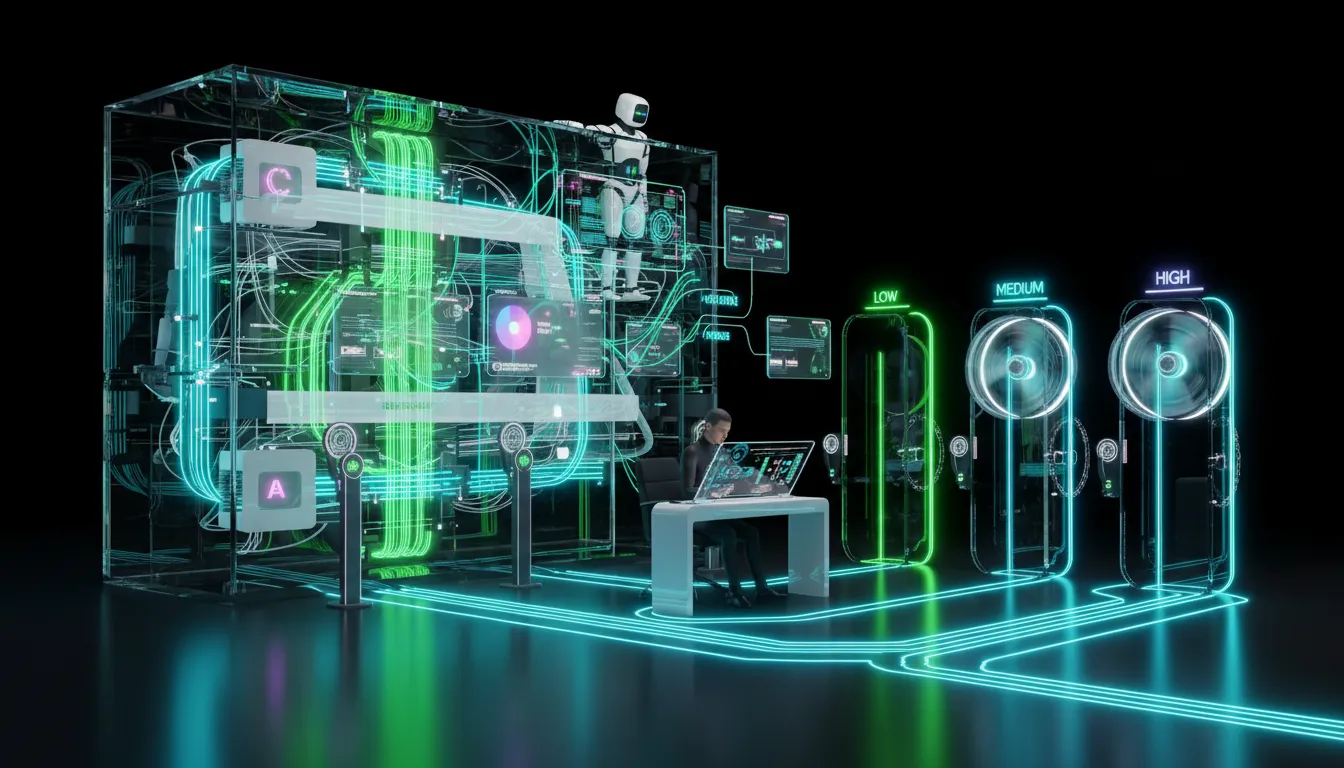

For Enterprise and Regulated Organizations

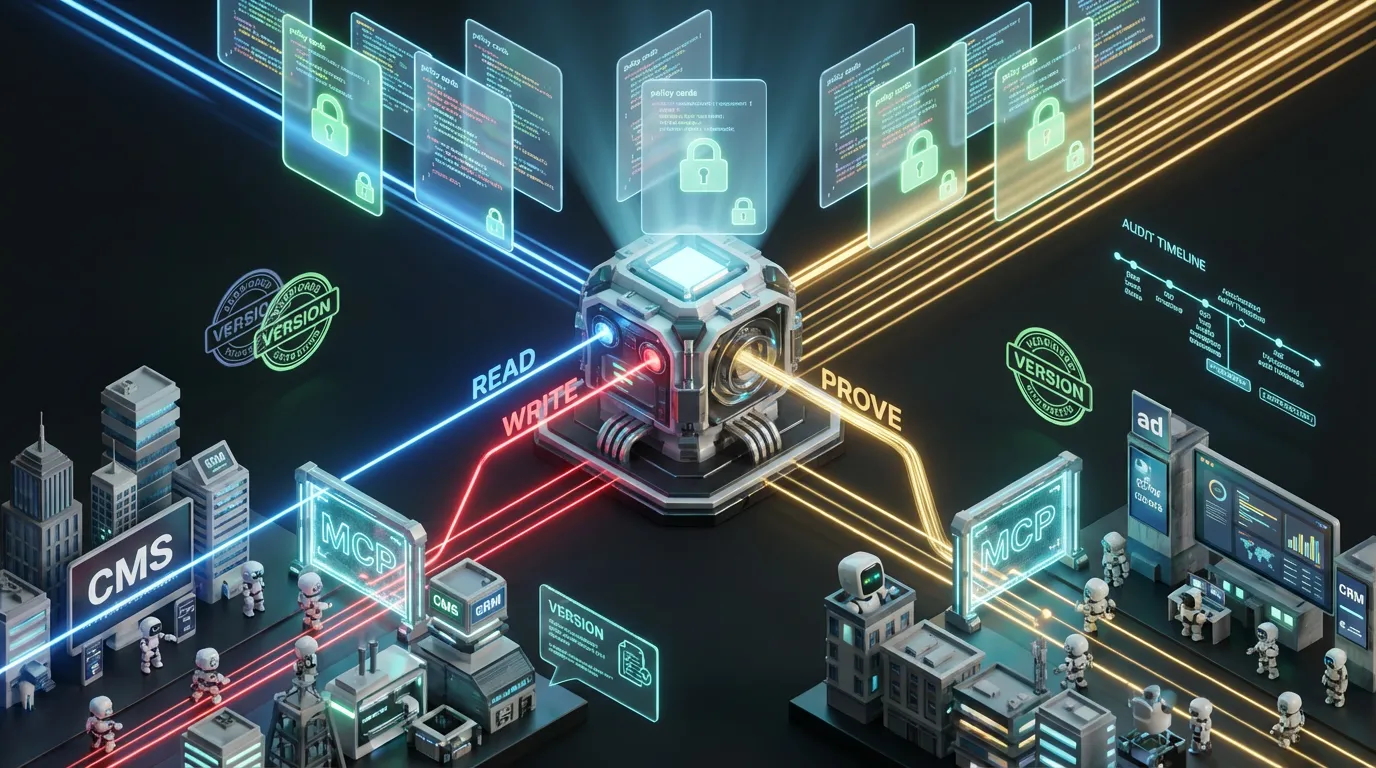

- Version every schema, critic, taste pack, and model. Enforce monthly audits.

- Segregate policies per region with territory locks and emergency kill switches.

- Risk tiering: low-risk edits autopublish; sensitive claims require explicit human approval.

- Every asset gets a provenance receipt. If channels strip metadata, reattach automatically.

- Enforce strict per-campaign and per-asset budget caps. Autonomous tools get zero blank checks.
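Risk tiering and kill switches from the list above can be expressed as a small routing function. The set of sensitive field names below is an assumption for illustration; a real deployment would derive it from its own policy definitions.

```python
# Assumed categories of change that always need a person to sign off.
SENSITIVE_FIELDS = {"claim", "pricing", "rights", "disclosure"}

def publish_route(changed_fields, region_kill_switch=False):
    """Decide how an edited asset may proceed to publication."""
    if region_kill_switch:
        return "blocked"
    if SENSITIVE_FIELDS & set(changed_fields):
        return "human_approval"
    return "autopublish"

print(publish_route(["headline_typo"]))
print(publish_route(["headline_typo", "pricing"]))
print(publish_route(["headline_typo"], region_kill_switch=True))
```

The kill switch is checked first so that it overrides every other rule, including low-risk autopublishing.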

No Code and Low Code Glue That Actually Delivers

- Smart routers and webhooks: Choreograph critics, simulators, and bandits across platforms with minimal custom code.

- Schema validators: Detect and reject drift as soon as assets are saved, not when complaints land.

- Event-driven caches: Ensure claims and pricing are current, auto-busting outdated data.

- Manifests in your DAM: Store rights, disclosures, claims, and provenance alongside every asset.

Scheduling and Drift Control

Reality check: creative performance shifts with trends, policy, and even meme cycles. Bake these controls into your testing stack:

- Warmup windows: Give new variants time before an early fluke locks in as the default.

- Exploration floors: Prevent premature winner-take-all starvation of alternatives.

- Dark periods: Pause high-risk automation during sensitive news cycles or cultural events.

- Retrospective recalibration: Remeasure and update simulator cutoffs as real-world engagement shifts.
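Three of these controls fit in a few lines of allocation logic. The sketch assumes the bandit already produces raw traffic shares that sum to one; it splits traffic evenly while any variant is still warming up, guarantees each variant the exploration floor afterward, and checks a list of dark-period date windows. The even-split warmup rule and the example dates are assumptions.

```python
from datetime import date

def allocation_shares(raw_shares, impressions, floor=0.05, warmup=2000):
    """Apply a warmup window and an exploration floor to raw bandit shares."""
    names = list(raw_shares)
    total = sum(raw_shares.values())
    # During warmup (or with no signal at all), split traffic evenly.
    if total <= 0 or any(impressions[name] < warmup for name in names):
        return {name: 1.0 / len(names) for name in names}
    if floor * len(names) > 1.0:
        raise ValueError("floor is too high for this many variants")
    # Reserve the floor for every variant, then share out the rest.
    spare = 1.0 - floor * len(names)
    return {name: floor + spare * raw_shares[name] / total for name in names}

def in_dark_period(today, windows):
    """True if today falls inside any (start, end) pause window, inclusive."""
    return any(start <= today <= end for start, end in windows)

shares = allocation_shares(
    {"a": 0.9, "b": 0.1, "c": 0.0},
    {"a": 5000, "b": 3000, "c": 2500},
)
print(shares)
print(in_dark_period(date(2025, 12, 25), [(date(2025, 12, 24), date(2025, 12, 26))]))
```

Adding the floor before distributing the remainder keeps every share at or above the floor, which simple clamp-and-renormalize approaches do not guarantee.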

The Pulled Ad Was a Systems Failure, Not a Scandal

That doomed ad did not need to be a PR punchline. A taste pack would have blocked it before campaign launch. A simulator would have flagged cringe potential. Rights and policy critics would have cleaned up any legal time bombs. And the bandit would have only learned from content that passed these checks. When you ship the stack, not just the vibe, you learn quickly and safely.

Your 30-Day Automation Rollout Plan

Week 1: Inventory and Schemas

- Choose two supported formats (for example, short video and AdCard). Draft strict schemas with no optional or “maybe” fields for claims or rights.

- Draft your taste pack: three forbid rules plus two locale taboos.

Week 2: Critics and Simulator

- Ship critics for structure, claims, rights, locale, accessibility, cost, and taste.

- Deploy a basic simulator with three personas and a cringe threshold.

Week 3: Bandit in Shadow Mode

- Run your bandit on internal or holdout traffic. Log regret, validity, and cost for each decision.

- Set retries to one and record every failed check with a suggested fix.

Week 4: Canary Expansion

- Ship a canary set to production with a fast rollback option. Watch complaints and defect rates closely.

- Tune thresholds and scale to a second channel or region.
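For the Week 4 canary, a fast rollback needs a trigger that is decided in advance. The sketch below compares the canary's complaint rate with a baseline; the 1.5x tolerance and the 1,000-impression minimum are placeholder assumptions to tune, not recommended values.

```python
def complaints_per_thousand(stats):
    """Complaint rate per thousand impressions for one traffic group."""
    return 1000.0 * stats["complaints"] / stats["impressions"]

def canary_decision(canary, baseline, max_ratio=1.5, min_impressions=1000):
    """Return 'hold', 'rollback', or 'expand' for a canary release."""
    if canary["impressions"] < min_impressions:
        return "hold"  # too little data to judge either way
    limit = complaints_per_thousand(baseline) * max_ratio
    if complaints_per_thousand(canary) > limit:
        return "rollback"
    return "expand"

baseline = {"impressions": 100000, "complaints": 20}
print(canary_decision({"impressions": 500, "complaints": 0}, baseline))
print(canary_decision({"impressions": 5000, "complaints": 3}, baseline))
print(canary_decision({"impressions": 5000, "complaints": 1}, baseline))
```

With a baseline of zero complaints, any canary complaint triggers a rollback under this rule, which is deliberately strict.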

The COEY Take

Creative iteration is not a battle of A/B versus anarchy. The winning stack is proudly boring, hybrid, disciplined, and automation-first. Bandits facilitate rapid learning, critics enforce structure, simulators block risky work, and humans make conscious calls at the edge. Your costs stay capped. Your output stays eligible. Your brand stays trusted. For how to stand up the underlying operations, see “Prompt Engineering Is Dead. Long Live AI Ops!” Architect your content supply chain with rights, structure, and receipts, then wire your guardrails in code. Only then can adaptive testing unlock the promise of automation while safeguarding the future of your brand, not just this quarter’s campaign.