GLM-4.7: Open Model Built for Agent Workflows

December 23, 2025

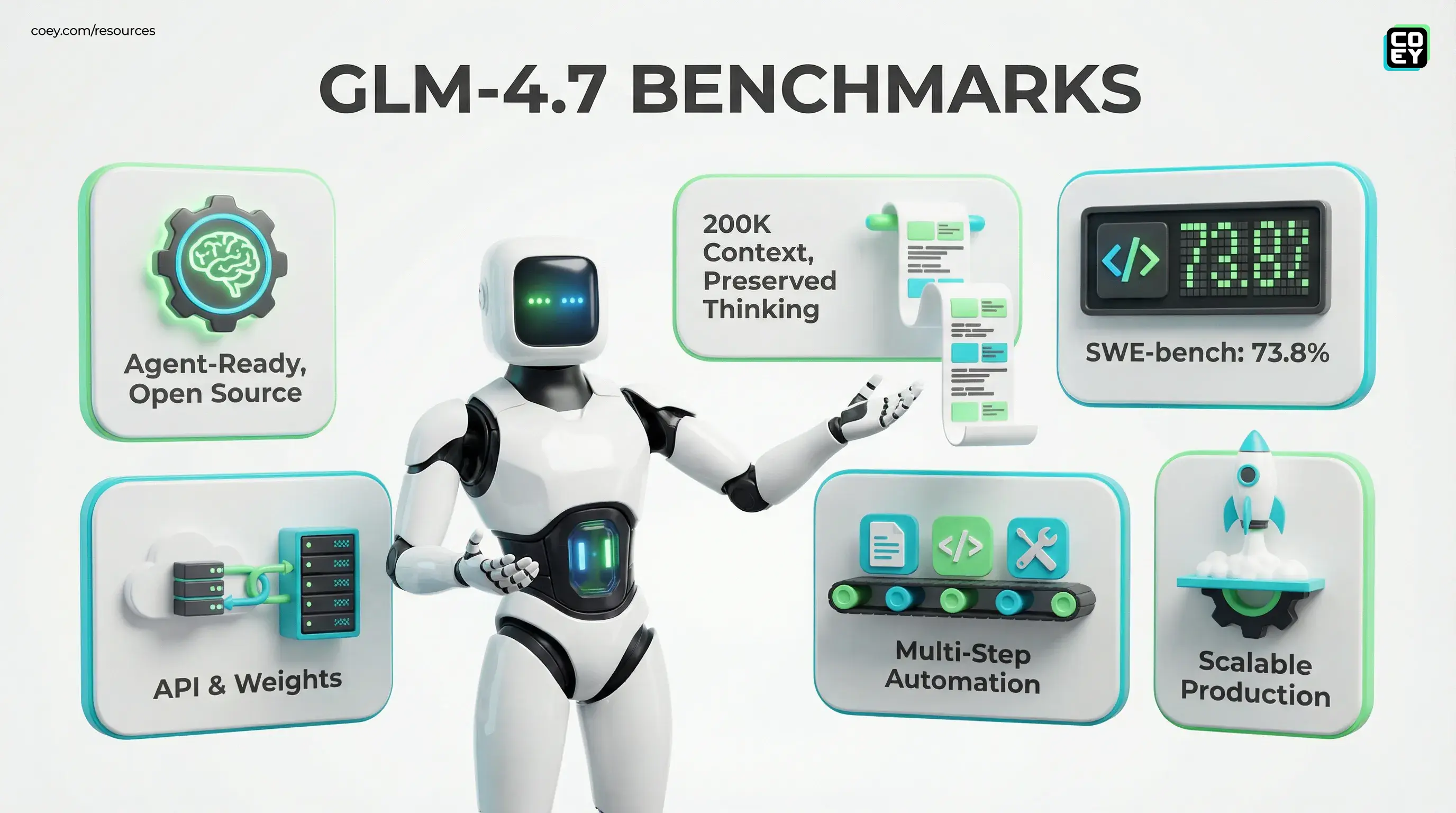

Zhipu AI, now branded as Z.ai, has released GLM-4.7 as an open-source, agent-ready LLM aimed at teams that want frontier-level performance without locking into a closed ecosystem. The launch matters because it is not positioned as just a better chat model. It is positioned for multi-step automation: coding-heavy workflows, long-context tasks, and tool-using agents that need to keep state without drifting halfway through the job.

At the center of the drop is Z.ai’s “preserved thinking” framing, basically “the model stays on task across long chains.” Whether that holds up in your stack depends on your evals, your prompts, and your guardrails. But the bigger point is this: open models are no longer just “pretty good for free.” They are increasingly production candidates for creators, marketers, and operators who want to scale output with more control over cost, privacy, and integrations.

Primary access points include the Zhipu chat experience (https://chat.zhipu.cn), Z.ai’s developer platform (https://open.bigmodel.cn/), and downloadable weights on Hugging Face (https://huggingface.co/zai-org/GLM-4.7-FP8).

What shipped (not just vibes)

GLM-4.7 lands as a serious open-source release with signals that Z.ai wants it used in real systems, not just demo threads. Reported highlights include:

- Agent orientation: improved task planning and tool-calling behaviors aimed at multi-step workflows.

- Long-context support: GLM-4.7 is documented with a 200K token context window; practical throughput and stability still depend on your serving stack. See the GLM-4.7 docs here: https://docs.z.ai/guides/llm/glm-4.7.

- Coding focus: Z.ai positions GLM-4.7 heavily around software and automation, including a reported 73.8% on SWE-bench Verified, a figure that comes from launch coverage and community benchmark tracking.

- Open distribution: weights are published for local or VPC deployment, not just a hosted chat sandbox. The Hugging Face release lists an MIT license.

Translation: This is not “another chatbot model.” It is an attempt to make open-source competitive for the agent era where the model is not the product, it is the engine inside your workflows.
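To make the 200K figure concrete, here is a rough budgeting sketch for deciding whether a set of documents plausibly fits in one request. The 4-characters-per-token ratio and the 8,000-token output reserve are assumptions for illustration, not properties of GLM-4.7's tokenizer; use the real tokenizer for anything that matters.

```python
CONTEXT_WINDOW_TOKENS = 200_000  # documented window from the GLM-4.7 docs
CHARS_PER_TOKEN = 4  # rough heuristic (assumption), not the model's tokenizer


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], reserve_for_output: int = 8_000) -> bool:
    """Check whether the combined documents still leave room for the reply."""
    used = sum(estimate_tokens(doc) for doc in documents)
    return used + reserve_for_output <= CONTEXT_WINDOW_TOKENS
```

A check like this only tells you whether a request is likely to be accepted. It says nothing about latency or quality at that length, which still depend on your serving stack.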

Why “preserved thinking” matters

Agentic workflows break for boring reasons: context drift, instruction loss, inconsistent state, and the classic “it sounded confident and then wrecked your repo.” Z.ai’s “preserved thinking” is essentially a reliability pitch: fewer mid-chain failures when the model is used across:

- multi-step content assembly (brief → outline → draft → rewrite → format → schedule)

- automation chains (analyze metrics → propose actions → generate assets → push to tools)

- coding loops (read codebase → plan changes → implement → test → summarize PR)

For marketing and media teams, the difference is operational: an agent that stays coherent can be trusted with bigger chunks of the pipeline. Not “publish without review,” but “produce 80% of the work without you re-explaining the brief every step.”

Automation lens: can you actually use it in a stack?

Yes, and more readily than with many “model launches,” because GLM-4.7 is available both as a hosted service and as downloadable weights. That means you can choose between convenience and control.

Automation readiness (today vs. soon)

| Capability | What you can do today | What still blocks full automation |
|---|---|---|
| Model access (hosted) | Call Z.ai via API on the Open Platform for prompt-to-response workflows | Vendor quotas and pricing, regional availability, and enterprise governance needs |
| Model access (self-host) | Run weights from Hugging Face in your own infra for private content plus predictable unit economics | GPU capacity, inference ops maturity, monitoring, and security hardening |
| Agent workflows | Use GLM-4.7 as the brain in orchestration tools (n8n, Make, custom) via HTTP calls | You still need tools, memory, evals, and guardrails. Agents fail without a control plane |

If your org is already wiring APIs together, this is immediately useful. If your org still treats “automation” as “someone copy-pastes from a chatbot,” you will still get speed, but you will not get compounding scale.
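For teams in the first group, the "HTTP calls" row in the table can be sketched roughly as follows. The endpoint URL, the `glm-4.7` model identifier, and the OpenAI-style request and response shapes are assumptions for illustration; confirm the actual values in Z.ai's platform docs before relying on them.

```python
import json
import urllib.request

# Assumed values for illustration only; confirm against the platform docs.
API_URL = "https://api.z.ai/api/paas/v4/chat/completions"
MODEL_NAME = "glm-4.7"


def build_chat_request(prompt: str, system: str = "You are a concise assistant.") -> dict:
    """Build an OpenAI-style chat payload (assumed request shape)."""
    return {
        "model": MODEL_NAME,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": prompt},
        ],
    }


def send_chat_request(payload: dict, api_key: str, timeout: float = 60.0) -> str:
    """POST the payload and return the first choice's text (assumed response shape)."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["choices"][0]["message"]["content"]
```

An orchestration tool such as n8n or Make would do the equivalent of `send_chat_request(build_chat_request("Summarize this week's campaign results."), api_key)` in an HTTP node. Keeping payload construction separate from sending makes the prompt logic testable without network access.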

API availability (and what it means in plain English)

Z.ai’s Open Platform is the key detail for non-technical leaders: it is the difference between “a tool your team uses” and “a capability your systems can call.” With an API, you can automate:

- Content operations: generate variants, reformat for channels, produce structured metadata, run internal QA checks

- Growth workflows: write scripts that pull campaign stats, summarize learnings, generate new creative angles, and hand off to schedulers

- Support and success automation: summarize tickets, draft replies, escalate issues with full context preserved

- Developer productivity: generate utility scripts, refactor glue code, document repos, assist with CI/CD notes

And if you do not want to send sensitive drafts to any vendor endpoint, weights give you the “bring the model to your data” option. That is not just a privacy flex. It unlocks deeper automation because you can connect the model directly to internal systems without playing compliance ping-pong.

Coding reliability is marketing infrastructure now

It is easy to file “coding benchmarks” under developer-only concerns, but in 2025, marketing runs on code. Every serious content engine eventually becomes a messy stack of:

- webhooks

- ETL jobs

- creative QA scripts

- reporting pipelines

- campaign and asset routers

So when GLM-4.7 pushes coding performance, the downstream impact is straightforward: more of the marketing machine becomes automatable. Not because the model writes Super Bowl ads. Because it can more reliably generate and maintain the boring plumbing that makes scaling creativity possible.

If your creative automation cannot ship clean JSON, handle edge cases, and stop hallucinating business logic, it is not “creative tech.” It is just expensive improv.
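One way to enforce the "clean JSON" bar is to validate every model response before anything downstream touches it. The sketch below assumes a hypothetical asset schema (`headline`, `body`, `channel`, `tags`) and a hypothetical channel allowlist; substitute whatever your CMS or scheduler actually expects.

```python
import json

# Hypothetical schema for illustration: field name -> required Python type.
REQUIRED_FIELDS = {"headline": str, "body": str, "channel": str, "tags": list}
ALLOWED_CHANNELS = {"email", "blog", "social"}


def parse_asset(raw: str) -> dict:
    """Parse model output and raise ValueError if it does not match the schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            raise ValueError(f"missing field: {field}")
        if not isinstance(data[field], expected_type):
            raise ValueError(f"wrong type for field: {field}")
    if data["channel"] not in ALLOWED_CHANNELS:
        # Reject invented values instead of passing them to the scheduler.
        raise ValueError(f"unknown channel: {data['channel']}")
    return data
```

Failing loudly here is a deliberate choice: a rejected response can be retried or routed to a human, while a silently accepted one turns into a broken campaign.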

Multi-format impact: text, photo, video, audio

GLM-4.7 is a text language model, but language is the control layer for everything else. In practice, it becomes a multi-format orchestrator when paired with the right generators and editors.

Text

The obvious win: briefs, scripts, blog drafts, email sequences, ad variants, product descriptions, localization passes. The less obvious win: structured outputs that your CMS, DAM, or scheduler can ingest automatically.

Photo and design

GLM-4.7 will not generate images by itself, but it can:

- write prompt specs for your image model

- generate alt text and accessibility metadata

- create shot lists and layout directions for designers

Video

This is where preserved thinking matters: long scripts, episodic consistency, repurposing workflows. Example chain: webinar transcript → structured chapter map → 12 short-form scripts → titles and descriptions → publishing checklist.
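A chain like this can be sketched as a simple loop that hands every step the transcript plus all earlier outputs, so the brief never has to be re-explained. The step names, the instructions, and the `llm` callable are hypothetical stand-ins; `llm` would wrap whatever model client you actually use.

```python
from typing import Callable

# Each step is (name, instruction). Names and wording are illustrative.
STEPS = [
    ("chapters", "Turn the transcript into a structured chapter map."),
    ("scripts", "Write 12 short-form video scripts from the chapter map."),
    ("titles", "Write a title and description for each script."),
    ("checklist", "Produce a publishing checklist for the package."),
]


def run_chain(transcript: str, llm: Callable[[str], str]) -> dict:
    """Run the steps in order, passing all prior outputs to each step."""
    state = {"transcript": transcript}
    for name, instruction in STEPS:
        # Carry the full state forward so later steps see earlier decisions.
        context = "\n\n".join(f"[{key}]\n{value}" for key, value in state.items())
        state[name] = llm(f"{instruction}\n\n{context}")
    return state
```

Passing the whole state forward is the simplest design and leans on the long context window. A production version would likely trim or summarize earlier outputs and add a review gate between steps.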

Audio

Audio pipelines still usually require separate TTS and voice tooling, but GLM-4.7 can automate the language layer: VO scripts, segment intros, ad reads, multilingual adaptations, and compliance-safe disclaimers.

What’s real now vs. what’s still hype

Real now

- Using GLM-4.7 via API to automate repeatable content tasks

- Running weights privately for internal drafting, QA, and routing

- Agent-like workflows that plan and call tools, if you provide the orchestration and checks

Still not magic

- Autopilot marketing without governance (you will scale mistakes faster than output)

- Hands-free compliance (you still need policy gates and receipts)

- Plug-and-play agents without integration work (the model is not the system)
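To illustrate "the model is not the system," here is a minimal sketch of the control logic an agent loop needs around the model: a tool allowlist and a hard step cap. The `decide` callable and the action format (`{"tool": ..., "args": ...}` or `{"final": ...}`) are hypothetical conventions for this example, not GLM-4.7's tool-calling format.

```python
from typing import Callable


def run_agent(
    task: str,
    decide: Callable[[list], dict],
    tools: dict,
    max_steps: int = 5,
) -> dict:
    """Ask the model for actions, run only allowlisted tools, stop at a step cap."""
    history = [{"role": "task", "content": task}]
    for _ in range(max_steps):
        action = decide(history)
        if "final" in action:
            return {"status": "done", "answer": action["final"], "history": history}
        name = action.get("tool")
        if name not in tools:
            # Refuse unknown tools rather than guessing what the model meant.
            return {"status": "blocked", "answer": None, "history": history}
        result = tools[name](**action.get("args", {}))
        history.append({"role": "tool", "name": name, "content": result})
    return {"status": "step_limit", "answer": None, "history": history}
```

The returned `history` doubles as a receipt of what the agent did, which is the kind of record a policy gate or human reviewer can inspect.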

Practical impact for brands and builders

For executives: GLM-4.7 is another sign the open-source tier is becoming strategically viable for automation-heavy orgs, especially when cost, privacy, or vendor lock-in is a real constraint.

For marketing leaders: this increases the odds you can build, or buy, a creative engine that runs continuously: ingest inputs, generate variants, QA them, route approvals, and publish, without paying premium per-token pricing forever.

For creators and media operators: open weights plus agent orientation is basically the recipe for building your own studio OS. Not a toy assistant. A pipeline that turns one idea into a cross-format package at scale.

If you want the previous milestone in this line, see our earlier coverage of Zhipu’s 200K context release: https://coey.com/resources/blog/2025/10/13/glm%e2%80%914-6-open-weights-200k-context-real-automation/.

GLM-4.7 is not the finish line. But it is a meaningful step toward the thing that actually scales creativity: intelligent machine collaboration that can be automated, integrated, and controlled, without the whole workflow living behind someone else’s UI.