Guardrails vs Agents in Content Automation


January 8, 2026

The hard truth about your new AI “colleagues”

Every modern marketing stack is an overgrown garden of half-baked helpers. Your CMS powered by GPT-5 begs to rewrite your landing pages. Your CRM auto-condenses deal notes into sales haikus. Your ad platform drafts DALL-E-powered creative. Even your browser is auditioning for a podcast hosting gig, reading you posts in a strangely philosophical monotone.

And yet, under all this glittering “automation,” most teams are doing the single dumbest thing possible: letting humans become traffic cops. They’re copying, pasting, reformatting, triple-checking, and praying nothing mortifying sees daylight.

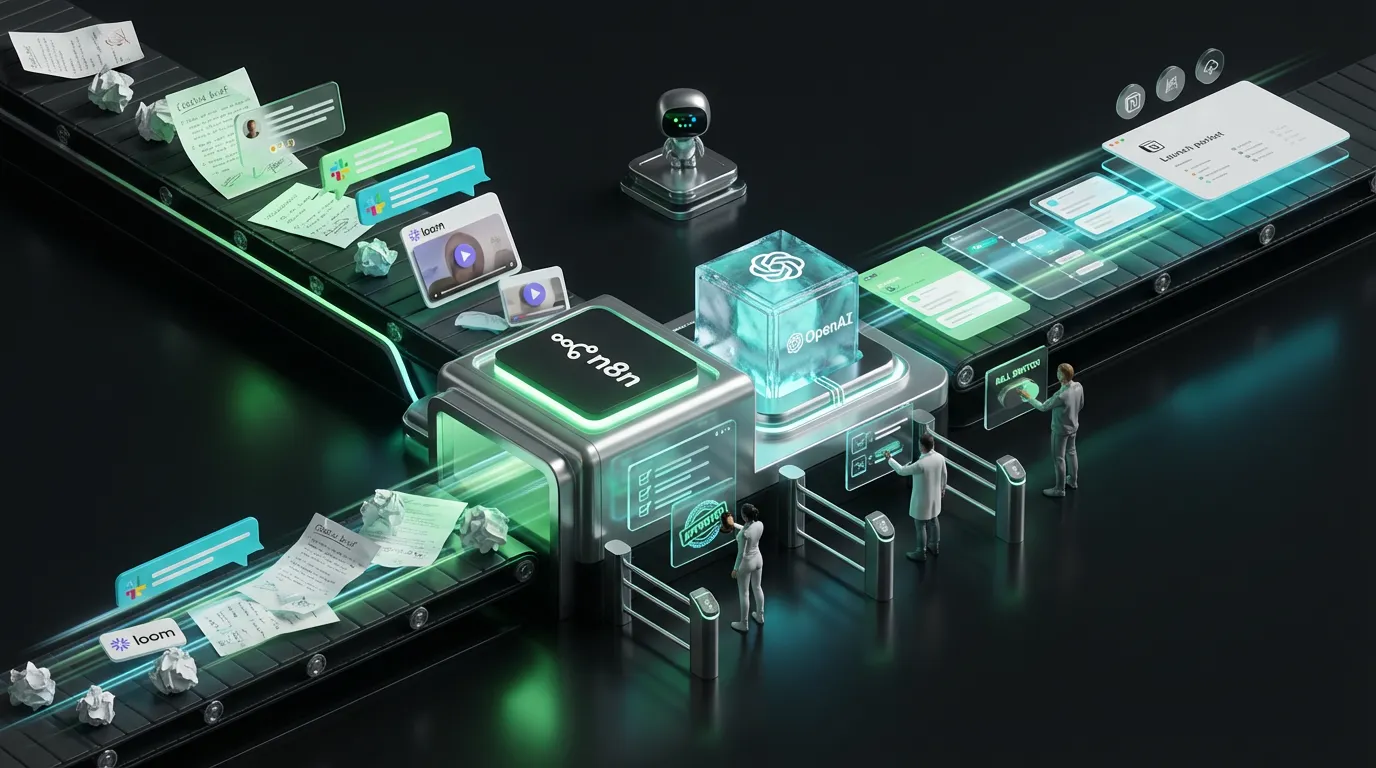

Deep Dive Thesis: The next big edge in marketing automation is not smarter autonomous agents. It’s guardrailed execution: structured outputs, automated critics, and control-plane enforcement that keeps tool-driven action safe enough to actually scale. It is the difference between AI-enabled velocity and turning your brand into a meme on LinkedIn.

Why more agents turned your ops into a minefield

Here’s the paradox: as AI tools get more powerful, their subtle screw-ups get more dangerous. A bot writing garbage is easy enough to catch. But when an agent acts (silently posting, updating, or syncing), its mistakes ripple through every system and quietly erode trust.

- Draft failures: Obvious, local, and usually recoverable.

- Action failures: Stealthy and systemic, infecting the entire stack before you notice.

That’s the unspoken killer behind “AI fatigue.” The bottleneck isn’t that models can’t write. It’s that they can write and act, but nobody’s watching the door. Your workflow lacks the grown-up supervision it needs.

The industry signal: structure beats vibes

If you only track model releases (“Is Claude 4 now smarter than Gemini Ultra?”), you’ll miss the real story. The true leap isn’t in model IQ but in output discipline. Researchers and vendors are furiously converting model outputs from loose text blobs into clean, machine-readable payloads.

Case in point: OpenAI’s Structured Outputs feature, which constrains a model’s response to a developer-supplied JSON Schema. Returning valid, schema-bound JSON is no longer an academic trick. It’s a production mandate. If a payload triggers downstream systems, structure is not an optimization. It’s a safety protocol.

Most automation isn’t “Write a paragraph.” It’s “Write the assets, map each input, validate, tag, escalate, and only then publish and log everything.” Structure is what turns creative output into process automation, not process chaos.
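To make “structure is a safety protocol” concrete, here is a minimal Python sketch of a structured-output gate. It assumes a hypothetical three-field contract (`REQUIRED_FIELDS`) and uses hand-rolled checks in place of a full JSON Schema validator. The point is the behavior: anything that is not a well-formed object with the right fields raises before a single downstream system is touched.

```python
import json

# Hypothetical contract for illustration: field name -> required Python type.
REQUIRED_FIELDS = {"headline": str, "cta": str, "landing_page_url": str}


def parse_payload(raw: str) -> dict:
    """Parse a model response, refusing anything that is not a well-formed payload.

    Raises ValueError instead of returning partial data, so loose text never
    reaches a CMS, ESP, ads platform, or CRM.
    """
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not JSON: {exc}") from exc
    if not isinstance(payload, dict):
        raise ValueError("payload must be a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in payload:
            raise ValueError(f"missing field: {field}")
        if not isinstance(payload[field], expected_type):
            raise ValueError(f"wrong type for field: {field}")
    return payload


# Usage: a chatty, non-JSON reply is rejected before anything downstream runs.
try:
    parse_payload("Sure! Here's a punchy headline for you...")
except ValueError as err:
    print("rejected:", err)
```

Failing closed (raise, never return a half-parsed dict) is the design choice that matters here; the caller has to handle the rejection explicitly.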

Guardrails: not “just QA,” but your new automation religion

A classic mistake: treating QA as a last-minute broom closet for screw-ups. In an automation-first world, QA is your always-on policy cop and auditor.

Think of it this way: your system should never trust an agent to do anything it can’t prove happened exactly as intended.
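One way to read “prove it happened” in code: after every write, read the record back and compare it with what you intended to write. This is an illustrative sketch only; `write_and_verify` and the dict-backed store in the usage example are hypothetical stand-ins for a real CMS or CRM client.

```python
from typing import Callable


def write_and_verify(write: Callable[[str, dict], None],
                     read: Callable[[str], dict],
                     key: str,
                     intended: dict) -> bool:
    """Write a record, read it back, and report whether it matches exactly.

    `write` and `read` are hypothetical adapters around a real system's API.
    """
    write(key, intended)
    return read(key) == intended


# Usage with an in-memory dict standing in for a CMS.
store: dict = {}
ok = write_and_verify(store.__setitem__, store.get, "pages/offer", {"headline": "Save 20%"})
print("verified" if ok else "mismatch: escalate")
```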

The three guardrails that matter most

- Contracts: Schemas for input and output so the next system can trust and route the result without second-guessing.

- Critics: Layered validators for claims, links, policy, and budget, not just “did it run,” but “does it make sense?”

- Receipts: Immutable logs (think CI/CD audit trails) that track exactly what changed and why.
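Receipts are the least glamorous of the three, so here is a small sketch of what “immutable” can mean in practice: an append-only log where each entry stores a SHA-256 hash of its own contents plus the previous entry’s hash, so quietly editing any earlier receipt breaks verification. `ReceiptLog` is a hypothetical class for illustration; a production system would persist entries and record timestamps and actor IDs as well.

```python
import hashlib
import json


class ReceiptLog:
    """Append-only log where each entry commits to the hash of the previous one.

    Editing or deleting an earlier entry breaks every hash after it,
    so tampering is detectable by verify().
    """

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # sort_keys gives a stable serialization, so equal bodies hash equally.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, action: str, detail: dict) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else "genesis"
        body = {"action": action, "detail": detail, "prev": prev}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        prev = "genesis"
        for entry in self.entries:
            body = {"action": entry["action"], "detail": entry["detail"], "prev": entry["prev"]}
            if entry["prev"] != prev or entry["hash"] != self._digest(body):
                return False
            prev = entry["hash"]
        return True


log = ReceiptLog()
log.append("publish", {"asset": "ad-1", "channel": "linkedin"})
log.append("approve", {"asset": "ad-2", "by": "reviewer"})
print(log.verify())
```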

Sound familiar? It’s old-school DevOps, reborn as grown-up marketing ops.

The new security loophole: structured output as the next attack surface

Structured output is not just more reliable. It’s a new security boundary. Attackers aren’t sleeping. They have already discovered they can weaponize schemas, sneaking malicious payloads through “valid” fields that safety systems barely peek into.

A brutal proof point: the recent paper “Output Constraints as Attack Surface: Exploiting Structured Generation to Bypass LLM Safety Mechanisms.” TL;DR: If your trust layer ends at “valid JSON,” attackers will RSVP and bring friends.

Translation: Valid schemas are a baseline. If your validation doesn’t check meaning, not just formatting, you’re automating mayhem, not safety.
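Checking meaning can start simple. This sketch assumes an asset that has already passed schema validation and runs two semantic checks on what its fields actually say: the landing page host must be on an allowlist, and copy fields must not contain instruction-like or script-like strings. `ALLOWED_DOMAINS` and the `SUSPICIOUS` pattern are hypothetical policy values, and a regex blocklist is a weak heuristic: a first layer, not a complete defense.

```python
import re
from urllib.parse import urlparse

# Hypothetical policy values; a real team would load these from config.
ALLOWED_DOMAINS = {"example.com", "www.example.com"}
SUSPICIOUS = re.compile(
    r"ignore (all )?previous instructions|<script|javascript:", re.IGNORECASE
)


def semantic_findings(asset: dict) -> list[str]:
    """Checks on what schema-valid fields actually say, not just their shape."""
    findings = []
    host = urlparse(asset.get("landing_page_url", "")).hostname or ""
    if host not in ALLOWED_DOMAINS:
        findings.append(f"landing_page_url host not allowlisted: {host!r}")
    for field in ("headline", "primary_text", "cta"):
        if SUSPICIOUS.search(asset.get(field, "")):
            findings.append(f"suspicious content in {field}")
    return findings
```

Note that every asset these checks flag could still be perfectly valid JSON; that is exactly the gap a format-only trust layer leaves open.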

Guardrailed automation in a real-world marketing flow

Let’s get practical. Here’s how a growth team would actually turn a new offer into a multi-channel campaign, without getting burned:

```text
[Trigger]
new_offer_created | price_updated | campaign_brief_submitted

[Assemble truth]
fetch: offer contract | product facts | approved claims | consent rules

[Generate]
produce structured assets: ads | landing page blocks | email drafts

[Critic layer]
schema_valid | claims_have_sources | links_ok | blocked_phrases | budget_caps

[Route]
auto-publish low-risk | queue medium | require signoff high-risk

[Write]
push to: CMS | ESP | Ads platform | CRM

[Receipts]
store: diffs | approvals | tool calls | costs
```

Miss a checkpoint and it’s not “automation.” It’s chaos taped to a jetpack.
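The flow above can be sketched as a single orchestrator function. Everything here is hypothetical: the step callables stand in for your real fetchers, model calls, and CMS/ESP/ads/CRM clients. What the sketch shows is the ordering: critics run before routing, only low-risk assets are written without a human, and every step leaves a receipt.

```python
from dataclasses import dataclass, field
from typing import Callable

Critic = Callable[[dict], list[str]]  # a critic returns failure messages; [] means pass


@dataclass
class PipelineResult:
    status: str  # "rejected" | "published" | "queued" | "needs_signoff"
    failures: list[str] = field(default_factory=list)
    receipts: list[dict] = field(default_factory=list)


def run_pipeline(trigger: str,
                 assemble: Callable[[str], dict],
                 generate: Callable[[dict], dict],
                 critics: list[Critic],
                 write: Callable[[dict], None]) -> PipelineResult:
    receipts = [{"step": "trigger", "event": trigger}]
    truth = assemble(trigger)   # offer contract, product facts, approved claims, consent rules
    asset = generate(truth)     # structured asset (ad, landing page block, email draft)
    receipts.append({"step": "generate", "asset": asset})

    failures = [msg for critic in critics for msg in critic(asset)]
    if failures:
        receipts.append({"step": "critics", "failures": failures})
        return PipelineResult("rejected", failures, receipts)

    # A missing or unrecognized tier falls through to the strictest path.
    tier = asset.get("risk_tier", "high")
    if tier == "low":
        write(asset)            # only low-risk assets reach CMS/ESP/ads/CRM unattended
        status = "published"
    elif tier == "medium":
        status = "queued"
    else:
        status = "needs_signoff"
    receipts.append({"step": "route", "tier": tier, "status": status})
    return PipelineResult(status, [], receipts)
```

Defaulting an unknown tier to sign-off, rather than to auto-publish, keeps a generator bug from silently widening the blast radius.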

Shipping real guardrails: Where to begin without losing your mind

Most teams stumble by trying to boil the ocean. Pick a single pipeline that is both high-volume and capable of real damage if something goes sideways.

Choose a pipeline with a real blast radius

- Paid social asset generation

- Triggered lifecycle emails

- Landing page copy updates driven by product feeds

Define an enforceable minimum contract

Keep the contract boring and easy to police. For example, a paid social contract:

```json
{
  "asset": {
    "asset_type": "paid_social_ad",
    "channel": "linkedin",
    "locale": "en-US",
    "headline": "",
    "primary_text": "",
    "cta": "",
    "landing_page_url": "",
    "claims": [
      {"text": "", "source_id": ""}
    ],
    "disclosures": [""],
    "risk_tier": "high"
  }
}
```

Add two critics before you add ten agents

- Schema critic: Instantly reject anything that fails validation.

- Claims critic: Block any claim without a properly linked source.
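Here is a minimal Python sketch of those two critics against the paid social contract above. It assumes a hypothetical `APPROVED_SOURCES` registry that maps `source_id` values to approved claims, and the claims critic assumes the schema critic has already passed.

```python
# Hypothetical registry of approved claims: source_id -> approved claim text.
APPROVED_SOURCES = {"SRC-001": "Example approved claim"}

# Field -> expected type, mirroring the paid social contract.
REQUIRED = {
    "asset_type": str, "channel": str, "locale": str, "headline": str,
    "primary_text": str, "cta": str, "landing_page_url": str,
    "claims": list, "disclosures": list, "risk_tier": str,
}
RISK_TIERS = {"low", "medium", "high"}


def schema_critic(doc: dict) -> list[str]:
    """Return every contract violation; an empty list means the asset passes."""
    asset = doc.get("asset")
    if not isinstance(asset, dict):
        return ["asset: missing or not an object"]
    errors = []
    for key, expected_type in REQUIRED.items():
        value = asset.get(key)
        if not isinstance(value, expected_type):
            errors.append(f"{key}: expected {expected_type.__name__}")
        elif expected_type is str and not value.strip():
            errors.append(f"{key}: empty")
    if asset.get("risk_tier") not in RISK_TIERS:
        errors.append("risk_tier: must be low, medium, or high")
    return errors


def claims_critic(doc: dict) -> list[str]:
    """Block any claim whose source_id is not in the approved registry.

    Assumes schema_critic has already passed, so doc["asset"]["claims"] is a list.
    """
    errors = []
    for i, claim in enumerate(doc["asset"]["claims"]):
        if not isinstance(claim, dict) or claim.get("source_id") not in APPROVED_SOURCES:
            errors.append(f"claims[{i}]: no approved source")
    return errors
```

Both critics return every failure rather than stopping at the first, so a reviewer or a retry prompt sees the full list in one pass.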

The unsung budget killer agents love: runaway retries

Agentic workflows rack up bills like they’re at an open bar: burning tokens, re-running failed calls, and dragging premium models into menial work. The cost-overrun stories are legendary.

Guardrailed automation turns cost into an enforceable policy, not a line item you discover when Finance starts screaming.

| Policy lever | What it controls | Why it matters |
|---|---|---|
| Retry limit | Max retries per job | Prevents invisible infinite loops and surprise overages |
| Tool call cap | How many tools or agents can be invoked per job | Shuts down “just one more lookup” patterns |
| Model budget | Which model tiers are allowed | Keeps simple jobs cheap; escalates only when necessary |
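The three levers in the table map naturally onto a small per-job guard object. This is a sketch under stated assumptions: `JobBudget`, `BudgetExceeded`, and the tier names are hypothetical, and the default limits are placeholders, not recommendations.

```python
class BudgetExceeded(Exception):
    """Raised when a job hits one of its cost-policy limits."""


class JobBudget:
    """Per-job cost policy: retry limit, tool call cap, allowed model tiers.

    Names and defaults are hypothetical placeholders, not recommendations.
    """

    def __init__(self, max_retries: int = 2, max_tool_calls: int = 5,
                 allowed_tiers: tuple = ("small",)) -> None:
        self.max_retries = max_retries
        self.max_tool_calls = max_tool_calls
        self.allowed_tiers = set(allowed_tiers)
        self.tool_calls = 0

    def check_model(self, tier: str) -> None:
        # Model budget: premium tiers must be explicitly allowed for this job.
        if tier not in self.allowed_tiers:
            raise BudgetExceeded(f"model tier {tier!r} not allowed for this job")

    def record_tool_call(self) -> None:
        # Tool call cap: count every invocation and stop once the cap is passed.
        self.tool_calls += 1
        if self.tool_calls > self.max_tool_calls:
            raise BudgetExceeded(f"tool call cap of {self.max_tool_calls} exceeded")

    def run_with_retries(self, fn):
        # Retry limit: one initial attempt plus at most max_retries retries.
        last_error = None
        for _ in range(self.max_retries + 1):
            try:
                return fn()
            except BudgetExceeded:
                raise  # policy violations are never retried
            except Exception as exc:  # real code should catch only retryable errors
                last_error = exc
        raise BudgetExceeded(f"gave up after {self.max_retries} retries") from last_error
```

Re-raising `BudgetExceeded` immediately inside the retry loop matters: otherwise a job that hit its tool call cap would simply try again, which is exactly the runaway pattern the policy exists to stop.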

Hybrid workflows: the only grown-up option

You won’t fully automate taste, legal judgment, or brand reputation anytime soon. What is automatable? Everything a machine can check, which frees humans to do what only humans can do.

This is the “human in the loop” doctrine, but without the infinite email chains. Machines auto-publish low-risk work. Humans step in only where escalation makes sense.

| Risk Tier | Automation does | Humans do |
|---|---|---|
| Low | Auto-publish after critics pass | Spot-check samples |
| Medium | Generate, validate, hold for review | Approve diffs, not full assets |
| High | Stage, produce receipts, block if needed | Full signoff before go-live |
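“Approve diffs, not full assets” is easy to support with Python’s standard `difflib` module. A minimal sketch, assuming the live and proposed copy are both available as plain text; `review_diff` and the `landing_page_block` label are hypothetical names.

```python
import difflib


def review_diff(current: str, proposed: str, name: str = "landing_page_block") -> str:
    """Return a unified diff so a reviewer approves only what actually changed."""
    return "".join(difflib.unified_diff(
        current.splitlines(keepends=True),
        proposed.splitlines(keepends=True),
        fromfile=f"{name} (live)",
        tofile=f"{name} (proposed)",
    ))


live = "Headline: Save 10%\nCTA: Learn more\n"
draft = "Headline: Save 20%\nCTA: Learn more\n"
print(review_diff(live, draft))
```

An empty diff is also a useful signal: if the proposed copy is identical to what is live, there is nothing for a human to approve.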


The COEY take

The future is not armies of chatty agents running loose. It’s reliable, guardrailed AI that you trust to touch your systems without triggering a Slack fire drill or a regulatory fine.

Guardrails move AI from demo floor to real ops: not hype, not magic, but old-fashioned, enforceable discipline. That is how you scale content, sales, and marketing without scaling risk.

If your automation can’t validate, route, and log, it’s not automation. It’s just a faster way to screw up in public.