LTX-2 Makes Open Video Plus Audio Practical

January 12, 2026

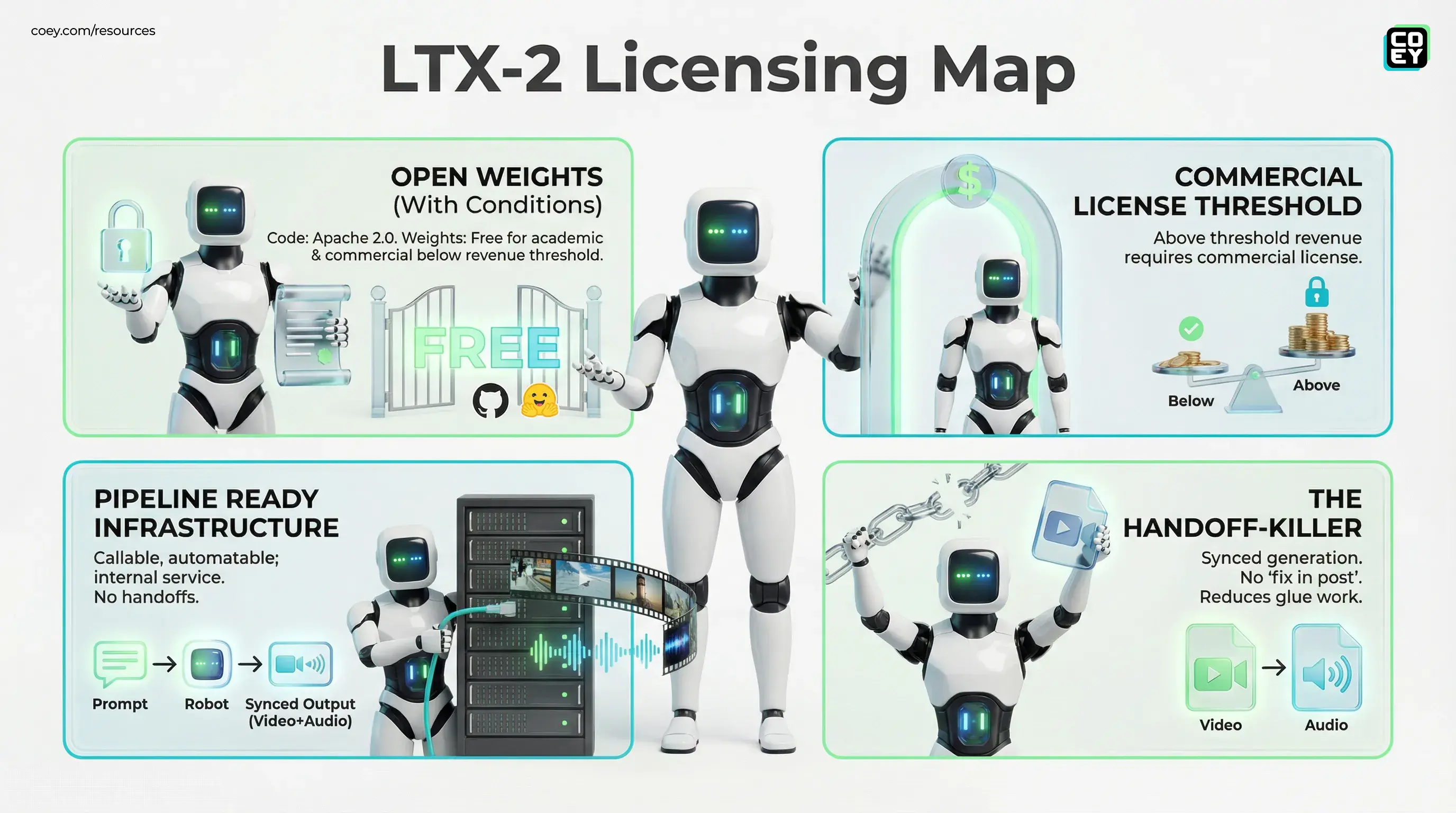

Lightricks just open-sourced LTX-2, an audio video foundation model that generates synced visuals and sound in one go, and they did not do the usual “code is open, but the good stuff is gated” routine. The code is out under Apache 2.0. The model weights are available for download (via GitHub and Hugging Face), but the weights are not purely Apache 2.0 for everyone: Lightricks states the release is free for academic research and for commercial use below a stated revenue threshold, with a commercial license required above that threshold.

The headline is not just “new model.” The headline is: video and audio generation is starting to look like software infrastructure. Meaning callable, automatable, and potentially something you can run inside your own pipeline without praying a third party UI does not change pricing, watermarking, or terms mid-campaign.

Joint generation is the cheat code. If your video and audio come from separate models, you are basically doing post production on a hallucination. LTX-2 tries to make sync the default, not a patch.

What LTX-2 actually is

LTX-2 is a DiT style (Diffusion Transformer) audio video model intended to generate synchronized video and audio from prompts, with support for multiple workflows (including text to video and image to video in the official tooling and common community integrations). Lightricks positions it as production ready open weights, not a research teaser.

There are multiple checkpoints and modes in the official release, including a 19B parameter family (commonly referenced as a dev checkpoint) and a distilled checkpoint designed for faster inference. In practice, that means LTX-2 is not one “thing.” It is a small ecosystem of weights and pipelines you choose based on whether you care more about iteration speed or output quality.

If you want the official model distribution surface beyond GitHub, Lightricks also hosts the weights on Hugging Face, which is important because it makes deployment predictable for teams that already have model registries and internal mirrors.

Why this matters (beyond the wow clip)

Most marketing teams do not fail at AI video because the output is “bad.” They fail because the workflow is brittle:

- You generate video in Tool A.

- You generate VO, music, or SFX in Tool B.

- You try to sync in Tool C.

- You “fix it in post,” which means a human becomes the glue for a process that was supposed to scale.

LTX-2’s biggest promise is that it reduces that glue work. Synchronized generation means your first draft can actually be reviewed as a clip, not as a Frankenstein assembly of parts that may or may not line up.

Automation does not die on quality. It dies on handoffs. LTX-2 is a handoff killer.

Open weights: freedom with a footnote

The code for LTX-2 is released under Apache 2.0. The weights are published openly for download, but Lightricks also states additional licensing terms apply to commercial use above a specified revenue threshold. The practical implication: many individuals and smaller companies can run it commercially, modify it, wrap it, and ship it inside products, but larger organizations should assume they may need a separate commercial agreement for production use.

If you are an enterprise buyer, the grown up move is simple: treat Apache 2.0 as the baseline for the repo, then have Legal confirm your eligibility and whether your org triggers the stated commercial licensing requirement for the weights.

The public facing announcement that frames the release as open weights and production ready is carried via GlobeNewswire.

Automation potential: can you actually plug it in?

Here is the clean separation: LTX-2 is not just a hosted API product by default. It is an open model plus repo, which means you get control but you also own deployment.

That said, automation is exactly where open models shine, because you can treat them like any other internal service. The dominant real patterns look like this:

- Batch render workers: prompts go into a queue, GPUs pull jobs, outputs land in storage, reviewers get notified.

- Internal HTTP microservice: your stack hits an endpoint like `/generate`, gets back a job ID, then pulls the MP4 and audio when ready.

- Template driven variants: structured prompts (JSON) generate dozens of ad variations per product, offer, and locale.
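
The three patterns above fit together in a few lines. What follows is a minimal sketch using only the Python standard library, not LTX-2's actual interface. `render_clip` is a hypothetical stand-in for whatever inference call you wire up, and `RenderService`, the output path layout, and the prompt fields (`product`, `offer`, `locale`) are assumptions made for illustration.

```python
import itertools
import queue
import uuid
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Job:
    prompt: str
    job_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    status: str = "queued"              # queued -> done | failed
    output_path: Optional[str] = None


def expand_variants(template: str, **axes: List[str]) -> List[str]:
    """Template driven variants: one prompt per combination of axis values."""
    keys = list(axes)
    return [
        template.format(**dict(zip(keys, combo)))
        for combo in itertools.product(*(axes[k] for k in keys))
    ]


def render_clip(prompt: str, job_id: str) -> str:
    """Hypothetical stand-in for a real inference call.

    A real worker would run the model on a GPU and write an MP4 with audio.
    This stub only returns the path where that file would land.
    """
    return f"outputs/{job_id}.mp4"


class RenderService:
    """In-memory version of the submit and poll pattern behind /generate."""

    def __init__(self) -> None:
        self._pending: "queue.Queue[Job]" = queue.Queue()
        self._jobs: Dict[str, Job] = {}

    def submit(self, prompt: str) -> str:
        job = Job(prompt=prompt)
        self._jobs[job.job_id] = job
        self._pending.put(job)
        return job.job_id               # the caller polls with this ID

    def status(self, job_id: str) -> Job:
        return self._jobs[job_id]

    def work_once(self) -> bool:
        """One loop of a batch render worker: pull a job, render, record."""
        try:
            job = self._pending.get_nowait()
        except queue.Empty:
            return False
        try:
            job.output_path = render_clip(job.prompt, job.job_id)
            job.status = "done"
        except Exception:
            job.status = "failed"
        return True


service = RenderService()
prompts = expand_variants(
    "15 second ad for {product}, highlighting {offer}, voiceover in {locale}",
    product=["trail shoes", "rain jacket"],
    offer=["free shipping", "a bundle discount"],
    locale=["en-US", "de-DE", "ja-JP"],
)
job_ids = [service.submit(p) for p in prompts]   # 2 x 2 x 3 = 12 jobs
while service.work_once():
    pass
```

In a real deployment the in-memory queue would likely be replaced by a durable one, and `submit` and `status` would sit behind HTTP routes, but the contract stays the same: submit returns a job ID immediately, and the caller fetches the output once the status flips to done.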

For non technical teams, translate “not just a hosted API” as: you will not Zapier this in an afternoon, but if you already run any internal data or media services, LTX-2 can be made callable in the same way.

API availability snapshot

| Question | Answer | What it means |
|---|---|---|
| Is there a public SaaS endpoint? | Not part of the open source core release | You self host or use a third party wrapper |
| Is it automatable? | Yes | Run via scripts, queues, or an internal API |
| Is it workflow ready? | Depends | Ready for teams with compute plus ops maturity |

Real world readiness: what is solid vs. what is shiny

Lightricks is calling this production ready. The pragmatic take: the release posture is production friendly (open code, downloadable weights, multiple checkpoints), but production outcomes still depend on whether your org can run GPU workloads reliably.

What looks genuinely ready

- Open code plus downloadable weights means you can version lock your pipeline (no surprise UI changes, no “we deprecated that model”).

- Distilled checkpoints suggest the team cares about iteration speed, which is the difference between “cool demo” and “daily use.”

- Tooling surface area (official inference package and LoRA training support) points to ecosystem intent, not a one off drop.
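
One hedged way to make "version lock" concrete is to record a checksum for every downloaded weight file and verify it before each render run. The sketch below uses only the standard library. The directory layout and manifest format are assumptions, not something the LTX-2 release prescribes.

```python
import hashlib
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so multi-gigabyte checkpoints do not fill memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(weights_dir: Path) -> Dict[str, str]:
    """Map each file under weights_dir (relative path) to its SHA-256."""
    return {
        p.relative_to(weights_dir).as_posix(): sha256_of(p)
        for p in sorted(weights_dir.rglob("*"))
        if p.is_file()
    }


def verify_manifest(weights_dir: Path, manifest: Dict[str, str]) -> List[str]:
    """Return relative paths that are missing or whose contents changed."""
    current = build_manifest(weights_dir)
    return sorted(
        name for name, digest in manifest.items() if current.get(name) != digest
    )
```

Store the manifest next to your pipeline code (for example as JSON in the same repository) and have workers refuse to start if `verify_manifest` returns anything. That way a swapped or partially downloaded checkpoint fails loudly instead of quietly changing your output.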

What still needs adult supervision

- Brand consistency: open video still drifts, especially with products, logos, and recurring characters.

- Audio correctness: synced does not automatically mean usable dialogue, particularly in noisy scenes or non speech audio.

- Governance: if this feeds paid spend, you still need review gates, logging, and policy checks (claims, IP, safety).

The model is not the workflow. The compounding advantage comes when your workflow treats LTX-2 like a stage in an assembly line: brief to generate to validate to approve to publish.
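
The assembly line idea can be sketched as a chain of stages where any gate can stop an asset and every step leaves an audit entry. This is an illustrative skeleton, not a prescribed design: the stage functions, the `BANNED_CLAIMS` list, and the reviewer callback are all hypothetical, and `generate` is a placeholder for the actual model call.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


class GateFailed(Exception):
    """Raised by a stage to stop an asset from moving further down the line."""


@dataclass
class Asset:
    brief: str
    clip_path: Optional[str] = None
    approved: bool = False
    published: bool = False
    log: List[str] = field(default_factory=list)   # audit trail for governance


Stage = Callable[[Asset], None]

BANNED_CLAIMS = ("guaranteed results", "clinically proven")  # hypothetical policy


def generate(asset: Asset) -> None:
    # Placeholder for the model call; a real stage would render video plus audio.
    asset.clip_path = "drafts/clip.mp4"


def validate(asset: Asset) -> None:
    # Policy check on the brief; real checks might also inspect the clip itself.
    hits = [claim for claim in BANNED_CLAIMS if claim in asset.brief.lower()]
    if hits:
        raise GateFailed(f"banned claims: {hits}")


def make_approval_gate(reviewer: Callable[[Asset], bool]) -> Stage:
    """Wrap a human (or automated) reviewer decision as a pipeline stage."""
    def approve(asset: Asset) -> None:
        if not reviewer(asset):
            raise GateFailed("reviewer rejected the clip")
        asset.approved = True
    return approve


def publish(asset: Asset) -> None:
    if not asset.approved:
        raise GateFailed("unapproved asset cannot be published")
    asset.published = True


def run_pipeline(asset: Asset, stages: List[Stage]) -> Asset:
    """Run stages in order, logging each one and halting at the first failed gate."""
    for stage in stages:
        try:
            stage(asset)
            asset.log.append(f"{stage.__name__}: ok")
        except GateFailed as exc:
            asset.log.append(f"{stage.__name__}: blocked ({exc})")
            break
    return asset
```

The design choice worth noting is that `publish` refuses unapproved assets on its own, so reordering or dropping the approval stage cannot accidentally push an unreviewed clip into paid spend.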

Where marketers will feel this first

If you are an executive or marketing leader, the immediate relevance is not “we are making movies now.” It is:

- Variant velocity: faster generation of hooks, scenes, and audio treatments for paid social.

- Localization at scale: generating region specific creative without sending every iteration through an agency queue.

- Private creative pipelines: teams with sensitive launches can keep assets inside their own infrastructure.

This fits neatly into the broader trend COEY has been tracking: open video models shifting from impressive to operational. If you want a recent comparison point in that local first direction, see HunyuanVideo 1.5 Makes Local AI Video Practical.

Bottom line

LTX-2 is a meaningful escalation in open generative media: synced video plus audio with downloadable weights and a deployment posture that is compatible with real automation. It will not magically replace editors, strategists, or brand guardians. But it can replace a ton of grind: tool hopping, manual syncing, and the endless export and re edit loop that kills throughput.

For teams serious about scaling creativity through human plus machine collaboration, LTX-2 is less “look what AI can do” and more “here is a component you can actually build around,” provided you are ready to own the pipeline and confirm the weights license terms for your use case.