Explainable Optimization Is Eating Marketing Automation

January 19, 2026

The New Content Arms Race Is Not Writing It. It Is Choosing It.

Generative AI used to be disruptive. Now it’s background noise. The content faucet was left running and drowned the internet in a hundred versions of the same three opinions, formatted for maximum engagement and minimum insight. With free-flowing auto-generated text and images everywhere, the edge in marketing did not just shift. It vanished and reappeared in a far less glamorous place: automation that can actually explain itself.

Most marketing automation today is a glorified copy machine. Spin a prompt, fill a queue, and let humans play creative roulette in Slack. Meanwhile, a quieter revolution is underway: systems that learn from real telemetry, iterate autonomously, and, crucially, show their work. Not just what they changed, but why. This is no longer optional. It is the moat.

Deep Dive thesis: welcome to the era of explainable optimization, where AI co-creates strategy with marketers by reading live outcomes, proposing testable changes, and leaving a clear, auditable trail of logic and evidence for every action.

What Changed: The AI Stopped Being a Writer and Became a Loop

Let’s be brutally honest. Two types of AI are cohabiting in your martech stack:

- Producer AI: cranks out copy, designs, social posts, landing pages. Cheap, fast, and rarely unique.

- Feedback-loop AI: consumes performance data, forms hypotheses, tests ideas, and iterates based on evidence.

The first is everywhere. The second is where value compounds, because more output does not make you better. Learning does.

Research has started pulling large language models out of the “AI as copywriter” box and into a new role: partner in strategy. One example is MindFuse, which focuses on proposing optimizations grounded in real ad performance and surfacing interpretability signals to justify recommendations.

Why Marketers Should Care: The Bottleneck Moved to Trust

Automation’s been here before. Teams love the dopamine hit of “move fast” until their stack ships a campaign-ending error. If your tools can now draft, schedule, launch, and tweak creative unprompted, your real pain is not “can it do it?” It is: can I trust it?

- Can you trust that the optimization is data-driven and not chasing ghosts?

- Can the system show its reasoning, so changes are not just “because AI said so”?

- Will it stop if it does not have enough evidence?

This is not ethics theater. It is operational survival. Real visibility into AI decision-making is now the only way to move at scale without chaos.

From A/B Tests to Continuous Learning Loops

Classic A/B testing assumes:

- You know where to look for lift.

- You have time to wait and analyze.

- You have humans on hand to interpret noisy results.

The modern explainable optimization loop assumes:

- You can float dozens of hypotheses for a fraction of the old cost.

- You can test continuously in small, contained bursts.

- Every move needs a human-readable justification, not just a confidence score.

This shift turns creative work into something closer to software ops: rapid deployments, automated logs, rollbacks, and escalation when risk thresholds are breached.

The Anatomy of an Explainable Optimization Loop

Here’s a practical loop for marketing teams that want results. This structure keeps you out of prompt soup and in the real world of deployment.

| Stage | What the AI Produces | What Humans Verify |
|---|---|---|
| Signal Ingest | Standardized performance facts | Data mapping, source accuracy |
| Hypothesis Generation | Ranked optimization proposals, with reasons | Business fit, brand risk, compliance |
| Draft and Deploy | Creative asset plus change receipt | Approval for medium and high-risk changes, audit logging |

Stage 1: Signal Ingest Stop Feeding the Model Garbage

Garbage in, garbage out. Explainability does not begin with the LLM. It begins with a clean, normalized performance object. Dumping dashboard exports into a prompt is not signal. That is storytelling.

- Key fields: channel, campaign, ad set, asset IDs

- Performance metrics: impressions, spend, clicks, conversions

- Attribution windows and conversion definitions

- Audience details and placements

Insist on ironclad signal definitions. The loop only works if the numbers are not hallucinated.
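
As a rough sketch of what a normalized performance object could look like, the Python below defines one record per asset and rejects rows that cannot be true. The class name `PerformanceFact`, the `normalize` helper, and the raw export keys are all assumptions for illustration, not a standard schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerformanceFact:
    """One normalized row of ad performance (field names are illustrative)."""
    channel: str
    campaign_id: str
    ad_set_id: str
    asset_id: str
    impressions: int
    spend: float
    clicks: int
    conversions: int
    attribution_window_days: int
    conversion_definition: str

    def __post_init__(self):
        # Reject impossible rows up front instead of letting a model reason over them.
        if min(self.impressions, self.clicks, self.conversions) < 0 or self.spend < 0:
            raise ValueError("metrics must be non-negative")
        if self.clicks > self.impressions:
            raise ValueError("clicks cannot exceed impressions")

    @property
    def ctr(self) -> float:
        """Click-through rate, or 0.0 when there were no impressions."""
        return self.clicks / self.impressions if self.impressions else 0.0


def normalize(raw: dict) -> PerformanceFact:
    """Map a hypothetical raw export row onto the normalized object."""
    return PerformanceFact(
        channel=raw["channel"].strip().lower().replace(" ", "_"),
        campaign_id=str(raw["campaign_id"]),
        ad_set_id=str(raw["ad_set_id"]),
        asset_id=str(raw["asset_id"]),
        impressions=int(raw["impressions"]),
        spend=float(raw["spend"]),
        clicks=int(raw["clicks"]),
        conversions=int(raw["conversions"]),
        attribution_window_days=int(raw["attribution_window_days"]),
        conversion_definition=raw["conversion_definition"],
    )
```

The point of the validation step is that a bad row fails loudly at ingest, long before it can become "evidence" in a proposal.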

Stage 2: Hypothesis Generation Reasons, Not Just Recommendations

Recommendations without reasoning are just faster opinions. Insist on structure. Every optimization proposal should answer:

- What is happening?

- What changes are proposed?

- What is the supporting evidence?

- What uncertainty remains?

- What test would validate this?

Use a schema like this for every proposal in your automation stack:

```json
{
  "proposal": {
    "objective": "reduce_cpl",
    "scope": {
      "channel": "paid_social",
      "campaign_id": "abc123",
      "locale": "en-US"
    },
    "observation": {
      "metric": "ctr",
      "trend": "down",
      "window": "last_7_days",
      "evidence": ["creative_14 underperforms creative_09 by 23%"]
    },
    "hypothesis": "Hook mismatch with landing page promise",
    "recommended_changes": [
      {
        "asset_type": "ad_copy",
        "change": "swap first line for benefit-led hook",
        "expected_effect": "increase_ctr"
      }
    ],
    "risk_tier": "medium",
    "needs_human_approval": true
  }
}
```

Stage 3: Draft and Deploy Receipts and Reversibility

Deploying changes without audit receipts is not automation. It is plausible deniability. Every single push should require a change receipt containing:

- What changed (diff)

- Why it changed (proposal ID)

- What data supported it (source hash or fingerprint)

- Who approved it, if approval was needed

- How to roll back, if needed
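
Here is a minimal sketch of a change receipt in Python, assuming text assets and evidence that can be serialized as JSON. `ChangeReceipt`, `fingerprint`, and `make_receipt` are hypothetical names; only `difflib`, `hashlib`, and `json` from the standard library are used.

```python
import difflib
import hashlib
import json
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChangeReceipt:
    proposal_id: str             # why it changed
    diff: str                    # what changed
    source_fingerprint: str      # what data supported it
    approved_by: Optional[str]   # who approved it, if approval was needed
    rollback_value: str          # the previous text, kept so the change can be reverted


def fingerprint(evidence: dict) -> str:
    """SHA-256 over a canonical JSON dump, so key order does not change the hash."""
    canonical = json.dumps(evidence, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def make_receipt(proposal_id: str, before: str, after: str,
                 evidence: dict, approved_by: Optional[str] = None) -> ChangeReceipt:
    """Build a receipt for replacing `before` with `after`."""
    diff_lines = difflib.unified_diff(
        before.splitlines(), after.splitlines(),
        fromfile="before", tofile="after", lineterm="",
    )
    return ChangeReceipt(
        proposal_id=proposal_id,
        diff="\n".join(diff_lines),
        source_fingerprint=fingerprint(evidence),
        approved_by=approved_by,
        rollback_value=before,
    )
```

Storing the previous value is the simplest possible rollback plan. A real stack might store a platform version ID instead, but the receipt should always answer "how do I undo this?" without a meeting.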

If this governance stack feels familiar, it’s likely because we recently laid the groundwork in “Creative Supply Chains Beat Content Chaos” and “Why Policy Cards Beat Brand Guidelines.”

Explainability Is Not a Moral Feature. It’s a Scaling Feature

Is explainability worth the trouble? Only if you want to scale. Otherwise, plan to budget for full-time human reviewers forever.

Explainable optimization enables risk-segmented automation:

| Risk Tier | Default Automation | Common Use Case |
|---|---|---|
| Low | Auto-deploy after checks | Headline tweaks, CTA swaps |
| Medium | Draft, then require approval | Offer framing, landing hero copy |
| High | Hold and escalate | Pricing, guarantees, regulated claims |
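
The tier table can be expressed as a small routing function. This is a sketch under stated assumptions: the enum names and the `route` function are hypothetical, and sending a low-risk change to approval when its checks fail is our assumption, since the table only covers the case where checks pass.

```python
from enum import Enum


class RiskTier(str, Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


class Action(str, Enum):
    AUTO_DEPLOY = "auto_deploy"
    REQUIRE_APPROVAL = "require_approval"
    HOLD_AND_ESCALATE = "hold_and_escalate"


# Default automation per tier, mirroring the table.
ROUTES = {
    RiskTier.LOW: Action.AUTO_DEPLOY,
    RiskTier.MEDIUM: Action.REQUIRE_APPROVAL,
    RiskTier.HIGH: Action.HOLD_AND_ESCALATE,
}


def route(risk_tier: str, checks_passed: bool) -> Action:
    """Pick the default action for a proposal's risk tier."""
    tier = RiskTier(risk_tier)  # raises ValueError for an unknown tier
    if tier is RiskTier.LOW and not checks_passed:
        # Assumption: a low-risk change that fails its checks needs a human.
        return Action.REQUIRE_APPROVAL
    return ROUTES[tier]
```

Unknown tiers raise instead of defaulting to auto-deploy, so a typo in a proposal cannot quietly become a launch.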

Why Fully Agentic Optimization Is Still a Trap

Yes, autonomous agents are cool. They will also:

- Misinterpret noisy attribution and chase performance shadows

- Overfit to micro-trends, damaging long-term brand equity

- Waste budget retrying loops in the name of thoroughness

- Nudge brands off-message, one micro-edit at a time

Automation-first does not mean human-free. Humans should review nontrivial risks and validate learning, while automation handles throughput and leaves a clear decision trail.

How to Build This End to End With No-Code and Low-Code

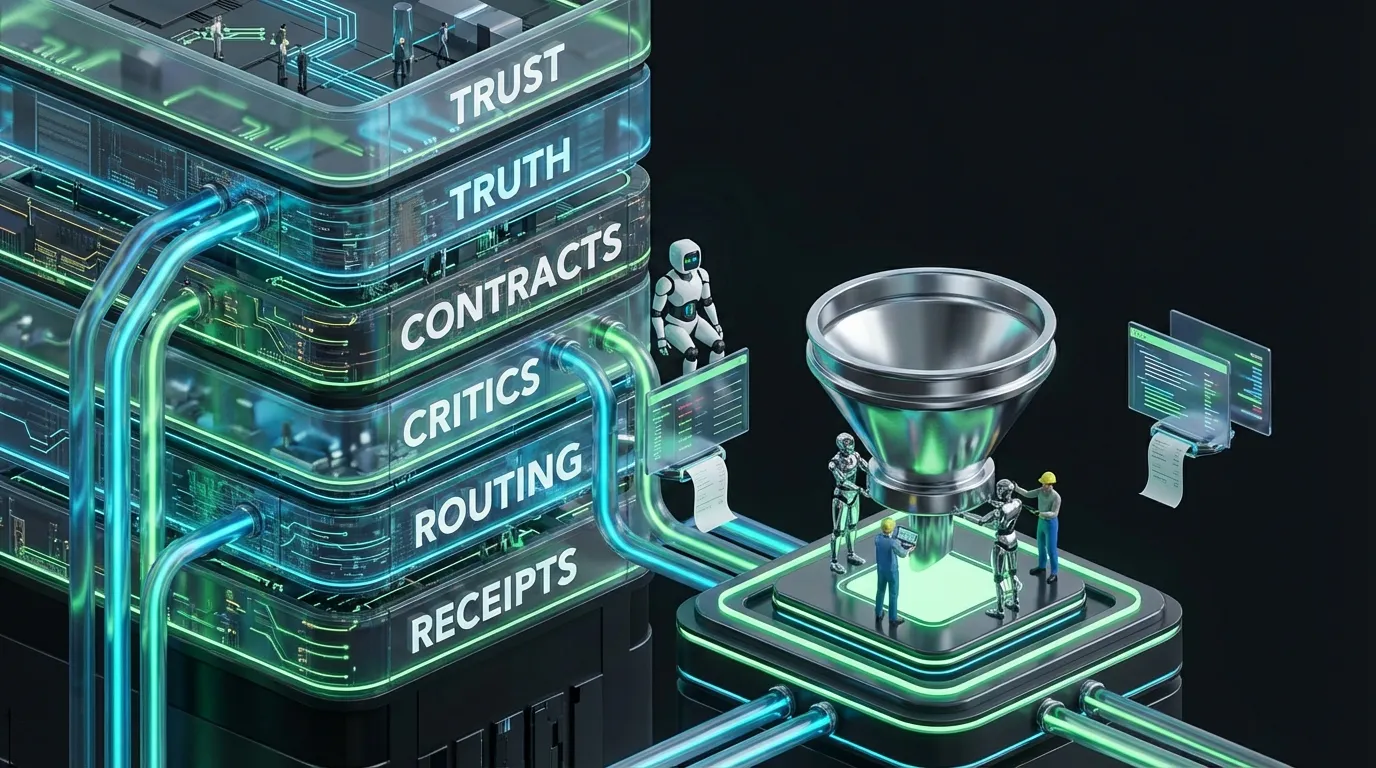

COEY’s world is wiring together products, data, and automation so the loop works without enterprise IT overhead.

- Data layer: ad platforms, web analytics, CRM events, product and offer catalogs

- Normalization layer: unified warehouse or live spreadsheet

- Decision layer: LLMs generate structured proposals

- Critic layer: deterministic checks for policy, claims, or links

- Routing layer: approvals via Slack, email, or PM tools

- Execution layer: API connections push changes to ad platforms, CMS, ESP, CRM

Do not let model call budgets run wild. Discipline yourself with semantic caching and hard budget limits.
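
A hard budget limit plus a cache can be sketched in a few lines of Python. Two loud caveats: `BudgetedModel` and `BudgetExceeded` are hypothetical names, and the cache here matches on normalized text only. That is a simplified stand-in, not true semantic caching, which would match prompts by embedding similarity.

```python
from typing import Callable


class BudgetExceeded(RuntimeError):
    """Raised when the hard call limit has been reached."""


class BudgetedModel:
    def __init__(self, call_model: Callable[[str], str], max_calls: int):
        self._call_model = call_model   # any function that takes a prompt and returns text
        self._max_calls = max_calls
        self.calls_made = 0
        self._cache = {}

    @staticmethod
    def _key(prompt: str) -> str:
        # Stand-in for semantic matching: ignore case and extra whitespace.
        return " ".join(prompt.lower().split())

    def ask(self, prompt: str) -> str:
        key = self._key(prompt)
        if key in self._cache:
            return self._cache[key]     # cache hits do not spend budget
        if self.calls_made >= self._max_calls:
            raise BudgetExceeded(f"limit of {self._max_calls} model calls reached")
        self.calls_made += 1
        answer = self._call_model(prompt)
        self._cache[key] = answer
        return answer
```

The limit is checked before the call, not after, so the loop stops instead of sending you an apology and an invoice.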

The KPI Nobody Tracks: Explainability Coverage

CAC, ROAS, and CTR are table stakes. If you want explainable automation, track explainability coverage:

- What percent of changes shipped with a reason and evidence object?

- What percent of claims included links to supporting data?

- What percent of edits have an explicit rollback plan?

This is the difference between “AI helps sometimes” and “AI is embedded in every campaign we run.”
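
If your change log is structured, explainability coverage is a few lines of arithmetic. The sketch below assumes each shipped change is a dict with optional `reason`, `evidence`, `rollback`, and `claims` keys, and that each claim may carry a `source` link. Those key names are hypothetical, so map them to whatever your receipts actually store.

```python
def _pct(part: int, whole: int) -> float:
    """Percentage, or 0.0 when there is nothing to measure."""
    return 100.0 * part / whole if whole else 0.0


def explainability_coverage(changes: list) -> dict:
    """Compute the three coverage percentages over a list of shipped changes."""
    claims = [claim for change in changes for claim in change.get("claims", [])]
    explained = sum(
        1 for c in changes if c.get("reason") and c.get("evidence")
    )
    linked = sum(1 for claim in claims if claim.get("source"))
    reversible = sum(1 for c in changes if c.get("rollback"))
    return {
        "changes_with_reason_and_evidence": _pct(explained, len(changes)),
        "claims_with_source_link": _pct(linked, len(claims)),
        "changes_with_rollback_plan": _pct(reversible, len(changes)),
    }
```

Note that the second number is measured per claim, not per change, because one edit can carry several claims.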

Common Failure Modes And How to Avoid Them

Failure Mode 1: Optimizing the Wrong Metric

Congrats, your CTR is up 23 percent. Too bad revenue is flat. Avoid optimizing what is easy at the expense of what matters.

- Fix: link optimization to true business outcomes, even if results are delayed.

Failure Mode 2: Chasing Short Windows

Performance volatility over a day or two is mostly noise.

- Fix: require a minimum sample size and check confidence intervals before making changes.
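
One way to make that fix concrete is a gate that compares two creatives only when both have enough impressions and their confidence intervals do not overlap. The sketch below uses the Wilson score interval for each click-through rate. The function names are hypothetical, and the `min_trials` default of 1,000 is an arbitrary placeholder, not a recommendation.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple:
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if trials == 0:
        return (0.0, 1.0)
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return (center - half, center + half)


def enough_evidence(a_clicks: int, a_impressions: int,
                    b_clicks: int, b_impressions: int,
                    min_trials: int = 1000) -> bool:
    """True only if both samples are large enough and the intervals do not overlap."""
    if min(a_impressions, b_impressions) < min_trials:
        return False
    a_low, a_high = wilson_interval(a_clicks, a_impressions)
    b_low, b_high = wilson_interval(b_clicks, b_impressions)
    return a_low > b_high or b_low > a_high
```

Requiring non-overlapping intervals is deliberately conservative: it will sometimes hold back a real difference, which is the right failure direction for an automated loop.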

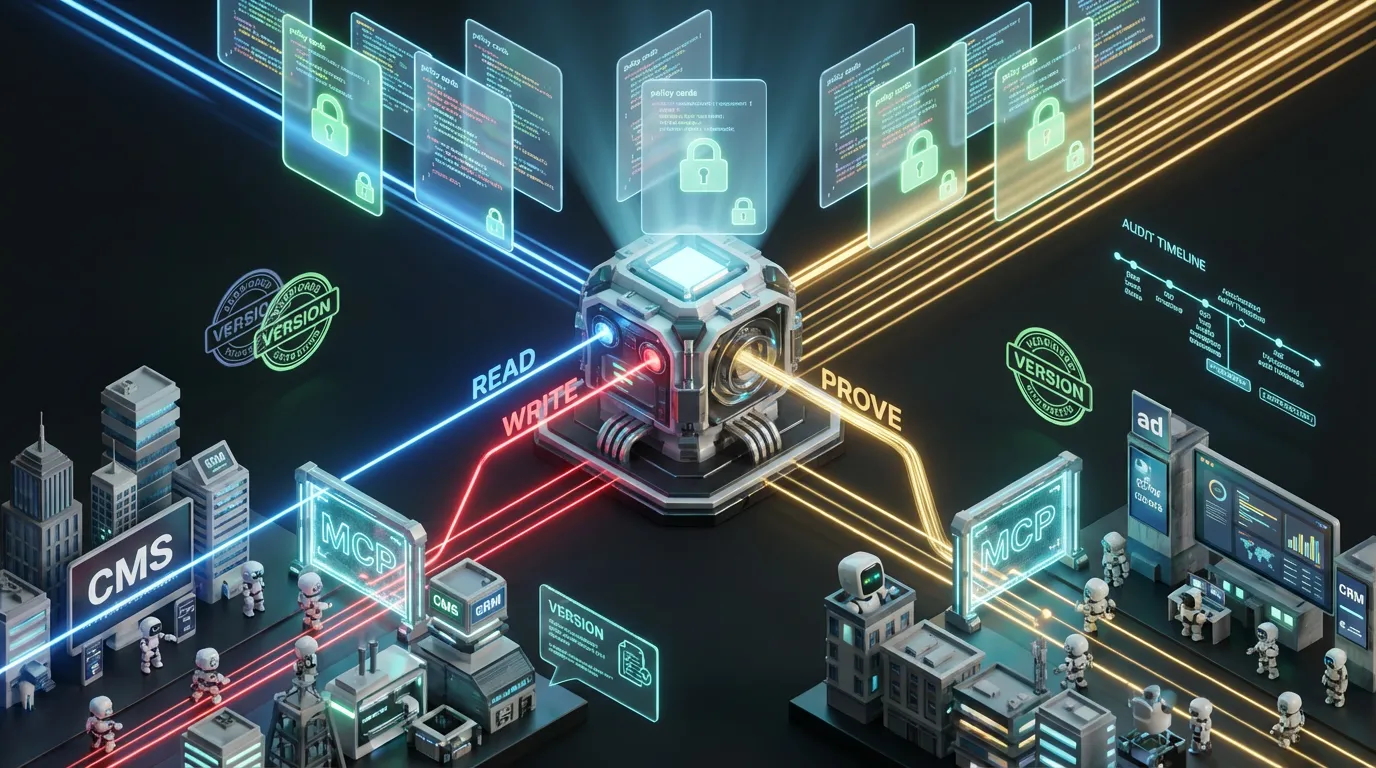

Failure Mode 3: No Policy Layer

If your AI can improve any asset, it will. Including the ones with legal or compliance flags.

- Fix: set explicit read, write, and proof-of-compliance boundaries for each workflow.
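
A policy layer can start as plain deterministic checks that run before anything ships. In this sketch the protected asset types and the two patterns are made-up examples. A real list would come from your legal and compliance teams, and `policy_violations` is a hypothetical name.

```python
import re

# Assets automation may read but never write (illustrative list).
PROTECTED_ASSET_TYPES = {"pricing_table", "legal_disclaimer"}

# Topics that must never be auto-edited (illustrative patterns).
RESTRICTED_PATTERNS = {
    "guarantee": re.compile(r"\bguarantee[ds]?\b", re.IGNORECASE),
    "pricing": re.compile(r"[$€£]\s?\d"),
}


def policy_violations(asset_type: str, new_text: str) -> list:
    """Return human-readable violations; an empty list means the edit may proceed."""
    violations = []
    if asset_type in PROTECTED_ASSET_TYPES:
        violations.append(f"asset type '{asset_type}' is read-only for automation")
    for topic, pattern in RESTRICTED_PATTERNS.items():
        if pattern.search(new_text):
            violations.append(f"text touches restricted topic: {topic}")
    return violations
```

Because the checks are deterministic, the same input always produces the same verdict, which is exactly what you want to show an auditor.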

Failure Mode 4: No Abstention Behavior

If the evidence is weak, the system should admit it, or you will be explaining mistakes to Compliance.

- Fix: build abstention and escalation paths into every loop.
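
Abstention can be as simple as a gate in front of every proposal. The thresholds, the `urgent` flag, and the rule that weak evidence on an urgent issue goes to a human are all assumptions in this sketch, not a prescribed policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    action: str   # "proceed", "abstain", or "escalate"
    reason: str


def decide(evidence_items: int, confidence: float, urgent: bool,
           min_evidence: int = 2, min_confidence: float = 0.8) -> Decision:
    """Proceed only on strong evidence; otherwise abstain, or escalate if urgent."""
    weak = evidence_items < min_evidence or confidence < min_confidence
    if not weak:
        return Decision("proceed", "evidence meets thresholds")
    reason = (
        f"weak evidence: {evidence_items} item(s), confidence {confidence:.2f}"
    )
    if urgent:
        # Assumption: urgent issues with weak evidence go to a human.
        return Decision("escalate", reason)
    return Decision("abstain", reason)
```

The `reason` string matters as much as the action: an abstention with no explanation is just a silent failure with better manners.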

The COEY Take: Explainable Loops Are the Next Marketing Ops Moat

The future is not “AI writes everything while you nap on a beach.” It is marketing management that operates like software engineering: continuous deployment, automated QA, and systemic governance.

Explainable optimization is what gets you there. You move faster but retain control. You iterate visibly, not in a black box. And you replace “trust me” with traceable reasoning for every meaningful change.

If you want to automate at scale, stop asking whether your AI can generate. Start demanding that it can justify.