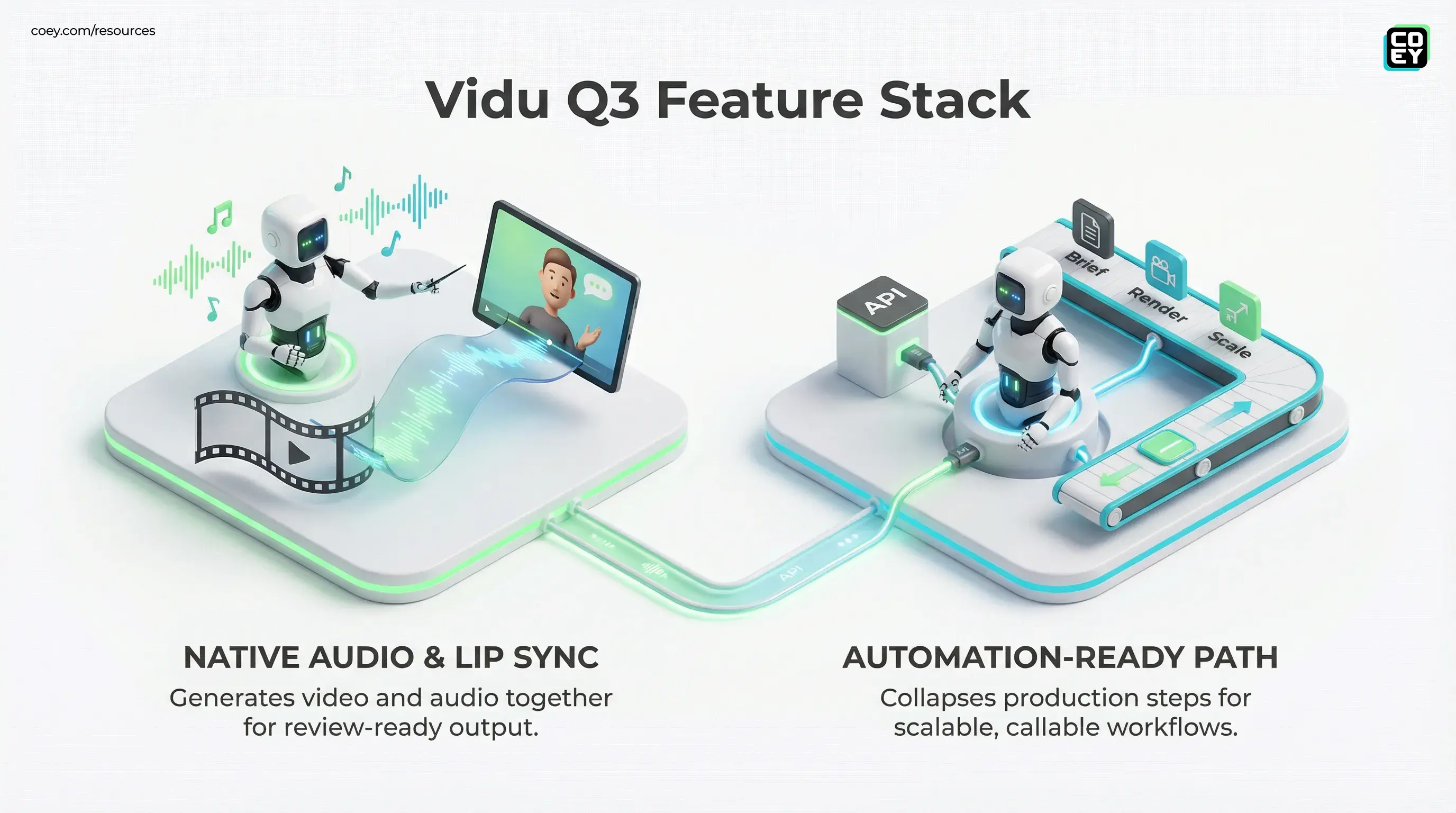

Vidu Q3 Makes “One-Pass” AI Video Real: Native Sound, Lip Sync, and an Automation-Ready Path

Vidu Q3 Makes “One-Pass” AI Video Real: Native Sound, Lip Sync, and an Automation-Ready Path

February 2, 2026

ShengShu-AI’s newest Vidu model, Vidu Q3, is a clear signal that text-to-video is graduating from “cool clip generator” into creative infrastructure you can actually wire into a production system. The headline feature is simple: it generates video and audio together, in the same run, with the sound designed to match the scene rather than pasted on after the fact. Start with the product hub at vidu.com.

If you’ve been building short-form at scale, you already know the pain: video generation in one tool, voice in another, SFX or music in a third, then the “why is the timing off” edit spiral in a fourth. Vidu Q3’s bet is that a lot of that glue work can disappear. Not because humans stop caring about taste, but because the machine finally outputs something reviewable on the first pass.

Audio-native generation is not a feature. It’s a workflow shift: fewer handoffs, fewer sync failures, and fewer human hours spent on mechanical assembly instead of creative direction.

What shipped (and what changed)

Vidu Q3 is positioned as a next-generation generative video model with a practical sweet spot for modern content teams: short, high-impact clips built for ads, social, and concepting. The most repeated capability claims across release chatter and third-party access pages are:

- Up to ~16 seconds per generation.

- 1080p output (Full HD).

- Native audio generation: dialogue or voice, ambience, sound effects, and music generated alongside visuals.

- Lip sync for speaking characters (shown in official and partner demos).

- Multi-shot coherence (presented as multi-shot generation with shot transitions, though results can vary by prompt and scene complexity).

The real story isn’t any single bullet. It’s that Vidu Q3 collapses multiple production steps into one atomic render. That changes what “iteration” looks like. Instead of shipping drafts that need post-production just to be legible, you can review an audiovisual cut immediately, then adjust prompt, regenerate, and keep moving.

Audio-native is the big unlock

Creators have been duct-taping audio onto AI video since day one. It works, but it’s brittle. The moment you change the timing of a scene, everything breaks: VO doesn’t line up, music hits the wrong beats, SFX are late, and your editor becomes an alignment tool.

Vidu Q3’s approach, generating sound as part of the same interpretive pass, has two implications that matter for real teams:

1) You get “review-ready” outputs faster

When audio matches motion and pacing from the start, stakeholders can judge the thing you actually care about: does it land? Not “could it land after two hours of cleanup.”

2) Regeneration becomes real creative control

With stitched workflows, iteration is expensive because every regen triggers downstream rework. With joint generation, iteration is closer to creative versioning: tweak the prompt, re-render, review, repeat.

In high-throughput marketing, the bottleneck isn’t generating assets. It’s turning assets into publishable cuts. Audio-native generation attacks the bottleneck directly.

Automation potential: can you plug it in?

This is where the hype usually dies. Plenty of tools demo well and then trap you inside a web UI with no reliable way to integrate into your stack.

Vidu is trending the other direction. There’s a clear product front door (vidu.com) and strong signals that programmatic access is part of the distribution strategy: as of late January 2026, Vidu Q3 is available via multiple third-party inference and API platforms (availability and feature flags can vary by provider). Some teams are also accessing “Vidu Q3” via Media.io’s Vidu Q3 page, which is less “official lab docs” and more “this is already being packaged for workflows.”

One more important reality check: public-facing API documentation for brand-new models is often partially gated (dashboards, keys, quotas, terms). That’s normal. What matters for executives is whether the pattern is present:

| Question | What Vidu Q3 signals | Ops implication |

|---|---|---|

| Can we automate generation? | Yes, via partners (third-party API ecosystem is live) | Batch variants, scheduled rendering, pipeline triggers |

| Can we integrate into existing tools? | Emerging (partners and resellers) | Faster pilots without waiting on deep native connectors |

| Is it production-ready? | Depends on governance plus QC | Needs review gates, logging, and brand constraints |

Where this is actually ready today

Let’s separate “looks incredible in a demo thread” from “can ship inside a real org.”

High-confidence use cases

- Paid social iteration: rapid hook testing, offer variants, CTA swaps, seasonal spins.

- Creative concepting: animatics, rough storyboards, pitch visuals with matching sonic mood.

- UGC-style scripting without UGC logistics: quick character-driven spots when you need volume more than perfection.

- Localization drafts: early-pass localized cuts where humans still approve final voice and claims.

“Proceed like an adult” use cases

- Dialogue-heavy brand spokesperson content: lip sync helps, but brand trust is fragile, humans must review.

- Regulated categories: finance and health claims and disclosures need policy gates, not vibes.

- Anything requiring exact product fidelity: AI video still drifts on logos, UI, and physical product details.

Workflow-ready doesn’t mean hands-off. It means the machine produces outputs that fit into a repeatable review-and-publish system.

What to watch next: the boring stuff

Vidu Q3’s capabilities are flashy, but adoption will be decided by the unsexy layer: control, consistency, and governance.

- Control surface: Can teams reliably specify camera motion, pacing, dialogue timing, and sonic mood without prompt wizardry?

- Repeatability: Can you produce brand-consistent variants, or does every render feel like a new universe?

- Automation hooks: Stable API endpoints, predictable pricing, quotas that match real volume, and versioning you can depend on (often via partners today).

- Commercial readiness: Clear terms, usage rights, and a path to safely deploy at scale (especially if audio includes vocals or dialogue).

If those pieces solidify, Vidu Q3 isn’t just “another video model.” It becomes a machine collaborator you can plug into content systems: briefs in, variations out, humans directing taste and strategy while the machine handles the rendering grind.

Bottom line

Vidu Q3 lands at the intersection creators actually care about: quality plus speed plus fewer handoffs. Native audio pushes AI video closer to “single-pass deliverables,” which is the threshold where automation starts compounding instead of creating more cleanup work.

For teams scaling creative output, the question isn’t whether Vidu Q3 can make impressive clips. It’s whether you can turn it into a repeatable pipeline component, callable, governable, and fast enough to keep humans in the flow. The direction is right. Now the pressure test is operational: API maturity (often via third-party platforms first), controls, and whether outputs hold up when you’re generating 50 variants a day instead of one hero demo.