ByteDance’s Seedance 2.0: Cinematic AI Video Goes Multimodal (and Hollywood Immediately Hits “Report”)

February 22, 2026

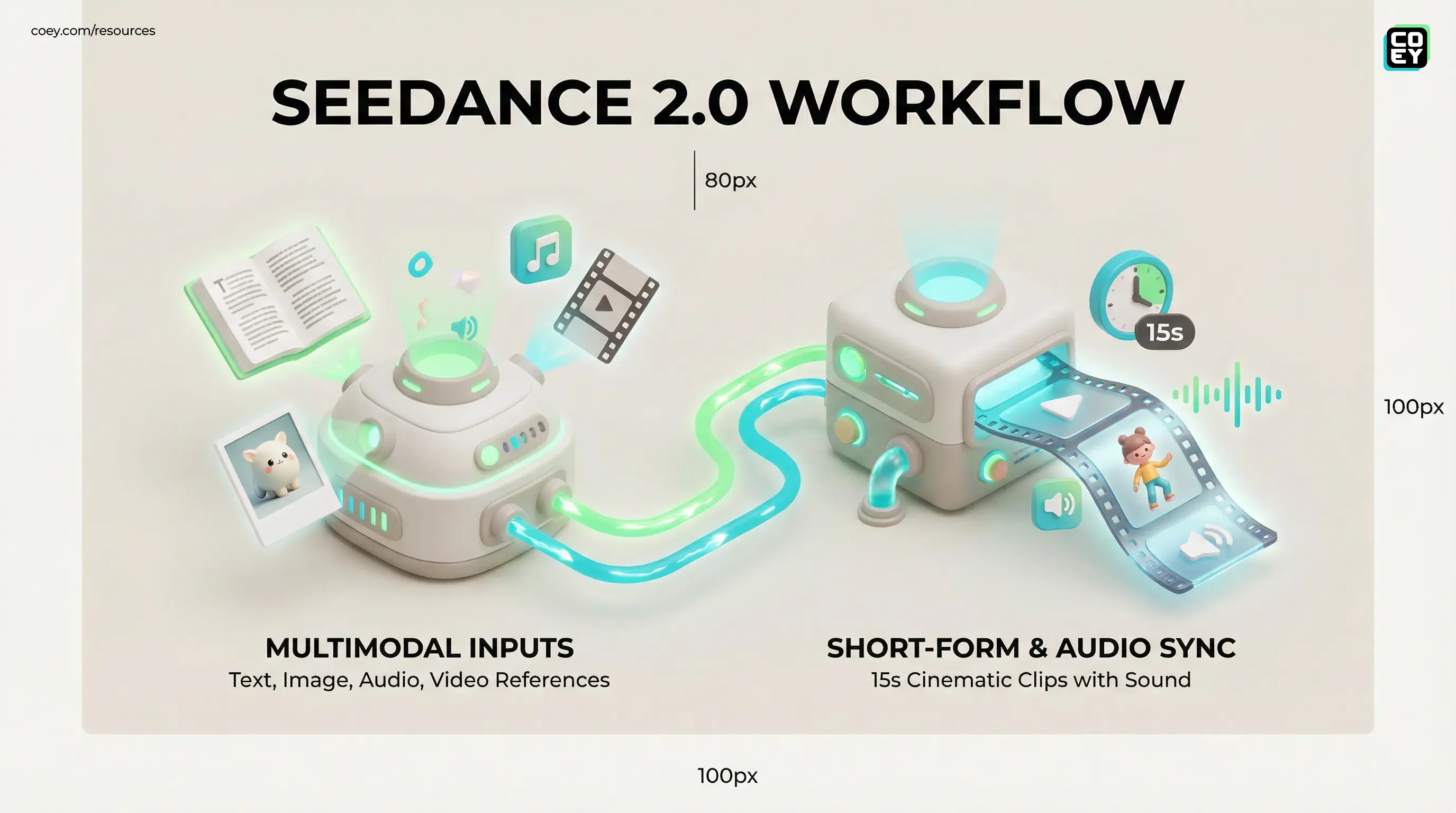

ByteDance just pushed generative video into a new phase with Seedance 2.0: a multimodal model that can take text, images, audio, and video references and spit out short, high-fidelity clips with synchronized sound. The outputs are impressive. The distribution strategy is even more impressive. And the legal fallout is also impressive, just in a different, cease-and-desist kind of way.

Seedance 2.0 is ByteDance’s latest flagship generative video system under its “Seed” research umbrella. It’s positioned as a cinematic audio-video generation model that supports multimodal conditioning, meaning it doesn’t just do “text-to-video” and hope for the best. You can guide it with reference imagery, reference video, and audio to shape pacing, vibe, and (in the best case) continuity.

What Seedance 2.0 actually is

ByteDance’s own positioning leans into “director-style control,” and from the demos circulating, the biggest qualitative jump isn’t raw sharpness. It’s that Seedance feels more like a system designed for production beats: quick cuts, stable motion, consistent environments, and audio that doesn’t feel stapled on in post.

Multimodal video isn’t a flex feature. It’s an operations feature. It reduces the “briefing tax” teams pay when they’re forced to describe visuals and timing in words alone.

What’s new vs. last-gen video models

The market is crowded with strong video generators, so the only way a new entrant matters is if it changes workflow math. Seedance 2.0’s most meaningful upgrades land in three buckets:

1) Multimodal control that’s actually usable

Seedance can accept multiple input types in one job: text plus reference assets (images and video) plus audio. For creators and marketers, this is the difference between “roll the dice” and “steer the ship.” If you’ve ever tried to keep a product, character, or style consistent across multiple variants, you already know why this matters.

2) Short-form output that matches real formats

Seedance 2.0 is optimized for short clips, often in the ~15-second range, which is exactly where modern marketing lives: Reels, Shorts, TikTok ads, UGC cutaways, punchy transitions, and fast b-roll. Nobody running paid social needs a 4-minute AI film. They need 12 clips by tomorrow morning.

3) Native audio-video synchronization

Seedance is being discussed as an audio-video generator, not just silent visuals. That matters because audio is the hidden time-sink in “AI video” workflows. If the model can generate or align to usable sound in the same pass, it compresses the pipeline dramatically.

Where this is landing: ByteDance’s distribution cheat code

ByteDance isn’t treating Seedance 2.0 like a standalone science project. It’s being pushed through creator-native surfaces, most notably within the CapCut ecosystem. If you want the COEY breakdown of why that distribution move matters, see “Seedance 2.0 Lands Inside CapCut, and That’s the Real Flex.”

Because here’s the uncomfortable truth: standalone AI video apps win hype cycles; embedded generation wins budgets.

Workflow reality: what teams will do with it first

Seedance 2.0’s early high-confidence use cases are the unglamorous ones that ship:

- AI b-roll to fill gaps: scene setters, cutaways, and “we need one more visual” moments.

- Variant factories for paid social: same offer, different environments, different pacing, different moods built for iterative testing.

- UGC augmentation: keep the human “truth anchor” (face-to-camera) and use AI shots to multiply context around it.

- Pre-viz for campaigns: fast motion storyboards to align stakeholders before spending real production budget.
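The “variant factory” pattern is mostly combinatorics: hold the offer and the reference assets fixed, then sweep the creative dimensions you want to test. Here is a minimal Python sketch, assuming a hypothetical `VariantJob` record that bundles a text prompt with reference image, video, and audio paths. The field names, file names, and prompt template are illustrative only, not Seedance’s actual request schema.

```python
from __future__ import annotations

from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class VariantJob:
    """Hypothetical multimodal job spec (NOT Seedance's real request schema)."""
    prompt: str
    reference_images: tuple[str, ...]
    reference_video: str | None
    audio: str | None
    duration_s: int = 15  # short-form target, matching the ~15-second range


def build_variants(offer, environments, pacings, moods,
                   reference_images, reference_video=None, audio=None):
    """Cross every environment x pacing x mood into one job per combination,
    reusing the same reference assets so the product stays consistent."""
    jobs = []
    for env, pace, mood in product(environments, pacings, moods):
        prompt = f"{offer}. Setting: {env}. Pacing: {pace}. Mood: {mood}."
        jobs.append(VariantJob(prompt, tuple(reference_images),
                               reference_video, audio))
    return jobs


jobs = build_variants(
    offer="Product hero shot of the new water bottle",
    environments=["beach at sunrise", "city gym", "mountain trail"],
    pacings=["fast cuts", "slow push-in"],
    moods=["energetic", "calm"],
    reference_images=["bottle_front.png", "bottle_logo.png"],
    audio="brand_sting.wav",
)
print(len(jobs))  # 12
print(jobs[0].prompt)
# Product hero shot of the new water bottle. Setting: beach at sunrise. Pacing: fast cuts. Mood: energetic.
```

Three environments × two pacings × two moods yields 12 jobs. Keeping the reference images and audio identical across every job is what lets the variants differ only along the dimensions you are actually testing.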

API availability: can you automate Seedance 2.0?

This is the part that separates “cool tool” from “creative infrastructure.” There are two very different access stories happening at once:

UI access: yes, but not necessarily universal yet

In-product access (via ByteDance creator tools) is where Seedance looks most real today, but it’s still rolling out in phases. If your team can generate inside an editor and immediately cut, caption, and export, you’ve already shaved days off your production cycle.

Developer access: complicated, and currently volatile

Seedance 2.0 has been presented through partners, including fal.ai’s Seedance 2.0 page, which frames it as something you could integrate using SDKs and serverless inference.

But here’s the key reality check: third-party availability can shift quickly. If you’re planning automation around Seedance, treat API readiness as “watch closely,” not “bet the quarter on it.”

| Question execs ask | What’s true today | Implication |
|---|---|---|
| Can my team use it now? | Yes (UI-first, phased rollout) | Immediate workflow acceleration for humans shipping content |
| Can we automate or batch it? | Not reliably first-party; third-party availability is in flux | Automation at scale depends on stable developer endpoints |
| Is it production-ready? | Yes for short-form, with guardrails | Great for variants and b-roll; still needs approvals for brand safety |

Rule of thumb: If it’s callable via API, it’s composable. If it’s composable, it can become an always-on collaborator in your stack.
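Given how volatile third-party access is, one way to stay composable is to keep generation behind a thin interface so a backend can be swapped, or can fall back to a human-run UI queue, without rewriting the pipeline. Here is a minimal Python sketch under that assumption. `VideoBackend`, `UnavailableError`, and both stub backends are hypothetical placeholders, not fal.ai’s or ByteDance’s actual SDKs.

```python
from __future__ import annotations

from typing import Protocol


class UnavailableError(Exception):
    """Raised by a backend when its endpoint is down or access was withdrawn."""


class VideoBackend(Protocol):
    """Hypothetical interface any generation backend must satisfy."""
    name: str

    def generate(self, prompt: str) -> str:
        """Return an identifier or URL for the generated clip."""
        ...


class StubPartnerBackend:
    """Hypothetical third-party endpoint that is currently unavailable."""
    name = "partner-api"

    def generate(self, prompt: str) -> str:
        raise UnavailableError(f"{self.name} is not accepting jobs")


class StubQueueBackend:
    """Hypothetical fallback: queue the job for a human to run in the UI."""
    name = "manual-queue"

    def __init__(self) -> None:
        self.queued: list[str] = []

    def generate(self, prompt: str) -> str:
        self.queued.append(prompt)
        return f"queued:{len(self.queued)}"


def generate_with_fallback(prompt: str,
                           backends: list[VideoBackend]) -> tuple[str, str]:
    """Try each backend in order; return (backend name, result) from the
    first one that succeeds, or raise if every backend is unavailable."""
    errors = []
    for backend in backends:
        try:
            return backend.name, backend.generate(prompt)
        except UnavailableError as exc:
            errors.append(str(exc))
    raise RuntimeError("all backends failed: " + "; ".join(errors))


queue = StubQueueBackend()
result = generate_with_fallback("beach b-roll, 15s", [StubPartnerBackend(), queue])
print(result)  # ('manual-queue', 'queued:1')
```

The design choice is that the rest of the pipeline only ever calls `generate_with_fallback`, so adding or dropping a provider changes one list, not every workflow that depends on it.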

The messier headline: IP, likeness, and the “too real” problem

Seedance 2.0 didn’t just trigger creator excitement. It triggered institutional panic. Major entertainment stakeholders have publicly pushed back, arguing that the model enables outputs that replicate protected characters, scenes, and styles.

Axios reported that the Motion Picture Association sent a cease-and-desist letter to ByteDance over Seedance-related IP concerns (Axios coverage). AP News also captured broader Hollywood criticism and the growing fight over copyright and likeness rights in AI video (AP News report).

For marketers, the takeaway isn’t “never use it.” It’s “don’t be sloppy.” The more realistic the model gets, the more your workflow needs grown-up controls:

- No protected IP prompts: don’t generate “looks like” content and pretend that’s a strategy.

- No celebrity or talent likeness without rights: if your team can generate it, your legal exposure can scale too.

- Clear approvals: human review gates aren’t optional when realism rises.
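The three controls above can be enforced in code before anything ships: screen prompts against a rights blocklist before generation, and refuse to publish any clip without a recorded human approval. Here is a minimal Python sketch. The blocklist terms, the `Clip` record, and the function names are hypothetical; a real blocklist would be maintained with your legal team rather than hard-coded, and substring matching like this is a coarse first filter, not a substitute for human review.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical examples only; maintain the real lists with legal/brand teams.
BLOCKED_TERMS = {"in the style of", "looks like", "lookalike"}
UNLICENSED_TALENT = {"famous actor x"}  # placeholder for names you lack rights to


def screen_prompt(prompt: str) -> list[str]:
    """Return the blocked terms found in a prompt (empty list means proceed)."""
    text = prompt.lower()
    return sorted(t for t in BLOCKED_TERMS | UNLICENSED_TALENT if t in text)


@dataclass
class Clip:
    prompt: str
    approved_by: str | None = None  # set only by a human reviewer


def publish(clip: Clip) -> str:
    """Refuse to publish unless the prompt is clean and a human signed off."""
    hits = screen_prompt(clip.prompt)
    if hits:
        raise ValueError(f"blocked terms in prompt: {hits}")
    if clip.approved_by is None:
        raise PermissionError("clip has no human approval on record")
    return f"published (approved by {clip.approved_by})"


print(screen_prompt("A hero shot that looks like a famous movie scene"))
# ['looks like']
print(publish(Clip("Water bottle on a beach at sunrise", approved_by="brand-lead")))
# published (approved by brand-lead)
```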

What’s hype vs. what’s operational

Seedance 2.0 is not “the end of production.” It’s a serious compression of the parts of production that are repetitive, time-consuming, and usually not where human creativity is most valuable.

Operational (you can bank on this)

- Faster variant generation for paid social and short-form campaigns

- Lower stock-footage dependency for b-roll and transitions

- More shots on goal without scheduling shoots for every small refresh

Still spicy (plan guardrails)

- Perfect brand fidelity (logos, packaging details, on-screen text) still needs human QC

- Fully automated pipelines are limited until stable APIs and governance hooks are widely available

- Rights and compliance review becomes a bigger operational burden as realism improves

Bottom line

Seedance 2.0 is a meaningful leap because it’s multimodal, short-form-native, and increasingly editor-adjacent, which is how generative video becomes normal work instead of a lab demo. The automation upside is obvious, especially if developer access stabilizes, but the near-term reality is UI-led workflow acceleration with a growing need for rights discipline.

If your team’s goal is scaling creativity through human plus machine collaboration, Seedance 2.0 fits the winning pattern: humans set intent and taste; machines manufacture breadth; and the organization builds the guardrails so speed doesn’t turn into brand-risk roulette.