Utopai’s Pai Wants to Fix AI Video’s Worst Habit: Forgetting Who It’s Filming

March 6, 2026

Utopai Studios just put a flag in the ground with Pai, a generative video model built for one thing most text-to-video tools still struggle with: narrative continuity. Not one pretty clip. Not a vibe. Actual story, with the same characters, the same world, and the same visual language surviving across multiple shots.

If you’ve ever tried to build a sequence with current-gen video tools, you know the pain: the hero’s jacket changes, the mascot’s face morphs, the office turns into a spaceship, and suddenly your campaign series looks like five unrelated dreams someone had after eating spicy ramen at 2am.

Pai’s bet is simple: if AI video is going to be useful for brands and studios, it has to behave like a production system, not a slot machine.

What Pai actually shipped

Pai is positioned as a cinematic storytelling engine that turns scripts (or structured prompts) into multi-shot sequences while keeping the cast and world stable. Utopai’s product page leans into story-level control and directability: more “direct the scene” than “pray the model understands the prompt.” You can see how they frame it here: utopaistudios.com/pai.

One specific claim getting attention in early coverage: Pai supports multi-shot sequences (reported as up to 16 shots), with outputs described as running up to about one minute at up to 4K resolution. That detail has been echoed by third-party reporting, including TestingCatalog’s write-up: testingcatalog.com/utopai-studios-opens-access-to-pai-long-form-ai-video-model-2.

The point isn’t the spec flex. The point is what the spec enables: structured sequences where continuity is treated as a first-class requirement, not a lucky accident.

Continuity is the real feature

Most video models can make something that looks cool for 6 to 12 seconds. The failure mode shows up the moment you try to make scene 2 match scene 1. That’s where “AI video” becomes “AI video plus two hours of manual patchwork.”

Pai’s positioning centers on maintaining persistent narrative objects (characters, settings, props, and style cues) across shots. Think of it as the model keeping a working memory of what matters visually, then reusing it as the sequence progresses.

| Continuity problem | What usually happens | What Pai is targeting |
|---|---|---|
| Character drift | Face, hair, and wardrobe morph between cuts | Same character identity across shots |
| World drift | Backgrounds change or reimagine themselves | Stable locations and set logic |
| Style drift | Lighting, color, and camera language reset with each clip | Consistent visual tone and framing |
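To make the “working memory” idea concrete, here is a minimal Python sketch of how a team might keep a shared story bible of characters, settings, and style cues. It reuses the same canonical descriptions in every shot prompt. This illustrates the concept under stated assumptions and is not Pai’s internal design. `NarrativeObject`, `Shot`, and `build_shot_prompts` are hypothetical names, and nothing here calls Utopai’s product.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class NarrativeObject:
    """A persistent story element reused across shots (hypothetical structure, not Pai's API)."""
    obj_id: str
    kind: str         # e.g. "character", "setting", "prop", "style"
    description: str  # canonical visual description, repeated verbatim in every shot


@dataclass
class Shot:
    action: str
    object_ids: list[str] = field(default_factory=list)


def build_shot_prompts(bible: dict[str, NarrativeObject], shots: list[Shot]) -> list[str]:
    """Attach the same canonical descriptions to every shot that uses an object.

    Raises KeyError when a shot references an object missing from the bible,
    which catches continuity gaps before anything is rendered.
    """
    prompts = []
    for number, shot in enumerate(shots, start=1):
        missing = [oid for oid in shot.object_ids if oid not in bible]
        if missing:
            raise KeyError(f"shot {number} references unknown objects: {missing}")
        context = "; ".join(
            f"{bible[oid].kind} {oid}: {bible[oid].description}" for oid in shot.object_ids
        )
        prompts.append(f"Shot {number}: {shot.action} [{context}]")
    return prompts


bible = {
    "mira": NarrativeObject("mira", "character", "red bomber jacket, short black hair"),
    "office": NarrativeObject("office", "setting", "open-plan office, late-afternoon light"),
}
shots = [Shot("Mira walks in", ["mira", "office"]), Shot("Close-up on Mira", ["mira"])]
for prompt in build_shot_prompts(bible, shots):
    print(prompt)
```

The design choice is that descriptions live in one place and are copied, never retyped, so a wardrobe change is a deliberate edit to the bible rather than an accident between cuts.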

For marketers, continuity is the difference between “we can generate content” and “we can generate a series.” And series are where brands actually build memory.

Why marketers should care (beyond the demo)

Long-form narrative might sound like a filmmaker problem, but continuity is a marketing ops problem too. The modern playbook is serial: recurring characters, repeatable formats, and campaign systems that evolve week to week.

If Pai delivers even a modest lift in shot-to-shot stability, that changes the economics of:

- Recurring mascot campaigns (your brand character doesn’t get a new face every episode)

- Explainer series with a repeat presenter (animated or stylized spokesperson)

- Episodic paid social where the hook format stays the same but the message changes

- Localization where you want the same visuals with region-specific overlays or VO

Continuity is throughput. The fewer fix-it-in-post hours you burn, the more variants you can ship, test, and refine.

Also: continuity is what turns AI video from a “creative experiment” into something your CMO will let touch the budget.
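The throughput argument is simple arithmetic. Here is a minimal sketch that assumes each finished variant costs a fixed amount of review time plus post-production fix time. The hour figures are illustrative placeholders, not measurements from Pai or any team.

```python
def variants_per_week(team_hours: float, review_hours: float, fix_hours: float) -> int:
    """Whole variants a team can finish when each costs review time plus post-production fixes."""
    hours_per_variant = review_hours + fix_hours
    if hours_per_variant <= 0:
        raise ValueError("each variant must cost some time")
    return int(team_hours // hours_per_variant)


# Illustrative placeholder numbers: 40 team hours per week, 1 hour of review per variant.
print(variants_per_week(40, 1, 3))  # heavy continuity fixes: 10 variants
print(variants_per_week(40, 1, 1))  # lighter fixes: 20 variants
```

In this toy model, cutting fix time from three hours to one halves the cost per variant and doubles output.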

Access is real, but it’s early

Pai is accessible via Utopai’s signup flow at utopaistudios.com/pai, with demos showcased at pai.utopai.film.

Early reactions (including chatter on X) are broadly aligned on two things:

- Consistency looks meaningfully improved compared to typical one-off clip generation.

- Uncanny artifacts still exist, because yes, it’s still AI video.

That combination is normal for this phase. The real question is whether the usable output rate is high enough that teams can plan around it, not just admire it.
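One way to think about “usable output rate” is per shot. The sketch below compares two approaches for a 16-shot sequence:

- rendering it in one pass, where every shot must land;
- regenerating only the shots that fail.

It assumes, as a simplification, that each shot is usable independently with the same probability. Real failures are often correlated. The 80% rate is an illustrative number, not a reported Pai figure.

```python
def full_sequence_pass_rate(per_shot_rate: float, shots: int) -> float:
    """Chance that every shot in a single render pass is usable (assumes independent shots)."""
    return per_shot_rate ** shots


def expected_shot_renders(per_shot_rate: float, shots: int) -> float:
    """Expected generations when each failed shot is retried on its own.

    Each shot needs 1 / per_shot_rate attempts on average (geometric distribution),
    so the sequence needs shots / per_shot_rate in total.
    """
    if not 0 < per_shot_rate <= 1:
        raise ValueError("per_shot_rate must be in (0, 1]")
    return shots / per_shot_rate


rate, shots = 0.8, 16  # illustrative rate; 16 shots matches the reported sequence cap
print(f"one-pass success: {full_sequence_pass_rate(rate, shots):.1%}")  # 2.8%
print(f"renders with per-shot retries: {expected_shot_renders(rate, shots):.1f}")  # 20.0
```

Under these assumptions, a one-pass 16-shot render comes out fully usable under 3% of the time. Per-shot retries average about 20 shot generations. That gap is why shot-level control matters as much as raw quality.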

Automation potential: the part that decides if this scales

Now the pragmatic part. In COEY’s world of scaling creativity through human-plus-machine collaboration, the biggest divider is not quality. It’s automation readiness.

Based on what’s publicly presented right now, Pai’s API and integration details are not clearly disclosed on the main product surfaces or in recent launch chatter. That means we should treat Pai as workflow-ready (human-driven) but not yet provably pipeline-ready (system-driven).

| Question | What we can say today | Why it matters |
|---|---|---|
| Can a team use it now? | Yes, via public sign-up | Great for pilots and creative validation |
| Can you automate it? | Not clearly documented publicly | Limits batch generation and scheduled variants |
| Is it ops-ready? | Too early to call | Needs job control, exports, metadata, and governance |

For non-technical leaders: “API available” is shorthand for “can we plug this into our stack?” That means Make or n8n, internal tools, creative request forms, Slack approvals, DAM storage, and scheduled rendering. Without that, you still get value, but you don’t get compounding value.
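To show what “plugging into the stack” means mechanically, here is a minimal submit-and-poll sketch. Utopai has not publicly documented a Pai API, so everything Pai-facing here is hypothetical:

- the `VideoJobClient` interface and its `submit`/`status` methods;
- the job states;
- the placeholder URL.

`FakeClient` is an in-memory stand-in so the orchestration logic runs without any real service. The same submit, poll, and hand-off steps are what a Make or n8n scenario would need to perform.

```python
import time
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass
class JobStatus:
    state: str                        # hypothetical states: "running", "succeeded", "failed"
    output_url: Optional[str] = None


class VideoJobClient(Protocol):
    """Hypothetical interface: Utopai has not publicly documented a Pai API."""

    def submit(self, script: str, metadata: dict[str, str]) -> str: ...

    def status(self, job_id: str) -> JobStatus: ...


class FakeClient:
    """In-memory stand-in that reports success after a few polls; no network calls."""

    def __init__(self, polls_until_done: int = 2) -> None:
        self._polls: dict[str, int] = {}
        self._polls_until_done = polls_until_done

    def submit(self, script: str, metadata: dict[str, str]) -> str:
        job_id = f"job-{len(self._polls) + 1}"
        self._polls[job_id] = 0
        return job_id

    def status(self, job_id: str) -> JobStatus:
        self._polls[job_id] += 1
        if self._polls[job_id] >= self._polls_until_done:
            return JobStatus("succeeded", f"https://example.com/renders/{job_id}.mp4")
        return JobStatus("running")


def run_job(client: VideoJobClient, script: str, metadata: dict[str, str],
            poll_seconds: float = 5.0, timeout_seconds: float = 600.0) -> dict[str, str]:
    """Submit a render, poll until it finishes, and return a record for approval or DAM storage."""
    job_id = client.submit(script, metadata)
    deadline = time.monotonic() + timeout_seconds
    while time.monotonic() < deadline:
        status = client.status(job_id)
        if status.state == "succeeded" and status.output_url:
            return {**metadata, "job_id": job_id, "output_url": status.output_url,
                    "approval": "pending"}
        if status.state == "failed":
            raise RuntimeError(f"{job_id} failed")
        time.sleep(poll_seconds)
    raise TimeoutError(f"{job_id} did not finish within {timeout_seconds} seconds")


record = run_job(FakeClient(), "Episode 1: Mira arrives at the office",
                 {"campaign": "spring-launch", "market": "DE"}, poll_seconds=0)
print(record["job_id"], record["approval"])  # job-1 pending
```

The returned record carries campaign metadata and a pending approval flag. That record is the hand-off point to a Slack approval step or a DAM upload.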

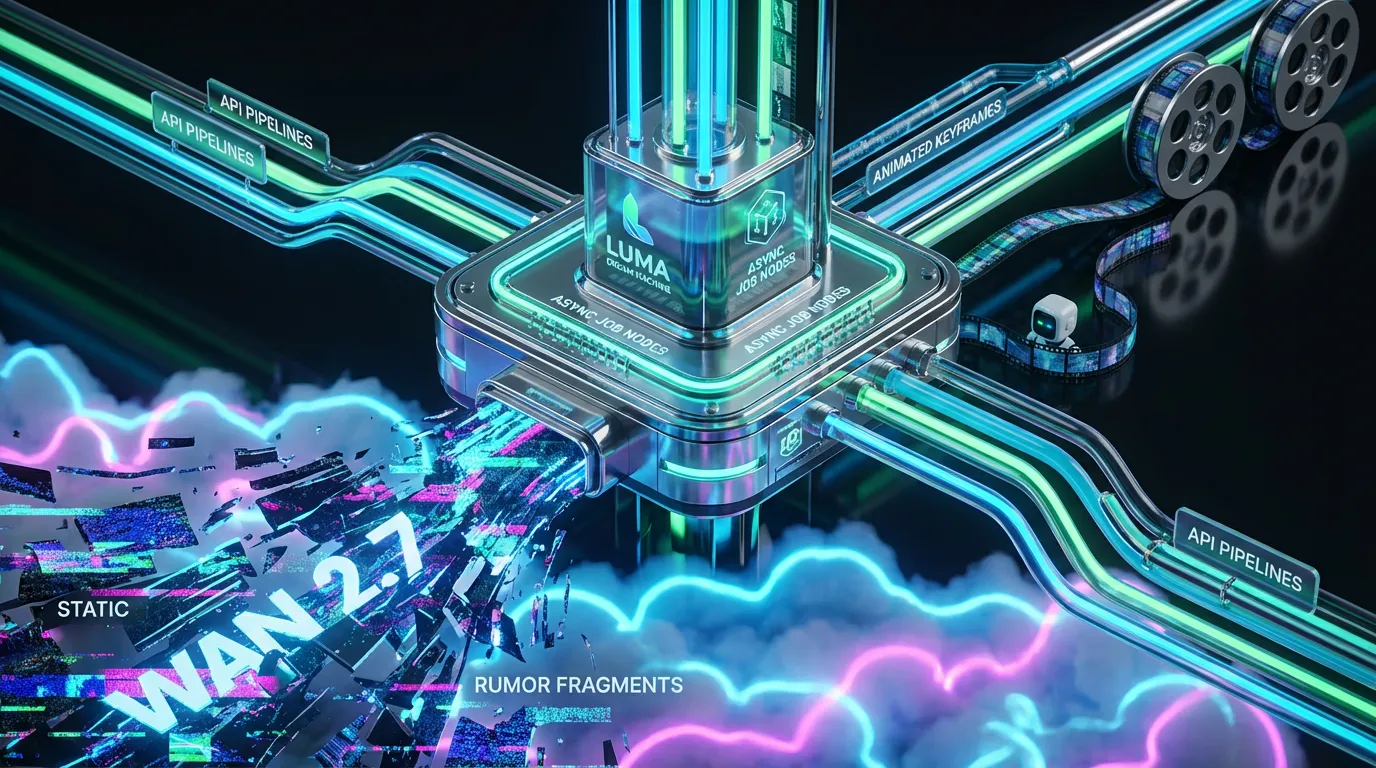

What “API-ready” would unlock for Pai

If Utopai exposes stable endpoints (or SDKs) over time, Pai becomes a lot more than a shiny interface. It becomes a callable production node that could support:

- Batch episodic rendering: generate multiple scenes per script, per market, per persona

- Automated revision cycles: update a scene direction, regenerate only affected shots, preserve continuity

- Variant trees: one narrative spine branches into 20 hook variations, which are routed to review before the winners ship
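The batch and revision ideas above can be sketched in a few lines. This is a hypothetical planning layer that would sit in front of any callable video API, not something Utopai ships. `expand_batch` fans one script out per scene, market, and persona. `shots_to_regenerate` flags only the shots whose direction changed. Diffing directions only decides what to re-render. Preserving continuity in those re-renders would still depend on the model honoring the same character and setting references.

```python
from itertools import product


def expand_batch(scenes: list[str], markets: list[str], personas: list[str]) -> list[dict[str, str]]:
    """Fan one script out into one render job per scene x market x persona (hypothetical job spec)."""
    return [
        {"scene": scene, "market": market, "persona": persona}
        for scene, market, persona in product(scenes, markets, personas)
    ]


def shots_to_regenerate(old_directions: list[str], new_directions: list[str]) -> list[int]:
    """Indices of shots whose direction changed; untouched shots keep their approved renders."""
    if len(old_directions) != len(new_directions):
        raise ValueError("adding or removing shots needs a full re-plan, not a selective regen")
    return [i for i, (old, new) in enumerate(zip(old_directions, new_directions)) if old != new]


jobs = expand_batch(["s1", "s2", "s3"], ["US", "DE"], ["founder", "student"])
print(len(jobs))  # 12

old = ["Mira enters", "Close-up: Mira smiles", "Logo card"]
new = ["Mira enters", "Close-up: Mira frowns", "Logo card"]
print(shots_to_regenerate(old, new))  # [1]
```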

If it’s not callable, it’s not composable.

And if it’s not composable, it won’t become infrastructure. It’ll be just another tab someone forgets to open.

Real world readiness: where Pai fits today

Pai looks best suited right now for teams who want stronger sequencing and are comfortable with a human-in-the-loop workflow while the product matures.

High-confidence uses right now

- Previz and pitch sequences: fast narrative drafts to align stakeholders

- Brand world prototyping: define a recurring character plus setting that can power a series

- Creative testing: consistent episode format where only the message changes

Use with adult supervision

- Final, brand-critical hero ads: until continuity and realism are proven to meet your bar

- Anything with strict product fidelity: packaging, UI, and on-screen text are still fragile in generative video

- Regulated categories: always keep approvals and compliance gates

None of that is a knock. It’s the normal reality of generative video in 2026: the winners will be the teams who treat it like draft acceleration plus system design, not like a magic replacement for production.

Bottom line

Pai is a meaningful release because it attacks the least glamorous, most expensive problem in AI video: continuity across shots. That’s the exact feature that makes narrative video usable for brands, not just impressive for timelines.

The near-term value is clear: faster multi-shot drafting with less character drift, which means less time doing continuity CPR in post. The open question, and the one that will decide whether Pai becomes true creative infrastructure, is whether Utopai follows up with API access, job orchestration, and integration hooks that let teams run narrative video the way modern marketing actually runs: in batches, in variants, and inside systems with approvals.