Grok-4.20 Beta Debuts as xAI’s New Reasoning Powerhouse

March 13, 2026

xAI just pushed Grok in a more “systems, not vibes” direction with Grok-4.20 Beta, now listed in the xAI model lineup and available via the developer platform at https://docs.x.ai/docs/models/. The headline is not that Grok got a little smarter. It is that xAI is increasingly packaging Grok as a callable reasoning component you can route work through, especially the parts of a workflow where other models tend to confidently faceplant.

This matters for creators, marketers, and execs for one reason: reasoning reliability is the bottleneck that decides whether AI stays a “draft helper” or becomes “workflow infrastructure.” Grok-4.20 Beta is xAI’s attempt to move Grok into the second category, without pretending it is flawless or magically autonomous.

If you can call it via API, you can automate it.

If you can only admire it in a UI, you are basically doing AI arts-and-crafts.

What actually shipped

Grok-4.20 Beta shows up as a reasoning-forward Grok model in xAI’s model catalog, and the surrounding platform story is built for workflow integration: tool or function calling and structured outputs are central to how xAI wants teams to use these models (start at docs.x.ai).

In practical terms, that is the difference between:

- “Here is a paragraph”

- “Here is a JSON object your automation can act on without crying.”
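To make that contrast concrete, here is a minimal Python sketch of what "act on without crying" could look like on the receiving end. The field names in `REQUIRED_FIELDS` and the `parse_plan` helper are hypothetical, invented for illustration; they are not part of any xAI schema.

```python
import json

# Hypothetical schema for a campaign plan; field names are illustrative only.
REQUIRED_FIELDS = {
    "channel": str,
    "budget_usd": (int, float),
    "start_date": str,
}


def parse_plan(raw: str) -> dict:
    """Parse a model reply as JSON and check it against the hypothetical schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        # A prose paragraph lands here: nothing downstream can act on it.
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            raise ValueError(f"missing field: {field}")
        if not isinstance(data[field], expected_type):
            raise ValueError(f"wrong type for field: {field}")
    return data
```

A reply that passes `parse_plan` can go straight to the next step in the workflow; one that fails can be retried or sent to a human instead of silently breaking the automation.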

xAI is also continuing the broader push that started with its API public beta announcement at https://x.ai/news/api/, treating Grok less like a destination app and more like an engine you plug into your stack.

The quiet but important implication

“Reasoning model” is not just a benchmark flex. It is a product positioning choice: Grok-4.20 Beta is meant for the moments in your pipeline where multi-step planning, constraint handling, or tool orchestration breaks weaker models.

That includes marketing work more than people like to admit.

Why this is a workflow upgrade (not a demo)

Most teams do not fail with AI because they cannot generate words. They fail because their workflow needs the model to do at least one of these reliably:

- follow rules across many steps

- keep context straight through long chains

- validate its own work

- choose between competing constraints

- call tools correctly (and not hallucinate tool outputs)

When those break, humans step back in, not because humans love editing, but because the system cannot be trusted end-to-end.

Grok-4.20 Beta is xAI basically saying: we are aiming Grok at the trust layer, not just the content layer.

Content is cheap now. Consistency is expensive.

Reasoning models are trying to make consistency cheaper.

API availability: can you automate it?

Yes. This is not a UI-only flex. Grok-4.20 Beta is available through xAI’s developer platform and API. The clean entry points are the docs homepage at https://docs.x.ai/docs/ and access management through the console at https://console.x.ai/home.

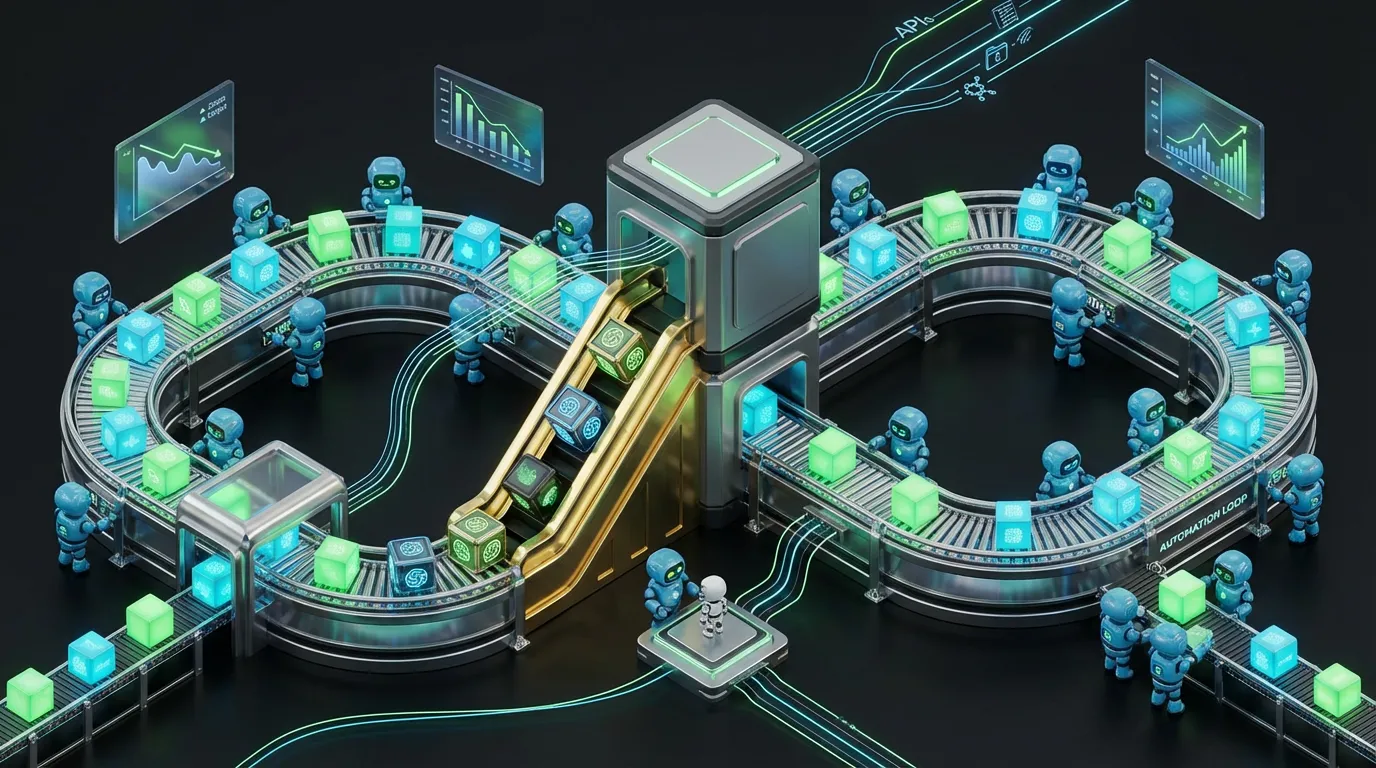

If you are non-technical, here is the translation: your team can wire this into automations the same way you would wire any other API service. Trigger it from a workflow tool, feed it data, get structured output back, pass the result downstream.

xAI has also been explicit that its API is designed to be familiar to teams already using an OpenAI-style SDK pattern, and it calls out API compatibility in the announcement post at https://x.ai/news/api/. That matters because adoption does not hinge on “is it good?” alone. It hinges on “can we trial this without rebuilding our world?”
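For a sense of how small that trial can be, here is a hedged Python sketch using only the standard library. Several things here are assumptions rather than confirmed details: the endpoint path and response shape are assumed to follow the OpenAI-style chat completions convention, `MODEL_ID` is a placeholder (check the model catalog for the real identifier), and `XAI_API_KEY` is just an environment variable name chosen for this example.

```python
import json
import os
import urllib.request

# Assumed OpenAI-style endpoint; confirm the exact path in the xAI docs.
API_URL = "https://api.x.ai/v1/chat/completions"
# Placeholder model id; look up the real identifier in the model catalog.
MODEL_ID = "grok-4.20-beta"


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat-style POST request."""
    payload = {
        "model": MODEL_ID,
        "messages": [
            {"role": "system", "content": "Reply with a single JSON object."},
            {"role": "user", "content": prompt},
        ],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the reply text (assumes an OpenAI-style response body)."""
    request = build_request(prompt, os.environ["XAI_API_KEY"])
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Splitting `build_request` from `ask` keeps the payload inspectable and testable without spending tokens, which is handy when a workflow tool like Make, n8n, or Zapier will eventually be the thing sending it.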

What “API-ready” really means for marketing ops

If you can call Grok-4.20 Beta via HTTP, you can:

- run it in Make, n8n, or Zapier via webhooks

- route tasks to it inside agent frameworks and internal orchestrators

- use it as a reasoning checkpoint inside existing content factories

Automation readiness snapshot

| Workflow question | Practical answer | What it unlocks |
|---|---|---|
| Can it plug into automations? | Yes, it is API-accessible via xAI | Triggerable, schedulable workflows |
| Can it return structured data? | Yes, structured outputs are part of the platform story | Less manual cleanup, more downstream actions |
| Does it support tool use? | Yes, tool or function calling is supported | Agents that do, not just say |

Where it is ready right now

This model is most useful when you treat it like a reasoning engine you call on purpose, not as the default model for everything.

High-leverage use cases (marketing plus exec ops)

- Campaign planning with constraints: channel mix, timing, budget rules, segment exclusions, offer logic, then outputting a structured plan your team can review and implement.

- Creative QA before publishing: flagging internal contradictions, missing disclaimers, claims that need sourcing, brand voice drift, or “this does not match the brief.”

- Reporting synthesis that does not break: combining metrics, commentary, and next actions into an executive-ready narrative without losing the thread halfway through.

- Agent routing plus decision points: using cheaper models for bulk generation, then calling Grok-4.20 Beta when the workflow hits a thinking step (validation, prioritization, conflict resolution).

The winning pattern is not “one model to rule them all.”

It is multi-model routing: cheap for volume, smart for decisions.
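As a rough illustration of that routing pattern, here is a minimal Python sketch. Both model names and the `Task` type are hypothetical placeholders, and the set of "thinking step" task kinds is borrowed from the examples above (validation, prioritization, conflict resolution); a real router would likely weigh cost, latency, and failure history too.

```python
from dataclasses import dataclass

# Hypothetical model identifiers, for illustration only.
CHEAP_MODEL = "bulk-drafting-model"
REASONING_MODEL = "grok-4.20-beta"

# Task kinds treated as decision points rather than volume work.
DECISION_TASKS = {"validation", "prioritization", "conflict_resolution"}


@dataclass(frozen=True)
class Task:
    kind: str      # e.g. "headline_variant" or "validation"
    payload: str   # the text or data the model should work on


def pick_model(task: Task) -> str:
    """Route decision steps to the reasoning model and everything else to the cheap one."""
    if task.kind in DECISION_TASKS:
        return REASONING_MODEL
    return CHEAP_MODEL
```

With this shape, fifty headline variants go to the cheap model and the single "which of these violates the brief?" check goes to the reasoning model.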

What to watch (because beta is beta)

“Beta” should trigger your adult supervision reflex. Reasoning models can still fail, sometimes in more interesting ways, because they will produce longer, more confident chains of logic.

Three pragmatic caveats

- Latency and cost may spike for deep reasoning. Stronger reasoning often means more compute. Plan to call it for decision nodes, not every headline variant.

- Tool calling needs guardrails. Function calling is powerful, but giving a model the ability to trigger actions without permissioning is how you end up with “automation incident” Slack threads.

- Long context is only useful if your inputs are clean. A huge context window does not fix messy inputs. If you shove unstructured chaos into the prompt, you will get structured-sounding chaos back.
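The guardrails caveat is the easiest one to sketch in code. Below is a minimal Python example of permissioned tool dispatch: an allowlist plus a human-approval flag for anything that triggers a real action. The tool names (`lookup_metrics`, `send_campaign`) and the `dispatch` helper are hypothetical, and this is one possible design, not how xAI's function calling enforces anything.

```python
from typing import Any, Callable, Dict, NamedTuple


class Tool(NamedTuple):
    run: Callable[..., Any]
    needs_approval: bool  # True for anything with side effects


def lookup_metrics(campaign_id: str) -> dict:
    """Hypothetical read-only tool; returns placeholder data."""
    return {"campaign_id": campaign_id, "clicks": 0}


def send_campaign(campaign_id: str) -> str:
    """Hypothetical tool with real-world side effects."""
    return f"sent {campaign_id}"


# The allowlist: a model-requested tool that is not here never runs.
TOOLS: Dict[str, Tool] = {
    "lookup_metrics": Tool(run=lookup_metrics, needs_approval=False),
    "send_campaign": Tool(run=send_campaign, needs_approval=True),
}


def dispatch(tools: Dict[str, Tool], name: str, args: Dict[str, Any],
             approved: bool = False) -> Any:
    """Run a model-requested tool call only if it is allowlisted and, where required, approved."""
    tool = tools.get(name)
    if tool is None:
        raise PermissionError(f"tool not on allowlist: {name}")
    if tool.needs_approval and not approved:
        raise PermissionError(f"tool requires human approval: {name}")
    return tool.run(**args)
```

Read-only lookups pass straight through, while anything that sends, publishes, or spends waits for a human to flip `approved`.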

What this means for scaling creativity

Grok-4.20 Beta fits a broader xAI pattern: turning Grok into programmable infrastructure, not just a chat experience.

COEY has been tracking this shift across recent Grok drops. For more context on how xAI is pushing Grok toward workflow automation, see this COEY post on Grok 4.20 Beta and the multi-agent workflow shift.

For teams scaling content and campaigns, the strategic shift is simple:

- Humans set intent (strategy, taste, brand judgment)

- Machines execute repeatable thinking work (planning, validation, synthesis, routing)

- Systems ship (with approvals, logs, and rollback paths)

If Grok-4.20 Beta delivers on reasoning consistency in real pipelines, not just benchmarks, it becomes a serious option for the automation middle layer where most teams still burn hours: planning, checking, and turning messy inputs into shippable structure.

Better generation is nice. Better reasoning is what lets you stop babysitting.