Google Flash Image Models: Scale Variants Cheaply

January 17, 2026

Google’s latest move in image generation is not another “look what the robot can paint” flex. It is a pricing and throughput play aimed at anyone who needs a lot of visuals: fast, decent, and automatable.

The cleanest way to track what is real is Google’s own documentation, which covers the Gemini image models and how to call them via API (Gemini API image generation documentation).

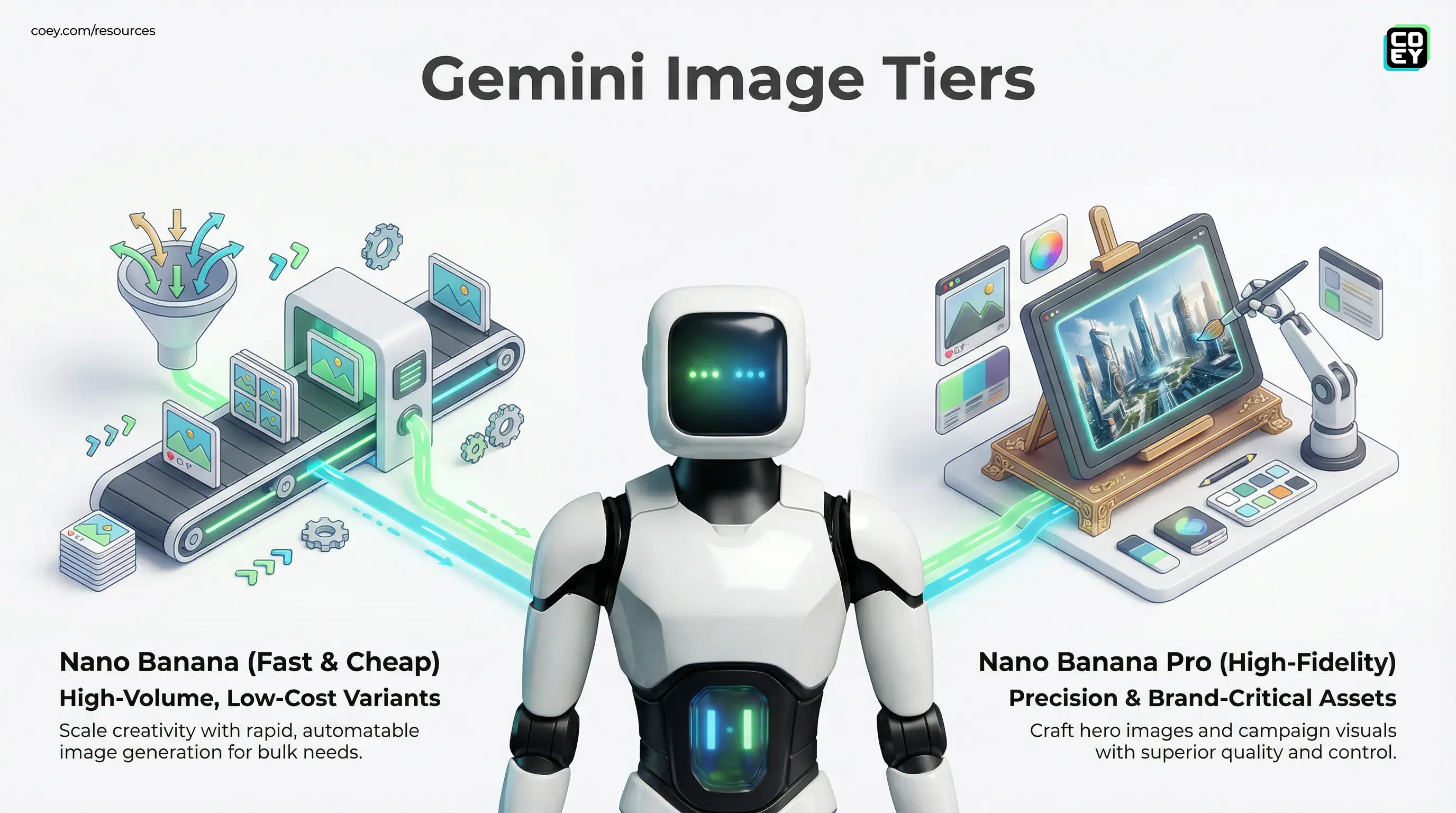

Also important: model naming online is currently messy. “Nano Banana 2 Flash” does not show up as a clearly documented, first-party model name in public sources we can validate. What is checkable is that Google exposes an image generation stack through the Gemini API and AI Studio, and that it offers distinct image generation options with different tradeoffs: faster, lower-cost choices for volume, and higher-fidelity options for precision and brand-critical work.

Translation for execs: this is Google trying to turn image generation into infrastructure. Not a creative party trick, an assembly line component.

What actually shipped (minus fan fiction)

Here is what we can confidently say based on public, checkable docs and announcements:

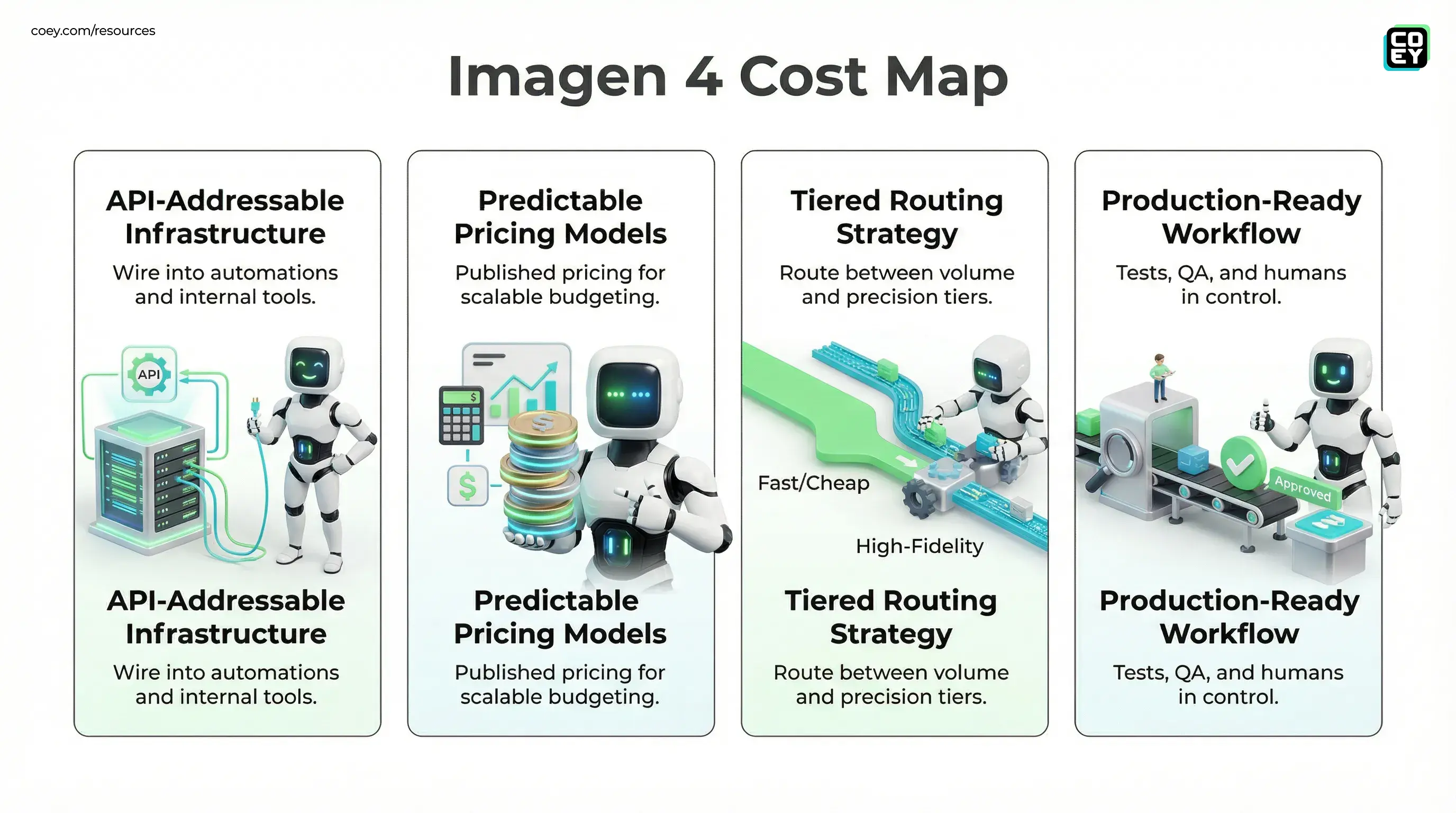

- Google supports API-addressable image generation through Gemini, not just in a consumer UI. That means you can wire it into automations, batch jobs, and internal tools (docs).

- Google AI Studio is the builder front door: prototype prompts and payloads there before you operationalize them in code or no-code automations. One example is Google’s Developers Blog announcement that Imagen 4 is available via the Gemini API and AI Studio (Google Developers Blog on AI Studio and Imagen 4).

- Pricing and model tiers are explicit. Google publishes pricing for Gemini API usage (Gemini API pricing).

What we cannot responsibly do: claim a specific, officially launched model called “Nano Banana 2 Flash” with defined specs, because we cannot validate that name as a first-party public model name in documentation right now. If your team saw that name in a UI or limited rollout, treat it like a codename or early label, not a stable procurement target.

Why “Flash” tiers matter to real teams

Creators and marketers do not lose time because they cannot generate an image. They lose time because they need:

- 40 variants for paid social, not 1 hero render

- localizations, aspect ratios, and CTA swaps

- creative testing at scale without ballooning cost

- speed fast enough to iterate in the review meeting, not after it

This is where “Flash” positioning becomes operationally meaningful. In practice, the “fast” image option is a routing target for:

- high volume generation

- lower unit cost per usable asset

- lower latency so workflows do not stall

Workflow reality: the creative team does not need one perfect image. They need a pipeline that produces ten acceptable options quickly, then lets humans pick winners.
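To make the "40 variants, not 1 hero render" point concrete, here is a minimal sketch of expanding one concept into a variant matrix before anything is sent to a model. The `VariantSpec` class, the `expand_variants` helper, and the example ratios, locales, and CTAs are all hypothetical names invented for illustration; they are not part of any Google API.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class VariantSpec:
    """One requested image variant (all fields are illustrative)."""
    concept: str
    aspect_ratio: str
    locale: str
    cta: str

    def prompt(self) -> str:
        # Plain string template; a real prompt would also carry brand constraints.
        return (
            f"{self.concept}. Aspect ratio {self.aspect_ratio}. "
            f"Locale {self.locale}. Call to action: '{self.cta}'."
        )


def expand_variants(concept, aspect_ratios, locales, ctas):
    """Return every combination of aspect ratio, locale, and CTA."""
    return [
        VariantSpec(concept, ratio, locale, cta)
        for ratio, locale, cta in product(aspect_ratios, locales, ctas)
    ]


variants = expand_variants(
    concept="Running shoe on a wet city street at dusk",
    aspect_ratios=["1:1", "9:16", "16:9", "4:5"],
    locales=["en-US", "de-DE", "ja-JP", "pt-BR", "fr-FR"],
    ctas=["Shop now", "Learn more"],
)
print(len(variants))  # 4 ratios x 5 locales x 2 CTAs = 40
```

Each spec then becomes one cheap generation request, and humans pick winners from the resulting grid.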

Automation readiness: can you plug it in?

Yes. This is the main point. Google’s image generation is not trapped in a consumer-only experience. If it is in the Gemini API, it is workflow material.

Where it is callable

- Gemini API: direct HTTP calls from your backend, scripts, or internal tools (documentation).

- Google AI Studio: prototype prompts, test outputs, then translate into repeatable API payloads (announcement).
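For a sense of what a "direct HTTP call" looks like, the sketch below builds (but does not send) a request using only the Python standard library. It assumes the `generateContent` REST route and `x-goog-api-key` header as we understand them from the public Gemini API docs; the model ID is a deliberate placeholder, and the response format is not covered here, so check the current documentation before relying on any of it. `build_image_request` is a hypothetical helper name.

```python
import json
import urllib.request

# Base URL as described in the public Gemini API docs at the time of writing;
# verify against the current documentation.
API_ROOT = "https://generativelanguage.googleapis.com/v1beta"


def build_image_request(prompt: str, model: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a generateContent request for an image model."""
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        url=f"{API_ROOT}/models/{model}:generateContent",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


req = build_image_request(
    prompt="Flat-lay product photo of a ceramic mug on linen",
    model="MODEL_ID_FROM_DOCS",  # placeholder, not a real model name
    api_key="YOUR_API_KEY",      # placeholder
)
print(req.get_method(), req.full_url)
# Sending would be: urllib.request.urlopen(req, timeout=60)
```

Keeping the model ID as a parameter rather than a constant is the point: the payload you prototype in AI Studio stays the same while the model behind it can change.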

What “API availability” means in plain English

API access is the difference between:

- Designers generate images in a UI (nice, limited, manual)

- Your stack generates images on demand (scalable, measurable, automatable)

If you can call an endpoint, you can:

- generate 300 SKU images overnight

- trigger creative refreshes when performance dips

- auto-produce thumbnails when a YouTube title changes

- pipe assets into a DAM, CMS, or ad ops workflow
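The overnight SKU run above can be sketched as a small batch runner. This is an illustration under assumptions, not a reference implementation: `run_batch` is a hypothetical helper, and the actual API call is injected as a `generate` callable so the example runs offline with a stub (`fake_generate`) standing in for the real model.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, Iterable, List, Tuple


def run_batch(
    jobs: Iterable[Tuple[str, str]],
    generate: Callable[[str], bytes],
    max_workers: int = 4,
) -> Tuple[Dict[str, bytes], List[Tuple[str, str]]]:
    """Generate one image per (sku, prompt) job; collect successes and failures."""
    results: Dict[str, bytes] = {}
    failures: List[Tuple[str, str]] = []

    def _one(job):
        sku, prompt = job
        try:
            return sku, generate(prompt), None
        except Exception as exc:  # one bad job must not sink the whole batch
            return sku, None, repr(exc)

    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        for sku, image, error in pool.map(_one, list(jobs)):
            if error is None:
                results[sku] = image
            else:
                failures.append((sku, error))
    return results, failures


def fake_generate(prompt: str) -> bytes:
    """Stand-in for a real API call so the sketch runs without network access."""
    if "broken" in prompt:
        raise RuntimeError("simulated API failure")
    return f"IMG:{prompt}".encode("utf-8")


jobs = [(f"SKU-{i:03d}", f"Studio shot of product {i}") for i in range(300)]
jobs.append(("SKU-BAD", "broken prompt"))
images, failed = run_batch(jobs, fake_generate)
print(len(images), len(failed))  # 300 1
```

The failure list is what makes this a workflow rather than a script: failed SKUs can be retried or handed to a human instead of silently disappearing.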

What is real today vs what is still hype

| Question | Pragmatic answer | What it means for teams |
|---|---|---|
| Can we automate image generation? | Yes, via Gemini API | Hook into Make, n8n, or Zapier via HTTP, or build internal services |
| Is “Nano Banana 2 Flash” a stable name? | Not verifiable as a first-party public model name | Do not bake the label into contracts; bake in a swappable routing layer |
| Is pricing predictable? | Published for Gemini API usage | Budgeting becomes possible, and you can enforce spend policies |
| Is this production ready? | Ready for production tests if your pipeline includes QA | Humans still approve, machines scale the variants |
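Because pricing is published, a spend policy can be a few lines of code rather than a spreadsheet. The sketch below is one way that could look; `SpendGuard` and `BudgetExceeded` are hypothetical names, and the per-image price is a made-up figure for illustration only, so substitute the real number from the Gemini API pricing page.

```python
from dataclasses import dataclass
from decimal import Decimal


class BudgetExceeded(Exception):
    """Raised when a batch would push spend past the cap."""


@dataclass
class SpendGuard:
    price_per_image: Decimal  # hypothetical figure; copy the real one from the pricing page
    daily_cap: Decimal
    spent: Decimal = Decimal("0")

    def estimate(self, image_count: int) -> Decimal:
        """Projected cost of a batch at the configured unit price."""
        return self.price_per_image * image_count

    def reserve(self, image_count: int) -> Decimal:
        """Record the batch cost, or refuse if it would exceed the daily cap."""
        cost = self.estimate(image_count)
        if self.spent + cost > self.daily_cap:
            raise BudgetExceeded(
                f"batch costs {cost}, only {self.daily_cap - self.spent} left today"
            )
        self.spent += cost
        return cost


guard = SpendGuard(price_per_image=Decimal("0.04"), daily_cap=Decimal("20.00"))
first = guard.reserve(300)  # 300 images at the assumed price fits under the cap
try:
    guard.reserve(300)      # a second identical batch would exceed it
    blocked = False
except BudgetExceeded:
    blocked = True
print(first, blocked)
```

`Decimal` is used instead of floats so that budget comparisons are exact.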

The tiering strategy teams are adopting

Even without obsessing over model names, the winning pattern is stable:

- Fast and cheap tier for bulk variants (for example, the faster image model option documented under Gemini image generation)

- Higher-fidelity tier for hero assets, brand campaigns, and “do not mess this up” moments (for example, higher-end image models like Imagen 4 Ultra)

- Routing logic that escalates only when needed
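The three-part pattern above can be sketched as a small router. Everything here is an assumption for illustration: the `MODEL_ALIASES` values are placeholders rather than real model IDs, `route` and `AssetRequest` are hypothetical names, and the QA check is whatever your pipeline uses (a human, a classifier, or both). The alias table is the swappable layer: when a model is renamed or replaced, only that mapping changes.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# Placeholder IDs: swap in whatever the current docs list for each tier.
MODEL_ALIASES = {
    "fast": "FAST_IMAGE_MODEL_ID",
    "premium": "PREMIUM_IMAGE_MODEL_ID",
}


@dataclass(frozen=True)
class AssetRequest:
    prompt: str
    is_hero: bool = False


def route(
    request: AssetRequest,
    generate: Callable[[str, str], str],
    passes_qa: Callable[[str], bool],
    max_fast_attempts: int = 3,
) -> Tuple[str, str]:
    """Return (tier, image). Hero assets go straight to premium; everything
    else tries the fast tier and escalates only if QA keeps rejecting it."""
    if request.is_hero:
        return "premium", generate(MODEL_ALIASES["premium"], request.prompt)
    for _ in range(max_fast_attempts):
        image = generate(MODEL_ALIASES["fast"], request.prompt)
        if passes_qa(image):
            return "fast", image
    return "premium", generate(MODEL_ALIASES["premium"], request.prompt)


calls = []


def fake_generate(model_id: str, prompt: str) -> str:
    """Offline stand-in for a real generation call."""
    calls.append(model_id)
    return f"{model_id}:{prompt}"


tier_a, _ = route(AssetRequest("Thumbnail variant 7"), fake_generate, lambda img: True)
tier_b, _ = route(AssetRequest("Campaign hero", is_hero=True), fake_generate, lambda img: True)
tier_c, _ = route(AssetRequest("Tricky layout"), fake_generate, lambda img: False)
print(tier_a, tier_b, tier_c)  # fast premium premium
```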

If you want the COEY-specific reference point for that higher-precision tier, see Imagen 4 Ultra T2I.

This is exactly how modern teams scale creativity: humans provide intent, constraints, and taste; machines produce breadth; and the system routes spend where it actually matters.

Snarky but true: if you are using premium image generation for every single thumbnail variant, you are basically buying champagne to water your plants.

What to watch next (if you care about readiness)

If Google introduces new “Flash” image branding or a formal “Nano Banana 2” model, the question is not “is it cool?” The question is:

- Does it have stable API endpoints?

- Can you batch reliably?

- What are the quotas, rate limits, and failure modes?

- Can you enforce governance? (brand rules, forbidden claims, audit trails)

Because that is the line between shiny demo and workflow component.
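On the quotas, rate limits, and failure modes question, one common client-side answer is retrying transient errors with exponential backoff and jitter. The sketch below shows that general technique, not anything specific to Google's API: `TransientError` and `call_with_backoff` are hypothetical names, and which real errors count as transient depends on the responses your integration actually sees.

```python
import random
import time


class TransientError(Exception):
    """Stand-in for retryable failures such as rate limiting or timeouts."""


def call_with_backoff(fn, max_attempts=5, base_delay=1.0, max_delay=30.0, sleep=time.sleep):
    """Call fn(), retrying on TransientError with exponential backoff and full jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of retries: surface the failure to the caller
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, ceiling))  # jitter avoids synchronized retries


attempts = {"count": 0}


def flaky():
    """Fails twice, then succeeds, to simulate a briefly rate-limited endpoint."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("simulated rate limit")
    return "ok"


delays = []
result = call_with_backoff(flaky, sleep=delays.append)  # record delays instead of sleeping
print(result, len(delays))  # ok 2
```

Injecting `sleep` keeps the function testable without real waiting; in production you would leave the default.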

Bottom line

Google’s image stack is moving in the direction the market actually needs: speed plus cost control plus API access. That is how image generation becomes a true collaborator in creative operations, one that scales output without scaling headcount.

Just do not anchor your strategy on an unverified model name. Anchor it on what is provable: the Gemini API image generation capabilities, published pricing, and a tiered routing approach that keeps humans in control while machines handle the grind.