How to Build an AI Content System

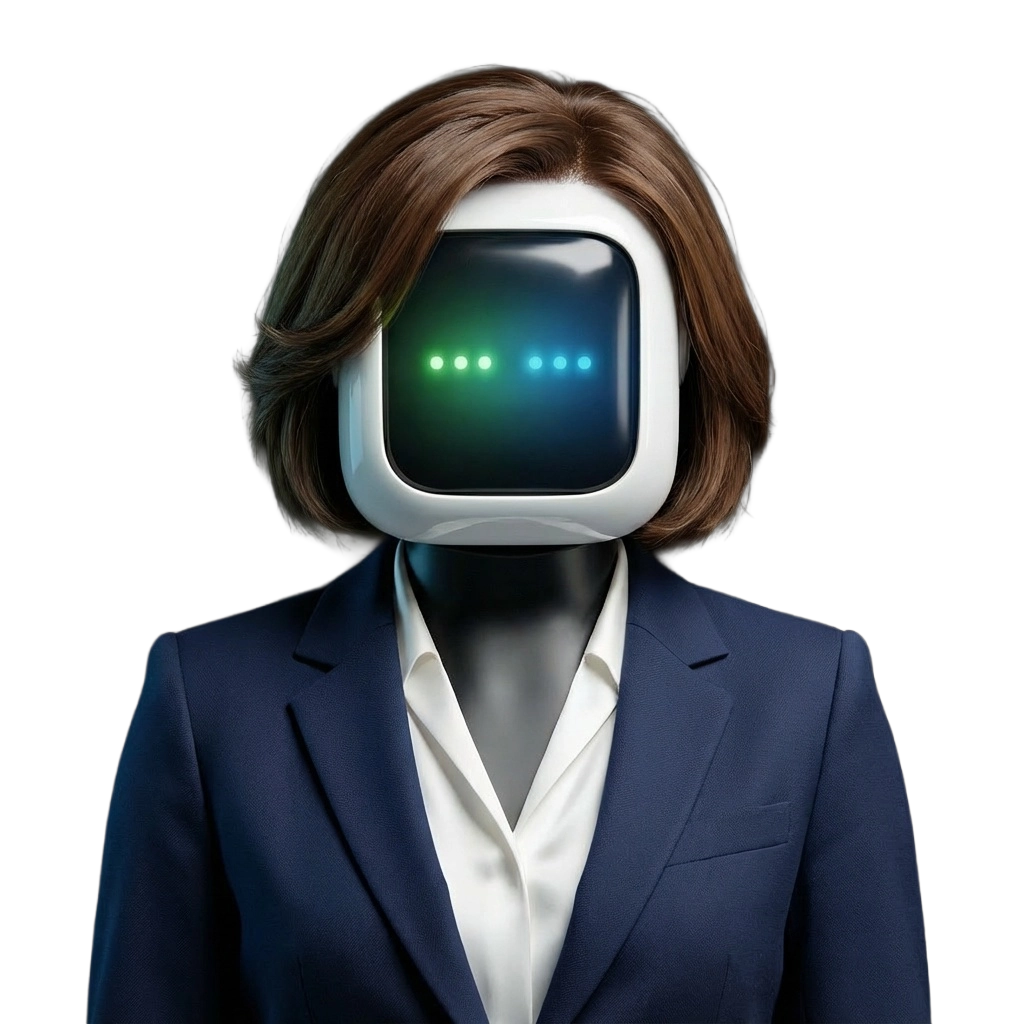

The playbook COEY uses to build production-ready AI content systems for brands and agencies, and the five pieces every C-suite team should understand before they buy, build, or hire.

Executive Takeaway

An AI content system is not a tool or a seat on a platform. It is the stack of models, prompts, brand controls, review flows, and automation that lets a marketing team produce on-brand content on a production timeline. The brands and agencies winning with AI content are the ones who built a system, not the ones who bought a subscription.

What is an AI content system?

An AI content system is the end-to-end setup a marketing team uses to turn briefs into finished, on-brand content at production scale, using generative AI as the core engine. It is the thing that sits between a CMO’s content calendar and the feed, ad placement, landing page, or email that a customer eventually sees.

Think of it the way a CFO thinks about the finance stack. Nobody calls QuickBooks a finance strategy. It is one tool in a system of tools, people, and controls that together produce a trustworthy set of books. AI content works the same way. ChatGPT is not a content strategy. Midjourney is not a content strategy. They are ingredients in a system that, when designed well, gives a marketing team the ability to ship more content, in more formats, on more channels, at a fraction of the cost and calendar of traditional production.

That system is what COEY builds. It is also what most in-house teams underestimate when they assume a single tool subscription will do the job.

The five parts of a working AI content system

Every functional AI content system we build for a brand or agency has five parts. Miss any one and the whole thing stalls. We have seen teams invest heavily in parts one and two, then wonder why nothing ever actually ships. The reason is almost always that parts three, four, or five were never designed.

01. Model selection and fit

The AI model landscape changes every month. A model that was the best text-to-image generator in Q1 may be the third best by Q3, and the cheapest may be the highest quality for your brand’s specific aesthetic. A working system has a model selection layer that maps the right model to the right job and that can swap models in and out as the frontier moves.

This is where head-to-head model comparisons come in, and why we publish ours publicly. A CMO does not need to know every model. They do need to know that the model powering their hero video this quarter is the right one for the job, and that the system will upgrade to the next one when it matters.

02. Prompt and style infrastructure

A single prompt in a chat window is not a content system. A prompt library is. A working AI content system treats prompts the way a design team treats a brand book. There are canonical prompts for each asset type, versioned templates for each campaign, and brand-specific style modifiers that keep the output consistent whether the prompt is run by a creative director or a coordinator.

For brand teams we build prompt libraries that encode voice, tone, visual style, and product-specific constraints. For agencies, we build client-specific prompt layers that a creative team can extend without starting from scratch on every engagement. Both cases share the same rule: the system should not depend on one person remembering the magic words.
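To make the prompt library idea concrete, here is a minimal sketch in Python. It assumes a library keyed by asset type and version, with brand style modifiers appended to every prompt. The names `PromptTemplate`, `LIBRARY`, `BRAND_STYLE`, and `render`, and the sample values, are hypothetical illustrations, not COEY's actual implementation.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptTemplate:
    asset_type: str   # e.g. "product_ad"
    version: str      # bump when the wording changes
    body: Template    # canonical prompt with $placeholders


# Hypothetical brand style modifiers, appended to every prompt.
BRAND_STYLE = {
    "voice": "warm, direct, no jargon",
    "visual": "natural light, muted palette",
}

# Hypothetical library keyed by (asset_type, version).
LIBRARY = {
    ("product_ad", "v2"): PromptTemplate(
        "product_ad", "v2",
        Template("Write a $length ad for $product aimed at $audience."),
    ),
}


def render(asset_type: str, version: str, **fields: str) -> str:
    """Fill the canonical template, then append the brand modifiers."""
    template = LIBRARY[(asset_type, version)]
    prompt = template.body.substitute(**fields)  # KeyError if a field is missing
    style = "; ".join(f"{k}: {v}" for k, v in BRAND_STYLE.items())
    return f"{prompt}\nStyle: {style}"


prompt = render("product_ad", "v2", length="30-word",
                product="trail shoes", audience="new runners")
```

Because the template and the style modifiers live in the library, a creative director and a coordinator who call `render` with the same fields get the same prompt.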

03. Automation and orchestration

This is the piece most teams skip, and it is the one that decides whether the system can actually run at the pace the content calendar demands. Orchestration is the wiring that turns a brief into a sequence of model calls, chains them together, hands the output to the next step, and delivers a finished asset on the other side. It is what lets one creative lead generate, review, and ship a week’s worth of ad variants in an afternoon instead of a month.

A well-wired system handles retries, versioning, and cost controls automatically, and connects to whatever the team already uses for asset management, review, and delivery. Without it, you have a bunch of impressive demos and a team that is exhausted before the first sprint ends.

04. Review, brand safety, and governance

AI output has to be reviewed by humans before it goes live. That is not a weakness of AI content. It is the part that makes the whole system trustworthy. A working system has a review layer with the right people in the right places, clear sign-off criteria, logged approvals, and guardrails that catch off-brand, off-message, or legally risky output before it ever reaches a reviewer.

For brands with legal, regulatory, or compliance constraints, the governance layer is not optional. It is the thing that makes the system safe enough to use on real customer-facing work. For agencies pitching clients, it is the thing that turns AI content from a liability into a trust-building differentiator.
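One way to picture the guardrail-then-reviewer order is the sketch below. It assumes a simple banned-phrase check that runs before any human sign-off, and an approval record stored with the asset. The phrase list, the `Asset` class, and the function names are hypothetical. A production guardrail would be far richer than substring matching.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical banned phrases; a real list would come from legal and brand teams.
BANNED_PHRASES = ("guaranteed results", "risk-free", "clinically proven")


@dataclass
class Asset:
    asset_id: str
    text: str
    approvals: list[dict] = field(default_factory=list)


def guardrail_flags(asset: Asset) -> list[str]:
    """Return the banned phrases found in the asset (case-insensitive)."""
    lowered = asset.text.lower()
    return [phrase for phrase in BANNED_PHRASES if phrase in lowered]


def approve(asset: Asset, reviewer: str) -> None:
    """Log a human sign-off; refuse if the automated check still fails."""
    flags = guardrail_flags(asset)
    if flags:
        raise ValueError(f"blocked before review: {flags}")
    asset.approvals.append({
        "reviewer": reviewer,
        "at": datetime.now(timezone.utc).isoformat(),
    })


clean = Asset("a1", "Lightweight shoes for new runners.")
approve(clean, "legal@example.com")

risky = Asset("a2", "Risk-free and guaranteed results!")
flags = guardrail_flags(risky)
```

The automated check never approves anything on its own. It only keeps obviously risky output from reaching a reviewer, and every sign-off leaves a timestamped record.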

05. Measurement and continuous improvement

The final piece is the feedback loop. A working AI content system measures which prompts, which models, and which workflows are producing the content that actually performs. It feeds that signal back into the prompt library, the model selection layer, and the orchestration so the system gets better every quarter instead of drifting.

Most teams never build this because the AI tools themselves do not ship with it. That is a choice, and it is the choice that separates a content system from a pile of tool subscriptions. Without measurement, you do not know whether you are actually producing better content or just producing more of it.
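A feedback loop can start very small. The sketch below assumes a performance log with one row per published asset, then pools results by prompt version to see which one earns its place in the library. The rows, field names, and the choice of click rate as the metric are hypothetical illustrations.

```python
from collections import defaultdict

# Hypothetical performance log: one row per published asset.
RESULTS = [
    {"prompt": "product_ad:v1", "model": "model-a", "views": 1000, "clicks": 20},
    {"prompt": "product_ad:v2", "model": "model-a", "views": 1000, "clicks": 35},
    {"prompt": "product_ad:v2", "model": "model-b", "views": 2000, "clicks": 90},
]


def click_rate_by(key: str, rows: list[dict]) -> dict[str, float]:
    """Pool views and clicks per group, then compute clicks / views."""
    views, clicks = defaultdict(int), defaultdict(int)
    for row in rows:
        views[row[key]] += row["views"]
        clicks[row[key]] += row["clicks"]
    return {group: clicks[group] / views[group] for group in views}


by_prompt = click_rate_by("prompt", RESULTS)
best_prompt = max(by_prompt, key=by_prompt.get)
```

Calling `click_rate_by("model", RESULTS)` gives the same view per model, which is the signal the model selection layer needs.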

Built for the tools you already use

A COEY-built AI content system is not a walled garden. It orchestrates the AI models your creative leads want to work with and plugs into the operational stack your team already runs on. Here are a few of the tools we routinely wire into production.

AI MODELS WE ORCHESTRATE

OpenAI: GPT family, image, voice, agents

Claude: Long-context reasoning and drafting

Gemini: Google multimodal and search

Grok: xAI real-time and reasoning

Llama: Meta open weights for private runs

OPERATIONAL STACK WE PLUG INTO

An open base layer, not a lock-in

The base layer is where your tools, your data, and the AI models meet. It decides which model to call, what to give it, how to publish the result, and what to log. Pick the wrong base layer and you trade one walled garden for another. Pick the right one and you get three things at once: flexibility to swap models, security over what data leaves your perimeter, and guardrails you can actually audit.

FLEXIBILITY

Model choice, not model lock

Your base layer should let you route a brief to Claude, a cost-sensitive batch job to an open-weights Llama, and a real-time answer to Grok, without rewriting your pipeline. When the frontier moves every three months, you want a swap, not a migration.
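The "swap, not a migration" idea can be sketched as a routing table that the pipeline consults instead of hard-coding a model. The job types and model labels below are hypothetical placeholders, not real API model identifiers.

```python
# Hypothetical routing table: job type -> model label.
ROUTES = {
    "brief": "claude",
    "batch": "llama-open-weights",
    "realtime": "grok",
}
DEFAULT_MODEL = "claude"


def route(job_type: str) -> str:
    """Pick a model for a job; unknown job types fall back to the default."""
    return ROUTES.get(job_type, DEFAULT_MODEL)


# Swapping a model is a one-line config change, not a pipeline rewrite.
ROUTES["batch"] = "some-newer-open-model"
```

Everything downstream calls `route(...)`, so changing which model handles batch jobs touches one entry in the table and nothing else.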

SECURITY

Your data stays where you put it

Self-hostable or perimeter-resident options mean customer data and brand IP do not have to leave your cloud. For regulated teams this is the difference between “maybe next year” and “ship it.” The base layer is the first place that decision gets made.

GUARDRAILS

Every action logged, every tool scoped

A real base layer gives you typed tool access, permission scopes, retries, idempotency, and an audit log. Not because you do not trust the model, but because you want the same production hygiene you already run on every other piece of infrastructure.
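Here is a minimal sketch of three of those guardrails working together: permission scopes, idempotency, and an audit log. It assumes an in-memory store and a single hypothetical tool called `publish_post`. A real base layer would persist both the log and the idempotency keys.

```python
import hashlib
import json

AUDIT_LOG: list[dict] = []
_COMPLETED: dict[str, str] = {}  # idempotency key -> earlier result

# Hypothetical scopes: which tools each caller may use.
SCOPES = {"drafting-agent": {"publish_post"}}


def idempotency_key(tool: str, args: dict) -> str:
    """Same tool plus same arguments always yields the same key."""
    raw = json.dumps({"tool": tool, "args": args}, sort_keys=True)
    return hashlib.sha256(raw.encode()).hexdigest()


def call_tool(caller: str, tool: str, args: dict) -> str:
    if tool not in SCOPES.get(caller, set()):
        AUDIT_LOG.append({"caller": caller, "tool": tool, "status": "denied"})
        raise PermissionError(f"{caller} may not call {tool}")
    key = idempotency_key(tool, args)
    if key in _COMPLETED:  # a retry of a finished action: do not repeat it
        AUDIT_LOG.append({"caller": caller, "tool": tool, "status": "replayed"})
        return _COMPLETED[key]
    result = f"{tool} done: {args['title']}"  # stand-in for the real side effect
    _COMPLETED[key] = result
    AUDIT_LOG.append({"caller": caller, "tool": tool, "status": "ok"})
    return result


first = call_tool("drafting-agent", "publish_post", {"title": "Spring launch"})
again = call_tool("drafting-agent", "publish_post", {"title": "Spring launch"})
```

The second call returns the stored result instead of publishing twice, and both calls appear in the log, so a retry is safe and still auditable.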

There is not one right answer. For workflow-heavy, code-execution-heavy work where guardrails matter most, n8n is hard to beat. It is open source, self-hostable, has hundreds of native integrations, and lets you do real work (publish a post, move a file, call an API) without burning a single AI token.

For chat-first operator work where the team wants to describe outcomes instead of wiring nodes, desktop-and-chat tools like OpenClaw or Anthropic Claude Cowork turn the same pipelines into a conversation. Once your piping is in place, the whole operation can run through a chat window.

And those worlds overlap more than people think. n8n has chat triggers. The chat-first tools can call out to code. The right answer is usually a mix, picked for your data, your team’s skill ceiling, and your risk posture.

n8n

OpenClaw

Claude Cowork

Three strong open-ish options. Not the only three, and not a requirement. COEY helps you pick and then builds on top of whatever fits. Not sure which one fits? Read our breakdown: n8n vs OpenClaw vs Claude Cowork for content automation.

Why most in-house AI content efforts stall

There is a pattern we see over and over again when a brand or agency tries to build an AI content system without outside help. It looks like this.

First, an innovation team or a forward-thinking creative lead picks up ChatGPT, Midjourney, and a few video tools. The output is impressive. A CMO sees a demo and gets excited. A budget materializes, seats get bought, and early confidence runs high across the team.

Then the gap shows. The team has produced viral internal Slack moments but zero production-ready assets. Tools are not the problem. Nobody built the system around them. Every asset is a one-off, nothing repeats, the brand is inconsistent, and the legal team already has questions nobody can answer.

Finally, the budget conversation happens again, and the tone is different. The CMO asks why the promised results never arrived. The honest answer is that the team bought tools and tried to build a real system in spare time, and the spare time was never actually there.

What a working AI content system actually saves you

Most C-suite conversations about AI content get stuck in promise mode. The real question is whether the system pays for itself, and by how much. Here is what we typically see when a brand or agency replaces ad hoc AI experiments with a working, COEY-built content system.

Real figures from a recent COEY client in a consumer vertical, covering research, scripting, generation, edit, and publish. The traditional range matches comparable brand shorts with shoot, edit, and post budget.

Same client, measured on live channels against two comparable brand channels over a matched window. The per-view gap widens further when AI content lands on an audience that actually wants it.

Illustrative range across COEY engagements. The traditional side covers shoot, retouch, or licensed stock plus design hours. The AI side is API spend plus light human QA on every asset.

TIME SAVINGS ARE WHERE THE REAL LEVERAGE IS

A full campaign cycle that used to take a week now ships inside a day, and sometimes faster than that.

Twelve variants of an ad or landing page, generated and live the same afternoon. Dead concepts die faster.

Adding a new content surface like shorts, podcasts, or a newsletter now takes weeks of setup, not a quarter of hiring.

The part of the pipeline that is pure production collapses to minutes, so humans stay on concepting and sign-off.

Where the savings actually come from

Per-asset cost is the easiest part to show in a chart, but it is rarely the largest piece of the savings. The real leverage is time. Here is what we watch for on an engagement, in rough order of impact on the P&L.

1. Cycle time compression. A working content system does not just make assets cheaper. It changes how fast a campaign can enter, test, and exit the market. Weeks become days. Days become hours. That compounding is where most of the dollar value actually lives, because every shortened cycle frees the team to run another one.

2. Kill-bad-work speed. When a creative lead can generate and review twelve variants in an afternoon, campaigns stop bleeding budget on concepts that were never going to land. The system does not just produce content faster. It ends bad content faster, which is where a lot of wasted spend quietly lives today.

3. Per-asset cost collapses. A recent COEY client in a consumer vertical was producing finished short-form videos in the $1.50 to $2.50 range, end to end. Comparable brand channels were spending in the $300 to $500 range for the same deliverable. That showed up as 85 to 121 times lower cost per view over a matched window, which is when the conversation stops being about production and starts being about reach.

4. Consolidated tool spend. Most teams we audit are paying for three to five overlapping SaaS tools to do what a well-orchestrated AI content system does end to end. Consolidating into a metered stack typically frees a meaningful chunk of fixed monthly spend, and that money is almost always worth more when it is flexible.
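The per-asset figures in point 3 can be checked with simple arithmetic. The sketch below uses only the dollar ranges quoted there. It computes the per-asset cost ratio, which is a different measure from the cost-per-view ratio, because cost per view also depends on view counts that are not given here.

```python
ai_cost = (1.50, 2.50)             # dollars per finished video, from the text
traditional_cost = (300.0, 500.0)  # dollars per comparable video, from the text

# Narrowest gap: cheapest traditional video vs. priciest AI video.
low_ratio = traditional_cost[0] / ai_cost[1]
# Widest gap: priciest traditional video vs. cheapest AI video.
high_ratio = traditional_cost[1] / ai_cost[0]

print(f"per-asset cost is {low_ratio:.0f}x to {high_ratio:.0f}x lower")
# prints "per-asset cost is 120x to 333x lower"
```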

We do this every day for ourselves.

COEY is its own case study. The content, the site, the research, the ops, the measurement – all of it runs on the same kind of system we build for clients. Every engagement we ship teaches us something, and every one of those lessons compounds into the next build. Clients do not just get the system we scope on paper. They get everything we have learned running this every day, on ourselves first.

Figure: Per-asset economics, relative to traditional production. Traditional production is anchored at 100% for each metric, and the COEY system bar shows its actual share of that cost.

How we model ROI on an engagement

Before we start any build, we walk the numbers with your team. That means pulling the current per-asset cost and cycle time of your top content types, mapping the hours and tool line items inside them, and projecting what the same output looks like running through a production AI content system. By the time we scope the engagement, everyone involved knows roughly what it costs, roughly what it saves, and when the system crosses into positive ROI.

Payback windows range widely. The fastest positive ROI we have delivered was the same day. Sometimes the answer is not a custom build at all – sometimes the right call is to teach your team how to drive the base layer they already have, and the teaching alone is enough to take cost out and give them time back. On more involved engagements the payback runs to a quarter or two, usually because the stack needs meaningful governance work before it can go live. Either way, the numbers get modeled before anyone signs anything.
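A payback window of this kind comes down to one division. The sketch below assumes a one-time build cost and a steady monthly saving. The function name and every number in it are hypothetical inputs for illustration, not COEY figures.

```python
import math


def payback_months(build_cost: float, monthly_cost_before: float,
                   monthly_cost_after: float) -> float:
    """Months until cumulative savings cover the one-time build cost."""
    monthly_saving = monthly_cost_before - monthly_cost_after
    if monthly_saving <= 0:
        return math.inf  # the system never pays for itself on cost alone
    return build_cost / monthly_saving


# Hypothetical inputs, not COEY figures.
months = payback_months(build_cost=60_000, monthly_cost_before=25_000,
                        monthly_cost_after=10_000)
```

With these inputs the saving is $15,000 a month, so a $60,000 build pays back in four months. This simple model counts cost savings only and ignores the value of shorter cycle times, which the section above argues is often the larger share.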

Solutions do not always have to be complex. Sometimes we build, sometimes we teach, and the teaching on its own is the thing that pays for itself.

Two ways to build your AI content system with COEY

COEY is not a SaaS. We do not sell seats and we do not resell tools. We come in one of two ways, and some of our engagements combine both. The right choice depends on how your team wants to operate when the dust settles.

Frequently asked questions from CMOs and agency leaders

How long does it take to build an AI content system?

A focused COEY build engagement typically lands a working system in production in weeks, not quarters. The exact timeline depends on how many content types the system needs to produce on day one and how much existing infrastructure we can plug into rather than replace. We scope this upfront so the timeline is real, not aspirational.

Do we need to replace our existing marketing stack?

No. A well-designed AI content system plugs into whatever you already use for asset management, review, and delivery. COEY builds on top of your stack, not in place of it. The goal is leverage on the content you already produce, not another tool your team has to learn.

Is this only for large enterprise brands?

No. We build custom systems for mid-market brands, enterprise brands, and the agencies that serve them. The shape of the system scales with the content volume and the governance requirements. A direct-to-consumer brand with a lean team needs a different system than a regulated enterprise brand, and we build for both.

What about brand safety and legal review?

Brand safety, legal review, and governance are part of the system, not an afterthought. Every build we do includes a review and guardrails layer designed around the specific risks the brand or agency is carrying. For regulated industries we build additional controls and audit trails into the workflow.

How is COEY different from an AI content SaaS?

COEY does not sell software. Software companies sell you a tool and leave you to build a system around it. COEY builds the system. That system may use several tools, including ones we help you evaluate and pick, but the deliverable is the working content engine inside your operation, not a seat on a platform.

Ready to build your AI content system?

If you lead marketing at a brand or run an agency and you are trying to figure out how to get real leverage out of AI content, this is what COEY does every day. Start with a conversation, tell us what you are trying to ship, and we will tell you which of the two delivery modes fits your team.

Explore the related resources below while you are here: our AI model comparisons hub, the COEY AI Studio page for how we build custom content machines, and the AI Automation page for how we wire AI into marketing workflows. If you are a marketing leader or board member who wants hands-on AI fluency, explore the Executive AI Accelerator.