Ollama Ships ARM Builds and Hardware Acceleration: Local AI Gets a Major Speed Boost

September 16, 2025

Ollama, the popular open-source toolkit for running large language models (LLMs) locally, just widened the lane for on-device AI. With native ARM builds landing across platforms and accelerated execution on supported GPUs, this release removes long-standing friction for anyone trying to scale creative workflows off the cloud.

Why it matters: Lower cost, faster iteration, fewer compliance headaches. This is practical local AI for marketers, creators, and automation builders, not a lab demo.

The Headline

Two shifts define this update:

- Native ARM builds: Ollama now offers ARM binaries alongside x86, bringing first-class performance to Apple Silicon and Linux ARM, and introducing a native Windows on ARM build. That means MacBooks, Copilot-class PCs, and edge devices can run models without emulation penalties.

- Hardware acceleration: On platforms with GPU support, Ollama taps the right hardware automatically. Apple Silicon accelerates via Metal; NVIDIA GPUs use CUDA; AMD cards run via ROCm. The result: faster model load times, lower latency, and viable multi-step chains on a single machine. See the GPU support documentation for specifics.

What is New and What is Actually Different

On-device LLMs have been “possible” for a while. What has changed is the tradeoff curve. ARM-native builds remove a hidden tax that made local setups feel like a novelty. Combined with GPU acceleration on supported platforms, you can now push real campaigns through a laptop or edge box without babysitting a status bar.

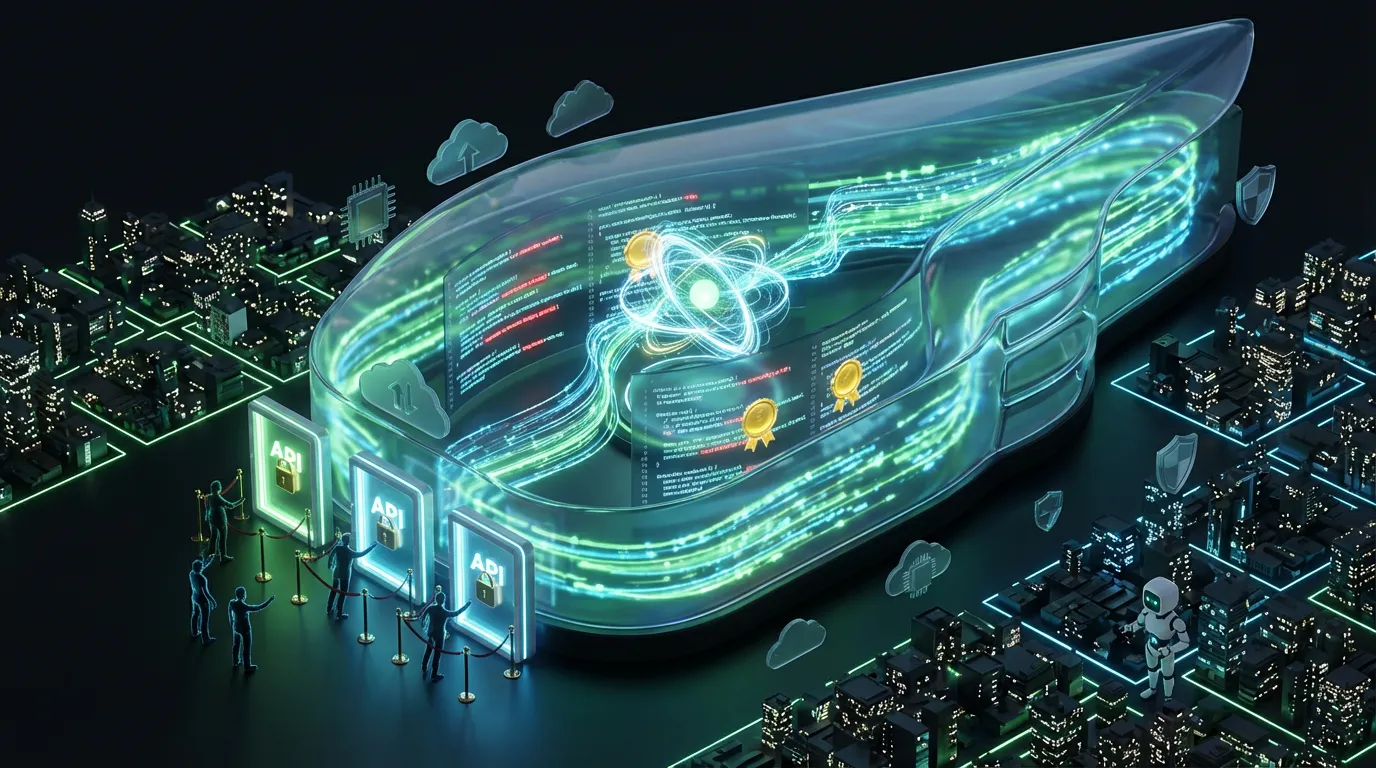

Automation Lens: Can You Plug This In?

Yes. If you already automate with webhooks, scripts, or lightweight orchestrators, Ollama slots in neatly. The local server exposes a simple HTTP API for prompt/response, embeddings, and model lifecycle calls. That translates to:

- Triggerable tasks: Copy variants, UGC moderation, and product Q&A can be called from your automation stack with a POST request.

- Embeddings + retrieval: Ingest product catalogs, brand guidelines, or support docs locally and rerank results inline. No external vector DB is required to start.

- Repeatability: Keep models, prompts, and data pinned to the same machine or fleet for consistent outcomes in audits and reviews.
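As a rough illustration of the embeddings-plus-retrieval item above, here is a minimal sketch that embeds text through the local server and reranks documents by cosine similarity in memory. It assumes the server is listening on its default address (`http://localhost:11434`), that your Ollama version exposes the `/api/embed` endpoint, and that an embedding model is already pulled; the model name `nomic-embed-text` and the helper names `embed_texts`, `cosine`, and `rerank` are illustrative choices, not something this release prescribes.

```python
import json
import math
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # default local address; adjust if yours differs
EMBED_MODEL = "nomic-embed-text"       # assumption: any embedding model you have pulled


def embed_texts(texts, model=EMBED_MODEL, base_url=OLLAMA_URL):
    """Ask the local server for one embedding vector per input string."""
    body = json.dumps({"model": model, "input": list(texts)}).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/api/embed",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.loads(resp.read())["embeddings"]


def cosine(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def rerank(query_vec, doc_vecs):
    """Return document indices ordered from most to least similar to the query."""
    scores = [cosine(query_vec, vec) for vec in doc_vecs]
    return sorted(range(len(doc_vecs)), key=lambda i: scores[i], reverse=True)
```

In use, you would embed the documents once, embed each incoming query, and pass both to `rerank`. A plain in-memory list like this is enough for a pilot-sized catalog; a dedicated vector store only becomes worth considering as the corpus grows.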

On Apple Silicon and desktop GPUs, the acceleration story is strong today. Performance improvements move LLMs from “nice to test” to “ready to ship” for many creative operations.

Reality Check: Platform Nuance

Not all ARM is created equal, and not all acceleration is equal. Here is what is true right now:

- Apple Silicon (ARM): Mature and fast with Metal GPU acceleration. If you are on an M1 to M3 machine, consider this the smoothest path to local LLMs with acceleration.

- Linux + NVIDIA / AMD: Strong support on the GPU side through CUDA and ROCm; be mindful of driver versions and VRAM budgets. Consult the GPU docs before rollout at scale.

- Windows on ARM: There is a native build, but hardware acceleration is still evolving. Some users report early issues with certain models and instruction sets (see one example in this GitHub issue). Expect rapid iteration, but plan a little buffer if you are deploying to brand-new Copilot-class devices.
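One way to sanity-check acceleration on a given machine is to ask the server how much of each loaded model actually sits in GPU memory. The sketch below assumes the `/api/ps` endpoint returns a `models` list whose entries carry `name`, `size`, and `size_vram` fields, as the Ollama API docs describe at the time of writing; verify that shape against your installed version. The helper names are hypothetical.

```python
import json
import urllib.request


def gpu_fraction(model_entry):
    """Share of a loaded model's memory that resides in GPU memory (0.0 to 1.0)."""
    size = model_entry.get("size", 0)
    return model_entry.get("size_vram", 0) / size if size else 0.0


def loaded_models(base_url="http://localhost:11434"):
    """Fetch the list of currently loaded models from the local server."""
    with urllib.request.urlopen(f"{base_url}/api/ps", timeout=10) as resp:
        return json.loads(resp.read()).get("models", [])


def report(models):
    """Map each model name to its GPU-resident fraction, rounded to two decimals."""
    return {m["name"]: round(gpu_fraction(m), 2) for m in models}
```

Calling `report(loaded_models())` after a model has been loaded gives a quick read: a value near 1.0 suggests the model is fully offloaded to the GPU, while a value near 0.0 suggests it is running on the CPU.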

API + Integration Status (In Plain English)

- REST API: The local server runs on your machine and accepts simple HTTP requests. Translation: any tool that can call a URL can automate Ollama.

- Works with your stack: Use webhooks in n8n/Make/Zapier, scripts in Node or Python, or notebooks to batch prompts and evaluations. You do not need a special SDK to start.

- Local-first privacy: Nothing leaves your device unless you send it out. For regulated or sensitive work (healthcare, finance, pre-release creative), this is a big deal.
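To make the "no special SDK" point concrete, here is a minimal sketch that calls the local server using only the Python standard library. It assumes the default address `http://localhost:11434` and the `/api/generate` endpoint with streaming turned off; the model name `llama3.2` is a placeholder for whichever model you have pulled, and the function names are illustrative.

```python
import json
import urllib.request


def build_generate_request(prompt, model="llama3.2", base_url="http://localhost:11434"):
    """Build a non-streaming POST request for the local /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, **kwargs):
    """Send the prompt to the local server and return the generated text."""
    req = build_generate_request(prompt, **kwargs)
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.loads(resp.read())["response"]
```

A call such as `generate("Write three subject lines for a spring sale email.")` returns plain text that a webhook handler or batch script can pass along. Splitting request construction from sending keeps the payload easy to inspect and test without a running server.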

What This Unlocks for Creators and Marketers Today

| Workflow | Yesterday (Cloud-first) | Today (Ollama + ARM/GPU) |
|---|---|---|
| Bulk copy variants | API costs, rate limits, waiting on queues | Run locally at device speed; fixed compute cost |
| Content moderation / safety passes | Compliance reviews and redaction overhead | Keep data on-device; log and audit locally |
| RAG on catalogs/briefs | External vector DB, latency hits | Local embeddings + retrieval; low-latency reranking |
| Agentic prompt chains | Multi-API orchestration complexity | One box, one API, small fleet replicated at the edge |

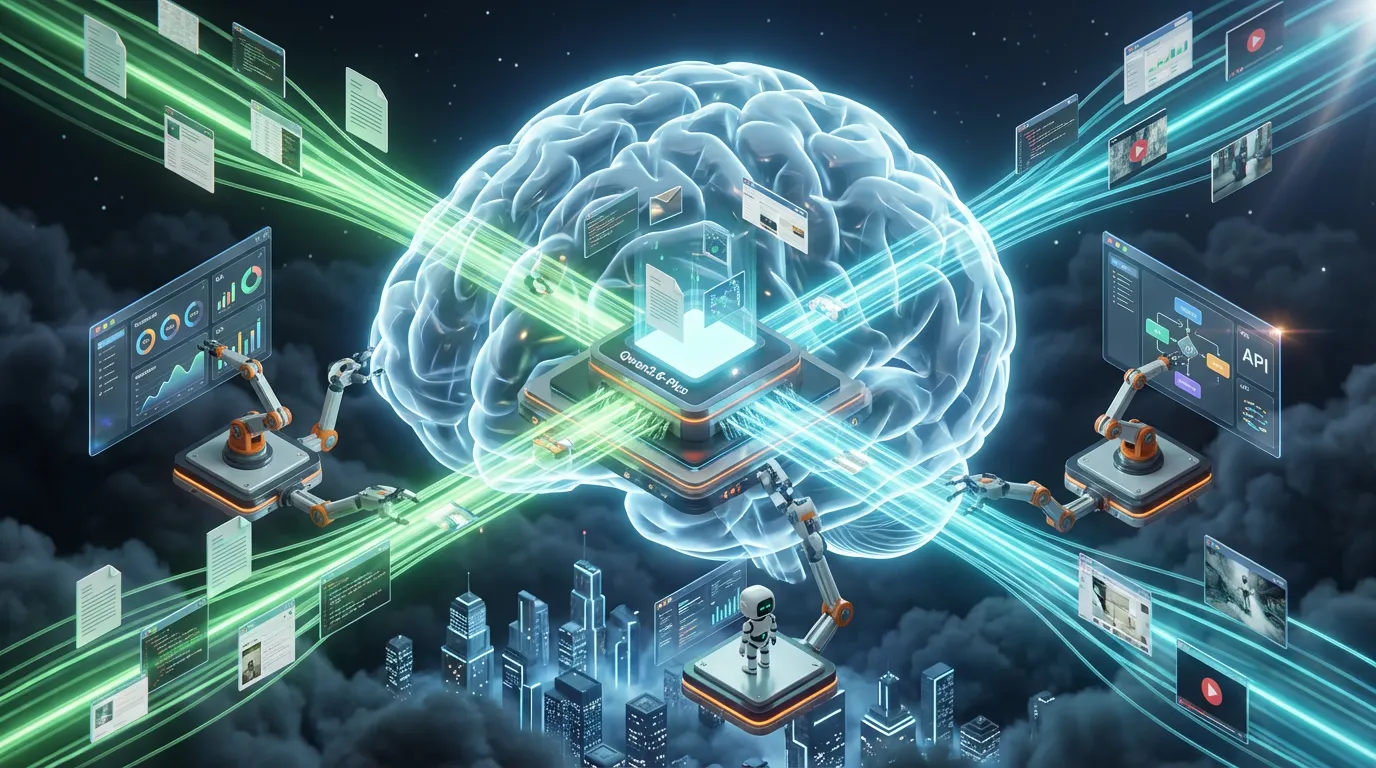

Multi-Format Relevance: Text, Image, Audio, Video

This is primarily a language-model update, but it reverberates across formats:

- Text: The obvious win is faster drafting, summarization, tagging, and QA.

- Image: Pair Ollama with a local image model (for example Stable Diffusion or ControlNet via a local runner) and use the LLM as the director that writes prompts, curates outputs, and enforces brand tags.

- Audio: Run local transcripts with a speech-to-text tool, then push summaries, highlights, and social captions through Ollama.

- Video: Use frame grabs plus transcripts as inputs. The LLM can write titles, descriptions, and chapter markers, and score versions for A/B tests.

The pattern: LLMs orchestrate, structure, and QA; specialized local models render and transcode. Glue them together with webhooks and a local queue and you have a fully offline creative pipeline.
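The orchestration pattern can be sketched with nothing more than an in-process queue. This is a simplified illustration, not a production design: `transcribe` and `summarize` are hypothetical callables you would supply (for example, a local speech-to-text tool and a function that calls Ollama), and `run_pipeline` is an illustrative name.

```python
import queue


def run_pipeline(jobs, transcribe, summarize):
    """Drain a local queue of media paths through two stages, in order."""
    pending = queue.Queue()
    for job in jobs:
        pending.put(job)

    results = []
    while not pending.empty():
        path = pending.get()
        transcript = transcribe(path)    # specialized local model, e.g. speech-to-text
        summary = summarize(transcript)  # LLM step, e.g. a request to the local server
        results.append({"source": path, "summary": summary})
        pending.task_done()
    return results
```

Passing the stages in as functions keeps the queue logic independent of any particular tool, so a transcription or media model can be swapped without touching the loop.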

Availability

- ARM-native builds are available now for macOS and Linux ARM, and a native Windows on ARM build is included in the latest releases. Start on the Releases page or grab Linux installers from Ollama’s Linux download page.

- Acceleration support differs by platform and GPU; consult the GPU docs for CUDA, ROCm, and Metal notes before rolling out at scale.

What is Ready Now vs. What is Next

| Area | Today | Near-Future |
|---|---|---|
| ARM coverage | Apple Silicon and Linux ARM are solid; Windows ARM build available | Broader Windows ARM acceleration and stability improvements |
| GPU/NPU acceleration | Metal on Apple; CUDA and ROCm on desktop GPUs | Richer NPU/NNA utilization on consumer ARM devices |
| Automation | REST API works with webhooks and scripts; easy to chain tasks locally | Deeper OpenAI-compatible adapters and turnkey connectors |
| Multimodal | Language-first; can coordinate image/audio tools locally | More native multimodal models as open-source catches up |

Limits to Note (So You Don’t Overpromise)

- Model size vs. VRAM: 7B to 13B parameter models shine for everyday marketing and ops; 70B+ still demands beefy hardware and careful quantization.

- Windows on ARM: Expect rapid progress, but it is early days for full acceleration. Some users report model load issues on certain devices.

- No native generative media: Ollama does not render images or video by itself. Plan to pair with local media models and use Ollama as the workflow brain.
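For the model-size point, a back-of-the-envelope estimate helps before committing to hardware. The sketch below computes memory for the weights alone as parameters times bits per weight divided by eight. It deliberately ignores the KV cache, runtime overhead, and the extra bits that real quantization formats carry, so treat the result as a lower bound. The 20% `headroom` default is an arbitrary assumption, and both function names are illustrative.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Approximate memory for the weights alone, in decimal gigabytes."""
    bytes_total = params_billion * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


def fits(params_billion, bits_per_weight, vram_gb, headroom=0.2):
    """True if the weights fit while leaving `headroom` of VRAM for everything else."""
    budget_gb = vram_gb * (1 - headroom)
    return weight_memory_gb(params_billion, bits_per_weight) <= budget_gb
```

By this estimate a 7B model at 4 bits per weight needs about 3.5 GB for weights, while a 70B model at the same precision needs about 35 GB, which is why the larger class still calls for beefy hardware even after quantization.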

Editor’s Take: The Real-World Readiness

In a world where cloud tokens get spendy and compliance reviews slow everything down, local AI needs to be both fast and boring in the best way. This release makes Ollama boringly dependable on Apple Silicon and desktop GPUs, and promising on Windows ARM. If you run a creative team or performance marketing function, the move is straightforward: pilot a few workflows that grind your budget today (copy variants, RAG on product data, moderation), keep everything local, and measure time-to-approve, not just time-to-first-token.

The vibe: less “look what I can do,” more “look what ships on Tuesday.” That is how human + AI collaboration scales.

Bottom Line

Ollama’s ARM builds and hardware acceleration flip local LLMs from experiment to execution. The APIs are simple, the privacy story is strong, and the performance is finally there where it counts. Pair Ollama with your orchestration tool of choice, wire in your brand data, and start moving work off the cloud meter. Local AI just graduated from hobby to production.