Automating the Screen: UI Agents Go Pro

November 13, 2025

The Interface Awakens: The Browser as Automation Layer

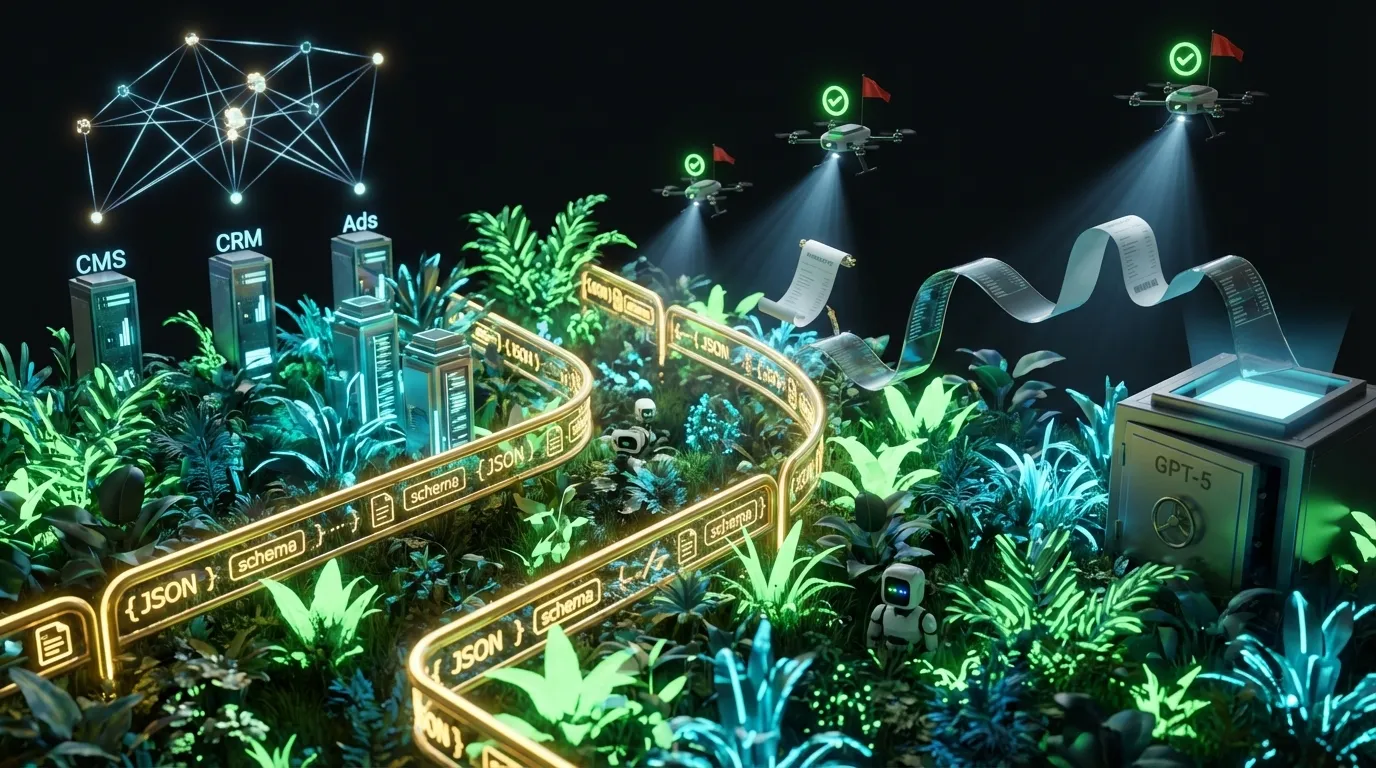

For years, workflow automation was a rigged game: winners got clean APIs, everyone else settled for scripting chaos on brittle screens. Old-school browser macros broke at every redesign. Even robotic process automation (RPA) looked like a glorified game of Whac-A-Mole. All of that is shifting fast. Thanks to the latest generation of vision-native, tool-using AI models, plus platform-level Computer Use from OpenAI and modern open-weight models like Llama 4, agents can now see, read, click, and recover from the UI chaos that previously drove teams to despair. Even better, enterprise platforms are shipping hardened computer-use controls (sandboxes, logs, prompts, and policies) so clicky bots stay inside the rails.

This is not another vaporware push for sentient browsers. It is a hands-on, production-ready way to close the integration gap: glue your CRM to the latest ad platform, tame your HR portal, or bridge legacy CMS screens you just cannot kill. The magic is not AI wizardry; it is disciplined targeting, clear code-as-policy, and observable, boxed-in bots.

Why This Time Is Different: UI Agents Get Practical

- Agents see and act across the stack. Modern vision models parse on-screen text, forms, charts, and even tricky overlays, then drive UI tasks reliably enough for production, provided the task scope stays boxed in.

- Self-healing (kind of) works. Smart agents recover from UI drift, lean on visual anchors, and adapt when selectors break or buttons shuffle around. The maintenance tax drops sharply compared with selector-only scripts.

- Native browser agent mode. Cloud stacks and enterprise layers now offer agents their own secure browser, complete with policy checks, screenshots, and audit logs. Your lawyer finally exhaled.

- Grown-up guardrails. Containerized sessions, click and keystroke quotas, policy prompts, and screenshot retention are governed, logged, and retrievable. No more "just trust the bot."

- Platform politics are the new normal. UI agents on public platforms hit limits like rate caps and bot detection. Use them inside owned B2B portals or SaaS seats and they shine both legally and operationally.

API or Screen, RPA or Agent: When and Why

| Approach | Strengths | Weaknesses | Best Fit |
|---|---|---|---|
| API-first integration | Stable, fast, debuggable, versioned | Limited access, vendor backlog, often not enough UI control | Data sync, bulk ops, reporting, analytics |
| Classic RPA | Deterministic, good for static screens and repeatable processes | Breaks on UI drift, hard to generalize beyond golden paths | Legacy desktop, known layouts, simple repetitive flows |
| Computer use UI agents | Adapts to UI changes, can bridge API gaps, works on web apps | Higher per-task cost, needs robust guardrails, more logging | API-less portals, ad ops tweaks, QA tasks, sticky vendor tools |
| Hybrid API + UI agent | Best of both: API for bulk, UI for exception paths | Complex, needs orchestration, more moving parts | Marketing ops, content publishing, live QA, complex flows |

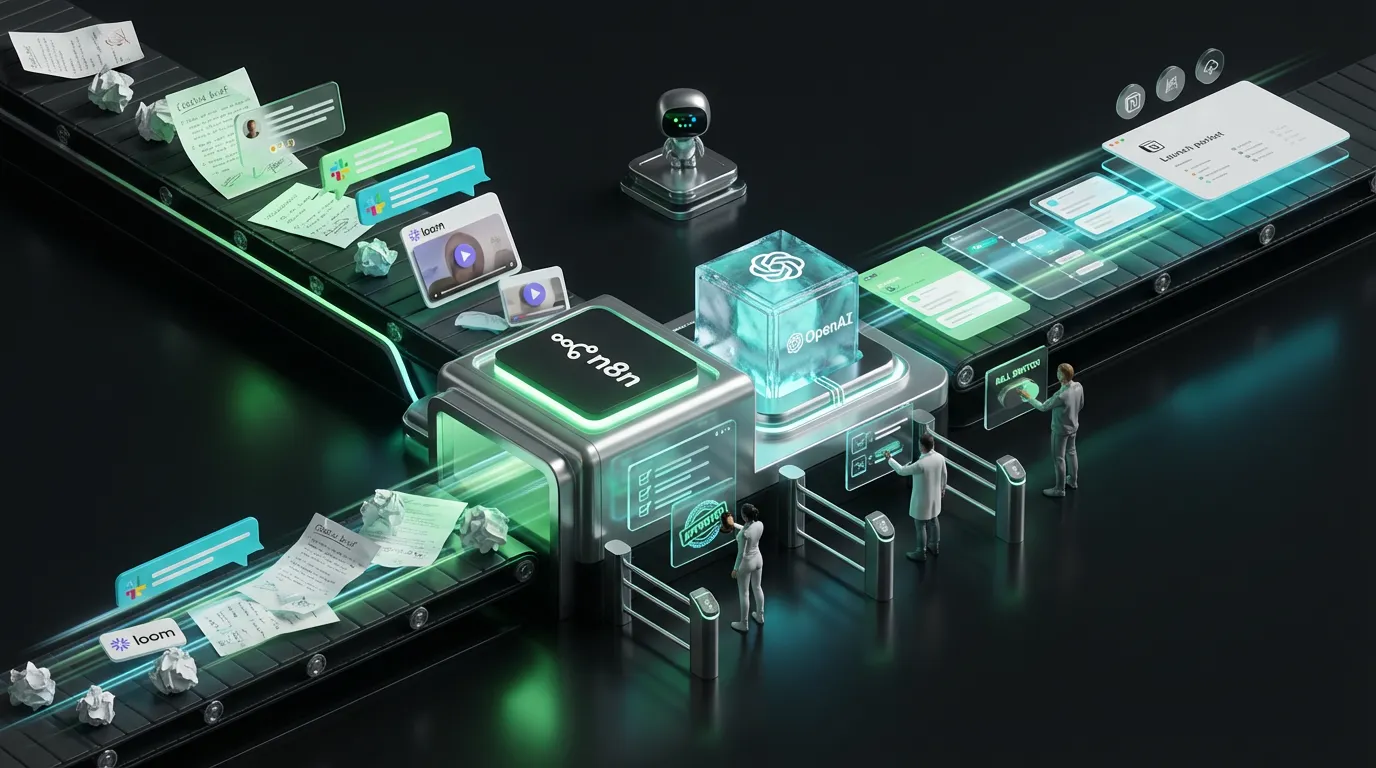

Blueprint Time: How Modern UI Agents Actually Ship

Yes, this is what your near-future automation stack actually looks like. Get used to JSON, logs, and critics.

    [Trigger]
      → CRM, schedule, in-app webhook, or a manual prompt
    [Plan]
      → Parse intent → select model/tools → assign risk and token budget
    [Observe]
      → Open containerized browser or VM → set locale and device profile
    [Act]
      → Navigate • click • fill forms • drag-n-drop • screenshot evidence
    [Critic]
      → Apply schema and visual checks → enforce policies → audit cost and history
          | pass → [Publish or escalate]
          | fail → [Auto repair or loop in human]
    [Log]
      → Track every step, audit screenshot, token bill, result, reviewer
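
The blueprint above can be sketched as a small control loop. This is a minimal illustration, not a reference implementation: `run_task`, `RunLog`, and the `act` callable are hypothetical names, and `act` stands in for whatever sandboxed browser session your platform provides.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class RunLog:
    """Collects one entry per stage so every run leaves a reviewable trail."""
    entries: List[dict] = field(default_factory=list)

    def record(self, stage: str, detail: str) -> None:
        self.entries.append({"stage": stage, "detail": detail})


def run_task(goal: str,
             act: Callable[[str], dict],
             critics: List[Callable[[dict], bool]],
             max_repairs: int = 1) -> dict:
    """Plan, act, run critics, and repair at most `max_repairs` times."""
    log = RunLog()
    log.record("plan", goal)
    failed: List[str] = []
    for attempt in range(1, max_repairs + 2):
        result = act(goal)  # hypothetical stand-in for the sandboxed browser session
        log.record("act", f"attempt {attempt}")
        failed = [critic.__name__ for critic in critics if not critic(result)]
        log.record("critic", "pass" if not failed else "fail: " + ", ".join(failed))
        if not failed:
            return {"status": "publish", "result": result, "log": log.entries}
    # Repair budget exhausted: loop in a human instead of retrying forever.
    return {"status": "escalate_to_human", "failed_critics": failed, "log": log.entries}


def has_status_text(result: dict) -> bool:
    """Example critic: the captured result must include a status string."""
    return "status_text" in result


outcome = run_task("Remove expired promo",
                   lambda goal: {"status_text": "Status: Active"},
                   [has_status_text])
print(outcome["status"])  # prints "publish"
```

The point of the shape is that the critic stage decides between publishing and escalation, and the repair loop is bounded so a failing run ends with a human rather than a retry storm.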

Sample Policy Pack for Computer Use UI Agents

    {
      "computer_use": {
        "viewport": {"width": 1440, "height": 900, "dpi": 2.5},
        "input": {"max_keystrokes": 700, "max_clicks": 75},
        "allowlist_domains": ["ads.example.com", "cms.example.com", "partners.example.io"],
        "block_patterns": ["/billing", "/export", "/delete"],
        "sensitive_actions": {
          "requires_user_confirm": ["purchase", "delete", "publish"],
          "2fa": {"type": "human_approval"}
        },
        "privacy": {
          "mask_selectors": ["input[type=password]", "#ssn", ".credit-card"],
          "screenshot_retention_days": 10
        },
        "network": {
          "rate_limit_per_min": 24,
          "idle_timeout_sec": 60
        },
        "budget": {
          "max_usd": 1.44,
          "frontier_calls": 1,
          "retry_limit": 1
        }
      }
    }
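
A policy pack only helps if something enforces it before each action. The sketch below shows one way the `allowlist_domains`, `block_patterns`, and `max_clicks` fields from the pack above might be checked. The function and class names (`check_navigation`, `ClickQuota`) are assumptions for illustration and do not belong to any particular platform.

```python
from urllib.parse import urlparse

# Subset of the policy pack above, inlined so the sketch is self-contained.
POLICY = {
    "allowlist_domains": ["ads.example.com", "cms.example.com", "partners.example.io"],
    "block_patterns": ["/billing", "/export", "/delete"],
    "max_clicks": 75,
}


def check_navigation(url: str, policy: dict = POLICY) -> tuple:
    """Return (allowed, reason) for a URL the agent wants to open."""
    parts = urlparse(url)
    host = (parts.hostname or "").lower()
    # Exact host match, so look-alike hosts such as ads.example.com.evil.net fail.
    if host not in policy["allowlist_domains"]:
        return False, f"domain not allowlisted: {host or '(none)'}"
    for pattern in policy["block_patterns"]:
        if pattern in parts.path:
            return False, f"blocked path pattern: {pattern}"
    return True, "ok"


class ClickQuota:
    """Counts clicks against the policy's max_clicks limit."""

    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.used = 0

    def spend(self) -> bool:
        """Return True if one more click is allowed, and count it."""
        if self.used >= self.limit:
            return False
        self.used += 1
        return True


print(check_navigation("https://ads.example.com/campaigns"))
print(check_navigation("https://ads.example.com/billing/cards"))
```

Checking the parsed hostname rather than the raw string is the design choice that matters here: substring checks on the full URL are easy to fool with crafted paths or subdomains.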

Where UI Agents Print Money Today

- Ad ops janitor. Rotate ads, fix policy strikes, patch sitelinks, sync budget caps across fragmented screens that platforms gatekeep.

- Marketplace portals and partners. Listing upload, price edits, seasonal promos, content tweaks where APIs are locked away or non-existent.

- Opinionated CMSes. Headless is great until it is not. Agents can file posts, set meta, attach files, and walk preview flows on screens, not endpoints.

- Light compliance QA. Check legal footers, badge render, locale prices, product labels on actual web pages before legal gets the angry call.

- Competitor intel. Pull visible pricing, feature grids, and public changelogs on a schedule with rate limiting and a serious dose of ethics.

Threats and Defenses That Actually Work

- Prompt injection happens in the wild. Bad actors hide instructions in page text. Treat page content as data, not commands: ignore any instruction that does not come from your own task, system, or user prompt, keep secrets out of the clipboard, and always sanitize inputs.

- UI drift plus dark patterns. Button labels move, "helpful" overlays pop up, ads disguise themselves as controls. Agents should use multiple cues: role, text, DOM position, visual anchor.

- 2FA fatigue and auth weirdness. Bake in session refreshes, human-in-the-loop passcodes, and real-time Slack or email approvals.

- PII leaks. Mask sensitive content in screenshots, redact logs, minimize task scope and exposure per run.

- Rate limits and moats. Honor platform rules, act only on owned or consented accounts, and cap request frequency.
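
The multiple-cue advice for UI drift and decoy controls can be made concrete with a small scoring function. This is a sketch under simplifying assumptions: the `Candidate` fields and the `min_cues` threshold are hypothetical, and a real agent would derive them from the DOM and the screenshot.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Candidate:
    role: str          # accessibility role, e.g. "button"
    text: str          # visible label
    region: str        # coarse DOM position, e.g. "toolbar"
    near_anchor: bool  # True if close to the expected visual anchor


def pick_target(candidates: List[Candidate], role: str, text: str,
                region: str, min_cues: int = 3) -> Optional[Candidate]:
    """Pick the candidate matching the most cues; refuse weak or tied matches."""
    scored = []
    for c in candidates:
        cues = [
            c.role == role,
            c.text.strip().lower() == text.lower(),
            c.region == region,
            c.near_anchor,
        ]
        scored.append((sum(cues), c))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    if not scored or scored[0][0] < min_cues:
        return None  # nothing convincing: better to pause than to click a decoy
    if len(scored) > 1 and scored[1][0] == scored[0][0]:
        return None  # ambiguous: two equally plausible targets
    return scored[0][1]


real = Candidate("button", "Save", "toolbar", True)
decoy = Candidate("link", "Save", "ad_slot", False)
print(pick_target([decoy, real], role="button", text="Save", region="toolbar"))
```

Returning `None` on weak or tied matches gives the caller a clean hook for escalation instead of a best-guess click.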

Defense-in-Depth Config Snippet

    {
      "safety": {
        "content_filters": ["profanity", "pii"],
        "instruction_whitelist": ["task", "system", "user"],
        "page_instruction_blacklist": ["aria-label*='ignore-policy'", "data:text/*"],
        "action_limits": {"downloads": 2, "uploads": 4},
        "escapes": {"on_unknown_state": "pause_notify_human"}
      },
      "observability": {
        "screenshots": "on_step",
        "video_logs": false,
        "log_fields": ["page_url", "selector", "action", "latency_ms", "cost_usd"],
        "privacy_masks": [".payment", "#passwordField"]
      }
    }
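
One way the `log_fields` list and the `pii` content filter might be applied when writing step logs is shown below. It is a rough sketch: the two regular expressions are illustrative assumptions and nowhere near a complete PII detector, and `to_log_record` is a hypothetical helper name.

```python
import re

LOG_FIELDS = ["page_url", "selector", "action", "latency_ms", "cost_usd"]

# Illustrative patterns only; real PII detection needs a much broader set.
PII_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),          # US SSN-shaped numbers
    re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),   # email addresses
]


def redact(value):
    """Replace PII-shaped substrings in strings; pass other types through."""
    if not isinstance(value, str):
        return value
    for pattern in PII_PATTERNS:
        value = pattern.sub("[REDACTED]", value)
    return value


def to_log_record(event: dict) -> dict:
    """Keep only the configured log fields and redact each kept value."""
    return {key: redact(event[key]) for key in LOG_FIELDS if key in event}


event = {
    "page_url": "https://cms.example.com/users?email=jane@example.com",
    "selector": "#save",
    "action": "click",
    "latency_ms": 120,
    "cost_usd": 0.01,
    "typed_text": "123-45-6789",  # not in LOG_FIELDS, so it never reaches the log
}
print(to_log_record(event))
```

Dropping unlisted fields first and redacting second means a new, unreviewed field cannot leak into logs by default.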

Hybrid Patterns for Power and Price

Do not let models chew through budget because it is cool to use vision everywhere. Use APIs for bulk work, precision, and anything else they can reach. Drop to the UI only for the gaps.

- Ad ops hybrids. Pull data via API, trigger agent for UI-only toggles, edge tweaks, or creative swaps.

- CMS hybrids. Push via API, then sweep the live page for visual defects and broken links, and roll back if needed.

- Pricing/Compliance hybrids. The database is the source of truth; the agent finds mismatches at the pixel level across locales, devices, and A/B variants.
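
The API-first, UI-for-gaps rule reduces to a simple dispatch. The sketch below assumes a hypothetical registry of API handlers keyed by task name and a hypothetical `ui_agent` callable; neither corresponds to a specific product.

```python
from typing import Callable, Dict


def run_hybrid(task: str,
               api_handlers: Dict[str, Callable[[], str]],
               ui_agent: Callable[[str], str]) -> dict:
    """Use the API when a handler exists; fall back to the UI agent otherwise."""
    handler = api_handlers.get(task)
    if handler is not None:
        return {"task": task, "path": "api", "result": handler()}
    return {"task": task, "path": "ui_agent", "result": ui_agent(task)}


# Hypothetical task names for an ad ops hybrid.
api_handlers = {"pull_campaign_stats": lambda: "stats fetched via API"}


def ui_agent(task: str) -> str:
    return f"UI agent completed: {task}"


print(run_hybrid("pull_campaign_stats", api_handlers, ui_agent)["path"])  # prints "api"
print(run_hybrid("toggle_ui_only_setting", api_handlers, ui_agent)["path"])  # prints "ui_agent"
```

Recording which path handled each task also makes it easy to see how much work still depends on the more expensive UI route.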

How to Not Go Broke: Containing Agent Costs

| Cost Driver | What It Looks Like | How to Control |
|---|---|---|
| Vision tokens | Capturing every pixel on every click | Screenshots only on step boundaries, crop, no wallpaper |
| Overzealous planning | Agent spins on trivial sub-tasks | Route to small models first, escalate only if needed |
| Retry storms | Infinite retries on flaky UX | Stop early, backoff, cap at one retry |
| Task sprawl | Tons of semi-duplicate runs | Run caps, deduplication, nightly batching |
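
The retry-storm row can be illustrated with a capped retry helper that backs off and then gives up. This is a sketch, not a prescribed implementation: `TransientUIError` and `with_capped_retry` are hypothetical names, and the `sleep` parameter is injectable only so the example can run without waiting.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientUIError(Exception):
    """Hypothetical error type for flaky but recoverable UI failures."""


def with_capped_retry(step: Callable[[], T],
                      retry_limit: int = 1,
                      base_delay_sec: float = 0.5,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Run `step`, retrying at most `retry_limit` times with exponential backoff."""
    attempt = 0
    while True:
        try:
            return step()
        except TransientUIError:
            if attempt >= retry_limit:
                raise  # stop early: surface the failure instead of storming
            sleep(base_delay_sec * (2 ** attempt))
            attempt += 1


calls = {"n": 0}


def flaky_click() -> str:
    """Fails on the first call, succeeds on the second."""
    calls["n"] += 1
    if calls["n"] == 1:
        raise TransientUIError("overlay covered the button")
    return "clicked"


delays = []
print(with_capped_retry(flaky_click, sleep=delays.append))  # prints "clicked"
```

Only the transient error type is retried; anything else propagates immediately, which keeps real bugs from hiding behind the retry loop.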

Reference Budget Controls for UI Agents

    {
      "budget_control": {
        "max_task_usd": 1.50,
        "retry_limit": 1,
        "frontier_calls": 1,
        "vision": {"max_screens": 8, "crop_default": true},
        "router": {"prefer_small": true, "escalate_on": ["uncertain", "novel_ui"]}
      }
    }
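
A guard object is one plausible way to enforce these controls at runtime. The sketch below mirrors the field names from the config above; the `BudgetGuard` class itself, its method names, and the "small" and "frontier" model labels are assumptions for illustration.

```python
from dataclasses import dataclass

ESCALATE_ON = {"uncertain", "novel_ui"}


@dataclass
class BudgetGuard:
    max_task_usd: float = 1.50
    frontier_calls: int = 1
    max_screens: int = 8
    spent_usd: float = 0.0
    frontier_used: int = 0
    screens_used: int = 0

    def charge(self, usd: float) -> bool:
        """Record spend; refuse any charge that would exceed the task cap."""
        if self.spent_usd + usd > self.max_task_usd:
            return False
        self.spent_usd += usd
        return True

    def take_screenshot(self) -> bool:
        """Allow a screenshot only while the per-task screen cap has room."""
        if self.screens_used >= self.max_screens:
            return False
        self.screens_used += 1
        return True

    def choose_model(self, signal: str) -> str:
        """Prefer the small model; escalate only on listed signals, within the cap."""
        if signal in ESCALATE_ON and self.frontier_used < self.frontier_calls:
            self.frontier_used += 1
            return "frontier"
        return "small"


guard = BudgetGuard()
print(guard.choose_model("routine"))    # prints "small"
print(guard.choose_model("novel_ui"))   # prints "frontier"
print(guard.choose_model("uncertain"))  # prints "small" (frontier cap already used)
```

When `charge` or `take_screenshot` returns False, the caller should stop or escalate rather than continue unmetered.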

No Vibes-Only Outputs: Agents Need Receipts

Automation is only grown up if you can prove what happened. Each agent output should be machine verifiable: what changed, how, and with evidence. For a deeper dive on evidence-first systems, see our post on proof-first automation.

    {
      "ui_task_report": {
        "task_id": "ad_fix_2025_11",
        "goal": "Remove expired promo in AdGroup Q4",
        "changes": [
          {"selector": "#promo_banner", "old": "Save big in Summer", "new": "Shop holiday offers"}
        ],
        "evidence": [
          {"step": 2, "type": "screenshot", "hash": "sha256:..."},
          {"step": 3, "type": "text_capture", "value": "Status: Active"}
        ],
        "policy": {"blocked_phrases": [], "banned_terms": 0},
        "cost": {"usd": 0.95, "tokens": {"input": 12133, "output": 2312}},
        "decision": {"status": "done", "risk_tier": "low"}
      }
    }
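
"Machine verifiable" implies a check that runs before a report is accepted. The sketch below validates the report shape shown above and computes a screenshot hash in the same `sha256:` form. The required-key set and the specific checks are assumptions; adapt them to whatever your critics actually require.

```python
import hashlib
import json
from typing import List

REQUIRED_KEYS = {"task_id", "goal", "changes", "evidence", "cost", "decision"}


def screenshot_hash(image_bytes: bytes) -> str:
    """Content hash for a screenshot, in the 'sha256:<hex>' form used above."""
    return "sha256:" + hashlib.sha256(image_bytes).hexdigest()


def validate_report(raw_json: str) -> List[str]:
    """Return a list of problems; an empty list means the report passes."""
    report = json.loads(raw_json).get("ui_task_report", {})
    problems = []
    missing = REQUIRED_KEYS - set(report)
    if missing:
        problems.append("missing keys: " + ", ".join(sorted(missing)))
    for change in report.get("changes", []):
        if change.get("old") == change.get("new"):
            problems.append(f"no-op change on {change.get('selector')}")
    if not report.get("evidence"):
        problems.append("no evidence attached")
    return problems


report = json.dumps({"ui_task_report": {
    "task_id": "ad_fix_2025_11",
    "goal": "Remove expired promo in AdGroup Q4",
    "changes": [{"selector": "#promo_banner", "old": "Save big in Summer",
                 "new": "Shop holiday offers"}],
    "evidence": [{"step": 2, "type": "screenshot",
                  "hash": screenshot_hash(b"fake image bytes")}],
    "cost": {"usd": 0.95},
    "decision": {"status": "done", "risk_tier": "low"},
}})
print(validate_report(report))  # prints []
```

Because the report is parsed with a strict JSON parser, values such as token counts must be plain numbers without digit separators.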

What You Should Automate Now: Text, Photo, Video, Audio

- Text. CMS updates, metadata patching, quick localizations, all with critics in the loop.

- Photo. Batch image uploads, swap alt text, check for legibility and contrast in production views, not just a Figma comp.

- Video. Automated upload, thumbnail pick, subtitles on, playlist trims across platforms that hide options behind modals.

- Audio. Push new voiceover, add transcripts, verify rich embeds render in every template, not just previews.

Show Me the Metrics: Prove It or Kill It

- Task success rate. How often agents hit the goal with no human save.

- First pass validity. Share of runs that pass all critics on attempt one.

- Exception rate. How often tasks get escalated for hand-holding. Lower is better until quality falls.

- Mean time to done. Trigger-to-complete lag by task family.

- Cost per proofed task. Dollars per complete run, with receipts and logs.

- Defect rate by surface. Post-launch issues caught in production or flagged by support.
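
Most of these metrics fall out of the run logs directly. The sketch below computes five of them from a list of run records. The record fields (`success`, `human_save`, `first_pass`, `escalated`, `has_receipts`, `cost_usd`, `seconds`) are hypothetical, and defining cost per proofed task as total spend divided by proofed runs is one reasonable reading, not the only one. Defect rate by surface is left out because it depends on post-launch data.

```python
from statistics import mean
from typing import List


def summarize(runs: List[dict]) -> dict:
    """Compute headline metrics from per-run records."""
    total = len(runs)
    if total == 0:
        raise ValueError("need at least one run")
    unaided = [r for r in runs if r["success"] and not r["human_save"]]
    proofed = [r for r in runs if r["success"] and r["has_receipts"]]
    done_seconds = [r["seconds"] for r in runs if r["success"]]
    total_cost = sum(r["cost_usd"] for r in runs)
    return {
        "task_success_rate": len(unaided) / total,
        "first_pass_validity": sum(1 for r in runs if r["first_pass"]) / total,
        "exception_rate": sum(1 for r in runs if r["escalated"]) / total,
        "mean_time_to_done_sec": mean(done_seconds) if done_seconds else None,
        # Failed runs still cost money, so they are included in the numerator.
        "cost_per_proofed_task": total_cost / len(proofed) if proofed else None,
    }


runs = [
    {"success": True, "human_save": False, "first_pass": True, "escalated": False,
     "has_receipts": True, "cost_usd": 0.5, "seconds": 40},
    {"success": True, "human_save": True, "first_pass": False, "escalated": True,
     "has_receipts": True, "cost_usd": 1.0, "seconds": 80},
    {"success": False, "human_save": False, "first_pass": False, "escalated": True,
     "has_receipts": False, "cost_usd": 1.0, "seconds": 120},
    {"success": True, "human_save": False, "first_pass": True, "escalated": False,
     "has_receipts": True, "cost_usd": 0.5, "seconds": 60},
]
print(summarize(runs))
```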

Playbooks for Every Team Size

Creators & Micro Teams

- Pick one portal. Hard-code playbooks for a narrow task (for example, swap thumbnail or update promo text).

- Record at least three golden paths with screenshots for regression testing.

- Keep routing to small models, escalate only if something looks really new.

Mid-Market Orgs

- Keep an allowlist of covered portals and tasks with owners and SLAs.

- Enforce critic chains: schema, tone, claims, locale, accessibility. If there is no source, do not ship it.

- Batch long jobs overnight, hold daytime for real-time fixes and escalations.

Enterprise Scale

- Run agents inside secure sandboxes with per-vendor credentials and minimum privilege per flow.

- Maintain policy packs by region and vertical. Every asset gets consent and provenance tracking.

- Run regression tests monthly against at least 20 top flows. Pin model versions, alert on drift.

A Month to Automation: 30 Days to Value

Week 1: Inventory and Target

- List the UI-only workflows slowing the team.

- Pick two targets: one low risk, one medium. Define what success and failure mean in hard terms.

- Create annotated golden paths with screenshots, field hints, and policy notes.

Week 2: Critics and Budgets

- Wire up schema, visual, and policy critics. Block on known fail patterns.

- Set strict budget and retry caps. Always route small-model-first.

- Enable step logs and screenshot journals.

Week 3: Human-in-the-Loop Pilot

- Run 20 to 50 tasks. All sensitive actions require human approval. Log every exception and failure.

- Patch UI locators and visual anchors based on what drifts.

- Sharpen escalation so humans only intervene when they add value.

Week 4: Prove ROI and Expand

- Measure and report on goal rate, time saved, and cost per task compared to manual.

- Auto-publish low-risk tasks, keep human review for the rest.

- Nominate the next two or three portals by measured ROI.

Fast Fixes for Top Failure Modes

| Failure | Why It Happens | Fast Fix |
|---|---|---|
| Clicks on ads or overlays | Decoys styled like real buttons, UI clutter | Require role attributes and text matches; add overlay dismissers |
| Login loop hell | Session expiry, forced 2FA mid-run | Pre-warm sessions, schedule auth refresh, inject human passcode |
| Invisible field errors | Silent validation, error text hidden or obscured | OCR next to fields, scroll and capture suspected error zones |
| Cost spikes | Trigger-happy screenshots and frontier model calls | Step-boundary capture, auto-crop, escalate only on need |
| Policy drift | Out-of-date docs, rules not in code | Codify in JSON, enforce in live critic chain, block on fail |

Actual Automation Governance, Not Just Paperwork

- Tier your risk. Ship low-risk tasks without review, hold medium-risk tasks for editors, and send high-risk tasks straight to legal or the true owner.

- Manifest provenance. Every run should package the agent version, prompts, logs, and screenshots. If someone asks why, you can show them with no guesswork.

- Big red kill switch. One flag freezes a portal, class, or model. No half measures, please.
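
Risk tiering and the kill switch can live in the same routing function, so a frozen scope always wins over a tier decision. This is a sketch with hypothetical names (`KillSwitch`, `route`, and the `portal:`, `class:`, `model:` scope prefixes); unknown tiers deliberately fall through to the strictest route.

```python
ROUTES = {
    "low": "auto_publish",
    "medium": "editor_review",
    "high": "legal_or_owner",
}


class KillSwitch:
    """Holds frozen scopes such as 'portal:ads.example.com' or 'model:small-v1'."""

    def __init__(self) -> None:
        self._frozen = set()

    def freeze(self, scope: str) -> None:
        self._frozen.add(scope)

    def is_frozen(self, *scopes: str) -> bool:
        return any(scope in self._frozen for scope in scopes)


def route(task: dict, switch: KillSwitch) -> str:
    """Check the kill switch first, then route by risk tier."""
    scopes = (
        "portal:" + task["portal"],
        "class:" + task["task_class"],
        "model:" + task["model"],
    )
    if switch.is_frozen(*scopes):
        return "frozen"
    # Unknown or missing tiers get the strictest handling.
    return ROUTES.get(task.get("risk_tier"), "legal_or_owner")


switch = KillSwitch()
task = {"portal": "ads.example.com", "task_class": "promo_text",
        "model": "small-v1", "risk_tier": "low"}
print(route(task, switch))  # prints "auto_publish"
switch.freeze("portal:ads.example.com")
print(route(task, switch))  # prints "frozen"
```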

Practical Realism and The Take

- Autonomy is a budget risk. Unbounded planning and novelty hunting crush budgets, so cap retries and frontier calls, and always review bills.

- Humans are still your taste-test layer. Agents excel at rules, not brand or gut decisions. Keep people in the loop for novel, sensitive, or subjective UI flows.

- Truth data first, cleverness second. Garbage in always means garbage out, only faster. Data and policy hygiene beats clever prompts any day.

The Take: UI Agents Are the Adapter Your Stack Needed

Screen UI agents are not magic. They are the bridge between your modern automations and the crusty, questionably supported, revenue-critical screens nobody has API access for. Treat the screen like an API: typed outputs, strict critics, receipts. Start by targeting the systems where value is highest, code your cost controls, and log everything that moves. Hybrid automation wins. Your next meaningful integration is probably on-screen. Automate it like it counts.