Synthetic Audiences: The New Focus Group Revolution

November 21, 2025

Meet Your 24/7 Focus Group. Seriously.

Modern marketing has a physics problem. You launch assets into turbulent, unpredictable environments, with campaign budgets under the microscope and feedback loops that move at a dinosaur’s pace. Enter synthetic audiences: AI-driven customer simulators that let you pressure test creative, offers, and journeys before you pour gasoline on your media spend. It is basically a wind tunnel for campaigns. You are not replacing real air, just making sure your asset will not explode five seconds after liftoff.

What made this possible over the last few cycles? Two things. First, the latest frontier models like GPT-5, Gemini 2.5, and Llama 4 can reason about buyer tasks, tools, and real-world circumstances far more reliably than models could in the vibe-heavy days. Second, businesses are finally structuring truth packs for products, pricing, policy, and eligibility. Merge the two and you get what-if trials that respect your rules, your brand, and your target ROI. For a deeper dive on this approach, see our post on simulating your market with synthetic audiences.

Synthetic Audiences Explained

A synthetic audience is a digitally simulated cohort of AI agents modeled to behave like your actual customers but constrained by rules you set. Each agent embodies goals, budgets, preferences, and blind spots. They digest your ad creative, flow through conversion paths, and output measurable actions that correlate with real user segments. These are not lorem ipsum zombies; they are simulated decision-makers with receipts.

- Modeled from evidence. Agents encode behavior patterns gleaned from historical campaigns, surveys, and support logs.

- Grounded in your truths. Your SKUs, prices, specs, regions, policies, and brand rules are hardcoded, not left to AI guesswork.

- Evaluated experimentally. Holdouts, control groups, and A/B splits: run all the science you want using the same math as your live ops.

Why Synthetic Is Finally Taking Off

- AI agents can reason and use tools. Comparing plans, checking eligibility, optimizing prices, and executing multi-step tasks are now table stakes.

- Privacy regulations bite. With third-party tracking curtailed and cookies under pressure, synthetic pretests let you cut back on risky, costly live experiments. For context, see Google's 2025 update on next steps for Privacy Sandbox in Chrome.

- No-code stacks have arrived. It is drag-and-drop easy to connect truth sources, simulators, and analytics without waiting for long engineering sprints.

What Synthetic Can and Cannot Replace

| Method | Best Uses | Weak Spots | Cost/Speed |
|---|---|---|---|
| Synthetic audience simulation | Preflight pressure tests, journey QA, edge-case friction, offer comparisons | Complex cultural nuance, reactions to truly novel concepts | Ultra-low cost, near-instant |
| Live in-market A/B | Ground truth, channel-specific flows, unexpected audience moves | Expensive, slow, regulatory risk if claims fail scrutiny | Medium/high cost, slow |
| Panels/interviews | Deep qualitative feedback, emotional resonance, unmet needs | Tiny samples, scheduling pain, subjectivity | High cost, glacial speed |
| Agentic micro-tests (owned channels) | Rapid-fire copy/UX tweaks in email, SMS, on-site modules | Local maxima, small sample bias | Low cost, fast |

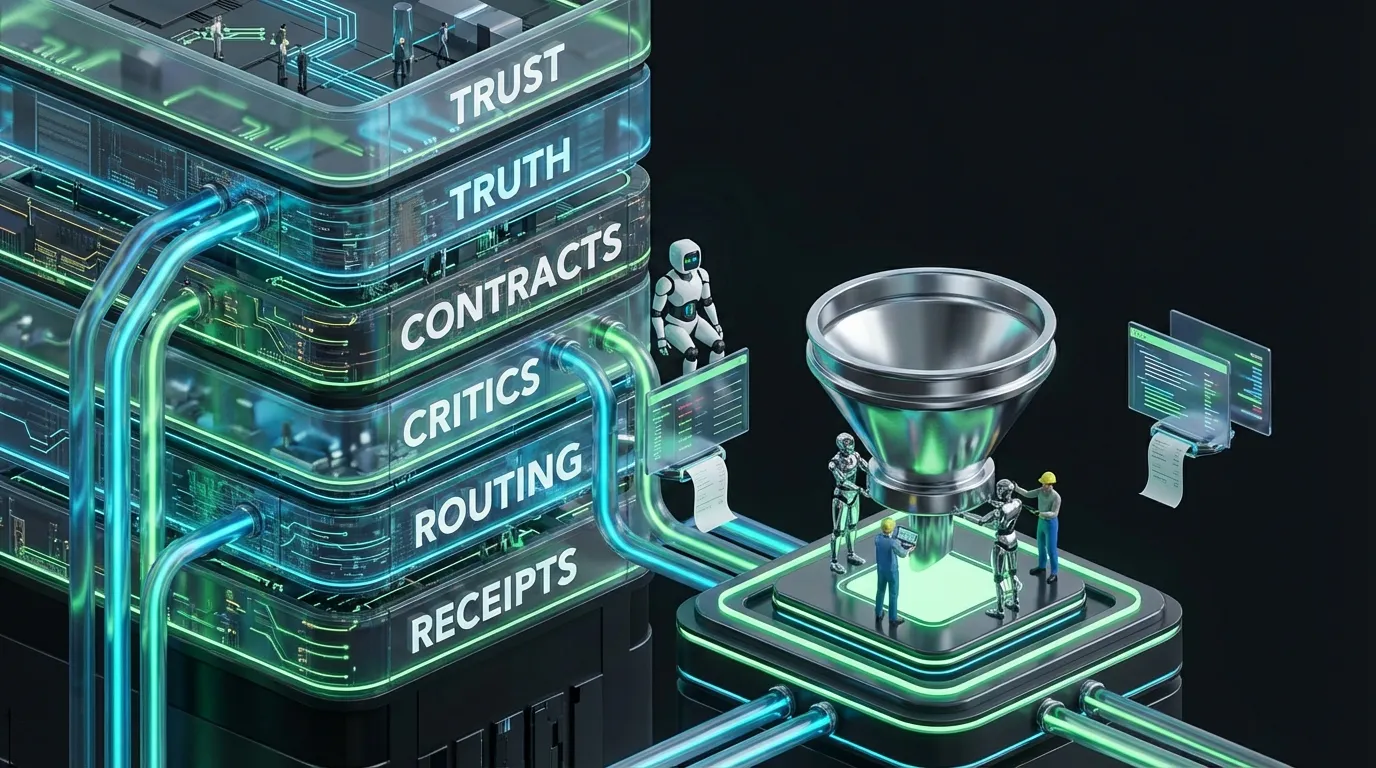

Stacking a Simulation Lab Into Your Workflow

A simulation lab is not a spaceship. If you are intentional about it, you can wire one together from pieces you already have.

[Signals]

→ performance history • CRM segments • support tickets • price/inventory

[Truth Packs]

→ product specs • compliance rules • claim sources • brand DNA/design tokens

[Persona Engine]

→ goals • budgets • channel usage • constraints

[Scenario Builder]

→ tasks (e.g., 'switch provider') • steps • context

[Simulation Surface]

→ render variants • track actions • enforce policies

[Critics]

→ validate: schema • claims • tone • locale • fairness • cost checks

[Metrics]

→ predicted lift • risk alerts • per-insight cost

[Handoff]

→ export winners to ad ops, email, site modules, or assistants

Machine-Usable Personas, Not PowerPoint Artifacts

Agents cannot eat PowerPoint decks. Personify with data, not post-its.

{
  "persona": {
    "id": "ops_mgr_mid_us",
    "goals": ["reduce manual toil", "hit SLAs"],
    "budget_cap_usd": 15000,
    "price_sensitivity": 0.6,
    "proof_demand": 0.8,
    "risk_tolerance": 0.3,
    "channels": ["search", "assistant", "email"],
    "dislikes": ["vague superlatives", "bait pricing"],
    "eligibility": {"regions": ["US"], "industry": ["SaaS", "Retail"]}
  }
}
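One way to make a persona like this machine-usable is to load it into a typed object and refuse anything malformed. The sketch below is illustrative only: the `Persona` class, the `load_persona` helper, and the assumption that the three trait scores sit on a 0 to 1 scale are not part of any particular tool.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Persona:
    id: str
    goals: list
    budget_cap_usd: float
    price_sensitivity: float
    proof_demand: float
    risk_tolerance: float
    channels: list
    dislikes: list
    eligibility: dict


def load_persona(raw: str) -> Persona:
    """Parse persona JSON and reject values an agent could not act on."""
    # Unknown or missing keys raise TypeError, so loose fields never slip through.
    persona = Persona(**json.loads(raw)["persona"])
    # Assumption: the three trait scores live on a 0..1 scale.
    for trait in ("price_sensitivity", "proof_demand", "risk_tolerance"):
        value = getattr(persona, trait)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{trait} must be between 0 and 1, got {value}")
    if persona.budget_cap_usd <= 0:
        raise ValueError("budget_cap_usd must be positive")
    return persona


RAW = """
{"persona": {
  "id": "ops_mgr_mid_us",
  "goals": ["reduce manual toil", "hit SLAs"],
  "budget_cap_usd": 15000,
  "price_sensitivity": 0.6,
  "proof_demand": 0.8,
  "risk_tolerance": 0.3,
  "channels": ["search", "assistant", "email"],
  "dislikes": ["vague superlatives", "bait pricing"],
  "eligibility": {"regions": ["US"], "industry": ["SaaS", "Retail"]}
}}
"""

persona = load_persona(RAW)
print(persona.id, persona.channels)
```

Failing loudly at load time is the point: a persona with a typo in a field name should never reach the simulator.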

Keep Scenario Configs Honest (and Useful)

Define the agent’s job, real-world constraints, and results you will actually measure.

{
  "scenario": {
    "name": "switch_internet_provider",
    "context": {"current_speed_mbps": 100, "work_from_home": true},
    "constraints": {"install_window_days": 7, "max_monthly_usd": 95},
    "success": {"primary": "start_checkout", "secondary": "book_install"},
    "channels": ["assistant", "answer_card", "rich_sms"],
    "variants": ["card_v3_proof_numeric", "card_v4_testimonial"],
    "policy_pack": "claims_and_locale_v2"
  }
}
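To show how the `constraints` block might be enforced, here is a minimal sketch that screens an offer before an agent ever sees it. The offer names and the `monthly_usd` and `install_days` fields are hypothetical; only the two constraint keys come from the scenario config.

```python
def constraint_violations(offer: dict, constraints: dict) -> list:
    """Return human-readable reasons the offer breaks the scenario constraints."""
    problems = []
    if offer["monthly_usd"] > constraints["max_monthly_usd"]:
        problems.append("monthly price exceeds max_monthly_usd")
    if offer["install_days"] > constraints["install_window_days"]:
        problems.append("install slot falls outside install_window_days")
    return problems


# Constraints copied from the scenario config.
constraints = {"install_window_days": 7, "max_monthly_usd": 95}

# Hypothetical offers; the field names are illustrative.
offers = {
    "fiber_500": {"monthly_usd": 89, "install_days": 5},
    "fiber_1000": {"monthly_usd": 110, "install_days": 10},
}

for name, offer in offers.items():
    print(name, constraint_violations(offer, constraints) or "ok")
```

Returning reasons rather than a bare boolean keeps the friction visible in the run logs.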

Critics: Preventing Simulation Fan-Fiction

- Schema critic: Rigid output formats for hassle-free parsing.

- Claims critic: Numeric claims must cite sources from your truth pack, no exceptions.

- Locale critic: Localization for dates, currencies, and spelling.

- Fairness critic: Expose bias and unfair treatment in synthetic cohorts.

- Cost critic: Clamp down on runaway compute, retries, and high-end model requests.

{
  "critics": {
    "schema": {"enforce": true, "schema_id": "AnswerCardV6"},
    "claims": {"numeric_require_source": true},
    "locale": {"region": "US"},
    "fairness": {"enabled": true, "max_delta_accept_rate": 0.05},
    "budget": {"max_cost_usd": 0.008, "retry_limit": 1, "frontier_calls": 1}
  }
}
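As an illustration of the fairness critic, the sketch below reads `max_delta_accept_rate` as the largest allowed gap between the highest and lowest cohort acceptance rates. That reading is an assumption, and the cohort labels and counts are made up.

```python
def fairness_check(accepts_by_cohort: dict, max_delta: float = 0.05):
    """Compare acceptance rates across cohorts against a maximum allowed gap.

    accepts_by_cohort maps a cohort label to (accepted, total) counts.
    """
    rates = {c: accepted / total for c, (accepted, total) in accepts_by_cohort.items()}
    delta = max(rates.values()) - min(rates.values())
    return delta <= max_delta, delta, rates


# Hypothetical counts for three synthetic cohorts.
passed, delta, rates = fairness_check(
    {"cohort_a": (310, 1000), "cohort_b": (280, 1000), "cohort_c": (220, 1000)}
)
print(passed, round(delta, 3), rates)
```

A failed check is a prompt to audit, not proof of bias; small cohorts can produce gaps from noise alone.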

Calibration Is Mandatory, Not Optional

Even state-of-the-art simulators drift away from the chaos of real humans. Enter calibration.

- Use train-test splits: Hold back some real campaigns as a calibration set, and never blend them into the data used to build the simulator.

- Match distributions: Align clickthrough, acceptance, and sensitivity slopes with reality.

- Effect direction checks: Correlate simulated lift with live campaign results across variants.

- Version everything: Persona kernels, critics, and truth packs all get version numbers. No stealth edits.

{
  "calibration": {
    "metrics": ["accept_rate", "click_rate", "price_elasticity"],
    "targets": {"accept_rate": 0.31, "click_rate": 0.07},
    "tolerances": {"accept_rate": 0.03, "click_rate": 0.01},
    "effect_agreement_threshold": 0.7,
    "version": "sim_kernel_2.4"
  }
}
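A calibration gate built on the `targets` and `tolerances` fields could look something like this. It is a sketch: the simulated values are invented, and `calibration_report` is an illustrative helper that only checks metrics with a stated target (so `price_elasticity` is skipped here).

```python
def calibration_report(simulated: dict, targets: dict, tolerances: dict) -> dict:
    """Flag each targeted metric as within (True) or outside (False) tolerance."""
    return {
        metric: abs(simulated[metric] - target) <= tolerances[metric]
        for metric, target in targets.items()
    }


# Targets and tolerances copied from the calibration config.
targets = {"accept_rate": 0.31, "click_rate": 0.07}
tolerances = {"accept_rate": 0.03, "click_rate": 0.01}

# Hypothetical output from one simulation batch.
simulated = {"accept_rate": 0.33, "click_rate": 0.085}

report = calibration_report(simulated, targets, tolerances)
print(report)
```

In this made-up batch the accept rate lands inside its band while the click rate overshoots, which would send the persona kernel back for tuning before any variant results are trusted.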

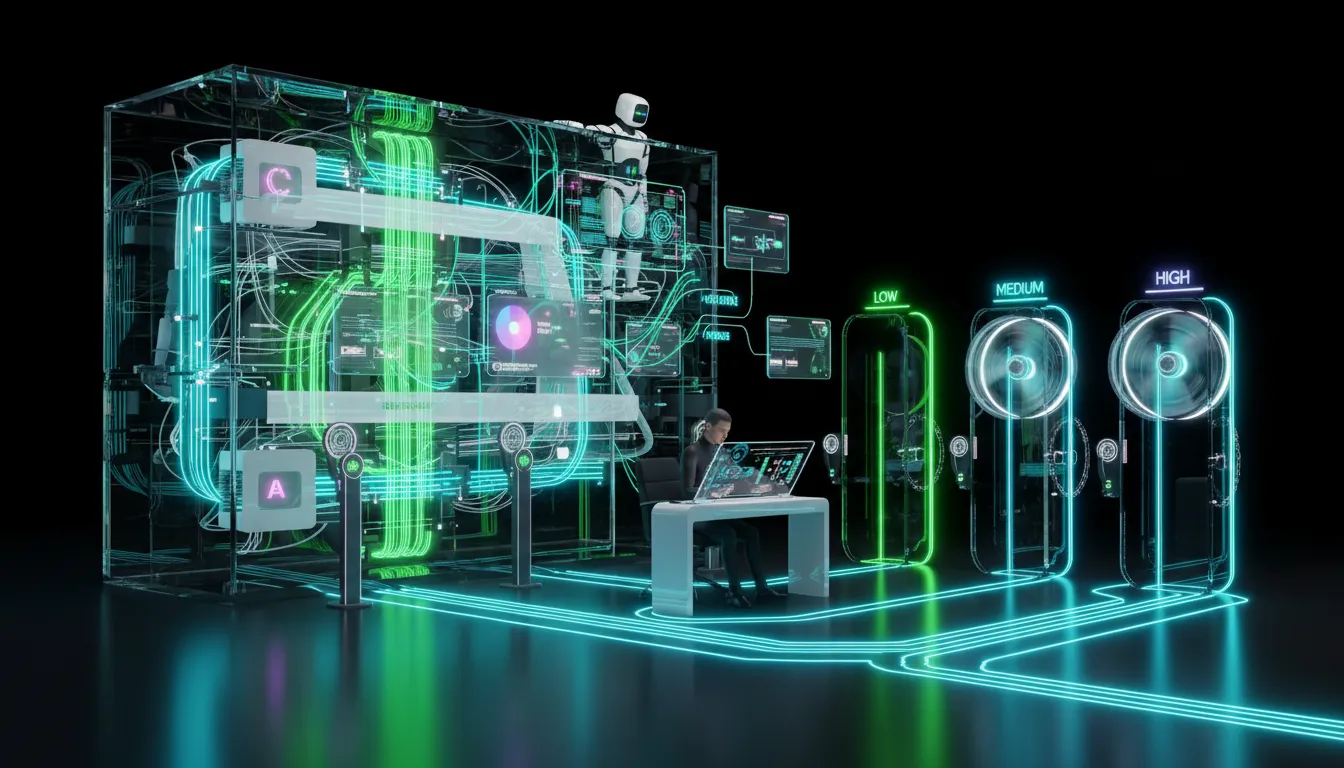

Budget Control: Make Your Lab Cheaper Than Your Media

| Cost Driver | Symptom | Control Mechanism |
|---|---|---|
| Recursive planning | Agents get stuck overthinking | Confidence-based early stopping |
| Frontier model overuse | Everything escalates to the biggest model | Start with smaller models, escalate selectively |
| Variant sprawl | 200+ tiny-probability concepts | Capped variants, min-delta pruning |
| Over-retrieval | Bloated prompts, draggy runs | Adaptive top-k ranking, ceiling limits |

{
  "budget": {
    "max_runs": 5000,
    "max_cost_per_run_usd": 0.003,
    "retry_limit": 1,
    "frontier_calls_per_100_runs": 5,
    "variant_caps": {"per_concept": 6}
  }
}
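Here is one possible shape for a small-model-first router that respects these caps. Everything beyond the config keys is assumed for illustration: the `BudgetGuard` class, the 0.8 confidence threshold, the per-run costs, and the choice to treat `frontier_calls_per_100_runs` as a cumulative allowance per started block of 100 runs.

```python
from dataclasses import dataclass


@dataclass
class BudgetGuard:
    max_runs: int = 5000
    max_cost_per_run_usd: float = 0.003
    frontier_calls_per_100_runs: int = 5
    runs: int = 0
    frontier_calls: int = 0
    spent_usd: float = 0.0

    def may_escalate(self) -> bool:
        # Assumption: 5 frontier calls are granted for each started block of 100 runs.
        allowance = self.frontier_calls_per_100_runs * (self.runs // 100 + 1)
        return self.frontier_calls < allowance

    def record(self, cost_usd: float, used_frontier: bool) -> None:
        if self.runs >= self.max_runs:
            raise RuntimeError("max_runs reached")
        if cost_usd > self.max_cost_per_run_usd:
            raise RuntimeError("run exceeded max_cost_per_run_usd")
        self.runs += 1
        self.spent_usd += cost_usd
        self.frontier_calls += int(used_frontier)


def choose_model(small_model_confidence: float, guard: BudgetGuard,
                 threshold: float = 0.8) -> str:
    """Stay on the small model unless it is unsure and allowance remains."""
    if small_model_confidence >= threshold or not guard.may_escalate():
        return "small"
    return "frontier"


guard = BudgetGuard()
for _ in range(10):
    # Hypothetical: the small model is unsure on every run.
    model = choose_model(small_model_confidence=0.5, guard=guard)
    cost = 0.0025 if model == "frontier" else 0.001
    guard.record(cost, used_frontier=(model == "frontier"))

print(guard.runs, guard.frontier_calls, round(guard.spent_usd, 4))
```

Even with an unsure small model on every run, escalation stops once the allowance for the current block is used up.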

What to Test Right Now with Synthetic Audiences

- Headline testing: See which proof-driven answer cards outperform for specific surfaces.

- Claims framing: Stack numeric versus testimonial versus social proof for identical offers.

- Funnel friction: Find drop-off hot spots in checkout simulations.

- Localization QA: Catch translation and tone fails before real customers do.

- Pricing sensitivity: Predict acceptance curves for subtle price shifts.

Reliable Measurement Beats Vibes

- Effect direction agreement: How often do your sim and live tests pick the same winners?

- Rank correlation: Does the sim order your variants the way live results do, and does your top 3 in simulation match your top 3 in live, within a margin?

- Coverage: How many of your priority personas and channels does the sim credibly represent?

- Cost per insight: What did you spend for a statistically confident answer?

- Time to decision: Minutes from test design to sim recommendation.
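The first two metrics are easy to compute once you have lift estimates from both worlds. The sketch below assumes lift is measured against a shared control and uses invented numbers; the Spearman shortcut formula only holds when there are no tied values.

```python
def direction_agreement(sim_lift: dict, live_lift: dict) -> float:
    """Share of variants where sim and live agree on the sign of the lift."""
    agree = sum((sim_lift[v] > 0) == (live_lift[v] > 0) for v in sim_lift)
    return agree / len(sim_lift)


def spearman_rho(sim_lift: dict, live_lift: dict) -> float:
    """Spearman rank correlation; this shortcut formula assumes no tied values."""
    def ranks(scores: dict) -> dict:
        ordered = sorted(scores, key=scores.get, reverse=True)
        return {variant: pos for pos, variant in enumerate(ordered, start=1)}

    sim_rank, live_rank = ranks(sim_lift), ranks(live_lift)
    n = len(sim_lift)
    d_squared = sum((sim_rank[v] - live_rank[v]) ** 2 for v in sim_lift)
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


# Hypothetical lift versus control for four variants.
sim_lift = {"v1": 0.04, "v2": -0.01, "v3": 0.02, "v4": 0.06}
live_lift = {"v1": 0.03, "v2": 0.01, "v3": 0.035, "v4": 0.05}

print("direction agreement:", direction_agreement(sim_lift, live_lift))
print("rank correlation:", round(spearman_rho(sim_lift, live_lift), 3))
```

With only a handful of variants both numbers are noisy, so track them across many test cycles rather than reading too much into a single batch.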

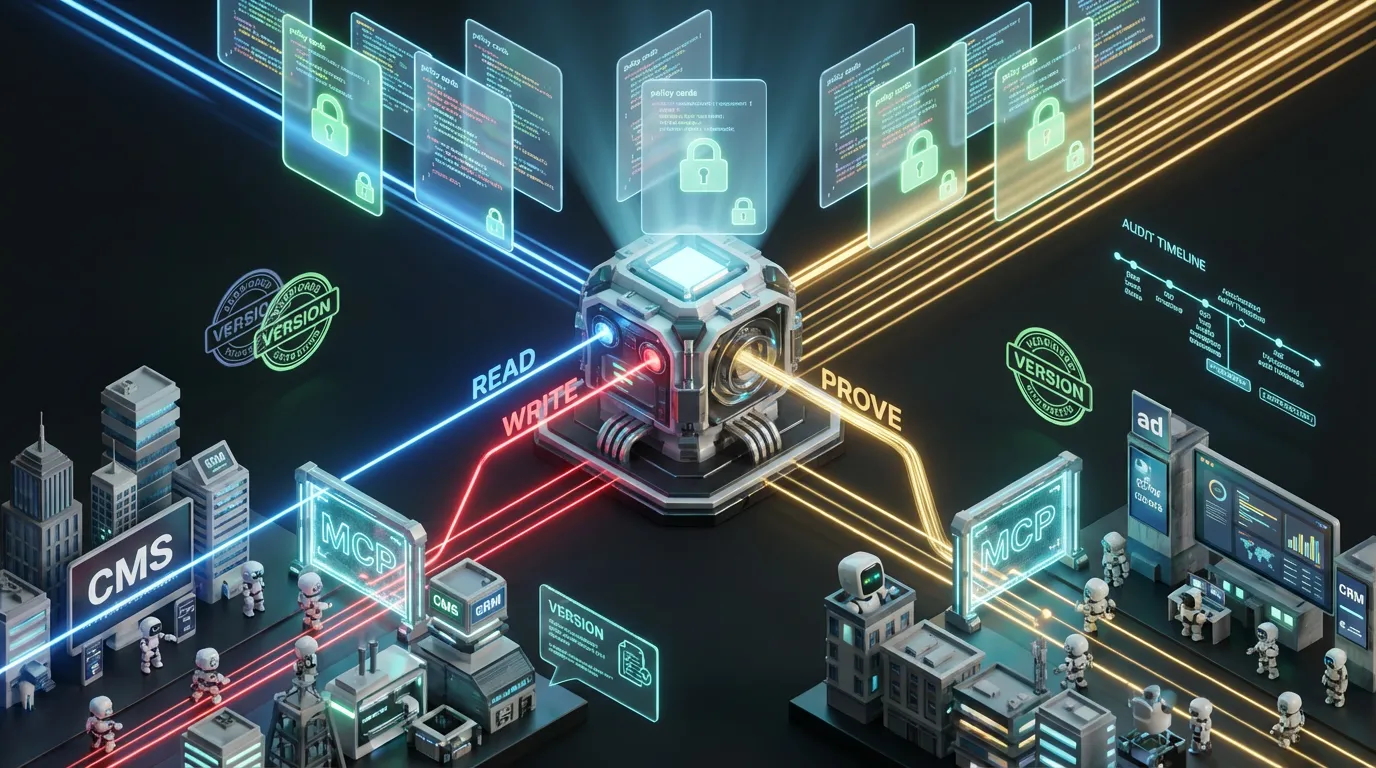

The Automation Blueprint: No Magic, Just Wiring

You do not need a rebuild. Drop this into your current stack for an instant upgrade.

- Truth pack endpoint: Serve prices, specs, claims, tokens, and rules from a single real-time API.

- Schema-first assets: Define answer cards and modular content as typed objects, not blobs of copy.

- Critic chain: Validate stepwise: schema, claims, locale, fairness, cost. Auto-repair small misses, fail fast on big ones.

- Observability: Log model versions, prompts, used sources, run cost, and critic results per run.

- Automated handoffs: Export winners straight to your email, ad, or CMS systems.
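The critic chain idea can be sketched as a list of functions that each either return a (possibly repaired) asset or raise. The card fields, the `sla_doc_12` source ID, and the single spelling repair are all hypothetical stand-ins for real schema, claims, and locale rules.

```python
import re


class CriticFailure(Exception):
    """Raised when a critic finds a problem too big to auto-repair."""


def schema_critic(card: dict) -> dict:
    missing = {"headline", "claims"} - set(card)
    if missing:
        raise CriticFailure(f"schema: missing fields {sorted(missing)}")
    return card


def claims_critic(card: dict) -> dict:
    # Any claim containing a digit must cite a source ID.
    for claim in card["claims"]:
        if re.search(r"\d", claim["text"]) and not claim.get("source_id"):
            raise CriticFailure(f"claims: uncited number in {claim['text']!r}")
    return card


def locale_critic(card: dict) -> dict:
    # Small miss: auto-repair one UK spelling for a US locale (illustrative).
    return {**card, "headline": card["headline"].replace("colour", "color")}


CHAIN = [schema_critic, claims_critic, locale_critic]


def run_chain(card: dict, chain=CHAIN) -> dict:
    """Run critics in order; the first CriticFailure stops the chain."""
    for critic in chain:
        card = critic(card)
    return card


good = {
    "headline": "Pick your colour, keep your speed",
    "claims": [{"text": "99.9% uptime", "source_id": "sla_doc_12"}],
}
print(run_chain(good)["headline"])

try:
    run_chain({"headline": "Big savings", "claims": [{"text": "Save 30% today"}]})
except CriticFailure as err:
    print("rejected:", err)
```

Ordering matters: cheap structural checks run first so that expensive or judgment-heavy critics never see malformed assets.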

Governance and Ethics for the Automation-First Age

- Zero PII exposure: Only use aggregate, consented signals. Private data is radioactive; treat it accordingly.

- Fairness auditing: Compare synthetic responses by protected attributes and audit any unexplained gaps.

- Policy as code: All business and legal constraints must be code-executable, not buried in PDFs.

- Receipts for everything: Every asset and conclusion gets a full provenance manifest.

{
  "provenance": {
    "sim_kernel": "2.4.1",
    "models": {"router": "small_first_v7", "reasoner": "reason_llm_v3"},
    "truth_pack": "pricing_claims_qa_2025_11",
    "critics": ["schema_v6", "claims_v3", "fairness_v2"],
    "cost_usd": 12.40,
    "runs": 4800
  }
}
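A manifest like this is also where you can sanity-check spend. The sketch below divides total cost by runs and compares it with the 0.003 USD per-run cap from the budget config. The SHA-256 digest is an added suggestion for detecting stealth edits, not something the manifest itself prescribes.

```python
import hashlib
import json

# Manifest values copied from the provenance example.
provenance = {
    "sim_kernel": "2.4.1",
    "models": {"router": "small_first_v7", "reasoner": "reason_llm_v3"},
    "truth_pack": "pricing_claims_qa_2025_11",
    "critics": ["schema_v6", "claims_v3", "fairness_v2"],
    "cost_usd": 12.40,
    "runs": 4800,
}

# Average cost per run, checked against max_cost_per_run_usd from the budget config.
cost_per_run = provenance["cost_usd"] / provenance["runs"]
within_cap = cost_per_run <= 0.003

# Canonical serialization (sorted keys) so the same manifest always hashes the same.
canonical = json.dumps(provenance, sort_keys=True, separators=(",", ":"))
digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()

print(f"cost per run: ${cost_per_run:.5f}, within cap: {within_cap}")
print("manifest digest:", digest[:16])
```

Here 12.40 USD over 4,800 runs works out to roughly 0.0026 USD per run, just under the cap.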

Your Four-Week Rollout, Simplified

Week 1: Inventory and Schemas

- Move source-of-truth product data with references into structured JSON.

- Sketch strict schemas for cards and email modules, no loose fields.

- Pick one acquisition and one retention scenario to simulate.

Week 2: Personas and Critics

- Create three machine-usable personas with budget and channel specifics.

- Implement critics for schema, claims, locale, fairness, cost.

- Set caps for runs, retries, and high-end model calls.

Week 3: Calibrate and Compare

- Simulate 2 to 3 creative variants through the lab and log predicted lifts.

- Cross-check against a live test sample or recent campaign metrics; tweak until rank correlation stabilizes.

- Lock all versions for this cycle so results stay comparable.

Week 4: Automate Handoffs

- Wire winner export directly to ads, email, or CMS without copy and paste bottlenecks.

- Track cost per insight, effect agreement, and time to decision for reporting.

- Nominate the next batch of scenarios and repeat.

Failure Modes and Fast Fixes

| Failure | Why | Fix |
|---|---|---|
| Sim loves a line that flops live | Overfit persona data | Retrain using latest truths and calibrate on effect direction |
| Uncited numbers slide in | Claims critic misconfigured | Enforce strict source IDs, zero manual bypasses |
| Costs spiral | No limits on retries or model swaps | Clamp retries to one, enforce small-model first, set strict budgets |
| Persona mush | Vague, generic specs | Add budget caps, channel preferences, and disqualifiers |
| Bias creeps into best picks | Unbalanced synthetic group | Audit acceptance rates and rebalance as needed |

No-code and Low-code: For the Rest of Us

You do not need a data science SWAT team or a lab coat budget. The practical loop: no-code or low-code routines fetch your truth packs, generate creative to strict schemas, pipe it into the sim with your calibrated personas, run critics, then publish straight to your CMS or DAM with provenance stamped. The winner flows into your ad manager with zero human copy and paste. Reserve human review for high-risk or truly novel assets. Ship the rest and move on.

The Economics and Reality Check

- Autonomy is not free: Highly capable agents burn tokens, so keep their decision loops tight.

- Human taste is still critical: Brand-defining moves and deep cultural nuance are where your team shines.

- Benchmarks are a guide, not gospel: Your own calibration beats any leaderboard stat.

- If the result is not in the logs, it never happened: Step-by-step receipts are non-negotiable.

The COEY Take

Synthetic audiences are not a crystal ball. They are wind tunnels designed to help you preflight creative and offers, flush out hidden friction, and stack up your best odds before letting real money or reputation fly. Encode your product truths, build machine-usable personas, set up critic chains, calibrate everything against your actual market, and cap costs and model scale to match your needs. Most importantly, automate your handoff so your winners are live without burning cycles on human copy and paste. Nail this and you will reduce campaign waste, move very fast, and avoid the kind of learning that leaves scars in public. No AI magic required, just disciplined, no-nonsense simulation labs plugged into your marketing OS.