Synthetic Focus Groups for Smarter Creative

November 25, 2025

Marketers love a new toy, especially if it cuts guesswork and burn. Enter synthetic focus groups, the high-speed, AI-powered panels that flip the script on pre-launch creative testing. Forget lethargic, costly human panels or the roulette wheel of unpaid internet feedback. With platforms layering the latest Llama 4, Gemini 2.5, and GPT‑5 into structured evaluation loops, synthetic audiences are no longer a novelty; they are a competitive advantage. When woven into a real hybrid workflow, these signal boosters filter duds, accelerate iteration, and guide your creators with feedback that feels like an upgrade, not a chokehold. For a deeper primer on the concept, see our take on synthetic audiences.

Why Synthetic Testing Has Real Teeth in 2025

Three tectonic shifts have made synthetic evaluation not just possible, but practical:

- AI Models Have Grown Up: State-of-the-art architectures like Claude Haiku 4.5 and Gemini 2.5 Pro now understand tone, persuasion, and conversion nuances across formats.

- Scoring Moved Beyond Vibes: Structured outputs, enforced schemas, and programmable critics mean you can rely on more than gut feel and an obsession with engagement metrics.

- Costs Have Collapsed: Routing, budget capping, and model specialization make twenty variants cost what two would have cost just a year ago.

Mix this cocktail and you get instant pre-market signals: cheap, granular, and shockingly predictive, once you tune the loop against actual campaign results.

What Synthetic Panels Are, And What They Are Not

A synthetic focus group is not a mechanical turk of poorly paid humans. Think of it as a rotating cast of well-defined AI personas that read, watch, or listen to your creative and score it against criteria that map to real business KPIs. These bots are your fast filter, a first line of defense and a creativity multiplier. They do not replace human intuition, good taste, or live sales signals.

| Method | Speed | Cost | Signal Quality | Best Use |
|---|---|---|---|---|
| Human panels | Slow | High | Nuance, context | Flagship creative, sensitive topics |
| Live A/B | Medium | Media spend | Ultimate truth | In-market winner validation |
| Synthetic panel | Fast | Low | Risk screening, idea shaping | Pre-flight, variant pruning |
| Hybrid loop | Fast to live | Disciplined | Great, when calibrated | Most campaigns, channel refresh |

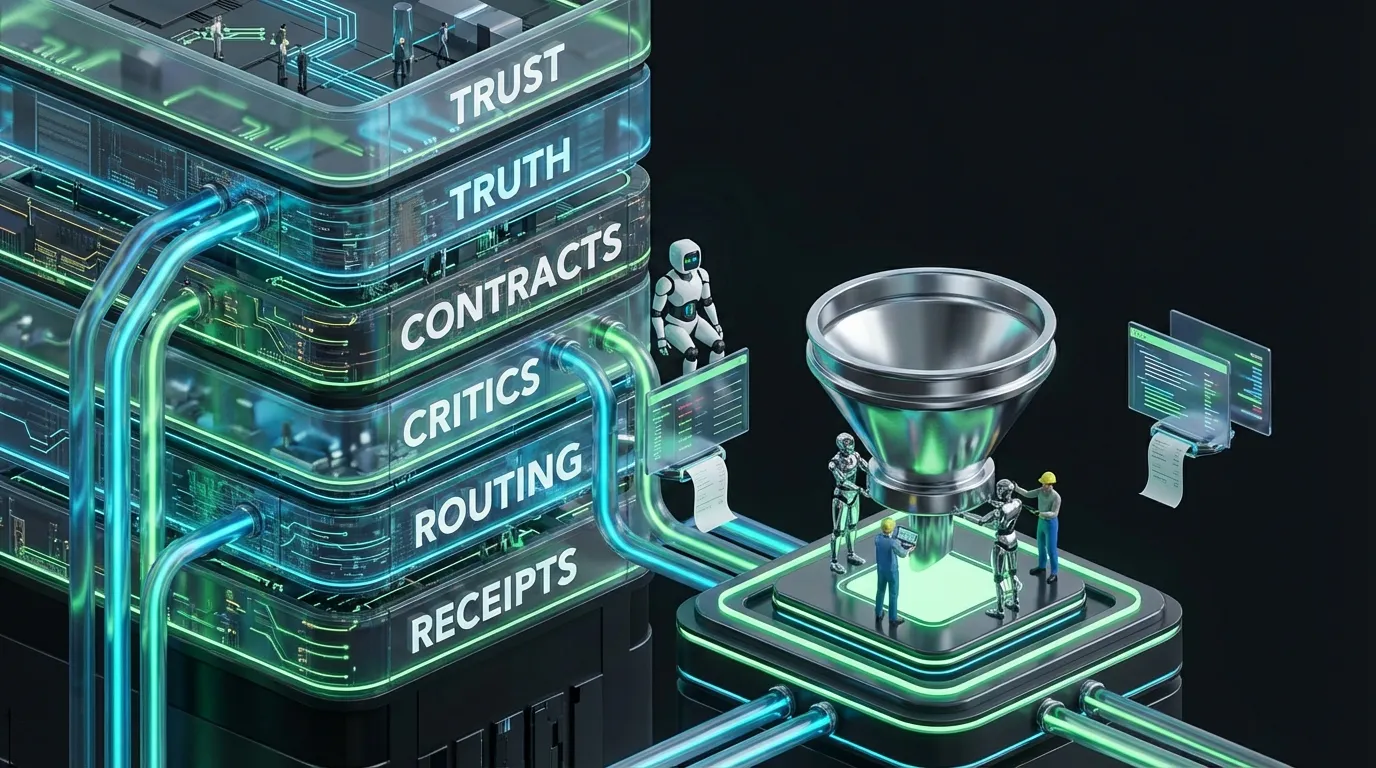

The Hybrid Evaluation Stack: Automation-First and Still Human-Aligned

This is not “AI eats research”. It is “AI prunes waste and boosts taste”. Here is your assembly line:

[Truth]

→ brand tokens • product claims • proof • banned phrases • glossary

[Generate]

→ variants to strict schemas (headline • body • CTA • proof • captions)

[Simulate]

→ synthetic panel per persona • channel • locale

[Critic]

→ schema • tone • claims • accessibility • cost

[Calibrate]

→ compare panel outputs to live campaign data • tweak weights

[Select]

→ top variants to humans or micro live test

[Learn]

→ retro the rubrics • bin duds • pin winners

Notice how calibration is built in. Your automation only works if it mimics what moves the needle in the wild.

Do Not Prompt, Productize Your Synthetic Panel

No single-shot prompts. No genius templates. If you want repeatable value, structure your synthetic panel as a product: modular, measurable, disciplined. Here is what that looks like:

Panel Blueprint

{
  "panel": {
    "name": "b2b_smb_paid_social_v2",
    "personas": [
      {
        "id": "ops_manager",
        "goals": ["save_time", "reduce_errors"],
        "objections": ["too_expensive", "workflow_disruption"],
        "channel": "paid_social",
        "locale": "en-US"
      },
      {
        "id": "founder",
        "goals": ["grow_revenue", "ship_faster"],
        "objections": ["lock_in", "setup_complex"],
        "channel": "paid_social",
        "locale": "en-US"
      }
    ],
    "rubric": {
      "clarity": {"weight": 0.25, "criteria": ["plain_language", "benefit"]},
      "fit": {"weight": 0.25, "criteria": ["persona_goal_alignment", "channel_style"]},
      "proof": {"weight": 0.20, "criteria": ["credible_claim", "source_visible"]},
      "action": {"weight": 0.20, "criteria": ["CTA_strength", "minimal_friction"]},
      "risk": {"weight": 0.10, "criteria": ["policy_breach", "banned_phrases"]}
    },
    "outputs": ["scorecard", "suggested_edits", "risk_flags"]
  }
}

Variant Schema: No More Franken-Ads

{
  "$schema": "https://json-schema.org/draft/2020-12/schema",
  "title": "AdVariantV3",
  "type": "object",
  "required": ["headline", "body", "cta", "proof"],
  "properties": {
    "headline": {"type": "string", "maxLength": 70},
    "body": {"type": "string", "maxLength": 160},
    "cta": {"type": "string", "enum": ["Book a demo", "Try free", "See how"]},
    "proof": {
      "type": "object",
      "properties": {
        "type": {"enum": ["numeric", "testimonial", "third_party"]},
        "text": {"type": "string"},
        "source_id": {"type": "string"}
      },
      "required": ["type", "text", "source_id"]
    },
    "alt_text": {"type": "string", "maxLength": 120}
  }
}
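To make the schema above concrete, here is a minimal sketch of a hand-rolled check that mirrors the AdVariantV3 constraints using only the Python standard library. The function name `validate_variant` and the error strings are assumptions for illustration. In practice a full JSON Schema validator would likely do this job; this version just shows what "strict schema" means at the field level.

```python
# Hypothetical, hand-rolled check mirroring the AdVariantV3 constraints.
# Limits and allowed values are copied from the schema; names are illustrative.
ALLOWED_CTAS = ("Book a demo", "Try free", "See how")
PROOF_TYPES = ("numeric", "testimonial", "third_party")
MAX_LENGTHS = {"headline": 70, "body": 160, "alt_text": 120}
REQUIRED_FIELDS = ("headline", "body", "cta", "proof")
REQUIRED_PROOF_FIELDS = ("type", "text", "source_id")


def validate_variant(variant: dict) -> list[str]:
    """Return a list of violations; an empty list means the variant passes."""
    errors = []

    # Top-level required fields.
    for field in REQUIRED_FIELDS:
        if field not in variant:
            errors.append(f"missing required field: {field}")

    # String type and length limits (alt_text is optional).
    for field, limit in MAX_LENGTHS.items():
        value = variant.get(field)
        if value is None:
            continue
        if not isinstance(value, str):
            errors.append(f"{field} must be a string")
        elif len(value) > limit:
            errors.append(f"{field} exceeds {limit} characters")

    # CTA must be one of the approved options.
    if "cta" in variant and variant["cta"] not in ALLOWED_CTAS:
        errors.append("cta not in allowed list")

    # Proof object must be complete and use a known proof type.
    proof = variant.get("proof")
    if proof is not None:
        if not isinstance(proof, dict):
            errors.append("proof must be an object")
        else:
            for key in REQUIRED_PROOF_FIELDS:
                if key not in proof:
                    errors.append(f"proof missing required field: {key}")
            if "type" in proof and proof["type"] not in PROOF_TYPES:
                errors.append("proof.type not in allowed list")

    return errors
```

Returning a list of violations rather than raising on the first one is a deliberate choice here: a repair step can then fix every problem in a single pass instead of discovering them one retry at a time.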

From Evaluator Vibes to Scorecards You Can Ship

Forget endless essays. Good evaluators output structured, bankable data: scorecards, not riddles.

{
  "scorecard": {
    "persona": "ops_manager",
    "scores": {
      "clarity": 0.84,
      "fit": 0.77,
      "proof": 0.68,
      "action": 0.80,
      "risk": 0.95
    },
    "suggested_edits": [
      {"field": "headline", "suggest": "Automate approvals: no workflow change needed"},
      {"field": "proof.text", "suggest": "Teams slashed approval time 46%"}
    ],
    "risk_flags": ["banned_phrase: guaranteed"],
    "decision": {"route": "repair", "reason": "proof subpar, banned phrase present"}
  }
}
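One way a scorecard like this could turn into a routing decision is sketched below. The weights come from the panel blueprint rubric. The 0.70 floor, the function names, and the rule "any weak dimension or any risk flag means repair" are assumptions for illustration, not a documented policy. The sketch also assumes a higher risk score means a safer asset.

```python
# Weights copied from the panel blueprint rubric.
RUBRIC_WEIGHTS = {"clarity": 0.25, "fit": 0.25, "proof": 0.20, "action": 0.20, "risk": 0.10}

# Hypothetical per-dimension floor; tune it against your own live results.
SCORE_FLOOR = 0.70


def composite_score(scores: dict, weights: dict = RUBRIC_WEIGHTS) -> float:
    """Weighted average of rubric scores, normalized by the total weight."""
    total_weight = sum(weights.values())
    return sum(weights[name] * scores[name] for name in weights) / total_weight


def route(scores: dict, risk_flags: list, floor: float = SCORE_FLOOR) -> dict:
    """Send a variant to repair if any dimension is weak or any risk flag is set."""
    weak = sorted(name for name, value in scores.items() if value < floor)
    reasons = [f"{name} below floor" for name in weak]
    if risk_flags:
        reasons.append("risk flags present")
    decision = "repair" if reasons else "promote_to_live_cell"
    return {"route": decision, "reasons": reasons}
```

Fed the example scorecard, this works out to a composite of roughly 0.79 and a repair route, because proof sits under the assumed floor and a banned phrase was flagged. That lines up with the decision shown in the scorecard.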

Calibration, From Toy to Trusted Advisor

A synthetic panel that is not anchored to real results is, at best, a novelty. Track how well each evaluator’s composite score predicts true lifts in CTR, reply rate, or add-to-cart. You do not need R-squared perfection. You need a clear signal and the confidence to cut bottom-quartile variants and redirect resources to what works.

Calibration Manifest: Dials and Knobs Made Real

{
  "calibration": {
    "panel": "b2b_smb_paid_social_v2",
    "metric": "ctr",
    "window": "rolling_90d",
    "weights": {"clarity": 0.3, "fit": 0.2, "proof": 0.3, "action": 0.2},
    "correlation_target": 0.45,
    "update_rule": "weekly weights by maximizing Spearman correlation",
    "holdout": {"share": 0.15, "method": "geo_split"}
  }
}

Tip: Even 0.3 to 0.5 correlation between synthetic panels and live response justifies trimming variants before you send them into the wild. That is real ad spend reclaimed.
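For readers who want to see the calibration check itself, here is a minimal standard-library sketch of Spearman rank correlation between panel composite scores and a live metric such as CTR. The function names are assumptions, and the 0.45 default is simply the `correlation_target` from the manifest. Ties get average ranks, which is the usual convention.

```python
from statistics import mean


def average_ranks(values: list) -> list:
    """1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def spearman(panel_scores: list, live_metric: list) -> float:
    """Spearman correlation: Pearson correlation computed on the ranks."""
    if len(panel_scores) != len(live_metric) or len(panel_scores) < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    rx, ry = average_ranks(panel_scores), average_ranks(live_metric)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (var_x * var_y) ** 0.5


def meets_target(panel_scores: list, live_metric: list, target: float = 0.45) -> bool:
    """True when the panel tracks live results at least as well as the target."""
    return spearman(panel_scores, live_metric) >= target
```

Rank correlation suits this job because the panel only needs to order variants correctly. It does not need to predict the exact CTR.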

Preventing AI Hallucinations and Brand Drift

Nothing torpedoes credibility faster than wild stats or off-brand language. Critic chains keep synthetic panels in check by enforcing tone, claim sourcing, accessibility, and more. Overdo the rules and you choke creativity; apply them well and you protect your brand.

{
  "critic_chain": {
    "schema": {"enforce": true},
    "tone": {"rules": ["specific"], "ban": ["vague", "hype"]},
    "claims": {"numeric_require_source": true, "allowed_sources": ["product_specs", "case_studies"]},
    "locale": {"currency": "auto", "date": "auto"},
    "accessibility": {"alt_text": "required", "contrast_min": 4.5},
    "cost": {"max_asset_usd": 1.10}
  }
}
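As a rough illustration of the claims step in that chain, the sketch below flags banned phrases and numeric claims that lack an allowed source. The `source_registry` mapping (source ID to source category), the flag strings, and the "any digit counts as a numeric claim" heuristic are all assumptions. The only banned phrase listed is the one that appears in the scorecard example.

```python
import re

# "guaranteed" is taken from the scorecard example; a real list would come
# from your truth store of banned phrases.
BANNED_PHRASES = ("guaranteed",)
ALLOWED_SOURCES = ("product_specs", "case_studies")

# Crude heuristic (assumption): any digit in the copy counts as a numeric claim.
HAS_DIGIT = re.compile(r"\d")


def claims_critic(variant: dict, source_registry: dict) -> list[str]:
    """Return risk flags for banned phrases and unsourced numeric claims.

    source_registry is a hypothetical lookup of source_id -> source category.
    """
    flags = []
    proof = variant.get("proof") or {}
    copy = " ".join(
        str(part)
        for part in (variant.get("headline", ""), variant.get("body", ""), proof.get("text", ""))
    )

    # Whole-word match so "guaranteed" does not fire on unrelated substrings.
    lowered = copy.lower()
    for phrase in BANNED_PHRASES:
        if re.search(rf"\b{re.escape(phrase)}\b", lowered):
            flags.append(f"banned_phrase: {phrase}")

    # Numeric claims need a source ID that resolves to an allowed category.
    if HAS_DIGIT.search(copy):
        category = source_registry.get(proof.get("source_id"))
        if category is None:
            flags.append("unsourced_numeric_claim")
        elif category not in ALLOWED_SOURCES:
            flags.append(f"source_not_allowed: {category}")

    return flags
```

The digit heuristic will over-flag things like product version numbers. For a first filter that is arguably the safer direction to be wrong in, since a human can clear a false alarm faster than a brand can retract an invented stat.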

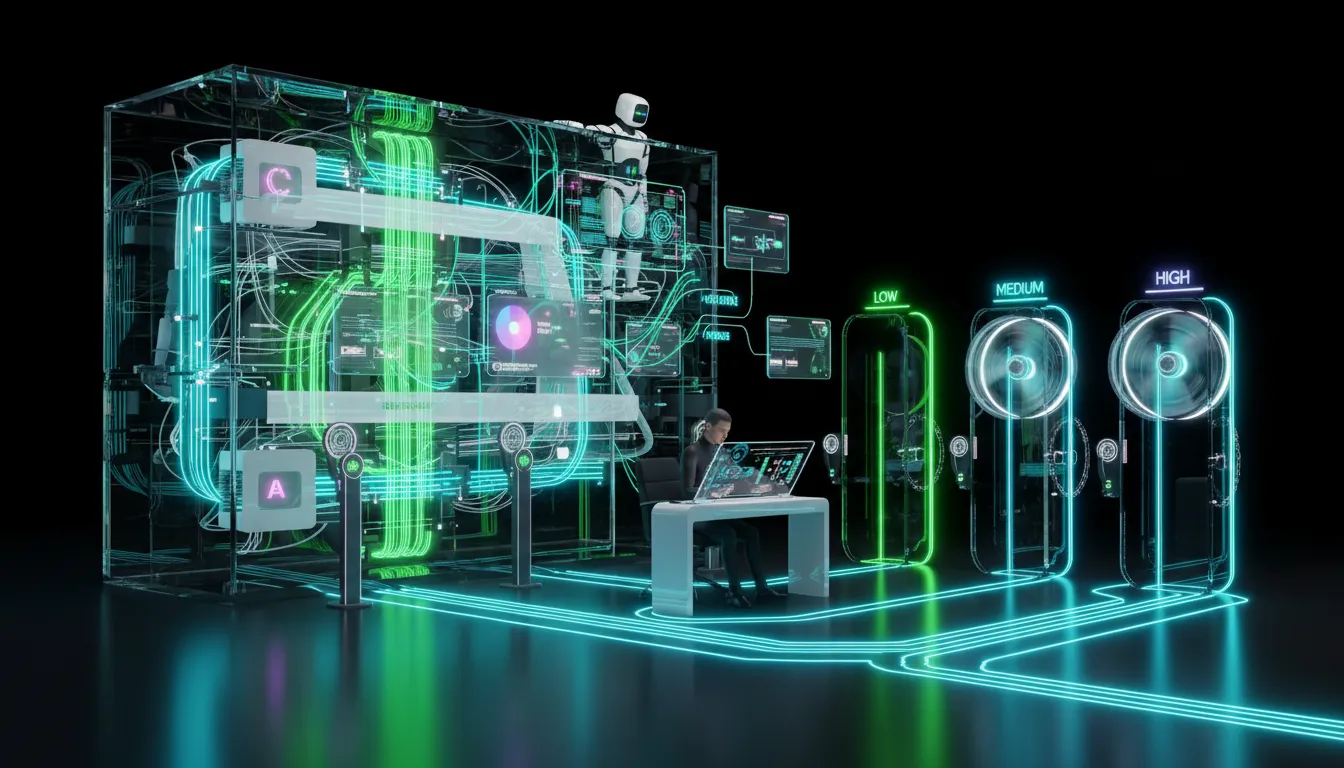

Budget, Routing, and the Art of Not Torching Cash

AI can churn through assets and your wallet if left unchecked. Smart routing sends routine jobs to small, fast models and only escalates complex cases to frontier models like Llama 4 or GPT‑5.

{
  "router": {
    "tasks": {
      "draft": {"prefer": ["mistral_next"], "fallback": ["llama_4"], "max_cost": 0.0025},
      "evaluate": {"prefer": ["small_judge"], "fallback": ["frontier_judge"], "latency_ms": 700},
      "repair": {"prefer": ["mistral_next"], "retry_limit": 1}
    },
    "rules": {
      "escalate_on": ["low_conf", "schema_error"],
      "deny_on": ["dlp_fail"],
      "retry_limit": 1
    }
  },
  "budget": {
    "campaign": "q_launch",
    "caps": {"max_assets": 900, "max_per_asset_usd": 1.15, "frontier_calls": 1},
    "alerts": {"daily_limit_usd": 400, "spike_pct": 20}
  }
}
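The routing rules above could be read as a small decision function. The sketch below is one interpretation, not a reference implementation: it assumes `fallback` is the escalation target, that `frontier_calls` caps escalations per asset, and that a `deny_on` signal blocks the call entirely. The function name `pick_model` is made up for illustration.

```python
# Model names and rule lists are copied from the router config;
# the interpretation of how they interact is an assumption.
ROUTER_TASKS = {
    "draft": {"prefer": ["mistral_next"], "fallback": ["llama_4"]},
    "evaluate": {"prefer": ["small_judge"], "fallback": ["frontier_judge"]},
    "repair": {"prefer": ["mistral_next"], "fallback": []},
}
ESCALATE_ON = {"low_conf", "schema_error"}
DENY_ON = {"dlp_fail"}


def pick_model(task, signals=frozenset(), frontier_calls_used=0, frontier_cap=1):
    """Return the model name for a task, or None if the call must be denied."""
    signals = set(signals)

    # Deny rules win over everything else.
    if DENY_ON & signals:
        return None

    config = ROUTER_TASKS[task]
    wants_escalation = bool(ESCALATE_ON & signals)
    has_budget = frontier_calls_used < frontier_cap

    # Escalate only when a trigger fired, a fallback exists, and the cap allows it.
    if wants_escalation and config["fallback"] and has_budget:
        return config["fallback"][0]

    # Default: stay on the cheap, fast model.
    return config["prefer"][0]
```

Checking the deny rules first means a data-loss-prevention failure can never be "fixed" by escalating to a bigger model, which is the failure you least want to automate.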

Creative Formats, How Panels Flex Across Channels

- Text: Headlines, post copy, and CTAs. Panels assess clarity and specificity. Critics enforce schema and banned phrases.

- Image: Overlays, cropping, icon choices. Panels flag clutter. Critics check contrast and brand rules.

- Video: Hook timing, captions, VO pace. Panels score emotional arc and beat clarity. Critics check SRT timing and accessibility.

- Audio: Script cadence and believability. Panels judge tone and trustworthiness. Critics penalize poor prosody or missing disclosure.

Playbooks, Team Size Edition

Creators and Micro Teams

- Start with two personas, one rubric, and a single channel. Keep the loop lean.

- Let panels autopublish low-risk edits, but review final headlines and claims manually.

- Cap variants at three. Quality over sprawl, every time.

Mid-Market GTM Teams

- Spin up panels per channel: paid social, email, landing. Share tone and claims packs across teams.

- Calibrate every week. Rubrics must match what is working live.

- Batch process overnight. Ship edits in daylight, with human signoff for front-and-center assets.

Enterprise and Regulated

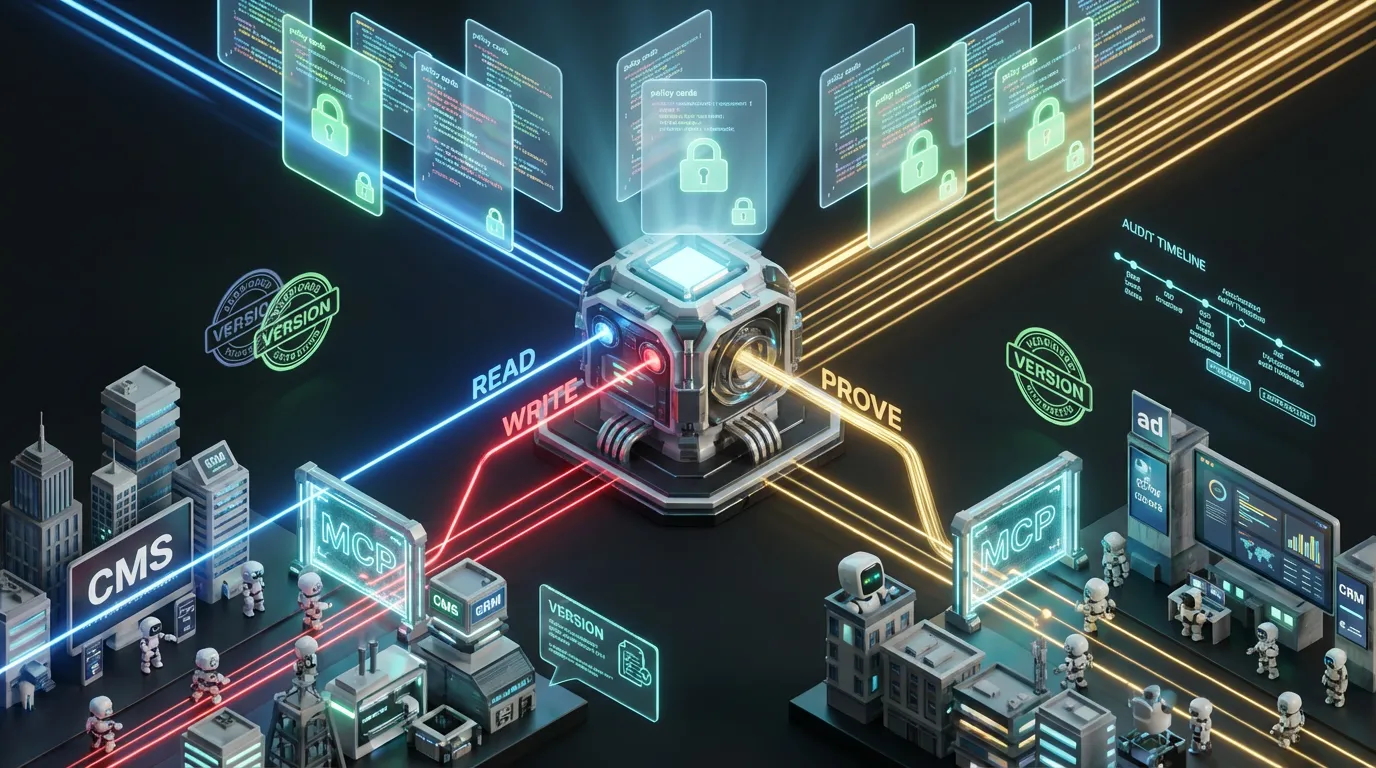

- Policy-as-code: Every panel checks claims, accessibility, and legal disclosures before approving an asset.

- Mandate asset provenance. If it is not logged, it is not live.

- Customize regional panels. Sensitive outputs get routed through legal before launch.

Metrics That Matter: No Dashboard Bloat Allowed

- First-pass validity: Share of variants that pass every critic on the first go. Aim for 80% or higher on routine assets.

- Panel-to-live correlation: Do scores predict actual KPI lift? This is your “is this working?” sanity check.

- Variant reduction: How many off-target ideas you are killing before spending a penny on distribution.

- Cost per compliant: Total compute plus review per asset that clears all gates.

- Time to publish: Minutes from brief to ready-to-ship draft for each channel.

Evaluator Dashboard: Event Anatomy

{
  "event": {
    "id": "evt_5241",
    "asset_id": "ad_v41",
    "persona": "founder",
    "scores": {"clarity": 0.88, "fit": 0.81, "proof": 0.72, "action": 0.83, "risk": 0.97},
    "decision": "promote_to_live_cell",
    "latency_ms": 612,
    "cost_usd": 0.0019,
    "critic": {"schema": "pass", "claims": "pass", "tone": "pass"}
  }
}
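Events shaped like this can be rolled up into two of the metrics listed earlier: first-pass validity and cost per compliant asset. The sketch below assumes two fields that are not in the event above, `attempt` (1 for the first try) and `review_cost_usd` (human review cost), and both default sensibly when missing. The function names are illustrative too.

```python
def all_critics_pass(event: dict) -> bool:
    """True when every critic in the event reported "pass"."""
    return all(result == "pass" for result in event["critic"].values())


def summarize(events: list) -> dict:
    """Aggregate evaluator events into first-pass validity and cost per compliant.

    Assumes hypothetical fields: "attempt" (defaults to 1) and
    "review_cost_usd" (defaults to 0.0).
    """
    assets = {e["asset_id"] for e in events}

    # Assets whose very first attempt cleared every critic.
    first_pass = {
        e["asset_id"]
        for e in events
        if e.get("attempt", 1) == 1 and all_critics_pass(e)
    }

    # Assets that cleared every critic on any attempt.
    compliant = {e["asset_id"] for e in events if all_critics_pass(e)}

    # Compute plus review, across every attempt, including failed ones.
    total_cost = sum(e["cost_usd"] + e.get("review_cost_usd", 0.0) for e in events)

    return {
        "first_pass_validity": len(first_pass) / len(assets) if assets else 0.0,
        "cost_per_compliant": total_cost / len(compliant) if compliant else None,
    }
```

Failed attempts still count toward cost, so an asset that needed three repairs drags the cost-per-compliant number up. That is the point of the metric.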

Common Failure Modes (and How to Fix Them Fast)

| Failure | Why It Happens | Fast Fix |
|---|---|---|
| Evaluator overfitting | Panel likes a single style | Rotate exemplars, cap n-gram overlap, stress counterfactuals |
| Low live correlation | Rubrics out of sync with the real world | Reweight by actual KPIs, use holdouts, retrain often |
| Unsourced claims | Panel lets invented stats slip through | Claims critic must enforce source IDs; no source, no ship |
| Variant sprawl | Overgeneration without limits | Hard cap variants, prune near duplicates immediately |
| Bias or tone drift | Panels inherit cultural bias | Diversity audits, neutrality checks, localize rubrics |
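Two of the fixes in the table, capping n-gram overlap and pruning near duplicates, can be sketched with word trigrams and Jaccard similarity. The 0.6 overlap threshold and the function names are assumptions, and the cap of three is borrowed from the micro-team playbook. The input list is assumed to be sorted best-first, so the stronger copy of any near-duplicate pair survives.

```python
def word_ngrams(text: str, n: int = 3) -> set:
    """Set of lowercase word n-grams; short texts collapse to a single tuple."""
    words = text.lower().split()
    if len(words) < n:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared n-grams divided by all n-grams seen in either text."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def prune_near_duplicates(variants: list, max_overlap: float = 0.6, cap: int = 3, n: int = 3) -> list:
    """Keep variants that differ enough from everything already kept.

    Assumes variants arrive sorted best-first and stops at the hard cap.
    """
    kept = []
    for text in variants:
        grams = word_ngrams(text, n)
        is_distinct = all(
            jaccard(grams, word_ngrams(other, n)) <= max_overlap for other in kept
        )
        if is_distinct:
            kept.append(text)
        if len(kept) == cap:
            break
    return kept
```

Trigram overlap is a blunt tool that catches copy-paste variants but not paraphrases. Embedding similarity would catch more, at the cost of another model call per pair.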

Ethics and Compliance, No Melodrama Required

- Consent and provenance: Track rights for every voice, image, and testimonial. Attach manifests as default, not afterthought.

- Privacy: Strip out or hash PII before anything ever pings a model node.

- Fairness: Audit outputs across protected classes and sensitive verticals. No bias, no blowback.

- Transparency: If AI touches your assets, disclose. Nothing torpedoes trust faster than gotcha revelations.
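On the privacy point, here is a minimal sketch of hashing PII before text reaches a model. It handles email addresses only, using a deliberately simple regex and a keyed HMAC so the same address always maps to the same token. The function names, the token format, and the `secret` parameter are assumptions. Keyed hashing is pseudonymization rather than anonymization, so the secret still has to be protected.

```python
import hashlib
import hmac
import re

# Simple email pattern for illustration; it will not catch every valid address.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def hash_token(value: str, secret: str) -> str:
    """Stable, keyed token for a PII value (case-insensitive)."""
    digest = hmac.new(
        secret.encode("utf-8"), value.lower().encode("utf-8"), hashlib.sha256
    ).hexdigest()
    return f"<pii:{digest[:12]}>"


def scrub_emails(text: str, secret: str) -> str:
    """Replace every email address in the text with its keyed token."""
    return EMAIL.sub(lambda match: hash_token(match.group(0), secret), text)
```

Stable tokens let a panel still see that two testimonials came from the same person without ever seeing who that person is. Names, phone numbers, and addresses would each need their own detection step.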

30-Day Synthetic Panel Rollout Checklist

Week 1, Blueprint and Guardrails

- Choose one channel plus two personas. Nail the JSON schema and a barebones rubric.

- Centralize your truth: brand claims, proof, glossary, banned phrases.

- Build a critic chain for schema, tone, accessibility, claims.

Week 2, Simulate and Repair

- Create 5 to 8 variants per brief. Run them through panels and critics.

- Auto repair the fixables (length, minor tone). Escalate the tricky stuff to humans.

- Cap repair tries and the variants that advance at three each.

Week 3, Calibrate and Score

- Deploy top two variants into small live tests with a control.

- Compare synthetic panel scores to live KPI deltas. Retune rubric weights now.

- Log costs, speed, and first-pass validity rates. Broadcast the wins.

Week 4, Lock It and Scale It

- Codify routers and budget limits. Set spend and error spike alerts.

- Publish no-nonsense creator advice: what the panel likes, what critics block cold.

- Add a second channel only if your calibration holds up.

Synthetic Panels and the 2025 Creator Economy

- Draft in minutes, not days: Get actionable, persona-aware edits instantly. More momentum, fewer nuclear option redos.

- Brand and creator handshake: Brands distribute rubric packs for creators to self check before submission. Less friction, faster pay.

- Channel harmony: Accessibility, policy, and brand critics minimize takedowns and rework across the fragmented platform universe.

The COEY Deep Dive Bottom Line

Synthetic panels are not your customer, but they are the budget-smart filter that lets your real buyers see only your best work. It is a simple loop: discipline your generation, build your evaluators as products, and calibrate fearlessly to live data. Route the cheap stuff first; only escalate if the numbers demand it. Never publish a number without a receipt. Let your humans own taste, edge cases, and risk. Stick to this workflow and your pre-flight becomes the launchpad, not the bottleneck.