Meta Puts GenAI Inside Ads Manager: What You Can Automate

December 21, 2025

Meta is pushing generative AI deeper into the place marketers actually live: Ads Manager. Instead of bouncing between design tools, copy docs, and five-tab chaos, more advertisers are seeing AI creative generation and optimization suggestions baked directly into campaign build flows, an extension of the Advantage+ direction Meta’s been telegraphing for a while. The upside is obvious: faster variants, faster tests, faster learnings. The catch is also obvious: much of this power still lives behind the UI, not in your automation stack.

Meta’s broader push has been building since its early genAI ad creative rollouts (background generation, image expansion, text variations) and has expanded to generating more complete image variations, not just swapping backgrounds (TechCrunch). Now, the important shift is placement: it’s not “a cool beta feature,” it’s increasingly integrated into the default campaign build flow for many advertisers, especially where Advantage+ creative options are available (Social Media Today).

Translation: Meta is trying to turn Ads Manager into a creative factory, where iteration is cheap, personalization is continuous, and “make 50 versions” is no longer a staffing problem.

What changed (and why it matters)

Meta’s generative AI in Ads Manager isn’t one feature; it’s a workflow stance. The platform is moving upstream from “optimize delivery” to “manufacture inputs.” Historically, Meta automation mostly lived in:

- Placements

- Bidding and budget pacing

- Audience expansion

- Delivery optimization (Advantage+)

Now Meta is tightening the loop: generate creative variants, launch them faster, read performance, and keep rotating. The biggest impact isn’t that AI can write a headline. It’s that the system is designed to produce more shots on goal and let the algorithm pick winners.

What’s new inside Ads Manager

Based on Meta’s direction and reporting on the rollout of generative AI ad features, advertisers are increasingly able to generate and adapt creative within the same campaign flow, especially in Advantage+ contexts. Key capabilities include:

AI image generation and variations

Meta’s genAI capabilities started with background generation and expansion, then progressed toward creating fuller image variations. In practice, that means you can take a product image and quickly produce alternate looks for different audiences, placements, or seasonal angles without reopening Photoshop or begging your designer for “just one more size.”

Text variations for captions and headlines

Meta can generate multiple copy options from your seed text, helpful for rapid testing when you already know the offer but need different hooks, tones, or benefit framing. Not revolutionary. Very operationally useful.

Placement-friendly creative adaptation

Image expansion and aspect-ratio adaptation matter because modern campaigns are multi-placement by default: Feed, Stories, Reels, Marketplace, right column (hello, 2016). Creative that doesn’t fit gets skipped. AI resizing and expansion reduce that friction.

More visibility into AI-driven outcomes

One under-discussed but important piece: Meta has added reporting clarity for flexible formats and AI-generated image ads via Creative breakdown, which helps teams see how individual creative elements and variations are performing (PPC Land). If you’ve ever tried to explain to your CMO why the algorithm “liked that version,” you know why this matters.

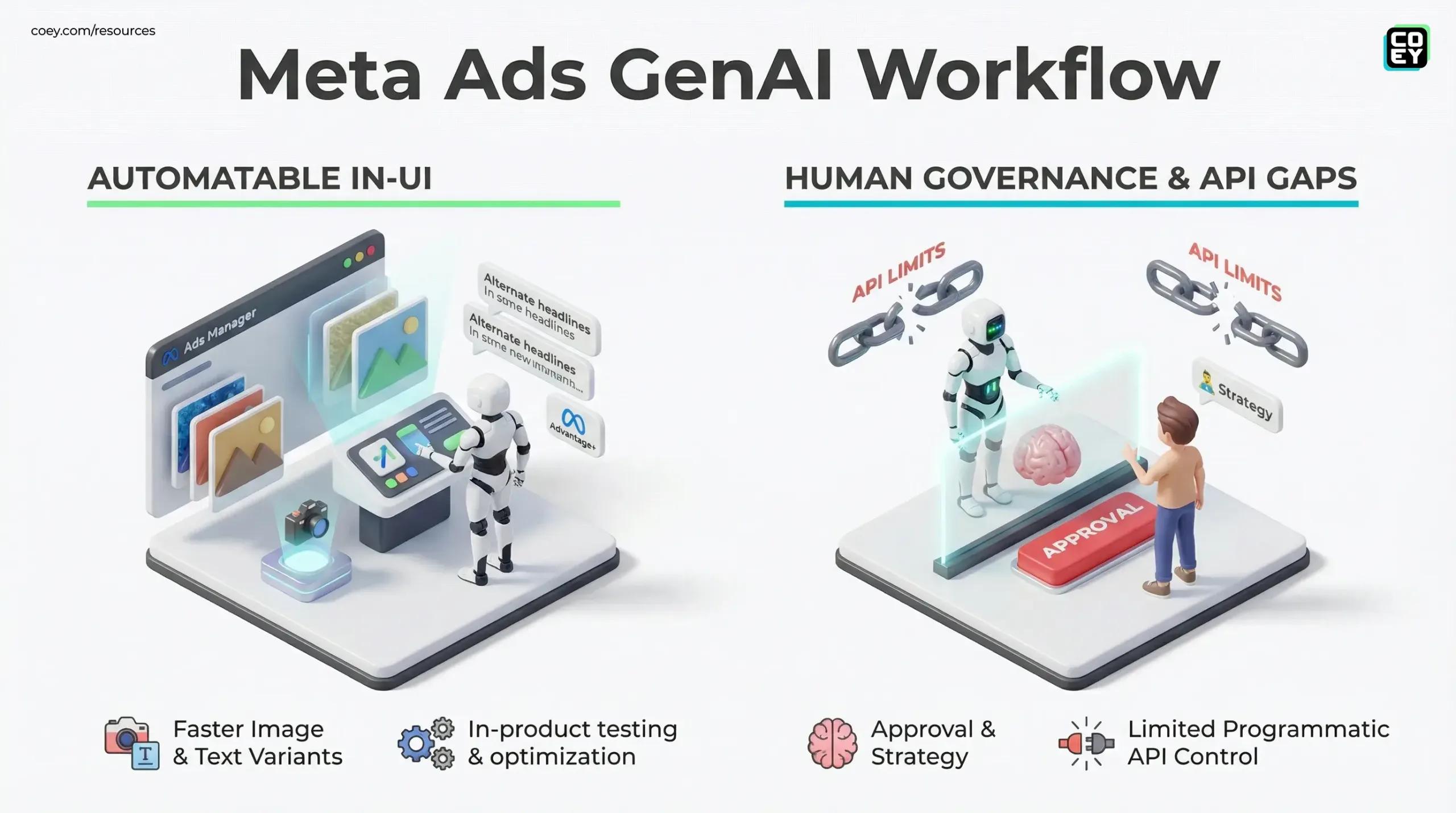

The automation reality check

Let’s answer the question COEY readers actually care about: can you automate this?

| Area | Automatable today? | What’s blocking scale |
|---|---|---|
| Creative generation inside Ads Manager | Yes (manually, in the UI) | Mostly UI-only; limited programmatic control over generated outputs |
| Campaign creation + updates | Yes (via Meta Marketing API) | GenAI feature parity via API is limited and not consistently documented |
| High-volume variant testing | Partially | Hard to mass-produce in-product genAI variants via integrations without exposed endpoints |
| Creative performance reporting | Yes | Interpretation still human-heavy; attribution remains messy |

UI automation is not workflow automation

Meta embedding genAI into Ads Manager is great for humans moving fast. But if you’re trying to scale like a modern content org (brief → generate → approve → deploy → learn → regenerate), your bottleneck becomes obvious:

- The generation happens where your automation tools can’t easily reach (inside Ads Manager).

- Your source of truth (DAM, CMS, brand system) is outside Meta.

- Your approvals likely happen in Slack, Asana, Notion, not in Meta.

If genAI is trapped in the UI, your best workflow is still a very fast person, not a reliable system.

API availability: what we can say now

Meta’s Marketing APIs remain the backbone for programmatic campaign management: creating campaigns, ad sets, and ads, uploading assets, pulling reporting, and orchestrating spend at scale.

What’s still murky for most teams is whether the newest “generate me a bunch of creatives” actions you see in Ads Manager map cleanly to public, stable API endpoints. As of late 2025, the most reliable pattern for automation is still: generate or edit assets (with Meta’s in-product tools or external generators), then use the Marketing API to upload and assemble creatives and run delivery. In other words: you can automate the media machine, but the newest in-product creative generator may still require a human driver.
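A minimal Python sketch of the “generate elsewhere, assemble via the Marketing API” half of that pattern. It only builds request URLs and form parameters for three Graph API edges (`act_<AD_ACCOUNT_ID>/adimages`, `act_<AD_ACCOUNT_ID>/adcreatives`, `act_<AD_ACCOUNT_ID>/ads`); it does not send requests, handle access tokens, or call any genAI feature. The pinned API version, the IDs, and the helper function names are placeholders, and field names should be checked against Meta’s current Marketing API reference before use.

```python
"""Sketch: turn externally generated variants into Marketing API request payloads.
Builds requests only; sending them (with an access token) is left to your HTTP
client or Meta's official SDK."""
import base64
import json

GRAPH_VERSION = "v21.0"  # assumption: pin whichever Graph API version your app targets
BASE_URL = f"https://graph.facebook.com/{GRAPH_VERSION}"


def image_upload_request(ad_account_id: str, image_bytes: bytes) -> tuple[str, dict]:
    """POST act_<id>/adimages with base64 image bytes; the response carries an image hash."""
    url = f"{BASE_URL}/act_{ad_account_id}/adimages"
    return url, {"bytes": base64.b64encode(image_bytes).decode("ascii")}


def creative_request(ad_account_id: str, page_id: str, image_hash: str, link: str,
                     message: str, headline: str, name: str) -> tuple[str, dict]:
    """POST act_<id>/adcreatives using a link-ad object_story_spec."""
    spec = {
        "page_id": page_id,
        "link_data": {
            "image_hash": image_hash,
            "link": link,
            "message": message,  # primary text
            "name": headline,    # headline
        },
    }
    url = f"{BASE_URL}/act_{ad_account_id}/adcreatives"
    # Nested objects are sent as JSON-encoded form fields.
    return url, {"name": name, "object_story_spec": json.dumps(spec)}


def ad_request(ad_account_id: str, adset_id: str, creative_id: str,
               name: str) -> tuple[str, dict]:
    """POST act_<id>/ads, created PAUSED so a human can review before spend."""
    url = f"{BASE_URL}/act_{ad_account_id}/ads"
    return url, {
        "name": name,
        "adset_id": adset_id,
        "creative": json.dumps({"creative_id": creative_id}),
        "status": "PAUSED",
    }


if __name__ == "__main__":
    # Example: two externally written copy variants on one uploaded image (placeholder IDs).
    copy_variants = [("Ships free this week", "Free shipping"),
                     ("Built to last a decade", "Lifetime warranty")]
    for i, (message, headline) in enumerate(copy_variants, start=1):
        url, params = creative_request("1234", "5678", "IMAGE_HASH", "https://example.com",
                                       message, headline, f"spring_v{i:02d}")
        print(url, params["name"])
```

Creating ads as `PAUSED` is a deliberate choice: it keeps the approval step (in Slack, Asana, or wherever your team already works) in front of any live spend.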

What needs to happen for real automation

To make this truly automation-native (not just faster manual work), Meta would need to expose:

- Deterministic genAI endpoints (generate X variants from prompt + brand inputs)

- Asset lineage metadata (what prompt, what model settings, what source image)

- Workflow hooks (webhooks for variant created, flagged, approved, rejected)

- Brand control surfaces (style guides, disallowed claims, regulated terms, product truth constraints)
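None of these surfaces are publicly available today, so what follows is purely hypothetical: a Python sketch of how a team might receive lineage metadata and variant lifecycle webhooks if Meta exposed them. The event names mirror the list above; the payload shape, `VariantRecord`, `VariantStatus`, and `handle_webhook` are invented for illustration and are not a Meta API.

```python
from dataclasses import dataclass, field
from enum import Enum


class VariantStatus(Enum):
    CREATED = "created"
    FLAGGED = "flagged"
    APPROVED = "approved"
    REJECTED = "rejected"


# Hypothetical lifecycle: flagged variants still need a decision; approved/rejected are final.
TRANSITIONS = {
    VariantStatus.CREATED: {VariantStatus.FLAGGED, VariantStatus.APPROVED, VariantStatus.REJECTED},
    VariantStatus.FLAGGED: {VariantStatus.APPROVED, VariantStatus.REJECTED},
    VariantStatus.APPROVED: set(),
    VariantStatus.REJECTED: set(),
}


@dataclass
class VariantRecord:
    """Lineage for one generated variant: what prompt, what settings, what source image."""
    variant_id: str
    prompt: str
    model_settings: dict
    source_image: str
    status: VariantStatus = VariantStatus.CREATED
    history: list = field(default_factory=list)

    def apply_event(self, event: str) -> None:
        new_status = VariantStatus(event)  # raises ValueError on unknown event names
        if new_status not in TRANSITIONS[self.status]:
            raise ValueError(f"illegal transition {self.status.value} -> {event}")
        self.history.append((self.status.value, event))
        self.status = new_status


def handle_webhook(records: dict, payload: dict) -> VariantRecord:
    """Route a hypothetical variant webhook payload to the matching record."""
    vid = payload["variant_id"]
    if payload["event"] == "created":
        lineage = payload["lineage"]
        records[vid] = VariantRecord(vid, lineage["prompt"],
                                     lineage["model_settings"], lineage["source_image"])
    else:
        records[vid].apply_event(payload["event"])
    return records[vid]
```

Keeping the transition table explicit means an out-of-order or duplicate event fails loudly instead of silently reviving a rejected variant.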

Multi-format implications: text, photo, video, audio

Right now, the most mature implementations are in static image + text variation land. That’s where Meta can reliably generate, adapt, and deploy quickly. But the direction points toward cross-format automation:

Photo to video is the next pressure point

Meta has publicly discussed and shipped AI-driven video creation features for advertisers (including tools that can generate video variations from existing creative inputs), but availability varies by market, account, and campaign type. If Meta can generate better image variants, the natural next step is lightweight motion variants for Reels placements: same offer, different scene, at scale. It isn’t universally controllable through the API yet, but the incentive is obvious: Reels inventory wants Reels-native creative.

Audio and voice: transparency is the landmine

Audio generation (realistic voice) is where policy and disclosure get spicy fast, especially for political or social issue ads. Meta requires disclosure for political, electoral, and social issue ads that use AI or digital methods to create or alter photorealistic imagery, video, or realistic-sounding audio in certain ways (CNBC). As genAI capabilities expand, brands should assume scrutiny rises with realism.

What marketers can do today

This is the practical playbook that’s real right now for most teams:

Use Meta’s genAI for speed, not strategy

Let the AI generate volume (backgrounds, expansions, text variants), then apply human judgment to pick which ideas deserve your brand’s name and budget. The model is a slot machine; you’re still the casino manager.

Pair in-product generation with external governance

Even if generation happens in Ads Manager, approvals don’t have to. Teams can standardize:

- Prompt templates (On-brand, Offer-first, UGC-style, Founder voice)

- Compliance checklists (claims substantiation, regulated copy, disclosures)

- Creative naming conventions (so reporting doesn’t become hieroglyphics)
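A small Python sketch of two of those standards: a naming convention that reporting can parse back into fields, and a crude disallowed-phrase check that runs before copy reaches Ads Manager. The `campaign_audience_hook_format_vNN` scheme and the phrase list are hypothetical placeholders; a real compliance checklist needs human and legal review, not just string matching.

```python
import re

# Hypothetical convention: campaign_audience_hook_format_vNN, with lowercase slugs.
FIELDS = ("campaign", "audience", "hook", "format", "variant")


def slug(text: str) -> str:
    """Lowercase and collapse non-alphanumerics to '-', so '_' stays a safe separator."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def creative_name(campaign: str, audience: str, hook: str, fmt: str, variant: int) -> str:
    return "_".join([slug(campaign), slug(audience), slug(hook), slug(fmt), f"v{variant:02d}"])


def parse_creative_name(name: str) -> dict:
    """Split a name back into fields so reporting rows can be grouped by hook, format, etc."""
    parts = name.split("_")
    if len(parts) != len(FIELDS):
        raise ValueError(f"name does not follow the convention: {name}")
    return dict(zip(FIELDS, parts))


# Placeholder list; your legal/compliance team owns the real one.
DISALLOWED = ["guaranteed results", "cure", "risk-free"]


def compliance_hits(copy: str, disallowed=DISALLOWED) -> list[str]:
    """Return disallowed phrases that appear as whole words (case-insensitive)."""
    return [p for p in disallowed
            if re.search(rf"\b{re.escape(p)}\b", copy, re.IGNORECASE)]
```

For example, `creative_name("Spring Sale", "Lookalike 1%", "Free Shipping", "Reels 9:16", 3)` yields `spring-sale_lookalike-1_free-shipping_reels-9-16_v03`, which parses back into its five fields.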

Build a learnings loop that travels

The real scaling win is not generating 30 variants. It’s capturing what worked and reusing it across formats and channels. Treat Meta performance data as creative R&D, then feed winning hooks into:

- Email subject lines

- Landing page hero copy

- YouTube hooks

- TikTok scripts

- Podcast ad reads
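A minimal Python sketch of the “capture what worked” step, assuming you export ad-level rows that carry a hook label (for example, parsed from your creative names) plus impressions and clicks. Ranking by CTR with a minimum-impressions floor is an illustrative choice, not Meta’s method; swap in whatever metric you trust, since attribution remains messy.

```python
from collections import defaultdict


def winning_hooks(rows, min_impressions=1000, top_k=3):
    """Aggregate clicks/impressions per hook, drop thin samples, rank by CTR."""
    totals = defaultdict(lambda: [0, 0])  # hook -> [impressions, clicks]
    for row in rows:
        totals[row["hook"]][0] += row["impressions"]
        totals[row["hook"]][1] += row["clicks"]
    ranked = [
        (hook, clicks / imps)
        for hook, (imps, clicks) in totals.items()
        if imps >= min_impressions  # too few impressions means the CTR is mostly noise
    ]
    ranked.sort(key=lambda item: item[1], reverse=True)
    return ranked[:top_k]
```

The output is a short list of hooks and their CTRs, which is the part worth carrying into subject lines, hero copy, and scripts on other channels.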

What’s coming next (and what’s still locked)

Meta’s direction is clear: more AI in the build flow, more Advantage+ defaults, more “just give us inputs and we’ll handle outputs.” The missing piece for creators and brands trying to scale with intelligent systems is programmability.

Until generative creative features have consistent API access (or reliable integration surfaces), the readiness level is:

- High for teams scaling manually (fast operators inside Ads Manager)

- Medium for teams scaling systematically (automation + governance across channels)

- Low for teams trying full creative autopilot (because you still need humans to drive the UI and approve)

If you want a related COEY perspective on how Meta is turning more user behavior into ad targeting signal, see “Meta AI Chat Prompts Now Influence Your Ads.”

Bottom line for brands scaling creativity

Meta putting genAI directly into Ads Manager is a real workflow acceleration: less “AI demo day,” more “this is how ads get made now.” It makes iteration cheaper, testing faster, and personalization more attainable for teams without massive creative departments.

But if you’re building a modern marketing machine, where briefs turn into assets across text, photo, video, and audio through automations, the key question isn’t “Can Meta generate an image?” It’s:

Can your creative system reliably generate, govern, deploy, and learn without being trapped in one platform’s UI?

Meta is making the in-platform factory stronger. The teams that win will be the ones who treat it like a production line component, connected to their brand standards, approvals, reporting, and cross-channel repurposing, so humans stay focused on intent and taste, and machines handle the grind.