Seedance 1.5 Pro: Text-to-Video With Real Audio Sync

December 29, 2025

ByteDance is pushing generative video past the “cool silent clip” era with Seedance 1.5 Pro, a text-to-video model that generates video plus synchronized audio in one shot, including dialogue, ambient sound, and lip-sync. If you’ve ever tried to stitch together AI video, AI voice, AI SFX, and then spent an hour fixing drift like you’re defusing a bomb, yeah. This is aimed directly at that pain.

Translation: Seedance 1.5 Pro is trying to make “generate then trim then publish” a real workflow, not a fantasy you pitch in a deck.

What actually shipped

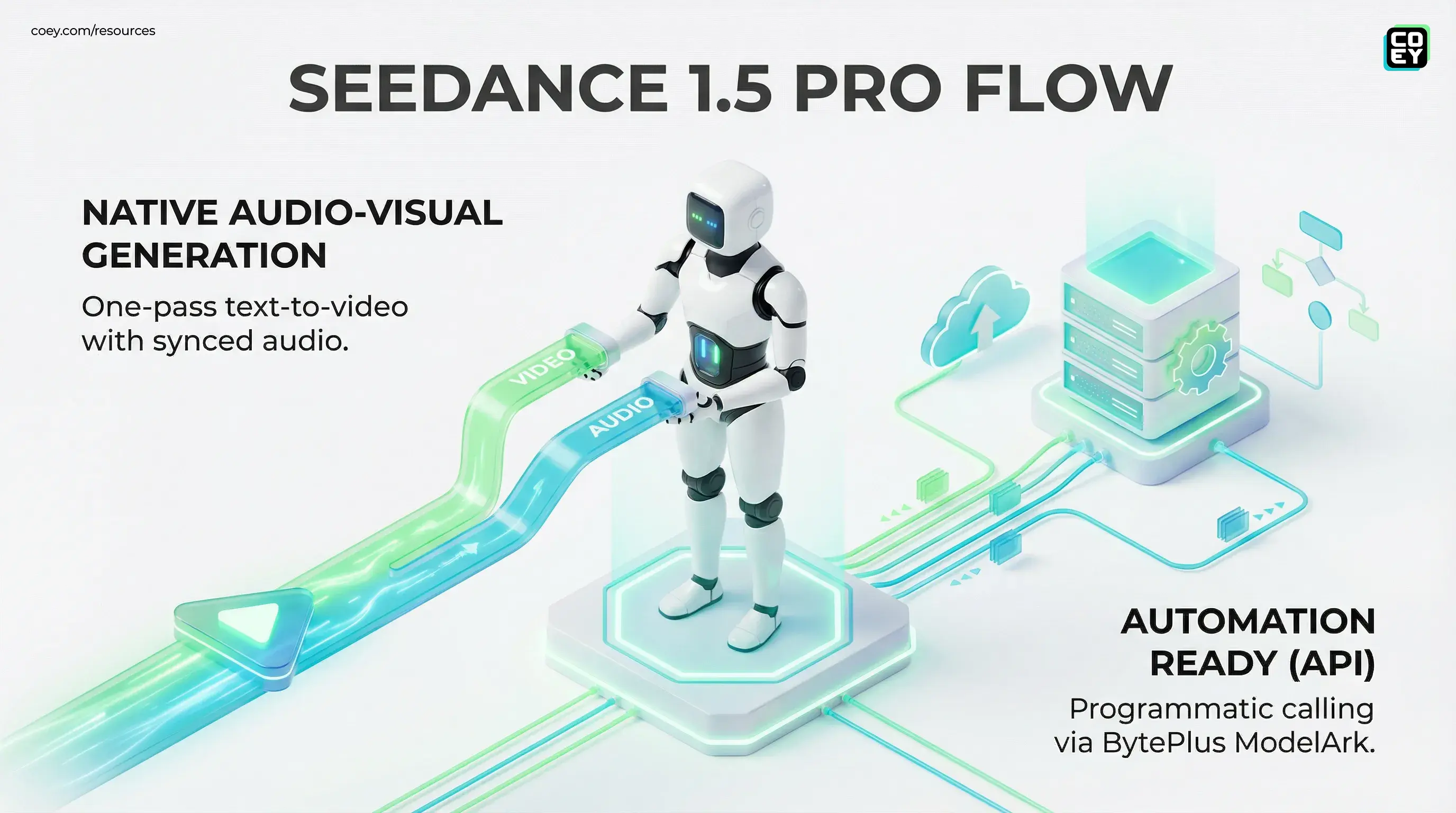

Seedance 1.5 Pro’s headline move is native audio-visual generation. Instead of outputting a silent MP4 and leaving you to do voice, music, SFX, and lip-sync in a separate pipeline, it can output a clip where sound and visuals are designed to land together.

ByteDance’s ecosystem positioning matters here. Seedance isn’t being treated as a research flex. It’s being productized through BytePlus ModelArk, which is a big signal for anyone asking the only question that matters in ops: can we call it programmatically?

What creators are noticing in early demos

Early community clips are highlighting the practical stuff marketers care about: talking-head shots that don’t look like ventriloquism, multi-character scenes that don’t melt on line two, and audio that doesn’t feel stapled on.

Individual social posts are hard to verify, so a more stable way to evaluate the tech is to test it yourself: use the RunComfy Seedance 1.5 Pro page to generate outputs and compare audio sync behavior across prompts.

Why “audio-native” is the real milestone

Most generative video workflows still look like a Rube Goldberg machine:

- Generate silent video

- Generate a voice track somewhere else

- Find or generate music and SFX

- Align everything in an editor

- Discover timing drift, re-export, repeat

Seedance 1.5 Pro is trying to collapse that into one generation step. That matters because post-production syncing is the hidden tax on “AI video at scale.” The visuals might be 80% there, but audio misalignment is the fastest way to make a clip feel fake, or just annoying.

Audio drift is the uncanny valley of marketing ops. Viewers forgive imperfect visuals. They bounce on bad sync.

What this changes for marketing workflows

If Seedance 1.5 Pro holds up in real use, the workflow upgrade is less about “better video” and more about faster iteration loops. That’s the compounding advantage: the ability to generate more viable variants per week without multiplying editing headcount.

Where it lands first

- Paid social variants: Hook swaps, CTA swaps, angle testing, without rebuilding audio every time.

- UGC-style ads: Script-driven “creator voice” content that still needs believable speech timing.

- Localization: Re-run the same scene with different dialogue per locale, with mouth motion and sound aligned.

- Explainers and onboarding: Lightweight scripted scenes that don’t justify a full shoot.

This is also where “batch video generation” gets meaningfully easier, because the output is closer to “ready-to-cut” than “ready-to-begin-post.”
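To make the variant and localization idea concrete, here is a minimal sketch of expanding a few hooks, CTAs, and per-locale dialogue lines into structured prompts. Everything in it is an assumption for illustration: the scene text, the field names, and the prompt layout are hypothetical and do not reflect any documented Seedance prompt format.

```python
from itertools import product

# Hypothetical creative inputs; none of these names come from Seedance itself.
HOOKS = ["Tired of re-editing audio?", "Ship ads in minutes."]
CTAS = ["Start a free trial.", "Book a demo."]
DIALOGUE_BY_LOCALE = {
    "en-US": "This changed how our team works.",
    "es-MX": "Esto cambió la forma en que trabaja nuestro equipo.",
}
SCENE = "A creator talks to camera in a bright home office."


def build_variants(scene, hooks, ctas, dialogue_by_locale):
    """Return one prompt record per (hook, CTA, locale) combination."""
    variants = []
    for hook, cta, (locale, line) in product(hooks, ctas, dialogue_by_locale.items()):
        # The prompt layout below is an illustrative convention, not a required format.
        prompt = f'{scene} Opening line: "{hook}" Dialogue: "{line}" Closing line: "{cta}"'
        variants.append({"locale": locale, "hook": hook, "cta": cta, "prompt": prompt})
    return variants


variants = build_variants(SCENE, HOOKS, CTAS, DIALOGUE_BY_LOCALE)
print(len(variants))  # 2 hooks x 2 CTAs x 2 locales = 8
```

Keeping the hook, CTA, and locale alongside each prompt means performance data can later be traced back to the exact variable that changed.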

Automation lens: is it actually API-ready?

Yes, with important nuance. Seedance 1.5 Pro isn’t just a UI toy. It’s positioned with API access through BytePlus via ModelArk, and it’s also appearing in third-party wrappers that simplify usage.

For teams that aren’t technical: API availability means you can treat Seedance like a service your systems call, triggered by a spreadsheet, a CMS update, a campaign brief form, or an automation tool like n8n or Make, rather than a tool a human has to click inside all day.
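As a rough sketch of what "triggered by a spreadsheet" can look like, the snippet below turns rows of a CSV export into generic job payloads and hands them to a submit function. The column names, payload fields, and the `submit` callable are all hypothetical; a real integration would replace `submit` with a call that follows the provider's actual API documentation.

```python
import csv
import io

# Stand-in for a spreadsheet export; the column names are hypothetical.
BRIEFS_CSV = """campaign,locale,script
spring_sale,en-US,Our spring sale starts today.
spring_sale,de-DE,Unser Frühlingsverkauf beginnt heute.
"""


def rows_to_jobs(csv_text):
    """Turn each brief row into a generic job payload (field names are illustrative)."""
    jobs = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        script = row["script"]
        jobs.append({
            "job_id": f"{row['campaign']}-{row['locale']}",
            "prompt": f'A presenter says to camera: "{script}"',
            "metadata": {"campaign": row["campaign"], "locale": row["locale"]},
        })
    return jobs


def run(jobs, submit):
    """Submit each job through an injected callable and collect results by job_id."""
    return {job["job_id"]: submit(job) for job in jobs}


# A fake submit function so the sketch runs without any network access.
results = run(rows_to_jobs(BRIEFS_CSV), submit=lambda job: {"status": "queued"})
print(results)
```

Injecting `submit` keeps the spreadsheet logic testable on its own and makes it easy to swap one provider or wrapper for another.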

| Workflow need | What Seedance enables | What to watch for |
|---|---|---|
| Batch ad variants | Generate multiple clips from structured prompts | Queue times, retry logic, cost per usable clip |
| Localization runs | Swap dialogue text, keep scene intent consistent | Voice consistency, pronunciation, compliance by locale |
| Workflow integration | Callable model endpoint via BytePlus ModelArk | Governance: approvals, logging, brand constraints |
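Two of the "watch for" items in the table, retry logic and cost per usable clip, are easy to sketch. The example below wraps a generation call in exponential backoff and computes spend per approved clip. `TransientError`, `generate_with_retry`, and the fake `flaky_generate` function are hypothetical names invented for this illustration; real error types and limits depend on whichever API or wrapper you use.

```python
import time


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying (timeouts, busy queues)."""


def generate_with_retry(generate, prompt, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call generate(prompt), retrying on TransientError with exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            return generate(prompt)
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            sleep(base_delay * 2 ** (attempt - 1))  # waits 1s, 2s, 4s, ...


def cost_per_usable_clip(total_spend, clips_approved):
    """Spend divided by clips that passed review, not by clips generated."""
    if clips_approved == 0:
        return float("inf")
    return total_spend / clips_approved


# Fake generator that fails twice before succeeding, so the sketch runs offline.
calls = {"n": 0}


def flaky_generate(prompt):
    calls["n"] += 1
    if calls["n"] < 3:
        raise TransientError("queue busy")
    return {"prompt": prompt, "status": "done"}


delays = []  # record the backoff delays instead of actually sleeping
clip = generate_with_retry(flaky_generate, "demo prompt", sleep=delays.append)
print(clip["status"], delays)
print(cost_per_usable_clip(total_spend=50.0, clips_approved=20))
```

Dividing by approved clips rather than generated clips is the point: a cheap model that produces mostly rejects can cost more per publishable asset than a pricier one.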

Third-party access is showing up too

Seedance 1.5 Pro access is also being surfaced in third-party platforms like RunComfy, which is useful for fast testing and for teams that don’t want to build directly on raw model endpoints on day one.

Real-world readiness: what’s solid vs what’s still hype

Seedance 1.5 Pro is directionally big, but teams should separate “demo impressive” from “workflow dependable.” Here’s the pragmatic split.

Real: less post-production friction

If the model consistently returns clips where speech timing and mouth movement match well enough for social placements, you remove an entire stage from the pipeline. That’s not sexy. It’s just insanely valuable.

Real: faster creative testing

Marketing teams don’t win by generating one perfect clip. They win by generating enough good clips to let distribution and performance data pick winners. Audio-native generation makes “more shots on goal” cheaper.

Still not magic: brand safety and approvals

Audio-native doesn’t mean “brand-safe by default.” You still need:

- claim verification (especially for regulated categories)

- rights and consent controls (voices, likeness, referenced brands)

- human review gates for anything high-stakes

The model is not the system. The system is your governance layer, routing rules, and review process wrapped around the model.
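A governance layer can start as something very small. The sketch below routes each generated clip to "blocked", "human_review", or "auto_approve" based on a few flags and logs the reason. The categories, flag names, and routing rules are assumptions chosen for illustration; a real policy would come from your legal and brand teams.

```python
from dataclasses import dataclass, field

REGULATED_CATEGORIES = {"finance", "health", "alcohol"}  # illustrative list only


@dataclass
class Clip:
    clip_id: str
    category: str
    makes_claims: bool = False
    uses_real_likeness: bool = False
    consent_on_file: bool = False


@dataclass
class ReviewLog:
    entries: list = field(default_factory=list)


def route(clip, log):
    """Return 'blocked', 'human_review', or 'auto_approve' and record the reason."""
    if clip.uses_real_likeness and not clip.consent_on_file:
        decision, reason = "blocked", "likeness used without consent on file"
    elif clip.category in REGULATED_CATEGORIES or clip.makes_claims:
        decision, reason = "human_review", "regulated category or factual claim"
    else:
        decision, reason = "auto_approve", "no high-stakes flags"
    log.entries.append((clip.clip_id, decision, reason))
    return decision


log = ReviewLog()
print(route(Clip("c1", "apparel"), log))
print(route(Clip("c2", "finance", makes_claims=True), log))
print(route(Clip("c3", "apparel", uses_real_likeness=True), log))
```

The log matters as much as the decision: it gives you an audit trail for every clip that was published, held, or stopped.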

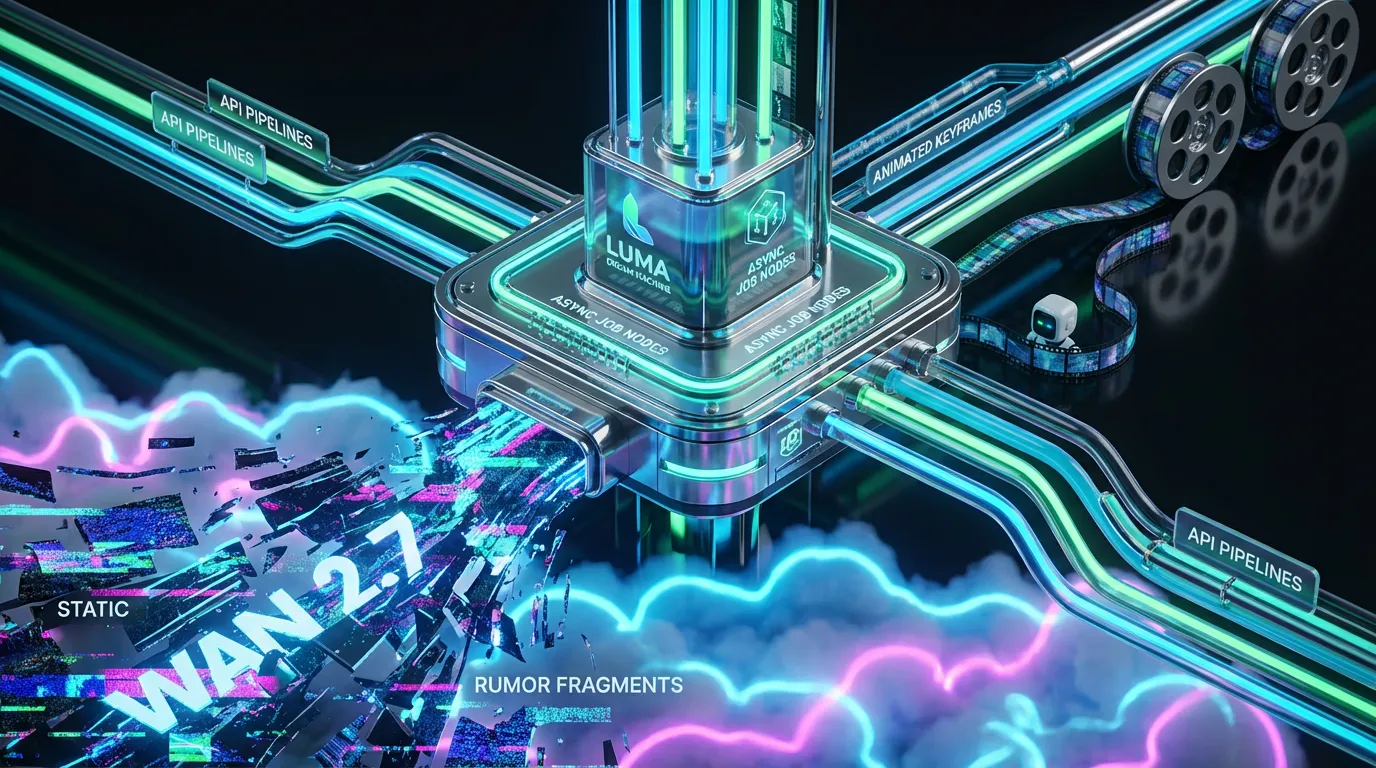

The competitive context: everyone’s chasing the same unlock

This release lands in the same broader trend we’ve been tracking across AI video: the shift from “single cool clip” to “repeatable, programmable output.” Alibaba’s Wan line has been pushing multi-shot and API workflows (see our recent coverage, “Wan 2.6 Makes AI Video Multi Shot Ready”), while other platforms are trying to solve identity consistency, scene control, and editability.

Seedance 1.5 Pro’s differentiator is simple: audio is not a bolt-on. That’s the operational leap. And if you’re scaling creative with human plus machine collaboration, reducing steps is how you get compounding output without compounding chaos.

Bottom line

Seedance 1.5 Pro is ByteDance betting that the next generation of AI video isn’t just visual, it’s audio-native and automation-friendly. If it performs consistently outside demos, it meaningfully shortens the path from script to publishable clip by removing the ugliest part of the pipeline: post-production audio syncing.

For executives, the takeaway is straightforward: this moves AI video closer to being a workflow component you can budget for and integrate, not just a creative experiment. For marketers and creators, it’s a speed play: more variants, faster localization, fewer hours lost in the sync mines. Just keep it real: you still need governance, approvals, and a system around it, because scaling creativity is fun. Scaling mistakes is not.