When AI Should Shut Up: Abstention Stack

January 13, 2026

The smartest thing your automation can do is sometimes nothing

Marketing teams love two words: auto and publish. The internet loves one word: screenshots. Between those two realities sits the most brutal truth of AI in 2026: your AI’s most expensive mistake is automating something wrong confidently, quietly, and blissfully, and you only find out via a public post or a CRM full of junk data. Autopilot is great until the plane plows into the side of a mountain.

This isn’t just a hypothetical. Modern automation stacks push your AI into live CRMs, dynamic ads, outbound campaigns, and product pages without a moment’s pause. We talk endlessly about critics, contracts, and control planes, but most teams are missing one critical discipline: abstention. Not bland, policy-induced refusals. True abstention is the system realizing “this isn’t safe,” then escalating to a human or a slower, safer path.

Deep Dive Thesis: The next breakthrough for AI in marketing automation is not more autonomy. It’s selective autonomy. Fast, cheap, unsupervised runs for high-confidence tasks. Escalation, slow-rolling, or shutdown for everything else. If your AI does not know how to shut up, it cannot be trusted to ship.

Why abstention is the grown-up feature your stack is missing

Most teams are still stuck on the “always answer” treadmill. The model outputs something, an automated validator gives it a cursory check, maybe you rubber-stamp it, and then it goes live, because your launch date isn’t moving and your patience is wearing thin. Everything works fine until AI starts writing to or rewriting your systems of record:

- Updating lifecycle email copy across all segments

- Deploying ads directly to Google Ads, TikTok, or any other ad platform

- Dropping product claims and feature details on landing pages

- Auto-tagging and enriching CRM records at scale

- Summarizing support tickets into a customer-facing knowledge base

Once AI has write access, mistakes go from “bad copy” to operational disasters. Welcome to the age of error propagation powered by beautifully confident models.

This is not a vibes issue: it is cold multiplication

Agentic workflows and tool-using agents compound risk at every step. If each tool call succeeds 90% of the time and failures are independent, chaining five of them leaves you at 0.9^5 ≈ 59% end-to-end reliability. Not because your AI is evil, but because basic probability won’t cut you any slack.
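To see the compounding at a glance, here is a small Python sketch that multiplies per-step success rates. It leans on the same simplifying assumption as the 59% figure: every step fails independently. Real pipelines often have correlated failures (one bad input poisons every downstream step), so treat the result as a back-of-the-envelope estimate, not a measurement.

```python
# Back-of-the-envelope reliability of a chained workflow.
# Assumes every step fails independently of the others.
from math import prod


def chain_reliability(step_success_rates: list[float]) -> float:
    """Probability that all steps succeed, under the independence assumption."""
    return prod(step_success_rates)


if __name__ == "__main__":
    for steps in range(1, 6):
        rate = chain_reliability([0.9] * steps)
        print(f"{steps} step(s) at 90% each: {rate:.0%} end-to-end")
```

Running it prints one line per chain length; the five-step line reports 59%.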

The real questions are:

- How can the system recognize when it is likely to go off the rails?

- How do you halt automation before the CRM or CMS gets polluted?

- How do you do this without putting humans in every single loop?

Enter abstention: the scaling lever nobody told you about.

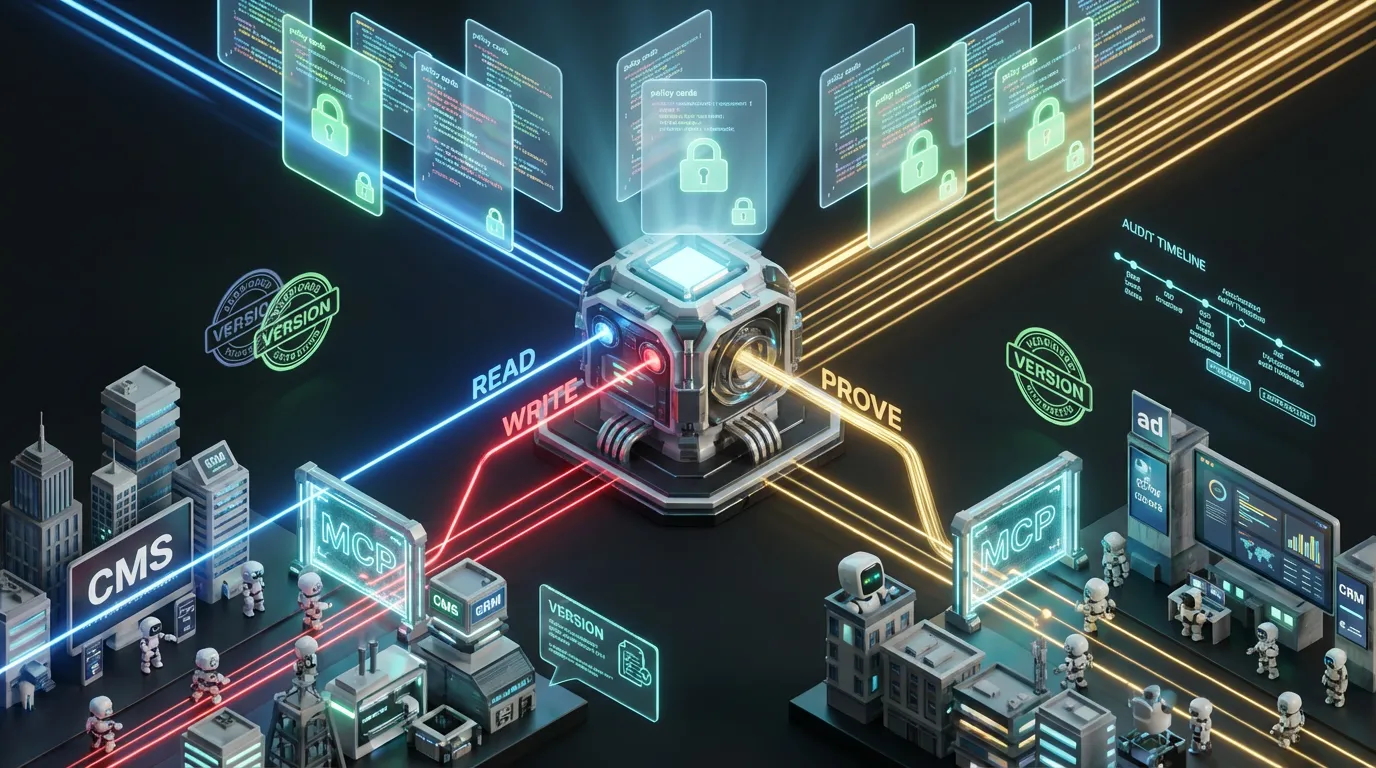

Abstention in the new marketing automation stack: what it actually means

Abstention is not “refuse by policy.” That’s table stakes. True abstention is an uncertainty response: the system says, “Not enough evidence here, stopping.” The right stack makes abstention an actionable, auditable decision, not just a sad error string. If your abstention module emits a structured object, your ops team actually has something to route:

```json
{
  "decision": {
    "action": "abstain",
    "reason_code": "insufficient_evidence",
    "risk_tier": "high",
    "blocked_steps": ["publish_to_cms"],
    "needs": [
      {"type": "source", "detail": "Numeric claim requires approved source_id"},
      {"type": "human_review", "detail": "Offer language implies guarantee"}
    ]
  }
}
```

That object is the dividing line between “we caught it in QA” and “why are we trending under #WorstAdCopyEver?”
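Here is one way to make that object first-class in code: a minimal Python sketch, assuming you control the module that emits decisions. The field names mirror the JSON example; the `Need` and `Decision` classes, the `ROUTES` table, and its queue names are hypothetical, included only to show how a reason code can drive routing instead of ending up in a log nobody reads.

```python
# Structured abstention decision plus reason-code routing.
# Class names, ROUTES, and queue names are hypothetical illustrations.
import json
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class Need:
    type: str    # what is missing, e.g. "source" or "human_review"
    detail: str  # human-readable explanation for the reviewer


@dataclass
class Decision:
    action: str                         # "proceed" or "abstain"
    reason_code: Optional[str] = None   # e.g. "insufficient_evidence"
    risk_tier: str = "low"
    blocked_steps: list[str] = field(default_factory=list)
    needs: list[Need] = field(default_factory=list)

    def to_json(self) -> str:
        """Serialize in the same shape as the JSON example."""
        return json.dumps({"decision": asdict(self)}, indent=2)


# Hypothetical routing table: reason code -> ops queue name.
ROUTES = {
    "insufficient_evidence": "claims-review",
    "low_confidence": "editor-review",
}


def route(decision: Decision) -> str:
    """Send abstentions to a queue by reason code; unknown codes go to triage."""
    if decision.action != "abstain":
        return "publish-pipeline"
    return ROUTES.get(decision.reason_code or "", "ops-triage")


if __name__ == "__main__":
    example = Decision(
        action="abstain",
        reason_code="insufficient_evidence",
        risk_tier="high",
        blocked_steps=["publish_to_cms"],
        needs=[
            Need("source", "Numeric claim requires approved source_id"),
            Need("human_review", "Offer language implies guarantee"),
        ],
    )
    print(route(example))
    print(example.to_json())
```

Unknown reason codes fall through to a catch-all triage queue rather than being dropped, so a new failure mode still lands in front of a person.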

The research world now agrees selective generation is the future

The past few months have brought a new consensus, both in fresh preprints and in product launches: models should be trained not just to answer, but to know when not to answer. Most notably, recent work on learning conformal abstention policies combines conformal prediction with adaptive risk policies to help models hold their tongues under distribution shift. Why does this matter for your bottom line? Because real-world inputs are weird, your funnel changes daily, and classic confidence thresholds tuned in a sandbox are brittle at best and hazardous at worst.

Adaptive abstention isn’t optional. It’s the only sustainable alternative to rolling the dice on every automation run.
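For a feel of what “conformal” buys you, here is a sketch of plain split conformal prediction used as an abstention rule. This is a generic, well-known baseline, not the adaptive policy from the research mentioned above. It assumes a classifier that outputs label probabilities (the CRM lifecycle labels are illustrative) and a held-out calibration set recording the probability the model gave the correct label.

```python
# Split conformal prediction as an abstention rule (generic baseline,
# not the adaptive method from the cited research).
import math
from typing import Optional


def calibrate(cal_true_label_probs: list[float], alpha: float) -> float:
    """Return the conformal threshold qhat from held-out calibration data.

    Each entry is the model's probability for the correct label on one
    calibration example; the nonconformity score is 1 - that probability.
    """
    scores = sorted(1.0 - p for p in cal_true_label_probs)
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        return math.inf  # too little calibration data: no label can be ruled out
    return scores[k - 1]


def prediction_set(probs: dict[str, float], qhat: float) -> set[str]:
    """Labels whose nonconformity score is within the threshold."""
    return {label for label, p in probs.items() if 1.0 - p <= qhat}


def decide(probs: dict[str, float], qhat: float) -> tuple[str, Optional[str]]:
    """Answer only when exactly one label survives; otherwise abstain."""
    candidates = prediction_set(probs, qhat)
    if len(candidates) == 1:
        return "answer", next(iter(candidates))
    return "abstain", None
```

The system answers only when exactly one label survives the threshold and abstains otherwise. The coverage guarantee behind `qhat` assumes live traffic is exchangeable with the calibration data; distribution shift breaks that assumption, which is precisely why adaptive abstention policies are getting attention.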

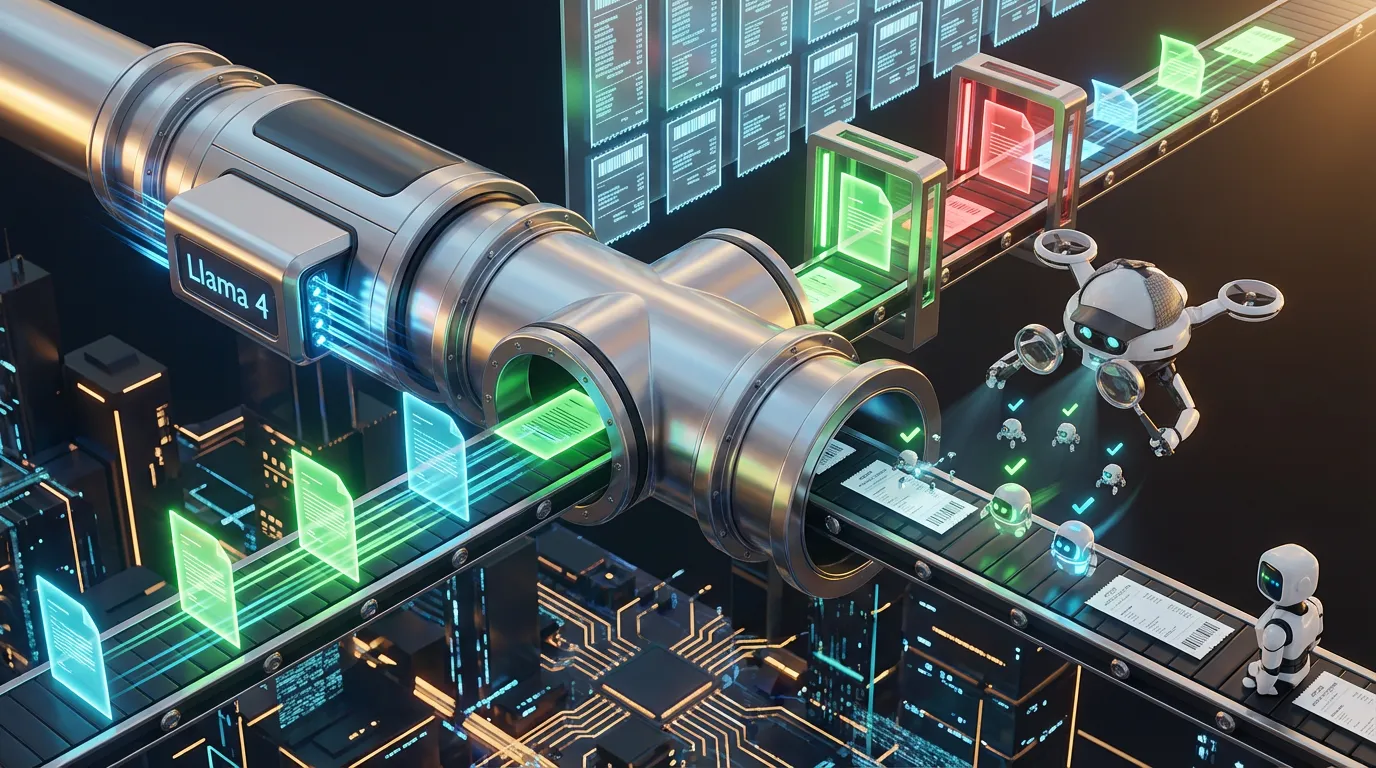

The abstention stack: five layers for real-world safety

To make abstention real, not just a last-mile patch, you need a pipeline where risk is managed upstream. Here’s a practical stack for marketing and content ops:

| Layer | What it checks | On fail |
|---|---|---|
| Deterministic critics | Schemas, URLs, required fields, forbidden terms | Block, auto-fix, never publish |
| Evidence critics | Claims matched to approved sources, compliance, product truth | Abstain and request proof |
| Uncertainty signals | Low output confidence, high entropy, model disagreement | Route to safer model or human |
| Action gates | Publish permissions, budget and rate limits | Hold, no production writes |
| Receipts | Trace inputs, version diffs, approvals | Log, notify, enable rollback |
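The five layers map naturally onto an ordered list of checks. The Python sketch below is a minimal illustration, not a reference design: the four gate functions, the 0.8 confidence threshold, and `APPROVED_CLAIMS` are hypothetical stand-ins, and auto-fixing is left out. Gates run in order and stop at the first failure, while the receipts layer records every run, pass or fail.

```python
# Five-layer abstention stack: four gates in order, receipts always written.
# Gate logic, thresholds, and APPROVED_CLAIMS are hypothetical stand-ins.
from dataclasses import dataclass
from typing import Callable


@dataclass
class LayerResult:
    layer: str
    passed: bool
    on_fail: str  # action to take if this layer fails


APPROVED_CLAIMS = {"free shipping over $50"}


def deterministic_critic(asset: dict) -> LayerResult:
    ok = bool(asset.get("headline")) and asset.get("url", "").startswith("https://")
    return LayerResult("deterministic", ok, "block")


def evidence_critic(asset: dict) -> LayerResult:
    ok = all(claim in APPROVED_CLAIMS for claim in asset.get("claims", []))
    return LayerResult("evidence", ok, "abstain_request_proof")


def uncertainty_signal(asset: dict) -> LayerResult:
    ok = asset.get("confidence", 0.0) >= 0.8
    return LayerResult("uncertainty", ok, "route_to_human")


def action_gate(asset: dict) -> LayerResult:
    ok = asset.get("target") != "production" or bool(asset.get("can_publish", False))
    return LayerResult("action_gate", ok, "hold")


GATES: list[Callable[[dict], LayerResult]] = [
    deterministic_critic, evidence_critic, uncertainty_signal, action_gate,
]


def run_stack(asset: dict, receipts: list[dict]) -> str:
    """Run gates in order, stop at the first failure, and always write a receipt."""
    action = "publish"
    results = []
    for gate in GATES:
        result = gate(asset)
        results.append(result)
        if not result.passed:
            action = result.on_fail
            break
    # Receipts layer: record what ran and why, pass or fail.
    receipts.append({
        "asset_id": asset.get("id"),
        "action": action,
        "layers": [(r.layer, r.passed) for r in results],
    })
    return action
```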

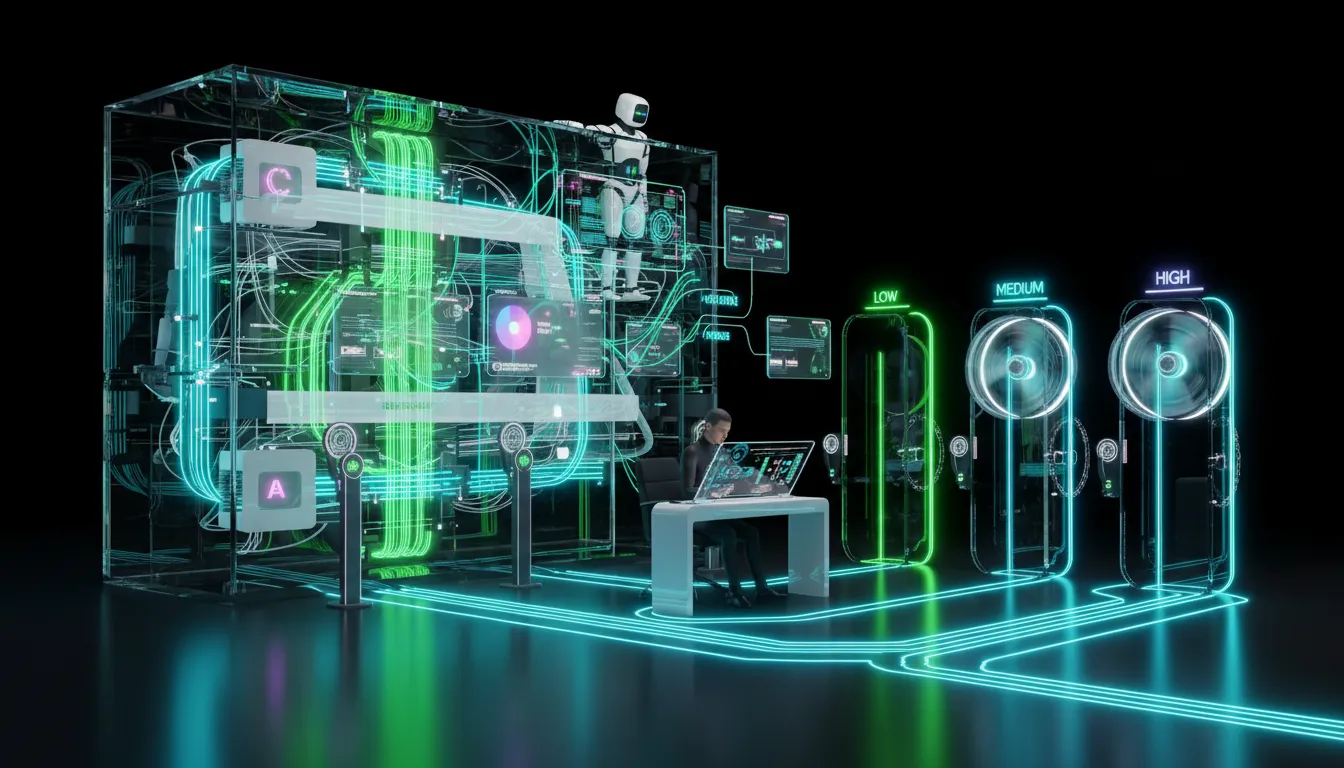

Where teams get abstention wrong

Too many teams see abstention as a binary: answer or refuse. But that’s 2023 thinking. The smarter approach is dynamic risk routing, which calibrates your flow to the consequences:

- Low risk: generate, validate, publish

- Medium risk: generate, validate, hold for quick diff review

- High risk: generate, validate, abstain unless there’s proof attached

This logic is already central to workflow firewalls and control towers. Abstention just adds a kill switch before the machine can generate a high-visibility crisis.
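Consequence-based routing can be this small. The Python sketch assumes each asset already carries a type that maps to a tier; the `RISK_BY_ASSET_TYPE` table and the action names are hypothetical. One design choice worth copying: unknown asset types default to high risk, so the router fails closed.

```python
# Consequence-based routing for the low / medium / high tiers.
# RISK_BY_ASSET_TYPE and the action names are hypothetical.
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


RISK_BY_ASSET_TYPE = {
    "social_caption": Risk.LOW,
    "lifecycle_email": Risk.MEDIUM,
    "pricing_claim": Risk.HIGH,
}


def route_by_risk(asset_type: str, critics_passed: bool, has_proof: bool) -> str:
    """Return the next action for a generated asset after validation."""
    risk = RISK_BY_ASSET_TYPE.get(asset_type, Risk.HIGH)  # unknown types fail closed
    if not critics_passed:
        return "block"                   # validation failed at any tier
    if risk is Risk.LOW:
        return "publish"
    if risk is Risk.MEDIUM:
        return "hold_for_diff_review"
    if not has_proof:
        return "abstain"                 # high risk, no proof attached
    return "hold_for_diff_review"        # assumption: proven high-risk work still gets a look
```

The tier list only says high-risk work should abstain without proof; sending proven high-risk assets to a diff review anyway is this sketch’s own conservative assumption.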

Abstention is not just for AI PhDs: it’s table stakes for content ops

Here are high-stakes marketing workflows where abstention saves your brand and your budget:

1) Dynamic landing page generation from product feeds

- AI excels at: assembling blocks, spinning headlines, segmenting content

- Abstain on: price, performance, or guarantees lacking fully approved sources

2) Multichannel campaigns from a single brief

- AI excels at: drafting email, social, and paid ad variants from one prompt

- Abstain on: claims that morph across platforms or lack a source in your claims registry

3) CRM enrichment and lead scoring

- AI excels at: summarizing calls, guessing intent, suggesting plays

- Abstain on: writing to lifecycle stages, churn risk, or qualification gates unless the evidence is airtight
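All three workflows share one check: does every risky claim have an approved source? Below is a minimal Python evidence critic under loud assumptions: claims arrive pre-split with an optional `source_id`, the `REGISTRY` dictionary stands in for your claims registry, and the `RISKY` pattern (prices, percentages, guarantee language) is a deliberately crude illustration.

```python
# Evidence critic: risky claims must match an approved registry entry.
# REGISTRY, the Claim shape, and the RISKY pattern are hypothetical.
import re
from dataclasses import dataclass
from typing import Optional

# Prices, percentages, and guarantee language need a source.
RISKY = re.compile(r"\$\s?\d|\d+(?:\.\d+)?\s?%|\bguarantee", re.IGNORECASE)


@dataclass
class Claim:
    text: str
    source_id: Optional[str] = None


# Hypothetical claims registry: source_id -> approved claim wording.
REGISTRY = {
    "SRC-101": "Plans start at $29 per month.",
}


def unsupported_claims(claims: list[Claim]) -> list[Claim]:
    """Return risky claims that lack an approved, exactly matching source."""
    missing = []
    for claim in claims:
        if not RISKY.search(claim.text):
            continue  # not a claim type this critic gates
        approved = REGISTRY.get(claim.source_id or "")
        if approved is None or approved.strip() != claim.text.strip():
            missing.append(claim)
    return missing
```

Exact text matching is intentionally strict: if the copy drifts from the approved wording, the critic flags it, which is the “claims that morph across platforms” failure in miniature.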

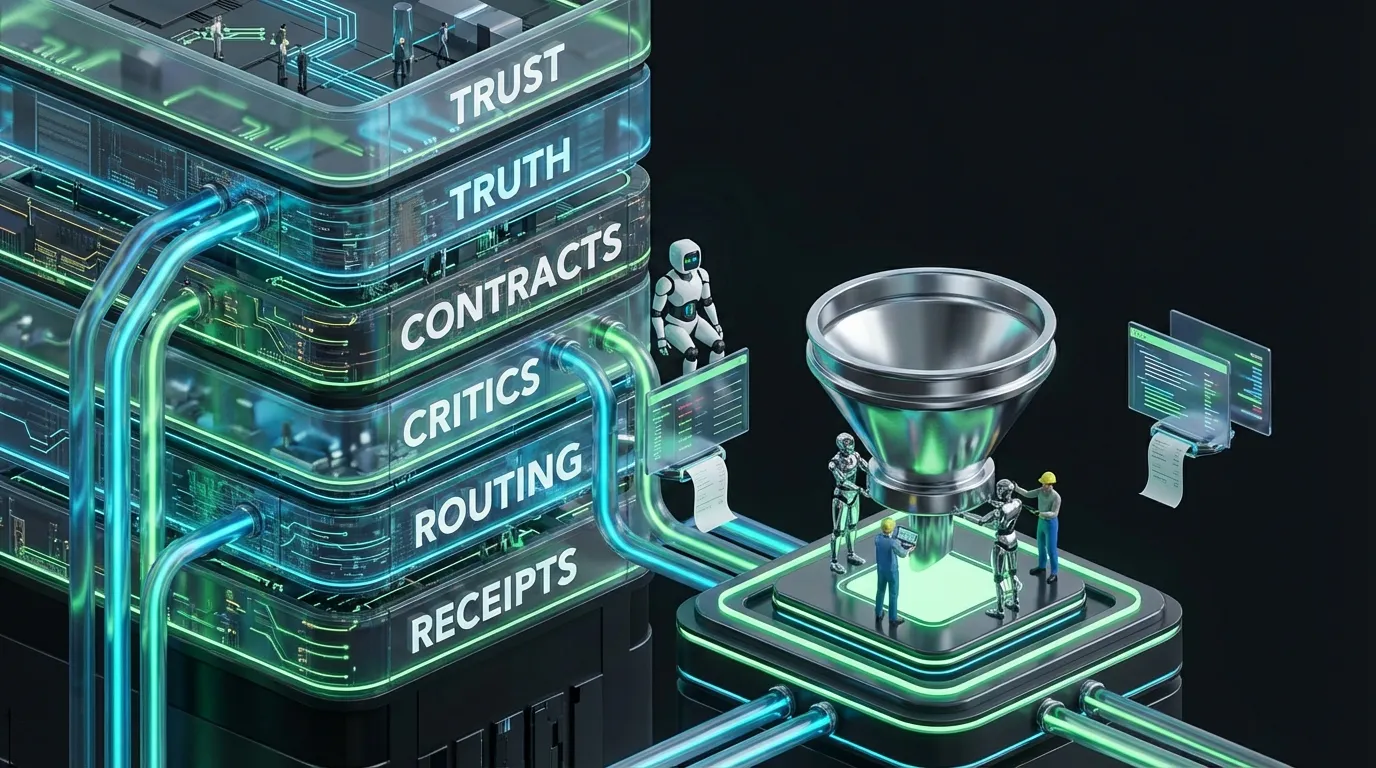

How abstention fits into the COEY governance frame

This is not a new philosophy. It’s tightening the bolts on the automation toolbox you already need:

- Contracts: Specify what a “valid” output must look like

- Critics: Define your bar for “safe enough”

- Receipts: Make every action traceable and reversible

- Budgets: Clamp endless retries and tool sprawl

- Abstention: Decide when silence is the best possible output
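Contracts and deterministic critics are the cheapest layer to automate, so they are worth making concrete. A minimal Python sketch, assuming a flat dictionary per asset; the field names, the 60-character headline limit, and the forbidden terms are hypothetical examples, not recommendations. The standard library’s `urllib.parse.urlparse` handles the URL check.

```python
# Output contract enforced by a deterministic critic.
# Field names, limits, and forbidden terms are hypothetical.
from urllib.parse import urlparse

CONTRACT = {
    "required": {"headline": str, "body": str, "cta_url": str},
    "max_headline_chars": 60,
    "forbidden_terms": ["guaranteed roi", "risk-free", "#1"],
}


def contract_violations(asset: dict) -> list[str]:
    """Return human-readable violations; an empty list means the contract holds."""
    errors = []
    for name, expected in CONTRACT["required"].items():
        if not isinstance(asset.get(name), expected):
            errors.append(f"missing or wrong type: {name}")
    headline = asset.get("headline")
    if isinstance(headline, str) and len(headline) > CONTRACT["max_headline_chars"]:
        errors.append("headline too long")
    url = asset.get("cta_url")
    if isinstance(url, str):
        parsed = urlparse(url)
        if parsed.scheme != "https" or not parsed.netloc:
            errors.append(f"bad cta_url: {url}")
    # Case-insensitive substring scan over the customer-facing text.
    text = " ".join(str(asset.get(k, "")) for k in ("headline", "body")).lower()
    for term in CONTRACT["forbidden_terms"]:
        if term in text:
            errors.append(f"forbidden term: {term}")
    return errors
```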

If you want a more expansive governance blueprint, start with “Your Stack Needs an AI Control Plane,” then layer in “AI Workflow Firewalls: Marketers’ New Must-Have.”

How to route without killing velocity

“Abstention” sounds like it means “stop the line.” Done well, it keeps you moving by cutting down on expensive rework, rollbacks, and public fire drills. Here’s a production-ready pattern that any low-code stack can implement:

```
[Trigger]
  offer_updated | brief_submitted | segment_changed

[Context]
  pull: offer contract | approved claims | required consent | brand rules

[Generate]
  create: structured assets

[Critics]
  schema_valid | links_ok | claims_have_sources | forbidden_terms

[Abstention Gate]
  if claims_missing_sources OR low confidence OR workflow_chain_too_deep:
      hold + request evidence + route to human
  else:
      proceed

[Write]
  first to staging, only to production by risk level

[Receipts]
  track: inputs + diffs + approvals + spend
```
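The [Abstention Gate] and [Write] steps translate almost directly into code. In this Python sketch, `MIN_CONFIDENCE`, `MAX_CHAIN_DEPTH`, and the `GateInput`/`GateOutput` shapes are hypothetical; how you compute confidence (critic scores, model disagreement, a conformal check) is left open.

```python
# [Abstention Gate] and [Write] steps from the flow.
# Thresholds and the GateInput/GateOutput shapes are hypothetical.
from dataclasses import dataclass, field

MIN_CONFIDENCE = 0.8   # below this, the output counts as "low confidence"
MAX_CHAIN_DEPTH = 4    # more chained tool calls than this is "too deep"


@dataclass
class GateInput:
    claims_missing_sources: list[str]  # claims the critics could not source
    confidence: float                  # however your stack scores confidence
    chain_depth: int                   # tool calls chained so far


@dataclass
class GateOutput:
    action: str                                   # "proceed" or "hold"
    reasons: list[str] = field(default_factory=list)
    next_steps: list[str] = field(default_factory=list)


def abstention_gate(x: GateInput) -> GateOutput:
    """Hold if any abstention condition fires; report every reason that did."""
    reasons = []
    if x.claims_missing_sources:
        reasons.append("claims_missing_sources")
    if x.confidence < MIN_CONFIDENCE:
        reasons.append("low_confidence")
    if x.chain_depth > MAX_CHAIN_DEPTH:
        reasons.append("workflow_chain_too_deep")
    if reasons:
        return GateOutput("hold", reasons, ["request_evidence", "route_to_human"])
    return GateOutput("proceed")


def write_targets(gate: GateOutput, risk_tier: str) -> list[str]:
    """[Write] step: staging first; production only for low-risk assets."""
    if gate.action != "proceed":
        return []
    return ["staging", "production"] if risk_tier == "low" else ["staging"]
```

The gate collects every failing reason instead of stopping at the first, so the human who picks up the hold sees the full picture in one pass. Medium- and high-risk assets stop at staging here; promotion after review happens outside the sketch.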

Budgets and retry caps: the boring discipline that saves your runway

Endless abstention loops are as bad as endless retries. Your AI can burn through tokens and time chasing confidence that isn’t coming. You need rules:

- Cap retries per stage

- Hard-stop the number of tool invocations

- Limit premium model escalations per asset

- Mandate human handoff on top-tier failure

This is just applying the same discipline you’d use in any automation, only now the guardrail is silence, not more guessing.
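Those four rules fit in one small object per asset. A minimal Python sketch; the cap values and the `AssetBudget`, `BudgetExceeded`, and `run_stage` names are hypothetical. Hitting any cap turns into a human handoff instead of another silent retry.

```python
# Per-asset budgets: retry, tool-call, and premium-escalation caps.
# Cap values and all names here are hypothetical.
from dataclasses import dataclass, field
from typing import Callable, Optional


class BudgetExceeded(Exception):
    """Raised when a cap is hit; the caller should hand off to a human."""


@dataclass
class AssetBudget:
    max_retries_per_stage: int = 2
    max_tool_calls: int = 10
    max_premium_escalations: int = 1
    retries: dict[str, int] = field(default_factory=dict)
    tool_calls: int = 0
    premium_escalations: int = 0

    def retry(self, stage: str) -> None:
        used = self.retries.get(stage, 0)
        if used >= self.max_retries_per_stage:
            raise BudgetExceeded(f"retry cap hit at stage {stage!r}")
        self.retries[stage] = used + 1

    def tool_call(self) -> None:
        if self.tool_calls >= self.max_tool_calls:
            raise BudgetExceeded("tool-call cap hit")
        self.tool_calls += 1

    def escalate_to_premium(self) -> None:
        if self.premium_escalations >= self.max_premium_escalations:
            raise BudgetExceeded("premium escalation cap hit")
        self.premium_escalations += 1


def run_stage(stage: str, attempt: Callable[[], Optional[str]], budget: AssetBudget) -> str:
    """Call attempt() until it returns a result; any cap becomes a human handoff."""
    try:
        while True:
            budget.tool_call()
            result = attempt()
            if result is not None:
                return result
            budget.retry(stage)
    except BudgetExceeded as exc:
        return f"human_handoff: {exc}"
```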

Fast decision table: for the “should we ship it” crowd

| Question | Signal you need | Best move |
|---|---|---|
| Can we trust this to publish? | Source evidence, all critics green | Auto-publish (low risk only) |
| When should the pipeline halt? | Missing proof, model uncertainty, high risk | Abstain, structured reason code |
| How do we keep velocity? | Humans only on flagged exceptions | Risk-level routing and diff approvals |

The COEY take

Everyone wants self-driving marketing. Nobody wants to explain to Legal why some AI just promised a “guaranteed ROI” on your homepage. The right move isn’t less automation. It’s better automation. Abstention is how you make automation-first stacks resilient, not reckless. It lets you ship at speed, but only when what you ship can be proven. It keeps your team out of fire drills and puts “AI safety” on the roadmap as an actual operational behavior:

If your AI cannot shut up, it cannot be trusted to ship.

Welcome to the automation stack for actual grown-ups. Selective silence. Operate at scale without scaling the mess.