Tencent HY-MT1.5 Brings Offline Translation to Workflows

January 14, 2026

Tencent just released HY-MT1.5-1.8B, a compact multilingual translation model that is explicitly built for low-latency, low-memory deployment. The headline is not “new MT model exists” (we have enough of those). The headline is: translation is getting small enough, fast enough, and open enough to move from “buy an API” to “ship it inside your product or pipeline” without begging procurement or praying your latency holds up in the real world.

HY-MT1.5-1.8B sits in Tencent’s Hunyuan MT 1.5 family and targets a very specific pain: translation is everywhere in modern content ops (localization, subtitles, customer support macros, product pages, UGC moderation), but the default implementation is still a metered cloud endpoint. That’s fine until you care about speed, privacy, offline access, or unit economics at scale. This release is Tencent saying: you can own the capability.

If you want broader context on Tencent’s current open-weights posture across media categories, see our earlier COEY coverage: HunyuanVideo 1.5 Makes Local AI Video Practical.

What Tencent actually shipped

HY-MT1.5-1.8B is a 1.8B parameter neural machine translation model designed to cover multilingual translation while staying deployable on constrained hardware. The model is positioned for edge devices and low-latency cloud inference, not just research.

Core capabilities called out across the release ecosystem:

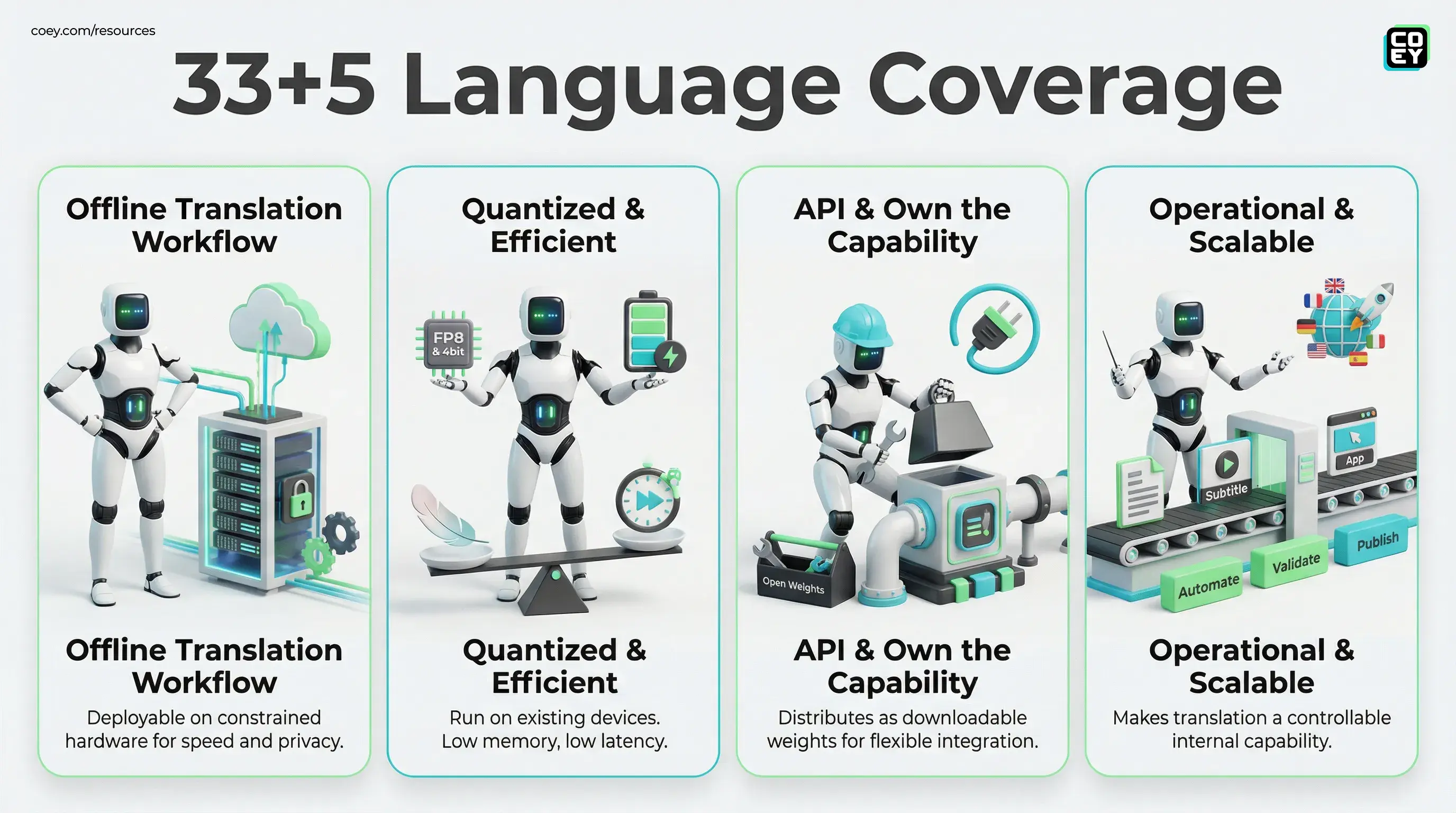

- Language coverage: Tencent describes support for 33 languages plus 5 Chinese dialects or variants.

- Efficiency posture: It is framed for on-device or edge scenarios, especially when paired with quantization. Multiple writeups cite deployments in the roughly 1 GB memory class after quantization, with very low per-request latency for short snippets. Your actual numbers will depend on runtime, quant level, and hardware.

- Workflow features beyond raw MT: The model is promoted with promptable patterns for terminology control, context-aware translation, and format or tag preservation.

Translation in production is not “convert words.” It is “keep my formatting, keep my terms consistent, and do not invent new product names.” The framing signals Tencent knows that.
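
One model-agnostic way to enforce "keep my formatting, keep my terms" is to mask tags and protected terms before translation and restore them afterward. The sketch below is an illustration under assumptions, not Tencent's documented prompting pattern: `protect`, `restore`, `translate_protected`, the `[[n]]` placeholder format, and the `translate` callable are all hypothetical, and a real model may need a different placeholder style to leave the markers untouched.

```python
import re
from typing import Callable

TAG_RE = re.compile(r"<[^>]+>")           # naive HTML/XML tag matcher
PLACEHOLDER_RE = re.compile(r"\[\[(\d+)\]\]")


def protect(text: str, keep_terms: list[str]) -> tuple[str, list[str]]:
    """Swap tags and do-not-translate terms for numbered placeholders.

    Assumes the source text does not already contain "[[n]]" markers.
    """
    # Longest terms first so "Acme Cloud Pro" wins over "Acme Cloud".
    terms = sorted(keep_terms, key=len, reverse=True)
    pattern = re.compile("|".join([TAG_RE.pattern] + [re.escape(t) for t in terms]))
    saved: list[str] = []

    def stash(match: re.Match) -> str:
        saved.append(match.group(0))
        return f"[[{len(saved) - 1}]]"

    return pattern.sub(stash, text), saved


def restore(text: str, saved: list[str]) -> str:
    """Put the original tags and terms back in place of the placeholders."""
    return PLACEHOLDER_RE.sub(lambda m: saved[int(m.group(1))], text)


def translate_protected(
    text: str, keep_terms: list[str], translate: Callable[[str], str]
) -> str:
    """Translate with masking; `translate` is any callable (hypothetical here)."""
    masked, saved = protect(text, keep_terms)
    output = translate(masked)
    found = sorted(int(i) for i in PLACEHOLDER_RE.findall(output))
    if found != list(range(len(saved))):
        # A dropped or duplicated marker means formatting cannot be trusted.
        raise ValueError("placeholders were dropped or altered; route to review")
    return restore(output, saved)
```

The check before `restore` is the important design choice: if the model drops or duplicates a marker, the job fails loudly instead of shipping broken markup or a mistranslated product name.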

Why this matters now (and why it is not just hype)

The machine translation world has a familiar pattern: bigger models get better, but they also get heavier, slower, and more expensive to operationalize. HY-MT1.5-1.8B is the opposite bet: good-enough quality with high throughput and local deployability. That matters because most orgs do not lose money on “translation quality that is 2% worse.” They lose money on:

- Latency: translation inside an app or chat flow cannot feel like a network roundtrip tax.

- Cost scaling: per-character pricing is fine until you automate localization at volume.

- Privacy plus compliance constraints: plenty of teams cannot (or should not) send customer, legal, or pre-launch content to third-party services.

- Workflow friction: if translation requires humans to copy and paste through a UI, it is not automation. It is just faster suffering.

Compact open models shift translation from a service you rent to a capability you integrate, more like image resizing or search indexing than a special project.

Quantization is the deployment unlock

The real operational story here is the availability of quantized variants. HY-MT1.5-1.8B is available in multiple builds across the ecosystem, including official FP8 and community GGUF distributions, which is how you get from “cool checkpoint” to “this runs on the machines we already own.”

One widely used GGUF packaging is available here: mradermacher/HY-MT1.5-1.8B-GGUF.

Why creators and marketers should care about quantization (non-technical translation): it is what makes translation cheap enough and fast enough to run continuously in the background of your content pipeline without your cloud bill turning into a horror story.

| Deployment option | What it optimizes for | Where it is most realistic |
|---|---|---|
| Full precision | Quality headroom | Server GPUs, batch localization |
| FP8 | Speed plus lower memory | Low-latency cloud endpoints |
| INT4 or GGUF builds | Small footprint plus edge viability | Local apps, offline workflows |
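
The rows in the table above map to simple arithmetic: the weights take roughly parameters times bits per weight, divided by 8. The sketch below is a back-of-envelope estimate, not a measurement. It assumes "full precision" means 16-bit weights and treats 4-bit builds as exactly 4 bits per weight. Real quantized files carry extra scale metadata, and runtime memory (KV cache, activations) comes on top.

```python
def weight_footprint_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 1.8e9  # parameter count taken from the model name

# Bit widths are assumptions for illustration, not published figures.
for label, bits in [("16-bit", 16), ("FP8", 8), ("4-bit", 4)]:
    print(f"{label:>6}: ~{weight_footprint_gb(N_PARAMS, bits):.1f} GB")
```

Under these assumptions the weights alone come to about 3.6 GB, 1.8 GB, and 0.9 GB respectively, which is consistent with the roughly 1 GB class cited for quantized deployments.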

API availability: open weights, not a hosted endpoint

This is the part that decides whether it plugs into real workflows.

Tencent is distributing HY-MT1.5-1.8B as downloadable weights on Hugging Face, not primarily as “here is a paid translation API.” That means:

- There is no single official Tencent-hosted API endpoint for HY-MT1.5-1.8B on the model page that you can paste into Zapier and call it done.

- It is still absolutely API-capable, because anything you can run can be wrapped as an internal service your stack calls.

- Automation readiness depends on you: if you can host models (or use a hosting partner), you can make translation a first-class pipeline component.

Open weights are the “own your leverage” play. But they come with a reality tax: you need deployment plumbing, monitoring, retries, rate control, and a review gate when the output matters.
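
To make "wrap it as an internal service" concrete, here is a minimal sketch using only Python's standard library. It is not an official Tencent interface: the `/translate` path, the JSON field names, `build_server`, and the `translate` callable are all assumptions, and the callable stands in for whatever runtime actually hosts the model. Retries, rate control, and monitoring are deliberately left out.

```python
import json
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
from typing import Callable


def make_handler(translate: Callable[[str, str], str]):
    """Build a request handler around a translate(text, target_lang) callable."""

    class TranslateHandler(BaseHTTPRequestHandler):
        def do_POST(self):
            if self.path != "/translate":  # hypothetical route
                self.send_error(404)
                return
            try:
                length = int(self.headers.get("Content-Length", 0))
                body = json.loads(self.rfile.read(length))
                text, target = body["text"], body["target_lang"]
            except (ValueError, KeyError, TypeError):
                self.send_error(400, "expected JSON with 'text' and 'target_lang'")
                return
            result = {"translation": translate(text, target)}
            payload = json.dumps(result).encode("utf-8")
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(payload)))
            self.end_headers()
            self.wfile.write(payload)

        def log_message(self, format, *args):
            pass  # silence default stderr logging; plug in real monitoring here

    return TranslateHandler


def build_server(
    translate: Callable[[str, str], str], host: str = "127.0.0.1", port: int = 8080
) -> ThreadingHTTPServer:
    """Create (but do not start) the server; call .serve_forever() to run it."""
    return ThreadingHTTPServer((host, port), make_handler(translate))
```

Calling `build_server(my_translate).serve_forever()` would expose the model to anything in your stack that can send an HTTP POST, which is the point: once the endpoint exists, pipelines and internal tools can treat translation like any other service.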

Where this changes creative operations fastest

HY-MT1.5-1.8B is especially relevant for teams that already produce content at volume and keep tripping over translation bottlenecks. Not because it is magical, but because it is operationally shapable.

1) Localization as a background process

If your content pipeline includes blog posts, product releases, landing pages, lifecycle emails, or app store updates, you can translate on publish events and route the results for human review. The human stays in charge of nuance. The machine does the repetitive first pass.

2) Subtitle and transcript translation at scale

Subtitle translation becomes dramatically more practical when you can run inference locally or in a controlled environment, especially for sensitive footage, pre-launch campaigns, or client work that you do not want leaving your system.

3) In-app translation without cloud dependency

For product teams, on-device translation is a UX win (latency) and a business win (cost predictability). It also unlocks offline experiences, which are still a big deal outside perfect Wi-Fi fantasyland.

Real-world readiness: what is solid vs what to pressure-test

This release is usable, but usable does not mean “set it and forget it.” Here is the pragmatic split.

Looks genuinely ready

- Open distribution: predictable access, easy evaluation, easier internal standardization.

- Deployment flexibility: FP8 and GGUF availability signal real deployment intent.

- Production-friendly behaviors: terminology control and format-preserving prompting hint at workflow alignment.

Still needs validation in your stack

- Brand terminology consistency: test your product names, slogans, legal phrases, and “never translate this” terms.

- Domain accuracy: for marketing copy, legal terms, and medical or finance claims, do not assume general MT equals safe MT.

- Automation governance: you need routing rules (what auto-ships vs what gets reviewed) or you will scale mistakes with impressive efficiency.
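
The validation points above can be turned into explicit routing rules. The sketch below is one possible shape, with every name hypothetical: `TranslationJob`, `route`, the domain labels, and the two outcomes are assumptions for illustration, and real rules would be tuned to your own content and risk tolerance.

```python
from dataclasses import dataclass

# Assumed high-risk categories; adjust to your own compliance requirements.
HIGH_RISK_DOMAINS = {"legal", "medical", "finance"}


@dataclass
class TranslationJob:
    domain: str
    source_text: str
    translated_text: str
    protected_terms: tuple[str, ...] = ()  # "never translate this" terms


def route(job: TranslationJob) -> str:
    """Return "auto_publish" or "human_review" for a finished translation."""
    if job.domain in HIGH_RISK_DOMAINS:
        return "human_review"
    if not job.translated_text.strip():
        return "human_review"  # empty output is never safe to ship
    # A protected term present in the source must survive verbatim.
    missing = [
        term
        for term in job.protected_terms
        if term in job.source_text and term not in job.translated_text
    ]
    return "human_review" if missing else "auto_publish"
```

For example, a blog post whose product name survives translation would auto-publish, while anything tagged `legal`, or any output that lost a protected term, would wait for a human.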

Bottom line

HY-MT1.5-1.8B is Tencent pushing machine translation toward deployable infrastructure, not just a cloud feature. For executives, that means translation can become a controllable internal capability with better latency and more predictable economics. For marketing and creative ops, it means localization, subtitling, and multilingual repurposing can move closer to a real automation loop: generate, validate, review, publish, without translation living behind someone else’s UI and pricing model.

It is not a magic button. But it is the kind of release that makes human plus machine collaboration scale: humans bring intent and taste; machines remove the grind and keep the pipeline moving.