Anthropic Claude Opus 4.6 Pushes Long-Context + “Agent Teams” Into Real Workflow Territory

February 5, 2026

Anthropic’s new Claude Opus 4.6 is less “new model drop” and more “okay, so you’re really trying to run a company on this thing.” The headline features are a 1M token context window (beta) and Agent Teams inside Claude Code, two upgrades aimed at the part of AI that actually matters: keeping work coherent while it scales.

Big shift: the competitive edge isn’t “best writing” anymore. It’s durable memory + parallel work that can plug into real systems without your team babysitting every step.

What changed in Opus 4.6

Opus 4.6 is positioned as a drop-in upgrade from Opus 4.5 with the same familiar pay-per-token economics for standard usage, while adding a long-context tier that changes what “one session” can realistically contain. In practice, it’s a bet on long-running, high-complexity projects, the kind where context loss is the real productivity killer.

Two additions matter most:

- 1M token context (beta): enough room to keep entire product documentation sets, campaign histories, or large codebases “present” without constant chunking.

- Agent Teams (research preview): parallel sub-agents coordinating workstreams inside Claude Code, closer to a small internal team than a single assistant.

Early coverage has framed Agent Teams as a direct attempt to make Claude Code feel like a multi-person dev pod you can spin up on demand, not just a chat box with good vibes (TechCrunch).

Why 1M tokens is not just a flex

Long context is becoming the new horsepower number, but there’s a real operational unlock when it’s done right: you stop rewriting your company into every prompt.

With 200K-ish context, teams learned to survive with:

- external summaries

- RAG pipelines

- hand-built “brand voice” prompt blocks

- ritualistic copy/paste of the same docs every week

With 1M tokens, you can realistically keep a full working set in play: brand rules, claims registries, product specs, last quarter’s launch analysis, plus the actual work being produced. That’s not just convenience. That’s fewer workflow breakpoints, fewer handoffs, and fewer “it forgot the rule we set 10 minutes ago” failures.
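To make the “working set” idea concrete, here’s a minimal sketch of packing documents into a fixed token budget by priority. It assumes a rough 4-characters-per-token heuristic (a real tokenizer count will differ) and uses hypothetical document names and priorities. The point: anything that doesn’t fit gets reported, not silently dropped.

```python
from dataclasses import dataclass

# Rough heuristic (assumption): ~4 characters per token for English prose.
# Swap in a real tokenizer count for anything budget-critical.
CHARS_PER_TOKEN = 4


@dataclass
class Doc:
    name: str      # hypothetical label, e.g. "brand_rules"
    text: str
    priority: int  # lower number = more important, packed first


def estimate_tokens(text: str) -> int:
    """Approximate token count, rounding partial tokens up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def pack_working_set(docs: list[Doc], budget_tokens: int, reserve_tokens: int = 0):
    """Greedily include docs by priority until the budget, minus a reserve for the work itself, is used.

    A doc that doesn't fit is skipped; smaller, lower-priority docs can still be added after it.
    Returns (included_names, excluded_names, tokens_used).
    """
    available = budget_tokens - reserve_tokens
    included, excluded, used = [], [], 0
    for doc in sorted(docs, key=lambda d: d.priority):  # sorted() is stable for ties
        cost = estimate_tokens(doc.text)
        if used + cost <= available:
            included.append(doc.name)
            used += cost
        else:
            excluded.append(doc.name)  # report what was left out instead of hiding it
    return included, excluded, used
```

With toy inputs (a 10K-token brand guide, a 20K-token claims registry, 500K tokens of product specs, a 100K-token launch analysis), a 200K budget leaves the specs out of the set, while a 1M budget fits everything.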

Who actually benefits

Long context is most valuable in workflows where coherence is expensive:

- Marketing ops: multi-channel campaigns where one wrong claim or outdated pricing line becomes a screenshot that lives forever.

- Product marketing: deep specs + positioning docs + competitive notes = fewer contradictions across assets.

- Agencies: client-specific brand bibles and historical approvals staying in-thread, not scattered across Notion, Drive, and someone’s memory.

- Engineering + content teams: repo-wide changes and documentation generation without constantly reloading context.

Agent Teams: the “parallelism” moment

Agent Teams is the more strategic move. If 1M context is memory, Agent Teams is staffing.

Anthropic is effectively admitting something most teams already know: the best work isn’t one linear prompt chain. It’s multiple specialties running at once: drafting, validating, refactoring, testing, packaging, then merging into a coherent deliverable.

In Claude Code, Agent Teams means you can assign multiple agents distinct responsibilities and have them coordinate. Anthropic’s product leadership has described it as closer to a coordinated human team than a single assistant, emphasizing speed from parallel work (TechCrunch).
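Under the hood, “parallel work, then merge” is a fan-out/fan-in pattern. Here’s a minimal sketch with Python’s asyncio, assuming hypothetical `run_agent` stubs in place of real model or tool calls. It illustrates the pattern only and makes no claim about how Claude Code implements Agent Teams.

```python
import asyncio
import time


async def run_agent(role: str, task: str, delay: float) -> dict:
    """Hypothetical sub-agent stub; a real one would call a model or tool here."""
    await asyncio.sleep(delay)  # stands in for model/tool latency
    return {"role": role, "output": f"{role} result for: {task}"}


async def run_team(task: str, roles: dict[str, float]) -> tuple[list[dict], float]:
    """Fan one task out to role-specific agents concurrently, then fan the results back in.

    asyncio.gather returns results in the order the awaitables were passed,
    so results line up with the role order given. Also returns elapsed seconds.
    """
    start = time.perf_counter()
    results = await asyncio.gather(
        *(run_agent(role, task, delay) for role, delay in roles.items())
    )
    return list(results), time.perf_counter() - start


def merge(results: list[dict]) -> str:
    """Naive merge: concatenate by role. A real coordinator would reconcile conflicts."""
    return "\n".join(f"[{r['role']}] {r['output']}" for r in results)


if __name__ == "__main__":
    results, elapsed = asyncio.run(
        run_team("refactor the auth module", {"draft": 0.2, "test": 0.2, "review": 0.2})
    )
    print(merge(results))
    print(f"elapsed: {elapsed:.2f}s")
```

Because the stubs run concurrently, wall-clock time tracks the slowest agent rather than the sum: three 0.2-second agents finish in roughly 0.2 seconds, not 0.6.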

What “agent teams” looks like in creative ops

This isn’t just for developers. Marketing pipelines are already multi-agent in spirit (humans are the agents, Slack is the bus). The model version is simply faster and easier to automate if the guardrails exist.

- Agent A: drafts copy variants

- Agent B: enforces brand tone + banned phrases

- Agent C: checks claims against approved sources

- Agent D: formats output for CMS or ESP schemas

Done well, that’s not “AI replaces your team.” It’s AI turning your team into directors while machines run the repetitive production passes.
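The four-agent marketing pipeline can be sketched as a chain of gates. This is a minimal, deterministic stand-in: the banned-phrase list, claims registry, and CMS payload shape are all hypothetical, and in a real setup Agents A through D would be model calls rather than string checks.

```python
import json

# Hypothetical guardrail data; in practice this comes from your brand bible and claims registry.
BANNED_PHRASES = {"best-in-class", "guaranteed results"}
APPROVED_CLAIMS = {"reduces onboarding time", "integrates with Slack"}


def brand_check(text: str) -> list[str]:
    """Agent B stand-in: banned phrases found in the copy (case-insensitive), sorted."""
    lowered = text.lower()
    return sorted(p for p in BANNED_PHRASES if p in lowered)


def claims_check(claims: list[str]) -> list[str]:
    """Agent C stand-in: claims that are not in the approved registry."""
    return [c for c in claims if c not in APPROVED_CLAIMS]


def format_for_cms(text: str, claims: list[str]) -> str:
    """Agent D stand-in: JSON payload for a hypothetical CMS schema."""
    return json.dumps({"body": text, "claims": claims}, sort_keys=True)


def run_pipeline(variants: list[tuple[str, list[str]]]) -> tuple[list[str], list[dict]]:
    """Run each drafted variant (Agent A's output) through the gates in order.

    Only variants that pass both checks reach formatting; the rest come back
    with reasons so a human can decide what to do with them.
    """
    published, rejected = [], []
    for text, claims in variants:
        banned = brand_check(text)
        unapproved = claims_check(claims)
        if banned or unapproved:
            rejected.append({"copy": text, "banned": banned, "unapproved": unapproved})
            continue
        published.append(format_for_cms(text, claims))
    return published, rejected
```

The design choice that matters is ordering. Formatting (Agent D) only ever sees copy that cleared the brand and claims checks, and rejected variants come back with reasons attached.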

API availability and automation readiness

This is where the hype meets the grown-up question: can you actually wire it into your stack?

Claude API: the practical entry point

Anthropic’s developer ecosystem is mature enough that Opus 4.6 can slot into existing Claude integrations, and the Claude pricing structure remains familiar for standard usage. For teams already using Claude for content, coding, or agentic workflows, the biggest shift is that the “context budget” ceiling gets dramatically higher without needing new architecture for every use case.

1M context beta: real, but gated

The 1M window is in beta and not universally available. Anthropic’s docs indicate long-context access is gated, meaning teams should treat it as production-adjacent, not production-assumed, until they validate latency, limits, and cost behavior in their environment.

Translation for non-technical leaders: you can build with it, but you need a pilot before you bet your quarter on it.
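One way to keep the beta “production-adjacent” is to route around it by default. Here’s a minimal sketch, assuming a 200K standard window, a 1M beta window, output tokens counting against the same window, and a hypothetical `long_context_enabled` flag your pilot controls.

```python
from dataclasses import dataclass

# Assumed limits for illustration: 200K standard window, 1M beta window.
STANDARD_WINDOW = 200_000
LONG_CONTEXT_WINDOW = 1_000_000


@dataclass
class RouteDecision:
    path: str    # "standard", "long_context", or "chunked"
    reason: str


def choose_path(prompt_tokens: int, output_reserve: int, long_context_enabled: bool) -> RouteDecision:
    """Pick a context strategy, treating the 1M beta as opt-in rather than assumed.

    long_context_enabled is a hypothetical feature flag, flipped on only after the
    pilot has validated latency, rate limits, and cost.
    """
    needed = prompt_tokens + output_reserve  # assumes output shares the context window
    if needed <= STANDARD_WINDOW:
        return RouteDecision("standard", "fits in the standard window")
    if long_context_enabled and needed <= LONG_CONTEXT_WINDOW:
        return RouteDecision("long_context", "beta enabled and within 1M")
    # Fall back to the existing chunking/RAG path instead of failing the job.
    return RouteDecision("chunked", "beta unavailable or request too large")
```

If the beta is switched off, or a request outgrows it, jobs fall back to whatever chunking or RAG path you already run rather than failing outright.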

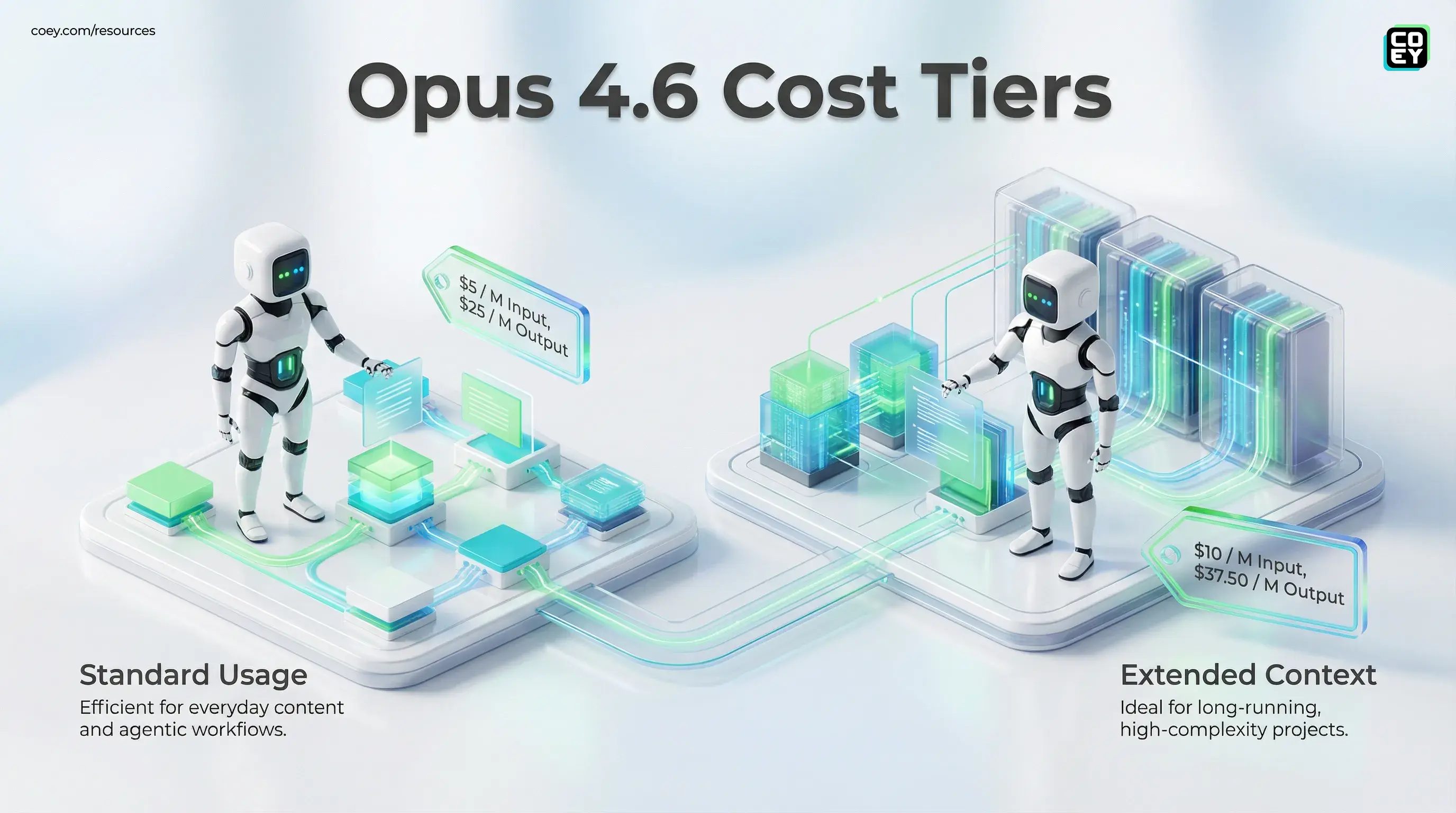

Pricing: the bill still matters

Opus pricing is still built on token economics, and long context can tempt teams into “just stuff everything in there” habits that spike costs. As of the current Claude pricing, Opus is listed at $5 per million input tokens and $25 per million output tokens for standard usage.
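The arithmetic is simple enough to sanity-check before anyone ships a workflow. Here’s a minimal cost calculator using the standard-usage rates quoted above. One assumption to flag: long-context beta requests may be billed differently, so confirm current pricing before relying on these numbers.

```python
# Standard-usage Opus rates quoted above, in USD per million tokens.
# Assumption: long-context (beta) requests may be priced differently; confirm current pricing.
INPUT_USD_PER_MTOK = 5.00
OUTPUT_USD_PER_MTOK = 25.00


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of one model call at the standard rates."""
    return (input_tokens * INPUT_USD_PER_MTOK + output_tokens * OUTPUT_USD_PER_MTOK) / 1_000_000


def daily_cost(calls_per_day: int, input_tokens: int, output_tokens: int) -> float:
    """Cost of repeating the same call shape every day, before any retries."""
    return calls_per_day * request_cost(input_tokens, output_tokens)


if __name__ == "__main__":
    print(f"${request_cost(900_000, 4_000):.2f}")   # stuffed 900K-token working set
    print(f"${request_cost(100_000, 4_000):.2f}")   # trimmed 100K-token working set
    print(f"${daily_cost(20, 900_000, 4_000):.2f}")  # twenty stuffed calls per day
```

At these rates, a call that re-sends a 900K-token working set and returns 4K tokens costs about $4.60. Trimming the same call to a 100K-token working set brings it to $0.60. Twenty of the big calls a day comes to roughly $92 before a single retry.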

Reality check: Agentic workflows don’t call the model once. They call it, critique it, retry it, and sometimes argue with themselves. If you don’t budget and cap retries, you’re basically giving your automation a corporate Amex.
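Here’s a minimal sketch of that cap: stop on success, on a retry limit, or once spend hits a budget. The `attempt` callable is a hypothetical wrapper around one model call that reports success and token counts. The default rates mirror the standard-usage prices above.

```python
from typing import Callable


class BudgetExceeded(Exception):
    """Raised when cumulative spend reaches the budget before the task succeeds."""


def run_with_caps(
    attempt: Callable[[int], tuple[bool, int, int]],
    max_retries: int,
    budget_usd: float,
    input_usd_per_mtok: float = 5.00,
    output_usd_per_mtok: float = 25.00,
) -> tuple[bool, int, float]:
    """Retry `attempt` until it succeeds, retries run out, or spend reaches the budget.

    `attempt(i)` is a hypothetical wrapper around one model call (i = attempt index)
    that returns (succeeded, input_tokens, output_tokens).
    Returns (succeeded, attempts_made, total_spend_usd).
    """
    spent = 0.0
    for i in range(max_retries + 1):  # the first try plus up to max_retries retries
        ok, tok_in, tok_out = attempt(i)
        spent += (tok_in * input_usd_per_mtok + tok_out * output_usd_per_mtok) / 1_000_000
        if ok:
            return True, i + 1, spent
        # Checked after each call, so the final call can overshoot by up to one call's cost.
        if spent >= budget_usd:
            raise BudgetExceeded(f"spent ${spent:.2f} after {i + 1} attempts")
    return False, max_retries + 1, spent
```

Since the budget check runs after each call, the last call can push spend past the budget by up to one call’s cost. If that matters, estimate the next call’s cost before making it.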

What’s ready now vs. what’s still preview energy

| Capability | What’s real today | What to pressure-test |
|---|---|---|
| Opus 4.6 core model | Usable as a primary model in Claude-based workflows | Quality drift vs. 4.5 on your specific tasks |
| 1M context (beta) | Available with access gating and beta enablement | Latency, rate limits, and cost at scale |
| Agent Teams | Research preview in Claude Code for parallel workflows | Governance, auditability, and safe tool permissions |
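For the “quality drift” column, the cheapest pressure test is a fixed task set scored the same way for both models. Here’s a minimal sketch, assuming hypothetical `baseline` and `candidate` wrappers around your 4.5 and 4.6 calls and deterministic pass/fail checks you define per task.

```python
from typing import Callable

# A task pairs a prompt with a deterministic pass/fail check on the output (hypothetical).
Task = tuple[str, Callable[[str], bool]]


def pass_rate(generate: Callable[[str], str], tasks: list[Task]) -> float:
    """Fraction of tasks whose generated output passes its check."""
    passed = sum(1 for prompt, check in tasks if check(generate(prompt)))
    return passed / len(tasks)


def drift_report(
    baseline: Callable[[str], str],
    candidate: Callable[[str], str],
    tasks: list[Task],
    tolerance: float = 0.02,
) -> dict:
    """Score baseline (e.g. Opus 4.5) and candidate (e.g. Opus 4.6) on the same tasks.

    A pass-rate drop larger than `tolerance` is flagged as a regression.
    """
    base = pass_rate(baseline, tasks)
    cand = pass_rate(candidate, tasks)
    return {"baseline": base, "candidate": cand, "regression": (base - cand) > tolerance}
```

Deterministic checks (required numbers present, banned punctuation absent, length limits) won’t capture everything. They do catch regressions on the rules you actually enforce.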

The operational implication: fewer handoffs, more throughput

When you combine massive context with parallel agent teams, you get a specific kind of leverage: fewer “human glue” moments.

That matters because most organizations aren’t blocked by creativity. They’re blocked by:

- finding the latest approved truth

- keeping output consistent across channels

- review cycles and version confusion

- manual packaging (formatting, tagging, exporting, uploading)

Opus 4.6’s direction suggests Anthropic is building Claude into a workflow substrate, not just a writing engine: longer memory, better coordination, more automation-native primitives.

What to watch before you standardize on it

This release is meaningful, but there are still “don’t be delusional” checks teams should run.

1) Context does not equal truth

Stuffing a million tokens into a model doesn’t guarantee it will pick the right policy line, the right pricing number, or the right disclaimer. Long context improves recall potential. It doesn’t replace verification.
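Verification can start small. Here’s a minimal sketch that flags any dollar amount in model output that isn’t in an approved pricing registry. The regex is simplified, and the registry is a hypothetical set you maintain outside the model.

```python
import re

# Simplified pattern (assumption): matches $49, $1299, $1,299, $19.99; other formats are not covered.
PRICE_PATTERN = re.compile(r"\$\d+(?:,\d{3})*(?:\.\d{2})?")


def unverified_prices(text: str, approved: set[str]) -> list[str]:
    """Dollar amounts in model output that do not appear in the approved pricing registry.

    `approved` is a hypothetical registry such as {"$49", "$99"}, kept outside the model.
    """
    return [p for p in PRICE_PATTERN.findall(text) if p not in approved]
```

The comparison is exact string matching, so “$1,299” and “$1299” are different entries unless you normalize them first. It doesn’t prove the copy is right, but it turns “the model probably used the right price” into a check that fails loudly.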

2) Parallel agents can parallelize mistakes

If one agent hallucinated a claim and another agent formatted it beautifully, congratulations: you just shipped a high-quality error faster than ever.

3) The real maturity test is observability

To run Agent Teams in production, teams will need logs, receipts, and clear “who did what” traces, especially when agents can touch tools, code, or publishing systems.
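Here’s a minimal sketch of those “who did what” receipts: an append-only log where every agent action records the agent, the action, the tool it touched, and SHA-256 hashes of its input and output. The agent names, actions, and in-memory storage are hypothetical. A production version would write to durable, access-controlled storage.

```python
import hashlib
import json
import time
from dataclasses import asdict, dataclass


def sha256(text: str) -> str:
    """Hex SHA-256 of UTF-8 text, used as a receipt instead of storing raw content."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass
class TraceRecord:
    agent: str          # which sub-agent acted (hypothetical names)
    action: str         # e.g. "draft", "validate", "publish"
    tool: str           # tool or system touched; "" if none
    input_sha256: str
    output_sha256: str
    ts: float           # Unix timestamp


class AuditLog:
    """Append-only, in-memory trace; production would use durable, access-controlled storage."""

    def __init__(self) -> None:
        self._lines: list[str] = []  # one JSON object per line (JSONL)

    def record(self, agent: str, action: str, tool: str, inp: str, out: str) -> TraceRecord:
        rec = TraceRecord(agent, action, tool, sha256(inp), sha256(out), time.time())
        self._lines.append(json.dumps(asdict(rec), sort_keys=True))
        return rec

    def by_agent(self, agent: str) -> list[dict]:
        """Answer "who did what": every record for one agent, oldest first."""
        return [r for r in map(json.loads, self._lines) if r["agent"] == agent]

    def to_jsonl(self) -> str:
        return "\n".join(self._lines)
```

Hashing rather than storing raw text keeps sensitive drafts out of the log. You can still prove which output a given agent produced, and matching input hashes show two agents worked from the same draft.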

If you’re already building toward that style of governance, our earlier piece on cost and reuse patterns is relevant: “Semantic Caching: The Unsung Hero of AI Pipelines.”

Bottom line

Claude Opus 4.6 is a real signal that the “AI coworker” era is evolving into the “AI team” era. The 1M token context window (beta) attacks one of the most stubborn blockers in scaled automation: context loss. Agent Teams attacks the next one: sequential bottlenecks.

For marketing, content, and automation leaders, the main takeaway is pragmatic: this is the kind of release that can reduce handoffs, preserve intent across long projects, and make multi-step creative production more automatable if you pair it with cost controls, verification layers, and sane governance.

It’s not magic. But it’s closer to “plug it into the workflow and ship” than most model launches manage to be.