Google Gemini Adds AI Music Generation With DeepMind’s Lyria 3

February 19, 2026

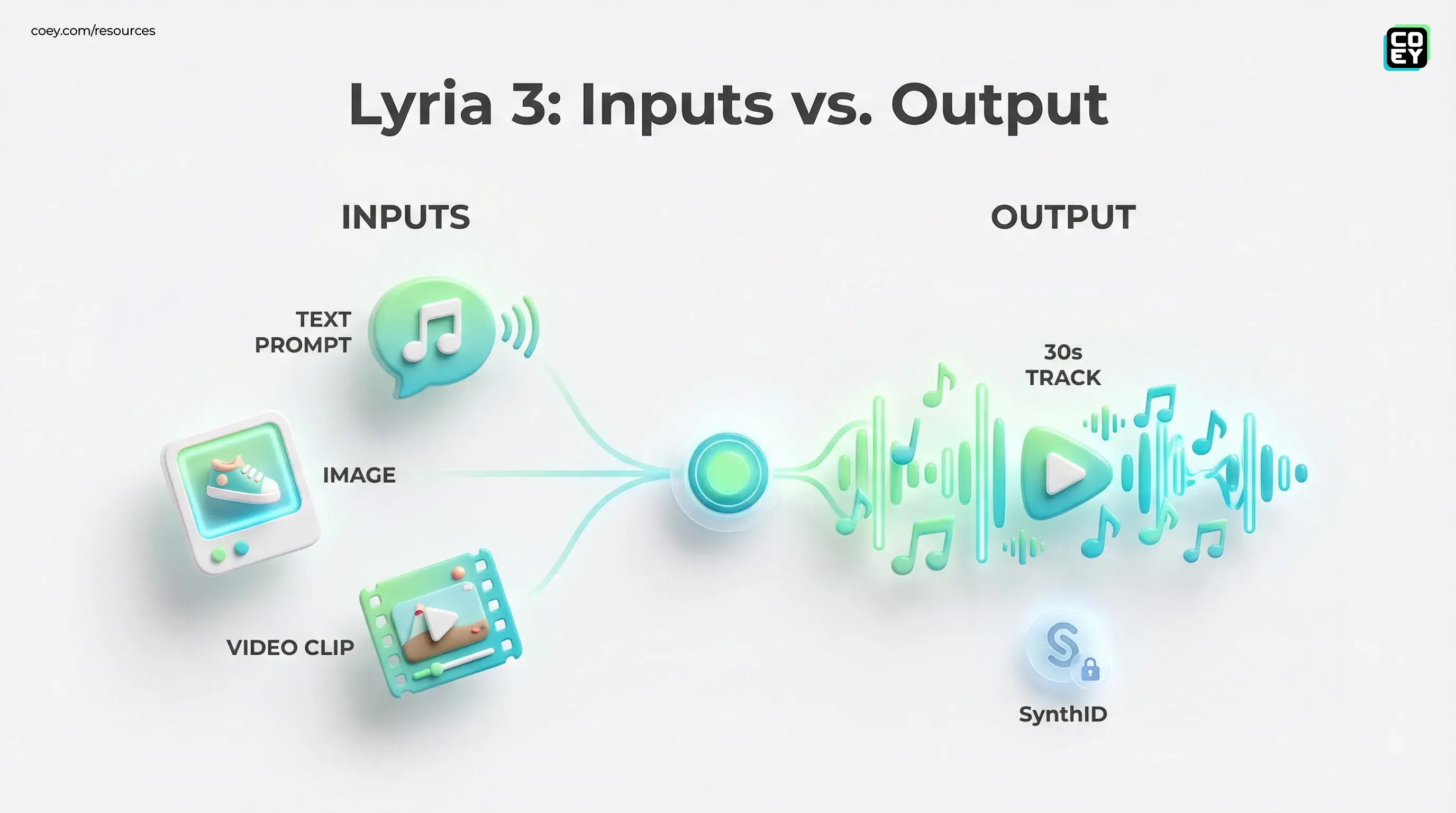

Google just put AI music generation inside the Gemini app, powered by DeepMind’s Lyria 3. Anyone with access can spin up a 30-second track from a text prompt, or even from an image or video. Google has published an official product write-up, and the feature ships with SynthID watermarking baked in.

This is one of those updates that sounds like a cool creator toy until you zoom out and realize what Google is actually doing: turning audio into the same kind of fast, modular, promptable asset that images became last year. For marketing teams living inside Reels, Shorts, and TikTok timelines, it is a direct shot at the last annoying bottleneck in short-form production: getting music that fits the vibe, clears rights, and does not require an email chain with “music options v7 FINAL final” in the subject line.

What actually shipped in Gemini

Lyria 3 in Gemini generates short music clips (30 seconds) designed for modern attention spans and ad formats. The inputs are the real headline:

- Text-to-music: “catchy summer jingle with whistles,” “dark cyberpunk trap intro,” etc.

- Image-to-music: upload a product photo, event shot, or mood board frame; Gemini composes to match.

- Video-to-music: drop a clip and generate a music bed that tries to align to pacing and energy.

It also generates cover art for each track. That is useful for quick sharing and organization, and less useful if you are a brand that already has a design system and does not want random AI art attached to everything.

Google frames it as broad and global: the feature is available in the Gemini app for users 18+ and at launch supports eight languages: English, German, Spanish, French, Hindi, Japanese, Korean, and Portuguese. Outputs can be instrumentals and can also include vocals and auto-generated lyrics.

Translation: Gemini is now trying to be the “everything box” for quick campaign assembly, with text, images, video, and now audio inside one consumer workflow.

Why this matters to marketers (not just musicians)

Most marketing orgs do not have a music pipeline. They have a music problem. It shows up at the worst possible time: the edit is ready, the deadline is in two hours, and you are still digging through stock libraries or reusing the same safe-but-boring track your audience has already heard 400 times.

Lyria 3 changes the default move from “search” to “generate.”

That is not just a convenience. It changes how teams iterate:

- More creative testing: test the same video with different music vibes without licensing friction.

- Faster localization: prompt in-market language and style, useful for region-specific campaigns.

- Less sameness: stock music creates the same sonic wallpaper across brands; custom generations help you stand out.

And because it is embedded in Gemini, already used for copy, ideation, and creative iteration, it is positioned where marketers actually work instead of in yet another niche music tool no one wants to learn.

Multi-modal prompting is the real upgrade

Text-to-music is table stakes now. The more operationally interesting bit is image and video conditioning.

If Gemini can reliably “read” a brand visual and return a track that matches (bright vs moody, luxury vs playful, fast cuts vs slow pans), you get a new automation-adjacent capability: music generation becomes downstream of your existing asset pipeline.

In other words: the video (or product image) becomes the brief.

That is the human plus machine collaboration sweet spot COEY cares about:

- Humans: choose the story, pick the visuals, define the brand vibe.

- Machine: generates ten audio directions instantly so humans can pick, refine, and ship.

SynthID for audio: provenance becomes a feature

Every track generated with Lyria 3 is embedded with SynthID, Google’s imperceptible watermarking system. DeepMind maintains a public SynthID overview.

Gemini also supports detection: users can upload audio and ask whether it contains a SynthID watermark indicating it was generated with Google’s AI tools.

This is not just ethics theater. It is operational:

- Brand safety: teams can track what is AI-generated in their asset library.

- Compliance workflows: required AI-disclosure rules are easier to implement when you can detect origin.

- Receipts culture: provenance becomes part of the creative supply chain, not a last-minute spreadsheet note.

Reality check: SynthID detection is most useful inside Google’s ecosystem. It is not an industry-wide AI music detector for every model on earth. It is provenance for Google-generated media.

API and automation: what you can (and can’t) do

Here is the grown-up question executives ask: can this plug into production workflows?

Right now, Lyria 3 music generation is positioned as an in-app Gemini capability. That means it is excellent for:

- social teams producing daily content

- creative directors prototyping sonic directions

- agencies making quick iterations during approvals

But it is weaker for true automation at scale:

- No standalone “music generation API” for Lyria 3 is publicly documented the way Google documents its main Gemini API endpoints.

- No published batch pipeline feature for automatically generating and routing large sets of tracks into a DAM with metadata and approvals.

So today, “automation” looks like:

- generate in Gemini

- download or export

- drop into Premiere, CapCut, or your editor

- store in Drive or DAM manually

Still valuable. Just not the hands-free factory line version yet.
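Until a documented API exists, the manual steps above can at least be logged consistently. Here is a minimal sketch, assuming tracks have already been downloaded by hand into a local folder. The function names, manifest fields, and file layout are hypothetical and not part of any Google tooling. The script does not call Gemini or check for a SynthID watermark; it only records a file hash alongside the prompt you used.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_entry(path: Path, prompt: str, campaign: str) -> dict:
    """Describe one manually downloaded track (field names are illustrative)."""
    return {
        "file": path.name,
        "sha256": sha256_of(path),
        "prompt": prompt,
        "campaign": campaign,
        "source": "Gemini app (manual download)",
        "logged_at": datetime.now(timezone.utc).isoformat(),
    }


def append_to_manifest(manifest: Path, entry: dict) -> list:
    """Append an entry to a JSON manifest, skipping files already logged by hash."""
    entries = json.loads(manifest.read_text()) if manifest.exists() else []
    if not any(e["sha256"] == entry["sha256"] for e in entries):
        entries.append(entry)
        manifest.write_text(json.dumps(entries, indent=2))
    return entries
```

A team could run `append_to_manifest(Path("manifest.json"), build_entry(track, prompt, campaign))` after each download. It is still a manual step, but it leaves a record of which prompt produced which file.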

Readiness table

| What teams want | What Gemini delivers | Automation readiness |
|---|---|---|
| Fast custom music for short-form | 30-second tracks from text, image, or video | High (manual) |
| Provenance and auditability | SynthID watermark plus detection support | Medium-High |
| Pipeline integration (batch, triggers) | Primarily app-based generation | Low (for now) |

What’s hype vs what’s operational

The hype version: everyone becomes a composer.

The operational version: music becomes a configurable asset class, like copy variations and thumbnails, where you can generate lots of options quickly and let humans choose what fits.

What looks real today:

- Short-form fit: output length matches real social specs.

- Multi-modal direction: music can be guided by your actual visuals.

- Provenance embedded: SynthID lowers org-level anxiety about “what even is this file?”

What to stay skeptical about:

- Brand uniqueness: if everyone prompts “uplifting indie pop,” you will still get sameness, just AI sameness instead of stock sameness.

- Vocal and lyric risk: vocals raise the stakes for sound-alike issues, cultural tone mistakes, and brand appropriateness.

- Workflow lock-in: app-only creation is great until you need scale, governance, and repeatability.

The bigger signal: creative stacks want audio parity

Google has been steadily building a multi-modal creation suite where each new modality (images, video, audio) comes with:

- consumer access first

- pro surfaces later

- provenance infrastructure as a default

Lyria 3 in Gemini continues that pattern. It also nudges the market toward a future where campaign assembly is increasingly prompt-driven and modular: visuals, voice, music, captions, cutdowns all generated as ingredients, then routed through humans for taste and approvals.

If the asset cannot be routed, logged, and governed, it is not automation. It is just speed. This launch is speed today with clear signs it is aiming at workflow infrastructure next.

Bottom line

Gemini adding Lyria 3 makes custom music for short-form fast, accessible, and provenance-tagged, exactly what modern marketing teams need when content velocity is the strategy. The automation ceiling is still low because the capability lives inside the Gemini app rather than a clearly exposed, production-friendly API. But even in UI-only form, it meaningfully compresses time-to-publish, expands creative testing, and reduces licensing friction.

For teams scaling human creativity with machine collaboration, this is a practical new collaborator: humans set intent and taste, Gemini produces breadth, and SynthID helps keep the whole thing auditable when the asset count starts to explode.

Related from COEY: For a broader look at how Google is pushing multi-modal creation inside Gemini, see Veo 3.1 Finally Ships True Vertical AI Video.