Sarvam AI Drops India-Focused LLMs (105B + 30B) and Yes, You Can Actually Use Them

Sarvam AI Drops India-Focused LLMs (105B + 30B) and Yes, You Can Actually Use Them

February 19, 2026

Sarvam AI just launched two India-first large language models, Sarvam 105B and Sarvam 30B, with positioning that enterprise buyers actually care about: Indic language performance, India-first deployment posture, and a clear path for developers. If you are tracking the rollout, start with Sarvam’s documentation because this is not only a model story, it is an automation story: Sarvam API Docs.

Under the hood, Sarvam is playing a specific game: build models tuned for India’s linguistic reality while making them practical for population-scale workflows where latency, data boundaries, and cost are not footnotes.

What Sarvam actually launched

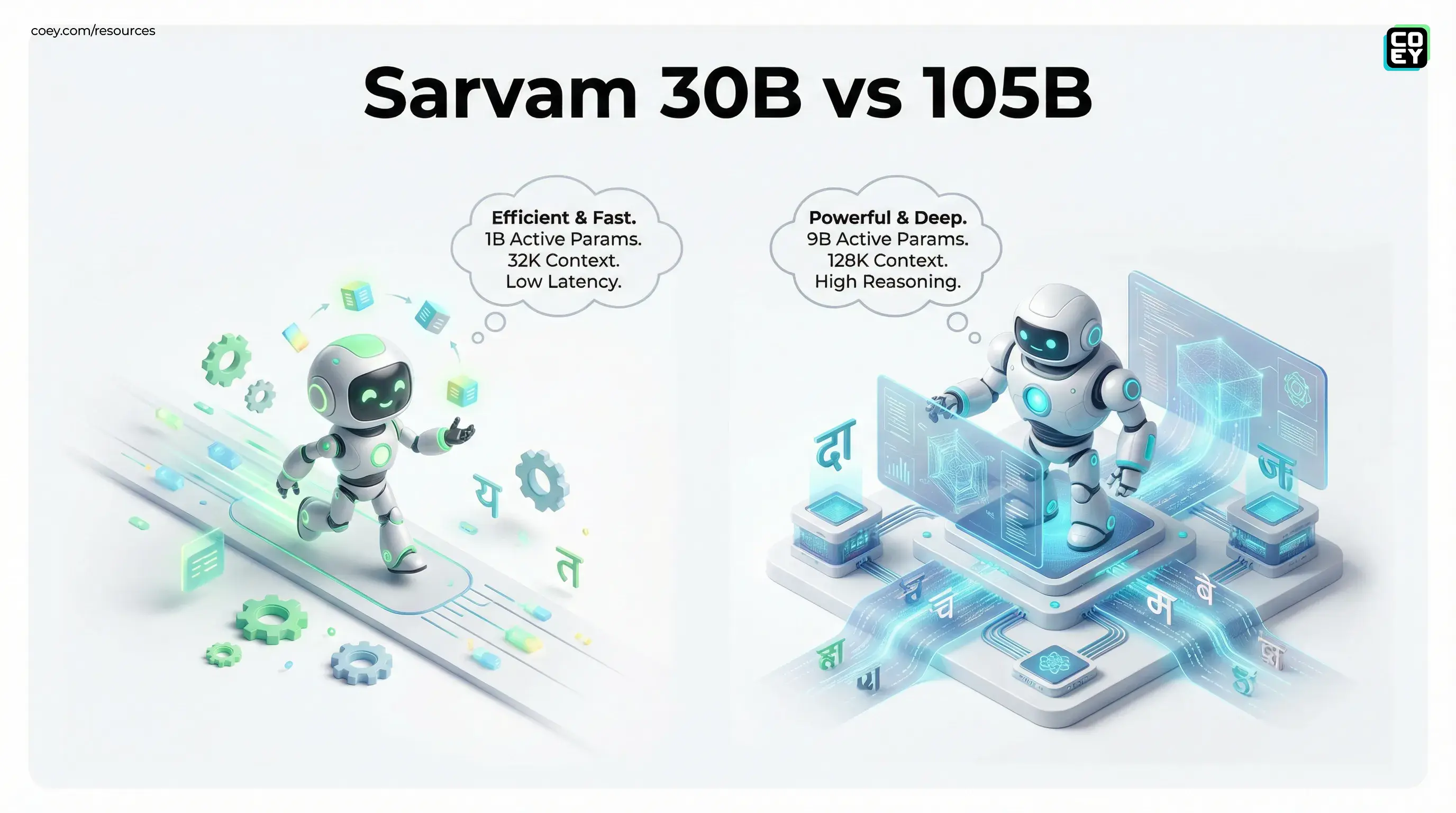

Sarvam introduced two new foundation models aimed at deployment across Indian enterprises and platforms: a 105B parameter model and a 30B parameter model. Reporting describes both as Mixture of Experts (MoE) models, meaning only a subset of parameters are active per token.

Reported details include:

- Sarvam 30B: about 1B active parameters per token, 32K token context

- Sarvam 105B: about 9B active parameters per token, 128K token context

Business Standard’s coverage frames the 105B as the flagship, with the 30B optimized for lighter deployments and cost control: Business Standard.

Moneycontrol also reports Sarvam’s claim that Sarvam 105B outperforms DeepSeek R1 and Gemini Flash on select benchmarks, which should be treated as directional until you reproduce results on your own tasks: Moneycontrol.

Why the Indic language angle is more than branding

Most “multilingual” models still behave like English-native systems that happen to support other languages. Teams working across Hindi, Tamil, Telugu, Bengali, Marathi, Kannada, Gujarati, Malayalam, Punjabi, plus code mixed and romanized realities, run into the same problems: awkward phrasing, missing cultural context, and outputs that feel like translations.

Sarvam’s pitch is that these models are tuned for Indian language usage as it is actually written and spoken, including mixed-script and romanized inputs that show up constantly in commerce and support.

The deployment story: sovereignty, latency, and data boundaries

Sarvam is also leaning into India-first infrastructure narratives. For many enterprises, the practical value is straightforward: lower latency and fewer data residency complications in regulated categories.

API availability: can it automate, or is it a walled garden?

Sarvam’s advantage may be the least glamorous: developer access that maps to standard automation patterns. Their documentation shows the usual platform basics, authentication, endpoints, and common workflow capabilities.

As of Feb 19, 2026, the public docs are clear about the broader API surface area, even if the new 30B and 105B model selectors are still rolling out in productized form. The safest starting point for implementation planning remains the core documentation: Sarvam API Docs.

From an executive perspective, “API access” means you can plug these capabilities into your stack, trigger them from events, and monitor outputs like any other system.

Where this becomes real for marketing, media, and support

The most immediate use cases are operational and high volume.

Multilingual campaign engines without translation voice

Instead of writing in English, translating, then repairing tone and cultural references, teams can push toward native-first variants and keep humans focused on creative direction and review.

Support automation across chat and voice

For businesses supporting customers in multiple languages, an India-tuned model family is directly relevant to routing, drafting, summarization, and QA across channels.

Media ops at population scale

Long context models matter when you need structured derivatives from full articles, transcripts, or long documents: summaries, titles, metadata, and localized scripts, with humans retaining editorial control.

Pragmatic caveats

- Benchmark claims vs your domain: pilot on your own data

- Cost transparency: MoE can help efficiency, but you still need predictable throughput and rate limits

- Safety and compliance: guardrails, redaction, logging, and policy enforcement decide whether this ships

- Ecosystem maturity: the best model is the one your team can integrate and operate without heroics

What to watch next

Sarvam’s move matters because it pushes the market toward a more practical definition of readiness in India: language fidelity plus infrastructure fit plus API-first automation. If Sarvam follows through on stable developer experience, enterprise-grade reliability, and predictable economics, these models will be less about headline parameters and more about workflow leverage.