smallest.ai Beta-Launches Hydra: Speech-to-Speech Voice Agents Get Serious

March 4, 2026

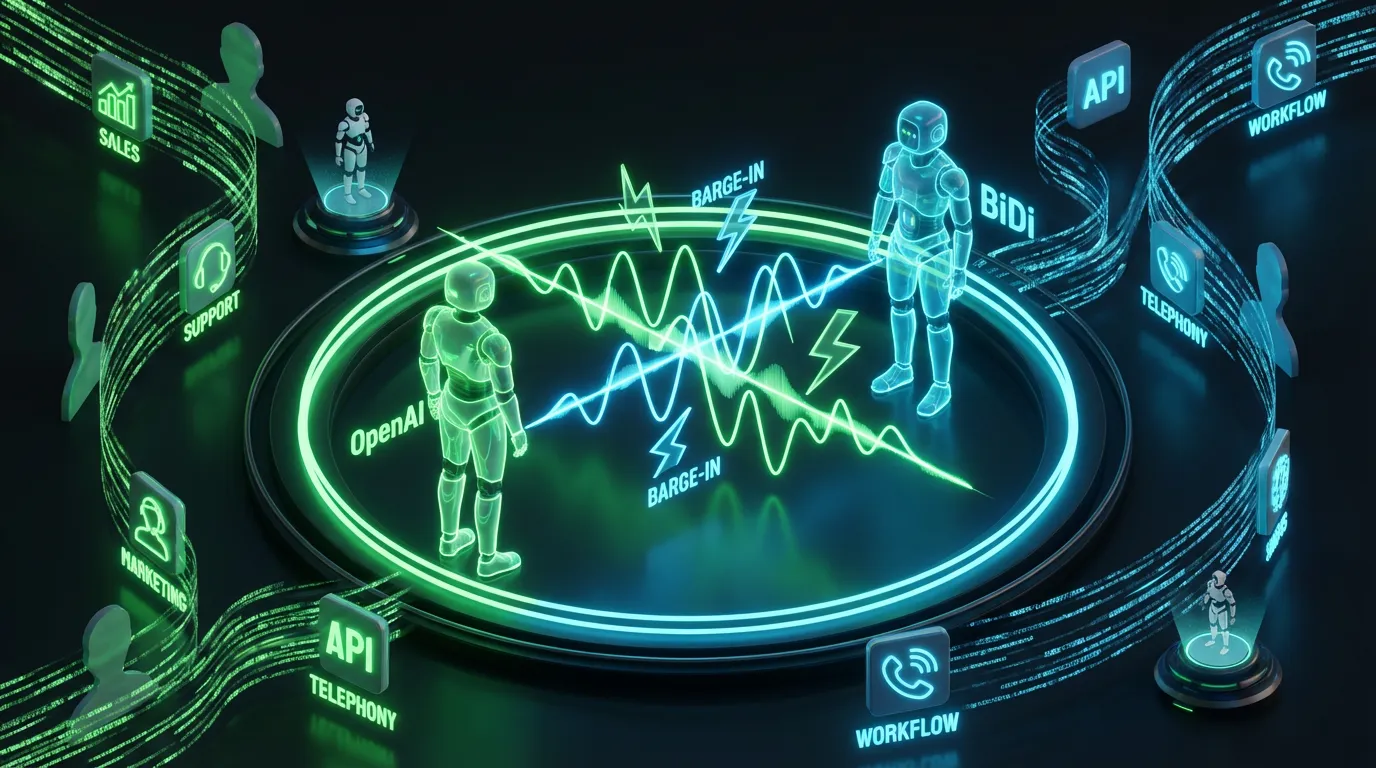

smallest.ai just beta-launched Hydra, a native speech-to-speech model built for real conversation: it listens and responds in the audio domain, fast enough to overlap like a human, and ambitious enough to call tools mid-utterance. If you have been living through the awkward era of voice bots that wait for silence like they are politely raising a hand in Zoom, Hydra is a clear signal: voice is finally moving from "demo vibe" to "workflow component."

What Hydra actually is

Hydra is smallest.ai’s bet on a voice stack that stops acting like a Rube Goldberg machine. Most voice AI still runs a three-step relay race: speech-to-text (ASR) → text reasoning (LLM) → text-to-speech (TTS). It works, but it also:

- adds latency at every handoff

- flattens tone and intent into text

- creates turn-taking that feels like talking to a drive-thru menu

Hydra’s positioning is different: speech in, speech out, staying audio-native so it can preserve cues like pacing, hesitation, urgency, and emotional tone. smallest.ai describes the experience as asynchronous and full-duplex style, meaning it can start responding while you are still talking, rather than waiting for the clean end-of-turn pause that humans rarely provide in real conversation.

Translation: Hydra is not trying to be a better voicemail box. It is trying to be a conversational system that behaves like a colleague: fast, interruptible, and context-aware.

Why this launch matters now

Voice is going through the same evolution text workflows went through earlier: first came "wow, it generates," then came "cool, can we ship it?", and now we are in the "can we run it reliably inside operations?" phase. Hydra matters because it targets the boring stuff that determines adoption:

- Latency (if it pauses, people bail)

- Turn-taking (if it cannot handle interruption, it is not a conversation)

- Automation hooks (if it cannot trigger actions, it is just talking)

smallest.ai claims sub-300ms response latency in its release materials, fast enough to feel instant to most users, and fast enough to make overlapping speech feel natural instead of chaotic.

What asynchronous actually changes

Hydra’s biggest UX promise is that it can listen, reason, and respond simultaneously. That is not just a flex. It is the difference between:

- Voice as interface (talking to a system to get something done)

- Voice as performance (talking to a system to see if it can talk back)

In practical terms, asynchronous speech-to-speech is how you get:

- backchannels ("got it," "one sec," "mm-hmm") that reduce perceived lag

- barge-in handling (you interrupt it, it does not collapse)

- midstream intent detection (it can act before you finish the sentence)

This is where voice starts behaving like a real-time medium again, not a series of audio emails.
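To make barge-in handling concrete, here is a minimal sketch of the control flow: playback and listening run concurrently, and playback stops as soon as the listener detects speech. Everything in it is a stand-in and an assumption for illustration. The `speak`, `listen`, and `run_turn` names are hypothetical, the "audio" is a list of words, and the "microphone" is a list of labeled frames. It does not use or describe Hydra's actual API.

```python
import asyncio

async def speak(chunks, played, stop):
    """Simulated playback: emit reply chunks until the user barges in."""
    for chunk in chunks:
        await asyncio.sleep(0)      # yield so the listener can run between chunks
        if stop.is_set():           # user started talking: stop mid-reply
            return
        played.append(chunk)

async def listen(mic_frames, stop):
    """Simulated microphone: flag a barge-in on the first frame with speech."""
    for frame in mic_frames:
        await asyncio.sleep(0)      # yield so playback can continue meanwhile
        if frame == "speech":
            stop.set()
            return

async def run_turn(chunks, mic_frames):
    """Run playback and listening concurrently; report what was actually said."""
    played, stop = [], asyncio.Event()
    await asyncio.gather(speak(chunks, played, stop), listen(mic_frames, stop))
    interrupted = len(played) < len(chunks)
    return played, interrupted

if __name__ == "__main__":
    reply = ["Your", "order", "shipped", "on", "Monday"]
    # The user interrupts early, so only a prefix of the reply is played.
    print(asyncio.run(run_turn(reply, ["silence", "speech"])))
    # The user stays quiet, so the whole reply is played.
    print(asyncio.run(run_turn(reply, ["silence"] * 3)))
```

The design point is that the reply is never treated as one uninterruptible unit: the agent checks for user speech between small chunks, which is what keeps an interruption from turning into two voices talking over each other.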

Automation potential: tool calling is the point

Hydra’s most operationally interesting claim is mid-utterance tool calling, the ability to kick off external actions while the user is still speaking. That is a big deal because it shifts voice from front-end chat to workflow trigger. Think:

- "Book me a demo with Jordan next week": a calendar lookup starts immediately

- "What is the status on order 48192?": a CRM or order query begins before the sentence ends

- "Send this to the team and tag it urgent": a Slack or Teams draft plus priority routing triggers mid-turn

Done right, this reduces dead air and makes voice agents feel proactive instead of reactive. Done wrong, it becomes a chaos button. The difference is system design: confirmation steps, permissioning, and safe modes for high-impact actions.

Reality check: tool calling is only ready when you can control it: rate limits, retries, permissions, audit logs, and a clean handoff to humans when confidence is low.
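As a rough illustration of what "controllable" can mean, the sketch below wraps every tool call in a gate that checks permissions, requires explicit confirmation for high-impact actions, retries failures, and writes each decision to an audit log. The `Tool` and `ToolGate` names, the status strings, and the example tools are all hypothetical and not part of any smallest.ai SDK. Rate limiting is left out to keep the sketch short.

```python
from dataclasses import dataclass, field
from typing import Any, Callable

@dataclass
class Tool:
    name: str
    run: Callable[..., Any]
    high_impact: bool = False       # e.g. changes an account or moves money

@dataclass
class ToolGate:
    allowed: set                    # tool names this agent may call
    max_retries: int = 2            # extra attempts after the first failure
    audit_log: list = field(default_factory=list)

    def _record(self, tool, args, status, **extra):
        """Append one audit entry per decision and return it to the caller."""
        entry = {"tool": tool.name, "args": args, "status": status, **extra}
        self.audit_log.append(entry)
        return entry

    def call(self, tool, confirmed=False, **args):
        if tool.name not in self.allowed:
            return self._record(tool, args, "denied")
        if tool.high_impact and not confirmed:
            # Do not run yet: the agent should ask the user to confirm first.
            return self._record(tool, args, "needs_confirmation")
        error, attempts = None, 0
        for attempts in range(1, self.max_retries + 2):
            try:
                result = tool.run(**args)
            except Exception as exc:
                error = repr(exc)   # remember the last failure, then retry
                continue
            return self._record(tool, args, "ok", result=result, attempts=attempts)
        return self._record(tool, args, "failed", error=error, attempts=attempts)

if __name__ == "__main__":
    gate = ToolGate(allowed={"order_status", "refund"})
    # Placeholder tools with made-up return values, for illustration only.
    lookup = Tool("order_status", lambda order_id: {"order_id": order_id, "state": "shipped"})
    refund = Tool("refund", lambda order_id: "refunded", high_impact=True)
    print(gate.call(lookup, order_id="48192")["status"])
    print(gate.call(refund, order_id="48192")["status"])
    print(gate.call(refund, confirmed=True, order_id="48192")["status"])
```

A read-only lookup can safely start mid-utterance, while the refund is held until the user confirms. That split is one way to get the speed benefit without handing the model a chaos button.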

API reality: developer surfaces exist

Hydra is being positioned as something developers can embed, not just try. smallest.ai’s primary public entry point for Hydra is its product page and beta access flow: smallest.ai speech-to-speech.

However, the two GitHub repository links previously referenced for Python and Node SDKs could not be validated as active public repositories under those exact URLs. To avoid sending readers to potentially broken or renamed repos, they have been removed from this post.

For non-technical teams, here is what the developer posture means in plain language: if it is callable, it is automatable. Voice stops being a standalone experience and starts becoming a component you wire into:

- your web app or mobile app

- your call flows (telephony layer plus streaming audio)

- your internal tools (support dashboards, sales enablement, knowledge bases)

- your orchestration stack (queues, webhooks, workflow engines)

Automation readiness snapshot

| Question | Hydra (beta) signal | What it means for teams |
|---|---|---|
| Is it speech-to-speech (native)? | Yes | Lower latency plus fewer components than ASR → LLM → TTS |
| Can we integrate programmatically? | Likely yes (developer surfaces exist) | Build voice agents as part of your product and workflows |
| Can it trigger actions mid-convo? | Claimed yes | Voice becomes a command layer, not just conversation |
| Is it enterprise-ready today? | Not fully proven (beta) | Pilot in bounded use cases and keep a human fallback |

Where Hydra is ready (and where it is spicy)

Speech-to-speech models are having a moment, and yes, a lot of it is shiny. Hydra’s framing is more production-shaped than most, but it is still beta, so the smartest move is to match it to workflows where speed matters and risk is containable.

High-confidence early deployments

- Inbound triage: capture intent, route correctly, summarize cleanly

- FAQ plus status lookups: order status, appointment scheduling, basic troubleshooting

- Lead qualification: collect fields quickly, push into CRM, schedule follow-up

- Creator workflows: voice-based brainstorming, outlining, and rapid iteration, especially when hands-free matters

Needs guardrails (do not YOLO this)

- Billing disputes plus cancellations: emotional plus policy-heavy equals brand risk

- Regulated advice: health, finance, legal without strict policy enforcement

- Transactions and permissions: anything that changes accounts or moves money needs verification

The model is not the system. Voice automation becomes real when you add permissions, escalation, monitoring, and a kill switch, because the weirdest failure always appears during your highest-volume day.
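One way to picture the escalation and kill-switch layer is as a small routing function that sits in front of the model. The sketch below is an assumption-heavy illustration: the `Guardrails` fields, the 0.7 confidence threshold, and the topic labels are hypothetical placeholders, and a real deployment would get topic and confidence from its own classifiers.

```python
from dataclasses import dataclass

@dataclass
class Guardrails:
    kill_switch: bool = False       # flipped by an operator to stop automation
    min_confidence: float = 0.7     # placeholder threshold, tune per use case
    regulated_topics: frozenset = frozenset({"health", "finance", "legal"})

def route(topic, confidence, guardrails):
    """Decide who handles the turn and give a reason that can be logged."""
    if guardrails.kill_switch:
        return "human", "kill switch is on"
    if topic in guardrails.regulated_topics:
        return "human", "regulated topic"
    if confidence < guardrails.min_confidence:
        return "human", "low confidence"
    return "agent", "within guardrails"

if __name__ == "__main__":
    g = Guardrails()
    print(route("order_status", 0.92, g))
    print(route("finance", 0.99, g))
    print(route("order_status", 0.92, Guardrails(kill_switch=True)))
```

The checks run in order of severity, so the kill switch wins over everything else, and every decision carries a reason string that can feed monitoring.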

The strategic signal: voice is becoming programmable

Hydra fits a bigger market pattern: voice is splitting into two serious lanes.

- Hosted voice platforms (fast to integrate, less control)

- Model-first voice components (more control, more engineering)

Hydra looks like it wants to be the bridge: audio-native quality with a developer-first integration posture. If that holds, it becomes less of a "new voice demo" and more of a "voice primitive" you can build on.

If you want a strong internal comparison point in the same real-time, speech-to-speech direction, see our earlier COEY coverage: PersonaPlex-7B Brings Full-Duplex Voice Agents to Open Source (and That’s a Big Deal).

Bottom line

Hydra’s beta launch is a real step toward voice agents that can plug into workflows, not just perform in a demo. The most meaningful part is not that it sounds natural. It is the combination of low-latency speech-to-speech, interruption-friendly conversation flow, and the ambition toward mid-utterance tool calling, the feature that turns a voice model into an automation layer.

Keep it grounded: it is beta, and production readiness will come down to reliability under load, controllable tool execution, and the unglamorous plumbing (logging, fallbacks, escalation). But if smallest.ai delivers on the API-driven, workflow-native promise, Hydra is exactly the kind of human plus machine collaboration primitive that helps teams scale creativity without scaling grind.