OpenAI’s “BiDi” leak signals full-duplex voice

OpenAI’s “BiDi” leak signals full-duplex voice

March 8, 2026

OpenAI is reportedly testing a new bidirectional audio model nicknamed “BiDi” that can listen and speak at the same time, basically removing the awkward “your turn / my turn” rhythm that makes most voice agents feel like a bad drive-thru intercom. The most credible public trail right now is still indirect: it’s being discussed via reporting and social chatter rather than a clean product page, but it lines up tightly with the direction OpenAI has already made official in its realtime voice stack.

If you want the closest official surface that maps to the same technical destination (low latency, interruption friendly voice agents), start with OpenAI’s Realtime API voice design guidance: https://platform.openai.com/docs/guides/realtime/voice-design.

What’s actually new here: not “AI can talk,” but AI can handle overlap, interruptions, backchannels (“mm-hmm”), mid-sentence pivots, without forcing silence like a Zoom moderator.

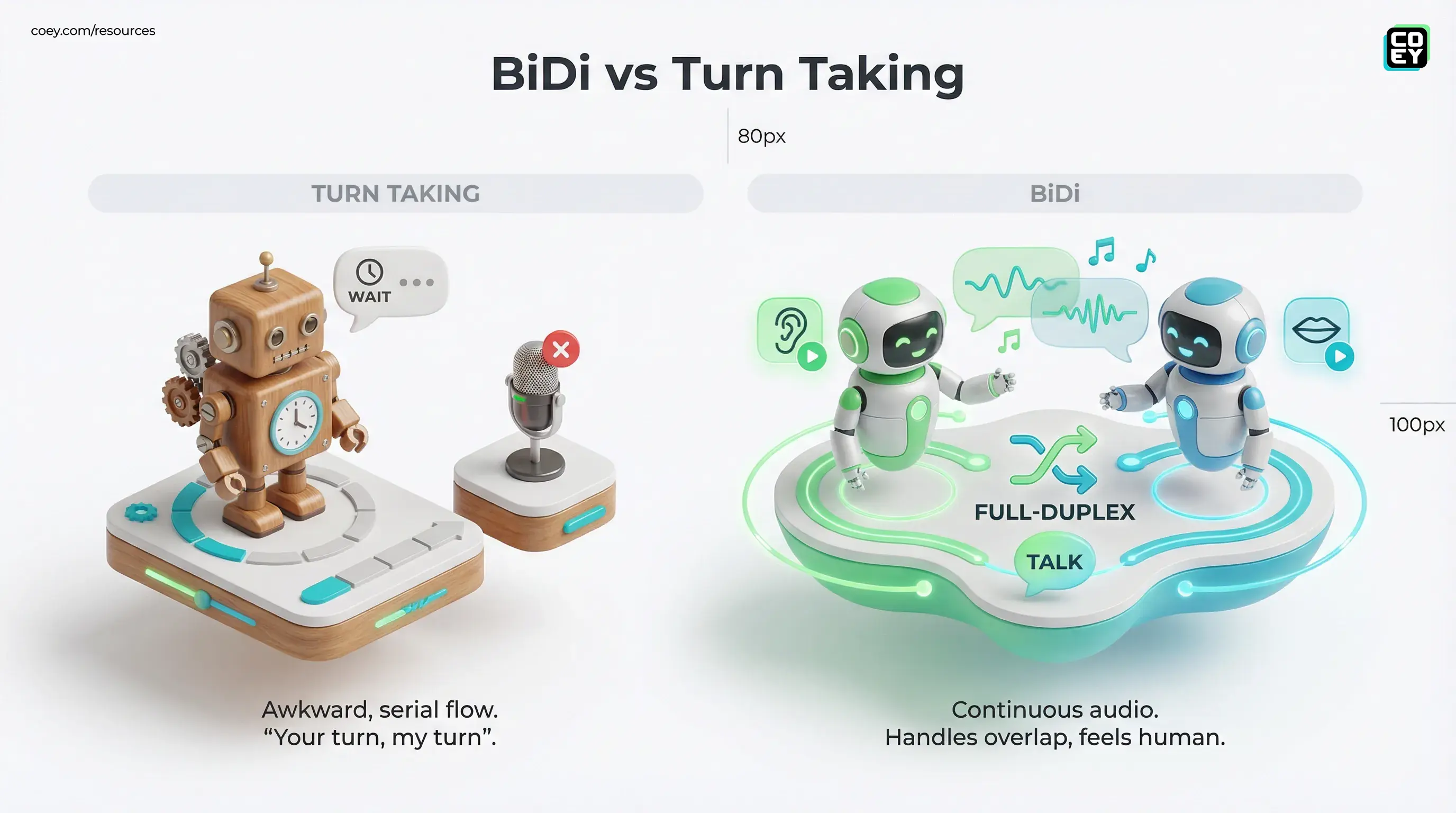

From turn-taking to overlap: why BiDi matters

Most production voice stacks are still “polite.” They wait for the user to stop talking, then do speech-to-text, then send text to an LLM, then run text-to-speech. Even when each piece is fast, the conversation feels slow, because humans don’t actually speak in clean, serialized turns.

BiDi’s reported goal is a full-duplex style interaction: continuous audio input while generating audio output. That means the model can:

- Yield instantly when the user cuts in (no finishing its monologue like a podcast host who won’t read the room).

- Adjust mid-utterance when the user clarifies (“No, I meant the Pro plan”).

- Use overlap as signal, backchannels and timing become part of “understanding,” not just text content.

This isn’t a minor UX tweak. It changes the base assumption for voice automation: the system no longer needs to “close” listening to start speaking. In workflow terms, that’s the difference between a bot that can handle calls and a bot that can handle conversations.

What we know vs what’s still rumor

Right now, “BiDi” is best treated as credible but not formally productized. Reporting and chatter describe it as a prototype that can glitch in longer sessions and is still being hardened for stability.

One write-up in Chinese tech press frames BiDi as OpenAI’s attempt to make voice interactions feel more human by handling interruptions and continuous speech processing: https://www.ithome.com/0/926/341.htm.

What is official: OpenAI has already shipped and iterated on production realtime voice primitives. OpenAI’s own post introducing gpt-realtime and Realtime API updates is explicit about low latency voice agents and tool (function) calling: https://openai.com/blog/introducing-gpt-realtime/.

So the pragmatic read is: BiDi looks like the next step in a roadmap OpenAI has already admitted it’s on. The name may change. The capability direction probably won’t.

API reality: can you automate this or is it locked?

Executives don’t need model gossip. They need the integration answer: Is this going to be callable?

Given OpenAI’s existing realtime architecture, the likely delivery vehicle is the Realtime API (WebRTC in-browser, WebSocket server-side), where the core automation hooks already exist.

If you want a COEY-level, workflow-first breakdown of what is already deployable in OpenAI’s realtime voice stack, start here: OpenAI Realtime Voice API Makes Agents Deployable.

- Streaming audio in and out with persistent sessions

- Function (tool) calling inside the conversation loop

- Event-driven control including interruption handling via realtime events, plus partial transcripts in transcription mode

For non-technical teams, translate that as: if it’s accessible through the same realtime endpoints, it’s automatable. It can become a component in your stack, connected to CRMs, ticketing systems, scheduling, order lookup, and analytics, rather than a standalone “voice bot product” you can only demo in a UI.

Automation readiness snapshot

| Question | Current best read | What it means for teams |

|---|---|---|

| Is full-duplex behavior real? | Prototype reports say “yes,” not GA | Pilot planning is reasonable; enterprise rollout needs patience |

| Is there an API path? | Likely via Realtime API surfaces (WebRTC or WebSocket) | Fits modern automation patterns (realtime session plus tools) |

| Can it trigger real actions? | Realtime stack supports tool (function) calling | Voice becomes “do work,” not “talk about work” |

| Is it production-ready today? | Not proven (prototype stability concerns) | Keep bounded use cases plus human handoff |

Where full-duplex voice actually changes the business

Voice agents have been “almost there” for years. The killer has been latency plus interruption handling. Fix that and suddenly a bunch of use cases become viable without users rage-hanging-up.

Sales: objection handling without dead air

Sales calls are interrupt-heavy. Prospects cut in, redirect, ask a question mid-pitch, correct assumptions. A turn-based bot fails here because it forces unnatural pauses. A full-duplex bot can:

- respond to objections in real time

- stop talking instantly when interrupted

- capture structured fields while still conversational (budget, timeline, use case)

Support: de-escalation is timing

In support, people interrupt because they’re stressed, not because they’re rude. Full-duplex improves the human feel (acknowledgments, faster clarification loops), which directly impacts containment rate and CSAT, if the knowledge and escalation logic is solid.

Marketing: voice becomes an input stream

Here’s the creator-to-creator angle: the real power isn’t “AI handles calls.” It’s that calls become structured content fuel.

- Objections turn into ad angles

- FAQs turn into landing page sections

- Feature confusion turns into onboarding scripts

That’s COEY’s mission in practice: humans set intent and narrative; machines capture, structure, and route reality at scale.

Hype check: what can still break (even if BiDi works)

Full-duplex is a huge unlock, but it doesn’t magically make voice automation safe. It just makes it possible to build voice systems that don’t feel broken.

- Long-session drift: prototype reports say BiDi can glitch after a few minutes. That’s the difference between a demo and a call center shift.

- Noise plus accents: real audio is hostile: background noise, speakerphones, crosstalk. You still need robust audio front-ends and fallbacks.

- Tool chaos: if you let an agent do things mid-call without permissions, confirmations, and audit logs, you’re not innovating. You’re speedrunning an incident report.

The model is not the system. Full-duplex audio is the engine. Production readiness is permissions, logging, retries, escalation, and a kill switch.

What to watch next (the real signals)

If BiDi is going to matter operationally, the signals won’t be a viral clip. They’ll be boring, and that’s good.

- Clear API exposure: documented endpoints, stable model names, quotas, pricing

- Telephony pathways: hardened patterns for PSTN and SIP via partner integrations (Realtime is typically used behind a telephony bridge, not as a direct PSTN endpoint)

- Controls: interruption tuning, barge-in handling, session observability

- Enterprise hygiene: retention controls, redaction, audit trails

Until then, the smartest move for most teams is to treat BiDi as a near-future capability and keep building the pieces that will make it valuable on day one: structured tool schemas, safe function calling, escalation paths, and analytics on outcomes, not vibes.

Bottom line

BiDi is the most important kind of voice progress: the kind that turns “AI voice” from a demo into a workflow primitive. If OpenAI lands true full-duplex stability, the practical impact is straightforward: faster calls, fewer misunderstandings, better interruption handling, and a much cleaner path to automating real voice-driven operations through APIs.

Just don’t confuse “it can overlap audio” with “it can run your customer experience unattended.” The winners will be the teams who pair full-duplex voice with grown-up automation design: permissions, confirmations, audit logs, and human handoff, so the machine scales the grind and the humans keep control of intent, trust, and brand.