Helios Goes Open: Real-Time AI Video Hits 19.5 FPS (and That Changes Workflows)

March 15, 2026

PKU-YuanGroup’s “Helios” is being positioned as an open release of a 14B-parameter long-video generation model, with public materials reporting real-time generation at up to about 19.5 FPS on a single NVIDIA H100. That is not “a bit faster.” That is “interactive video synthesis becomes something you can design around,” at least for teams with serious GPU access.

Open video has had a pattern: exciting demos, slow inference, short clips, and the dreaded drift (where your protagonist gradually becomes a different species). Helios is swinging at the whole bundle: longer sequences, better temporal stability, and an architecture explicitly aimed at making minute-scale generation less brittle.

Translation for executives: if Helios performance holds up outside controlled benchmarks, video generation stops being a batch render job and starts looking like a callable, low-latency creative primitive you can plug into campaign ops, not just R&D.

What Helios actually shipped

Helios is described as a builders-first release: code + weights, not just a paper and vibes. The technical report is publicly accessible on arXiv under the title “Helios: Real Real-Time Long Video Generation Model.”

Link check note: the source post links to https://github.com/PKU-YuanGroup/Helios, but that repository was not discoverable under the PKU-YuanGroup organization at the time of validation. Until the repo is confirmed publicly accessible, treat that URL as unverified. If the team publishes a corrected repo URL, use that one instead.

Core claim set (from release materials and writeups):

- Model size: 14B parameters

- Speed: up to about 19.5 FPS on a single H100 in a real-time distilled, few-step configuration

- Length: positioned for minute-scale generation, with the report describing outputs up to about 60 seconds in some settings

- Modes: text-to-video, image-to-video, and video-to-video in a unified setup

One extra detail worth clocking: Helios is framed as achieving speed without relying on common acceleration techniques (quantization, sparse attention, KV-cache). That is either genuinely impressive engineering discipline, or a reminder you will still need a real serving stack to reproduce “19.5 FPS” on your own workloads.
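
To make the headline number concrete, here is a back-of-envelope sketch in Python. The 19.5 FPS figure comes from the release materials; the 24 FPS playback rate is an assumption for illustration only, since the native output frame rate is not stated here. Swap in the real value before drawing conclusions.

```python
# Back-of-envelope latency math for the reported throughput figure.
REPORTED_GEN_FPS = 19.5       # "up to about" figure from the release materials
ASSUMED_PLAYBACK_FPS = 24.0   # assumption: not stated in this article


def per_frame_latency_ms(gen_fps: float) -> float:
    """Average milliseconds of compute per generated frame."""
    return 1000.0 / gen_fps


def generation_seconds(clip_seconds: float, gen_fps: float, playback_fps: float) -> float:
    """Wall-clock seconds needed to generate a clip of the given playback length."""
    total_frames = clip_seconds * playback_fps
    return total_frames / gen_fps


def real_time_factor(gen_fps: float, playback_fps: float) -> float:
    """Values >= 1.0 mean frames are produced at least as fast as they play back."""
    return gen_fps / playback_fps
```

At 19.5 FPS the model spends about 51 ms per frame. Under the assumed 24 FPS playback rate, a 60-second clip would take roughly 74 seconds to generate; if the native output rate is lower than 19.5 FPS, the same throughput keeps pace with playback. Either way, the useful habit is to compute the factor for your own settings rather than trust the headline.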

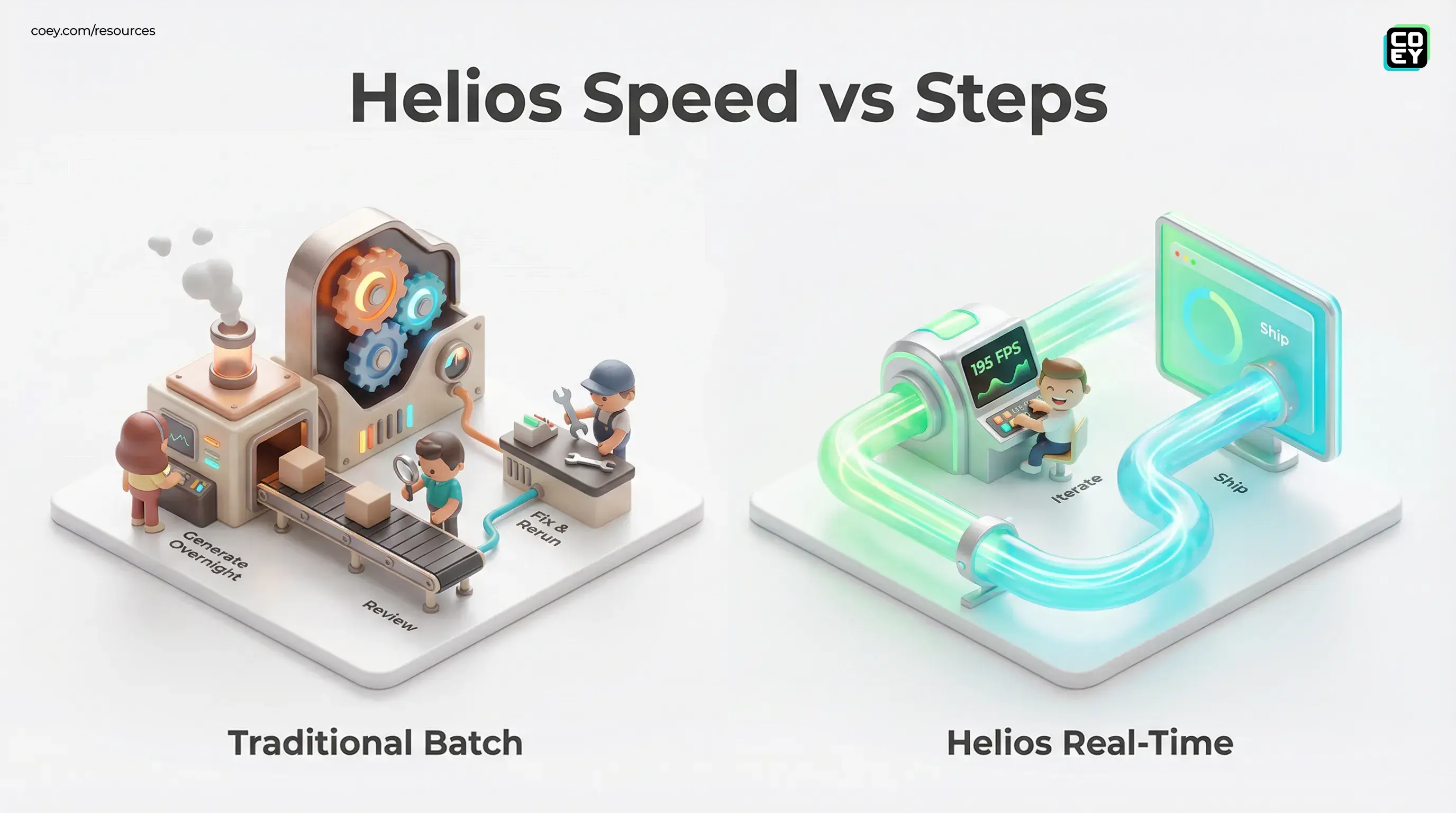

Why “real-time” is a workflow shift

Most marketing orgs do not lose to competitors because they cannot generate content. They lose because they cannot iterate content fast enough with humans in the loop.

Slow video generation forces a batch mentality:

- generate overnight

- review tomorrow

- fix prompts

- re-run

- repeat until your campaign window is gone

Helios's real promise is changing the rhythm. If creators can get feedback fast enough to jam with the model, the way they would adjust a cut in an editor, you can build systems where humans do what they are good at (direction, taste, selection) and machines do what they are good at (volume, variation, brute-force exploration).

Speed is not a nice-to-have. It is the difference between AI as a novelty tool and AI as part of your production cadence.

Model lineup and what it implies

The project describes multiple variants with different tradeoffs. The naming you will see referenced:

- Base (quality-first, more steps)

- Mid (balanced)

- Distilled (few-step sampling for max throughput, the real-time hero)

That matters because it maps cleanly onto real creative operations:

- Distilled for prototyping and rapid reviews (is this the right concept?)

- Mid/Base for higher-fidelity final candidates (is this shippable?)

Helios is not just trying to win a leaderboard. It is structured like something you can operationalize: draft fast, then render nice. That is how human teams already work.
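
The draft-fast, render-nice pattern can be sketched as a two-stage function. This is a minimal illustration, not the project's API: the variant labels, the `Clip` type, and the `generate` and `select` callables are all hypothetical stand-ins for whatever your deployment exposes.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical labels mirroring the lineup above; the real checkpoint
# identifiers are not specified in this article.
DRAFT_VARIANT = "distilled"
FINAL_VARIANT = "base"


@dataclass(frozen=True)
class Clip:
    prompt: str
    variant: str
    path: str


GenerateFn = Callable[[str, str], Clip]          # (prompt, variant) -> Clip
SelectFn = Callable[[List[Clip]], List[Clip]]    # human or automated review step


def draft_then_render(prompts: List[str], generate: GenerateFn, select: SelectFn) -> List[Clip]:
    """Generate cheap drafts for every prompt, then re-render only the approved ones."""
    drafts = [generate(p, DRAFT_VARIANT) for p in prompts]
    winners = select(drafts)
    return [generate(c.prompt, FINAL_VARIANT) for c in winners]


def fake_generate(prompt: str, variant: str) -> Clip:
    """Stand-in for a real inference call; only fabricates an output path."""
    return Clip(prompt, variant, f"{variant}/{prompt.replace(' ', '_')}.mp4")
```

The design choice worth noting is that the expensive variant only ever sees prompts a reviewer has already approved, so review time, not GPU time, becomes the thing you optimize.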

API availability and automation reality

Helios is open-source infrastructure, not a hosted product. That is powerful and also where a lot of teams faceplant, because “we can download it” is not the same as “we can run it reliably.”

Non-technical translation: there is not a first-party SaaS API you can just connect to Make or Zapier as part of this release. But because it is code + weights, it is inherently API-possible if you can deploy it.

| Question | Best answer | What it means in practice |
|---|---|---|
| Can we automate it? | Yes, if you can serve it | Wrap inference in a job queue plus a simple HTTP service |
| Is there an official hosted API? | Not the point of this drop | Plan on self-hosting or a third-party wrapper |
| Is it workflow-ready? | For teams with GPU plus ops maturity | Ready depends on uptime, monitoring, retries, governance |
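
As a rough sketch of what “a job queue plus a simple HTTP service” can look like, here is a standard-library-only Python example. Everything Helios-specific is an assumption: `run_inference` is a hypothetical placeholder for the real model call, and the `/jobs` routes are invented for illustration. A production setup would add persistence, retries, auth, and monitoring.

```python
import json
import queue
import threading
import uuid
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

jobs = {}                      # job_id -> {"status", "prompt", "result" or "error"}
jobs_lock = threading.Lock()
pending = queue.Queue()        # job ids waiting for the single GPU worker


def run_inference(job_id: str, prompt: str) -> str:
    """Hypothetical placeholder for the model call; returns a fake output path."""
    return f"outputs/{job_id}.mp4"


def worker() -> None:
    """Pull jobs one at a time so a single GPU is never oversubscribed."""
    while True:
        job_id = pending.get()
        with jobs_lock:
            jobs[job_id]["status"] = "running"
            prompt = jobs[job_id]["prompt"]
        try:
            update = {"status": "done", "result": run_inference(job_id, prompt)}
        except Exception as exc:  # record the failure instead of killing the worker
            update = {"status": "failed", "error": str(exc)}
        with jobs_lock:
            jobs[job_id].update(update)
        pending.task_done()


class Handler(BaseHTTPRequestHandler):
    def _send(self, code: int, payload: dict) -> None:
        body = json.dumps(payload).encode("utf-8")
        self.send_response(code)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def do_POST(self) -> None:  # POST /jobs with {"prompt": "..."} enqueues a job
        if self.path != "/jobs":
            return self._send(404, {"error": "not found"})
        length = int(self.headers.get("Content-Length", 0))
        try:
            prompt = json.loads(self.rfile.read(length))["prompt"]
        except (ValueError, KeyError, TypeError):
            return self._send(400, {"error": "expected JSON with a 'prompt' field"})
        job_id = uuid.uuid4().hex
        with jobs_lock:
            jobs[job_id] = {"status": "queued", "prompt": prompt}
        pending.put(job_id)
        self._send(202, {"job_id": job_id})

    def do_GET(self) -> None:  # GET /jobs/<job_id> reports status
        job_id = self.path.rsplit("/", 1)[-1]
        with jobs_lock:
            job = dict(jobs[job_id]) if job_id in jobs else None
        if not self.path.startswith("/jobs/") or job is None:
            return self._send(404, {"error": "unknown job"})
        self._send(200, job)

    def log_message(self, *args) -> None:  # keep the example quiet
        pass


def serve(port: int = 8080) -> ThreadingHTTPServer:
    """Start the worker and the HTTP server in background threads."""
    threading.Thread(target=worker, daemon=True).start()
    server = ThreadingHTTPServer(("127.0.0.1", port), Handler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

Calling `serve()` starts both threads; a client then POSTs a prompt to `/jobs`, gets a job id back immediately, and polls `/jobs/<job_id>` until the status is `done` or `failed`. Returning 202 right away is what lets tools like Make or Zapier talk to a model whose runs take longer than a typical webhook timeout.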

Once wrapped, Helios can slot into the same automation patterns creative teams already use for scalable production:

- Variant factories: generate 50 hooks, select 5, upscale and render finals

- Localization lanes: same storyboard, region-specific prompts and overlays

- Personalization at scale: data-driven prompt inputs (segment, offer, product) feeding batch jobs
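
The data-driven pattern in that last bullet is mostly prompt templating. A minimal sketch, assuming a made-up template and field names (nothing here is a Helios prompt format):

```python
from itertools import product
from typing import Dict, List

# Hypothetical template; tune the wording to whatever prompts your model responds to.
TEMPLATE = "{product} ad for {segment}, highlighting {offer}, {style}"


def build_jobs(
    segments: List[str],
    offers: List[str],
    products: List[str],
    style: str = "cinematic, 16:9",
) -> List[Dict[str, str]]:
    """Expand every segment/offer/product combination into one batch job."""
    return [
        {
            "segment": s,
            "offer": o,
            "product": p,
            "prompt": TEMPLATE.format(product=p, segment=s, offer=o, style=style),
        }
        for s, o, p in product(segments, offers, products)
    ]
```

Two segments, two offers, and one product yield four jobs. The count multiplies quickly, which is exactly why throughput matters for this pattern.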

Drift, coherence, and the minute-scale claim

Long video generation is where most open models start acting like they are in a hostage situation. You get:

- identity drift (faces morph)

- background mutation (objects teleport)

- motion loops (the cursed walk cycle forever)

Helios's positioning is that it addresses drift more directly, with training strategies aimed at maintaining coherence over longer sequences. That is the right target: drift is the reason most teams keep AI video in the concept art lane instead of the campaign production lane.

Still: keep your skepticism sharp. “Up to about 60 seconds” is not the same as “your brand mascot stays perfect for 60 seconds across 200 variants.” The gap between research stability and production stability is where budgets go to die.

Pragmatic bar: if Helios reduces drift enough that humans can reliably pick winners (instead of repairing losers), it is a major operational win even if it does not eliminate drift entirely.
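
One way to put that bar into practice is to pre-screen candidates before a human ever sees them. The sketch below is an assumption-heavy illustration, not anything from the Helios report: it presumes you already have per-frame embedding vectors from some image encoder of your choosing, and it scores drift as cosine similarity against the first frame. The 0.8 threshold is arbitrary.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def drift_profile(frame_embeddings: List[Sequence[float]]) -> List[float]:
    """Similarity of every frame to the first frame (1.0 means no measured drift)."""
    if not frame_embeddings:
        raise ValueError("need at least one frame embedding")
    reference = frame_embeddings[0]
    return [cosine(reference, e) for e in frame_embeddings]


def passes_drift_check(frame_embeddings: List[Sequence[float]], min_similarity: float = 0.8) -> bool:
    """True if no frame falls below the similarity threshold (threshold is arbitrary)."""
    return min(drift_profile(frame_embeddings)) >= min_similarity
```

A check like this will not catch every kind of drift (legitimate scene changes also lower similarity), but it can route obviously broken clips away from reviewers so they spend their time picking winners.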

Hardware and readiness: who can use this now?

The speed headline is tied to an NVIDIA H100. That is an enterprise-class GPU: amazing if you have it, irrelevant if you do not.

Teams with GPU access (or cloud budget)

If you already run ML workloads or rent serious GPUs for bursts, Helios is a new contender for building a private video pipeline. Open weights are especially attractive if you care about keeping unreleased creative inside your environment.

Studios and agencies building internal tooling

The open release makes Helios a candidate for a video generation service inside your stack: brief in, candidates out, human selects, downstream edit tools finish the job.

Teams that should wait (for now)

If your org needs plug-and-play SaaS with support SLAs and a friendly UI, Helios is not that. You can get there via wrappers and platform partners, but the raw drop is builder-first.

The bigger signal: video is becoming programmable

Helios is a signal that open-source video is pushing into the zone that made open-source image generation explode: speed + control + deployability.

And once video is programmable, the creative stack changes:

- humans define constraints, intent, taste, and brand rules

- machines generate options, variations, and drafts at machine speed

- automation routes outputs through review, selection, compliance, and publishing

If you want a nearby reference point for the “open video becomes operational” arc, COEY has also covered Lightricks’ production posture with open video plus audio in “LTX-2 Makes Open Video Plus Audio Practical.”

Bottom line: Helios is one of the most workflow-shaped open video releases in a while, because it is not only chasing quality, it is chasing latency. That is what makes human plus AI collaboration feel natural instead of bureaucratic. If your team can operationalize deployment, Helios has a real shot at turning AI video from render-and-pray into iterate-and-ship.