Google’s Gemini API just got a lot more serious, and Gemini 3.1 is one of the clearest signals yet that Google is aiming at production workflows, not just “chatbot vibes.” The practical improvements are the ones operators care about: better multi-step execution, cleaner structured outputs, stronger multimodal handling, and clearer deployment paths depending on whether you are prototyping in AI Studio or running governed workloads in Vertex AI.

If you’re a CMO, agency lead, or product marketer trying to scale content without scaling headcount, the question isn’t “is it smart?” It’s this: can we plug it into workflows, trust it with brand-safe execution, and ship faster this quarter? Gemini 3.1 is closer to that bar than earlier Gemini generations, but the fine print still matters: surface availability, model tier, quotas, latency, and whether you are building for batch jobs or real-time experiences.

Bottom line: Gemini 3.1 is most interesting as a workflow model for document-heavy, multi-step, automation-friendly work, and less as a “creative genius in a box.”

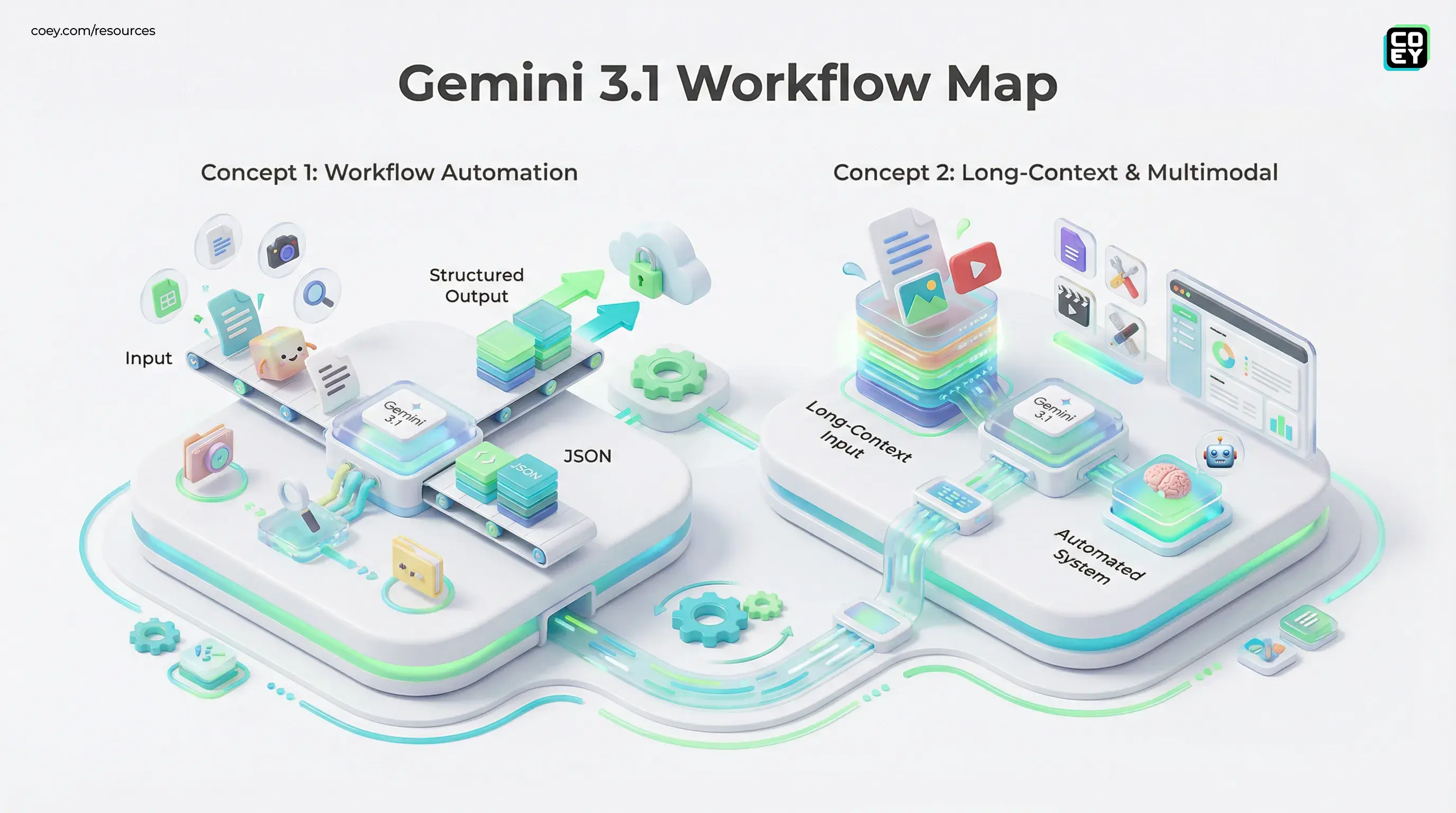

What Gemini 3.1 is really optimized for

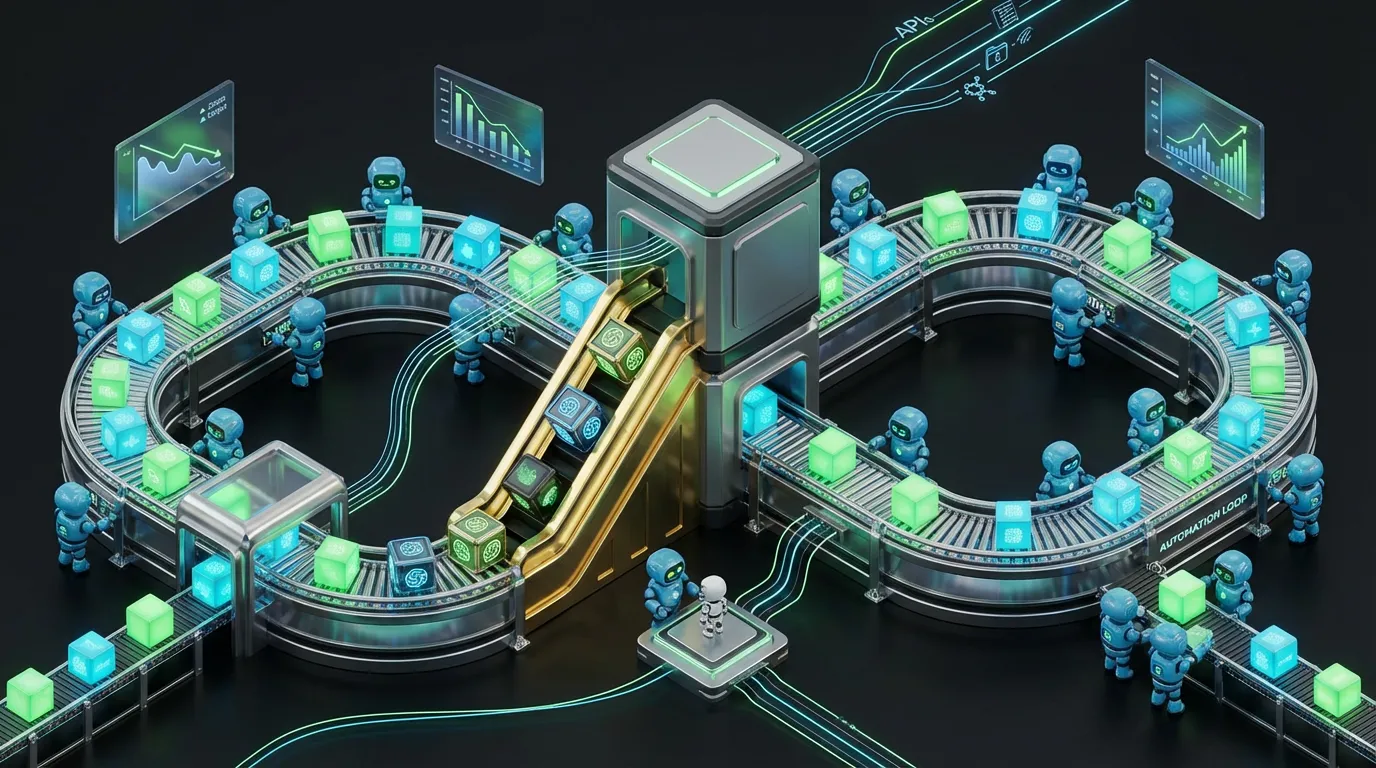

Gemini 3.1 reads like a response to how teams actually use LLMs now: not just generating copy, but orchestrating pipelines like brief to research to outline to drafts to variants to QA to publish. The differentiators are not only benchmark scores. They are operational features that reduce rework and make automation less fragile.

- Long context: fewer chunking hacks, more “drop the whole corpus in and ask precise questions.”

- Multimodal understanding: useful for creative review loops using screenshots, decks, and visual QA, not just image description.

- Structured outputs: critical for automation, especially when JSON must be reliable.

- Cloud pathways: positioned to run inside real stacks via Google’s developer surfaces and enterprise deployment options.
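To make the structured-outputs point concrete, here is a minimal sketch of a validation gate with a bounded retry. It assumes the model was asked for a JSON object with three hypothetical fields (`headline`, `body`, `cta`), and it takes the model call as a plain function argument: `generate`, `check`, and `generate_validated` are illustrative names, not Gemini SDK functions. Output that fails parsing or the type check never reaches the next pipeline step; instead, the errors are fed back into the prompt for another attempt.

```python
from __future__ import annotations

import json
from typing import Any, Callable

# Hypothetical schema: field name -> expected Python type after json.loads.
SCHEMA: dict[str, type] = {"headline": str, "body": str, "cta": str}


def check(raw: str) -> list[str]:
    """Return a list of problems with a raw model response (empty list means valid)."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["expected a JSON object"]
    problems = []
    for field, expected in SCHEMA.items():
        if field not in data:
            problems.append(f"missing field '{field}'")
        elif not isinstance(data[field], expected):
            problems.append(f"'{field}' should be {expected.__name__}")
    return problems


def generate_validated(
    prompt: str, generate: Callable[[str], str], max_attempts: int = 3
) -> dict[str, Any]:
    """Call `generate` until its output passes `check`, feeding errors back each time.

    `generate` is a stand-in for whatever client call you use; it is an assumption,
    not part of any Google API.
    """
    attempt_prompt = prompt
    for _ in range(max_attempts):
        raw = generate(attempt_prompt)
        problems = check(raw)
        if not problems:
            return json.loads(raw)
        # Tell the next attempt exactly what was wrong with the previous reply.
        attempt_prompt = (
            f"{prompt}\n\nYour previous reply was rejected: "
            f"{'; '.join(problems)}. Return only valid JSON."
        )
    raise ValueError(f"no valid output after {max_attempts} attempts")
```

Capping attempts matters: if a prompt keeps failing, an exception that surfaces to a person is cheaper than a silent loop or a half-valid object flowing downstream.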

This matters because the winners in 2026 are not the teams with the most prompts. They are the teams with the most repeatable systems.

Reasoning: better at multi step work

Gemini 3.1’s most meaningful improvement is practical reasoning: less drift, more follow-through. In production, this shows up as fewer “confident nonsense” moments and fewer broken chains across multi-step workflows.

More reliable decomposition

Gemini 3.1 is more likely to break ambiguous requests into tractable steps and ask clarifying questions when the brief is fuzzy. That is not glamorous, but it is the difference between a one-off draft and consistent weekly output without constant human babysitting.

Better evaluation and critique

For teams using AI as a second set of eyes for claim checks, tone audits, and compliance sweeps, better reasoning means you can hand it bigger chunks of work with less risk. You still need guardrails, but the “review and route” use case is where Gemini gets especially useful.

If you are using AI to draft, Gemini 3.1 helps. If you are using AI to review and route work through a pipeline, Gemini 3.1 helps more.

Multimodal: useful for creative operations

Multimodal is finally turning operational. Gemini 3.1 supports mixed inputs such as text plus images, and availability of additional modalities can vary by surface and tier.

- Ad and landing page critique: upload a screenshot, request conversion-focused improvements, extract claims, and flag mismatches with the brief.

- Deck and creative QA: scan a slide for brand issues, missing disclaimers, unreadable type, or off-message sections.

- Document extraction: turn PDFs and scans into structured data for downstream automation.

Where teams get tripped up is generation versus understanding. Google has multiple model families and endpoints for image and video generation, and Gemini often plays the role of planner and orchestrator in those workflows. Treat “generation” claims as surface-dependent, not universal.

Context window: big enough to change workflows

Long context is the quiet feature that changes the workflow math. With very large context windows in the Gemini family, it becomes easier to move from brittle chunked prompts to whole-corpus analysis for tasks like campaign memory, compliance review, and deep synthesis.

That shifts workflows from:

“Summarize these 12 chunks and hope nothing breaks”

to

“Here is the full history, now answer precisely.”

- Campaign intelligence: feed past emails, landing pages, results, and brand notes to generate better next iterations.

- Sales enablement: ingest battlecards plus competitor pages plus call transcripts and output objection handling by segment.

- Legal and compliance support: run draft copy against policy docs and prior approvals.

Tradeoff: large context can be slower and more expensive. It tends to be best for batch jobs and less ideal for customer-facing flows where latency becomes a UX problem.
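One way to act on that tradeoff is to decide the execution mode before the request is sent. The sketch below is a hedged illustration, not a recommended configuration: it estimates input size with a rough four-characters-per-token heuristic (an approximation for planning only; the API’s own token counting gives exact figures), and the `REALTIME_TOKEN_BUDGET` threshold, `Job` type, and `choose_mode` function are hypothetical names to tune against your own latency measurements.

```python
from __future__ import annotations

from dataclasses import dataclass

# Rough planning heuristic (~4 characters per token for English text).
# This is NOT the model's tokenizer; use real token counts for billing decisions.
CHARS_PER_TOKEN = 4

# Hypothetical cutoff: jobs estimated above this many input tokens go to batch.
REALTIME_TOKEN_BUDGET = 30_000


@dataclass
class Job:
    name: str
    documents: list[str]
    user_facing: bool  # True if a person is waiting on the response


def estimate_tokens(documents: list[str]) -> int:
    """Estimate input tokens from total character count, rounding up."""
    total_chars = sum(len(doc) for doc in documents)
    return -(-total_chars // CHARS_PER_TOKEN)  # ceiling division


def choose_mode(job: Job) -> str:
    """Return "realtime" only for small, user-facing jobs; everything else is "batch"."""
    if job.user_facing and estimate_tokens(job.documents) <= REALTIME_TOKEN_BUDGET:
        return "realtime"
    return "batch"
```

A user-facing request that exceeds the budget also lands in batch here, which in practice means either trimming the context or telling the user the result will arrive later.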

API readiness: can it actually plug in?

This is where the difference between “cool demo” and “operational leverage” shows up. Gemini 3.1 is automation-friendly when you use it through Google’s developer stack and/or its enterprise deployment options.

Gemini API and Google AI Studio

The Gemini API is the fastest path to testing and iterating on real workflows. It is where teams prototype internal tools, QA assistants, and structured content pipelines.

Vertex AI for enterprise workflows

Vertex AI is where governance, org controls, auditability, and scaled deployment typically live. Availability can differ by region and model variant, so teams should validate what is enabled for their org and location.

Speed tiers: Pro vs Flash logic

The practical strategy is to use multiple tiers, not pick a single “best” model.

- Use Flash-style models for high-volume work such as tagging, rewriting at scale, first-pass summaries, and bulk categorization.

- Use Pro-style models for high-stakes outputs such as messaging frameworks, multi-doc synthesis, technical content, and strategy-heavy work.
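In code, a tiered setup usually reduces to a routing table that maps task types to a model tier. The sketch below is one hedged way to express the split above. The task labels and the `flash`/`pro` tier values are hypothetical placeholders; map them to whatever model identifiers your platform currently lists, since those change between releases.

```python
from __future__ import annotations

from enum import Enum


class Tier(Enum):
    # Placeholder labels; map these to the actual model IDs your platform lists today.
    FLASH = "flash"
    PRO = "pro"


# Hypothetical task taxonomy mirroring the high-volume vs high-stakes split.
ROUTES: dict[str, Tier] = {
    "tagging": Tier.FLASH,
    "bulk_rewrite": Tier.FLASH,
    "first_pass_summary": Tier.FLASH,
    "categorization": Tier.FLASH,
    "messaging_framework": Tier.PRO,
    "multi_doc_synthesis": Tier.PRO,
    "technical_content": Tier.PRO,
    "strategy": Tier.PRO,
}


def pick_tier(task_type: str) -> Tier:
    """Return the tier for a task; unrecognized task types default to PRO."""
    return ROUTES.get(task_type, Tier.PRO)
```

Defaulting unknown tasks upward trades some cost for safety, so nothing high-stakes is silently downgraded; a team optimizing purely for spend might choose the opposite default.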

Pricing and limits move quickly, so treat screenshots and secondhand claims as stale by default. For current pricing, use Google’s official pricing page: Gemini API pricing.

Safety and enterprise controls

Gemini 3.1 is designed to be deployable inside regulated environments, which is good news for brands that cannot afford “oops” moments. The tradeoff is that stricter guardrails can sometimes produce more sanitized outputs.

The practical fix is not “ditch the model.” It is to design the system:

- Use retrieval to ground outputs in approved brand language.

- Route edgy creative through human review.

- Separate ideation from publishable copy in your pipeline.
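Two of those rules (human review for edgy copy, and a hard separation between ideation and publishable output) translate naturally into a routing step after generation. Here is a minimal sketch. It assumes each draft carries a hypothetical `stage` label and that your team maintains its own trigger-phrase list; the labels, phrases, and the `route` function are illustrative. A production system would more likely use a classifier or a second model as the critic than plain substring matching.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical phrases that always send copy to a person; maintain your own list.
REVIEW_TRIGGERS = ("guarantee", "risk-free", "clinically proven", "#1")


@dataclass
class Draft:
    text: str
    stage: str  # illustrative labels: "ideation" or "publishable"


def route(draft: Draft) -> str:
    """Return the next destination: 'ideation_pool', 'human_review', or 'publish_queue'."""
    # Ideation output lives in its own pool and never flows straight to publishing.
    if draft.stage == "ideation":
        return "ideation_pool"
    # Case-insensitive substring match against the trigger list.
    lowered = draft.text.lower()
    if any(term in lowered for term in REVIEW_TRIGGERS):
        return "human_review"
    return "publish_queue"
```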

Safety is not the enemy of creativity. Uncontrolled automation is.

Leaderboard snapshot: treat rankings carefully

Benchmarks can signal momentum, but they do not guarantee performance for your niche workflow. If you want a reference point for specific benchmarks, use sources that publish updated tables and methodology.

| Benchmark | What it measures | Where to check |
|---|---|---|
| GPQA (Diamond) | Hard, graduate-level QA | LLM Registry GPQA |
| LM Arena | Human preference, head-to-head | LM Arena |

Instead of betting on a leaderboard, the executive move is to pick the best model per job and standardize automation around outputs you can measure, test, and enforce with critics.

What to watch before betting the stack

Gemini 3.1 looks increasingly real-world ready, but a few checks matter before you roll it out across your org:

- Modality availability: confirm what inputs and outputs are actually enabled on your chosen platform and tier.

- Latency under load: long context is great until it bottlenecks your pipeline.

- Guardrails vs brand voice: test your real tone, not a sanitized demo prompt.

- Structured output reliability: if JSON breaks, automation breaks, so measure it.
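For that last check, “measure it” can be as simple as replaying a fixed set of prompts and recording how many responses parse and contain the fields your automation needs. The sketch below assumes you have already collected the raw response strings (how you call the model is left out), and the required field names in the usage line are hypothetical. Running it per model tier and per prompt version gives you a comparable pass rate instead of an impression.

```python
from __future__ import annotations

import json
from collections import Counter


def score_responses(responses: list[str], required: set[str]) -> dict[str, float]:
    """Bucket each raw response and return the share of valid, incomplete, and unparseable ones."""
    outcomes: Counter[str] = Counter()
    for raw in responses:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            outcomes["unparseable"] += 1
            continue
        # A non-object (e.g. a bare list) counts as missing fields.
        if not isinstance(data, dict) or not required.issubset(data):
            outcomes["missing_fields"] += 1
        else:
            outcomes["valid"] += 1
    total = len(responses) or 1  # avoid division by zero on an empty sample
    return {k: outcomes[k] / total for k in ("valid", "missing_fields", "unparseable")}


# Usage with hypothetical fields:
# score_responses(collected_replies, {"title", "tags"})
```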

Gemini 3.1 is not “the model that replaces your team.” It is the model that makes your team’s best thinking repeatable, especially when you wrap it in a system that knows what to do next.

If you want COEY’s more detailed operational breakdown of the Gemini 3.1 family and how it maps to automation tiers, see: Google Gemini 3.1 Pro Lands as a Reasoning Upgrade You Can Actually Automate.