Luma Uni-1 Tries to Make AI Visuals Less Chaotic

March 24, 2026

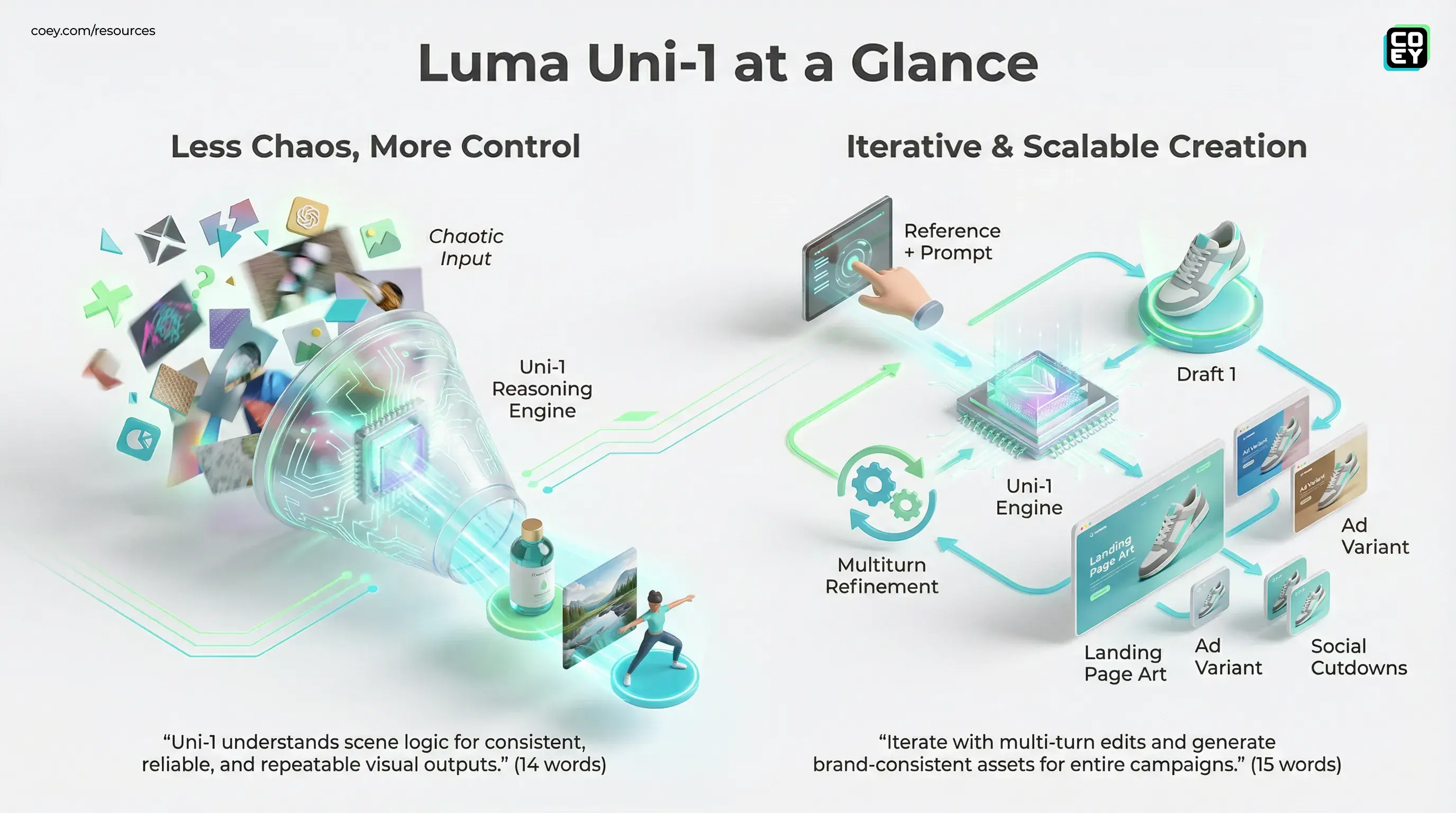

Luma has introduced Uni-1, a new model the company positions as a unified system for visual understanding and image generation. That wording matters. The pitch is not just “here’s another model that makes pretty frames.” It is that Uni-1 can reason about a scene before it renders, which is exactly the part most creator teams have been begging AI tools to stop fumbling. If you have ever watched a model nail frame one and then immediately forget the product, the lighting, and the laws of physics by frame three, yes, this launch is aimed at that pain.

For marketers, creators, and execs trying to separate signal from shiny-object syndrome, Uni-1 looks less like a novelty drop and more like Luma’s attempt to tighten the gap between concept and usable asset. The big question is not whether the demos look slick. The big question is whether this is ready to plug into real production, automate reliably, and hold up when a brand team starts asking for version 12 with “just a few small tweaks.”

Uni-1’s real promise is simple: less reroll roulette, more controllable output, and a better shot at turning AI visuals into repeatable workflow infrastructure instead of prompt-casino entertainment.

What Uni-1 actually changes

Uni-1 is built as a combined reasoning-and-generation model. According to Luma, it handles text and image inputs in one system and plans around spatial, logical, and temporal constraints before generating visuals. In plain English: instead of guessing its way through an image and hoping vibes carry the day, it tries to understand what belongs where, what should stay consistent, and how elements in a scene relate to each other.

That has big implications for teams producing campaign visuals, concept art, product scenes, and iterative design work. Most image and video models are still great at “one cool output” and less great at “now do that again, but with the same subject, same layout logic, same brand feel, and a new angle.” Uni-1 is trying to solve the second problem, which is the one that actually matters in production.

What stands out

- Scene reasoning before rendering: stronger adherence to relationships, composition, and instruction logic.

- Reference-aware generation: Luma says Uni-1 supports up to nine reference images to help anchor identity, style, composition, or edits.

- Multi-turn refinement: Luma is pushing Uni-1 as something you can iterate with, not just fire once and pray over.

- Benchmark positioning: Luma is publicly positioning Uni-1 as a top-tier model on human preference and reasoning-heavy visual evaluations, though those claims are still company-led and should be treated accordingly.

This is the broader trend worth watching: visual models are moving from raw generation toward structured co-creation. Less “surprise me,” more “follow the brief.” About time, honestly.

Why marketers should care

For most non-technical teams, the win is not some architectural breakthrough. It is whether the tool reduces revision pain. That is where Uni-1 gets interesting.

Brand and performance teams need visuals that survive repetition. The same product may need hero images, landing page art, ad variants, social cutdowns, and campaign extensions. If every render comes back with a different bottle shape, logo treatment, or lighting mood, the model is not helping much. It is just generating more work for humans to clean up.

Uni-1 appears designed for a more controlled loop: provide clearer references, establish constraints, and refine outputs with fewer random detours. That gives creative teams a better chance to scale content without giving up brand consistency.

| Need | What Uni-1 improves | Business outcome |
|---|---|---|
| Consistent campaigns | Better reference-following and scene logic | Fewer unusable outputs and faster approvals |
| Creative iteration | Multi-turn edits instead of one-shot rerolls | Shorter revision cycles |
| Scalable asset production | More predictable generation behavior | Easier batch creation for teams and agencies |

That does not mean Uni-1 eliminates human review. Not even close. But it could reduce the amount of tedious art direction-by-repair that currently makes AI image workflows feel like babysitting a very talented raccoon.

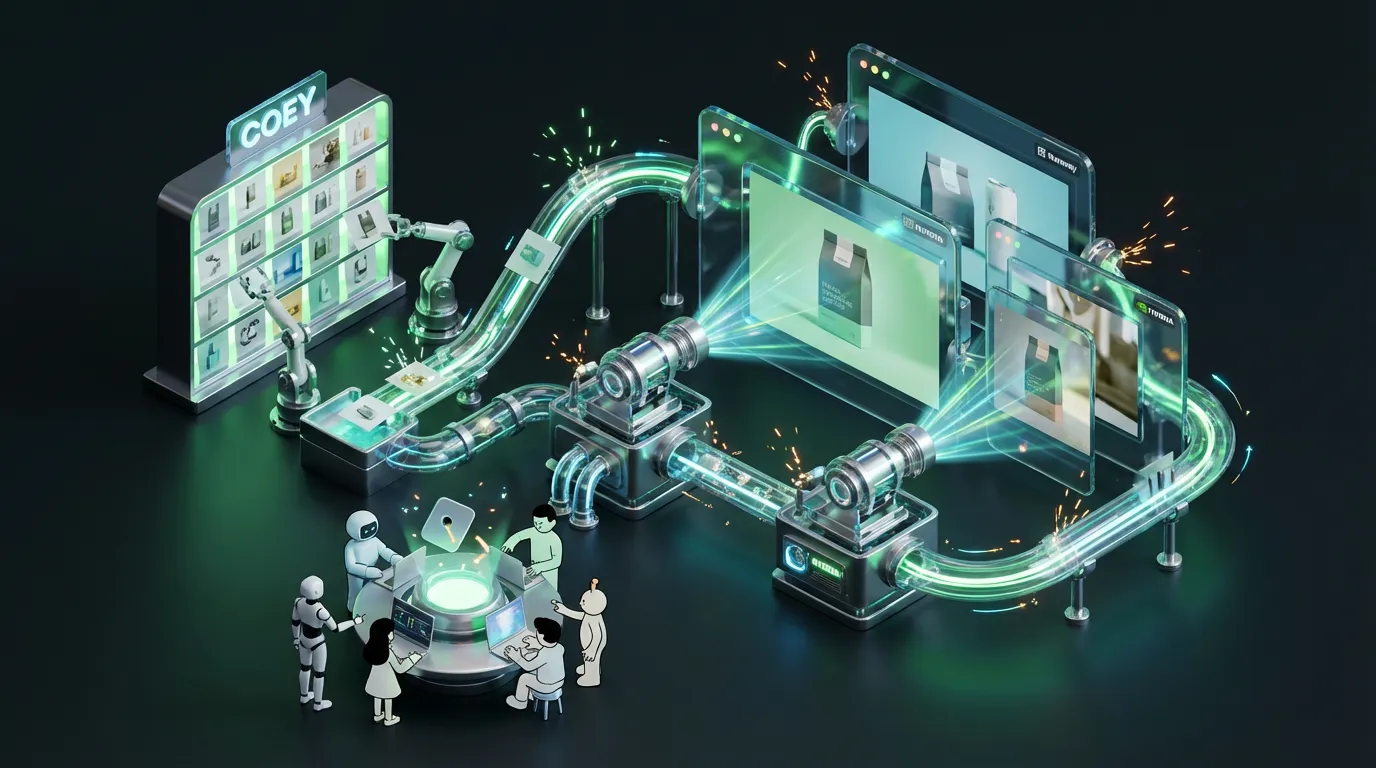

API reality matters here

This is where the launch gets more practical. Luma already runs a developer API platform with production endpoints and public documentation for generation workflows. The current docs clearly cover video models such as Ray 2 and Ray Flash 2, including job-based generation and polling patterns for async workflows. Luma also says publicly that Uni-1 is available through its platform and API, though Uni-1-specific API documentation and full rollout details still appear to be evolving.

What is less clear is how fully Uni-1 is exposed across those API layers right now. Luma's broader platform is developer-friendly, but teams should not assume that every shiny new capability is available in headless, production-grade form on day one. The smart read: Luma has real API infrastructure and is positioning Uni-1 for API access, but teams should confirm exact endpoint coverage, access level, and rollout status before planning around it.
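For teams wiring this into a pipeline, the submit-then-poll pattern looks roughly like the sketch below. It is a minimal Python illustration, not Luma's SDK: `FakeJobClient`, its `submit` and `get_state` methods, and the `"completed"`/`"failed"` state names are hypothetical stand-ins, so swap in whatever the current API reference actually specifies.

```python
import time
from dataclasses import dataclass, field
from typing import Callable

TERMINAL_STATES = {"completed", "failed"}  # hypothetical state names


@dataclass
class FakeJobClient:
    """Hypothetical stand-in for a generation API client (not Luma's SDK).

    Each job reports "queued" for its first `polls_until_done` polls,
    then reports "completed".
    """
    polls_until_done: int = 2
    _polls: dict[str, int] = field(default_factory=dict)

    def submit(self, prompt: str) -> str:
        # A real client would send the prompt; here we just mint a job ID.
        job_id = f"job-{len(self._polls) + 1}"
        self._polls[job_id] = 0
        return job_id

    def get_state(self, job_id: str) -> str:
        self._polls[job_id] += 1
        return "completed" if self._polls[job_id] > self.polls_until_done else "queued"


def wait_for_job(get_state: Callable[[str], str], job_id: str,
                 interval_s: float = 5.0, max_polls: int = 60) -> str:
    """Poll a job until it reaches a terminal state, or raise after max_polls."""
    for _ in range(max_polls):
        state = get_state(job_id)
        if state in TERMINAL_STATES:
            return state
        time.sleep(interval_s)  # wait before checking again
    raise TimeoutError(f"{job_id} not finished after {max_polls} polls")


client = FakeJobClient(polls_until_done=2)
job = client.submit("hero shot of the bottle on a marble counter, soft morning light")
print(job, wait_for_job(client.get_state, job, interval_s=0))  # demo: no real waiting
```

Swapping the fake client for a real one should only touch `submit` and `get_state`; the polling loop, timeout, and wait interval stay the same, which is the part worth standardizing before routing finished assets onward.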

What can likely be automated

- Prompt-driven image generation: trigger jobs from forms, spreadsheets, or campaign systems.

- Reference-based creative production: use stored brand assets to guide output.

- Asynchronous pipelines: submit jobs, monitor completion, then route assets into DAMs, CMSs, or editors.

- Variant generation at scale: useful for ad testing, ecommerce creative, and editorial packaging.
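A rough sketch of the spreadsheet-to-variants step, assuming campaign rows arrive as dictionaries and brand references are stored as file paths. The request shape (`VariantRequest` and its fields) is hypothetical, not Luma's schema; the only grounded number is the nine-reference ceiling Luma cites for Uni-1, which is worth re-checking against current docs.

```python
from dataclasses import dataclass

MAX_REFERENCE_IMAGES = 9  # Luma's stated Uni-1 limit; confirm against current docs


@dataclass
class VariantRequest:
    """Hypothetical request shape; field names are illustrative, not Luma's schema."""
    variant_id: str
    prompt: str
    reference_images: list[str]


def build_variant_requests(rows: list[dict], brand_refs: list[str]) -> list[VariantRequest]:
    """Turn spreadsheet-style campaign rows into one request per row."""
    if len(brand_refs) > MAX_REFERENCE_IMAGES:
        raise ValueError(
            f"{len(brand_refs)} reference images exceeds the limit of {MAX_REFERENCE_IMAGES}"
        )
    batch = []
    for i, row in enumerate(rows, start=1):
        prompt = f"{row['product']} {row['scene']}, {row['format']} format"
        # Every variant reuses the same brand references to anchor identity and style.
        batch.append(VariantRequest(f"{row['campaign']}-v{i}", prompt, list(brand_refs)))
    return batch


rows = [
    {"campaign": "spring", "product": "amber glass bottle", "scene": "in a sunlit kitchen", "format": "1:1"},
    {"campaign": "spring", "product": "amber glass bottle", "scene": "on a rooftop at dusk", "format": "9:16"},
]
refs = ["refs/bottle_front.png", "refs/logo_lockup.png"]  # hypothetical asset paths
for req in build_variant_requests(rows, refs):
    print(req.variant_id, "|", req.prompt)
```

Validating the reference count before anything is submitted is the cheap guardrail here: a batch that fails locally costs nothing, while a batch that fails halfway through an API run leaves half a campaign to clean up.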

For a marketing leader, the translation is simple: yes, Luma can fit into a workflow stack. No, it is not yet a magic “push button, receive perfect campaign system” machine. You still need orchestration, approval steps, and quality control.

Where Uni-1 looks ready now

Uni-1 looks most credible today in areas where visual consistency and controlled iteration matter more than full cinematic production. Think:

- Campaign concepting with tighter art direction

- Product visuals that need reference adherence

- Creative testing for multiple ad or thumbnail directions

- Editorial and social asset development where speed beats perfection

- Internal prototyping for pitches, moodboards, and design exploration

If your team is already using AI for first-pass visuals, Uni-1 could improve output quality by making those first passes less random and more usable. That is not a small upgrade. The first draft is where automation either compounds value or creates cleanup debt.

What still feels early

It is also worth cooling the hype jets a bit.

Uni-1 is a strong signal, but not a full end-state. Luma’s announcement centers on image generation, image editing, and visual reasoning. While Luma’s broader platform includes video models and its Creative Agents push spans image, video, text, and audio through partner tools, Uni-1 itself should not be described as a single public model for fully unified text, image, video, and audio generation.

There are also the usual caveats that never make the sexy launch montage:

- Enterprise governance: policy controls, approvals, and provenance still matter.

- Consistency across long campaigns: better is not the same as bulletproof.

- Workflow glue: Make, Zapier, n8n, or custom middleware are still your friends.

- Cost discipline: the metric that matters is cost per approved asset, not render cost.
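The arithmetic is simple, but it changes which number you watch. A minimal sketch with made-up figures: if every render costs $0.25 and only 8 of 40 survive review, the real unit cost is five times the sticker price.

```python
def cost_per_approved_asset(render_costs: list[float], approved: int) -> float:
    """Total generation spend divided by the number of assets that passed review."""
    if approved <= 0:
        raise ValueError("no approved assets yet, so cost per approved asset is undefined")
    return sum(render_costs) / approved


renders = [0.25] * 40  # hypothetical: 40 renders at $0.25 each
print(cost_per_approved_asset(renders, approved=8))  # 1.25
```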

If a model cannot survive brand review, legal review, and a late-stage stakeholder comment, it is not “workflow-ready.” It is just a very expensive brainstorming partner.

How it fits the bigger shift

Uni-1 is part of a broader industry move away from isolated generation tools and toward systems that can understand, plan, and collaborate more like production software. That shift matters because creative teams do not just need outputs. They need continuity, editability, and integration.

That is the bigger story here. AI visuals are maturing from spectacle into infrastructure. Slowly, unevenly, and with plenty of weird hands along the way, sure, but still. Uni-1’s significance is less about a single model win and more about the direction it represents: models that can reason enough to become more reliable creative collaborators.

For COEY’s audience, that is the threshold to watch. Not “is it impressive?” but “can a human use this to scale good creative work without scaling chaos too?” Uni-1 looks like a meaningful step toward yes.

Bottom line

Luma’s Uni-1 is one of the more interesting visual AI releases because it focuses on the boring-but-essential thing that actually unlocks production value: control. By combining scene understanding with generation, it aims to reduce drift, improve reference adherence, and make iterative visual creation feel less random.

The automation story is promising because Luma already has a real developer stack in its API platform, not just a glossy front-end toy. Public Luma materials also point to Uni-1 API availability. Pricing deserves a closer look: current public pricing pages point to pixel-based image pricing, not a clear public standard of $0.09 per 2K image, so teams should confirm current model pricing, access terms, and production readiness before hardwiring budgets or workflows around any estimate.
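Pixel-based pricing means resolution, not just image count, drives spend. The sketch below uses a made-up rate per megapixel purely for illustration (it is not Luma's price); the takeaway is that doubling both dimensions quadruples the pixel count, and therefore the estimated cost.

```python
def estimate_image_cost(width: int, height: int, rate_per_megapixel: float) -> float:
    """Estimated cost under pixel-based pricing (rate is a placeholder, not Luma's)."""
    megapixels = (width * height) / 1_000_000
    return megapixels * rate_per_megapixel


RATE = 0.02  # hypothetical dollars per megapixel, for illustration only
for w, h in [(1024, 1024), (2048, 2048)]:
    print(f"{w}x{h}: ${estimate_image_cost(w, h, RATE):.4f}")
```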

For creators and marketers, Uni-1 looks ready to make the first draft smarter, the revision loop tighter, and the human-machine collaboration a little less chaotic. In this market, that is not small. That is the difference between AI that dazzles in demos and AI that earns a spot in the stack.