Google’s Gemini Live Push Makes Voice AI More Useful. The API Reality Is the Real Story.

March 26, 2026

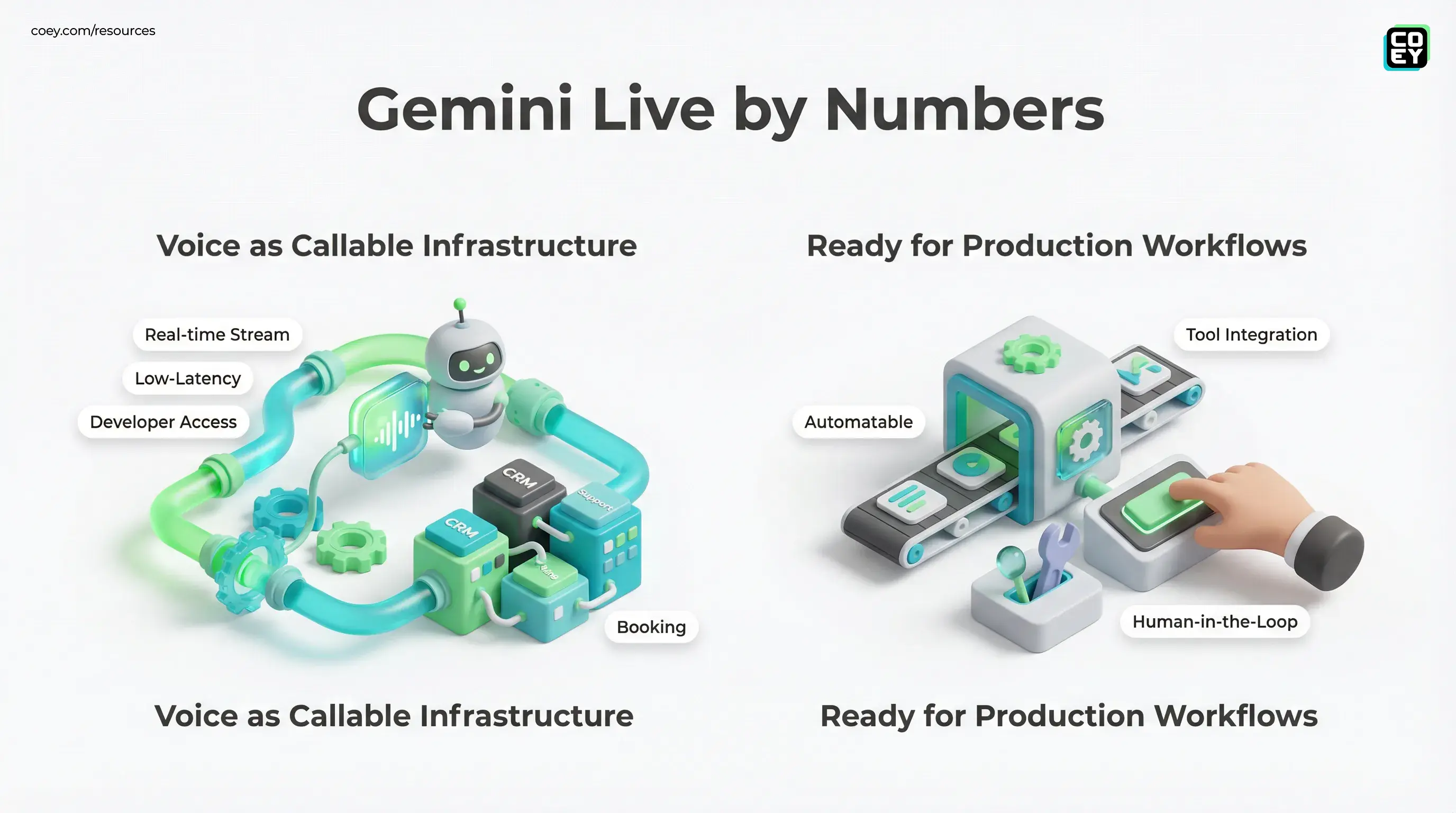

Google’s Gemini Live stack and Live API are getting more serious about real-time voice, and that matters for a much bigger reason than “AI can now sound more natural.” The real shift is that Google is turning voice from a flashy app feature into something closer to workflow infrastructure. For marketers, operators, and execs, that is the line that matters. Plenty of companies can demo a smooth AI conversation. Fewer can make voice usable inside support flows, lead capture, commerce journeys, or creator pipelines without the whole thing collapsing into latency, scripting hacks, or compliance anxiety.

The more grounded read is this: Google’s current Gemini Live and Live API capabilities point to a stronger real-time audio stack, but teams should evaluate it based on three boring adult questions: Can it be automated? Is there a real API path? Is it ready for production use beyond the keynote sizzle reel?

Voice AI is graduating from “cool demo” to “callable system layer.” That is more important than whether the voice sounds slightly more human.

What Google actually has now

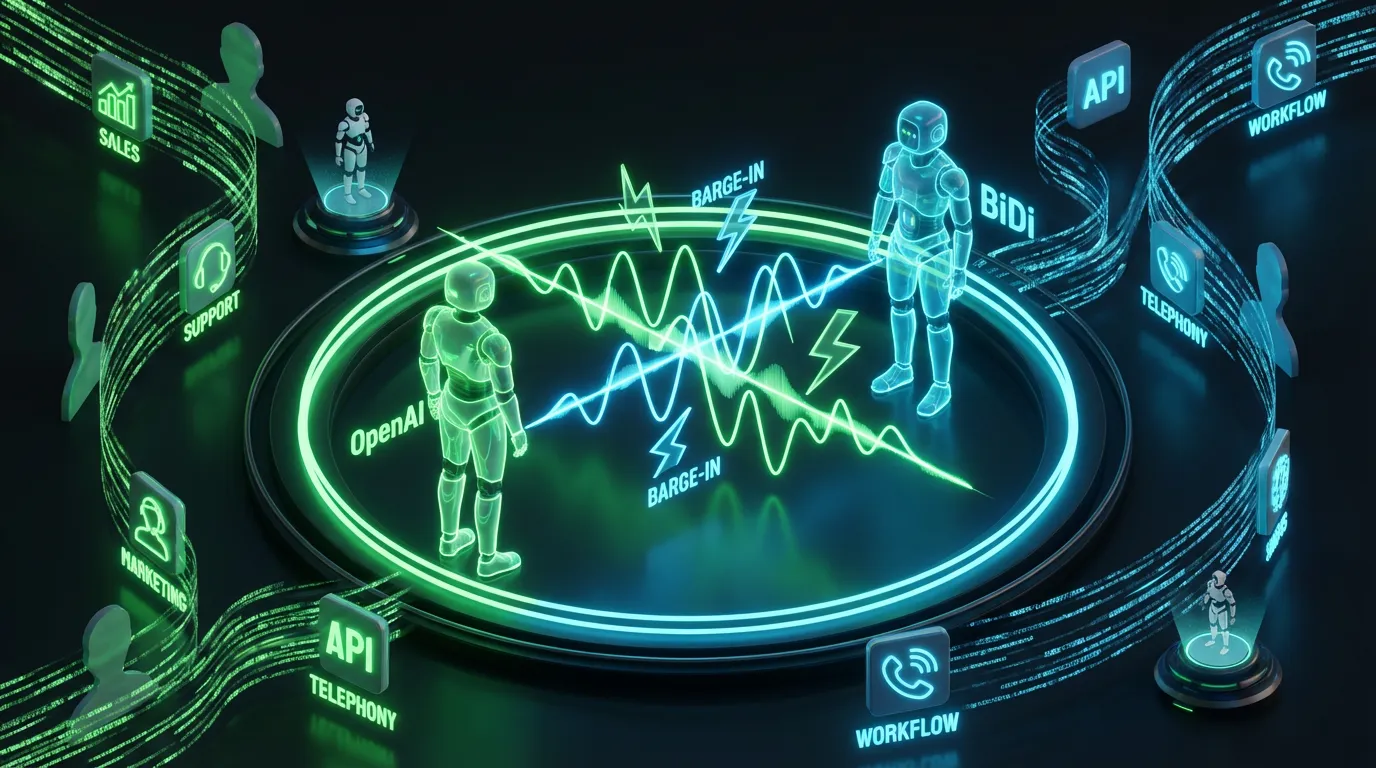

Google’s Live API is a WebSocket-based interface for streaming, bidirectional interactions using audio, text, and video input, with text and audio output depending on the model and configuration. In plain English: it is designed for conversations that happen in real time, not the old pattern of “record audio, upload, wait, receive a response, pretend that lag feels natural.” If you want the technical entry point, Google documents it directly in the Live API reference.
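To make the "depending on the model and configuration" caveat concrete, here is a minimal Python sketch of a pre-flight check a team might run before opening a session. It only encodes the modalities named above. `LiveSessionPlan`, its fields, and the model ID string are hypothetical names for planning purposes, not the Live API's wire format or SDK, so the real setup fields should come from the Live API reference.

```python
from dataclasses import dataclass

# Modalities as described in this article; confirm against the docs
# for the specific model you plan to use.
INPUT_MODALITIES = {"audio", "text", "video"}
OUTPUT_MODALITIES = {"audio", "text"}


@dataclass(frozen=True)
class LiveSessionPlan:
    """Hypothetical planning object, not an SDK type."""
    model_id: str          # placeholder; use a real model ID from the docs
    inputs: frozenset      # modalities the client will stream in
    output: str            # modality the model should respond with

    def __post_init__(self):
        unknown = set(self.inputs) - INPUT_MODALITIES
        if unknown:
            raise ValueError(f"unsupported input modalities: {sorted(unknown)}")
        if self.output not in OUTPUT_MODALITIES:
            raise ValueError(f"unsupported output modality: {self.output}")


# A voice-in, voice-out plan passes the check.
plan = LiveSessionPlan("example-live-model", frozenset({"audio"}), "audio")
```

A check like this is cheap insurance: it catches "we assumed video output" style mistakes in a planning doc or config file before anyone opens a socket.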

That matters because the architecture determines whether a tool can plug into operations. A polished UI demo is nice. A persistent, documented real-time endpoint is what lets a business wire voice into booking systems, CRMs, service workflows, product experiences, or internal tools.

Google’s current setup also supports automatic activity detection, interruption handling, configurable response modalities, transcription events, tool and function calling, and model options built for low-latency audio experiences. Those are not sexy bullet points, but they are exactly what separates “talking AI” from something a customer can actually use without wanting to throw their phone into a lake.
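Interruption handling is the least intuitive item on that list, so here is a small Python sketch of what it implies on the client side: when the caller starts talking over the model, any audio that has not been played yet should be thrown away. The event shapes (`"audio"`, `"interrupted"`) and the `PlaybackBuffer` class are illustrative assumptions, not the Live API's actual message format.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class PlaybackBuffer:
    """Holds model audio chunks that are waiting to be played to the caller."""
    chunks: deque = field(default_factory=deque)
    interruptions: int = 0

    def handle(self, event: dict) -> None:
        # Hypothetical event shapes; map real server messages onto these.
        kind = event["type"]
        if kind == "audio":
            self.chunks.append(event["data"])
        elif kind == "interrupted":
            # The caller barged in: drop everything not yet played.
            self.chunks.clear()
            self.interruptions += 1
        # Any other event type is ignored by this buffer.

    def next_chunk(self):
        """Return the next chunk to play, or None if nothing is queued."""
        return self.chunks.popleft() if self.chunks else None


buf = PlaybackBuffer()
for ev in [
    {"type": "audio", "data": b"our opening hours are "},
    {"type": "audio", "data": b"nine to five"},
    {"type": "interrupted"},
    {"type": "audio", "data": b"sure, go ahead"},
]:
    buf.handle(ev)
```

After this sequence the buffer holds only the post-interruption chunk, which is the behavior that keeps the assistant from talking over the customer.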

Why this needs a reality check

Some of the earlier framing around this category blended together product names, ecosystem chatter, and public documentation a little too casually. The cleaner version is simple: Google’s public docs clearly support the broader Gemini Live and Live API stack, while more specific naming and rollout details should be tied to the exact model and deployment path a team plans to use.

Here is the more useful version:

| Claim | Best current read | Why it matters |
|---|---|---|
| “Gemini 3.1 Flash Live” as a clear public product name | Google and ecosystem references now point to Gemini 3.1 Flash Live, but teams should still map implementations to current Gemini Live and Live API docs for exact behavior | Teams should anchor deployment decisions to docs and model IDs, not naming drift |
| 90+ languages for live voice | Current Google documentation describes broad multilingual support, but teams should validate exact live language coverage for their specific implementation | Important for global rollout planning |
| SynthID watermarking on generated speech | Recent Google messaging ties SynthID watermarking to generated audio in Gemini 3.1 Flash Live, but teams should still confirm model-specific coverage in their exact deployment path | Trust and provenance claims need precision |

This does not make Google’s voice story weak. It makes it more specific. And specificity is useful, especially when someone in your org is about to say “great, let’s deploy this everywhere.” Easy there, turbo.

Where the product is genuinely strong

Low-latency conversation design

The strongest part of Google’s current voice stack is that it is built for streaming interaction, not delayed call and response. That means faster turn-taking, better interruption handling, and a more natural conversational rhythm. For support, sales, onboarding, or interactive product guidance, this is the difference between “surprisingly capable” and “why does this thing pause like it’s buffering emotionally?”

Developer access exists

This is not trapped inside a closed consumer app. Google provides a Live API pathway through the Gemini developer stack, plus prototyping surfaces in AI Studio and Vertex AI. That means teams can experiment with real integrations rather than just playing in a branded sandbox.

For non-technical readers, translate API availability like this:

- Yes, this can be automated if your team or partners can call the Live API

- Yes, it can plug into workflows through backend services, orchestration tools, and event-driven systems

- No, that does not mean zero work: real-time voice still needs design, monitoring, permissions, and fallbacks

Multiple model modes matter

Google’s live stack also reflects a smart tradeoff the market is finally admitting: not every voice use case needs the fanciest native-audio model. Some production systems are better served by more controlled configurations and model choices. That is less romantic than “the model just vibes in audio,” but often more dependable once tools, actions, and long workflows enter the picture.

The best voice system is not the one that feels most magical in a demo. It is the one your team can monitor, constrain, and recover when something weird happens.

Can it actually plug into workflows?

Yes, and this is where the story gets interesting for COEY readers.

Because Google exposes live interaction through documented developer surfaces, Gemini voice can become part of broader automation systems. Think:

- Lead qualification tied to CRM updates and routing logic

- Support triage with ticket creation and knowledge retrieval

- Booking flows that talk to calendars and scheduling systems

- Content operations where voice interfaces collect FAQs, objections, or structured interview input

- Internal copilots for teams that want hands-free querying of docs or workflows

The key point is not that Gemini Live replaces humans. It is that it can reduce repetitive conversational work while keeping a human handoff available when judgment, escalation, or brand sensitivity matters.
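The workflows listed above all share one pattern: the model asks for an action, your backend decides whether to run it, and anything it cannot handle goes to a person. Here is a minimal Python sketch of that pattern. The tool name `create_ticket`, the `{"name": ..., "args": ...}` call shape, and the `handoff` status are all hypothetical; a real integration would follow the function-calling schema in Google's docs and call an actual ticketing system.

```python
from typing import Callable

# Hypothetical registry of actions the voice agent is allowed to trigger.
TOOLS: dict[str, Callable[..., dict]] = {}


def tool(name: str):
    """Register a function as a callable tool under the given name."""
    def register(fn):
        TOOLS[name] = fn
        return fn
    return register


@tool("create_ticket")
def create_ticket(summary: str, priority: str = "normal") -> dict:
    # Stand-in for a real ticketing API call.
    return {"status": "ok", "ticket": {"summary": summary, "priority": priority}}


def dispatch(call: dict) -> dict:
    """Run a model-requested tool call, or route to a human if we cannot."""
    handler = TOOLS.get(call.get("name"))
    if handler is None:
        # Guardrail: never execute an action that is not on the allowlist.
        return {"status": "handoff", "reason": "unknown tool"}
    try:
        return handler(**call.get("args", {}))
    except TypeError:
        # Typically missing or unexpected arguments from the model.
        return {"status": "handoff", "reason": "bad arguments"}


result = dispatch({"name": "create_ticket", "args": {"summary": "Refund request"}})
escalated = dispatch({"name": "delete_account", "args": {}})
```

The design choice worth copying is the allowlist: the model can only trigger actions your team has explicitly registered, and every failure path ends at a human rather than a retry loop.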

What is ready now vs. still a watch item

| Area | Looks real now | Still needs caution |
|---|---|---|
| API access | Yes, via Gemini Live and Live API | Org-specific limits, quotas, regions, and model availability still matter |
| Workflow automation | Good fit for real-time routing and interactive flows | Needs tool schemas, guardrails, and human fallback |
| Global deployment | Strong multilingual support exists | Do not assume the same live language behavior across every model and interface |
| Trust layer | Google has broader SynthID provenance work, and recent Gemini 3.1 Flash Live messaging ties SynthID to generated audio | Do not overstate watermarking coverage across every Live model or deployment path |

This is the mature posture teams should take now: treat Gemini voice as production-leaning infrastructure, not fully solved infrastructure.

What this means for marketers and creators

For marketing teams, the immediate value is not “AI host replaces your whole funnel.” Please log off if that was the dream. The value is that real-time voice can become a new interface layer for collecting intent, answering questions, qualifying demand, and routing conversations faster.

For creators and media teams, the implications are also broader than synthetic narration. Voice models that can handle live interaction open up new formats for interview capture, interactive content, multilingual experiences, and audience feedback loops. But again, the unlock is biggest when the output can move into a system, not just sit in a demo.

If you want adjacent context from COEY on how voice systems become real infrastructure, our earlier post on full-duplex voice systems tracks the same market direction: conversational AI is becoming an operational layer, not just a chat gimmick.

Bottom line

Google’s Gemini Live push matters because it strengthens the path from voice AI novelty to voice AI operations. The most important part is not the branding, and it is not any single claim about language count or watermarking. It is that Google has a real-time developer surface that makes voice callable, streamable, and increasingly workflow-friendly.

That means the useful question for executives is no longer “can AI talk?” Obviously it can. The better question is: can this system participate in real work, inside real software, with enough control to trust it?

Google’s answer is getting closer to yes. Not magic yes. Not “fire your support team and let the bot cook” yes. More like grown-up yes: useful, integratable, increasingly scalable, and worth serious testing anywhere human creativity and human judgment still lead, but machine speed can remove the grind.