Runway’s Gen-4.5 Pushes AI Video Forward, but the Real Workflow Story Is More Complicated


April 7, 2026

Runway’s Gen-4.5 announcement lands exactly where the AI video market is fighting hardest right now: better motion, better prompt adherence, and outputs that look less like “impressive demo” and more like something a brand team might actually ship. That matters. What matters just as much, though, is separating the headline from the stack. Gen-4.5 looks like a meaningful upgrade for high-end video generation, but the popular “Expand Video” workflow is not the same thing as Gen-4.5 itself. If you’re an executive, marketer, or studio lead trying to decide whether this is ready for production, that distinction is doing a lot of work.

The short version: Runway is clearly improving the quality ceiling of AI video, and it does offer automation pathways through its API. But this is not yet a one-click replacement for a production team, and Expand Video should be understood as a separate workflow feature rather than a native Gen-4.5 capability. Translation: exciting, yes. Fully consolidated creative infrastructure? Not quite.

What Gen-4.5 actually improves

Runway positions Gen-4.5 as a major step up in visual fidelity, motion realism, and prompt responsiveness. That aligns with the company’s public launch materials. The broader market direction is the same: less novelty, more consistency. Brands do not need another haunted-limbed tech demo. They need clips that hold up under review, survive client notes, and do not collapse the second someone asks for a second take.

The big appeal here is not just prettier pixels. It is higher first-pass reliability. If a model can translate a prompt into something closer to usable footage on the first or second generation, teams waste less time burning credits and patience trying to get out of the “almost there” zone.
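To make the retry tax concrete, here is a minimal sketch that treats each generation as independently usable with some first-pass rate `p`. That independence is a simplifying assumption (real retries usually involve prompt changes), and the rates used below are hypothetical, not measured Gen-4.5 figures. Under that model, the expected number of generations per usable clip is `1 / p`, and the chance of landing a usable take within `k` tries is `1 - (1 - p)^k`.

```python
def expected_attempts(first_pass_rate: float) -> float:
    """Expected generations per usable clip under a geometric model.

    Assumes every attempt is independently usable with probability first_pass_rate.
    """
    if not 0 < first_pass_rate <= 1:
        raise ValueError("first_pass_rate must be in (0, 1]")
    return 1 / first_pass_rate


def chance_within(first_pass_rate: float, max_attempts: int) -> float:
    """Probability of at least one usable take within max_attempts generations."""
    if not 0 < first_pass_rate <= 1:
        raise ValueError("first_pass_rate must be in (0, 1]")
    if max_attempts < 0:
        raise ValueError("max_attempts must be non-negative")
    return 1 - (1 - first_pass_rate) ** max_attempts


if __name__ == "__main__":
    # Hypothetical first-pass rates for an older and a newer model.
    for label, p in [("older model", 0.2), ("newer model", 0.5)]:
        print(
            f"{label}: ~{expected_attempts(p):.1f} attempts per usable clip, "
            f"{chance_within(p, 3):.0%} chance within 3 tries"
        )
```

Doubling the first-pass rate halves the expected number of generations, and every generation avoided is also a review cycle avoided.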

AI video gets operational when creative teams spend less time babysitting generation and more time directing it.

That is the real threshold. Not whether the model can make a cool clip, but whether it can make repeatable assets that fit an actual campaign workflow. For marketing teams producing storyboards, paid social variants, product visuals, or mood films, Gen-4.5 appears closer to that bar than earlier generations. For adjacent context, COEY’s earlier take on Runway Gen-4.5 reached a similar conclusion: the real story is not novelty, it is whether the model is stable enough to behave like infrastructure.

Where it looks strongest

- Concept development: faster creation of polished visual prototypes for campaign reviews

- Short-form branded content: stronger output for social, teasers, and visual experiments

- Prompt-driven ideation: more precise camera, motion, and scene interpretation

- Creative velocity: fewer retries to get something presentation-ready

That last point is underrated. In most AI workflows, the hidden tax is not generation time. It is revision time. Every extra prompt tweak, every weird frame artifact, every “why did the mug become a lamp” moment adds friction. Gen-4.5’s value proposition is basically: less chaos, more control.

Expand Video is useful, but separate

Now for the part that needs a little daylight. “Expand Video” is real, useful, and extremely relevant for content ops. But based on currently available Runway information, it is presented as a separate product workflow feature, not as Gen-4.5 itself. That means some of the original framing around a combined Gen-4.5 plus Expand Video release blurs two different workflow layers together.

Why does that matter? Because for teams planning automation or procurement, feature location matters as much as feature existence. If your favorite production-saving function lives in a different workflow environment, then your process may be less streamlined than the headline suggests.

Still, the value of Expand Video is obvious. Reframing clips across aspect ratios is one of the most practical use cases in AI-assisted post. Social teams constantly need to convert, extend, or reposition visuals for vertical, square, and landscape placements without rebuilding the entire asset from scratch. If the tool can preserve scene continuity while extending the frame, that is not just flashy. That is labor reduction.
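The geometry behind reframing shows why continuity is the hard part. A minimal sketch, assuming the original frame is kept at full resolution and only padded (not cropped or scaled): compute the smallest canvas with the target aspect ratio that contains it, and how much of that canvas has to be generated. This is plain arithmetic, not Runway’s implementation or API; `expand_canvas` and `Canvas` are hypothetical names.

```python
from dataclasses import dataclass


def _ceil_div(a: int, b: int) -> int:
    """Integer ceiling division for positive ints, avoiding float rounding."""
    return -(-a // b)


@dataclass(frozen=True)
class Canvas:
    width: int
    height: int
    pad_x: int  # total columns to generate (left + right)
    pad_y: int  # total rows to generate (top + bottom)

    @property
    def generated_fraction(self) -> float:
        """Share of the output frame that is newly generated rather than original footage."""
        original_area = (self.width - self.pad_x) * (self.height - self.pad_y)
        return 1 - original_area / (self.width * self.height)


def expand_canvas(src_w: int, src_h: int, ratio_w: int, ratio_h: int) -> Canvas:
    """Smallest ratio_w:ratio_h canvas that contains a src_w x src_h frame unscaled.

    Dimensions are rounded up to whole pixels, so the result can be a pixel
    off the exact ratio.
    """
    if min(src_w, src_h, ratio_w, ratio_h) <= 0:
        raise ValueError("dimensions and ratio terms must be positive")
    if src_w * ratio_h >= src_h * ratio_w:
        # Source is at least as wide as the target: keep width, extend height.
        width, height = src_w, _ceil_div(src_w * ratio_h, ratio_w)
    else:
        # Source is taller than the target: keep height, extend width.
        width, height = _ceil_div(src_h * ratio_w, ratio_h), src_h
    return Canvas(width, height, width - src_w, height - src_h)


if __name__ == "__main__":
    # Reframe a 1920x1080 landscape clip for square, 4:5, and vertical placements.
    for target in [(1, 1), (4, 5), (9, 16)]:
        c = expand_canvas(1920, 1080, *target)
        print(f"{target[0]}:{target[1]} -> {c.width}x{c.height}, "
              f"{c.generated_fraction:.0%} generated")
```

Turning a 1920×1080 landscape clip into a 9:16 vertical this way means roughly two-thirds of each output frame is new content (1 - 1080/3414 ≈ 0.68), which is why preserving scene continuity in the extended area matters so much.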

| Capability | Why teams care | Reality check |
|---|---|---|
| Gen-4.5 video generation | Higher-quality text-to-video and image-to-video output | Available through Runway and the API for short clips, but still not a full production replacement |
| Expand Video | Reframe and adapt content for different formats | Useful, but a separate workflow feature rather than Gen-4.5 itself |
| API access | Automate generation and content pipelines | Real, usage-based, and best suited to teams with process discipline |

Can you automate it?

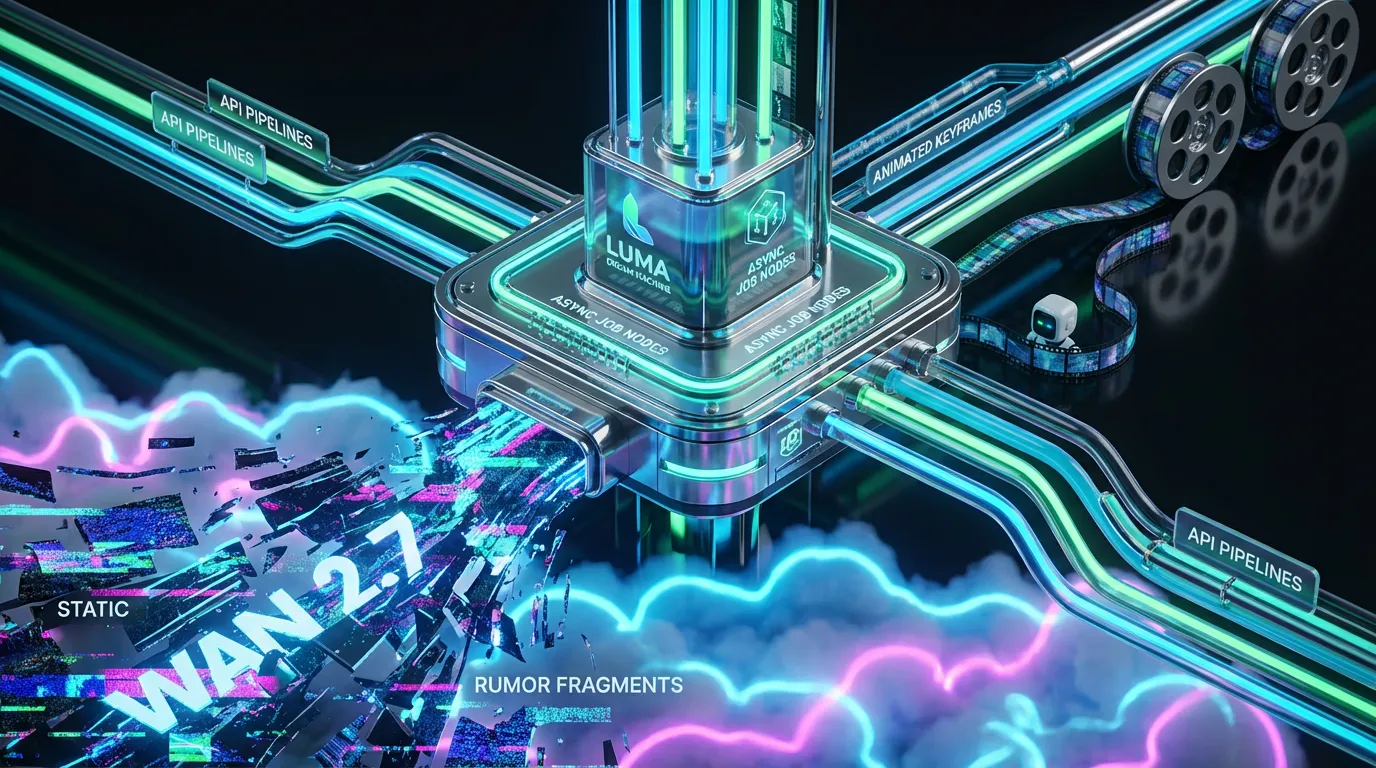

Yes, and this is where Runway gets more interesting than a pure creator toy. Runway offers API access through its developer platform, including Gen-4.5 model endpoints and usage-based pricing through credits. For non-technical readers, here is what that means in plain English: you can plug Runway into a larger content machine instead of using it only inside a browser tab at 1:00 a.m. with vibes and ambition.

With an API, a team can build workflows that:

- trigger video generation from approved prompts or campaign briefs

- create multiple ad variants from a shared creative theme

- route outputs into review systems or asset libraries

- chain generation into editing, publishing, or localization steps
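The workflow list above can be sketched as a small pipeline. This is a minimal sketch under stated assumptions: `Brief`, `ReviewItem`, `run_pipeline`, and `stub_generate` are hypothetical names, and `generate` is a stand-in callable where a team would wrap its own Runway API client. It does not reproduce Runway’s actual request format or SDK. What it shows is the shape: only approved briefs trigger generation, one theme fans out into variants across hooks and aspect ratios, and every output lands in a review queue instead of publishing directly.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical signature: (prompt, aspect_ratio) -> reference to the generated clip.
# In practice this would wrap a real Runway API call; here it is injected so the
# pipeline logic can be exercised without network access.
GenerateFn = Callable[[str, str], str]


@dataclass
class Brief:
    campaign: str
    theme: str         # shared creative theme for the campaign
    approved: bool     # set by a human before anything is generated
    hooks: list[str]   # per-variant angles on the theme


@dataclass
class ReviewItem:
    campaign: str
    prompt: str
    aspect_ratio: str
    output_ref: str
    status: str = "pending_review"  # humans approve or reject; nothing auto-publishes


def run_pipeline(
    briefs: list[Brief], aspect_ratios: list[str], generate: GenerateFn
) -> list[ReviewItem]:
    """Generate one clip per (approved brief, hook, aspect ratio) and queue it for review."""
    queue: list[ReviewItem] = []
    for brief in briefs:
        if not brief.approved:
            continue  # unapproved briefs never reach generation
        for hook in brief.hooks:
            prompt = f"{brief.theme}. {hook}"
            for ratio in aspect_ratios:
                queue.append(ReviewItem(brief.campaign, prompt, ratio, generate(prompt, ratio)))
    return queue


def stub_generate(prompt: str, aspect_ratio: str) -> str:
    """Placeholder generator that returns a fake reference instead of calling an API."""
    return f"stub://{aspect_ratio}/{prompt[:24]}"


if __name__ == "__main__":
    briefs = [
        Brief("spring-launch", "Sunlit kitchen, slow dolly-in on a ceramic mug", True,
              ["steam rising from the cup", "a hand reaches in to pick it up"]),
        Brief("fall-teaser", "Rain on a cafe window at dusk", False, ["neon reflections"]),
    ]
    for item in run_pipeline(briefs, ["16:9", "9:16"], stub_generate):
        print(item.campaign, item.aspect_ratio, item.status, item.output_ref)
```

With two hooks and two aspect ratios, the approved brief yields four queued clips and the unapproved one yields none. Keeping `generate` injectable is a design choice: the same logic can be tested with a stub and later pointed at a real client.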

Runway’s developer docs and API pricing documentation make clear that this is not just a consumer-facing studio product. It is becoming part of the programmable media layer. Runway’s API pricing guide lists credits at $0.01 each, and Gen-4.5 text-to-video and image-to-video generation at 12 credits per second, or about $0.12 per second generated.
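Those published rates translate directly into a per-clip budget. A minimal sketch using only the figures quoted above from Runway’s API pricing guide ($0.01 per credit, 12 credits per second for Gen-4.5 text-to-video and image-to-video). Rates can change, so treat the constants as inputs to check against the current guide; `estimate` is a hypothetical helper name.

```python
from decimal import Decimal

# Figures as quoted from Runway's API pricing guide at the time of writing;
# verify against the current guide before budgeting.
CREDIT_PRICE_USD = Decimal("0.01")
GEN45_CREDITS_PER_SECOND = 12


def estimate(seconds_per_clip: int, clips: int = 1) -> tuple[int, Decimal]:
    """Return (credits, USD) for generating `clips` clips of `seconds_per_clip` each."""
    if seconds_per_clip < 0 or clips < 0:
        raise ValueError("seconds_per_clip and clips must be non-negative")
    credits = seconds_per_clip * GEN45_CREDITS_PER_SECOND * clips
    # Decimal keeps currency math exact (no float rounding on cents).
    return credits, credits * CREDIT_PRICE_USD


if __name__ == "__main__":
    print(estimate(10))       # one 10-second clip
    print(estimate(5, 40))    # 40 five-second ad variants
```

A single 10-second clip comes to 120 credits ($1.20); forty 5-second variants come to 2,400 credits ($24.00), before counting any retries.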

That said, API availability does not automatically mean workflow maturity. You still need governance, prompt standards, approvals, asset handling, and human review. Otherwise you are not building automation. You are just industrializing randomness.

What API-ready means in practice

For most COEY readers, the key question is simple: can this move from experimentation into repeatable business output?

Mostly yes, with constraints.

- Good fit: ad concepting, content variation, mood films, social visuals, rapid prototypes

- Mixed fit: polished client-facing deliverables that require frame-perfect continuity

- Weak fit: long-form narrative production needing high consistency across many scenes

That is not shade. It is just the current state of the medium. AI video is getting very good at short bursts of controlled creativity. It is still less reliable when the ask becomes sustained narrative precision over time. If you want a broader workflow lens on that shift, COEY’s post on image-to-video workflows is useful context because it shows how these models become genuinely valuable once they fit inside a repeatable system.

What this means for marketers

For marketers, Gen-4.5 is less about replacing shoots and more about expanding the number of creative swings you can take before committing budget. That is the unlock. You can test visual territory faster, pressure-test concepts internally, and produce more variants for channels that eat content like a raccoon in a snack aisle.

If you are running paid campaigns, organic social, launch teasers, or product storytelling, the workflow upside is pretty direct:

- More concepts per cycle without growing headcount linearly

- Faster adaptation when stakeholders change direction late

- Better pre-production communication using moving visuals instead of static mockups

- Higher throughput for teams already managing multichannel output

This is where human-plus-machine collaboration actually shows up. The machine handles speed, iteration, and rough synthesis. The human sets intent, taste, brand boundaries, and final judgment. That split is not a compromise. It is the model.

What still needs caution

Even with these quality gains, practical limitations remain. Gen-4.5 output is still short-form. Runway’s API pricing, which is metered per second of output, and the generation options the model supports both reinforce that it is optimized for short clips, so longer narratives still require stitching, editing, and more supervision. Some well-known video-model issues also linger across the category, including logic breaks, object inconsistency, and causality glitches. In other words, the model may know the aesthetic of a scene before it fully understands the physics of one. Very online. Sometimes very usable. Not identical things.
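Because each generation is a short clip, a longer piece has to be planned as a sequence of shots. A minimal sketch of that planning step: split a target runtime into segments no longer than a maximum clip length. `max_clip_seconds` is an assumption to set from whatever durations the model currently supports (it is not a figure from this article), and `plan_segments` and `continuity_seams` are hypothetical helpers. Every boundary between segments is a seam where a human has to check continuity.

```python
def plan_segments(total_seconds: int, max_clip_seconds: int) -> list[int]:
    """Split a target runtime into clip lengths of at most max_clip_seconds each."""
    if total_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    full, remainder = divmod(total_seconds, max_clip_seconds)
    return [max_clip_seconds] * full + ([remainder] if remainder else [])


def continuity_seams(segments: list[int]) -> int:
    """Number of cuts between generated clips that need a continuity review."""
    return max(len(segments) - 1, 0)


if __name__ == "__main__":
    # Hypothetical: a 45-second spot with an assumed 10-second per-clip limit.
    shots = plan_segments(45, 10)
    print(shots, "->", continuity_seams(shots), "seams to review")
```

A 45-second spot under an assumed 10-second limit becomes five generations and four seams, each a place where object consistency or causality can break. A real edit plan would more likely follow the script’s shot boundaries than split the runtime evenly, but the seam count grows the same way.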

Runway’s pricing also signals that serious usage is a budgeting decision, not a casual add-on. The current pricing page lists Standard at $12 per user per month billed annually with 625 monthly credits, Pro at $28 per user per month billed annually with 2,250 monthly credits, and Unlimited at $76 per user per month billed annually with 2,250 priority credits plus relaxed generation. High-volume experimentation can get expensive quickly, especially if teams generate without a clear review process. AI video rewards direction. It punishes prompt spam.
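To see what those allowances mean in footage, here is a minimal sketch that converts each plan’s monthly credits into seconds of Gen-4.5 output. The key assumption, labeled in the code as well: it applies the 12-credits-per-second Gen-4.5 rate from Runway’s API pricing guide to plan credits, which may not match how the app meters usage, and it ignores Unlimited’s relaxed generation. `Plan` and `gen45_seconds` are hypothetical names.

```python
from dataclasses import dataclass

# ASSUMPTION: plan credits are consumed at the same Gen-4.5 rate the API
# pricing guide lists (12 credits per second). Check the app's own metering.
ASSUMED_GEN45_CREDITS_PER_SECOND = 12


@dataclass(frozen=True)
class Plan:
    name: str
    usd_per_user_month: int  # billed annually, as listed on the pricing page
    monthly_credits: int


PLANS = [
    Plan("Standard", 12, 625),
    Plan("Pro", 28, 2250),
    Plan("Unlimited", 76, 2250),  # priority credits; relaxed generation not modeled
]


def gen45_seconds(plan: Plan, credits_per_second: int = ASSUMED_GEN45_CREDITS_PER_SECOND) -> int:
    """Whole seconds of Gen-4.5 output that one user's monthly credits cover."""
    return plan.monthly_credits // credits_per_second


if __name__ == "__main__":
    for plan in PLANS:
        print(f"{plan.name}: ${plan.usd_per_user_month}/user/month -> "
              f"~{gen45_seconds(plan)} s of Gen-4.5 per month")
```

Under that assumption, Standard’s 625 credits cover about 52 seconds and Pro’s 2,250 about 187 seconds per user per month, before any retries. That is a handful of short clips, not a campaign’s worth of open-ended experimentation.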

Runway is getting closer to real stack status

The bigger story here is not one feature drop. It is that Runway keeps moving toward becoming a real layer in the modern creative stack: generation, iteration, and programmable access in one ecosystem. That is a meaningful shift from the earlier era of AI tools that were fun in isolation but hard to operationalize.

Gen-4.5 strengthens Runway’s case as a serious option for creative teams that need speed with a shot at quality. But the current reality is nuanced. The latest model is promising. The automation hooks are real. The production benefits are tangible. Yet some of the handiest workflow features still sit in separate tools and workflows, and human oversight remains absolutely non-negotiable.

That does not make this hype. It makes it infrastructure in progress.

And honestly, that is the sweet spot. Not magic replacing the team. Magic giving the team range.
