n8n vs OpenClaw vs Claude Cowork: What to use for content automation

April 10, 2026

If you are setting up an AI content operation in 2026, the base layer you pick matters more than the model you pick. The model is a commodity that will change three times this year. The base layer is the thing that decides which model to call, what to hand it, how to publish the result, and what gets logged along the way. It is also the piece that decides whether your data leaves your perimeter, whether non-AI work costs you tokens, and whether your team can actually drive the system without a developer in the loop.

Three tools come up in almost every conversation we have with brand and agency teams trying to make this choice: n8n, OpenClaw, and Anthropic’s Claude Cowork. Two are open or open-ish; Cowork is proprietary. They solve overlapping problems in very different ways, and the right answer for most teams is a mix. This is the COEY breakdown.

The three tools, in one breath each

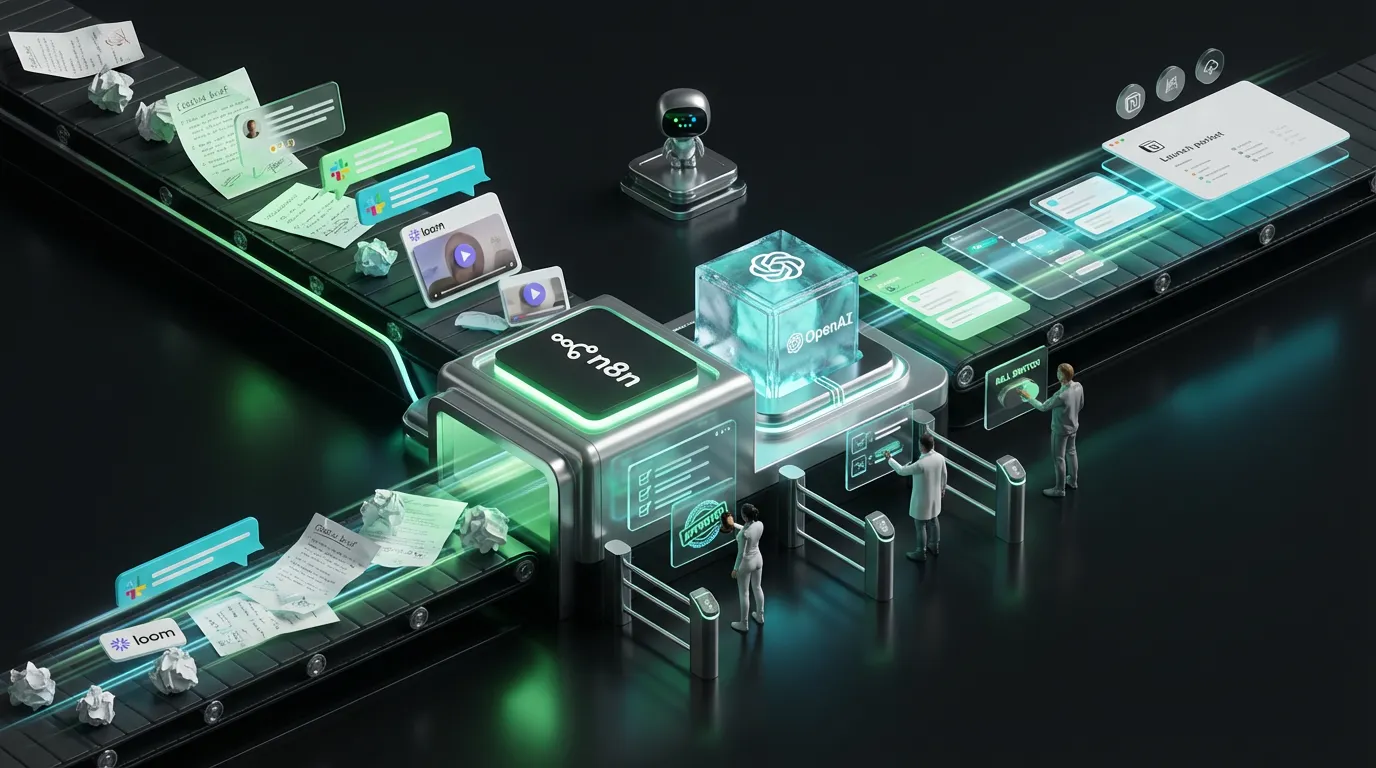

n8n is a source-available ("fair-code") workflow automation platform. You build pipelines by wiring nodes together visually: triggers, HTTP calls, database writes, file moves, AI model calls, webhooks. It is self-hostable, has hundreds of native integrations, and treats code execution and publishing as first-class steps rather than afterthoughts bolted onto an AI chat. If Zapier and a scripting runtime had a child that you could run inside your own VPC, that is n8n.

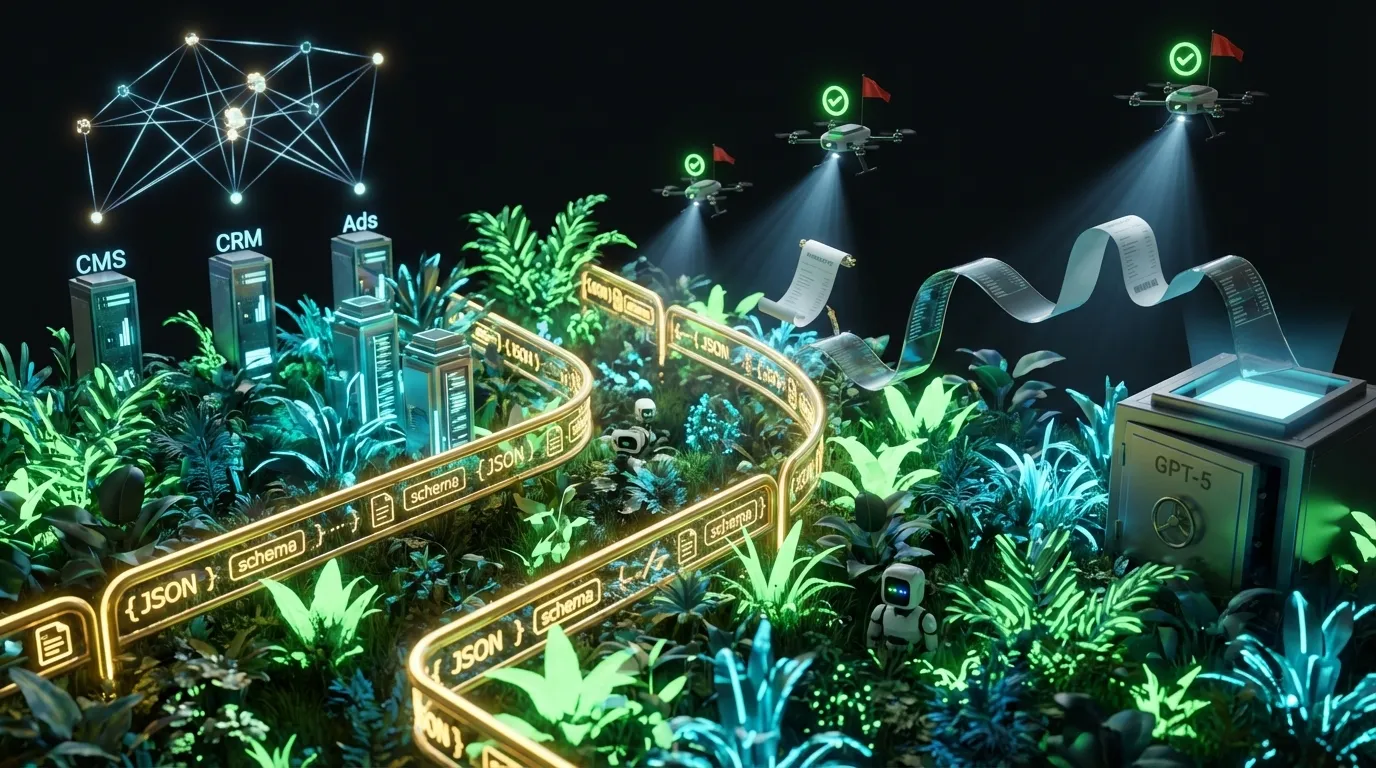

OpenClaw is a personal AI assistant that lives on your machine, talks to your files and apps, and runs real work end to end inside a chat window. It leans into the chat-first operator experience: you describe an outcome, it does the thing. For teams who want AI to feel like a coworker rather than a wiring job, this is a compelling direction.

Claude Cowork is Anthropic’s desktop agent for non-developer teams. It plugs into your local files, calendar, Slack, docs, and browser, and runs multi-step work on your behalf. Like OpenClaw, the interaction model is chat-first and outcome-oriented. Unlike OpenClaw, the AI is Claude and the integrations are curated by Anthropic.

At a glance

| | n8n | OpenClaw | Claude Cowork |
|---|---|---|---|
| Primary shape | Visual workflow and code platform | Personal AI assistant, chat-first | Desktop agent for operator teams |
| Who drives it | Engineers and ops leads, with handoff to non-technical users via triggers and forms | Any user who can describe an outcome in chat | Any user who can describe an outcome in chat |
| Licensing | Source-available ("fair-code") under the Sustainable Use License; self-hostable | Open approach, desktop product | Proprietary; Claude runs on Anthropic's servers |
| Where it runs | Your VPC, your laptop, or n8n Cloud | Your machine | Your machine, talking to Claude in the cloud |
| Native AI model | Pick any: Claude, OpenAI, Gemini, Grok, Llama, local | Flexible: local or hosted models | Claude (Anthropic) |
| Non-AI work | First-class. Publishing, API calls, file moves, and code all run without touching a model. | Possible via tool use, but the chat still mediates. | Possible via tool use, but the chat still mediates. |
| Best at | Deterministic pipelines with guardrails and cheap non-AI steps | Ad hoc knowledge work and personal operator flows | Team operator flows with Anthropic-grade safety |

What they connect into

Base layer tools are only as useful as the systems they talk to. Here is a rough map of what each reaches by default and how you extend them.

| System | n8n | OpenClaw | Claude Cowork |
|---|---|---|---|
| Marketing stack (HubSpot, Mailchimp, Klaviyo, SendGrid) | Native nodes | Via web or API tools | Via web or API tools |
| CMS (WordPress, Ghost, Webflow, Shopify) | Native nodes | Via web or API tools | Via web or API tools |
| Files and docs (Drive, Dropbox, Box, local) | Native nodes | Native local file access | Native local file access |
| Collab tools (Slack, Notion, Linear, Asana) | Native nodes | Via integrations and web | Native integrations |
| Databases (Postgres, MongoDB, Supabase) | Native nodes | Via API tools | Via API tools |
| Custom APIs (yours or a vendor’s) | First-class HTTP Request node | Via tools you define | Via tools you define |
| AI models | Any provider via node or HTTP | Pluggable | Claude family |

The short version: if most of your content operation is passing data between other systems, n8n has the widest set of doors wired in on day one. OpenClaw and Cowork lean on browsing, local file access, and tool use to reach the same places, which is fantastic for ad hoc work and less fantastic when you want the same step to run identically a thousand times in a row.

Security and where your data actually goes

For regulated teams, the first question is not “can this tool do the work.” It is “where does the data live while the tool is doing the work.” This is where the three tools diverge the most.

| | n8n | OpenClaw | Claude Cowork |
|---|---|---|---|
| Self-host inside your VPC | Yes | Runs locally | Runs locally; Claude calls go to Anthropic |
| Data leaves your perimeter? | Only when you tell a node to call an external API. You control every hop. | Depends on model choice and tools invoked. | Yes, for any step that calls Claude. |
| Secrets management | Credentials store, environment vars, Vault integration | OS keychain and local storage | OS keychain and local storage |
| Use with PII, PHI, regulated data | Practical with the right deployment and BAA-covered model endpoints | Possible with local models and scoped tools | Check Anthropic’s data policy for your use case |

None of this is a knock on Cowork or OpenClaw. Both are designed for real operator work and both are safe enough for most marketing content. But if you are in financial services, healthcare, or any setting where a legal review signs off on where bytes travel, n8n gives you the tightest story because every external call is explicit and reviewable.

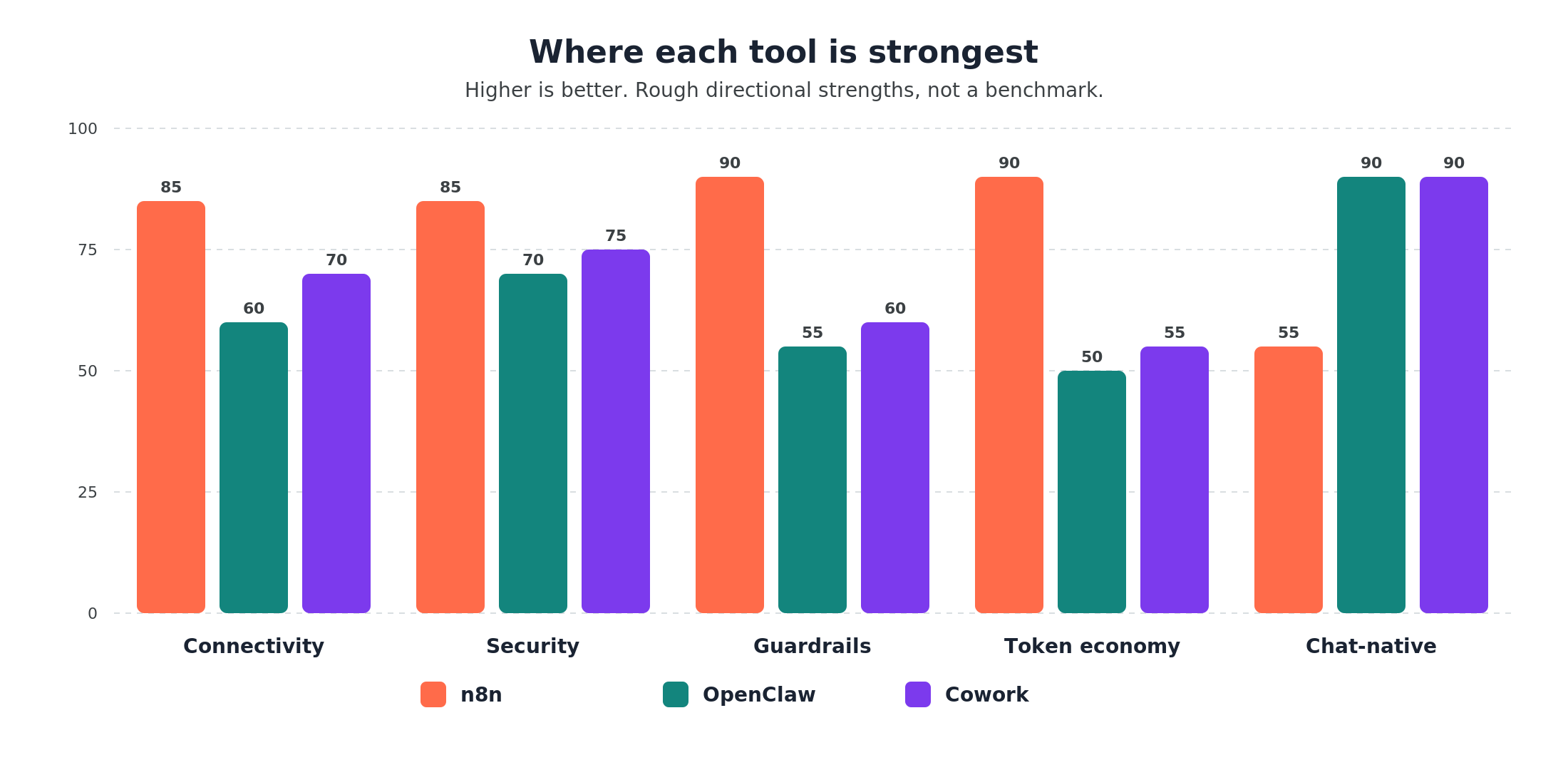

Guardrails, retries, and audit (where n8n wins big)

Guardrails are the production hygiene layer. Not because you do not trust the AI. Because you want the same operational discipline around AI work that you already run for every other piece of infrastructure. This is where n8n has the clearest lead.

n8n gives you structured node inputs and outputs, per-node retries with a configurable wait between tries, conditional branches, error-handling workflows, execution logs, and an execution history you can replay. If a step fails at 2am, an on-call engineer can open the execution, see exactly what the input was, what the model returned, and what failed, then retry from the point of failure. That is table stakes for production content ops, and it is built in.
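In n8n you get this from node settings and the executions view, not from code. To make the mechanics concrete, here is a minimal Python sketch of the same pattern, not n8n's implementation: each step retries with a fixed wait, every step's input and output is logged, and a failed run resumes from the failed step. All names (`StepRecord`, `Execution`, `run_step`, `run_pipeline`, `resume`) are hypothetical.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, List, Optional, Tuple

# A pipeline is an ordered list of (step name, step function) pairs.
Step = Tuple[str, Callable[[Any], Any]]


@dataclass
class StepRecord:
    """What an on-call person would want to see for one step."""
    name: str
    input: Any
    output: Any = None
    error: Optional[str] = None
    attempts: int = 0


@dataclass
class Execution:
    records: List[StepRecord] = field(default_factory=list)

    def failed_at(self) -> Optional[int]:
        """Index of the first failed step, or None if every step succeeded."""
        for i, rec in enumerate(self.records):
            if rec.error is not None:
                return i
        return None


def run_step(name: str, fn: Callable[[Any], Any], data: Any,
             max_tries: int = 3, wait_s: float = 0.0) -> StepRecord:
    """Run one step, retrying with a fixed wait between tries."""
    rec = StepRecord(name=name, input=data)
    for attempt in range(1, max_tries + 1):
        rec.attempts = attempt
        try:
            rec.output = fn(data)
            rec.error = None
            return rec
        except Exception as exc:  # record the failure, then try again if allowed
            rec.error = repr(exc)
            if attempt < max_tries:
                time.sleep(wait_s)
    return rec


def run_pipeline(steps: List[Step], data: Any, execution: Optional[Execution] = None,
                 start: int = 0, **retry_opts: Any) -> Execution:
    """Run steps[start:], logging every input and output; stop at the first failure."""
    if execution is None:
        execution = Execution()
    del execution.records[start:]  # drop stale records from the re-run step onward
    for name, fn in steps[start:]:
        rec = run_step(name, fn, data, **retry_opts)
        execution.records.append(rec)
        if rec.error is not None:
            break
        data = rec.output  # each step's output feeds the next step
    return execution


def resume(steps: List[Step], execution: Execution, **retry_opts: Any) -> Execution:
    """Re-run from the failed step with its logged input; earlier steps are not repeated."""
    idx = execution.failed_at()
    if idx is None:
        return execution
    return run_pipeline(steps, execution.records[idx].input, execution, start=idx, **retry_opts)
```

The design choice that matters is that the log stores each step's input, so a resume replays only the failed step and everything after it, without re-running (and re-paying for) the model call that came before.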

OpenClaw and Claude Cowork both give you tool-level scoping and can run long chains of steps reliably, but the discipline is lighter. A chat agent that ran five minutes ago is harder to replay than an n8n execution from five minutes ago. For ad hoc work this is fine. For recurring, regulated, or high-volume content ops, the audit trail matters and n8n’s execution model wins.

If you care about any of the following, lean on n8n for that part of the pipeline:

- Replayable executions with full input and output history

- Fine-grained retry, backoff, and error-branching logic

- Typed tool scopes and credential isolation per workflow

- Deterministic runs that behave identically across environments

- Approval gates tied to real identity and signed audit logs

Token economics: many things do not need an AI model

This is the part most teams underestimate. A surprising amount of a content operation is not AI work at all. It is moving files, publishing posts, updating calendars, sending notifications, writing to databases, renaming things, checking statuses. In a chat-native tool, every one of those steps goes through the model, even when the model has no interesting decision to make. That means tokens burn on bookkeeping.

n8n treats non-AI work as first-class. A node that publishes to WordPress, or moves a file from S3 to Google Drive, or writes a row to Supabase, runs with zero tokens. You only spend tokens when a step actually needs a model. For a pipeline where the AI does the drafting and the scaffolding does the shipping, the difference in monthly cost can be an order of magnitude.

OpenClaw and Claude Cowork can invoke tools that do non-AI work, but the surrounding chat still consumes tokens as it reasons about what to do next. That is fine for a handful of operator tasks a day. It is not the right economics for a system that ships a hundred assets a week, because the chat-level token overhead on repetitive runs is pure waste.
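A back-of-the-envelope model makes the difference concrete. This Python sketch is hypothetical throughout: the seven-step pipeline, the 6,000-token draft, the 2,500-token chat overhead per step, the 400 runs a month (roughly a hundred assets a week), and the $5-per-million-token price are placeholders, not measurements or real pricing. It also treats chat overhead as flat per step, which is a simplification. Swap in your own numbers.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Step:
    name: str
    model_tokens: int  # tokens for real AI work; 0 for deterministic steps


def tokens_per_run(steps: List[Step], chat_overhead_per_step: int = 0) -> int:
    """Total model tokens for one run of the pipeline.

    chat_overhead_per_step > 0 models a chat agent that routes every step,
    AI or not, through the model (deciding what to do next, reading tool output).
    0 models a workflow engine where deterministic steps never call a model.
    """
    return sum(s.model_tokens + chat_overhead_per_step for s in steps)


def monthly_cost_usd(tokens: int, runs_per_month: int, usd_per_million_tokens: float) -> float:
    return tokens * runs_per_month * usd_per_million_tokens / 1_000_000


# Hypothetical pipeline: one drafting step surrounded by bookkeeping.
pipeline = [
    Step("pull brief", 0),
    Step("draft post", 6_000),
    Step("rename assets", 0),
    Step("upload to Drive", 0),
    Step("publish to CMS", 0),
    Step("write row to database", 0),
    Step("notify Slack", 0),
]

workflow = tokens_per_run(pipeline)                            # only the draft step uses the model
chat = tokens_per_run(pipeline, chat_overhead_per_step=2_500)  # every step passes through the chat
print(workflow, chat)  # 6000 23500
print(monthly_cost_usd(workflow, 400, 5.0), monthly_cost_usd(chat, 400, 5.0))  # 12.0 47.0
```

With these placeholders the chat-mediated run costs about four times as much as the workflow run. The ratio grows with the number of deterministic steps surrounding each model call and with the real per-step overhead of your chat tool.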

Rule of thumb: if the same sequence of steps runs more than a dozen times a day and most of those steps are deterministic, it belongs in n8n. If the steps change every time and the person driving them needs to think out loud, it belongs in a chat tool.
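Written as code, the rule of thumb is a two-branch check. This is one reading of it, not a formal policy: "more than a dozen" is taken as more than 12 runs a day, "most" as more than half the steps, and the function name `suggested_layer` is hypothetical.

```python
def suggested_layer(runs_per_day: float, deterministic_share: float,
                    same_steps_each_run: bool) -> str:
    """Apply the rule of thumb to one recurring task.

    runs_per_day: how often the sequence runs.
    deterministic_share: fraction of steps that need no model (0.0 to 1.0).
    same_steps_each_run: False when the steps change from run to run.
    """
    if not same_steps_each_run:
        return "chat tool"  # steps change every time: let a person drive it in chat
    if runs_per_day > 12 and deterministic_share > 0.5:
        return "n8n"  # frequent, mostly deterministic, same sequence every time
    return "judgment call"  # the rule of thumb does not decide this case
```

The "judgment call" branch is deliberate: a stable sequence that runs twice a day sits between the two cases the rule names.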

Chat-native operation: where OpenClaw and Cowork shine

The strongest thing a chat-first base layer gets you is that once the pipes exist, the whole operation runs from a single conversation. “Pull yesterday’s top posts from our Slack, draft three short-form scripts from them in our brand voice, queue them in the review folder, and ping Maya when they are ready” is a single request. In n8n, that request is a workflow someone has to build. In OpenClaw or Claude Cowork, it stays a sentence.

That is a real and underrated advantage for operator teams. Creative and marketing leads do not want to maintain node graphs. They want to describe outcomes. The chat-native tools meet them where they already work.

One caveat worth stating clearly: n8n has chat triggers too. You can point a Slack command, a Telegram bot, or a web widget at an n8n workflow and let people drive the same deterministic pipeline through natural language. The difference is that with n8n, the chat is the front door to a rigid workflow. With OpenClaw or Cowork, the chat is in charge and the workflow is whatever the model figures out each run. Both are valid. Both live in real production.

When to pick which

| If you need… | Lean on | Why |
|---|---|---|
| Deterministic, high-volume content pipelines | n8n | Retries, logs, and typed steps survive production traffic |
| Strong data perimeter, regulated data, legal sign-off | n8n | Self-host and explicit external calls make the story easy to write |
| Non-AI steps that would otherwise burn tokens | n8n | Publishing, moving, and writing run without spending model tokens |
| An operator team that wants to describe outcomes in chat | OpenClaw or Cowork | The skill ceiling is “can you describe what you want” |
| Research, summarization, drafting, and knowledge work | Cowork | Claude-grade reasoning, live on the desktop next to your files |
| A personal AI assistant that runs on your machine | OpenClaw | Open, local-first, chat-native, flexible model choice |

The real answer is usually “all three”

In most of the content operations we help build or run, the right stack is not one of these tools. It is a division of labor.

n8n owns the production layer: the things that must run on a schedule, must not lose data, must retry correctly, and must log every action they take. Campaign pipelines, brief routing, asset publishing, measurement, cross-tool sync, approval gates. This is the spine of the operation.

Cowork or OpenClaw owns the operator layer: the places where a human is in the loop and the fastest way to get something done is to describe it. Research, drafting, ad hoc exploration, one-off work, first passes on new content types, driving a pipeline n8n has already wired together, checking on the status of a campaign, reviewing queued assets, having a real conversation with your stack. This is how the team actually uses the system day to day.

Hook them together and you get the best of both worlds. The operator describes an outcome in chat. The chat tool calls an n8n workflow that runs the deterministic part, with full logs and retries, and returns a result. The operator reviews, asks for a tweak, and the chat tool calls the workflow again. You get chat ergonomics on top of production rigor. That is the stack we keep arriving at.
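The hand-off between layers can be as thin as one HTTP call. By default, n8n's Webhook node exposes a workflow at `/webhook/<path>` on your n8n host and can return the workflow's result in the response. Below is a minimal Python sketch of the call a chat tool might make, assuming a hypothetical host `n8n.example.com`, a hypothetical workflow path `publish-draft`, and a Webhook node configured with header authentication that expects `Authorization: Bearer <token>`. How you register `call_workflow` as a tool depends on your chat layer.

```python
import json
import urllib.request
from typing import Any, Optional


def build_workflow_request(base_url: str, path: str, payload: dict,
                           token: Optional[str] = None) -> urllib.request.Request:
    """Build a POST to an n8n production webhook URL (default /webhook/ prefix)."""
    url = f"{base_url.rstrip('/')}/webhook/{path.lstrip('/')}"
    headers = {"Content-Type": "application/json"}
    if token:
        # Assumes the Webhook node's header auth expects a bearer token.
        headers["Authorization"] = f"Bearer {token}"
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def parse_workflow_response(body: bytes) -> Any:
    """Decode the JSON the workflow responds with; an empty body becomes None."""
    return json.loads(body.decode("utf-8")) if body else None


def call_workflow(req: urllib.request.Request, timeout: float = 60.0) -> Any:
    """Send the request and hand the workflow's result back to the chat layer."""
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return parse_workflow_response(resp.read())


# Hypothetical usage from a chat tool handler:
# req = build_workflow_request("https://n8n.example.com", "publish-draft",
#                              {"asset_id": "a1"}, token="...")
# result = call_workflow(req)
```

Because the workflow, not the model, does the publishing, every call lands in n8n's execution history with its retries and logs, while the operator only ever sees the chat.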

What COEY actually uses

COEY is its own case study. We run our content operation on a mix of all three. n8n handles the publishing spine, the scheduled research jobs, the cross-tool wiring, the automated assembly of research hubs, and the measurement plumbing that feeds back into the next brief. Claude Cowork and OpenClaw sit on top as the operator layer, where we drive the system, brief campaigns, pull context from files, and run one-off research or draft work without having to touch a node graph.

Every client engagement we run reinforces the same conclusion: neither half of this stack is optional. The production layer without the operator layer is a system nobody on the team wants to use. The operator layer without the production layer is a system that nobody on the team can trust to run overnight. The combination is the real answer.

If you are standing up a base layer today

Two practical suggestions if you are actively picking a base layer for a content operation this quarter. First, do not pick a single tool. Pick the shape of your stack and then pick a tool per layer. Second, build your n8n piece first. It forces the hard decisions about data, guardrails, and approvals early, which is exactly when you want to make them. The chat layer is easier to swap out later.

If you want a second set of eyes on your plan, read our playbook on how to build an AI content system and then start a conversation. We either build the first version of the stack with you or teach your team how to run it. Some engagements do both.