Grok Voice Agent API: Deployable Real-Time Voice Automation

January 1, 2026

xAI just turned voice from “cool demo” into “callable infrastructure” with the Grok Voice Agent API. It’s a real-time, speech-to-speech API that can hold fluid conversations and trigger tool calls mid-call, without forcing teams to stitch together separate STT, LLM, and TTS vendors. If your org has been waiting for voice to be more than a novelty (or a customer support horror story), this is one of the cleaner “yes, you can wire this into production” launches we’ve seen.

Translation: xAI isn’t selling a voice chatbot. It’s selling a programmable voice layer you can plug into scheduling, CRM, support systems, search, and internal workflows, fast enough that users don’t feel the machine thinking.

What xAI actually shipped

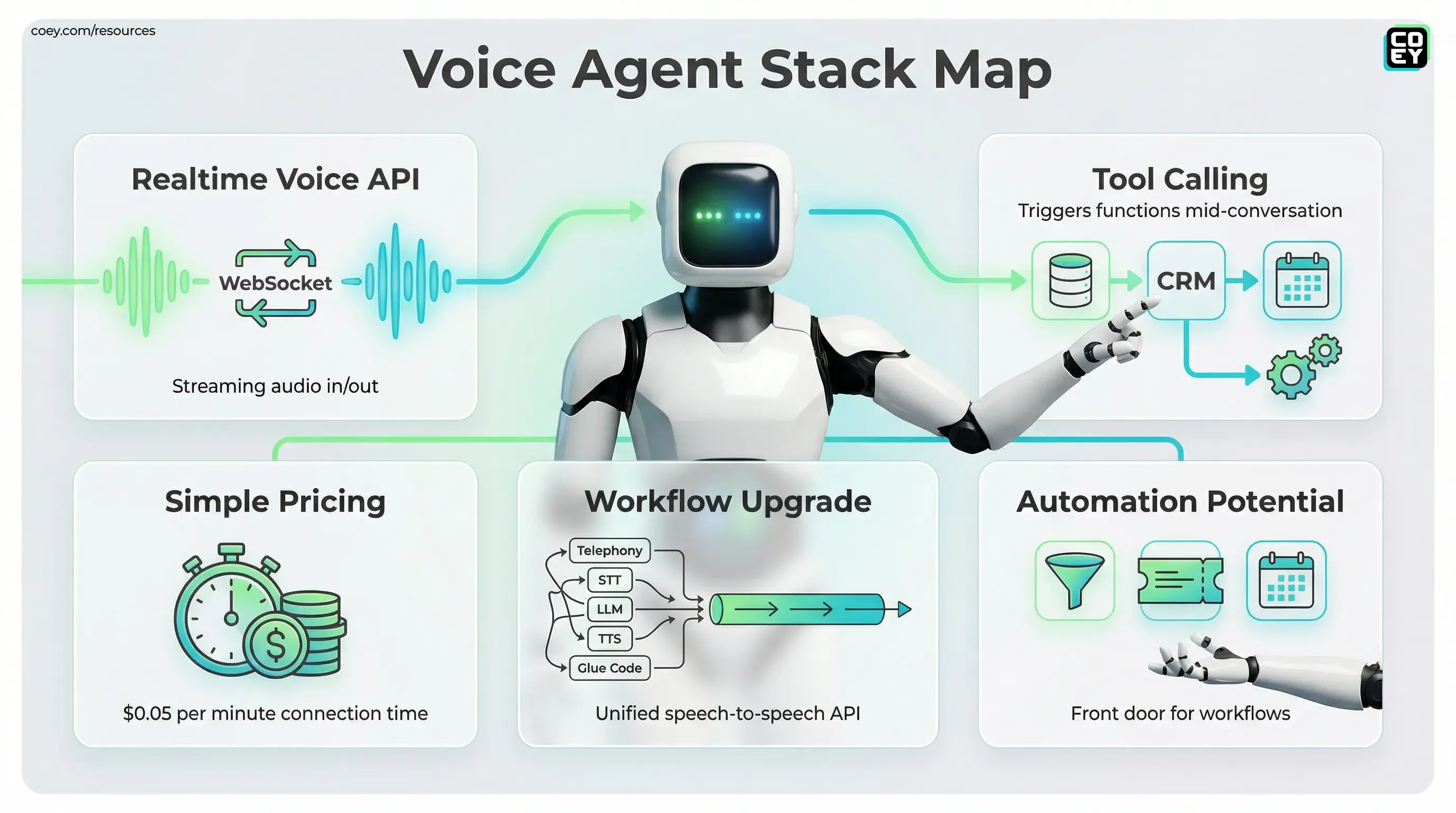

At the core: a low-latency, bidirectional voice experience that runs over a realtime connection (WebSocket), designed for turn-taking that feels like a human conversation instead of a customer calling a loading spinner. The product framing is “speech-to-speech,” meaning audio goes in, audio comes out, with no visible “transcribe then think then speak” chain exposed to the user.

The important operational detail is that this is not trapped in a UI. xAI documents it as a developer API with a realtime endpoint, and publishes voice build guidance in its xAI Voice Guides. If you’ve built anything with realtime AI before, you’ll recognize the pattern: streaming I/O, session state, and tool (function) execution as part of the conversation loop.
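To make that pattern concrete, here is a minimal sketch of the client side of such a loop: one handler that routes incoming messages by type and updates per-call state. The event names (`audio.delta`, `tool.call`, `session.closed`) and the `Session` shape are placeholders invented for illustration, not xAI's actual schema, and a plain list stands in for the WebSocket stream.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Session:
    """Per-call state kept by the client (hypothetical shape)."""
    audio_chunks: list = field(default_factory=list)        # agent speech to play back
    pending_tool_calls: list = field(default_factory=list)  # functions the agent asked for
    closed: bool = False


def handle_event(session: Session, raw: str) -> None:
    """Route one JSON message from the realtime connection by its type."""
    event = json.loads(raw)
    kind = event.get("type")
    if kind == "audio.delta":        # assumed name: a chunk of agent audio
        session.audio_chunks.append(event["data"])
    elif kind == "tool.call":        # assumed name: agent wants a function run
        session.pending_tool_calls.append((event["name"], event["arguments"]))
    elif kind == "session.closed":   # assumed name: the call ended
        session.closed = True
    # Unknown event types are ignored so new server events do not crash the loop.


# Simulated message stream standing in for a live WebSocket connection.
messages = [
    json.dumps({"type": "audio.delta", "data": "chunk-1"}),
    json.dumps({"type": "tool.call", "name": "create_ticket",
                "arguments": {"priority": "high"}}),
    json.dumps({"type": "audio.delta", "data": "chunk-2"}),
    json.dumps({"type": "session.closed"}),
]

session = Session()
for raw in messages:
    handle_event(session, raw)
```

In a real integration the `for` loop would read from the socket, and the real event names would come from the provider's documentation.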

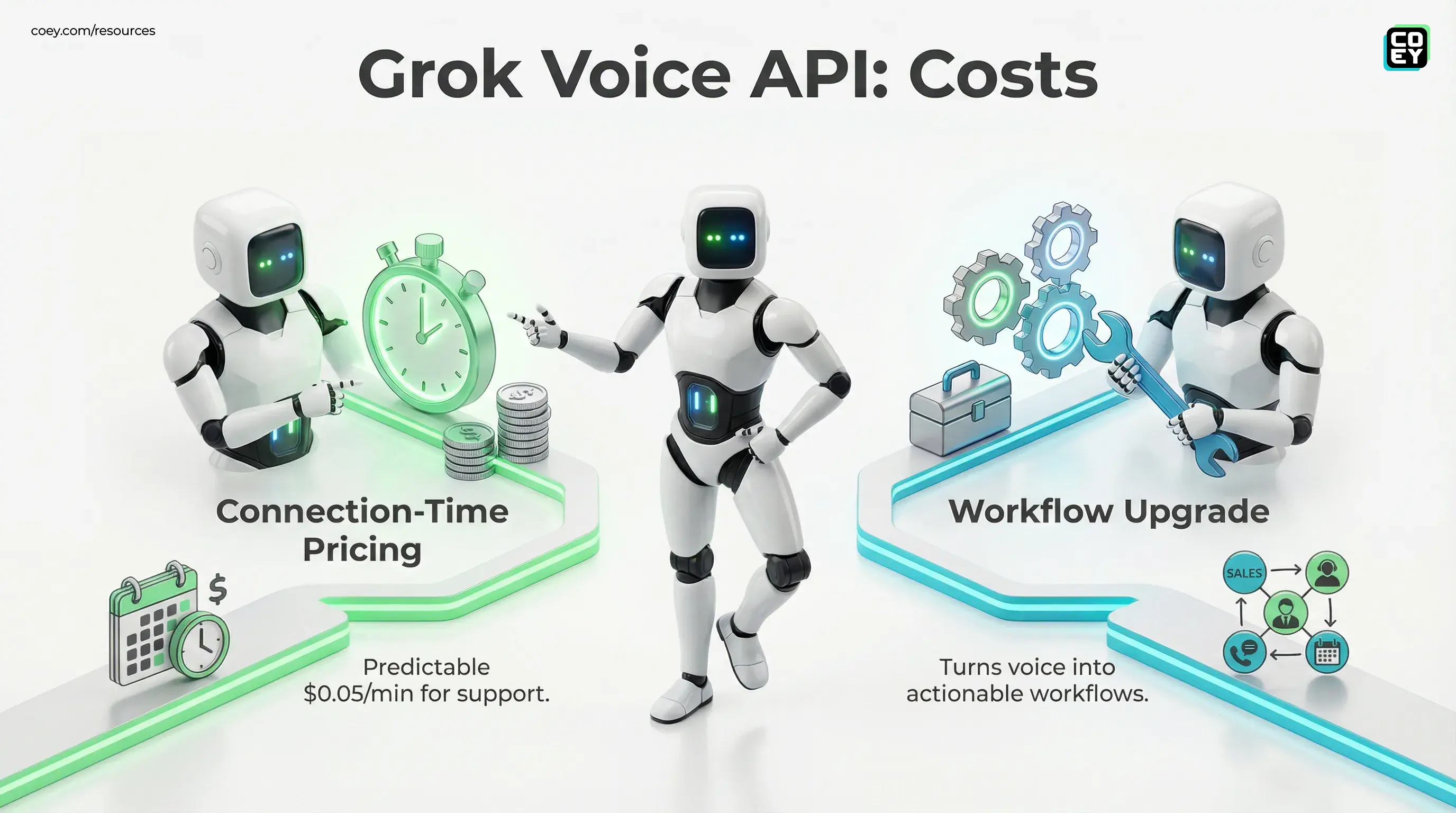

Pricing: simple (and budgetable)

xAI lists Grok Voice Agent at $0.05 per minute of connection time on the announcement page. That’s refreshingly legible compared to multi-meter stacks where you get billed separately for transcription minutes, model tokens, synthesis characters, and then your own engineering team’s therapy.

| Item | What it is | Why it matters |
|---|---|---|
| Realtime voice API | Streaming audio in and out over WebSocket | Latency becomes predictable enough for real calls |
| Tool calling | Agent triggers your functions mid-conversation | Voice stops being “answering” and becomes “doing” |
| Connection-time pricing | $ per minute connected | Easier forecasting for support and sales teams |
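Because the meter is a single per-minute rate, a forecast is one multiplication. The sketch below uses the $0.05 rate quoted above. The call volumes are made-up inputs, and it assumes minutes are billed proportionally with no rounding or minimums, which you should confirm against xAI's pricing terms.

```python
RATE_PER_MINUTE_USD = 0.05  # connection-time rate quoted in the announcement


def monthly_voice_cost(calls_per_day: int, avg_minutes_per_call: float,
                       days: int = 30, rate: float = RATE_PER_MINUTE_USD) -> float:
    """Estimate monthly spend from call volume and average connected time."""
    total_minutes = calls_per_day * avg_minutes_per_call * days
    return round(total_minutes * rate, 2)


# Illustrative inputs only: 200 calls a day, 4 connected minutes each.
estimate = monthly_voice_cost(calls_per_day=200, avg_minutes_per_call=4)
print(estimate)  # 24,000 minutes at $0.05 -> 1200.0
```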

Why “speech-to-speech” is a workflow upgrade

Most voice agents fail in the unglamorous place: orchestration. A traditional stack usually looks like:

- telephony provider (call control, recordings)

- speech-to-text (transcription)

- LLM (reasoning plus tool calls)

- text-to-speech (voice output)

- glue code (buffering, retries, state, edge-case hell)

Every boundary adds latency, brittleness, and “why did it misunderstand the user’s name again?” incidents. A unified speech-to-speech API collapses that surface area. It doesn’t eliminate engineering, but it reduces the number of moving parts that can break at 2 AM.

Voice automation doesn’t usually fail because models are dumb. It fails because stacks are fragile and nobody budgets time for the boring failure states.

Automation potential: where it gets interesting

Tool calling is the headline for anyone building automations. Talking is table stakes. Talking while writing to systems of record is where ROI shows up.

In practical terms, Grok Voice Agent can be the front door for workflows like:

- Sales: qualify inbound leads, capture requirements, log the call, create an opportunity, book a meeting

- Support: identify issue type, fetch account or order data, open a ticket, escalate with context

- Ops: scheduling, appointment reminders, rescheduling flows, confirmations

- Marketing: interactive promos, contest entries, opt-ins, outbound reactivation calls (carefully)

And because it’s API-first, the “tool” can be anything you can expose safely: your CRM, your database, your help desk, your internal pricing lookup, your knowledge base, your shipping status endpoint. Voice becomes a real interface into business logic, not a talking FAQ page.
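On your side of the wire, "exposing a tool safely" usually comes down to a registry of allowed functions and a dispatcher that refuses everything else. The following is a rough sketch under assumptions: the tool name `lookup_order_status`, the in-memory order table, and the idea that the agent sends a tool name plus a JSON string of arguments are all illustrative, not taken from xAI's docs.

```python
import json
from typing import Callable

TOOLS: dict[str, Callable[..., dict]] = {}


def tool(fn: Callable[..., dict]) -> Callable[..., dict]:
    """Register a function so the voice agent is allowed to call it."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def lookup_order_status(order_id: str) -> dict:
    # Stand-in for a real shipping status endpoint (hypothetical data).
    orders = {"A100": "shipped"}
    return {"order_id": order_id, "status": orders.get(order_id, "unknown")}


def run_tool_call(name: str, arguments_json: str) -> str:
    """Execute a requested tool and return a JSON string for the agent."""
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        args = json.loads(arguments_json)
        result = TOOLS[name](**args)
    except (json.JSONDecodeError, TypeError) as exc:
        # Malformed JSON or wrong argument names: report it, do not crash the call.
        return json.dumps({"error": str(exc)})
    return json.dumps(result)


reply = run_tool_call("lookup_order_status", '{"order_id": "A100"}')
```

Returning errors as data rather than raising keeps the conversation alive, so the agent can apologize or hand off instead of dropping the call.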

API availability: what’s callable vs. what’s hype

This release clears the first bar we care about: it’s a documented API, not a closed demo experience. The Grok Voice Agent documentation describes a realtime endpoint and agent session behavior, plus how to wire in tools.

Compatibility lowers migration pain

One underrated detail: ecosystem compatibility. LiveKit has published integration details for using xAI’s realtime model in LiveKit Agents (see the LiveKit xAI integration docs). LiveKit also notes that xAI’s realtime API is compatible with the OpenAI Realtime API specification, which can reduce how much code you need to change when trialing Grok in an existing realtime pipeline.

For non-technical leaders, that translates to: faster proof of concept and lower switching costs. Your team can spend time on call flows and governance instead of re-implementing plumbing.
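For the engineers in the room, here is roughly what that compatibility would buy you. The sketch builds a session configuration message in the OpenAI Realtime style: a `session.update` event carrying instructions and function tool definitions. That event shape comes from OpenAI's published spec. Whether xAI accepts every field unchanged is an assumption to verify against xAI's docs, and the `book_meeting` tool is invented for illustration.

```python
import json


def build_session_update(instructions: str, tools: list[dict]) -> str:
    """Serialize an OpenAI-Realtime-style session.update event."""
    event = {
        "type": "session.update",
        "session": {
            "instructions": instructions,
            "tools": tools,
            "tool_choice": "auto",
        },
    }
    return json.dumps(event)


# Hypothetical tool definition using JSON Schema for its parameters.
book_meeting = {
    "type": "function",
    "name": "book_meeting",
    "description": "Book a sales meeting for the caller.",
    "parameters": {
        "type": "object",
        "properties": {
            "email": {"type": "string"},
            "start_time": {"type": "string", "description": "ISO 8601 timestamp"},
        },
        "required": ["email", "start_time"],
    },
}

payload = build_session_update(
    "You qualify inbound leads and offer to schedule a meeting.",
    [book_meeting],
)
```

If the compatibility holds, the same payload could be sent to either provider, with only the endpoint and credentials swapped.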

Real-world readiness: where this is usable now

Voice agents get dangerous when teams try to automate the messiest, most emotional calls first. The sweet spot is structured interactions with clear outcomes and clean handoffs.

Best “right now” deployments

- Inbound triage: identify intent, collect key fields, create ticket, route to the right queue

- Lead qualification: capture budget, timeline, needs, write to CRM, offer scheduling

- Status lookups: order, shipping, account status with authenticated guardrails

- Survey calls: NPS and CSAT collection when email response is dead
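The inbound triage case is a good example of why structured calls work: the agent's job reduces to filling a short form and knowing what is still missing. This is a minimal sketch of that bookkeeping. The field names and the intent-to-queue mapping are hypothetical, and a real deployment would write the ticket to a help desk rather than return a dict.

```python
from dataclasses import dataclass, field

REQUIRED_FIELDS = ("intent", "customer_name", "callback_number")     # hypothetical
QUEUE_BY_INTENT = {"billing": "billing-queue", "outage": "tech-queue"}  # hypothetical


@dataclass
class TriageForm:
    values: dict = field(default_factory=dict)

    def record(self, name: str, value: str) -> None:
        """Store a field the caller provided, ignoring blanks and unknown fields."""
        cleaned = value.strip()
        if name in REQUIRED_FIELDS and cleaned:
            self.values[name] = cleaned

    def missing(self) -> list:
        """Fields the agent still needs to ask for, in a stable order."""
        return [name for name in REQUIRED_FIELDS if name not in self.values]

    def to_ticket(self) -> dict:
        """Build the ticket only once every required field is present."""
        if self.missing():
            raise ValueError(f"cannot create ticket, missing: {self.missing()}")
        queue = QUEUE_BY_INTENT.get(self.values["intent"], "general-queue")
        return {"queue": queue, **self.values}


form = TriageForm()
form.record("intent", "outage")
form.record("customer_name", " Dana ")
still_needed = form.missing()   # the agent should ask for these next
form.record("callback_number", "555-0100")
ticket = form.to_ticket()
```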

Where teams still get burned

- High-stakes disputes: billing, cancellations, “you ruined my life” calls

- Regulated categories: medical, legal, financial advice without strict policy enforcement

- Identity and auth gaps: doing account actions without robust verification steps

The model is not the system. A great voice brain without permissions, policies, and audit logs is just a faster way to make an expensive mistake.
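In code, "permissions, policies, and audit logs" can start as a thin wrapper that every tool call must pass through. The sketch below is one possible shape, not a prescribed design: the tool names, the two permission tiers, and the in-memory `audit_log` are all assumptions for illustration, and production systems would persist the log and verify callers through a real identity step.

```python
import time
from typing import Callable

NO_VERIFICATION_NEEDED = {"get_store_hours"}     # hypothetical public, read-only tool
REQUIRES_VERIFICATION = {"cancel_subscription"}  # hypothetical account action
audit_log: list[dict] = []


def authorize(tool_name: str, caller_verified: bool) -> bool:
    """Deny by default; account actions need a verified caller."""
    if tool_name in NO_VERIFICATION_NEEDED:
        return True
    if tool_name in REQUIRES_VERIFICATION:
        return caller_verified
    return False


def guarded_call(tool_name: str, args: dict, caller_verified: bool,
                 execute: Callable[[str, dict], dict]) -> dict:
    """Log every attempt, then run the tool only if policy allows it."""
    allowed = authorize(tool_name, caller_verified)
    audit_log.append({"ts": time.time(), "tool": tool_name,
                      "args": args, "allowed": allowed})
    if not allowed:
        # Signal the agent to hand off to a human instead of acting.
        return {"error": "not_permitted", "handoff": True}
    return execute(tool_name, args)


def fake_execute(name: str, args: dict) -> dict:
    """Stand-in for the real tool dispatcher."""
    return {"ok": True, "tool": name}


public = guarded_call("get_store_hours", {}, False, fake_execute)
blocked = guarded_call("cancel_subscription", {"account": "42"}, False, fake_execute)
verified = guarded_call("cancel_subscription", {"account": "42"}, True, fake_execute)
```

Logging denied attempts as well as successful ones is deliberate: the refused calls are often the ones you most want to review later.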

What this means for creative teams

Voice isn’t only a support channel. It’s a high-signal creative input stream. A voice agent can generate artifacts you can route into the rest of your content engine:

- Structured insights: objections, FAQs, feature requests, sentiment, logged cleanly for marketing

- Auto follow-ups: call recap emails or “next step” messages drafted instantly (with human review where needed)

- Content fuel: repeated questions become video scripts, blog sections, landing page FAQs, sales enablement

If you’re building “human plus machine” creative operations, this is the pattern: machines capture and structure reality at scale; humans decide what story to tell with it.

Bottom line

Grok Voice Agent is a pragmatic step forward for anyone who wants voice automation that can actually plug into workflows. The API surface (realtime streaming plus tool calling) is the point. It’s not just about sounding natural. It’s about doing useful work while sounding natural, with pricing simple enough to forecast and a realtime interface designed for interactive voice.

Just don’t confuse “can speak” with “can run your call center unattended.” The winners will be the teams that treat this like infrastructure: wrap it in governance, permissions, handoffs, and logging, then let humans focus on judgment, nuance, and the parts of the conversation where trust is on the line.

If you want a broader framing on how agents plug into real automation stacks, see our internal post: Agentic AI Automation: The Smarter, API-Ready Edge for Marketers in 2025.
