TranslateGemma 27B Makes Translation Deployable

January 21, 2026

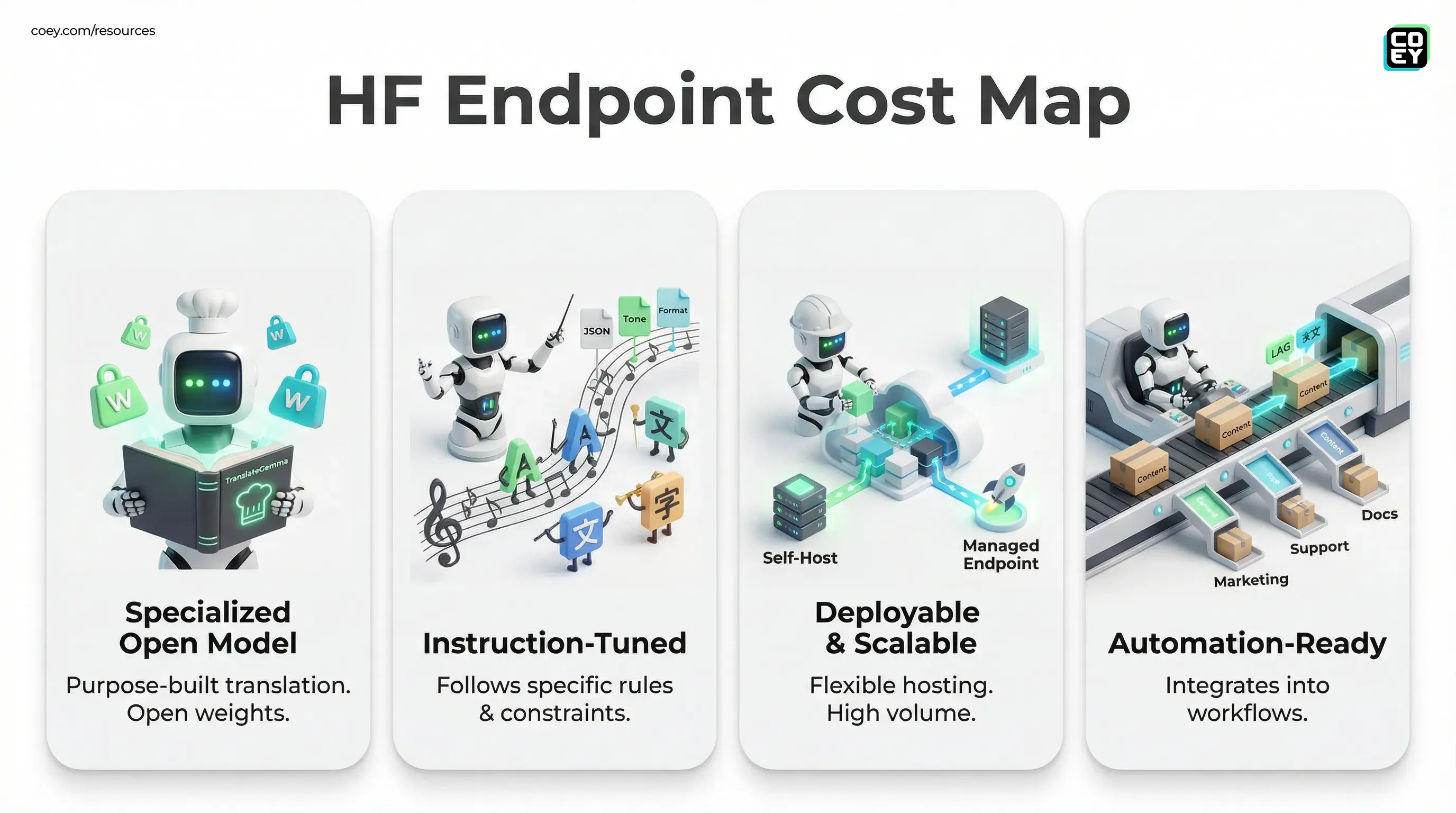

Google just dropped TranslateGemma 27B IT on Hugging Face, an open-weights, instruction-tuned model built specifically for multilingual translation. The headline is not “wow, AI translates” (we’ve all been here before). The headline is: translation is becoming a programmable, ownable layer in your content stack, and this release makes it easier to bring that layer in-house.

TranslateGemma sits on top of the Gemma family (Google’s Gemma 3 line) and aims straight at real production pain: high-volume localization, brand voice drift across markets, and translation workflows that still look like a spreadsheet relay race between agencies, PMs, and over-caffeinated marketers.

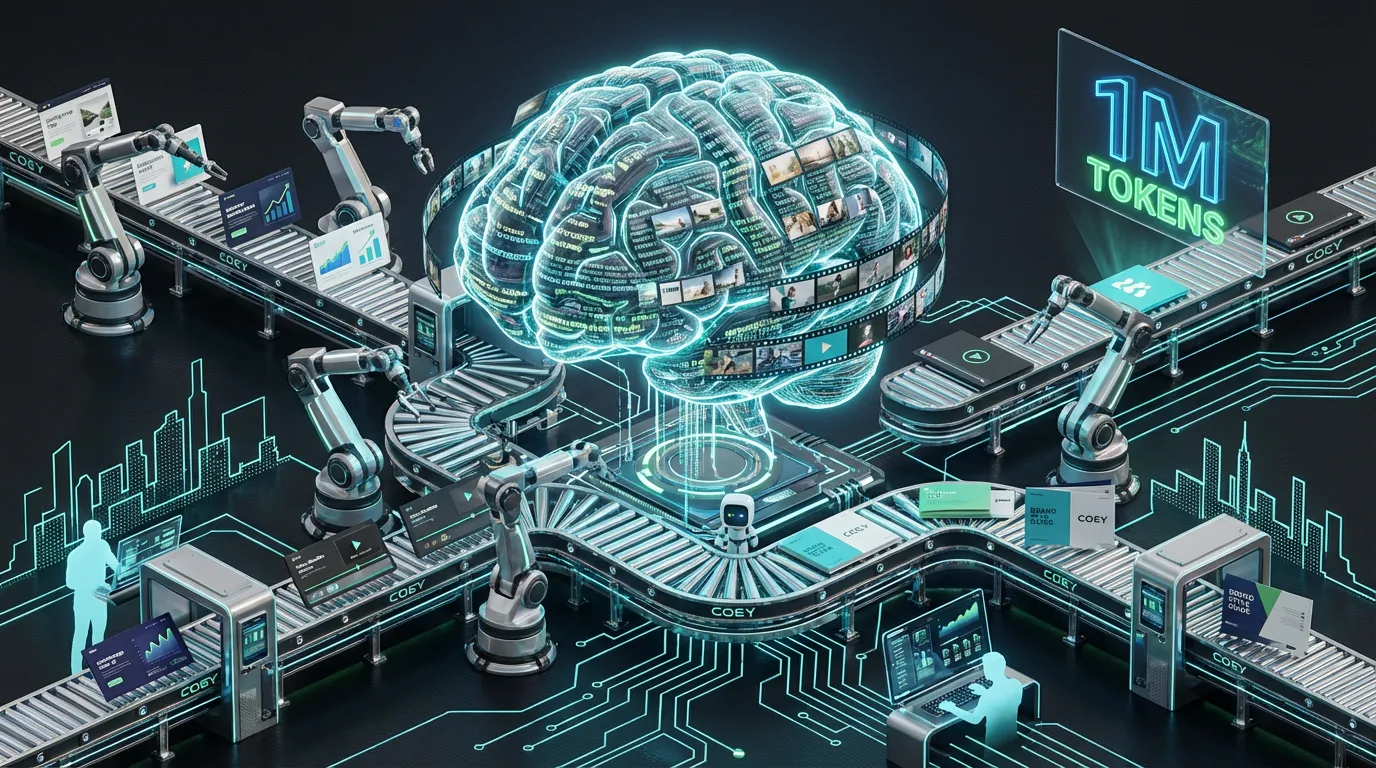

Translation is no longer a vendor relationship. It’s a workflow primitive. The teams who win don’t “translate better.” They translate faster, cheaper, more consistently, and they automate the boring parts without letting quality implode.

What Google actually released

TranslateGemma 27B IT is a 27B-parameter, decoder-only Transformer tuned for translation and prompt-following (the “IT” stands for instruction-tuned, and it matters). Unlike generic chat models that can translate “pretty well,” this is a purpose-built model designed to stay on-spec when you give it constraints like:

- “Translate to Spanish, keep product names unchanged, preserve markdown.”

- “Return JSON with `title`, `subtitle`, `cta` fields.”

- “Use informal tone for LATAM, formal tone for Spain.”
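One way to keep constraints like these reusable is to assemble them into the instruction programmatically instead of hand-editing prompts. A minimal sketch, assuming a plain-text instruction prompt: the helper name `build_translation_prompt` and its wording are hypothetical, and the exact input format TranslateGemma expects (its chat template) is documented on the model card and may differ from this.

```python
def build_translation_prompt(text: str, target_lang: str, constraints: list[str]) -> str:
    """Assemble a translation instruction from a list of constraint strings.

    Hypothetical helper: the wording is illustrative, not TranslateGemma's
    documented prompt format.
    """
    lines = [f"Translate the following text to {target_lang}."]
    # One constraint per line keeps each rule easy to audit and to diff.
    lines += [f"- {rule}" for rule in constraints]
    lines.append("Return only the translation.")
    lines.append("")  # blank line separates instructions from the source text
    lines.append(text)
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_translation_prompt(
        "Try **Acme Sync** today.",
        "Spanish",
        ["Keep product names unchanged.", "Preserve markdown formatting."],
    ))
```

Keeping constraints as data means the same list can be versioned per market and reused across every job.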

Open weights means you can run it where your data lives (on-prem, private cloud, locked-down VPC) instead of piping every paragraph through a third-party SaaS translation box and hoping procurement doesn’t ask too many questions.

TranslateGemma is part of a family

Google positions TranslateGemma as a family available in multiple sizes. As of this release, the TranslateGemma family includes 4B, 12B, and 27B parameter variants. The 27B model is the “big swing” for quality and nuance. If you’re translating short UI strings, you probably don’t need a 27B hammer. If you’re translating campaigns, landing pages, support docs, and long-form content where tone matters, bigger starts making sense.
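That size trade-off can be made explicit in a pipeline by routing content types to different checkpoints. A minimal sketch: the repository IDs assume Hugging Face’s `google/translategemma-<size>-it` naming (verify them on the Hub), and the content-type buckets and the 2,000-character threshold are hypothetical values to tune against your own quality checks.

```python
# Assumed Hub repository IDs; confirm the exact names on Hugging Face.
MODEL_BY_SIZE = {
    "small": "google/translategemma-4b-it",
    "medium": "google/translategemma-12b-it",
    "large": "google/translategemma-27b-it",
}

# Hypothetical content-type buckets.
SHORT_FORM = {"ui_string", "button", "notification"}
LONG_FORM = {"campaign", "landing_page", "support_doc", "blog_post"}


def pick_model(content_type: str, text: str, long_text_chars: int = 2000) -> str:
    """Send short UI copy to the small model and tone-sensitive or long content to 27B."""
    if content_type in SHORT_FORM:
        return MODEL_BY_SIZE["small"]
    if content_type in LONG_FORM or len(text) > long_text_chars:
        return MODEL_BY_SIZE["large"]
    # Anything else defaults to the middle of the family.
    return MODEL_BY_SIZE["medium"]
```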

Why instruction-tuning matters for teams

A lot of translation pain isn’t “did it translate correctly?” It’s:

- Did it keep formatting intact? (HTML, markdown, variables, links, tokens)

- Did it preserve legal disclaimers exactly?

- Did it keep brand voice consistent across markets?

- Did it avoid rewriting the meaning while “sounding nicer”?

Instruction-tuning improves adherence to these constraints. That’s the difference between a model that’s fun in a demo and a model you can put into a pipeline without your localization manager developing a twitch.

Automation-ready translation is less about fluency and more about obedience. Great translation engines are good writers. Production translation engines are good listeners.
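“Obedience” is also something you can check mechanically. A minimal sketch, assuming placeholders are written as `{name}`, links use markdown `[text](url)` syntax, and markup uses HTML tags: it counts the protected tokens in the source and the translation and reports anything missing or extra. The regexes are illustrative, not exhaustive.

```python
import re
from collections import Counter

# Illustrative patterns for tokens a translation must carry through unchanged.
PATTERNS = {
    "placeholder": re.compile(r"\{[A-Za-z_][A-Za-z0-9_]*\}"),     # {first_name}
    "url": re.compile(r"\]\(([^)\s]+)\)"),                      # markdown link target
    "html_tag": re.compile(r"</?[A-Za-z][A-Za-z0-9]*\b[^>]*>"),  # <b>, </a>, <a href=...>
}


def protected_tokens(text: str) -> Counter:
    """Count every protected token in text, keyed by (kind, token)."""
    counts = Counter()
    for kind, pattern in PATTERNS.items():
        for match in pattern.findall(text):
            counts[(kind, match)] += 1
    return counts


def formatting_diff(source: str, translation: str) -> dict:
    """Return protected tokens missing from, or added to, the translation."""
    src, tgt = protected_tokens(source), protected_tokens(translation)
    # Counter subtraction keeps only positive counts.
    return {"missing": dict(src - tgt), "extra": dict(tgt - src)}
```

An empty diff does not prove the translation is good, but a non-empty one is a cheap, reliable reason to block an automatic publish.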

API reality check: can you automate this?

Yes, with a few practical choices depending on how “enterprise” your needs are.

Option A: Self-host (most control)

Because it’s on Hugging Face with downloadable weights (after accepting Google’s Gemma license terms), you can deploy it internally using common inference stacks (for example, Hugging Face Transformers for simple serving, or a high-throughput inference server such as vLLM or Text Generation Inference). This is the path if you care about data residency, compliance, or cost optimization at volume.

Option B: Managed endpoint (fastest to production)

If you want an API without running infrastructure, Hugging Face Inference Endpoints can wrap models like this behind a managed HTTPS endpoint. You get the operational basics (scaling, auth, monitoring) without building your own serving layer from scratch. Pricing is compute-based (billed by the minute) and varies by provider and instance type, as outlined in Hugging Face’s Inference Endpoints pricing docs.
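Calling a managed endpoint from a script is a plain HTTPS request. A minimal sketch using only the standard library, assuming the endpoint runs a serving stack that exposes an OpenAI-compatible `/v1/chat/completions` route (Text Generation Inference and vLLM both offer one). The URL, token handling, and `model` value are assumptions to check against your endpoint’s own documentation. Building the request separately from sending it keeps it inspectable offline.

```python
import json
import urllib.request


def build_chat_request(endpoint_url: str, token: str, prompt: str,
                       model: str = "tgi", max_tokens: int = 512) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request for a managed endpoint.

    Assumes an OpenAI-compatible /v1/chat/completions route. TGI accepts a
    placeholder model name such as "tgi"; vLLM expects the served model name.
    """
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": 0.0,  # low-variance output is easier to diff and QA
    }
    return urllib.request.Request(
        url=endpoint_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request, timeout: float = 60.0) -> str:
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]
```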

Translation in the real world is rarely “one prompt, one output.” It’s usually batch jobs, queues, retries, diffing, QA, and approvals, aka the stuff that makes automation worth doing. The good news: endpoints make this model scriptable, which is the real requirement for plugging into your stack.
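Retries are the first piece of that plumbing worth writing down. A minimal sketch of exponential backoff around any translation call: `translate` is a hypothetical callable (for example, a wrapper around an endpoint request), and the attempt count and delays are illustrative. Segments that still fail are returned separately so a queue or a human can pick them up.

```python
import time
from typing import Callable


def with_retries(translate: Callable[[str], str], text: str, attempts: int = 4,
                 base_delay: float = 1.0,
                 sleep: Callable[[float], None] = time.sleep) -> str:
    """Call translate(text), retrying failures with exponential backoff.

    Waits base_delay, 2*base_delay, 4*base_delay, ... between attempts and
    re-raises the last error once all attempts are used up. In practice,
    narrow the except clause to transient errors (timeouts, 429/503).
    """
    for attempt in range(attempts):
        try:
            return translate(text)
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * (2 ** attempt))
    raise ValueError("attempts must be at least 1")


def translate_batch(translate: Callable[[str], str], texts: list[str],
                    **retry_options) -> tuple[dict[int, str], list[int]]:
    """Translate a batch; return ({index: translation}, [indices that still failed])."""
    done, failed = {}, []
    for i, text in enumerate(texts):
        try:
            done[i] = with_retries(translate, text, **retry_options)
        except Exception:
            failed.append(i)  # hand these to a dead-letter queue or a reviewer
    return done, failed
```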

What “API availability” means in plain English

| Need | Can TranslateGemma support it? | What you’d use |
|---|---|---|
| Batch translate thousands of assets | Yes | Endpoint + queue/worker |
| Auto-translate on publish in CMS | Yes | Webhook → API call |
| Enforce JSON/format rules | Yes (IT helps) | Prompt + validation |
| Instant, low-latency chat translation | Maybe | Depends on infra + model size |
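The “Prompt + validation” row is worth making concrete: ask for JSON, then refuse anything that does not parse or is missing fields. A minimal sketch, assuming the `title`, `subtitle`, `cta` fields from the example constraint earlier; the schema and the function name are hypothetical.

```python
import json

# Example schema matching the title/subtitle/cta fields; adapt as needed.
REQUIRED_FIELDS = ("title", "subtitle", "cta")


def parse_translation_json(raw: str, required: tuple[str, ...] = REQUIRED_FIELDS) -> dict:
    """Parse model output as a JSON object whose required fields are non-empty strings.

    Raises ValueError so the caller can retry the request or route it to review.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    problems = [name for name in required
                if not isinstance(data.get(name), str) or not data[name].strip()]
    if problems:
        raise ValueError(f"missing or empty fields: {', '.join(problems)}")
    return data
```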

Real-world readiness: what’s solid, what’s shaky

TranslateGemma 27B IT is compelling because it’s both specialized (translation-focused) and deployable (open weights). But production translation isn’t just model choice. It’s systems design.

What looks production-friendly

- Open deployment: run it in controlled environments, not just a closed SaaS UI.

- Workflow fit: instruction-tuning supports structured output and consistent formatting.

- Localization leverage: build reusable “translation specs” (tone, glossary rules, do-not-translate lists) and apply them automatically.
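A do-not-translate list is easiest to enforce when protected terms never reach the model as translatable text. A minimal sketch of placeholder masking, a common localization technique rather than anything TranslateGemma-specific: terms are swapped for opaque tokens before translation and restored afterwards. The `__DNT_n__` token style and both function names are assumptions; whichever token you pick must survive translation intact, which is worth testing per language.

```python
def mask_terms(text: str, do_not_translate: list[str]) -> tuple[str, dict[str, str]]:
    """Replace protected terms with placeholder tokens; return masked text and mapping."""
    mapping = {}
    # Longest terms first so "Acme Sync Pro" is masked before "Acme Sync".
    for i, term in enumerate(sorted(do_not_translate, key=len, reverse=True)):
        token = f"__DNT_{i}__"  # trailing underscores keep __DNT_1__ distinct from __DNT_10__
        if term in text:
            text = text.replace(term, token)
            mapping[token] = term
    return text, mapping


def unmask_terms(text: str, mapping: dict[str, str]) -> str:
    """Restore protected terms; raise if the model dropped or altered a token."""
    for token, term in mapping.items():
        if token not in text:
            raise ValueError(f"placeholder {token} for {term!r} was lost in translation")
        text = text.replace(token, term)
    return text
```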

What still needs grown-up guardrails

- QA is not optional: even great models can mistranslate names, numbers, or legal phrasing. Build review loops.

- Latency vs. cost: 27B is not tiny. If you need real-time responses everywhere, you’ll either pay for serious compute or choose a smaller model.

- Consistency needs tooling: you still need glossaries, terminology rules, and regression tests. Models don’t magically respect your product taxonomy unless you force them to.
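Terminology rules can be turned into regression tests, too. A minimal sketch, assuming a per-locale glossary that maps a source term to its approved target term: it flags any segment where the source uses a glossary term but the translation lacks the approved rendering. Matching is case-insensitive substring matching, which is deliberately crude (it ignores inflection and split verbs), and the glossary entries are invented for illustration.

```python
def glossary_violations(source: str, translation: str,
                        glossary: dict[str, str]) -> list[str]:
    """Return glossary source terms whose approved target term is absent from the translation."""
    src, tgt = source.casefold(), translation.casefold()
    return [term for term, required in glossary.items()
            if term.casefold() in src and required.casefold() not in tgt]


# Hypothetical English-to-German glossary entries, for illustration only.
GLOSSARY_DE = {"workspace": "Arbeitsbereich", "dashboard": "Übersicht"}
```

Running a check like this over a fixed set of reference segments on every model or prompt change gives you a simple terminology regression suite.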

If your workflow is “paste text, hope, ship,” TranslateGemma will still feel like magic. If your workflow is “publish automatically,” you’ll want validation and routing before you hand it the keys.
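Validation and routing can start as simply as collecting check results per segment and splitting the batch. A minimal sketch: the `Segment` fields, the check interface, and the two-way publish/review split are hypothetical. The sample `numbers_match` check compares digit runs, which catches changed figures like “20%” becoming “25%” but not every locale-formatting issue.

```python
import re
from dataclasses import dataclass, field
from typing import Callable

# A check takes (source, translation) and returns a list of problem descriptions.
Check = Callable[[str, str], list[str]]


@dataclass
class Segment:
    segment_id: str
    source: str
    translation: str
    problems: list[str] = field(default_factory=list)


def numbers_match(source: str, translation: str) -> list[str]:
    """Flag segments whose digit runs differ between source and translation."""
    src = sorted(re.findall(r"\d+", source))
    tgt = sorted(re.findall(r"\d+", translation))
    return [] if src == tgt else [f"numbers differ: {src} vs {tgt}"]


def route(segments: list[Segment],
          checks: dict[str, Check]) -> tuple[list[Segment], list[Segment]]:
    """Split segments into (auto_publish, needs_review); records problems on each segment."""
    publish, review = [], []
    for seg in segments:
        for name, check in checks.items():
            seg.problems += [f"{name}: {p}" for p in check(seg.source, seg.translation)]
        (review if seg.problems else publish).append(seg)
    return publish, review
```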

Where this hits hardest: marketing ops

Marketing teams don’t just translate. They localize at speed while juggling campaigns, seasonal pushes, brand voice, and compliance constraints across regions. That’s exactly where an instruction-following translation model becomes a force multiplier.

If you are building automation that needs receipts and enforceable specs, the same mindset applies beyond translation. See Spec Tests: The Glue Your AI Stack Needs.

High-impact automation use cases

- Campaign localization at scale: translate launch messaging into dozens of languages while preserving CTA structure, character constraints, and required terms.

- Always-on content syndication: push blog posts, newsletters, and product updates into regional channels automatically, then route only flagged segments to humans.

- Support + help center acceleration: translate and refresh docs in batches when product changes ship, instead of waiting for quarterly localization cycles.
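Character constraints from campaign briefs are another check that is trivial to automate and easy to forget. A minimal sketch: the per-field limits are invented for illustration, and `len()` counts Unicode code points, which is not the same as rendered width for every script.

```python
# Hypothetical per-field limits, e.g. taken from an ad platform or design spec.
FIELD_LIMITS = {"title": 40, "subtitle": 90, "cta": 20}


def over_limit(fields: dict[str, str], limits: dict[str, int] = FIELD_LIMITS) -> dict[str, int]:
    """Return {field: characters over the limit} for every translated field that is too long."""
    return {name: len(text) - limits[name]
            for name, text in fields.items()
            if name in limits and len(text) > limits[name]}
```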

This is also where “open” matters culturally: teams can build translation as a repeatable internal capability instead of treating it as an external service line item that slows launches.

Licensing and ownership (readiness includes legal)

TranslateGemma is distributed on Hugging Face under Google’s Gemma Terms of Use. Teams should treat model adoption like any other third-party dependency: confirm the license terms, confirm distribution rules, and confirm whether your intended deployment (including fine-tuning) is covered. Start here: Gemma Terms of Use.

The practical implication: open weights gives you leverage. You can keep sensitive drafts private, build domain-specific improvements, and avoid being locked into a single vendor’s translation API pricing forever.

What this signals in the bigger model market

Translation is quietly becoming one of the most operationally important AI categories, not because it’s flashy, but because it’s everywhere: campaigns, documentation, product UX, ads, support, community, and internal comms. When Google ships a specialized open translation model, it’s not just a model drop. It’s a statement:

- Specialization is back. General chatbots aren’t the endgame for every workflow.

- Open weights are a strategy. Teams want control, customization, and predictable automation, not a mystery box API.

- Translation is infrastructure. The winners will treat localization like CI/CD, not like a last-minute scramble.

TranslateGemma 27B IT won’t replace every translation workflow overnight. But it does make one thing very clear: if your global content engine still runs on manual handoffs and agency queues, your competitors are about to ship in your markets while you’re still “waiting on the French version.”