Google’s Gemini 3.1 Flash-Lite Is a Speed-and-Cost Upgrade Built for Real Automation

March 3, 2026

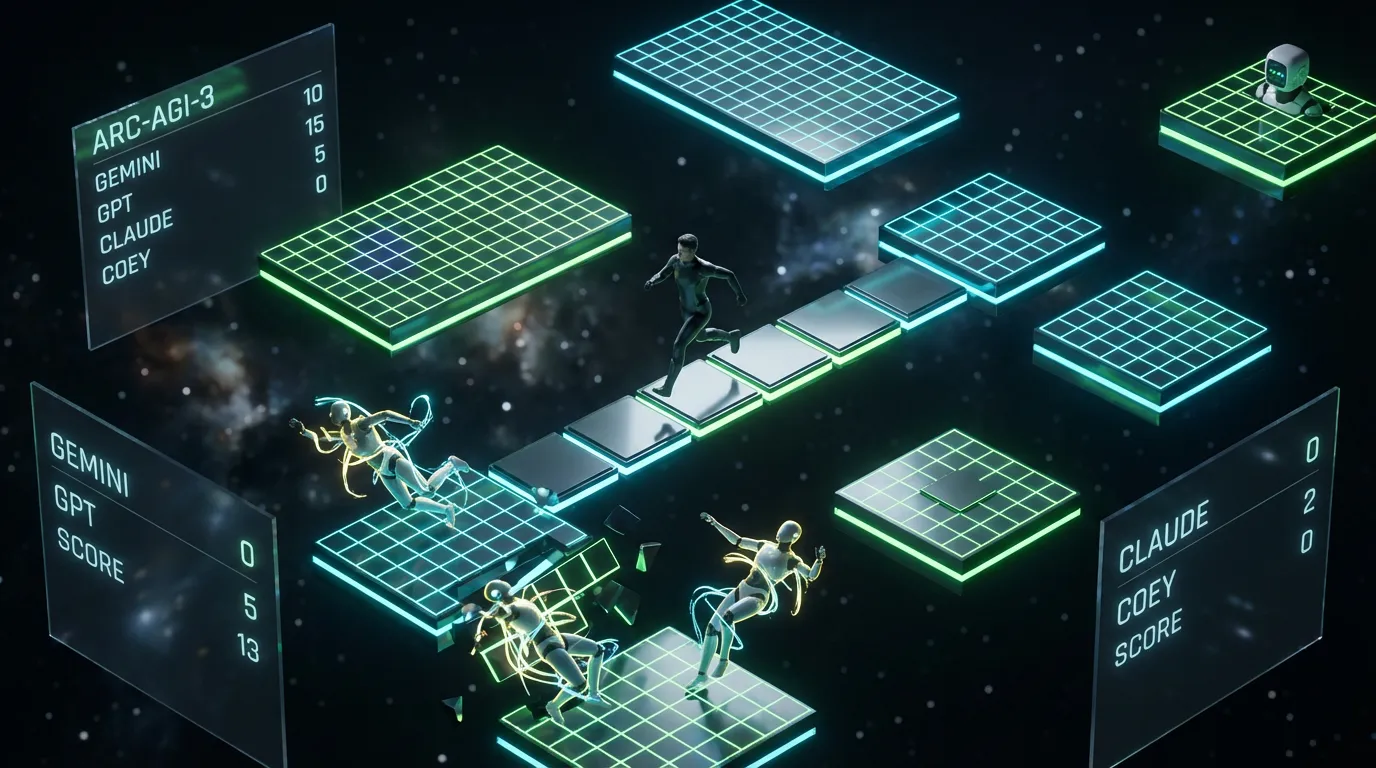

Google just added a new “small but serious” option to the Gemini lineup: Gemini 3.1 Flash-Lite. The headline is not “wow, the chatbot is witty.” It is that Google is optimizing for the thing most teams quietly struggle with once they move beyond demos: high-volume throughput at predictable cost, available where automation actually happens (Gemini API, AI Studio, and enterprise paths like Vertex AI).

Flash-Lite is the kind of release that will not trend for long on X (no new personality, no cinematic screenshots), but it absolutely will show up in budgets, routing layers, and “why didn’t we do this sooner?” performance reviews. Because when your workflows are generating thousands of drafts, tags, summaries, variants, or support replies, you do not need the fanciest brain. You need the fastest brain that is good enough, and you need it behind an API.

Translation for execs: Gemini 3.1 Flash-Lite is an “automation model,” not a “demo model.” It is built for systems that call an LLM constantly without turning your cloud bill into a jump-scare.

What Google actually shipped

Gemini 3.1 Flash-Lite is positioned as the most cost-effective model in the Gemini 3.1 family, tuned for speed and high request volume. Google is putting it in preview in Google AI Studio and the Gemini API, with enterprise availability via Vertex AI (which matters if you care about IAM, quotas, audit logs, and the rest of the adult-supervision tooling).

Two concrete, workflow-relevant claims stand out from Google’s announcement:

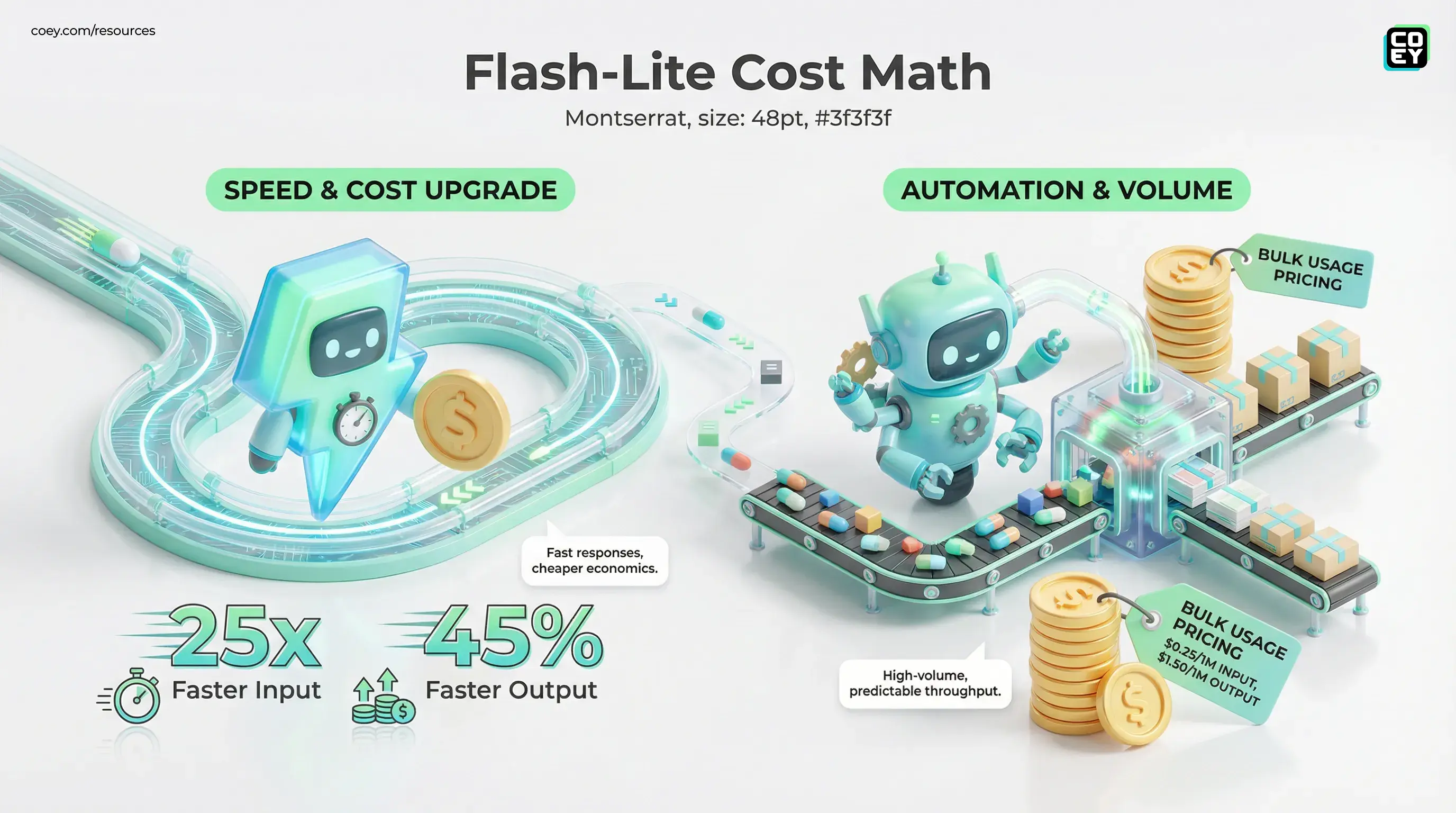

- Pricing aimed at bulk usage: Flash-Lite is priced at $0.25 per million input tokens and $1.50 per million output tokens.

- Speed improvements that change behavior: Google reports improved latency and throughput versus prior Flash-tier baselines, including a 2.5x faster time-to-first-token and 45% faster output speed compared to Gemini 2.5 Flash.

In other words: Google is betting that the next wave of adoption is less “one user chatting” and more “many systems generating constantly.” That aligns perfectly with how creativity scales in the real world: humans set intent and constraints, machines run the loops.
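To make the quoted prices concrete, here is a minimal back-of-the-envelope sketch. The two rates come from the announcement figures above; the workload (10,000 drafts a day at roughly 800 input and 200 output tokens each) is a made-up example, so swap in your own numbers.

```python
# Prices quoted in the announcement, in USD per million tokens.
INPUT_USD_PER_MILLION = 0.25
OUTPUT_USD_PER_MILLION = 1.50


def call_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request at the quoted preview prices."""
    return (
        input_tokens * INPUT_USD_PER_MILLION
        + output_tokens * OUTPUT_USD_PER_MILLION
    ) / 1_000_000


# Hypothetical workload: 10,000 support drafts a day,
# each with about 800 input tokens and 200 output tokens.
per_call = call_cost_usd(800, 200)
per_day = per_call * 10_000
print(f"per call: ${per_call:.6f}, per day: ${per_day:.2f}")
```

Under those assumed token counts, the bill works out to about $0.0005 per call and $5 per day. Note that output tokens dominate the cost, so trimming response length saves more than trimming prompts.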

Why “Lite” is the real enterprise move

Most organizations do not fail at AI because the model cannot write. They fail because they cannot operate the writing. The moment you automate anything at scale, you inherit three problems:

- Latency: slow responses break user experiences and jam pipeline steps.

- Cost: per-call pricing becomes a “death by a thousand cuts” problem.

- Reliability: the model needs to behave consistently across thousands of near-identical tasks.

Flash-Lite is clearly designed for that operational lane. It is not trying to win every reasoning benchmark. It is trying to become the model you can afford to run all day.

Snarky but true: using a frontier model for every email variant is like renting a Formula 1 car to deliver groceries. It is impressive. It is also financially unserious.

The “thinking level” control is the sleeper feature

Flash-Lite also ships with a practical lever that is increasingly becoming table stakes for automation: configurable reasoning depth. In Gemini 3 models, Google exposes this via a thinking_level setting in the API, which lets teams trade off speed and cost versus deeper reasoning when it is actually needed.

You can read Google’s official Gemini 3 developer guidance here: Gemini 3 Developer Guide, and the thinking controls here: Gemini API thinking documentation.

Why this matters in business terms: you can route effort like a budget policy. Most tasks are repetitive and should run “low.” A small subset are complex and deserve “high.” That is how you scale without lighting money on fire.

What this enables in practice

- Cheap default behavior: run low-latency mode for tagging, rewriting, formatting, and templated variants.

- Escalation only when triggered: bump thinking level for edge cases, policy checks, nuanced objections, tricky summarization, or tool-using agent steps.

- Better workflow predictability: you stop relying on “prompt vibes” and start enforcing “effort policies.”
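One way to picture an “effort policy” is a small routing function that picks the thinking level before the model is ever called. This is a sketch, not Google's API: the `Task` type, the task names, and the `flagged_edge_case` field are all hypothetical, and only the "low" and "high" labels come from the discussion above.

```python
from dataclasses import dataclass

# Task kinds that deserve deeper reasoning. The names are illustrative,
# loosely based on the escalation cases listed above.
HIGH_EFFORT_TASKS = {
    "policy_check",
    "nuanced_objection",
    "tricky_summary",
    "agent_tool_step",
}


@dataclass(frozen=True)
class Task:
    kind: str
    flagged_edge_case: bool = False  # set by an upstream check (hypothetical)


def thinking_level_for(task: Task) -> str:
    """Return "low" by default and "high" only when escalation is triggered."""
    if task.flagged_edge_case or task.kind in HIGH_EFFORT_TASKS:
        return "high"
    return "low"


print(thinking_level_for(Task("tagging")))
print(thinking_level_for(Task("policy_check")))
print(thinking_level_for(Task("rewriting", flagged_edge_case=True)))
```

Keeping the policy in code rather than in the prompt means it can be reviewed, versioned, and audited like any other budget rule.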

API availability: yes, this is automation-ready

Flash-Lite is available via the Gemini API (through AI Studio) and via Vertex AI for enterprise operations. The current preview model ID is gemini-3.1-flash-lite-preview. For teams who are not technical: that is the difference between “we can play with it” and “we can build a system on it.”

Two implications matter immediately:

- It can plug into your existing orchestration stack: anything that can call HTTPS can call a Gemini endpoint. That includes custom middleware, internal tools, and automation platforms like n8n, Make, and Zapier (via HTTP steps).

- It can be governed like infrastructure: Vertex AI is where you get the controls that make compliance and operations possible: identity, quotas, logging, and enterprise deployment posture.

If you want a familiar mental model: AI Studio is the “prompt lab,” Gemini API is the “callable brain,” and Vertex AI is the “enterprise runtime.”
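For a sense of what “anything that can call HTTPS” looks like, here is a rough sketch that builds a request using only the Python standard library, without sending it. The model ID is the one quoted above. The endpoint path, the `x-goog-api-key` header, and the `thinkingConfig` / `thinkingLevel` field names are assumptions here and should be confirmed against the current Gemini API reference before use.

```python
import json
import urllib.request

MODEL_ID = "gemini-3.1-flash-lite-preview"  # preview model ID quoted in the article
# Assumed endpoint shape; confirm against the current Gemini API reference.
BASE_URL = "https://generativelanguage.googleapis.com/v1beta/models"


def build_request(
    prompt: str, api_key: str, thinking_level: str = "low"
) -> urllib.request.Request:
    """Build (but do not send) a generateContent request."""
    body = {
        "contents": [{"parts": [{"text": prompt}]}],
        # Assumed field names for the thinking control.
        "generationConfig": {"thinkingConfig": {"thinkingLevel": thinking_level}},
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/{MODEL_ID}:generateContent",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


req = build_request(
    "Write three subject lines for a spring sale.", api_key="YOUR_API_KEY"
)
print(req.get_method(), req.full_url)
```

Passing the result to `urllib.request.urlopen(req)` would send it. The same URL, headers, and JSON body are what you would paste into an HTTP step in a no-code automation tool.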

What marketing teams can automate first

Flash-Lite is most valuable where work is high-volume, structured, and repeatable. That is not a creative limitation. It is the sweet spot where automation actually compounds.

High-confidence workflow fits

- Variant factories: mass generation of subject lines, hooks, CTAs, ad copy variations, and landing page microcopy, with human selection.

- Content operations: metadata generation, summaries, excerpting, formatting into CMS fields, alt text drafts.

- Customer support drafting: fast, consistent replies for common issues, with escalation paths for complex tickets.

- Moderation and classification: routing, labeling, “is this on-topic?” checks, and basic policy triage.

Where you still want guardrails

- Hard-claims content: anything with numbers, guarantees, or regulated language still needs verification and source grounding.

- Autopublishing: “available via API” does not mean “publish without review.” Build gates, critics, and approvals unless you enjoy damage control.

If it is in the API, it can be automated. If it is monitored, gated, and logged, it can be trusted. Flash-Lite gets you the first part. Your workflow design earns the second.

Automation readiness snapshot

| What you need | What Flash-Lite gives | Real-world readiness |
|---|---|---|
| High-volume generation | Fast responses plus cheaper unit economics | High |
| Workflow routing control | Thinking level knob for “cheap vs deep” | Medium-High |
| Enterprise governance | Vertex AI path for IAM, logging, quotas | High |

What to watch (hype vs operational truth)

Flash-Lite is positioned as preview, and preview always means one thing for operators: test it under your load. The real questions are not benchmarks. They are operational:

- Rate limits and quota behavior: can it sustain your peak campaign windows?

- Consistency under batching: does output quality drift when you run 5,000 calls?

- Cost-per-valid-asset: measure cost per usable, approved output, not cost per prompt.
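The cost-per-valid-asset idea is simple arithmetic, sketched below. The function name and the batch figures (5,000 calls, $2.50 total spend, 3,200 approved outputs) are hypothetical and chosen only to show how the metric diverges from cost per call.

```python
def cost_per_valid_asset(total_spend_usd: float, approved_outputs: int) -> float:
    """Divide total spend by outputs that passed review, not by calls made."""
    if approved_outputs <= 0:
        raise ValueError("no approved outputs, so cost per valid asset is undefined")
    return total_spend_usd / approved_outputs


# Hypothetical batch: 5,000 calls costing $2.50 in total, 3,200 approved after review.
calls, spend, approved = 5_000, 2.50, 3_200
print(f"cost per call:        ${spend / calls:.6f}")
print(f"cost per valid asset: ${cost_per_valid_asset(spend, approved):.6f}")
```

In this made-up batch, the approval rate of 64% pushes the effective unit cost from $0.0005 to about $0.00078, which is the number a budget owner actually cares about.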

Google’s broader direction is clear: Gemini is being built as an ecosystem of right-sized models you can route by task. Flash-Lite is the default worker lane, exactly where scalable creative systems need the most help.

If you want more context on how Gemini 3.1 is being positioned for automatable, multi-step workflows, see our earlier breakdown: Google Gemini 3.1 Pro Lands as a Reasoning Upgrade You Can Actually Automate.

Bottom line

Gemini 3.1 Flash-Lite is Google making a direct play for production-scale creative automation: fast inference, low-cost economics for volume, a programmable thinking level control, and availability through the Gemini API and Vertex AI. For marketing and ops teams, the unlock is not novelty. It is throughput. Humans keep the intent and taste. Flash-Lite handles the grind, at a price point that makes always-on workflows feel less like a science project and more like infrastructure.