Claude Opus 4.6 Makes “One Session” Feel Like a Workspace


February 11, 2026

Anthropic’s Claude Opus 4.6 just shipped with two upgrades that change how AI fits into real work: a 1M-token context window (beta) and Agent Teams. If prior model launches were about “better answers,” this one is about something more operational: keeping a project coherent while it scales across docs, channels, and stakeholders without you re-explaining your entire company every time you open a chat.

The shift: the new competitive edge isn’t “best copywriter bot.” It’s durable in-session memory + parallel execution that can actually plug into workflows, not just impress in a demo.

What actually changed in 4.6

Opus 4.6 is positioned as a drop-in evolution of the Opus line: stronger performance, new long-context capability, and an explicit push toward multi-worker automation. The headline additions are:

- 1M-token context window (beta): enough room to keep huge working sets like brand rules, research, historical campaigns, product docs, and even large codebases in a single session.

- Agent Teams: a parallel-agent setup framed as a research preview in Claude Code, coordinating multiple specialized Claude “workers” on the same objective.

Coverage has already clocked the strategy: Anthropic isn’t just chasing IQ points, it’s chasing throughput. Mainstream reporting frames Agent Teams as a move toward AI that works like a coordinated team, not a single assistant doing sequential tasks (TechCrunch).

Why 1M tokens is more than a flex

Context-window discourse usually devolves into horsepower bragging (“my model eats more PDFs than your model”). But long context becomes genuinely valuable when it reduces the hidden tax that kills AI adoption: workflow breakpoints.

When context windows topped out around 200K tokens, teams learned survival tactics:

- chunking documents

- maintaining external summaries

- RAG pipelines for retrieval

- copying and pasting “brand voice” blocks like it’s 2017

With 1M tokens, the working style changes. It becomes plausible to keep a full “truth set” in-thread: brand claims, legal disclaimers, product specs, pricing logic, last quarter’s launch retro, plus the assets you’re currently producing.
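A quick way to sanity-check the “truth set” idea is to estimate whether your documents fit before deciding between keeping them in-thread and falling back to chunking or retrieval. This is a rough sketch under a loud assumption: it uses a ~4-characters-per-token heuristic, not Anthropic’s actual tokenizer, and `Doc`, `estimate_tokens`, and `plan_context` are hypothetical names for illustration.

```python
from dataclasses import dataclass

# Assumption: ~4 characters per token is a rough heuristic for English prose,
# not Anthropic's tokenizer. Use real token counts before making budget calls.
CHARS_PER_TOKEN = 4


@dataclass
class Doc:
    name: str
    text: str


def estimate_tokens(text: str) -> int:
    """Rough token estimate: character count divided by 4, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def plan_context(docs: list[Doc], context_limit: int, reserve_for_output: int) -> str:
    """Return 'in-thread' if the whole truth set fits, else 'chunk-or-retrieve'."""
    budget = context_limit - reserve_for_output
    total = sum(estimate_tokens(d.text) for d in docs)
    return "in-thread" if total <= budget else "chunk-or-retrieve"


# Hypothetical truth set totalling ~600K estimated tokens.
truth_set = [
    Doc("brand_bible", "x" * 400_000),
    Doc("product_specs", "x" * 1_200_000),
    Doc("launch_retro", "x" * 800_000),
]
print(plan_context(truth_set, context_limit=200_000, reserve_for_output=16_000))    # chunk-or-retrieve
print(plan_context(truth_set, context_limit=1_000_000, reserve_for_output=16_000))  # in-thread
```

The same ~600K-token working set forces chunking under a 200K window but fits in-thread under 1M, with room left for output.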

Where it hits hardest for marketers

Marketing isn’t blocked by ideas. It’s blocked by consistency and review cycles. A bigger context window doesn’t magically make taste better, but it does make it easier to keep output aligned across a messy reality of:

- multi-channel campaigns: one wrong claim becomes an evergreen screenshot

- product + brand complexity: specs, positioning, competitive notes constantly shifting

- agency and client portfolios: multiple brand bibles and approval histories

- regulated workflows: compliance and mandated language that cannot “kinda” be right

This is the kind of capability that makes AI feel less like a clever intern and more like a long-running project collaborator: one that still needs oversight but no longer needs constant memory refreshes.

Agent Teams is the “parallelism” moment

If 1M tokens is memory, Agent Teams is staffing. This is Anthropic acknowledging the obvious: real work is not a single linear prompt chain. It’s drafting, validating, QA, formatting, checking claims, reconciling feedback, often in parallel.

Agent Teams aims to make that parallelism native. Instead of one Claude instance doing everything sequentially, you can split tasks across specialized agents that coordinate and merge outputs. That is why this release matters for creators and marketers: not because it writes another landing page, but because it reduces the time spent herding the process.
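The underlying pattern is fan-out/fan-in. The sketch below is not how Agent Teams works internally; it is a hypothetical illustration using Python’s `asyncio`, where `worker` stands in for a model call and independent channel drafts run concurrently before being merged into one deliverable.

```python
import asyncio


async def worker(channel: str, brief: str) -> tuple[str, str]:
    """Hypothetical specialized worker; a real one would call a model API."""
    await asyncio.sleep(0.05)  # stand-in for model latency
    return channel, f"[{channel}] draft for: {brief}"


async def run_team(brief: str, channels: list[str]) -> dict[str, str]:
    # Fan out: one task per channel, awaited concurrently rather than in sequence.
    results = await asyncio.gather(*(worker(c, brief) for c in channels))
    # Fan in: merge worker outputs into a single deliverable keyed by channel.
    return dict(results)


drafts = asyncio.run(run_team("spring launch", ["email", "landing_page", "paid_social"]))
for text in drafts.values():
    print(text)
```

Because the three simulated calls overlap, wall time is roughly one call’s latency instead of three. In a real system, the merge step (deciding what happens when workers disagree) is where most of the design effort goes.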

What “agents” look like in creative ops

In practice, the pattern maps cleanly onto how teams already work:

- Agent A (Creative): generates variants for copy, hooks, CTAs

- Agent B (Brand): enforces voice, banned phrases, style rules

- Agent C (Claims and Compliance): checks assertions against approved sources

- Agent D (Packaging): formats for your CMS, ESP, and ad schemas

When this works, it doesn’t replace the team; it shifts the team’s role from manual production line to creative direction + approvals. Humans own intent. Machines run the grind.
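Parts of the Brand and Claims roles can start as cheap deterministic pre-checks that run before any model-based review. A minimal sketch under loud assumptions: the banned-phrase list and approved-claims set are hypothetical placeholders for your own brand bible and claims register, and substring matching is far cruder than a real compliance review.

```python
import re

# Hypothetical rules; in practice these would come from the brand bible and
# approved-claims register kept in the session's context.
BANNED_PHRASES = ["best in the world", "guaranteed results"]
APPROVED_CLAIMS = {"cuts setup time by 30%", "works with 40+ integrations"}


def brand_check(copy: str) -> list[str]:
    """Brand role: return any banned phrases found (case-insensitive)."""
    lowered = copy.lower()
    return [p for p in BANNED_PHRASES if p in lowered]


def claims_check(copy: str) -> list[str]:
    """Claims role: flag sentences containing numbers that match no approved claim."""
    sentences = re.split(r"(?<=[.!?])\s+", copy.strip())
    return [
        s for s in sentences
        if re.search(r"\d", s) and not any(c in s.lower() for c in APPROVED_CLAIMS)
    ]


def review(copy: str) -> dict[str, list[str]]:
    """Run both checks; empty lists mean nothing was flagged."""
    return {"brand": brand_check(copy), "claims": claims_check(copy)}


print(review("Guaranteed results for every team. It cuts setup time by 30%. Now 2x faster."))
# {'brand': ['guaranteed results'], 'claims': ['Now 2x faster.']}
```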

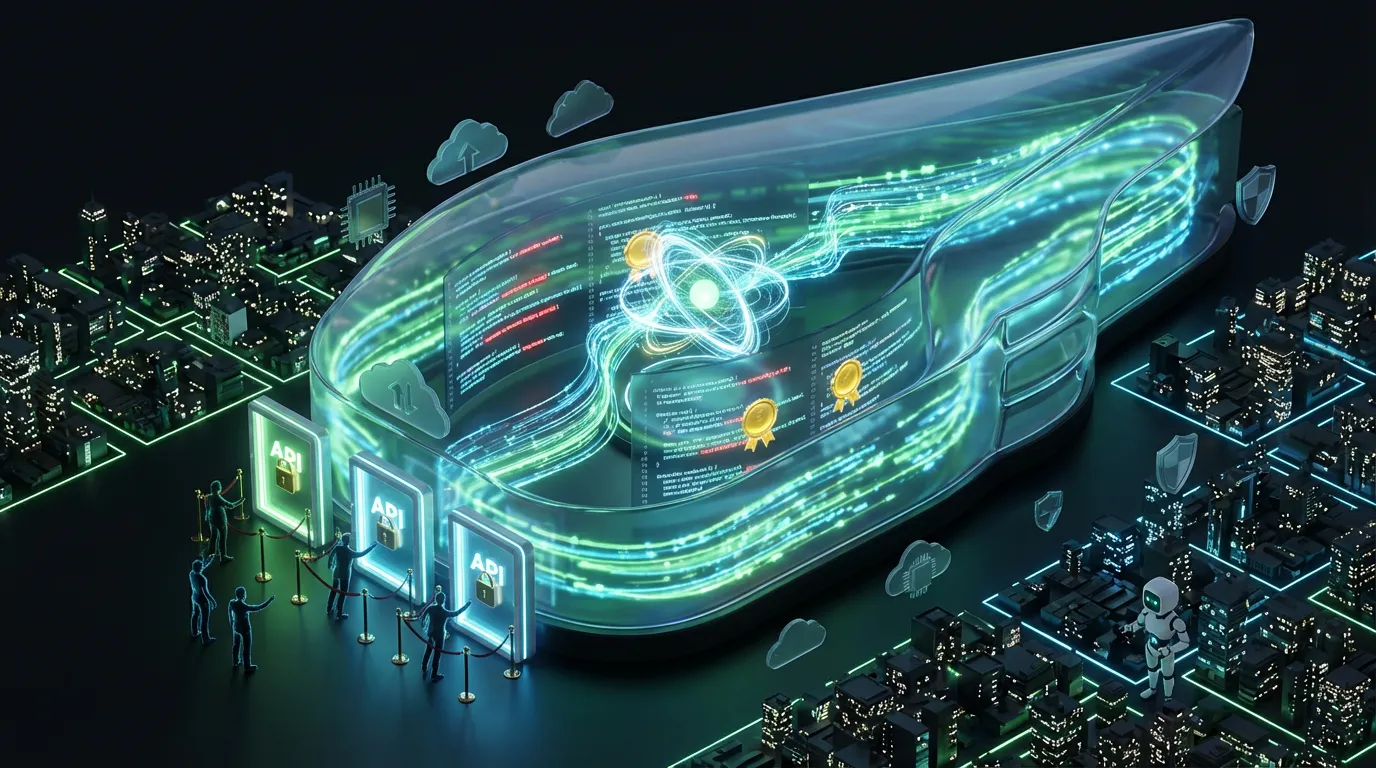

API access and automation readiness

This is where the hype either cashes out or collapses: can you automate it? Opus 4.6 is available via Anthropic’s API, and the 1M-token context is described as beta and gated.

1M context is real, but gated

According to Anthropic’s context window documentation, the 1M-token feature is beta and requires eligibility or enablement rather than being universally available by default (Anthropic docs). Translation for execs: you can pilot it, but do not assume every seat gets it instantly.
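For an API pilot, opting into the long-context beta is a request header on the Messages API rather than a separate endpoint. The sketch below builds a raw request with Python’s standard library. Treat two values as assumptions to verify in Anthropic’s docs: the model ID `claude-opus-4-6` and the beta flag `context-1m-2025-08-07` (the value documented for earlier 1M-context betas). The header does nothing for an organization that isn’t eligible.

```python
import json
import urllib.request

API_URL = "https://api.anthropic.com/v1/messages"
# Assumptions: confirm both values in Anthropic's models and context-window docs.
MODEL_ID = "claude-opus-4-6"
LONG_CONTEXT_BETA = "context-1m-2025-08-07"


def build_request(prompt: str, api_key: str, long_context: bool = False,
                  max_tokens: int = 1024) -> urllib.request.Request:
    """Build (but do not send) a Messages API request."""
    headers = {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    if long_context:
        # Opt in to the 1M-token beta; the organization must also be eligible.
        headers["anthropic-beta"] = LONG_CONTEXT_BETA
    body = {
        "model": MODEL_ID,
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL, data=json.dumps(body).encode("utf-8"), headers=headers, method="POST"
    )


def send(req: urllib.request.Request) -> dict:
    """Send the request and parse the JSON reply (needs network and a valid key)."""
    with urllib.request.urlopen(req, timeout=600) as resp:
        return json.load(resp)


# Usage (not run here; `truth_set_prompt` is hypothetical):
# reply = send(build_request(truth_set_prompt, os.environ["ANTHROPIC_API_KEY"], long_context=True))
```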

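Ultra-long context also changes latency and cost dynamics: a prompt carrying hundreds of thousands of tokens takes longer to process and is billed again on every call that resends it. Even when a model can ingest a million tokens, you still need engineering and ops discipline, because “just shove everything in” is how budgets die.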

Agent Teams: preview energy (for now)

Public framing positions Agent Teams as an experimental or research preview inside Claude Code, rather than a fully mature enterprise orchestration primitive. The most important readiness question is not whether the feature exists but whether you can run it with:

- audit logs (who did what and why)

- permissioning (which tools an agent can touch)

- budget caps (retry limits and escalation rules)
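Permissioning and audit logs can start simpler than they sound: a default-deny allowlist per agent role, plus a record of every attempted tool call, allowed or not. This is a hypothetical sketch; the role names, tool names, and `call_tool` gate are illustrative and not part of Claude Code or Anthropic’s API.

```python
import time
from dataclasses import dataclass, field

# Hypothetical allowlists: each role may touch only the tools listed for it.
PERMISSIONS = {
    "creative": {"read_brand_docs"},
    "compliance": {"read_brand_docs", "read_claims_register"},
    "packaging": {"read_brand_docs", "write_cms_draft"},
}


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, agent: str, tool: str, allowed: bool, reason: str) -> None:
        """Append who tried what, why, and whether it was permitted."""
        self.entries.append({"ts": time.time(), "agent": agent, "tool": tool,
                             "allowed": allowed, "reason": reason})


def call_tool(agent: str, tool: str, reason: str, log: AuditLog) -> bool:
    """Default-deny gate: unknown agents or unlisted tools are refused, and logged."""
    allowed = tool in PERMISSIONS.get(agent, set())
    log.record(agent, tool, allowed, reason)
    return allowed


log = AuditLog()
call_tool("packaging", "write_cms_draft", "stage email v3", log)    # allowed
call_tool("creative", "write_cms_draft", "publish hero copy", log)  # refused
for e in log.entries:
    print(e["agent"], e["tool"], "ALLOWED" if e["allowed"] else "DENIED")
```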

Reality check: agents do not call the model once. They call it, critique it, retry it, and sometimes argue with themselves. If you do not cap retries, you are basically giving your automation a corporate Amex.
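A retry cap with a token budget and an explicit escalation path is one way to take the Amex back. A minimal sketch, assuming each agent step reports its own token usage and whether it passed review; `run_with_caps`, `Escalate`, and the default limits are hypothetical.

```python
from typing import Callable


class Escalate(Exception):
    """Raised when a step exhausts its caps and needs a human decision."""


def run_with_caps(step: Callable[[int], tuple[str, int, bool]],
                  max_retries: int = 3, token_budget: int = 50_000) -> str:
    """Retry `step` until it passes review, hits the retry cap, or spends the budget.

    `step(attempt)` is a hypothetical agent call returning
    (output, tokens_used, passed_review).
    """
    spent = 0
    for attempt in range(1, max_retries + 1):
        output, tokens, passed = step(attempt)
        spent += tokens
        if passed:
            return output
        if spent >= token_budget:
            raise Escalate(f"budget exhausted after {attempt} attempt(s), {spent} tokens")
    raise Escalate(f"no passing output after {max_retries} attempts, {spent} tokens")


def flaky_step(attempt: int) -> tuple[str, int, bool]:
    # Simulated agent: fails review once, then passes.
    return f"draft v{attempt}", 12_000, attempt >= 2


def stubborn_step(attempt: int) -> tuple[str, int, bool]:
    # Simulated agent that never passes and burns 30K tokens per try.
    return f"draft v{attempt}", 30_000, False


print(run_with_caps(flaky_step))  # draft v2
try:
    run_with_caps(stubborn_step)
except Escalate as err:
    print("escalate:", err)  # escalate: budget exhausted after 2 attempt(s), 60000 tokens
```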

Pricing signals matter (because automation scales spend)

Anthropic’s Opus pricing remains token-based, and the published rates (as of 2026-02) are $5 per million input tokens and $25 per million output tokens for the standard context tier. Anthropic also publishes a higher-priced tier for the extended (up to 1M) context beta: $10 per million input tokens and $37.50 per million output tokens. Always confirm the latest numbers on Anthropic’s pricing page (Anthropic pricing).
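To see why retry caps and context discipline matter, it helps to run the quoted rates through a per-request estimate. A sketch using only the rates above, with one labeled assumption: that the long-context rates apply to the whole request once input exceeds 200K tokens (our reading of Anthropic’s long-context pricing; confirm the threshold on the pricing page). Function names are illustrative.

```python
# USD per million tokens, as quoted in this article (as of 2026-02).
STANDARD = {"input": 5.00, "output": 25.00}
LONG_CONTEXT = {"input": 10.00, "output": 37.50}
# Assumption: premium rates apply to the whole request above this input size.
LONG_CONTEXT_THRESHOLD = 200_000


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one request at the applicable tier."""
    rates = LONG_CONTEXT if input_tokens > LONG_CONTEXT_THRESHOLD else STANDARD
    return (input_tokens * rates["input"] + output_tokens * rates["output"]) / 1_000_000


def monthly_cost(input_tokens: int, output_tokens: int,
                 calls_per_day: int, days: int = 30) -> float:
    """Scale one request's cost to a month of automated calls."""
    return request_cost(input_tokens, output_tokens) * calls_per_day * days


print(f"${request_cost(150_000, 4_000):.2f}")        # $0.85
print(f"${request_cost(800_000, 4_000):.2f}")        # $8.15
print(f"${monthly_cost(800_000, 4_000, 100):,.2f}")  # $24,450.00
```

Same 4K-token output, but carrying 800K tokens of context on every call pushes the per-request estimate up roughly tenfold. Multiply by agent retries and the monthly figure grows accordingly.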

What’s ready now vs what needs testing

| Capability | What’s solid today | What to pressure-test |
|---|---|---|
| Opus 4.6 core model | Usable immediately in Claude-based workflows and via API | Quality drift vs your current model on your real tasks |
| 1M context (beta) | Available with gating and enablement | Latency, rate limits, and cost at scale |
| Agent Teams | Previewed as parallel workflow capability in Claude Code | Governance, auditability, safe tool permissions |

The operational implication: fewer handoffs

Here’s what makes Opus 4.6 feel like a workflow release instead of a model release: when you combine massive context with parallel agents, you reduce the number of human glue moments that slow everything down.

Most teams are not constrained by the ability to generate. They are constrained by:

- finding the latest approved truth

- keeping output consistent across channels

- review cycles and version confusion

- manual packaging: formatting, tagging, exporting, uploading

Opus 4.6 attacks that constraint set directly. Not perfectly. Not magically. But materially.

And yes, long context still does not equal truth

This is where we stay responsible and a little snarky: shoving a million tokens into a model does not mean it will pick the right sentence from your policy doc. It means the sentence is available. You still need verification layers, critics, and for high-risk publishing, human signoff.
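One way to encode that layering is a publish gate: automated checks must pass for everything, and anything flagged high-risk also waits for a human sign-off. A hypothetical sketch; the `Asset` fields and the risk flag are assumptions about how your pipeline labels work.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Asset:
    channel: str
    high_risk: bool                    # e.g. regulated copy, pricing, legal language
    checks_passed: bool                # result of automated verifiers and critics
    approved_by: Optional[str] = None  # human sign-off, if any


def can_publish(asset: Asset) -> bool:
    """Automated checks gate everything; high-risk assets also need a human."""
    if not asset.checks_passed:
        return False
    if asset.high_risk:
        return asset.approved_by is not None
    return True


queue = [
    Asset("social", high_risk=False, checks_passed=True),
    Asset("pricing_page", high_risk=True, checks_passed=True),
    Asset("pricing_page", high_risk=True, checks_passed=True, approved_by="legal"),
    Asset("email", high_risk=False, checks_passed=False),
]
print([can_publish(a) for a in queue])  # [True, False, True, False]
```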

If you are scaling agentic workflows, the real maturity test is observability: logs, receipts, and traces. For teams thinking about cost + reuse patterns while they scale, this earlier COEY piece pairs well with the direction Anthropic is heading: Semantic Caching: The Unsung Hero of AI Pipelines.

Bottom line

Claude Opus 4.6 is a credible step from “AI assistant” to “AI work system.” The 1M-token context window (beta) tackles context loss, the silent killer of scaled AI workflows. Agent Teams tackles sequential bottlenecks by making parallel production feel native.

For executives and marketing leaders, the pragmatic takeaway is simple: this release can reduce handoffs, preserve intent across long projects, and make multi-step creative production more automatable if you pair it with cost controls, verification, and governance. Otherwise you will just ship the same chaos, faster, with better prose.

AI Marketing That Goes Beyond the Hype

COEY builds the marketing automation systems that agencies and brands actually need: n8n workflows, Claude Cowork agents, OpenClaw models, all connected and delivering. See our automation capabilities, explore our channel work, or request a proposal.