Moonshot AI’s Kimi K2.6 Pushes Open Models Closer to Real Agent Work

April 22, 2026

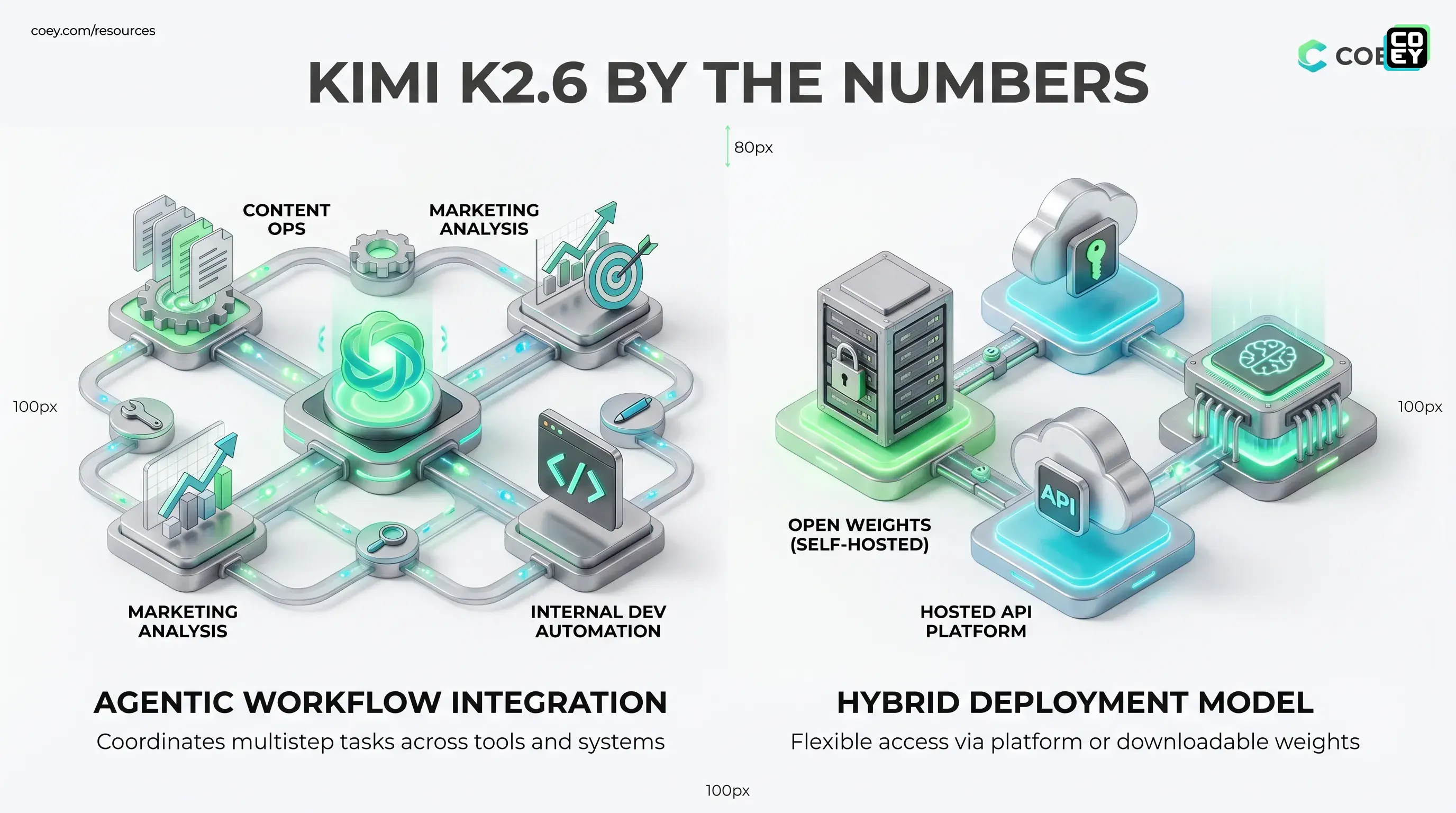

Moonshot AI has released Kimi K2.6, and this is the kind of model launch that matters for a very simple reason: it looks less like “another chatbot with a new benchmark chart” and more like infrastructure for real multi-step automation. That distinction is doing a lot of work here. Kimi K2.6 arrives as an open-weights Mixture-of-Experts model with a hosted API path, a self-hosting story, and a product posture built around agentic execution rather than one-and-done prompting. In a market drowning in “look how smart it is” demos, Moonshot is making a more operational claim: this model is meant to coordinate work.

For executives, marketers, and creative ops teams, the practical question is not whether K2.6 can win a debate club trophy. It is whether the model can be wired into systems that actually reduce grind. On that front, Kimi K2.6 looks unusually relevant. Public launch materials and current reporting point to a 1-trillion-parameter MoE architecture with about 32 billion active parameters per token, a roughly 256K-token context window, open weights, and a hosted platform route for developers. That means this is not trapped inside a polished chat box whispering “enterprise” while refusing to meet your workflow halfway.

The real headline: Kimi K2.6 is not interesting because it is open. It is interesting because it is open and shaped for agent-style work that can plug into actual production systems.

What Moonshot actually shipped

Kimi K2.6 is being positioned as an open-weights model for long-horizon reasoning, coding, tool use, and agent orchestration. Current public details around the launch describe a large Mixture-of-Experts system with support for up to 256,000 tokens of context, hosted access through Moonshot’s platform, and downloadable weights available through the open model ecosystem, including Moonshot’s Hugging Face model page. Public listings also point to a Modified MIT license for the weights, with an attribution requirement to prominently display “Kimi K2.6” in certain product or service use cases.

There is also a more specific angle driving the conversation: Moonshot is emphasizing swarm-style agent execution. Current launch coverage says K2.6 can coordinate up to 300 sub-agents across as many as 4,000 steps in a single run. Even if teams never use the top-end numbers in practice, the product direction is clear. This is being framed less as a single assistant and more as a model substrate for distributed task handling.

| Capability | What it means | Why teams care |
|---|---|---|
| Open weights | Can be self-hosted or adapted | More control and less vendor lock-in |
| Hosted API | Can be called directly from software | Faster path to pilots and workflows |
| Agent-swarm posture | Built for multi-step execution | Better fit for automation than basic chat |

Why the architecture matters

K2.6 uses a Mixture-of-Experts design, which means only part of the total parameter pool is active for any given token. In plain English, the model is huge without behaving like a giant compute tantrum every time you ask it to do something useful. That matters because frontier-level capability is much more interesting when the deployment story does not immediately become a finance problem.
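
A quick back-of-the-envelope check makes the point concrete. This sketch uses only the approximate figures from launch reporting (about 1 trillion total parameters, about 32 billion active per token); they are reported numbers, not measurements, and the function name is illustrative.

```python
# Approximate figures from public launch reporting; not measured values.
TOTAL_PARAMS = 1_000_000_000_000   # ~1 trillion total parameters
ACTIVE_PARAMS = 32_000_000_000     # ~32 billion active per token


def active_fraction(active: int, total: int) -> float:
    """Share of the total parameter pool used for a single token."""
    return active / total


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"Active share per token: {fraction:.1%}")
```

On those figures, only about 3% of the parameter pool does work for any one token, which is where the compute savings come from. Memory is a separate matter: the full set of weights still has to be stored somewhere when self-hosting.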

Moonshot’s more interesting architectural bet, though, is not just MoE. It is the agent coordination story. A lot of AI systems can produce good answers in one turn. Fewer can sustain a chain of work, break a problem into sub-tasks, call tools, and keep enough coherence to return something useful at the end. That is the lane K2.6 is trying to occupy.

For workflow builders, this is the difference between:

- a model that writes a draft, and

- a model that can help run a process

That second category is much more valuable if your goal is scaling creative and operational output without scaling headcount into oblivion.

API-ready, not just app-deep

This is the part non-technical readers should care about most: yes, Kimi K2.6 appears automatable. Moonshot is offering it through its platform, and third-party infrastructure providers are already surfacing access paths as well. That means the model is not just a product experience. It is a callable system component.

If your stack can send an API request, K2.6 can likely be tested inside:

- content ops pipelines for research, drafting, QA, and packaging

- marketing workflows for campaign analysis, audience synthesis, and variant generation

- internal copilots that need long context, structured output, and tool use

- developer and ops workflows involving scripts, repos, and task automation

That is why this launch feels more real than many open-model announcements. Moonshot is not asking teams to admire the weights from a distance. It is offering both a hosted route and a self-hosting route. For organizations trying to decide whether something can plug into n8n, internal apps, approval systems, or custom automation layers, that is the answer that matters.
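
To show what "callable" means in practice, here is a minimal sketch that builds (but does not send) a chat-style HTTP request using only the Python standard library. It assumes an OpenAI-style chat-completions JSON shape, which many hosted model APIs accept. The endpoint URL and model id below are placeholders, not confirmed values, so check your provider's documentation for the real ones.

```python
import json
import urllib.request

# Placeholders only: substitute the values from your provider's documentation.
API_URL = "https://example.invalid/v1/chat/completions"  # hypothetical endpoint
MODEL_ID = "kimi-k2.6-placeholder"                        # hypothetical model id


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build a chat-style POST request without sending it."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


req = build_request("Summarize last week's campaign results.", api_key="YOUR_KEY")
print(req.get_method(), req.full_url)
```

Sending the request is one more call (`urllib.request.urlopen(req)`), and the same payload shape is what an n8n HTTP node or an internal service would post. Keeping request construction in its own function also makes it easy to test without network access.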

If a model lives behind a stable API, it can become part of the machine. If it only lives in a UI, it mostly becomes another tab.

If you want the broader context on why this matters, COEY has already covered the same pattern in our look at OpenAI’s GPT-OSS release, where the bigger story was not open for open’s sake, but deployable infrastructure.

Where this looks useful now

Kimi K2.6 looks strongest in work that is too complex for a simple one-prompt interaction but too repetitive to deserve endless human babysitting.

Content operations

Editorial and content teams increasingly need systems that can do more than draft copy. They need tools that can pull source material, summarize patterns, produce structured outputs, run style or compliance checks, and hand the work back to a human reviewer in a sane state. K2.6’s agent-oriented positioning is a strong fit for that kind of pipeline.
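
One small piece of such a pipeline is the check that runs before anything reaches a human reviewer. The sketch below assumes the model has been asked to return a JSON object; the field names (`summary`, `sources`, `compliance_flags`) are a hypothetical schema chosen for illustration, not anything Moonshot specifies.

```python
import json

# Hypothetical schema: the fields a reviewer expects to see in every output.
REQUIRED_KEYS = {"summary", "sources", "compliance_flags"}


def validate_output(raw: str) -> dict:
    """Parse model output and confirm it contains the expected fields.

    Raises ValueError if the text is not a JSON object or lacks a field.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"Output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("Output must be a JSON object")
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"Missing fields: {sorted(missing)}")
    return data


good = '{"summary": "Q1 recap", "sources": ["report.pdf"], "compliance_flags": []}'
print(validate_output(good)["summary"])
```

Outputs that fail the check can be retried or routed to a person, so reviewers only see work that is at least structurally complete.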

Marketing analysis and production

Campaign work is full of chained tasks: research the market, review historical performance, generate variants, check claims, prep assets, update systems. A model designed for multi-step execution can remove more of that repetitive middle than a generic assistant can. Humans still own the brief, the positioning, and the final call. The machine handles more of the mechanical coordination.

Internal developer automation

Moonshot is also leaning hard into coding and software engineering benchmarks, and current launch reporting points to strong results on SWE-style tasks. Reported numbers include about 58.6 on SWE-Bench Pro, 80.2 on SWE-Bench Verified, and 66.7 on Terminal-Bench 2.0. That matters even for non-engineering teams, because modern marketing ops now depends on lightweight scripting, analytics plumbing, tracking fixes, integrations, and workflow maintenance. An agentic coding model is not just for app developers anymore. It is increasingly for anyone whose job touches a broken stack and a deadline.

| Use case | Readiness now | Human role |
|---|---|---|
| Research and synthesis | High | Validate conclusions and priorities |
| Multi-step content workflows | High | Review quality and approve outputs |
| Fully autonomous execution | Medium at best | Own permissions, guardrails, rollback |

What is real vs what is hype

Now for the adult supervision section.

Kimi K2.6 looks promising, but there are still a few caveats worth keeping firmly out of the hype blender.

First, open weights are not the same as easy deployment. Self-hosting a large model still requires infrastructure, monitoring, and people who know what they are doing. “It’s open” does not mean your ops team suddenly becomes a frontier model platform by lunchtime.

Second, agentic capability is not the same as trustworthy autonomy. A model that can take thousands of steps can also make thousands of bad choices if the workflow is poorly scoped. This is where guardrails, permissions, structured outputs, approval steps, and logging still matter. A lot.
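
As a rough illustration of what those controls look like in code, here is a minimal sketch of a gate that sits between an agent and its tools. Everything in it is an assumption for illustration: the `Guard` class, the tool names, and the step limit are hypothetical and not part of any Moonshot API.

```python
import logging
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent_guard")


@dataclass
class Guard:
    """Hypothetical gate: allowlist, approval step, step budget, logging."""
    allowed_tools: frozenset
    needs_approval: frozenset
    max_steps: int
    steps_used: int = 0

    def check(self, tool: str, approved: bool = False) -> bool:
        """Return True only if this tool call may proceed."""
        if self.steps_used >= self.max_steps:
            log.warning("Blocked %s: step budget exhausted", tool)
            return False
        if tool not in self.allowed_tools:
            log.warning("Blocked %s: not on allowlist", tool)
            return False
        if tool in self.needs_approval and not approved:
            log.info("Held %s: waiting for human approval", tool)
            return False
        self.steps_used += 1
        log.info("Allowed %s (step %d/%d)", tool, self.steps_used, self.max_steps)
        return True


guard = Guard(
    allowed_tools=frozenset({"search", "draft", "publish"}),
    needs_approval=frozenset({"publish"}),
    max_steps=3,
)
results = [
    guard.check("search"),                  # allowed
    guard.check("delete_database"),         # blocked: not on allowlist
    guard.check("publish"),                 # held: needs approval
    guard.check("publish", approved=True),  # allowed after approval
]
print(results)
```

Only permitted calls consume the step budget here, and every decision is logged. A real deployment would also persist those logs and add rollback, but the shape stays the same: the model proposes, the gate decides.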

Third, benchmark flexing still needs real-world testing. Current public materials point to strong coding and reasoning performance, including standout results on software engineering tasks. Great. Your workflow is still not a benchmark. Your workflow has weird source docs, edge cases, compliance headaches, and at least one spreadsheet built on pure organizational folklore.

So yes, this looks more operational than average. No, that does not make it plug-and-pray infrastructure.

Why this release matters

Kimi K2.6 matters because it reinforces a bigger market shift: AI competition is moving away from “who has the smartest chatbot” and toward “who can provide the most usable agent substrate.” That means open weights, API access, long context, tool use, and multi-step execution matter more than one dazzling sample output on launch day.

For COEY’s audience, the takeaway is straightforward. Kimi K2.6 appears to answer the three questions that matter most:

- Can you automate it? Yes

- Can it plug into your stack? Yes, through hosted API use or self-hosting

- Is it ready for real work? Provisionally yes, with normal guardrails and testing

Bottom line: Moonshot AI’s Kimi K2.6 looks like one of the more serious recent open-model releases because it combines open deployment options with an explicit focus on agentic, multi-step work. It is available via Moonshot’s hosted API and open weights, with current public pricing references placing it around $0.95 per million input tokens and $4.00 per million output tokens. That makes it more than a model drop for the timeline. It makes it a credible candidate for teams building human-plus-machine systems where AI does more than generate a paragraph and wait for applause. In other words: less chatbot cosplay, more workflow potential. That is the lane worth watching.
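
For teams sizing up a pilot, the pricing references above translate into a simple estimate. This sketch takes the quoted rates at face value ($0.95 per million input tokens, $4.00 per million output tokens). Actual pricing may differ by provider or change over time, and the token counts in the example are made up.

```python
from decimal import Decimal

# Rates as quoted in current public pricing references; verify before budgeting.
INPUT_USD_PER_MILLION = Decimal("0.95")
OUTPUT_USD_PER_MILLION = Decimal("4.00")


def estimate_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimated USD cost of one call at the quoted per-million-token rates."""
    million = Decimal(1_000_000)
    return (
        input_tokens * INPUT_USD_PER_MILLION
        + output_tokens * OUTPUT_USD_PER_MILLION
    ) / million


# Illustrative call: a long-context prompt with a short structured answer.
cost = estimate_cost(input_tokens=200_000, output_tokens=5_000)
print(f"Estimated cost: ${cost:.2f}")
```

At those rates, a 200,000-token prompt with a 5,000-token reply comes to roughly 21 cents. Multi-step agent runs multiply that by the number of calls, so step budgets matter for cost as well as safety.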